The Ultimate Guide to Data Matching Software and Services

Why Organizations Lose Millions to Duplicate, Siloed Data Every Year

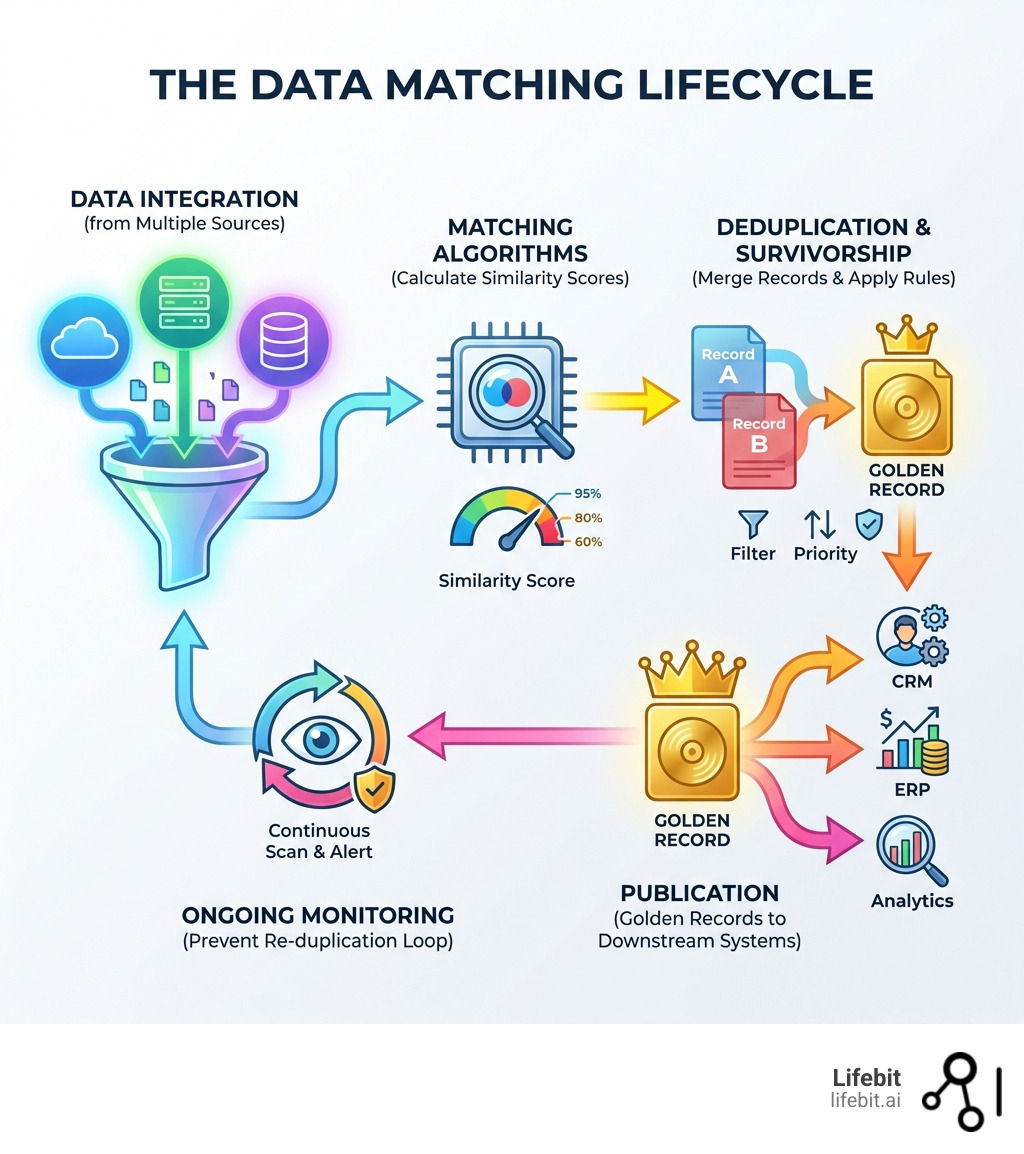

Data matching software and services help organizations identify, link, and merge duplicate or related records across disconnected databases—eliminating costly errors, enabling regulatory compliance, and opening up a unified view of customers, patients, or citizens. In an era where data is generated at an exponential rate, the ability to reconcile disparate data points is no longer a luxury; it is a fundamental requirement for operational survival.

What Data Matching Software and Services Do:

- Identify duplicates using exact, fuzzy, phonetic, or AI-powered algorithms to find hidden overlaps.

- Link records across siloed systems without unique identifiers, such as matching a CRM record to a legacy billing system.

- Create golden records by merging the best data from multiple sources through sophisticated survivorship rules.

- Reduce costs by cutting database size, storage overhead, and the manual labor required for data cleanup.

- Enable compliance with GDPR, CCPA, and HIPAA by minimizing data footprints and ensuring the “Right to be Forgotten” can be executed accurately.

- Support real-time or batch processing for millions of records daily, ensuring data remains clean as it enters the ecosystem.

Organizations dealing with fragmented data face serious consequences. Duplicate customer records lead to inconsistent marketing, wasted ad spend, and poor experiences. In healthcare, fragmented patient records can delay care, lead to incorrect medication administration, or trigger severe compliance violations. Government agencies struggle to link citizen data across departments, slowing policy decisions and emergency response times. According to research, duplicate data costs enterprises millions annually—from inflated storage bills to missed revenue opportunities—and poor data quality undermines AI models, analytics, and overall operational efficiency. This phenomenon, often called “Data Debt,” compounds over time, making it increasingly difficult for organizations to pivot or innovate.

Data matching solves these problems by comparing records across systems, calculating similarity scores, and flagging or merging duplicates. Modern platforms use fuzzy matching to handle typos, phonetic algorithms to catch name variations, and machine learning to improve accuracy over time. They can process millions of records without manual prep, deploy in cloud or on-premise environments, and integrate with CRM, ERP, and data warehouse systems. The result: a single source of truth that drives smarter decisions, faster insights, and lower risk.

I’m Dr. Maria Chatzou Dunford, CEO and Co-founder of Lifebit, a federated genomics and biomedical data platform. With over 15 years in computational biology, AI, and health-tech, I’ve built tools that empower precision medicine and secure data integration—including data matching software and services that link sensitive health records across institutions without moving data. In this guide, I’ll walk you through how data matching works, which algorithms matter, and how to choose the right solution for your organization.

How Data Matching Software and Services Cut Costs and Eliminate Data Silos

In the modern enterprise, data is rarely in one place. It lives in CRMs, ERPs, legacy spreadsheets, and cloud warehouses. Without a way to bridge these silos, your organization is essentially flying blind. Data matching software and services provide the “connective tissue” needed for record linkage and entity resolution—the process of determining if two different records refer to the same real-world entity. This process is critical for creating a cohesive data architecture that supports advanced analytics and business intelligence.

The financial impact of failing to link these records is staggering. Research indicates that duplicate data costs can range from $10 to $100 per record depending on how long the error persists. For an enterprise with millions of records, a 10% duplication rate can lead to millions in wasted operational spend. By implementing probabilistic record linkage systems, we can move beyond simple “exact match” logic to find hidden connections that human eyes—and basic spreadsheets—would miss. This is particularly relevant in marketing, where sending multiple catalogs to the same household not only wastes money but also damages brand perception.
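
To make that arithmetic concrete, here is a minimal sketch of the estimate. The record count is a hypothetical example, and the function name `duplicate_cost_range` is ours; only the 10% rate and the $10 to $100 per-record range come from the figures above.

```python
def duplicate_cost_range(total_records, duplication_rate,
                         cost_low=10.0, cost_high=100.0):
    """Return a (low, high) dollar estimate for the cost of duplicate records.

    cost_low and cost_high are the per-record figures quoted in the text;
    everything else is supplied by the caller.
    """
    duplicates = total_records * duplication_rate
    return duplicates * cost_low, duplicates * cost_high


# Hypothetical enterprise: 1,000,000 records with a 10% duplication rate.
low, high = duplicate_cost_range(1_000_000, 0.10)
print(f"Estimated duplicate cost: ${low:,.0f} to ${high:,.0f}")
```

Under these assumptions, 100,000 duplicate records land somewhere between one and ten million dollars, which is why even a modest duplication rate matters at scale.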

| Feature | Exact Matching | Fuzzy/Probabilistic Matching |
|---|---|---|
| Logic | Binary (Yes/No) | Similarity Scores (0-100%) |
| Sensitivity | Low; fails on typos | High; catches variations |
| Use Case | Unique IDs (SSN, SKU) | Names, Addresses, Phone Numbers |
| Manual Effort | Low | Requires threshold setting |
| Accuracy | 100% for identical strings | High for “real-world” messy data |
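
The contrast in the table can be sketched in a few lines of Python. This is an illustration only: it uses the standard library's `difflib.SequenceMatcher` as a stand-in similarity measure, not the probabilistic model a commercial platform would use, and the sample names are made up.

```python
from difflib import SequenceMatcher


def exact_match(a: str, b: str) -> bool:
    """Binary logic: the strings are either identical or they are not."""
    return a == b


def similarity(a: str, b: str) -> float:
    """Similarity between 0.0 and 1.0 after light normalization."""
    return SequenceMatcher(None, a.strip().lower(), b.strip().lower()).ratio()


a, b = "Jonathan Smith", "Jonathon Smith"
print(exact_match(a, b))            # the one-letter typo defeats exact matching
print(round(similarity(a, b), 2))   # the fuzzy score stays high
```

The exact comparison rejects the pair outright, while the similarity score leaves room for a threshold decision.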

Beyond cost, there is the issue of risk. Incomplete data leads to flawed analytics. If your data matching isn’t robust, your “data-driven” decisions are based on half-truths. High-quality matching ensures that when you look at a customer or a patient, you see the full context of their interactions, not just a fragmented slice. For example, in the financial sector, failing to match a customer’s credit card account with their mortgage account can lead to missed opportunities for cross-selling or, worse, an inaccurate assessment of credit risk.

Core Functionalities of Modern Data Matching Software and Services

Modern solutions have evolved far beyond simple SQL joins. Today’s data matching software and services offer a suite of automated tools designed to handle the complexity of global data. These tools are built to manage the “Three Vs” of big data: Volume, Velocity, and Variety.

- Automated Mapping: Using word clouds, pattern recognition, and semantic analysis to automatically identify which fields (e.g., “First_Name” vs. “FNAME”) should be compared. This reduces the time spent on manual data preparation by up to 80%.

- Configurable Match Scores: Assigning weights to different fields. For example, a match on a rare surname might carry more weight than a match on a common city name. This allows for a more nuanced approach to data similarity.

- Confidence Levels: Providing a numeric value (0-100%) for every match, allowing data stewards to automate “high-confidence” merges while flagging “low-confidence” pairs for manual review. This hybrid approach combines the speed of automation with the precision of human oversight.

- Scalability: The ability to process millions of records daily. Some enterprise solutions can ingest and match data with no limit on record volume, which is essential for industries like finance or public health where data streams are constant.

- Data Profiling: Before matching begins, the software analyzes the quality of the source data, identifying missing values, outliers, and formatting inconsistencies that could skew results.
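
Configurable match scores and confidence levels can be sketched as a weighted sum plus two thresholds. The field weights, the threshold values, and the sample records below are hypothetical; real deployments tune them against their own data.

```python
from difflib import SequenceMatcher

# Hypothetical weights: a surname match counts for more than a city match.
FIELD_WEIGHTS = {"surname": 0.5, "first_name": 0.3, "city": 0.2}
AUTO_MERGE_AT = 0.90   # hypothetical "high-confidence" threshold
REVIEW_AT = 0.70       # hypothetical "needs a human" threshold


def field_similarity(a: str, b: str) -> float:
    """Similarity of two field values in [0, 1]; missing values score 0."""
    if not a or not b:
        return 0.0
    return SequenceMatcher(None, a.lower(), b.lower()).ratio()


def match_score(rec_a: dict, rec_b: dict) -> float:
    """Weighted sum of per-field similarities."""
    return sum(
        weight * field_similarity(rec_a.get(field, ""), rec_b.get(field, ""))
        for field, weight in FIELD_WEIGHTS.items()
    )


def decide(score: float) -> str:
    """Map a score to an action for the data steward workflow."""
    if score >= AUTO_MERGE_AT:
        return "auto-merge"
    if score >= REVIEW_AT:
        return "manual-review"
    return "no-match"


a = {"surname": "Okonkwo", "first_name": "Chidi", "city": "Leeds"}
b = {"surname": "Okonkwo", "first_name": "Chidy", "city": "Leeds"}
print(decide(match_score(a, b)))
```

Keeping the weights and thresholds in plain configuration makes it easy for data stewards to adjust them without touching the scoring logic.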

At Lifebit, we take this a step further with federated capabilities. Our platform allows for advanced matching and harmonization across global datasets without the need to move sensitive data into a central repository. This is a game-changer for international research collaborations where data sovereignty laws prevent the physical transfer of data across borders. More information about the Lifebit platform explains how we bridge the gap between security and data utility.

Achieving a Single Customer View through Data Enrichment

The ultimate goal of most data matching projects is the creation of a “Single Customer View” or a “Golden Record.” This is the version of the truth that everyone in the organization agrees upon. It serves as the foundation for personalization, customer support, and strategic planning.

To get there, we use survivorship rules. If System A has a customer’s old address but System B has their new one, the software must decide which data “survives” into the golden record based on timestamps, source reliability, or completeness. This data unification allows for a 360-degree view that transforms customer experience. For instance, a leading financial services provider reported a $1M revenue boost simply by consolidating records to better understand their existing client base and tailoring their offerings accordingly.
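
A survivorship rule of the "most recent non-empty value wins" kind might look like the sketch below. The record layout (`source`, `updated`, `fields`) and the sample data are assumptions for illustration; source reliability or completeness rules would slot in the same way.

```python
from datetime import date


def build_golden_record(records):
    """For each field, keep the most recently updated non-empty value."""
    golden = {}
    # Process oldest first so newer non-empty values overwrite older ones.
    for rec in sorted(records, key=lambda r: r["updated"]):
        for field, value in rec["fields"].items():
            if value:  # skip None and empty strings so gaps never overwrite data
                golden[field] = value
    return golden


records = [
    {"source": "system_a", "updated": date(2021, 3, 1),
     "fields": {"name": "Ana Lopez", "address": "12 Old Road", "phone": "555-0100"}},
    {"source": "system_b", "updated": date(2024, 6, 15),
     "fields": {"name": "Ana Lopez", "address": "98 New Street", "phone": ""}},
]

golden = build_golden_record(records)
print(golden)
```

Here the newer address from System B survives, while the phone number is kept from System A because System B has no value for it.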

Advanced Algorithms: From Fuzzy Logic to AI-Driven Matching

If data were perfect, we wouldn’t need sophisticated software. But data is messy. People move, they misspell their names, they use nicknames, and they enter information in different formats. This is where advanced algorithms come into play, acting as the engine behind data matching software and services.

- Deterministic Logic: This is “exact matching.” If Field A equals Field B, it’s a match. It’s great for Social Security numbers or SKU codes but fails if someone types “St.” instead of “Street” or “Jon” instead of “John.”

- Phonetic Algorithms: These match words based on how they sound rather than how they are spelled. Common examples include Soundex, Metaphone, and Double Metaphone. This is vital for catching variations like “Stephen” vs. “Steven” or “Catherine” vs. “Katherine.”

- Numeric Matching: Designed to handle transpositions in phone numbers, dates of birth, or zip codes. It can account for common entry errors, such as swapping two digits in a year.

- Distance-Based Algorithms: Techniques like Levenshtein Distance calculate the minimum number of single-character edits (insertions, deletions, substitutions) required to change one string into another. Jaro-Winkler is another popular method that boosts the score of strings sharing a common prefix, which suits names where errors tend to occur later in the string.
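
Two of the techniques above are compact enough to sketch directly. The following is a plain-Python illustration of Levenshtein distance and American Soundex, written for readability rather than speed; production tools typically rely on optimized libraries.

```python
def levenshtein(a: str, b: str) -> int:
    """Minimum number of insertions, deletions, and substitutions."""
    if len(a) < len(b):
        a, b = b, a
    previous = list(range(len(b) + 1))
    for i, ca in enumerate(a, start=1):
        current = [i]
        for j, cb in enumerate(b, start=1):
            cost = 0 if ca == cb else 1
            current.append(min(previous[j] + 1,          # deletion
                               current[j - 1] + 1,       # insertion
                               previous[j - 1] + cost))  # substitution
        previous = current
    return previous[-1]


_SOUNDEX_CODES = {}
for _letters, _digit in (("BFPV", "1"), ("CGJKQSXZ", "2"), ("DT", "3"),
                         ("L", "4"), ("MN", "5"), ("R", "6")):
    for _ch in _letters:
        _SOUNDEX_CODES[_ch] = _digit


def soundex(name: str) -> str:
    """American Soundex: first letter plus three digits, padded with zeros."""
    letters = [c for c in name.upper() if c.isalpha()]
    if not letters:
        return ""
    encoded = letters[0]
    prev = _SOUNDEX_CODES.get(letters[0], "")
    for ch in letters[1:]:
        code = _SOUNDEX_CODES.get(ch, "")
        if code and code != prev:
            encoded += code
        if ch not in "HW":  # H and W do not separate letters with the same code
            prev = code
    return (encoded + "000")[:4]


print(levenshtein("Jon", "John"))             # one inserted letter
print(soundex("Stephen"), soundex("Steven"))  # same phonetic code
```

One caveat: Soundex keeps the first letter as written, so on its own it would not link “Catherine” and “Katherine”. Pairs like that are where Metaphone-style encodings earn their place.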

The Role of AI in Next-Gen Data Matching Software and Services

The biggest leap in the industry is the shift toward AI and Machine Learning. Traditional systems rely on “hard-coded” rules that are brittle and hard to maintain. When the person who wrote the rules leaves the company, the system often breaks or becomes obsolete as data patterns change.

Next-gen data matching software and services use unsupervised and semi-supervised learning to find patterns in the data itself. These systems can:

- Recognize reordered names: Treating “John Smith” and “Smith, John” as likely the same person by understanding the structure of names.

- Match at ingestion time: Performing sub-second matching as data enters the system, preventing duplicates from being created in the first place. This is often called “point-of-entry” deduplication.

- Handle international data: Models can be trained on hundreds of millions of global names, making them culturally aware of naming conventions in different regions, such as the UK, Singapore, or Israel. For example, they can understand that in some cultures, the family name comes first.

- Learn from reviewers: If a data steward manually confirms that two records are a match, the system adjusts its internal weights to improve future automated decisions. This feedback loop is often described as active learning.
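
The feedback loop in the last point can be illustrated with a deliberately simple, perceptron-style update. This is a toy sketch, not how any particular product trains its models: the feature names, starting weights, threshold, and learning rate are all hypothetical.

```python
def predict(weights, features, threshold=0.5):
    """Predict a match when the weighted feature sum reaches the threshold."""
    score = sum(weights[f] * features.get(f, 0.0) for f in weights)
    return score >= threshold


def learn_from_review(weights, features, steward_says_match, learning_rate=0.1):
    """Return updated weights after a data steward labels one candidate pair."""
    if predict(weights, features) == steward_says_match:
        return dict(weights)  # the system already agreed with the steward
    direction = 1.0 if steward_says_match else -1.0
    return {
        f: w + learning_rate * direction * features.get(f, 0.0)
        for f, w in weights.items()
    }


# Hypothetical features for one candidate pair of records.
weights = {"name_sim": 0.3, "dob_equal": 0.1}
pair = {"name_sim": 0.9, "dob_equal": 1.0}

print(predict(weights, pair))   # the initial weights miss this match
updated = learn_from_review(weights, pair, steward_says_match=True)
print(predict(updated, pair))   # after one correction the pair is accepted
```

Each correction nudges the weights toward the steward's judgment, which is the intuition behind learning from human review even when the underlying model is far more sophisticated.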

Phonetic and Multicultural Intelligence in Record Linkage

In a globalized economy, your software must understand that “Robert” might be “Bob” and that naming conventions vary wildly across cultures. Multicultural intelligence involves using pre-trained libraries—sometimes containing over 800 million names—to handle transliterations and multi-script data (e.g., matching a name written in Arabic script with its Latinized version).

For organizations operating across five continents, this isn’t just a “nice-to-have” feature; it’s a requirement for accuracy. Without it, your match rates on international datasets will plummet, leading to fragmented profiles, missed insights, and potential compliance risks when dealing with international watchlists or sanctions. Advanced software now includes “alias detection” to identify individuals who may be intentionally using variations of their name to evade detection in financial or security contexts.

Solving the Toughest Challenges in Data Quality Management

The road to clean data is paved with challenges. The most common hurdle is the “incomplete record.” If a record is missing a phone number or an email, standard matching often fails. Software solutions address this by using data profiling to identify gaps and then using data enrichment to fill them from third-party sources, such as credit bureaus or public records.

Another challenge is the “false positive”—when the software incorrectly merges two different people (e.g., a father and son with the same name living at the same address). Advanced software uses “exception handling” to flag these for human review, ensuring the integrity of the database isn’t compromised by over-eager automation. Conversely, “false negatives” occur when the system fails to link two records that actually belong to the same entity, leading to missed opportunities and fragmented views.
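
False positives and false negatives are usually tracked as precision and recall against a hand-labeled sample. A minimal sketch, assuming matches are expressed as pairs of record IDs (the IDs below are made up):

```python
def precision_recall(predicted_pairs, true_pairs):
    """Compare predicted matches with a labeled truth set.

    Pairs are unordered, so ("r1", "r2") and ("r2", "r1") count as the same pair.
    """
    predicted = {frozenset(p) for p in predicted_pairs}
    actual = {frozenset(p) for p in true_pairs}
    true_pos = len(predicted & actual)
    false_pos = len(predicted - actual)   # wrongly merged pairs
    false_neg = len(actual - predicted)   # missed links
    precision = true_pos / (true_pos + false_pos) if predicted else 1.0
    recall = true_pos / (true_pos + false_neg) if actual else 1.0
    return precision, recall, false_pos, false_neg


predicted = [("r1", "r2"), ("r3", "r4"), ("r5", "r6")]
truth = [("r2", "r1"), ("r3", "r4"), ("r7", "r8")]
precision, recall, false_pos, false_neg = precision_recall(predicted, truth)
print(precision, recall, false_pos, false_neg)
```

Raising the match threshold generally trades false positives for false negatives, so both numbers need to be watched together.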

The Importance of Data Governance and Stewardship

Technology alone cannot solve the data quality problem. It requires a framework of data governance. This involves defining who owns the data, who is responsible for its accuracy, and what the standards for data entry are. Data stewards play a crucial role here; they are the subject matter experts who review flagged matches and refine the rules used by the matching software. By combining powerful software with a clear governance strategy, organizations can ensure that their data remains an asset rather than a liability.

The importance of this was highlighted during the global health crisis. Research on government data sharing during the pandemic showed that the ability to link data across national and local levels was crucial for an effective response. However, skills gaps and poor data quality often hindered these efforts, demonstrating that even the best technology requires a foundation of data literacy and clear processes.

Ensuring Data Compliance and Security

In the era of GDPR and CCPA, data matching is a double-edged sword. While it helps you find all the data you have on a person (necessary for “Right to be Forgotten” or “Subject Access Requests”), it also involves handling sensitive Personally Identifiable Information (PII). If not handled correctly, the matching process itself could become a security risk.

Modern data matching software and services prioritize security through:

- Data Hashing: Comparing records using hashed “tokens” (for example, SHA-256 digests, ideally keyed with a shared secret) so the actual sensitive values are never exposed during the matching process. This allows for “blind matching” where two parties can find common records without seeing each other’s raw data.

- Minimizing Data Movement: Processing data “in-place” where it lives (e.g., in a Trusted Research Environment or a federated cloud) rather than moving it to a central server. This is a core tenet of the Lifebit philosophy.

- Audit Trails: Maintaining a clear, immutable history of every merge, split, and change. This is essential for regulatory audits and for reversing incorrect merges if new information comes to light.

- Role-Based Access Control (RBAC): Ensuring that only authorized personnel can see sensitive fields during the manual review process.
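
The “blind matching” idea can be sketched with Python's standard `hmac` and `hashlib` modules. This is a simplified illustration under stated assumptions: both parties share a secret key exchanged out of band, they agree on the same normalization, and the email addresses are invented. Note that hashed tokens support exact matching only, since a one-character difference produces an unrelated digest.

```python
import hashlib
import hmac


def normalize(value: str) -> str:
    """Both parties must normalize identically or the tokens will not line up."""
    return " ".join(value.strip().lower().split())


def token(value: str, shared_key: bytes) -> str:
    """Keyed SHA-256 digest of the normalized value."""
    return hmac.new(shared_key, normalize(value).encode("utf-8"),
                    hashlib.sha256).hexdigest()


def blind_intersection(tokens_a, tokens_b):
    """Tokens present on both sides; the raw values are never exchanged."""
    return set(tokens_a) & set(tokens_b)


SHARED_KEY = b"example-key-exchanged-out-of-band"  # hypothetical secret

party_a = {token(v, SHARED_KEY) for v in ["ana.lopez@example.com", "Bo.Chen@example.com "]}
party_b = {token(v, SHARED_KEY) for v in ["bo.chen@example.com", "cy.diaz@example.com"]}

common = blind_intersection(party_a, party_b)
print(len(common))
```

Using a keyed digest rather than a bare hash matters because identifiers such as emails and phone numbers are guessable, and an unkeyed hash can be reversed by simply hashing a list of candidates.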

Industry Use Cases: Healthcare, Finance, and Public Sector

- Healthcare: Matching patient records across dozens of different source systems (pharmacy, lab, clinical, billing) to ensure doctors have a complete medical history. This reduces medical errors, prevents duplicate testing, and improves patient outcomes. In the context of genomics, matching clinical data with genomic sequences is essential for identifying the genetic drivers of disease.

- Finance: Detecting fraud by matching transaction records against global watchlists and sanctions lists in real time. Matching also streamlines the Know Your Customer (KYC) process during onboarding. Separately, one financial firm saw a $1M boost after consolidating disparate records to better understand customer lifetime value and risk exposure.

- Public Sector: Creating a “Citizen 360” view. By linking data across agencies (tax, social services, education), governments can better serve their populations, identify vulnerable individuals who need support, and reduce the time spent on manual partner consolidation by up to 75%.

- Retail and E-commerce: Linking online browsing behavior with in-store purchase history to create highly personalized marketing campaigns. This helps in understanding the “omnichannel” journey of a customer, leading to higher conversion rates and improved loyalty.

Frequently Asked Questions about Data Matching

What is the difference between data matching and entity resolution?

While often used interchangeably, data matching is the specific technical process of identifying similarities between two or more records. Entity resolution is the broader strategic outcome—it is the process of linking those records to a single real-world entity (like a person, a product, or a business) and maintaining that link over time as new data arrives. Entity resolution often involves more complex logic regarding how entities relate to one another (e.g., identifying that three different people belong to the same household).
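
One way to picture the step from matching to entity resolution: pairwise matches are grouped into clusters, so that if A matches B and B matches C, all three resolve to one entity. The sketch below uses a union-find structure for that grouping; the record IDs are hypothetical, and real systems add checks to stop weak matches from chaining unrelated records together.

```python
def resolve_entities(record_ids, matched_pairs):
    """Group records into entities by following matches transitively."""
    parent = {r: r for r in record_ids}

    def find(x):
        # Walk up to the cluster root, shortening the path along the way.
        while parent[x] != x:
            parent[x] = parent[parent[x]]
            x = parent[x]
        return x

    for a, b in matched_pairs:
        root_a, root_b = find(a), find(b)
        if root_a != root_b:
            parent[root_b] = root_a

    clusters = {}
    for r in record_ids:
        clusters.setdefault(find(r), []).append(r)
    return sorted(sorted(c) for c in clusters.values())


ids = ["crm-1", "bill-7", "web-3", "crm-9"]
pairs = [("crm-1", "bill-7"), ("bill-7", "web-3")]
entities = resolve_entities(ids, pairs)
print(entities)
```

The CRM, billing, and web records collapse into one entity even though the CRM and web records were never compared directly.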

How does data matching software ensure regulatory compliance?

It helps by creating a smaller, cleaner, and more manageable data footprint. When you have fewer duplicates, it is significantly easier to parse your database for specific records during a GDPR or CCPA data request. Furthermore, advanced tools use hashing and encryption to protect data during the comparison phase, ensuring that PII is never unnecessarily exposed. It also helps in maintaining data accuracy, which is a core requirement of most privacy regulations.

Can these services handle millions of records in real time?

Yes. Enterprise-grade solutions are built for massive scale, often using multi-threaded, in-memory processing and distributed computing frameworks. This allows them to match millions of records daily and even perform sub-second “lookup before create” checks. This real-time capability is essential for preventing the “pollution” of a database at the point of entry, ensuring that a duplicate is never created in the first place.
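
A “lookup before create” check can be sketched as an index consulted on every insert. This toy version is in-memory and keys on a normalized email address, which is our assumption for illustration; enterprise systems use distributed indexes and fuzzier keys.

```python
class CustomerIndex:
    """In-memory index keyed on a normalized email address (hypothetical design)."""

    def __init__(self):
        self._by_key = {}

    @staticmethod
    def _key(record):
        return record.get("email", "").strip().lower()

    def lookup_or_create(self, record):
        """Return (stored_record, created), reusing an existing record on a key hit."""
        key = self._key(record)
        if key and key in self._by_key:
            return self._by_key[key], False
        if key:
            self._by_key[key] = record
        # Records without an email are accepted but cannot be deduplicated here.
        return record, True


index = CustomerIndex()
first, created_first = index.lookup_or_create(
    {"name": "Ana Lopez", "email": "ana@example.com"})
second, created_second = index.lookup_or_create(
    {"name": "A. Lopez", "email": " ANA@Example.com "})
print(created_first, created_second)
```

Because the dictionary lookup is constant time on average, the check adds very little latency to each insert, which is what makes point-of-entry deduplication practical.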

What is the “Golden Record” and why is it important?

The Golden Record, or Single Source of Truth (SSOT), is a master version of a data entity that contains the most accurate and complete information available from all source systems. It is important because it ensures that every department in an organization is working from the same information, which eliminates confusion and improves the accuracy of reporting and analytics.

How do you handle data in different languages or scripts?

Modern data matching software uses Unicode support and specialized transliteration libraries to handle data in various scripts, such as Cyrillic, Arabic, or Kanji. AI models are often trained on multicultural datasets to understand the nuances of how names and addresses are structured in different parts of the world.
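
A small piece of that Unicode handling can be shown with Python's standard `unicodedata` module. The sketch folds case and strips accents so that visually similar Latin-script names compare equal. It does not transliterate between scripts (for example, Arabic to Latin), which needs the specialized libraries mentioned above.

```python
import unicodedata


def fold(text: str) -> str:
    """Case-fold and strip combining accents for comparison purposes."""
    decomposed = unicodedata.normalize("NFKD", text)
    stripped = "".join(ch for ch in decomposed if not unicodedata.combining(ch))
    return stripped.casefold()


print(fold("José") == fold("JOSE"))
print(fold("MÜLLER"))
```

Folding like this is best applied to a comparison copy of the value, leaving the original spelling intact in the record.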

Conclusion: Future-Proofing Your Data Strategy

Data is your organization’s most valuable asset, but only if it is accurate, accessible, and actionable. Data matching software and services are the foundation of any modern data strategy. They allow you to break down silos, reduce operational costs, and gain a significant competitive edge through a single, unified view of your data. Without these tools, organizations are left struggling with fragmented insights and increased risk.

As we move toward a future dominated by AI, machine learning, and large-scale analytics, the quality of your underlying data will determine your success. AI is only as good as the data it is trained on; “garbage in, garbage out” remains the golden rule of computing. By investing in robust data matching and entity resolution today, you are building the infrastructure necessary for the innovations of tomorrow.

At Lifebit, we are committed to helping organizations in biopharma, government, and healthcare harness the power of their data through secure, federated AI and advanced harmonization. We believe that the most sensitive data in the world should be the most useful, and that starts with ensuring it is correctly linked and understood.

Stop losing money to duplicates and fragmented insights. Start building your golden records today to unlock the full potential of your data ecosystem.