Beyond ETL: A Guide to Seamless Data Model Transformation

Why Data Model Transformation Powers Modern Analytics

Data model transformation is the process of converting data from one structure, format, or schema into another to make it usable for analysis, reporting, or integration. Organizations today collect data from more than 400 sources on average (apps, websites, APIs, IoT devices, electronic health records, and more), but this raw data is rarely ready for analytics without transformation.

Quick answer for those searching “data model transformation”:

- Definition: Converting data structures, formats, and schemas to prepare data for analysis

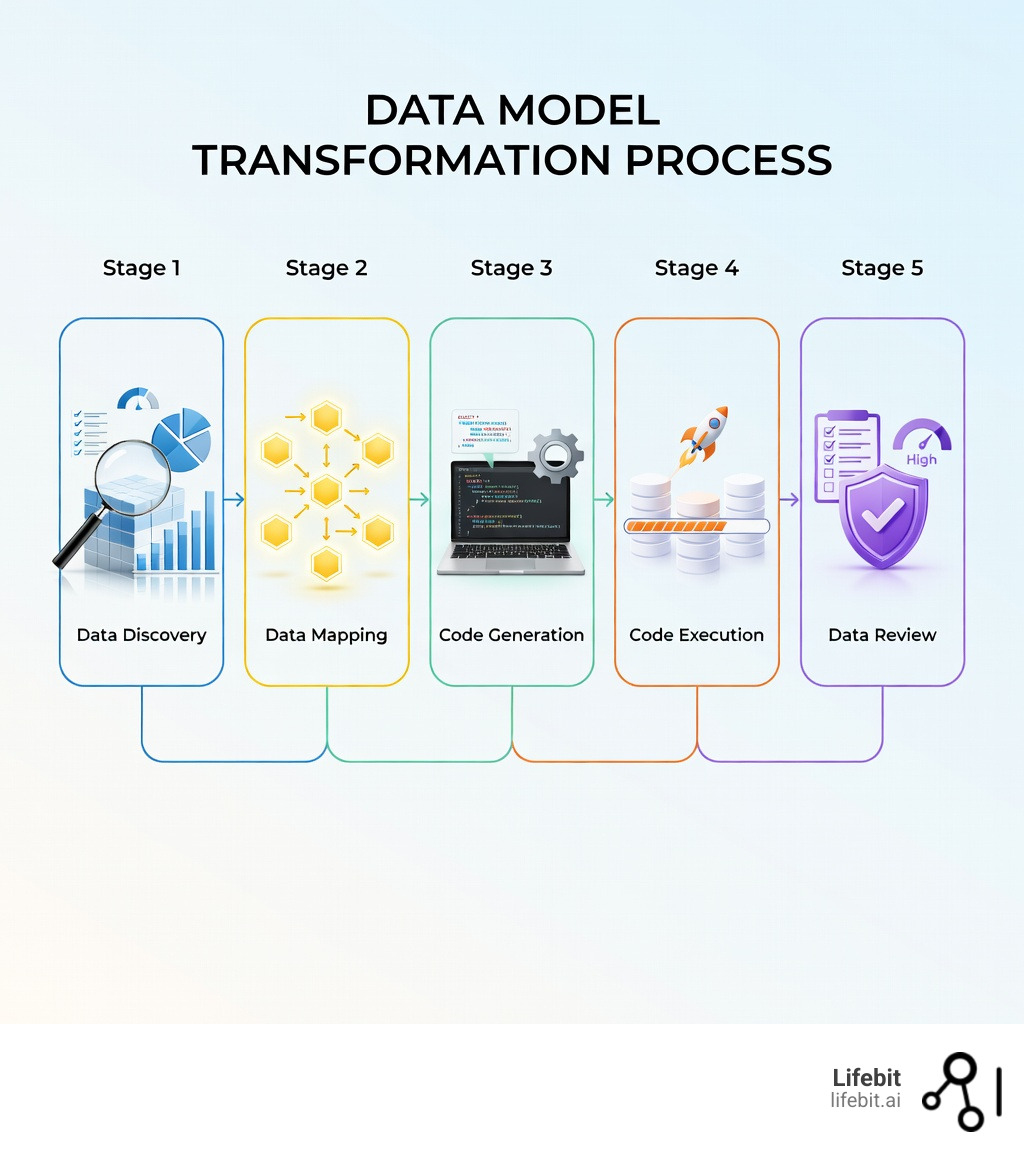

- Core processes: Data discovery → mapping → code generation → execution → review

- Main types: Constructive (creating features), destructive (removing irrelevant data), formatting (standardizing), structural (reorganizing)

- Key benefit: Transforms inconsistent raw data into standardized, analysis-ready datasets

- Common uses: ETL/ELT pipelines, data warehousing, machine learning preparation, multi-source integration

The challenge is particularly acute in healthcare and life sciences. When a pharmaceutical company needs to analyze patient records from dozens of hospitals, each using different data models, transformation becomes critical. One hospital might record dates as “MM-DD-YYYY” while another uses “YYYY-MM-DD.” Patient identifiers, drug names, and diagnosis codes rarely match across systems. Without transformation, this data chaos makes accurate analysis impossible.
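The date mismatch described above is a typical formatting fix that a transformation layer automates. Below is a minimal Python sketch, assuming each source's date format is already known from profiling; the `SOURCE_DATE_FORMATS` table and the hospital names are hypothetical. Every date is normalized to ISO 8601 (`YYYY-MM-DD`):

```python
from datetime import datetime

# Hypothetical per-source formats; in practice these come from data discovery/profiling.
SOURCE_DATE_FORMATS = {
    "hospital_a": "%m-%d-%Y",  # MM-DD-YYYY
    "hospital_b": "%Y-%m-%d",  # YYYY-MM-DD
}


def to_iso_date(raw: str, source: str) -> str:
    """Parse a date string with the source's known format and return it as ISO 8601."""
    fmt = SOURCE_DATE_FORMATS[source]
    return datetime.strptime(raw.strip(), fmt).date().isoformat()


records = [
    {"source": "hospital_a", "admitted": "03-14-2023"},
    {"source": "hospital_b", "admitted": "2023-03-14"},
]

for rec in records:
    rec["admitted"] = to_iso_date(rec["admitted"], rec["source"])

print([r["admitted"] for r in records])  # ['2023-03-14', '2023-03-14']
```

Looking up the format per source, rather than guessing it from the value, matters because a date like 03-04-2023 is ambiguous on its own.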

Data transformation cleans inconsistencies, fills missing values, removes duplicates, and standardizes formats so different datasets can work together. It is the foundation that makes everything else possible, from business intelligence dashboards to AI-powered drug discovery.

I’m Maria Chatzou Dunford, CEO and Co-founder of Lifebit, where I’ve spent over 15 years building platforms that handle data model transformation across federated biomedical environments, enabling researchers to analyze genomic and clinical data without moving it from secure locations. My work on Nextflow and computational biology tools has given me deep experience in transforming complex, multi-source health data for precision medicine.


Why Data Model Transformation Is the Key to Accurate Analytics

In the modern data stack, the ability to pivot and adapt your data structures is not just a technical luxury; it is a prerequisite for survival. When we talk about data model transformation, we are essentially talking about the bridge between “raw noise” and “actionable signal.”

High-quality analytics depend entirely on the integrity of the underlying data. If your data model is fragmented, your insights will be too. Research into model transformation approaches shows that automated modifications are essential for creating platform-specific models that maintain consistency across large-scale systems. For us at Lifebit, this means ensuring that a researcher in London can query data from a biobank in Singapore as if it were sitting in the same room.

Standardization is the secret sauce here. By utilizing data harmonization services, organizations can bridge the gap between disparate datasets, ensuring that every data point follows the same “rules of engagement.” This is particularly vital for machine learning; an AI model trained on inconsistent data is like a student learning from a textbook with missing pages—it simply won’t perform.

How data model transformation ensures accuracy

Accuracy isn’t just about getting the numbers right; it’s about ensuring the meaning remains intact. Data model transformation does this by enforcing consistency. For example, if one source records gender as “M/F” and another as “1/0,” a transformation layer maps both to a unified standard.

This process involves:

- Error Correction: Identifying and fixing malformed data during the mapping phase.

- Inconsistency Resolution: Ensuring that “Customer_ID” in your CRM matches “UserID” in your billing software.

- Advanced Harmonization: Moving beyond integration to truly understand the semantic relationships between data points.
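The gender-code and key-name examples above can be sketched as a small harmonization function. This is an illustration under stated assumptions, not a production mapping: the source names, code lists, and unified labels are hypothetical, and which of 1 and 0 means which is assumed here; real mappings must come from each system's data dictionary.

```python
# Hypothetical per-source code lists; the real ones come from each system's data dictionary.
GENDER_MAP = {
    "crm": {"M": "male", "F": "female"},
    "billing": {"1": "male", "0": "female"},  # assumed meaning of 1/0
}

# Hypothetical renames so both systems expose the same join key.
KEY_RENAMES = {
    "crm": {"Customer_ID": "customer_id"},
    "billing": {"UserID": "customer_id"},
}


def harmonize(row: dict, source: str) -> dict:
    """Rename source-specific keys and recode gender into the unified vocabulary."""
    renames = KEY_RENAMES[source]
    out = {renames.get(k, k): v for k, v in row.items()}
    code = str(out.pop("gender")).strip().upper()
    mapping = GENDER_MAP[source]
    if code not in mapping:
        # Error correction: flag malformed values instead of passing them downstream.
        raise ValueError(f"unmapped gender code {code!r} from source {source!r}")
    out["gender"] = mapping[code]
    return out


crm_row = harmonize({"Customer_ID": 42, "gender": "m"}, "crm")
billing_row = harmonize({"UserID": 42, "gender": 1, "balance": 10.0}, "billing")
print(crm_row)      # {'customer_id': 42, 'gender': 'male'}
print(billing_row)  # {'customer_id': 42, 'balance': 10.0, 'gender': 'male'}
```

Raising on unmapped codes, rather than defaulting them, keeps the error-correction step explicit: a new code in a source system surfaces immediately instead of silently skewing results.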

The Core Processes and Types of Data Transformation

Data model transformation isn’t a “one-and-done” task. It’s a structured journey that ensures the data remains reliable from extraction to visualization.

The Five Pillars of Transformation

- Data Discovery: We start by profiling the data. What do we have? Is it messy? (Spoiler: It usually is.)

- Data Mapping: This is where the “blueprint” is created. We define how a field in Source A becomes a field in Target B. In health data, this often involves following the OMOP Complete Guide to map clinical records to a common data model, the OMOP CDM.

- Code Generation: Once the map is ready, we generate the instructions (SQL, Python, or specialized DSLs) to move the data.

- Execution: The heavy lifting happens here. The code runs, and the data is reshaped.

- Review: We validate the output. Did the transformation break anything? Does the final row count match the source?
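The mapping, code generation, execution, and review steps above can be sketched end to end in Python, with the built-in `sqlite3` module standing in for a real database. The mapping spec, table names, and columns are hypothetical, and the generator interpolates names directly into SQL, so it assumes the mapping itself is trusted input.

```python
import sqlite3

# Mapping (hypothetical blueprint): target column -> SQL expression over the source table.
MAPPING = {
    "patient_id": "CAST(pid AS INTEGER)",
    "drug_name": "UPPER(TRIM(drug))",
    "dose_mg": "CAST(dose AS REAL)",
}


def generate_sql(source: str, target: str, mapping: dict) -> str:
    """Code generation: turn the mapping into a CREATE TABLE ... AS SELECT statement."""
    cols = ",\n  ".join(f"{expr} AS {name}" for name, expr in mapping.items())
    return f"CREATE TABLE {target} AS SELECT\n  {cols}\nFROM {source}"


conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE raw_rx (pid TEXT, drug TEXT, dose TEXT)")
conn.executemany(
    "INSERT INTO raw_rx VALUES (?, ?, ?)",
    [("001", " aspirin ", "81"), ("002", "Metformin", "500")],
)

sql = generate_sql("raw_rx", "clean_rx", MAPPING)
conn.execute(sql)  # Execution: reshape the data.

# Review: row counts must match, and no mapped column should be NULL.
src_count = conn.execute("SELECT COUNT(*) FROM raw_rx").fetchone()[0]
dst_count = conn.execute("SELECT COUNT(*) FROM clean_rx").fetchone()[0]
assert src_count == dst_count, "row count mismatch"
for name in MAPPING:
    nulls = conn.execute(f"SELECT COUNT(*) FROM clean_rx WHERE {name} IS NULL").fetchone()[0]
    assert nulls == 0, f"unexpected NULLs in {name}"

print(conn.execute("SELECT * FROM clean_rx ORDER BY patient_id").fetchall())
# [(1, 'ASPIRIN', 81.0), (2, 'METFORMIN', 500.0)]
```

Keeping the mapping as data, separate from the generated SQL, means the blueprint can be reviewed and versioned on its own and the code regenerated when it changes.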

Interestingly, modern systems are moving toward bidirectional model transformations, which allow changes in one model to reflect back in another, keeping entire ecosystems in sync.
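One common way to formalize bidirectional transformations is a "lens": a pair of functions, `get` (source to view) and `put` (source plus edited view to updated source). The sketch below illustrates that general idea rather than any specific system, and the record fields are hypothetical. The two assertions at the end are the standard round-trip laws a well-behaved lens satisfies.

```python
from dataclasses import dataclass, replace


@dataclass(frozen=True)
class SourceRecord:
    """Hypothetical source model; `site` is not visible in the view."""
    patient_id: int
    weight_kg: float
    site: str


def get(src: SourceRecord) -> dict:
    """Forward direction: derive the target view from the source."""
    return {"id": src.patient_id, "weight": src.weight_kg}


def put(src: SourceRecord, view: dict) -> SourceRecord:
    """Backward direction: write an edited view back, keeping hidden fields intact."""
    return replace(src, patient_id=view["id"], weight_kg=view["weight"])


src = SourceRecord(patient_id=7, weight_kg=70.0, site="London")
view = get(src)
view["weight"] = 72.5          # an edit made in the target model...
updated = put(src, view)       # ...flows back into the source model
print(updated)  # SourceRecord(patient_id=7, weight_kg=72.5, site='London')

# Round-trip laws: GetPut (no edit means no change) and PutGet (edits survive).
assert put(src, get(src)) == src
assert get(put(src, view)) == view
```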

Integrating data model transformation into ETL and ELT workflows

The debate between ETL (Extract, Transform, Load) and ELT (Extract, Load, Transform) has largely shifted in favor of the latter, thanks to powerful cloud warehouses like Snowflake and BigQuery. In an ELT workflow, we load raw data first and then perform data model transformation within the warehouse itself.

For life sciences, tools like the Data Factory Map to OMOP allow us to orchestrate these transformations at scale, keeping pipelines efficient even when dealing with petabytes of genomic data.

| Feature | ETL (Traditional) | ELT (Modern) |
|---|---|---|
| Transformation Timing | Before loading | After loading |
| Flexibility | Lower (rigid schema) | Higher (transform as needed) |
| Scalability | Limited by processing server | Elastic (scales with cloud warehouse compute) |
| Best For | On-premise, small datasets | Big data, AI/ML, cloud |
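The ELT pattern compared above can be sketched with Python's built-in `sqlite3` standing in for a cloud warehouse; the tables and columns are hypothetical. Raw rows are loaded untouched, and the transformation then runs as SQL inside the database.

```python
import sqlite3

# sqlite3 stands in for a cloud warehouse; tables and columns are hypothetical.
conn = sqlite3.connect(":memory:")

# Extract + Load: land raw records as-is (all text, messy, with a duplicate).
conn.execute("CREATE TABLE raw_orders (order_id TEXT, amount TEXT, country TEXT)")
conn.executemany(
    "INSERT INTO raw_orders VALUES (?, ?, ?)",
    [("1", "19.99", " uk"), ("2", "5.00", "US "), ("2", "5.00", "US ")],
)

# Transform: run SQL inside the "warehouse" after loading.
conn.execute(
    """
    CREATE TABLE orders AS
    SELECT DISTINCT
        CAST(order_id AS INTEGER) AS order_id,
        CAST(amount AS REAL)      AS amount,
        UPPER(TRIM(country))      AS country
    FROM raw_orders
    """
)

print(conn.execute("SELECT * FROM orders ORDER BY order_id").fetchall())
# [(1, 19.99, 'UK'), (2, 5.0, 'US')]
```

Because the raw table is kept, the transformation can be rewritten and rerun without re-extracting from the source, which is the flexibility the table attributes to ELT.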

Constructive, destructive, formatting, and structural transformations

Not all transformations are created equal. We generally categorize them into four buckets:

- Constructive: Adding value. Think “Feature Engineering”—creating a “BMI” column from “Height” and “Weight.”

- Destructive: Pruning the hedges. Removing irrelevant columns or duplicates to save space and improve focus.

- Formatting: The “aesthetic” fix. Changing date formats or currency symbols.

- Structural: The “architectural” fix. Moving from a flat Excel sheet to a relational star schema.
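All four transformation types can be shown on one small, hypothetical flat extract. This sketch applies them in the order a pipeline would typically run them (prune, format, derive, restructure), and the final split into a patient table and a visit table is only a miniature version of the dimension/fact layout used in star schemas.

```python
from datetime import datetime

# Hypothetical flat extract, e.g. exported from a spreadsheet.
raw = [
    {"id": 1, "height_m": 1.75, "weight_kg": 70.0, "visit": "03/14/2023", "fax": "555-0100"},
    {"id": 1, "height_m": 1.75, "weight_kg": 70.0, "visit": "03/14/2023", "fax": "555-0100"},
    {"id": 2, "height_m": 1.60, "weight_kg": 64.0, "visit": "07/01/2023", "fax": None},
]

# Destructive: drop an irrelevant column, then drop exact duplicate rows.
rows, seen = [], set()
for record in raw:
    kept = {k: v for k, v in record.items() if k != "fax"}
    key = tuple(sorted(kept.items()))
    if key not in seen:
        seen.add(key)
        rows.append(kept)

# Formatting: standardize MM/DD/YYYY dates to ISO 8601.
for r in rows:
    r["visit"] = datetime.strptime(r["visit"], "%m/%d/%Y").date().isoformat()

# Constructive: derive a feature, BMI = weight (kg) / height (m) squared.
for r in rows:
    r["bmi"] = round(r["weight_kg"] / r["height_m"] ** 2, 1)

# Structural: split the flat rows into a patient dimension and a visit fact table.
dim_patient = {r["id"]: {"height_m": r["height_m"], "weight_kg": r["weight_kg"]} for r in rows}
fact_visit = [{"patient_id": r["id"], "visit_date": r["visit"], "bmi": r["bmi"]} for r in rows]

print(fact_visit)
# [{'patient_id': 1, 'visit_date': '2023-03-14', 'bmi': 22.9}, {'patient_id': 2, 'visit_date': '2023-07-01', 'bmi': 25.0}]
```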

Best Practices for Implementing Data Model Transformation

If you want to avoid a “data swamp,” you need to follow a few ground rules. First, always use automated data profiling. Manually checking 400 sources is a recipe for burnout.
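The automated profiling recommended above can start very simply. The plain-Python sketch below counts nulls (treating empty strings as missing), distinct values, and the Python types observed per column; the sample rows are hypothetical, and it assumes scalar (hashable) values. Dedicated profiling tools go much further, but even this flags the mixed-type `patient_id` column.

```python
from collections import Counter


def profile(rows: list[dict]) -> dict:
    """Return per-column null counts, distinct counts, and observed value types."""
    columns = sorted({k for r in rows for k in r})
    report = {}
    for col in columns:
        values = [r.get(col) for r in rows]
        present = [v for v in values if v is not None and v != ""]
        report[col] = {
            "nulls": len(values) - len(present),
            "distinct": len(set(present)),
            "types": dict(Counter(type(v).__name__ for v in present)),
        }
    return report


# Hypothetical sample from one source.
sample = [
    {"patient_id": 1, "dob": "1980-01-02", "sex": "F"},
    {"patient_id": 2, "dob": "02/03/1975", "sex": None},
    {"patient_id": "3", "dob": "", "sex": "M"},
]

for col, stats in profile(sample).items():
    print(col, stats)
```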

The Golden Rules of Transformation

- Standardize Early: Use consistent naming conventions. If you use snake_case in one table and CamelCase in another, your developers will eventually want to have a very stern word with you.

- Handle Missing Data Gracefully: Decide whether to fill gaps (imputation) or exclude them. This is one of the biggest technical challenges in health data.

- Validate, Validate, Validate: Implement automated checks to ensure the data adheres to interoperability standards.
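The three rules above can be sketched together: normalize column names to snake_case, impute missing values while flagging them, and run a validation rule before the batch moves on. Median imputation is only one option, and the heart-rate plausibility range is a hypothetical rule; the column names and values are illustrative.

```python
import re
from statistics import median


def to_snake_case(name: str) -> str:
    """Normalize 'CamelCase', 'mixedCase', or 'Spaced Name' column names to snake_case."""
    name = re.sub(r"[\s\-]+", "_", name.strip())
    name = re.sub(r"(?<=[a-z0-9])(?=[A-Z])", "_", name)
    return name.lower()


def impute_median(rows: list[dict], col: str) -> list[dict]:
    """Fill missing values in `col` with the observed median and flag imputed rows."""
    fill = median(r[col] for r in rows if r.get(col) is not None)
    out = []
    for r in rows:
        r = dict(r)  # copy so the input rows are left untouched
        r[f"{col}_imputed"] = r.get(col) is None
        if r[f"{col}_imputed"]:
            r[col] = fill
        out.append(r)
    return out


def validate(rows: list[dict]) -> list[str]:
    """Return rule violations; an empty list means the batch passes."""
    errors = []
    for i, r in enumerate(rows):
        hr = r.get("heart_rate")
        if hr is None or not 0 < hr < 300:  # hypothetical plausibility range (bpm)
            errors.append(f"row {i}: implausible heart_rate {hr!r}")
    return errors


raw = [
    {"PatientId": 1, "Heart Rate": 72},
    {"PatientId": 2, "Heart Rate": None},
    {"PatientId": 3, "Heart Rate": 80},
]
rows = [{to_snake_case(k): v for k, v in r.items()} for r in raw]
rows = impute_median(rows, "heart_rate")
print(rows[1])         # {'patient_id': 2, 'heart_rate': 76.0, 'heart_rate_imputed': True}
print(validate(rows))  # []
```

The `_imputed` flag keeps filled values distinguishable downstream; in health data, whether to impute or exclude depends on why the values are missing.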

Overcoming common implementation challenges

The biggest problems are usually latency and governance. When real-time insights are expected, slow transformation pipelines can kill a project. Furthermore, when dealing with clinical data, you cannot just move data around willy-nilly. You need to ensure that every transformation is logged, audited, and compliant with regulations like GDPR or HIPAA.
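One way to make every transformation step leave an audit trail is a decorator that records each run. The sketch below logs the step name, a UTC timestamp, row counts, and a SHA-256 fingerprint of the output rather than the (possibly sensitive) rows themselves. `AUDIT_LOG` and `drop_missing_ids` are hypothetical, and logging alone does not make a pipeline GDPR or HIPAA compliant; it only supports auditability.

```python
import hashlib
import json
from datetime import datetime, timezone
from functools import wraps
from typing import Callable

Rows = list[dict]

# Hypothetical in-memory log; a real deployment would write to durable, append-only storage.
AUDIT_LOG: list[dict] = []


def audited(step: Callable[[Rows], Rows]) -> Callable[[Rows], Rows]:
    """Record every run of a transformation step: name, UTC time, row counts, output hash."""

    @wraps(step)
    def wrapper(rows: Rows) -> Rows:
        result = step(rows)
        # Fingerprint the output instead of logging the rows themselves.
        payload = json.dumps(result, sort_keys=True, default=str).encode("utf-8")
        AUDIT_LOG.append(
            {
                "step": step.__name__,
                "at": datetime.now(timezone.utc).isoformat(),
                "rows_in": len(rows),
                "rows_out": len(result),
                "output_sha256": hashlib.sha256(payload).hexdigest(),
            }
        )
        return result

    return wrapper


@audited
def drop_missing_ids(rows: Rows) -> Rows:
    """Example step: remove records without a patient identifier."""
    return [r for r in rows if r.get("patient_id") is not None]


clean = drop_missing_ids([{"patient_id": 1}, {"patient_id": None}])
entry = AUDIT_LOG[-1]
print(entry["step"], entry["rows_in"], entry["rows_out"])  # drop_missing_ids 2 1
```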

Real-World Use Cases: From Telemetry to Precision Medicine

Data model transformation isn’t just for IT departments; it’s saving lives and making businesses smarter.

- Precision Medicine: By harmonizing disparate electronic health records, we enable researchers to find patterns in rare diseases that were previously hidden in siloed data.

- Financial Services: Banks use data model transformation to merge real-time telemetry from mobile apps with historical transaction data to detect fraud in milliseconds.

- E-commerce: Retailers aggregate daily sales into weekly trends and turn raw “clicks” into “customer lifetime value” metrics.
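The daily-to-weekly aggregation in the e-commerce example is a simple structural transformation. A minimal sketch with hypothetical revenue figures, grouping by ISO calendar week (weeks start on Monday):

```python
from collections import defaultdict
from datetime import date

# Hypothetical daily revenue.
daily_sales = {
    date(2024, 1, 1): 120.0,   # Monday, ISO week 1
    date(2024, 1, 3): 80.0,
    date(2024, 1, 8): 200.0,   # Monday, ISO week 2
    date(2024, 1, 14): 50.0,   # Sunday, still ISO week 2
}

weekly: defaultdict = defaultdict(float)
for day, revenue in daily_sales.items():
    iso_year, iso_week, _ = day.isocalendar()
    weekly[(iso_year, iso_week)] += revenue

print(dict(weekly))  # {(2024, 1): 200.0, (2024, 2): 250.0}
```

Keying on the ISO year as well as the week avoids merging the first days of January into the previous year's week 52 or 53.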

Our work with the Lifebit Data Bridge is a prime example. We allow organizations to transform and analyze their data where it lives, removing the need for risky data transfers while maintaining the highest standards of research precision.
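The idea of analyzing data where it lives can be illustrated with a generic federated computation. This is not a description of how the Lifebit Data Bridge is implemented: in this sketch each site computes summary statistics locally and only those aggregates are combined centrally. The site data and function names are hypothetical, and real deployments add access controls and disclosure checks (for example, minimum group sizes) on top.

```python
from dataclasses import dataclass


@dataclass
class SiteSummary:
    """Aggregate statistics a site shares instead of row-level records."""
    n: int
    total: float


def local_summary(values: list[float]) -> SiteSummary:
    """Runs inside each site's environment; only the summary leaves."""
    return SiteSummary(n=len(values), total=sum(values))


def pooled_mean(summaries: list[SiteSummary]) -> float:
    """Combine per-site summaries into the mean over all records."""
    n = sum(s.n for s in summaries)
    return sum(s.total for s in summaries) / n


# Hypothetical measurements held at two sites; these lists never leave their site.
site_a = [5.0, 7.0]
site_b = [6.0, 6.0, 9.0]
print(pooled_mean([local_summary(site_a), local_summary(site_b)]))  # 6.6
```

The pooled result equals the mean over all five records, even though no individual record was transferred.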

Conclusion

At Lifebit, we believe that data shouldn’t be a barrier to innovation. By mastering data model transformation, organizations can unlock the full potential of their data assets, whether they are in London, New York, or spread across five continents. Our federated AI platform is built to handle these complexities, providing secure real-time access to global biomedical data so you can focus on the “Aha!” moments of discovery rather than the “Oh no” moments of data cleaning.

Frequently Asked Questions

What is the difference between data transformation and data modeling?

Data modeling is the blueprint: the design of how data should be structured. Data model transformation is the construction: the actual process of reshaping data to fit that blueprint.

Why is automation necessary for large-scale transformations?

With the average company using over 400 data sources, manual transformation is impractical. Automation ensures speed, reduces human error, and lets pipelines scale as your data grows.

How does transformation impact machine learning model training?

ML models are only as good as the data they consume. Transformation ensures that features are cleaned, normalized, and standardized, which typically improves accuracy and makes predictions more reliable, especially for models that are sensitive to feature scale.
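Two common feature-scaling transformations are z-score standardization (mean 0, standard deviation 1) and min-max normalization (rescaled to [0, 1]). A minimal plain-Python sketch, with constant features mapped to 0.0 to avoid division by zero; the `ages` values are hypothetical:

```python
from statistics import mean, pstdev


def z_score(values: list[float]) -> list[float]:
    """Standardize to mean 0 and (population) standard deviation 1."""
    mu, sigma = mean(values), pstdev(values)
    if sigma == 0:
        return [0.0 for _ in values]  # constant feature: nothing to scale
    return [(v - mu) / sigma for v in values]


def min_max(values: list[float]) -> list[float]:
    """Rescale linearly to the [0, 1] range."""
    lo, hi = min(values), max(values)
    if hi == lo:
        return [0.0 for _ in values]
    return [(v - lo) / (hi - lo) for v in values]


ages = [20.0, 40.0, 60.0]
print(min_max(ages))  # [0.0, 0.5, 1.0]
print(z_score(ages))  # roughly [-1.22, 0.0, 1.22]
```

In a real training pipeline, the mean, standard deviation, minimum, and maximum would be computed on the training data only and reused for validation and test data, so that no information leaks from the evaluation set.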