No Cost, Big Impact: Free Fuzzy Data Matching Software You Need

Why Accurate Data Matching Matters for Your Research

Free data matching software helps you identify duplicate records, link related data across systems, and clean messy datasets—without spending a dollar. In an era where data is generated at an unprecedented rate, the ability to reconcile disparate information sources is no longer a luxury; it is a fundamental requirement for any data-driven organization. Whether you are a solo researcher or part of a large-scale clinical trial, the integrity of your results depends entirely on the quality of your underlying data.

| Tool | Best For | Key Feature |
|---|---|---|
| OpenRefine | Data cleaning and transformation | No-code, runs locally |
| Datablist | Fast online matching | Exact, phonetic, and fuzzy algorithms |
| Python Record Linkage Toolkit | Small to medium datasets | Research-focused library |
| Splink | Large-scale linkage | Probabilistic matching at scale |
| Zingg | ML-based entity resolution | Unified views across silos |

Data matching—also called record linkage or entity resolution—is the process of identifying records that refer to the same real-world entity across one or more datasets. This process is inherently complex because real-world data is rarely standardized. A single individual might appear as “J. Doe” in one database, “Johnathan Doe” in another, and “John Doe” in a third. Without sophisticated matching logic, these three records remain isolated, leading to fragmented insights and duplicated efforts.

Whether you’re consolidating patient records, detecting financial fraud, or building a Customer 360 view, accurate matching is essential. Poor data quality costs organizations millions in lost insights, wasted resources, and regulatory risk. In the healthcare sector, for instance, failing to match a patient’s record across different departments can lead to dangerous medical errors or redundant testing.

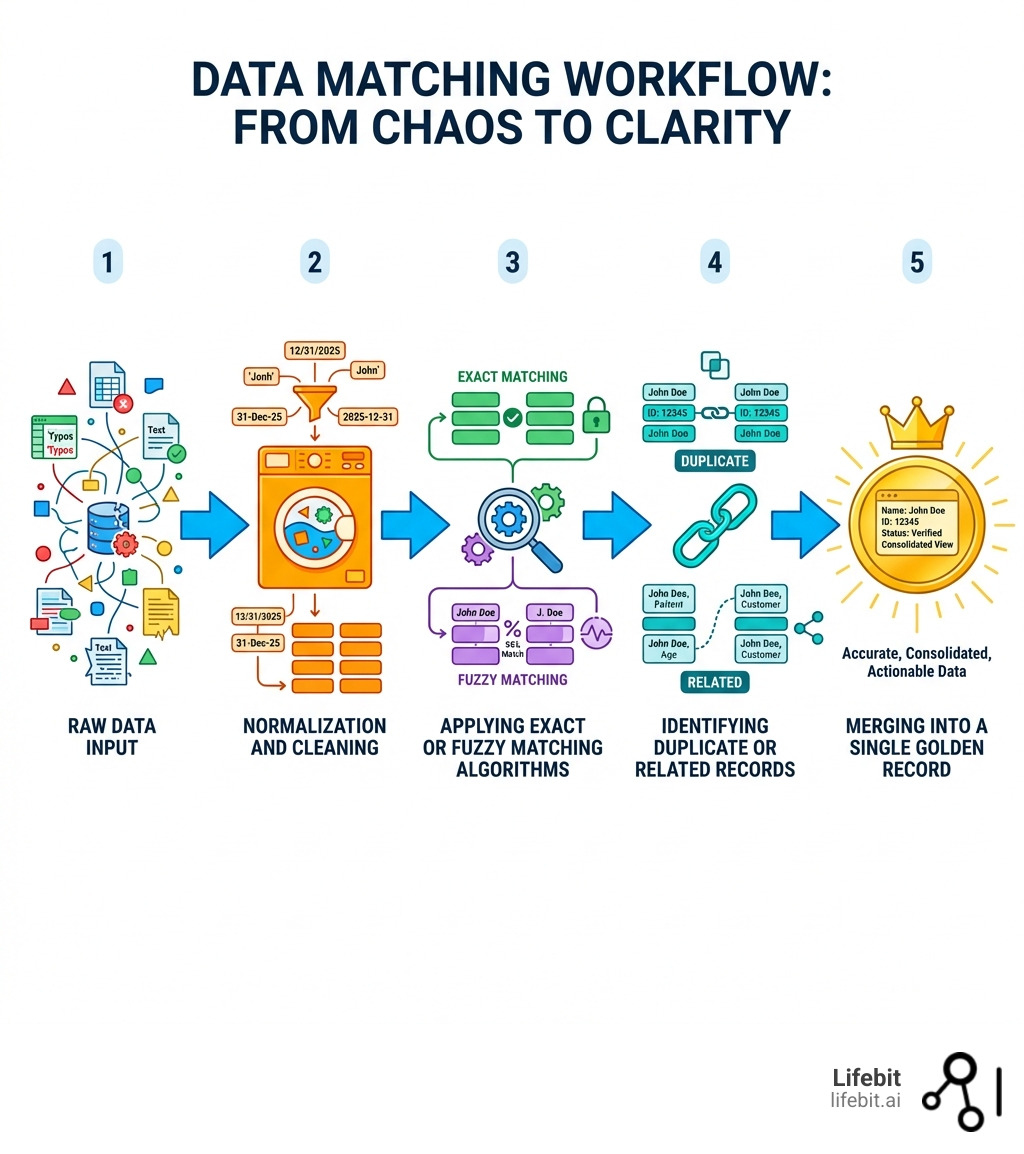

The challenge? Real-world data is messy. Names are misspelled. Addresses are incomplete. Records lack common identifiers like Social Security Numbers or unique IDs. That’s where free data matching software comes in—it uses exact matching, fuzzy logic, phonetic algorithms, and machine learning to find connections your spreadsheets can’t. These tools bridge the gap between raw, chaotic data and a structured, reliable “Golden Record.”

I’m Dr. Maria Chatzou Dunford, CEO and Co-founder of Lifebit, where I’ve spent over 15 years building tools for genomic and biomedical data integration. Throughout my career, I’ve seen how the right free data matching software can transform raw, fragmented data into actionable insights—especially in regulated environments where precision matters. In the world of genomics, where we deal with petabytes of data, even a 1% error rate in data matching can lead to completely different scientific conclusions. Whether you’re a researcher, data analyst, or healthcare leader, this guide will help you choose the right tool and start cleaning your data today.

Why Data Matching Is Critical for Accurate Analytics

We know that data is the lifeblood of modern research, but raw data is rarely “ready to wear.” According to the Wikipedia page on Record Linkage, data matching is the procedure of bringing together information from two or more records believed to belong to the same entity. Without it, your analytics are built on a foundation of duplicates and fragments, which can lead to the “garbage in, garbage out” phenomenon where even the most advanced AI models produce flawed results.

The High Cost of Data Fragmentation

Imagine a public health database where “John Smith” and “Jon Smith” are listed separately because of a typo. Without free data matching software, a researcher might double-count this individual, leading to skewed statistics regarding disease prevalence or treatment efficacy. In a commercial context, this fragmentation leads to “marketing waste,” where the same customer receives multiple identical catalogs or emails, damaging brand reputation and wasting budget.

Data matching solves these issues by using various techniques that go beyond simple VLOOKUPs in Excel:

- Exact Matching: This is the simplest form, comparing fields for a 100% character-for-character match. It is highly effective for unique identifiers like Social Security Numbers, National Provider Identifiers (NPI), or SKU numbers. However, it fails the moment a single character is out of place.

- Fuzzy Matching: This uses string similarity metrics to find “near matches.” For example, it can recognize that “123 Main St.” and “123 Main Street” are likely the same location. Common algorithms include Levenshtein distance, which counts the number of edits (insertions, deletions, substitutions) needed to turn one string into another.

- Phonetic Algorithms: These match names that sound the same but are spelled differently. Algorithms like Soundex or Metaphone convert words into a code based on their pronunciation. This is particularly useful in international datasets where names like “Smyth” and “Smith” or “Rodriguez” and “Rodrigues” are common variations.

- Geospatial Matching: Advanced tools can match records based on geographic proximity, recognizing that two records with slightly different address strings actually point to the same latitude and longitude coordinates.
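
Two of the techniques above can be sketched in a few lines of standard-library Python. This is a minimal illustration, not the implementation used by any tool named in this guide: `levenshtein` counts single-character edits, and `soundex` follows the common American Soundex rules. Both function names are chosen here for illustration.

```python
def levenshtein(a: str, b: str) -> int:
    """Count the insertions, deletions, and substitutions needed to turn a into b."""
    previous = list(range(len(b) + 1))
    for i, char_a in enumerate(a, start=1):
        current = [i]
        for j, char_b in enumerate(b, start=1):
            cost = 0 if char_a == char_b else 1
            current.append(min(
                previous[j] + 1,         # delete char_a
                current[j - 1] + 1,      # insert char_b
                previous[j - 1] + cost,  # substitute (or keep if equal)
            ))
        previous = current
    return previous[-1]


# Letter groups used by American Soundex; vowels, H, W, and Y have no code.
SOUNDEX_CODES = {
    **dict.fromkeys("BFPV", "1"),
    **dict.fromkeys("CGJKQSXZ", "2"),
    **dict.fromkeys("DT", "3"),
    "L": "4",
    **dict.fromkeys("MN", "5"),
    "R": "6",
}


def soundex(name: str) -> str:
    """Return the four-character Soundex code for a name."""
    letters = [c for c in name.upper() if c.isalpha()]
    if not letters:
        return ""
    code = letters[0]
    last = SOUNDEX_CODES.get(letters[0], "")
    for c in letters[1:]:
        digit = SOUNDEX_CODES.get(c, "")
        if digit and digit != last:
            code += digit
        if c not in "HW":  # H and W do not separate repeated codes
            last = digit
    return (code + "000")[:4]


print(levenshtein("123 Main St.", "123 Main Street"))  # 4
print(soundex("Smyth"), soundex("Smith"))              # S530 S530
```

An edit distance of 4 on a 15-character address is small enough to flag the pair for comparison, while the identical Soundex codes show why phonetic keys are useful for grouping name variants before any finer comparison.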

By removing duplicates and bridging data silos, we create a “Customer 360” or “Patient 360” view. This is vital for fraud detection, where criminals often use slight variations of an identity (e.g., changing a birth year or middle initial) to open multiple accounts. In clinical research, it ensures that patient journeys are tracked accurately across different hospitals, pharmacies, and labs, providing a holistic view of health outcomes.

7 Ways to Use Free Data Matching Software to Clean Your Records

When we look for free data matching software, we usually encounter two types: Free and Open-Source Software (FOSS) and “Freemium” online tools. Both offer powerful ways to clean records without the heavy price tag of enterprise suites like IBM InfoSphere or Informatica. Choosing between them depends on your technical comfort level and the sensitivity of your data.

| Approach | Best Use Case | Technical Level |
|---|---|---|
| Local Open Source | Highly sensitive data (PII) | Intermediate |
| No-Code Online Tools | Marketing lists & CSVs | Beginner |
| Python Libraries | Large-scale research | Advanced |
| ML-Based Linking | Complex entity resolution | Advanced |

Data Matching with Free and Open Approaches

Clean Messy Data with OpenRefine: Originally known as Google Refine, OpenRefine is a power tool for “messy” data. It runs as a local server on your computer, so your data stays on your machine. That is a critical feature for our colleagues in the UK, Europe, and Canada, and for anyone handling sensitive information under regulations such as GDPR or, in the US, HIPAA. It allows you to “cluster” similar entries using algorithms like Fingerprint, N-Gram Fingerprint, and Metaphone. You can merge thousands of variations of a single name into a standard format with just a few clicks. Furthermore, OpenRefine supports GREL (General Refine Expression Language), allowing for complex data transformations that go far beyond standard spreadsheet functions.
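
To make the clustering idea concrete, here is a rough sketch of a fingerprint-style key-collision method. It is written in the spirit of OpenRefine's Fingerprint clustering but is not OpenRefine's code, and the function names `fingerprint` and `cluster_by_fingerprint` are illustrative only.

```python
import re
import unicodedata
from collections import defaultdict


def fingerprint(value: str) -> str:
    """Build a key that ignores case, accents, punctuation, and word order."""
    # Decompose accented characters, then drop the non-ASCII combining marks.
    ascii_text = (
        unicodedata.normalize("NFKD", value)
        .encode("ascii", "ignore")
        .decode("ascii")
    )
    cleaned = re.sub(r"[^\w\s]", "", ascii_text.strip().lower())
    # Sorting the unique tokens makes "Doe, John" and "john doe" collide.
    return " ".join(sorted(set(cleaned.split())))


def cluster_by_fingerprint(values):
    """Group values that share a fingerprint; return only groups with 2+ members."""
    clusters = defaultdict(list)
    for value in values:
        clusters[fingerprint(value)].append(value)
    return [group for group in clusters.values() if len(group) > 1]


names = ["Doe, John", "john doe", "John  Doe.", "Jane Roe"]
print(cluster_by_fingerprint(names))
```

Each cluster is a candidate for merging into one standard spelling; values that share no key with anything else are left alone.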

Normalize Names with Datablist: For those who prefer a web-based experience without the need for local installation, Datablist offers a generous free plan. It includes a specialized “Name Parser” that can split “Dr. John Doe Jr.” into its constituent parts: Title (Dr.), First Name (John), Last Name (Doe), and Suffix (Jr.). By standardizing these fields before matching, you significantly increase the accuracy of your deduplication efforts. Datablist is particularly effective for small business owners or marketing teams who need to clean up mailing lists quickly.
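
The idea of splitting a name into parts before matching can be illustrated with a small rule-based sketch. This is not Datablist's parser: the `TITLES` and `SUFFIXES` sets and the `parse_name` function are hypothetical, and real names need far more rules than this.

```python
# Hypothetical lookup sets; a production parser would need much longer lists.
TITLES = {"dr", "mr", "mrs", "ms", "prof"}
SUFFIXES = {"jr", "sr", "ii", "iii", "phd", "md"}


def parse_name(full_name: str) -> dict:
    """Split a full name into title, first, middle, last, and suffix."""
    tokens = full_name.replace(",", " ").split()
    parts = {"title": "", "first": "", "middle": "", "last": "", "suffix": ""}
    if tokens and tokens[0].rstrip(".").lower() in TITLES:
        parts["title"] = tokens.pop(0)
    if tokens and tokens[-1].rstrip(".").lower() in SUFFIXES:
        parts["suffix"] = tokens.pop()
    if tokens:
        parts["first"] = tokens.pop(0)
    if tokens:
        parts["last"] = tokens.pop()
    # Whatever remains between first and last is treated as middle name(s).
    parts["middle"] = " ".join(tokens)
    return parts


print(parse_name("Dr. John Doe Jr."))
```

Once names are split this way, you can compare first and last names separately, which avoids penalizing a record just because one source includes a title or suffix.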

Automate Deduplication with Zingg: Tools like Zingg represent the next generation of data matching. Zingg uses machine learning to identify duplicates, which is a game-changer for complex datasets. Instead of writing hundreds of “if-then” rules (e.g., “if name matches and zip code matches, then link”), you simply “train” the software on a few dozen examples of matches and non-matches. The ML model then learns the underlying patterns of your specific data quirks, such as common nicknames or regional address formats, and applies that logic to millions of records.

Link Across Collections with Splink: If you have two different datasets—say, a list of clinical trial participants and a separate lab results file—tools like Splink can perform probabilistic record linkage at scale. Developed by the UK Ministry of Justice, Splink is designed to handle millions of rows efficiently. It implements the Fellegi-Sunter model, the gold standard for probabilistic linkage, and can run on backends like DuckDB or Apache Spark, making it one of the most powerful free tools available for data scientists.

Use Smart Matching Algorithms: Don’t just rely on exact matches. Use algorithms like Jaro-Winkler, which is specifically designed for short strings like person names, giving more weight to matches at the beginning of the string. Another powerful tool is the Levenshtein distance, which is ideal for catching typos. By combining multiple algorithms, you can create a “weighted” matching score that provides a much more nuanced view of data similarity than a simple “yes/no” match.
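
As a rough sketch of how such a combined score might look, the code below implements Jaro-Winkler by hand and blends it with the standard library's `difflib.SequenceMatcher` ratio, which stands in here for a second general-purpose similarity measure. The 0.6/0.4 weighting and the `combined_score` name are assumptions for illustration, not recommended values.

```python
from difflib import SequenceMatcher


def jaro(s1: str, s2: str) -> float:
    """Jaro similarity: shared characters within a window, minus transpositions."""
    if s1 == s2:
        return 1.0
    len1, len2 = len(s1), len(s2)
    if len1 == 0 or len2 == 0:
        return 0.0
    window = max(max(len1, len2) // 2 - 1, 0)
    matched1 = [False] * len1
    matched2 = [False] * len2
    matches = 0
    for i, ch in enumerate(s1):
        for j in range(max(0, i - window), min(len2, i + window + 1)):
            if not matched2[j] and s2[j] == ch:
                matched1[i] = matched2[j] = True
                matches += 1
                break
    if matches == 0:
        return 0.0
    seq1 = [c for c, m in zip(s1, matched1) if m]
    seq2 = [c for c, m in zip(s2, matched2) if m]
    transpositions = sum(a != b for a, b in zip(seq1, seq2)) // 2
    return (matches / len1 + matches / len2
            + (matches - transpositions) / matches) / 3


def jaro_winkler(s1: str, s2: str, prefix_scale: float = 0.1) -> float:
    """Boost the Jaro score for strings sharing a prefix of up to 4 characters."""
    score = jaro(s1, s2)
    prefix = 0
    for a, b in zip(s1[:4], s2[:4]):
        if a != b:
            break
        prefix += 1
    return score + prefix * prefix_scale * (1 - score)


def combined_score(a: str, b: str, jw_weight: float = 0.6) -> float:
    """Weighted blend of Jaro-Winkler and difflib's ratio (weights are illustrative)."""
    ratio = SequenceMatcher(None, a, b).ratio()
    return jw_weight * jaro_winkler(a, b) + (1 - jw_weight) * ratio


print(round(jaro_winkler("MARTHA", "MARHTA"), 4))  # 0.9611
print(round(combined_score("johnathan", "jonathan"), 3))
```

In practice the weights would be tuned against a sample of known matches and non-matches rather than picked by hand.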

Leverage a Merging Assistant: Many free tools now include a User Interface (UI) that shows you two potential matches side-by-side. This “human-in-the-loop” approach is essential for high-stakes data, such as medical records. The software does the heavy lifting of finding potential matches, but a human expert makes the final call on whether to merge them. This ensures that you maintain high precision while still benefiting from the speed of automation.

Maintain Data Privacy with Local Processing: In the biomedical field, data privacy is paramount. By using tools that run locally (like OpenRefine or Python libraries), we ensure that sensitive Protected Health Information (PHI) never leaves our secure environment. This avoids the security risks associated with uploading sensitive data to third-party cloud services, ensuring compliance with institutional review boards (IRB) and international privacy laws.

Must-Have Features in Free Data Matching Software

Not all free data matching software is created equal. When we evaluate tools for our projects at Lifebit, we look for these specific capabilities to ensure the tool can grow with the project’s complexity:

Graphical User Interface (GUI): For many researchers and analysts, a Graphical User Interface (GUI) is non-negotiable. It allows non-technical users to visualize the data, see the effects of different cleaning steps in real-time, and manually inspect matching results. A good GUI reduces the learning curve and makes the tool accessible to the entire team, not just the data engineers.

Application Programming Interface (API): For developers and data engineers, an Application Programming Interface (API) is essential. It allows you to integrate the matching process directly into larger data pipelines. For example, you could set up an automated workflow where new data entering a system is automatically cleaned and matched against existing records before being stored in a database.

Machine Learning Capabilities: Advanced tools offer Supervised Learning, where the model learns from labeled examples provided by the user. This is particularly useful for datasets with complex, non-obvious relationships. Some tools also offer Unsupervised Learning, which can find patterns and clusters in the data without any prior training. The most advanced tools use “Active Learning,” where the software identifies the most “uncertain” cases and asks the user for feedback, rapidly improving its accuracy with minimal human effort.
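
The selection step in active learning is often a form of uncertainty sampling. The sketch below assumes each candidate pair already has a match probability between 0 and 1 from some model, and picks the pairs closest to 0.5 for human labeling. The `most_uncertain` function and the sample scores are hypothetical.

```python
def most_uncertain(scored_pairs, k=5):
    """Return the k pairs whose match probability is closest to 0.5."""
    return sorted(scored_pairs, key=lambda item: abs(item[1] - 0.5))[:k]


# Hypothetical (pair_id, match_probability) values from an earlier model run.
scored = [("a", 0.98), ("b", 0.52), ("c", 0.05), ("d", 0.40)]
print(most_uncertain(scored, k=2))  # [('b', 0.52), ('d', 0.4)]
```

Labeling these borderline pairs tells the model more than labeling pairs it already scores near 0 or 1, which is why a few dozen well-chosen examples can go a long way.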

Scalability and Performance: The volume of data is a critical factor. A tool that works perfectly for 5,000 records might crash when faced with 5 million. Tools like Splink are specifically designed for high-performance scenarios, supporting distributed computing backends like Apache Spark or AWS Athena. When choosing a tool, always consider your future data growth; it’s better to choose a scalable tool now than to have to migrate your entire workflow later.

Extensibility: Can the tool be extended with custom scripts? Tools like OpenRefine allow you to write custom expressions in GREL or Python (run through Jython) to handle unique data challenges, such as converting specialized medical codes or parsing non-standard date formats. This flexibility is vital for specialized research fields.

How to Compare Data and Find Mismatches Fast

To find mismatches effectively and efficiently, we follow a structured workflow that minimizes computational overhead while maximizing accuracy. This process is often referred to as the “Record Linkage Pipeline.”

Step 1: Data Loading and Profiling

It starts with Data Loading, where we bring disparate sources together. Before matching, we perform “Data Profiling” to understand the quality of each field. Which fields have the most missing values? Which fields have the highest variance? This helps us decide which fields are reliable enough to be used for matching.
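
A basic profile can be computed without any special tooling. The sketch below assumes records are dictionaries of string values and reports, per field, the share of missing values and the number of distinct non-empty values. The `profile` function and the sample records are illustrative.

```python
def profile(records, fields):
    """Report the missing-value rate and distinct-value count for each field."""
    total = len(records)
    report = {}
    for field in fields:
        # Treat None, empty strings, and whitespace-only strings as missing.
        values = [(record.get(field) or "").strip() for record in records]
        present = [value for value in values if value]
        report[field] = {
            "missing_rate": 1 - len(present) / total if total else 0.0,
            "distinct": len(set(present)),
        }
    return report


records = [
    {"name": "Ann", "email": "a@x.org"},
    {"name": "Bob", "email": ""},
    {"name": "Ann", "email": None},
    {"name": "Cy", "email": "c@x.org"},
]
print(profile(records, ["name", "email"]))
```

Here `email` is missing in half the rows, so it would be a poor choice as the only matching field even though it is highly distinctive when present.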

Step 2: Normalization and Standardization

Next is Normalization: converting all text to lowercase, removing punctuation, and standardizing addresses (e.g., changing “Rd.” to “Road”). This step is crucial because it eliminates “noise” that would otherwise cause matching algorithms to fail. For example, standardizing dates to the ISO 8601 format (YYYY-MM-DD) ensures that “01/02/2023” from a day-first source and “Feb 1, 2023” are both stored as 2023-02-01 and recognized as the same day. Because “01/02/2023” means January 2 in a month-first source, you need to know each source’s date convention before converting.
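
A minimal sketch of this step, using only the standard library, might look like the following. The `ABBREVIATIONS` table is a tiny hypothetical sample, and `to_iso_date` expects the caller to state which date formats a given source uses, since a string like "01/02/2023" cannot be interpreted safely without that knowledge.

```python
import re
from datetime import datetime

# Tiny illustrative table; note that "st" can also mean "saint" in real data.
ABBREVIATIONS = {"rd": "road", "st": "street", "ave": "avenue"}


def normalize_text(value: str) -> str:
    """Lowercase, strip punctuation, collapse whitespace, expand abbreviations."""
    no_punct = re.sub(r"[^\w\s]", " ", value.lower())
    tokens = [ABBREVIATIONS.get(token, token) for token in no_punct.split()]
    return " ".join(tokens)


def to_iso_date(value: str, formats):
    """Try each known format for this source; return YYYY-MM-DD or None."""
    for fmt in formats:
        try:
            return datetime.strptime(value.strip(), fmt).date().isoformat()
        except ValueError:
            continue
    return None


print(normalize_text("123 Main St."))              # 123 main street
print(to_iso_date("01/02/2023", ["%d/%m/%Y"]))     # 2023-02-01
print(to_iso_date("Feb 1, 2023", ["%b %d, %Y"]))   # 2023-02-01
```

Returning `None` for unparseable dates, rather than guessing, keeps bad values visible so they can be reviewed instead of silently producing wrong matches.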

Step 3: Blocking Rules

Comparing every record to every other record is computationally expensive. If you have two datasets with 100,000 records each, a naive comparison would require 10 billion operations ($O(n^2)$). Blocking Rules solve this by grouping similar records into “blocks” based on a reliable attribute, such as the first three digits of a zip code or the birth year. We then only compare records within the same block, drastically reducing the number of comparisons needed.
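
The effect of blocking is easy to see in a small sketch. The code below assumes two lists of dictionaries with a `zip` field and uses the first three digits as the block key. The `candidate_pairs` function and the sample records are illustrative.

```python
from collections import defaultdict


def candidate_pairs(left, right, block_key):
    """Yield only the (left, right) pairs whose records share a block key."""
    index = defaultdict(list)
    for record in right:
        index[block_key(record)].append(record)
    for record in left:
        for other in index.get(block_key(record), []):
            yield record, other


left = [
    {"name": "A", "zip": "10001"},
    {"name": "B", "zip": "10002"},
    {"name": "C", "zip": "94105"},
]
right = [
    {"name": "A2", "zip": "10003"},
    {"name": "C2", "zip": "94107"},
    {"name": "D", "zip": "60601"},
]

pairs = list(candidate_pairs(left, right, lambda r: r["zip"][:3]))
print(len(left) * len(right), "naive comparisons")  # 9 naive comparisons
print(len(pairs), "comparisons after blocking")     # 3 comparisons after blocking
```

The trade-off is that a true match with a wrong or missing block key is never compared, so it is common to run several blocking rules and take the union of their candidate pairs.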

Step 4: Scoring and Linkage

Once blocked, we apply Deterministic Rules (e.g., if the Email matches exactly, it’s a link) or Probabilistic Linkage. In probabilistic linkage, we assign a similarity score to each pair of records based on how many fields match and how “rare” those matches are. For instance, a match on a rare last name like “Quizenberry” is given more weight than a match on a common name like “Smith.”
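
In the Fellegi-Sunter model, this weighting comes from two probabilities per field: m, the chance that the field agrees when two records truly match, and u, the chance that it agrees when they do not. The sketch below computes the resulting log-based weights. All m and u values shown are made-up numbers for illustration, and the function names are not taken from any library.

```python
import math


def field_weight(agrees: bool, m: float, u: float) -> float:
    """Fellegi-Sunter weight: log2(m/u) on agreement, log2((1-m)/(1-u)) otherwise."""
    if agrees:
        return math.log2(m / u)
    return math.log2((1 - m) / (1 - u))


def match_weight(comparison: dict, params: dict) -> float:
    """Sum the field weights for one record pair."""
    return sum(
        field_weight(agrees, *params[field])
        for field, agrees in comparison.items()
    )


# Hypothetical u values: how often two unrelated people share each surname.
rare_surname = field_weight(True, m=0.95, u=0.0001)   # e.g. "Quizenberry"
common_surname = field_weight(True, m=0.95, u=0.01)   # e.g. "Smith"
print(round(rare_surname, 1), round(common_surname, 1))

params = {"surname": (0.95, 0.01), "birth_year": (0.98, 0.02)}
print(round(match_weight({"surname": True, "birth_year": False}, params), 2))
```

Agreement on the rare surname earns a much larger positive weight because chance agreement is so unlikely, while a disagreement on a normally reliable field pulls the total down.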

Step 5: Evaluation and Metrics

To measure success, we use three key metrics that are standard in the field of information retrieval:

- Precision: What proportion of the links identified by the software are actually correct? High precision means fewer “false positives.”

- Recall: What proportion of the total actual links in the dataset did the model find? High recall means fewer “false negatives.”

- F1-score: This is the harmonic mean of precision and recall, providing a single score that balances both. You can read more about these on the Precision and Recall metrics page.
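
All three metrics can be computed from two sets of record pairs: the links your tool predicted and a hand-checked set of true links. The sketch below assumes pairs are given as tuples of record IDs and treats (1, 2) and (2, 1) as the same link. The `evaluate` function and the sample IDs are illustrative.

```python
def evaluate(predicted, actual):
    """Return (precision, recall, f1) for predicted links against true links."""
    # frozenset makes the pair order irrelevant: (1, 2) equals (2, 1).
    predicted = {frozenset(pair) for pair in predicted}
    actual = {frozenset(pair) for pair in actual}
    true_positives = len(predicted & actual)
    precision = true_positives / len(predicted) if predicted else 0.0
    recall = true_positives / len(actual) if actual else 0.0
    if precision + recall == 0:
        return precision, recall, 0.0
    f1 = 2 * precision * recall / (precision + recall)
    return precision, recall, f1


predicted_links = [(1, 2), (3, 4), (5, 6), (7, 8)]
true_links = [(2, 1), (3, 4), (9, 10)]
precision, recall, f1 = evaluate(predicted_links, true_links)
print(round(precision, 3), round(recall, 3), round(f1, 3))  # 0.5 0.667 0.571
```

In this toy case, half of the predicted links are wrong (precision 0.5) and one of the three true links was missed (recall 0.667).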

We also look at Information Gain—how much “uncertainty” did we remove from the dataset by identifying these duplicates? This helps justify the time and resources spent on the data matching process to stakeholders.

Frequently Asked Questions about Data Matching

What’s the Difference Between Exact and Fuzzy Matching?

Exact matching is binary: either the fields are identical or they are not. It is fast but brittle. Fuzzy matching uses string similarity measures to produce a score, usually normalized to a range between 0 and 1. Common measures include Levenshtein distance (the number of edits needed to change one string into another, which can be converted to a similarity by dividing by the length of the longer string and subtracting the result from 1) and Jaro-Winkler (which favors matches at the start of the string). Fuzzy matching is what allows a tool to know that “Lifebit” and “Life bit” are likely the same entity, even if they aren’t character-identical.

Can Free Data Matching Software Handle Large Datasets?

Yes, but the choice of tool is critical. Datablist and OpenRefine are excellent for datasets under 1 million records, as they typically process data in-memory. For larger scales, such as national census data or global genomic databases, we recommend Splink. Splink is a Python package designed for probabilistic record linkage at scale. It runs in-process on DuckDB for datasets that fit on a single machine, and it can hand the work to distributed backends like Spark or AWS Athena when you need to link many millions of rows or more.

Do I Need Coding Skills to Use These Tools?

Not necessarily. Tools like OpenRefine, Datablist, and WinPure Clean & Match (which offers a free version/trial) are designed for users with zero coding skills, providing intuitive menus and visual feedback. However, if you want to perform advanced record linkage on massive datasets or automate the process within a pipeline, some knowledge of Python or R will help you leverage powerful libraries like the Python Record Linkage Toolkit or Zingg.

Is My Data Secure with Free Tools?

Security depends on the tool’s architecture. Open-source tools that run locally (like OpenRefine or Splink) are highly secure because your data never leaves your computer or your organization’s private cloud. Web-based “Freemium” tools require you to upload your data to their servers. Before using a web-based tool, always check their privacy policy and terms of service, especially if you are handling sensitive PII or PHI. For highly regulated industries, local or self-hosted open-source solutions are always the safer bet.

How Do I Handle “False Positives” in Data Matching?

False positives occur when the software incorrectly identifies two different people as the same person. To minimize this, you can increase the “similarity threshold” (e.g., requiring a 95% match instead of 85%) or add more matching criteria (e.g., requiring a match on both Name and Date of Birth). Most professional workflows include a manual review step for records that fall into a “gray area” of similarity scores.
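
A two-threshold rule is one simple way to set up that review step. The sketch below reuses the 95% and 85% figures from the example above as the upper and lower bounds. Both cutoffs and the `triage` function name are assumptions that you would tune against your own data.

```python
def triage(score: float, upper: float = 0.95, lower: float = 0.85) -> str:
    """Auto-accept high scores, auto-reject low scores, and queue the rest."""
    if score >= upper:
        return "match"
    if score >= lower:
        return "review"
    return "non-match"


for score in (0.97, 0.90, 0.60):
    print(score, triage(score))
```

Raising `upper` trades recall for precision in the automatic matches, while widening the gap between the two thresholds sends more pairs to a human reviewer.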

Conclusion: Scale Your Data Strategy with Lifebit

While free data matching software is an incredible starting point for cleaning and deduplicating your records, enterprise-grade research often requires more than just a standalone tool. As your data grows in volume and complexity, the challenges of maintaining data quality, ensuring security, and enabling global collaboration become exponentially more difficult.

At Lifebit, we understand that data quality is only one piece of the puzzle. Our federated AI platform provides secure data access to global biomedical and multi-omic data without moving the data itself. This “code-to-data” approach, where the analysis travels to the data rather than the reverse, is the future of secure research. We integrate advanced data matching, normalization, and harmonization directly into our Trusted Data Lakehouse (TDL). This ensures that whether you are a researcher in London, a clinician in New York, or a data scientist in Singapore, your research is built on a foundation of accurate, compliant, and high-quality data that is ready for analysis.

If you’re ready to move beyond basic deduplication and into the world of real-time insights, federated analytics, and global collaboration, we are here to help. Our team of experts can help you navigate the complexities of data integration at scale. Contact us today to see how we can power your next-generation research and help you unlock the full potential of your data.