Building a Better Foundation with Health Data Platforms

Stop Losing Clinical Insights: How Health Data Platforms Power AI Medicine

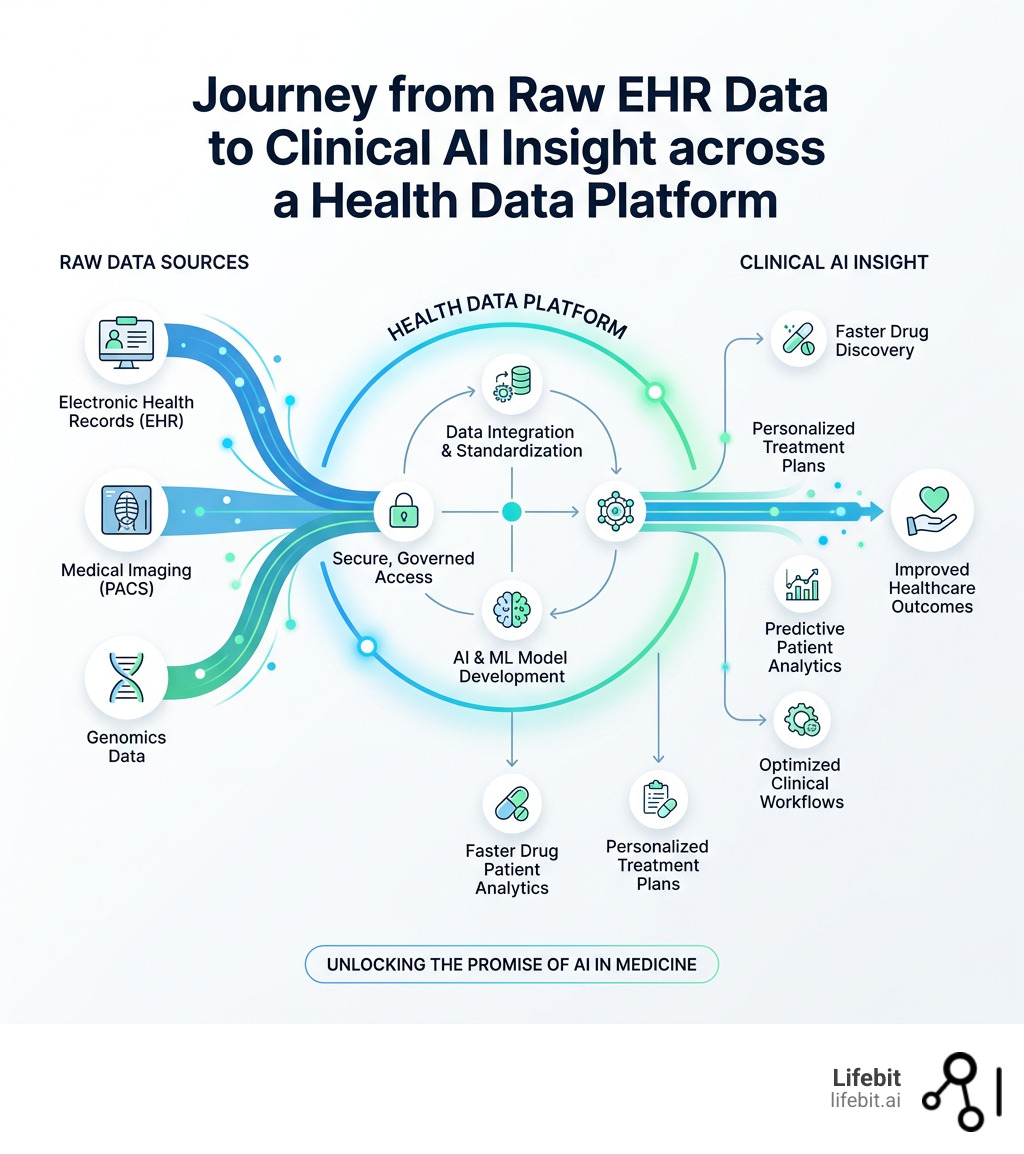

Health data platforms are systems that collect, store, and manage electronic health records (EHRs) and other clinical data — making that data accessible for research, AI development, and healthcare decision-making.

Here is a quick overview of what they are and why they matter:

| What | Why It Matters |
|---|---|
| Centralize EHRs, claims, imaging, and genomics | Breaks down data silos that block research |
| Enable secure, governed data access | Protects patient privacy under HIPAA, PHIPA, and GDPR |
| Support AI/ML model development | Turns raw clinical data into actionable insight |
| Serve researchers, educators, and data providers | Broadens who can benefit from health data |
| Range from open repositories to federated environments | Different needs require different access models |

The promise of AI in medicine is real — but it depends entirely on access to high-quality, well-organized health data. Right now, that data is everywhere and nowhere at once. It sits in hospital systems, research databases, government registries, and private networks, rarely talking to each other. A single hospital can generate up to 50 petabytes of data per year, yet an estimated 95% of it goes unused without the right infrastructure to aggregate and analyze it.

The result? Slower drug discovery. Weaker AI models trained on incomplete populations. Researchers spending months negotiating data access instead of doing science.

The good news is that a new generation of health data platforms is changing this — offering structured, secure, and scalable ways to unlock clinical data without compromising patient privacy.

I’m Maria Chatzou Dunford, CEO and Co-founder of Lifebit, and I’ve spent over 15 years building computational tools and health data platforms that make sensitive biomedical data accessible for research across secure, federated environments. In this guide, I’ll break down how these platforms work, how they differ, and what to look for when evaluating them.


Stop Wasting Health Data: Power AI with Secure Platforms

In medical research, we are currently “data rich but insight poor.” We have mountains of information, but it is locked behind digital walls. To move from raw data to a life-saving AI algorithm, we need a foundation that prioritizes security, interoperability, and scalability. The current landscape of healthcare is characterized by a “Data Paradox”: while hospitals generate more data than ever before—ranging from high-resolution medical imaging to real-time physiological monitoring—the vast majority of this information remains inaccessible for the very research that could improve patient outcomes.

This wastage isn’t just a technical oversight; it is an economic and clinical crisis. When data is trapped in silos, we lose the ability to detect rare disease patterns, we fail to validate AI models across diverse populations, and we delay the delivery of precision medicine. To bridge this gap, health data platforms must evolve beyond simple storage repositories. They must become active ecosystems where data is not just stored, but curated, protected, and made computationally ready for the most demanding machine learning workloads. By leveraging secure platforms, we can ensure that every byte of clinical data has the potential to contribute to a breakthrough, moving us closer to a future where AI-driven insights are a standard part of every patient’s care journey.

Why Traditional Storage Fails: The Rise of Modern Health Data Platforms

For decades, health data was stored in “silos”—isolated systems within a single hospital or clinic. If a researcher at a university in Toronto wanted to study patient deterioration using data from a hospital in London, they faced a bureaucratic nightmare. Traditional storage methods simply weren’t built for the “Big Data” era of AI and machine learning (ML). These legacy systems were designed for administrative billing and individual patient record retrieval, not for the high-throughput analysis required by modern data science.

The primary challenges we face include:

- Data Silos: Information is trapped in proprietary formats that don’t “talk” to each other. This fragmentation prevents the creation of large, diverse datasets necessary to train unbiased AI models.

- Privacy Concerns: Regulations like the Personal Health Information Protection Act (PHIPA) in Ontario and the Health Insurance Portability and Accountability Act (HIPAA) in the USA set high bars for data protection. Without a specialized platform, meeting these standards is nearly impossible for individual researchers, often leading to “analysis paralysis” where data is never shared due to fear of non-compliance.

- Standardization Issues: One hospital might record “heart rate” as a single integer, while another stores the complex waveform it was derived from. Without standards, AI models cannot recognize these as the same measurement, leading to “garbage in, garbage out” scenarios.

- Technical Debt: Many hospital systems rely on aging infrastructure that cannot handle the petabyte-scale requirements of modern genomics or high-resolution imaging.

Modern health data platforms solve this by creating a unified environment. They don’t just “hold” data; they clean it, standardize it, and make it ready for a computer to read. This process, often referred to as ETL (Extract, Transform, Load), is the backbone of clinical AI. Research published in Nature Medicine highlights that the value of health datasets for AI depends entirely on these standards. Without them, we are just looking at digital noise.
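To make the ETL idea concrete, here is a minimal Python sketch of the heart-rate example above. It assumes two hypothetical sources: one exports heart rate as an integer string, the other exports R-peak timestamps from a waveform. The site names, field names, and values are invented for illustration; a real pipeline would also handle units, missing values, and provenance.

```python
from dataclasses import dataclass
from statistics import mean

# Hypothetical raw exports from two hospitals (illustrative values, not real data).
SITE_A_ROWS = [{"patient": "A-001", "hr": "72"}, {"patient": "A-002", "hr": "88"}]
SITE_B_ROWS = [
    # Site B stores R-peak timestamps (in seconds) instead of a precomputed rate.
    {"patient": "B-001", "r_peaks_s": [0.0, 0.8, 1.6, 2.4]},
]

@dataclass
class HeartRate:
    patient_id: str
    source_site: str
    bpm: float  # one common unit for every site: beats per minute

def extract():
    """Extract: pull raw rows from each source, tagged with their site."""
    for row in SITE_A_ROWS:
        yield "site_a", row
    for row in SITE_B_ROWS:
        yield "site_b", row

def transform(site, row):
    """Transform: convert each site's representation into beats per minute."""
    if site == "site_a":
        return HeartRate(row["patient"], site, float(row["hr"]))
    peaks = row["r_peaks_s"]
    intervals = [later - earlier for earlier, later in zip(peaks, peaks[1:])]
    return HeartRate(row["patient"], site, 60.0 / mean(intervals))

def load(records, table):
    """Load: append harmonized records to a single analysis-ready table."""
    table.extend(records)
    return table

warehouse = load((transform(site, row) for site, row in extract()), [])
for rec in warehouse:
    print(rec.patient_id, rec.source_site, round(rec.bpm, 1))
```

The key design choice is that every source is converted into one shared record type during the transform step, so downstream models never need to know which hospital a value came from.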

To dive deeper into how these systems are built, check out our biomedical research data platform complete guide.

How Governance Models Keep Data Safe Without Slowing Down Science

How do you give a researcher access to 20,000 patient records without revealing who those patients are? This is the “Goldilocks” problem of health data: you need enough detail for the science to work, but enough privacy to protect the individual. The solution lies in robust governance frameworks that automate the trust process.

Many leading platforms, such as the Health Data Nexus (HDN), use a Zoned Access Model:

- Zone 1 (Open/Public): Contains synthetic or highly aggregated data that poses minimal privacy risk. This is ideal for students learning the basics of health informatics or for developers testing their code before moving to sensitive datasets.

- Zone 2 (Registered): Requires a user to be “credentialed” (e.g., by signing in with a university account through an identity federation such as eduGAIN). You might need to sign a Data Use Agreement (DUA) and demonstrate a legitimate research interest.

- Zone 3 (Controlled): This is where the most sensitive, granular data lives. Access requires research ethics board (REB) approval, specific training (like TCPS 2 in Canada), and often a “no-download” policy where the data never leaves the platform. This zone is often referred to as a “Safe Haven” or “Trusted Research Environment.”
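The zoned model can be expressed as a cumulative policy check, where each zone inherits the requirements of the zone below it. This is a hypothetical sketch: the `User` fields, the `missing_requirements` function, and the exact requirement list (including treating the DUA as mandatory for Zone 2) are assumptions for illustration, not how HDN or any specific platform implements access control.

```python
from dataclasses import dataclass
from enum import IntEnum

class Zone(IntEnum):
    OPEN = 1        # synthetic or highly aggregated data
    REGISTERED = 2  # credentialed users with a signed DUA (assumed mandatory here)
    CONTROLLED = 3  # granular data inside a no-download environment

@dataclass
class User:
    credentialed: bool = False        # verified institutional identity
    signed_dua: bool = False          # Data Use Agreement on file
    ethics_approval: bool = False     # REB approval for the project
    completed_training: bool = False  # e.g. TCPS 2 or equivalent

def missing_requirements(user: User, zone: Zone) -> list[str]:
    """Return what the user still lacks for a zone; an empty list means access is granted."""
    missing = []
    if zone >= Zone.REGISTERED:
        if not user.credentialed:
            missing.append("credentialing")
        if not user.signed_dua:
            missing.append("data use agreement")
    if zone >= Zone.CONTROLLED:
        if not user.ethics_approval:
            missing.append("ethics approval")
        if not user.completed_training:
            missing.append("ethics training")
    return missing

student = User()
researcher = User(credentialed=True, signed_dua=True)
print(missing_requirements(student, Zone.OPEN))           # []
print(missing_requirements(researcher, Zone.REGISTERED))  # []
print(missing_requirements(researcher, Zone.CONTROLLED))  # ['ethics approval', 'ethics training']
```

Returning the list of missing requirements, rather than a bare yes/no, lets a platform tell users exactly which step to complete next.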

This tiered approach ensures that privacy and ethical use aren’t just “checked boxes” but are baked into the software itself. The WHO’s data principles and protection policies emphasize that “good data is essential to good decision-making,” and these governance models are the engine that makes that data “good.”

Technical Features of Secure Health Data Platforms

If governance is the “law” of the platform, the technical infrastructure is the “police.” To be truly secure, a modern platform should ideally be built on cloud infrastructure (like Google Cloud Platform or AWS) and use container orchestration such as Kubernetes. Hosting data in cloud regions located within a given country supports “Data Sovereignty,” where data can remain in its country of origin while still being accessible for global research.

Key technical must-haves include:

- No Direct Downloads: This is critical. Instead of downloading a massive CSV file to your laptop (where it could be lost or stolen), you bring your code to the data. You work in a secure “sandbox” where all activity is audited.

- Scalability: AI models require massive computing power. A platform should be able to spin up 100 CPUs or multiple GPUs for a complex deep learning analysis and then shut them down when finished to save costs.

- CI/CD Pipelines: Continuous Integration and Continuous Deployment automate testing and release, so the latest security patches and feature enhancements can be rolled out frequently with little or no downtime.

- User-Friendly Interfaces: If a doctor needs a PhD in computer science just to open a dataset, the platform has failed. We need intuitive dashboards that allow for easy data discovery, cohort building, and visualization.

- Audit Trails: Every action taken by a researcher—from the queries they run to the code they execute—must be logged. This ensures accountability and helps institutions maintain compliance with strict privacy laws.
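One common way to make an audit trail tamper-evident is hash chaining: each log entry stores the hash of the previous entry, so altering an earlier record invalidates the chain. The sketch below illustrates the technique only; the `AuditLog` class and its fields are hypothetical, not any particular platform's logging system.

```python
import hashlib
import json
from datetime import datetime, timezone

GENESIS_HASH = "0" * 64  # placeholder "previous hash" for the first entry

def entry_hash(body: dict) -> str:
    """SHA-256 over a canonical JSON encoding (sorted keys) of an entry."""
    return hashlib.sha256(json.dumps(body, sort_keys=True).encode("utf-8")).hexdigest()

class AuditLog:
    """Append-only log where each entry records the hash of the entry before it."""

    def __init__(self):
        self.entries = []

    def record(self, user: str, action: str, detail: str) -> dict:
        body = {
            "timestamp": datetime.now(timezone.utc).isoformat(),
            "user": user,
            "action": action,
            "detail": detail,
            "prev_hash": self.entries[-1]["hash"] if self.entries else GENESIS_HASH,
        }
        entry = {**body, "hash": entry_hash(body)}
        self.entries.append(entry)
        return entry

    def verify(self) -> bool:
        """Recompute every hash; any edited or reordered entry breaks the chain."""
        prev_hash = GENESIS_HASH
        for entry in self.entries:
            body = {k: v for k, v in entry.items() if k != "hash"}
            if body["prev_hash"] != prev_hash or entry["hash"] != entry_hash(body):
                return False
            prev_hash = entry["hash"]
        return True

log = AuditLog()
log.record("researcher_42", "query", "SELECT count(*) FROM cohort WHERE age > 65")
log.record("researcher_42", "run_code", "train_model.py")
print(log.verify())  # True
log.entries[0]["detail"] = "something else"  # simulate tampering with the first entry
print(log.verify())  # False
```

Hash chaining detects tampering but does not prevent it: someone able to rewrite the whole chain could recompute every hash, so the log is usually paired with storage that platform administrators cannot modify.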

For a full breakdown of these environments, see our guide to secure data environments.

From Tabular EHRs to CT Scans: Unlocking Multimodal Data

The term “health data” used to mean a spreadsheet of blood pressure readings. Today, it’s much more. We are entering the era of multimodal data, where AI models look at the “whole patient” by integrating disparate data streams. This holistic view is essential for precision medicine, where treatments are tailored to an individual’s unique biological and environmental profile.

Modern health data platforms must support:

- Tabular Data: Traditional EHR records, medication lists, and lab results. This is the foundation of clinical history.

- Imaging: CT scans, MRIs, and X-rays. For example, HDN hosts over 1,000 cervical spine CT scans for fracture detection research. Managing these requires specialized DICOM viewers and high-performance storage.

- Signals: Sleep laboratory data, ECGs, and EEG readings. These high-frequency time-series data points are goldmines for predicting acute events like cardiac arrest or seizures.

- Voice: Recent projects like Bridge2AI-Voice are even using ethically sourced voice recordings to detect health changes, such as early signs of Parkinson’s or respiratory distress.

- Genomics: The “blueprint” of a patient; at cohort scale, whole-genome data runs to petabytes of storage. Analyzing whole-genome sequences alongside clinical outcomes is the “holy grail” of modern drug discovery.

To make this mix of data usable, platforms are moving toward the FHIR (Fast Healthcare Interoperability Resources) and OMOP (Observational Medical Outcomes Partnership) standards. These frameworks act as a universal translator. FHIR is excellent for exchanging data between systems in real time, while OMOP is the gold standard for large-scale observational research. By mapping data to a Common Data Model (CDM), we ensure that a “heart attack” recorded in a London hospital is recognized as the same event in a New York clinic, allowing for global meta-analyses that were previously impossible.
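As a rough illustration of how the two standards relate, the sketch below takes a FHIR Observation (the exchange format) and reshapes it into a row resembling the OMOP CDM `MEASUREMENT` table (the analysis format). It fills only a subset of OMOP columns, and the concept IDs are placeholders, not real OMOP concept IDs: real pipelines look these up in the OHDSI standardized vocabularies. The function name and lookup tables are hypothetical.

```python
import json
from datetime import datetime

# A minimal FHIR R4 Observation for heart rate (LOINC 8867-4), as an exchange API might return it.
FHIR_OBSERVATION = json.loads("""
{
  "resourceType": "Observation",
  "status": "final",
  "code": {"coding": [{"system": "http://loinc.org", "code": "8867-4", "display": "Heart rate"}]},
  "subject": {"reference": "Patient/123"},
  "effectiveDateTime": "2024-03-01T08:30:00Z",
  "valueQuantity": {"value": 72, "unit": "beats/minute",
                    "system": "http://unitsofmeasure.org", "code": "/min"}
}
""")

# Hypothetical lookups. The numeric IDs are PLACEHOLDERS, not real OMOP concept IDs.
CONCEPT_IDS = {("http://loinc.org", "8867-4"): 1000001}
UNIT_CONCEPT_IDS = {"/min": 1000002}
PERSON_IDS = {"Patient/123": 1}  # local FHIR patient reference -> OMOP person_id

def fhir_to_omop_measurement(obs: dict) -> dict:
    """Map one FHIR Observation to a subset of OMOP MEASUREMENT columns."""
    coding = obs["code"]["coding"][0]
    quantity = obs["valueQuantity"]
    when = datetime.fromisoformat(obs["effectiveDateTime"].replace("Z", "+00:00"))
    return {
        "person_id": PERSON_IDS[obs["subject"]["reference"]],
        # OMOP uses concept_id 0 for "no matching concept".
        "measurement_concept_id": CONCEPT_IDS.get((coding["system"], coding["code"]), 0),
        "measurement_date": when.date().isoformat(),
        "measurement_datetime": when.isoformat(),
        "value_as_number": float(quantity["value"]),
        "unit_concept_id": UNIT_CONCEPT_IDS.get(quantity["code"], 0),
        "measurement_source_value": coding["code"],
        "unit_source_value": quantity["unit"],
    }

print(fhir_to_omop_measurement(FHIR_OBSERVATION))
```

Keeping the original code in `measurement_source_value` alongside the mapped concept preserves provenance, so a mapping error can be traced back to the source record.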

We specialize in helping organizations map data to OMOP standards so their data is research-ready from day one. This involves complex data engineering to ensure that semantic meaning is preserved across different languages and coding systems (such as ICD-10, SNOMED CT, and LOINC).
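The “heart attack” example can be sketched as a crosswalk from (vocabulary, source code) pairs to one standard concept. The London and New York codes are real ICD-10 (I21.9) and SNOMED CT (22298006) codes, but the third site, its free-text terms, the `CROSSWALK` table, and the `MYOCARDIAL_INFARCTION` label are hypothetical. The mapping is also deliberately coarse: it collapses “acute myocardial infarction” and the broader “myocardial infarction” into one concept, which a curated vocabulary would keep distinct.

```python
from collections import Counter

# Source diagnoses from three sites, each using its own coding system.
SOURCE_RECORDS = [
    {"site": "london", "vocabulary": "ICD10", "code": "I21.9"},
    {"site": "new_york", "vocabulary": "SNOMED", "code": "22298006"},
    {"site": "clinic_x", "vocabulary": "LOCAL_TEXT", "code": "Heart Attack "},  # hypothetical site
    {"site": "clinic_x", "vocabulary": "LOCAL_TEXT", "code": "chest pain?"},
]

# Hypothetical crosswalk: (vocabulary, normalized source code) -> standard concept label.
CROSSWALK = {
    ("ICD10", "I21.9"): "MYOCARDIAL_INFARCTION",
    ("SNOMED", "22298006"): "MYOCARDIAL_INFARCTION",
    ("LOCAL_TEXT", "heart attack"): "MYOCARDIAL_INFARCTION",
}

def normalize(vocabulary: str, code: str) -> tuple[str, str]:
    """Case-fold and trim free text; keep formal codes exactly as recorded (minus whitespace)."""
    cleaned = code.strip()
    return (vocabulary, cleaned.lower() if vocabulary == "LOCAL_TEXT" else cleaned)

def harmonize(records, crosswalk):
    """Attach a standard concept to each record; route unknown codes to human review."""
    mapped, needs_review = [], []
    for rec in records:
        concept = crosswalk.get(normalize(rec["vocabulary"], rec["code"]))
        if concept is None:
            needs_review.append(rec)  # never guess a mapping; a curator decides
        else:
            mapped.append({**rec, "standard_concept": concept})
    return mapped, needs_review

mapped, needs_review = harmonize(SOURCE_RECORDS, CROSSWALK)
print(Counter(r["standard_concept"] for r in mapped))  # Counter({'MYOCARDIAL_INFARCTION': 3})
print([r["code"] for r in needs_review])               # ['chest pain?']
```

Sending unmatched codes to review instead of forcing a best guess is what protects semantic meaning: an ambiguous entry like “chest pain?” should not silently become a heart attack.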

Supporting Researchers and Educators via Health Data Platforms

Health data platforms aren’t just for big pharma; they are essential for the next generation of students and the democratization of science. By lowering the barrier to entry, these platforms allow researchers from underfunded institutions or developing nations to participate in world-class science.

We see this most clearly in Datathons. These are competitive events where students and researchers have 48 hours to solve a clinical problem using real-world data. For example, the Toronto Health Datathons (2023-2025) used the HDN platform to allow hundreds of students to analyze datasets on patient deterioration and COVID-19 outcomes. These events serve as a “stress test” for the platform’s usability and scalability.

By providing pre-configured analysis environments, loaded with tools like Jupyter Notebooks, RStudio, and VS Code, platforms allow researchers to start coding in minutes rather than weeks. This collaborative innovation is often supported by open-source development, where the community contributes code to improve the platform for everyone. Furthermore, these platforms facilitate “Reproducible Science,” where a researcher can share their exact analysis environment with a peer to verify their findings.

Check out our solutions for data providers to see how we help institutions share their data safely with these global research communities.

Comparing Different Approaches to Health Data Access

Not all health data platforms are created equal. Depending on your goal—whether it’s a quick student project or a multi-year clinical trial involving sensitive genomic data—you’ll need a different type of access and infrastructure. The choice of platform often dictates the speed of innovation and the level of risk an organization assumes.

| Platform Type | Best For | Security Level | Data Movement |
|---|---|---|---|
| Public Repositories | Rapid prototyping, learning ML basics | Low (Public data) | Downloadable |
| Academic Databases | Clinical signal processing, physiological research | Medium (Credentialing required) | Often Downloadable |
| National Health Data Trusts | Population health, government policy | High (Strict controlled access) | No-download (TRE) |
| Modern Cloud Platforms | AI/ML research, education, multimodal data | High (Zoned access, cloud-only) | No-download (TRE) |
| Federated AI Platforms | Large-scale pharma R&D, multi-omic analysis | Maximum (Data stays in situ) | Zero Movement |

While public and academic repositories provide valuable datasets for initial testing, they often rely on data downloads, which is a major security risk for sensitive patient information. Once data is downloaded to a local machine, the data provider loses all control and visibility over how that data is used.

Modern “Trusted Research Environments” (TREs) are the gold standard because they provide a “walled garden” where data is safe, but the tools are powerful. However, even TREs face challenges when data needs to be combined from multiple countries with different legal jurisdictions. This is where Federated Learning and Federated Analytics come in. In a federated model, the data never moves. Instead, the AI model or query travels to the data, runs locally, and sends back only the “insights” (model weights or aggregate statistics) to a central server. Because patient-level records stay at their source, this greatly reduces the need for complex data transfer agreements and strengthens privacy. To understand why “federated” is the future of this comparison, read our federated data platform ultimate guide.
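The federated learning loop described above can be sketched with federated averaging (FedAvg), one widely used aggregation scheme: each site trains the shared model on its own data, and the coordinator averages the returned weights in proportion to each site's sample count. This toy version fits a one-variable linear model on synthetic data in plain Python; the site names, data, and hyperparameters are invented, and real systems add secure aggregation, authentication, and far larger models.

```python
import random

def local_train(weights, data, lr=0.1, epochs=50):
    """Gradient descent on mean squared error for y ~ w*x + b, using only this site's data."""
    w, b = weights
    n = len(data)
    for _ in range(epochs):
        grad_w = sum(2 * (w * x + b - y) * x for x, y in data) / n
        grad_b = sum(2 * (w * x + b - y) for x, y in data) / n
        w -= lr * grad_w
        b -= lr * grad_b
    return (w, b)

def federated_averaging(sites, rounds=20):
    """Send the global model to every site, then average the returned weights by sample count."""
    global_weights = (0.0, 0.0)
    total = sum(len(data) for data in sites.values())
    for _ in range(rounds):
        # Each site returns only (updated weights, sample count); raw records stay local.
        updates = [(local_train(global_weights, data), len(data)) for data in sites.values()]
        global_weights = (
            sum(w * n for (w, _), n in updates) / total,
            sum(b * n for (_, b), n in updates) / total,
        )
    return global_weights

# Synthetic data following y = 3x + 1 plus noise, split across three hypothetical hospitals.
rng = random.Random(0)

def make_site(n):
    xs = [rng.uniform(0, 2) for _ in range(n)]
    return [(x, 3 * x + 1 + rng.gauss(0, 0.1)) for x in xs]

sites = {"hospital_a": make_site(40), "hospital_b": make_site(100), "hospital_c": make_site(60)}
w, b = federated_averaging(sites)
print(f"global model: y = {w:.2f}x + {b:.2f}")
```

Weighting by sample count keeps a small site from pulling the global model as hard as a large one; the coordinator never sees any individual `(x, y)` pair.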

Frequently Asked Questions about Health Data Platforms

What is the primary purpose of a health data platform?

The primary purpose is to centralize fragmented health data and provide a secure, standardized gateway for researchers and clinicians to analyze it. This accelerates the development of AI tools, improves patient outcomes, and reduces the time and cost of medical research by eliminating the need for each researcher to build their own infrastructure.

How do these platforms ensure patient privacy and ethical use?

They use a combination of “de-identification” (removing names, addresses, and IDs), strict governance (checking the credentials of every user), and technical barriers (preventing data from being downloaded). Most also require users to complete ethics training, such as TCPS 2 in Canada or HIPAA training in the US, and sign legally binding Data Use Agreements.
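A minimal sketch of the de-identification step, assuming records arrive as Python dictionaries with hypothetical field names: direct identifiers are dropped, the patient ID is replaced with a keyed HMAC pseudonym (so records from the same patient can still be linked), and date of birth is reduced to the year. The key, field names, and example record are invented, and this illustrates the technique rather than a complete de-identification standard such as those defined under HIPAA, PHIPA, or GDPR.

```python
import hashlib
import hmac

DIRECT_IDENTIFIERS = {"name", "address", "phone", "email"}
SECRET_KEY = b"hypothetical-key-kept-in-a-secrets-manager"  # illustrative only

def pseudonymize(patient_id: str, key: bytes) -> str:
    """Keyed hash: the same ID always gives the same pseudonym, but it cannot be recomputed without the key."""
    return hmac.new(key, patient_id.encode("utf-8"), hashlib.sha256).hexdigest()[:16]

def deidentify(record: dict, key: bytes) -> dict:
    """Drop direct identifiers, pseudonymize the ID, and coarsen date of birth to a year."""
    dropped = DIRECT_IDENTIFIERS | {"patient_id", "date_of_birth"}
    out = {k: v for k, v in record.items() if k not in dropped}
    out["pseudo_id"] = pseudonymize(record["patient_id"], key)
    out["birth_year"] = record["date_of_birth"][:4]  # assumes ISO YYYY-MM-DD input
    return out

example = {
    "patient_id": "MRN-000123",
    "name": "Jane Doe",
    "address": "1 Example Street",
    "date_of_birth": "1956-07-14",
    "diagnosis": "I21.9",
}
print(deidentify(example, SECRET_KEY))
```

Using HMAC with a secret key rather than a plain hash matters: an unkeyed hash of a medical record number could be reversed by hashing every plausible number and comparing.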

What are the main barriers to accessing health data for AI research?

The “Big Three” are silos (data is stuck in one place), lack of standards (data is messy and inconsistent), and privacy fears. Many hospitals are afraid to share data because they don’t want to risk a privacy breach or lose control over their intellectual property. Modern platforms solve this by proving that data can be shared safely and transparently.

What is the difference between a Data Lake and a Health Data Platform?

A Data Lake is a storage repository that holds a vast amount of raw data in its native format. A health data platform is a more comprehensive solution that includes the Data Lake but adds layers of governance, data harmonization (like OMOP mapping), secure analysis environments (TREs), and user management tools specifically designed for the healthcare context.

Can health data platforms handle genomic data?

Yes, modern platforms are specifically designed to handle the massive scale of genomic data (VCF and BAM files). They often integrate with high-performance computing (HPC) resources to allow researchers to run complex bioinformatic pipelines alongside clinical EHR data, which is essential for identifying genetic markers for diseases.
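To show what analyzing genomic data alongside clinical EHR data can look like at the smallest scale, here is a sketch that reads genotype calls from a tiny in-memory VCF and cross-tabulates variant carriers against a disease flag from a clinical table. The variant position, sample IDs, and phenotype values are invented, and the parser handles only simple uncompressed text; real pipelines work on compressed, indexed files with dedicated tools such as bcftools or pysam.

```python
# A tiny illustrative VCF: one variant, four hypothetical samples.
VCF_TEXT = """\
##fileformat=VCFv4.2
#CHROM\tPOS\tID\tREF\tALT\tQUAL\tFILTER\tINFO\tFORMAT\tP1\tP2\tP3\tP4
1\t12345\t.\tA\tG\t50\tPASS\t.\tGT\t0/1\t0/0\t1/1\t0|0
"""

# Hypothetical clinical table: does each patient have the disease of interest?
PHENOTYPES = {"P1": True, "P2": False, "P3": True, "P4": False}

def parse_genotypes(vcf_text):
    """Return {(chrom, pos, sample): number of ALT alleles, or None if the call is missing}."""
    samples, calls = [], {}
    for line in vcf_text.splitlines():
        if line.startswith("##") or not line.strip():
            continue  # skip meta-information lines
        fields = line.split("\t")
        if line.startswith("#CHROM"):
            samples = fields[9:]  # sample columns follow FORMAT
            continue
        chrom, pos = fields[0], int(fields[1])
        gt_index = fields[8].split(":").index("GT")
        for sample, value in zip(samples, fields[9:]):
            alleles = value.split(":")[gt_index].replace("|", "/").split("/")
            calls[(chrom, pos, sample)] = None if "." in alleles else sum(a != "0" for a in alleles)
    return calls

def carrier_table(calls, phenotypes, chrom, pos):
    """Count carriers (at least one ALT allele) and non-carriers among cases and controls."""
    table = {"cases": [0, 0], "controls": [0, 0]}  # [carriers, non-carriers]
    for sample, is_case in phenotypes.items():
        dosage = calls.get((chrom, pos, sample))
        if dosage is None:
            continue  # missing genotype or sample not sequenced
        group = "cases" if is_case else "controls"
        table[group][0 if dosage > 0 else 1] += 1
    return table

calls = parse_genotypes(VCF_TEXT)
print(carrier_table(calls, PHENOTYPES, "1", 12345))  # {'cases': [2, 0], 'controls': [0, 2]}
```

A table like this is the starting point for an association test; at platform scale the same join runs over millions of variants and many thousands of patients, which is where high-performance computing comes in.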

How does federated learning work in these platforms?

Federated learning allows AI models to be trained on data across multiple locations without the data ever leaving its original site. The platform orchestrates the movement of the algorithm to the various data sites, aggregates the results, and produces a global model that has learned from a broader, more diverse population than any single dataset could provide.

Conclusion: Building the Future of Medicine on Federated Data

At Lifebit, we believe that the next medical breakthrough shouldn’t be delayed because a researcher couldn’t get access to a dataset. Our next-generation federated AI platform is designed to solve the “data movement” problem. Instead of moving sensitive data across borders—which triggers endless legal and security hurdles—we bring the analysis to the data.

Our platform components, including the Trusted Research Environment (TRE) and Trusted Data Lakehouse (TDL), provide biopharma companies, governments, and public health agencies with real-time access to global biomedical and multi-omic data. Whether it’s AI-driven safety surveillance for pharmacovigilance or large-scale genomic research, we provide the secure, compliant infrastructure needed to turn raw data into life-saving insights.

By using a federated approach, we ensure that data holders maintain complete control over their information while allowing researchers in London, New York, Singapore, and beyond to collaborate on the world’s toughest health challenges.

Ready to see how we can transform your research? Access the Trusted Data Marketplace and start building on a better foundation today.