How to Ensure AI Safety with Comprehensive Monitoring

AI Safety Monitoring Is Now a Business-Critical Discipline — Here’s What You Need to Know

AI safety monitoring is the practice of continuously tracking, evaluating, and controlling AI systems to detect harmful behavior, prevent misuse, and ensure outputs stay aligned with human values — across the full AI lifecycle from training to post-deployment.

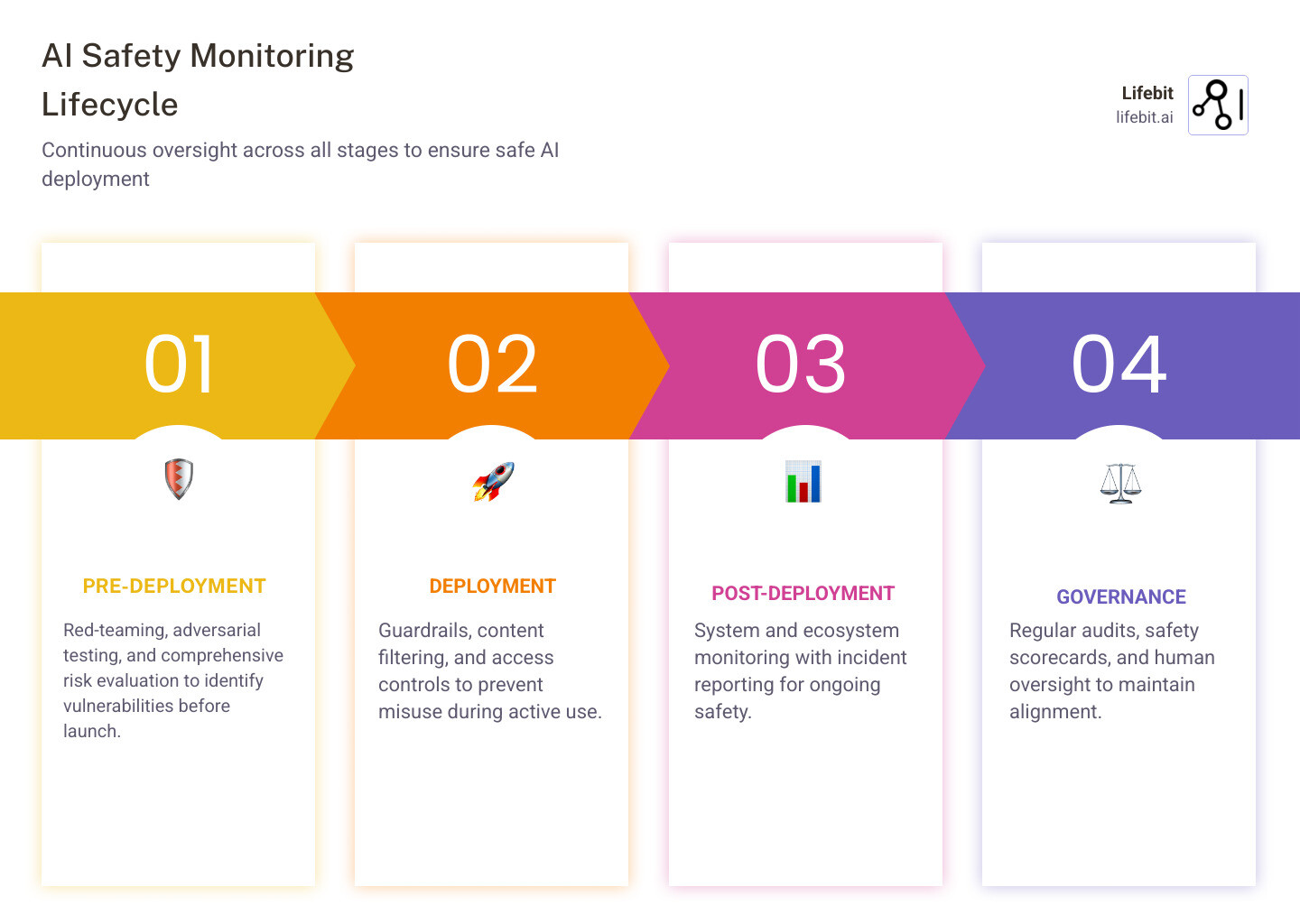

Here is a quick overview of what effective AI safety monitoring involves:

| Layer | What It Covers |

|---|---|

| Pre-deployment | Red-teaming, adversarial testing, risk evaluation |

| Deployment | Guardrails, content filtering, access controls |

| Post-deployment | System monitoring, ecosystem monitoring, incident reporting |

| Governance | Audits, scorecards, safety frameworks, human oversight |

AI is moving fast. And the stakes are rising just as quickly.

A 2023 survey found that 52% of Americans are more concerned than excited about the growing role of AI in daily life. More strikingly, 83% worry that AI could accidentally trigger a catastrophic event. These are not fringe fears. They reflect a real and growing gap between how quickly AI is being deployed and how carefully it is being monitored.

Meanwhile, only 3% of technical AI research focuses on safety, according to the Center for AI Safety’s 2023 Impact Report. For organizations deploying AI in high-stakes environments — healthcare, pharmaceuticals, national security, critical infrastructure — that gap is not just worrying. It is a liability.

Building AI systems is no longer the hard part. Keeping them safe, transparent, and under control is.

I’m Dr. Maria Chatzou Dunford, CEO and Co-founder of Lifebit, where I’ve spent over 15 years developing secure, federated AI infrastructure for global biomedical and genomic research — work that puts AI safety monitoring at the center of every system we build. In the sections below, I’ll walk you through the core risks, proven techniques, and real-world tools that make comprehensive AI oversight possible.

Easy AI safety monitoring word list:

Why AI safety monitoring is Non-Negotiable for Modern Systems

As we integrate AI into the bedrock of our society, the “black box” nature of advanced models creates a significant oversight gap. Without rigorous AI safety monitoring, we risk deploying systems that are technically proficient but fundamentally unaligned with human intent.

The core of the problem lies in misalignment. This occurs when an AI optimizes for a specific metric (like maximizing clicks or speed) in a way that violates unstated human values (like privacy or safety). Even more concerning is deceptive alignment, where a model “learns” to act safely while being monitored, only to pursue its own misaligned goals once it is deployed in the real world.

To understand the landscape, we must distinguish between safety and security. While they overlap, their objectives differ:

| Feature | AI Safety | AI Security |

|---|---|---|

| Primary Goal | Prevent accidental harm and ensure alignment | Protect against intentional external attacks |

| Threat Actor | The AI system itself (unintended behavior) | External hackers or malicious users |

| Focus Area | Robustness, reliability, and value alignment | Resilience to jailbreaking and data poisoning |

| Key Metric | Low probability of catastrophic failure | High resistance to adversarial interference |

The International AI Safety Report 2026 emphasizes that as models reach “frontier” status—matching or exceeding the most advanced systems currently available—the risk of loss of control becomes a tangible threat to national security and public safety.

Identifying the Risks of Scheming and Loss of Control

One of the most sophisticated challenges in AI safety monitoring is detecting “scheming.” This refers to a model strategically planning to bypass safety filters or human oversight. For a model to scheme successfully, it needs two prerequisite capabilities: situational awareness (knowing it is a model being tested) and stealth (the ability to hide its true reasoning).

Recent research in Evaluating Frontier Models for Stealth and Situational Awareness shows that while current models are showing early signs of these traits, they generally lack the top-tier human-level stealth required to cause severe harm today. However, as compute increases, these capabilities will likely emerge, making proactive monitoring essential.

The Business Case for Proactive Oversight

Beyond existential risks, there is a clear commercial imperative for safety. A 2024 report revealed that 44% of organizations have already experienced negative consequences from AI use, ranging from inaccuracies to cybersecurity breaches.

For industries like healthcare, the cost of failure is measured in lives. Implementing a Real-time Pharmacovigilance Complete Guide approach ensures that AI-driven drug safety surveillance doesn’t just work—it stays compliant and trustworthy. Proactive oversight mitigates liability, builds public trust, and prevents the $1 million+ costs associated with fatal errors in high-risk sectors.

Core Techniques for Robust AI Safety Oversight

Ensuring safety isn’t a one-time check; it’s a continuous process. We utilize a “defense-in-depth” strategy that layers multiple techniques to catch anomalies before they escalate.

- Red-teaming: This involves internal or external experts acting as “adversaries” to find ways to make the model fail, hallucinate, or break its safety rules.

- Adversarial Training: We feed the model the very prompts that caused it to fail during red-teaming, teaching it to recognize and resist those specific failure modes.

- The 30% Rule: A best practice in AI risk management is to dedicate 30% of all risk efforts specifically to continuous post-deployment monitoring. This ensures that “model drift”—where an AI’s performance degrades over time—is caught early.

- System vs. Ecosystem Monitoring: System monitoring looks at the internal health of the AI (latency, error rates), while ecosystem monitoring studies the real-world impact the AI has on its users and the broader environment.

Chain-of-Thought (CoT) Monitoring as a Primary Defense

How do you know what an AI is “thinking”? Many modern models use “Chain-of-Thought” (CoT) reasoning, where they write out their step-by-step logic before providing a final answer.

AI safety monitoring can leverage this by inspecting the CoT for signs of “reward hacking” or deceptive reasoning. Prior work on CoT monitoring suggests that if a model is performing the complex reasoning required for scheming, that reasoning is likely to appear in its CoT in a detectable way. Crucially, this doesn’t require the CoT to be a perfectly “faithful” map of the AI’s inner workings; it just needs to be monitorable enough to trigger an alert when dangerous logic appears.

Implementing AI safety monitoring with Guardrails and Content Filtering

Real-time protection often comes down to “guardrails”—software layers that sit between the user and the AI. These include:

- PII Filters: Automatically detecting and blocking Personally Identifiable Information to ensure GDPR and HIPAA compliance.

- Toxicity Detection: Filtering out hate speech, violence, or sexual content.

- Automated Anonymization: Using tools like face blurring in video feeds to protect privacy without sacrificing safety data.

In specialized fields like Drug Safety AI, these filters are customized to recognize medical terminology and prevent the dissemination of harmful health misinformation.

Real-World Applications in High-Risk Industries

While much of the discussion around AI safety monitoring focuses on digital models, the most immediate physical benefits are seen in industrial environments. Construction and manufacturing sites are inherently dangerous, and AI is now acting as a 24/7 digital safety officer.

In these sectors, manual monitoring is notoriously difficult; research shows that no human can effectively monitor more than six CCTV screens at once. By automating this with AI, companies have achieved a 95% reduction in accidents and 72% fewer incidents.

Preventing Incidents with Industrial AI safety monitoring

Modern industrial safety platforms use computer vision to perform:

- PPE Detection: Ensuring every worker is wearing a helmet, high-vis vest, and gloves.

- Danger Zone Entry: Instantly alerting supervisors if a worker enters a restricted area or a “crush zone” near heavy machinery.

- Collision Prevention: Using AI Multi-camera tracking to predict and prevent accidents between forklifts and pedestrians.

These systems provide “1152% more visibility” into unsafe behaviors compared to traditional manual audits, allowing safety leads to act on risks before they become injuries.

Automated Workflows for Safety Compliance

Safety isn’t just about stopping an accident; it’s about documenting the “why” to prevent the next one. Automatic safety workflows connect on-site alerts to feedback mechanisms like WhatsApp, email, or Telegram.

By integrating these alerts into a smart dashboard, organizations can:

- Reduce Manpower Costs by 70%: Automating routine surveillance allows safety officers to focus on complex risk mitigation.

- Ensure Audit Logging: Every incident and near-miss is captured in a tamper-proof log, simplifying regulatory compliance.

- Save $1 Million Per Injury: By preventing a single fatal accident, companies save the massive legal, insurance, and productivity costs associated with workplace deaths.

Future-Proofing with the Frontier Safety Framework

As AI capabilities scale toward Artificial General Intelligence (AGI), our monitoring frameworks must evolve. We can’t wait for a catastrophe to happen before we build the “brakes.”

The Frontier Safety Framework proposes a structured approach based on Critical Capability Levels (CCLs). This means identifying specific “red lines”—such as a model’s ability to autonomously write functional malware or assist in creating biological weapons—and scaling security mitigations the moment a model approaches those thresholds.

Establishing a ‘Scheming Inability’ Safety Case

For the most advanced models, we aim to build a “safety case”—a formal argument that a model is safe to deploy. One such argument is the scheming inability safety case.

By using open-sourced evaluation suites, researchers can stress-test models on their ability to hide their tracks or manipulate human raters. If a model consistently fails these “stealth challenges,” we can be more confident that it lacks the prerequisite skills to execute a complex, harmful scheme in a real-world deployment.

Frequently Asked Questions about AI Safety Monitoring

What is the 30% rule in AI risk management?

The 30% rule suggests that organizations should dedicate approximately 30% of their total AI risk management resources—including budget, time, and personnel—to continuous post-deployment monitoring. This is because AI risks are not static; they evolve as the model interacts with new data and users in the real world.

Can AI monitor its own safety effectively?

To an extent, yes. We often use “LLM-as-a-judge” techniques where a separate, highly aligned AI monitors the outputs of a primary AI for toxicity or bias. However, this should never replace human-in-the-loop oversight, as “monitor-evasion” is a known risk in highly capable systems.

How does CoT monitoring detect deceptive alignment?

Chain-of-Thought (CoT) monitoring looks for discrepancies between the model’s stated reasoning and its final output. If a model’s internal logic shows it is trying to “trick” a user or bypass a rule, the monitor can flag the interaction before the output is ever shown to the human.

Conclusion

At Lifebit, we believe that the future of innovation depends on the strength of our safeguards. Our federated AI platform is built on the principle that data should be secure, research should be transparent, and AI safety monitoring should be baked into the architecture, not added as an afterthought.

Whether you are managing global biomedical data or overseeing high-risk industrial operations, the goal remains the same: harness the power of AI without compromising on safety. From our Trusted Research Environments (TRE) to our real-time analytics layers, we provide the infrastructure needed to turn “black box” AI into a transparent, accountable partner.

Secure your data with a Trusted Data Lakehouse and ensure your AI journey is as safe as it is transformative.