7 Data Matching Tools That Actually Work

What is Data Harmonization and Why is it Essential?

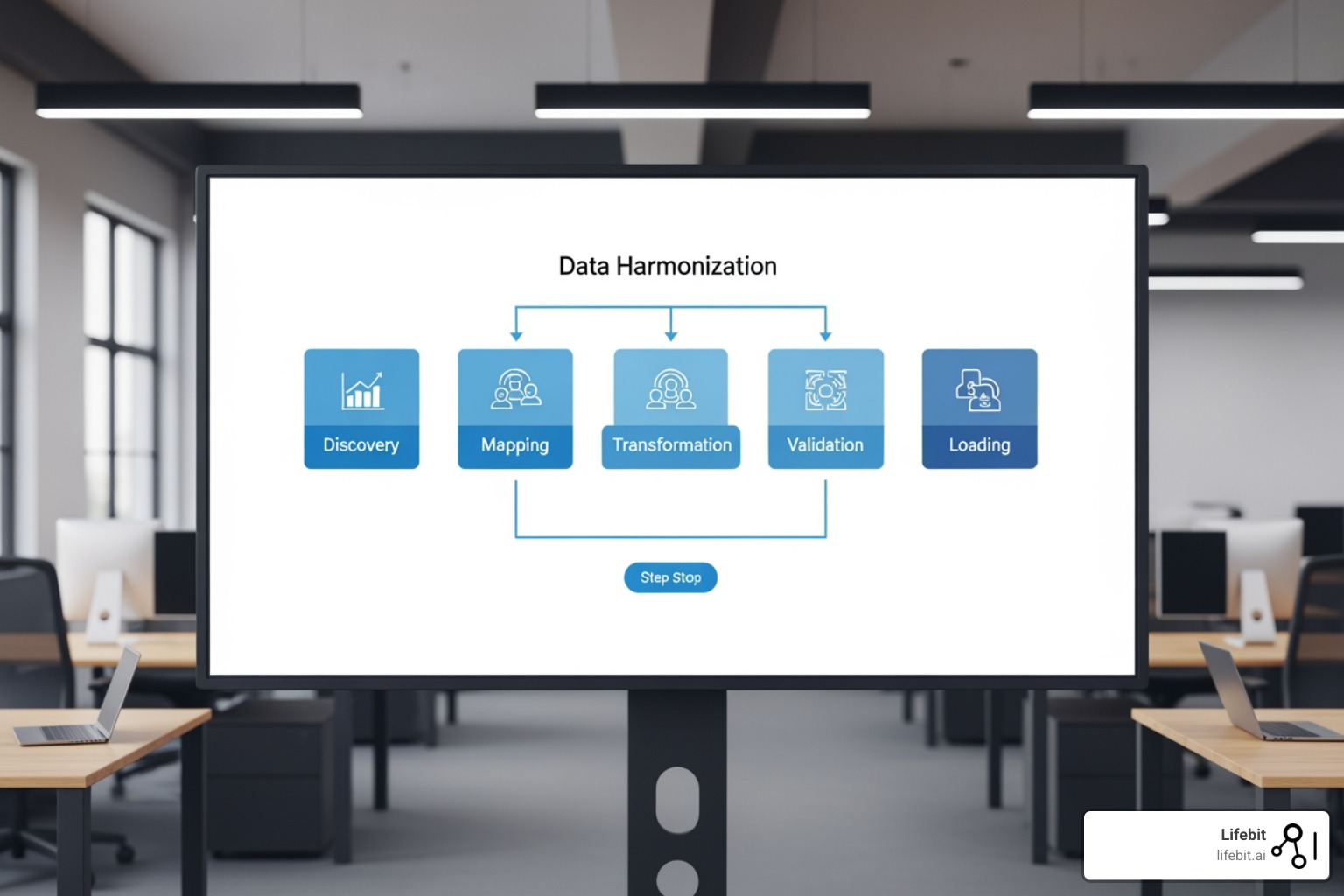

At its core, data harmonization is the process of taking disparate datasets—often with different formats, naming conventions, and structures—and transforming them into a cohesive, unified view. While data integration simply brings data together, harmonization ensures that the data actually makes sense when combined. This involves resolving two primary types of conflict: structural heterogeneity (differences in database schemas) and semantic heterogeneity (differences in the meaning of the data itself).

For a deep dive into the fundamentals, see our complete guide to the meaning of data harmonization. The goal is to achieve semantic reconciliation, where the meaning behind the data is aligned. It’s the difference between having two columns named “Cost” and “Spend” and realizing they both represent “Total Marketing Outlay.” Without this alignment, any downstream analysis is fundamentally flawed, as you are essentially comparing apples to oranges.

In fields like biomedical research, this is non-negotiable. The HBM4EU project (2017-2022) demonstrated this by harmonizing human biomonitoring data across European studies so that they were interoperable with tools like MCRA and IPCHEM. The project required aligning chemical exposure data from over 28 countries, each using different laboratory protocols and reporting units. Similarly, research on the NIH Common Fund Data Ecosystem (CFDE) highlights how vital it is to align data formats and schemas so that researchers can query pediatric, genomic, and undiagnosed disease datasets simultaneously. By creating a cross-program discovery portal, the NIH enables researchers to find correlations between rare genetic variants and clinical phenotypes that were previously hidden in isolated silos.

Without understanding how harmonization goes beyond integration, organizations risk drawing “insights” from data that isn’t actually comparable. This leads to the “garbage in, garbage out” phenomenon, where sophisticated AI models produce incorrect results because the underlying training data was never properly aligned.

Key Principles of Data Harmonization Tools

Effective data harmonization tools operate on several core principles to ensure scientific rigor and data integrity:

- Record Linkage & Entity Resolution: This is the process of identifying when two records in different systems refer to the same person or object. For example, identifying that “John A. Smith” in a clinical trial database is the same individual as “J. Smith” in an electronic health record.

- Data Cleansing: This involves removing duplicates, correcting syntax errors, and handling missing values. It is the foundational step that ensures the data is “clean” enough for transformation.

- Schema Alignment: Mapping different database structures to a common model. This requires defining a “target schema” and creating transformation rules to move data from the source to the target.

- Semantic Mapping: Ensuring units of measure (e.g., mg/dL vs. mmol/L) and definitions are identical. This often involves the use of standardized ontologies like SNOMED-CT or LOINC in healthcare.

- Validation: Using automated rules to ensure the output meets quality standards. Validation checks might include range checks (e.g., ensuring a patient’s age is not 200) or logic checks (e.g., ensuring a discharge date is after an admission date).
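To make the last two principles concrete, here is a minimal Python sketch of a unit conversion (semantic mapping) followed by a range check and a logic check (validation). The field names, the 0 to 120 age range, and the glucose-specific factor of roughly 18 mg/dL per mmol/L are illustrative assumptions rather than a clinical standard; every analyte needs its own conversion factor.

```python
from datetime import date

# Assumption: factor for blood GLUCOSE only (approx. 18 mg/dL per 1 mmol/L).
# Other analytes, such as cholesterol, need their own conversion factors.
GLUCOSE_MG_DL_PER_MMOL_L = 18.0


def harmonize_glucose(value: float, unit: str) -> float:
    """Semantic mapping: express a glucose result in mmol/L whatever the source unit."""
    if unit == "mmol/L":
        return value
    if unit == "mg/dL":
        return round(value / GLUCOSE_MG_DL_PER_MMOL_L, 2)
    raise ValueError(f"unsupported unit: {unit}")


def validate(record: dict) -> list:
    """Validation: return rule violations (an empty list means the record passed)."""
    errors = []
    # Range check (illustrative limits): a human age should fall within 0-120.
    if not 0 <= record["age"] <= 120:
        errors.append("age out of range")
    # Logic check: discharge must not come before admission.
    if record["discharge_date"] < record["admission_date"]:
        errors.append("discharge before admission")
    return errors


record = {"age": 200, "admission_date": date(2024, 3, 5),
          "discharge_date": date(2024, 3, 1), "glucose": 99.0, "glucose_unit": "mg/dL"}
record["glucose_mmol_l"] = harmonize_glucose(record["glucose"], record["glucose_unit"])
print(record["glucose_mmol_l"])  # 5.5
print(validate(record))          # ['age out of range', 'discharge before admission']
```

Keeping conversion and validation as separate steps means a record can be normalized first and then checked against the same rules, regardless of which unit its source system used.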

To learn more about the practical “how-to,” read our articles on data harmonization techniques and on what health data standardisation is.

Overcoming Barriers to Effective Data Alignment

Despite the benefits, the road to “clean” data is paved with challenges. Overcoming them often involves navigating institutional silos and administrative red tape. Data ownership is a significant hurdle; many departments are hesitant to share data due to privacy concerns or a lack of standardized data-sharing agreements.

In specialized fields, the hurdles are even higher. For instance, research on barriers in organ transplantation data shows that many general-purpose tools lack the granularity to handle domain-specific concepts like MELD scores or HLA matching. These measures are critical for prioritizing patients on transplant waiting lists, and even a minor discrepancy in how they are calculated or recorded can have life-altering consequences. Other common technical challenges in health data standardisation include inconsistent granularity (one dataset records “Smoker: Yes/No” while another tracks “Packs per day”) and varying naming conventions that make automated matching difficult. Furthermore, the sheer volume of data generated by modern medical devices and genomic sequencing requires tools that can scale horizontally across cloud environments.
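To illustrate the granularity problem, the following minimal Python sketch maps both smoking encodings onto one common variable. The field names (`smoker`, `packs_per_day`) and the target variable `current_smoker` are hypothetical, and the sketch assumes that any packs-per-day value above zero means the person currently smokes.

```python
from typing import Optional


def current_smoker(record: dict) -> Optional[bool]:
    """Map two source encodings onto one hypothetical common variable.

    Harmonizing to the coarser Yes/No level is the only direction that works for
    both sources: packs per day can be collapsed to Yes/No, but Yes/No cannot be
    expanded back into packs per day.
    """
    if "smoker" in record:  # Source A records "Smoker: Yes/No"
        return {"yes": True, "no": False}.get(str(record["smoker"]).strip().lower())
    if "packs_per_day" in record:  # Source B records "Packs per day"
        ppd = record["packs_per_day"]
        # Assumption: any positive value means the person currently smokes.
        return None if ppd is None else ppd > 0
    return None  # variable not collected: leave missing rather than guess


print(current_smoker({"smoker": "Yes"}))       # True
print(current_smoker({"packs_per_day": 0.0}))  # False
print(current_smoker({"packs_per_day": 1.5}))  # True
```

Unrecognized or missing answers come back as `None`, so they stay visibly missing instead of being silently coded as non-smokers.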

7 Data Harmonization Tools for Rigorous Research

Choosing the right software depends on your specific domain, budget, and technical expertise. Some tools focus on open-source flexibility, while others offer managed platforms and data harmonization services that bridge the gap between disparate datasets.

| Feature | Open-Source Tools | Commercial Platforms |
|---|---|---|
| Cost | Free / Low-cost | Subscription / Enterprise |
| Scalability | Varies by tool | High / Cloud-native |
| Ease of Use | Often requires coding | Often No-code / Low-code |
| Support | Community-driven | Dedicated support teams |

1. OpenRefine for Messy Datasets

OpenRefine is the “Swiss Army Knife” of data cleaning. Formerly known as Google Refine, it is a powerful, free, open-source tool designed specifically for working with “ugly” data. It operates locally on your machine, ensuring that sensitive data does not need to be uploaded to a third-party server, which is a critical feature for many researchers.

It excels at taking a chaotic spreadsheet and exploring it through facets, allowing you to spot inconsistencies instantly. For example, you can use text facets to see all variations of a city name (e.g., “New York,” “new york,” “NY”) and merge them with a single click. Beyond simple cleaning, OpenRefine supports the General Refine Expression Language (GREL), which allows for complex data transformations using regular expressions and logic.
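The merge behaviour described above is usually driven by OpenRefine’s clustering feature. As a rough illustration, here is a Python approximation of its “fingerprint” key-collision method (trim, lowercase, strip punctuation and accents, then sort and de-duplicate the tokens). It is a sketch of the idea, not OpenRefine’s own code, and the city list is invented.

```python
import re
import unicodedata
from collections import defaultdict


def fingerprint(value: str) -> str:
    """Approximation of OpenRefine's 'fingerprint' keying function."""
    s = value.strip().lower()
    s = re.sub(r"[^\w\s]", "", s)  # remove punctuation
    # Fold accented characters to ASCII, e.g. "é" -> "e"
    s = unicodedata.normalize("NFKD", s).encode("ascii", "ignore").decode("ascii")
    return " ".join(sorted(set(s.split())))  # sort and de-duplicate tokens


def key_collision_clusters(values: list) -> list:
    """Group values whose fingerprints collide; singletons are not merge candidates."""
    groups = defaultdict(list)
    for v in values:
        groups[fingerprint(v)].append(v)
    return [vs for vs in groups.values() if len(set(vs)) > 1]


cities = ["New York", "new york", "New York.", "York, New", "NY", "Boston"]
print(key_collision_clusters(cities))
# [['New York', 'new york', 'New York.', 'York, New']]
```

Note that “NY” stays on its own: key collision only catches differences in case, punctuation, accents, and word order, so abbreviations still need manual facet editing or a fuzzier clustering method.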

One of its most powerful features is its ability to connect to external reconciliation services. This allows you to link your local data to global authority files like Wikidata or ORCID. If you have a list of protein names, you can use a reconciliation service to automatically fetch their official UniProt IDs, ensuring your dataset is aligned with international standards. Because it is community-driven, there is a massive library of tutorials and plugins available for almost any data-wrangling task, from handling JSON structures to geocoding addresses.

2. Rmonize for Epidemiological Studies

For researchers in epidemiology and public health, Rmonize is a specialized R package that brings much-needed structure to retrospective harmonization. Retrospective harmonization is the process of aligning data that has already been collected across different studies, which is a common requirement for meta-analyses and large-scale cohort studies. It was developed by Maelstrom Research and follows the group’s published guidelines for rigorous data harmonization.

Rmonize uses a metadata-driven approach, relying on two primary user-generated documents: a DataSchema (which defines the target variables, their types, and permitted values) and Data Processing Elements (which detail the specific transformation rules for each source dataset). This separation of “what the data should look like” from “how to transform it” ensures that the entire harmonization process is documented, reproducible, and validated.

The package provides functions to automate the application of these rules, generate detailed harmonization reports, and perform quality control checks. For instance, if a transformation rule results in a value that falls outside the range defined in the DataSchema, Rmonize will flag it for review. This level of rigor is critical for high-stakes medical research where errors in data processing can lead to incorrect clinical conclusions. By using Rmonize, researchers can move away from ad-hoc scripts and toward a standardized, auditable workflow.
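Rmonize itself is an R package, but the idea of separating “what the data should look like” from “how to transform it” can be sketched in a few lines of Python. The schema, study names, variables, and rules below are hypothetical, and the function is not Rmonize’s API; it only mirrors the workflow of applying per-source rules and then flagging values the DataSchema does not permit.

```python
# Hypothetical DataSchema: target variables with their types and permitted values.
DATA_SCHEMA = {
    "sex": {"type": "category", "permitted": {"F", "M"}},
    "height_cm": {"type": "number", "min": 50, "max": 250},
}

# Hypothetical Data Processing Elements: one set of transformation rules per source.
DPE = {
    "study_a": {"sex": lambda r: {1: "F", 2: "M"}.get(r["gender"]),
                "height_cm": lambda r: r["height_mm"] / 10},
    "study_b": {"sex": lambda r: r["sex"],
                "height_cm": lambda r: r["height_in"] * 2.54},
}


def harmonize(source: str, rows: list):
    """Apply one source's rules, then flag values the DataSchema does not permit."""
    out, flags = [], []
    for i, row in enumerate(rows):
        h = {var: rule(row) for var, rule in DPE[source].items()}
        for var, spec in DATA_SCHEMA.items():
            v = h[var]
            if spec["type"] == "category" and v not in spec["permitted"]:
                flags.append((source, i, var, v))
            elif spec["type"] == "number" and (v is None or not spec["min"] <= v <= spec["max"]):
                flags.append((source, i, var, v))
        out.append(h)
    return out, flags


rows_a = [{"gender": 1, "height_mm": 1720}, {"gender": 3, "height_mm": 17200}]
harmonized, flags = harmonize("study_a", rows_a)
print(harmonized[0])  # {'sex': 'F', 'height_cm': 172.0}
print(flags)          # [('study_a', 1, 'sex', None), ('study_a', 1, 'height_cm', 1720.0)]
```

The second row is flagged twice: an unmapped gender code and a height that is most likely a data-entry error. Both are surfaced for review rather than silently dropped.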

3. Harmony for Multilingual Mental Health Data

Harmony is a unique AI-driven tool specifically built for social sciences and mental health research. In these fields, data is often collected via questionnaires and surveys, which can vary significantly in wording even when they measure the same underlying construct. Unlike traditional tools that rely on keyword matching, Harmony uses transformer neural networks to understand the meaning of questions.

For example, it can recognize that a question about “feeling anxious” in the English GAD-7 questionnaire is semantically equivalent to its Portuguese translation, or even to a differently worded question like “Have you felt on edge lately?” Harmony converts these questions into vector embeddings (mathematical representations of meaning) and calculates the cosine similarity between them. This allows researchers to harmonize datasets across different languages and cultures without needing a manual translation dictionary for every single item.
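The matching step can be sketched as follows. The three-dimensional vectors are invented stand-ins for the high-dimensional embeddings a sentence-transformer model would produce, the Portuguese wordings are illustrative rather than official translations, and the 0.8 threshold is an assumption. This is not Harmony’s API, only the cosine-similarity idea behind it.

```python
import math


def cosine_similarity(a: list, b: list) -> float:
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return dot / norm


def best_matches(items_a: dict, items_b: dict, threshold: float = 0.8) -> dict:
    """For each item in A, keep its most similar item in B if the score clears the threshold."""
    matches = {}
    for qa, va in items_a.items():
        qb, score = max(((cand, cosine_similarity(va, vb)) for cand, vb in items_b.items()),
                        key=lambda pair: pair[1])
        if score >= threshold:
            matches[qa] = (qb, round(score, 3))
    return matches


# Toy 3-dimensional "embeddings"; real sentence embeddings have hundreds of dimensions.
english = {
    "Feeling nervous, anxious, or on edge": [0.9, 0.1, 0.0],
    "Little interest or pleasure in doing things": [0.0, 0.2, 0.9],
}
portuguese = {
    "Sentir-se nervoso, ansioso ou muito tenso": [0.85, 0.15, 0.05],
    "Pouco interesse ou prazer em fazer as coisas": [0.05, 0.1, 0.95],
}
for question, (match, score) in best_matches(english, portuguese).items():
    print(f"{question!r} -> {match!r} ({score})")
```

Because the comparison happens between vectors rather than words, the same code works whether the two questionnaires share a language or not; only the embedding model has to be multilingual.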

This is particularly transformative for global mental health studies, where researchers often struggle to combine data from different regions. Harmony allows for the rapid comparison of instruments like the PHQ-9 (depression) and the GAD-7 (anxiety) across diverse populations. By automating the identification of equivalent items, Harmony can cut the time required for manual mapping from weeks to minutes, allowing researchers to focus on analyzing the data rather than cleaning it. The tool is open-source and available as both a web interface and a Python library, making it accessible to both non-technical researchers and data scientists.

4. JEDAI for Entity Resolution

When you are dealing with millions of records and need to know if “John Doe” in Database A is the same as “J. Doe” in Database B, JEDAI (stylized JedAI, for Java gEneric DAta Integration) is the tool for the job. It is a high-scalability toolkit for record linkage and entity resolution, designed to handle the “Big Data” challenges of modern integration projects.

JEDAI represents the evolution of the field, as detailed in the book Four Generations of Entity Resolution. It handles heterogeneity across datasets with ease by employing a multi-step workflow: blocking, block processing, entity matching, and entity clustering. Blocking is a crucial technique that groups similar records together to reduce the number of comparisons needed, which is essential for maintaining performance when processing millions of records.
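Here is a minimal, schema-agnostic Python sketch of that workflow: token blocking, Jaccard-similarity matching, and connected-components clustering. It is not JEDAI’s Java API, it skips the block-processing step, and the records and the 0.5 threshold are hypothetical. With three records, comparing everything would take three comparisons; blocking reduces this to a single candidate pair.

```python
from collections import defaultdict
from itertools import combinations


def tokens(record: dict) -> set:
    """Schema-agnostic: tokenize every attribute value, ignoring attribute names."""
    return {t.strip(".,").lower() for v in record.values() for t in str(v).split()}


def token_blocking(records: dict) -> set:
    """Blocking: only records that share at least one token become candidate pairs."""
    blocks = defaultdict(set)
    for rid, rec in records.items():
        for t in tokens(rec):
            blocks[t].add(rid)
    pairs = set()
    for ids in blocks.values():
        pairs.update(combinations(sorted(ids), 2))
    return pairs


def jaccard(a: set, b: set) -> float:
    return len(a & b) / len(a | b)


def resolve(records: dict, threshold: float = 0.5) -> list:
    # Entity matching: keep candidate pairs whose token sets overlap enough.
    matched = [(a, b) for a, b in token_blocking(records)
               if jaccard(tokens(records[a]), tokens(records[b])) >= threshold]
    # Entity clustering: connected components over matched pairs (union-find).
    parent = {rid: rid for rid in records}

    def find(x):
        while parent[x] != x:
            parent[x] = parent[parent[x]]
            x = parent[x]
        return x

    for a, b in matched:
        parent[find(a)] = find(b)
    clusters = defaultdict(set)
    for rid in records:
        clusters[find(rid)].add(rid)
    return list(clusters.values())


records = {
    "A1": {"name": "John Doe", "city": "Boston"},
    "B7": {"full_name": "J. Doe", "town": "Boston"},
    "B9": {"full_name": "Jane Roe", "town": "Chicago"},
}
print(token_blocking(records))                      # {('A1', 'B7')}
print(sorted(sorted(c) for c in resolve(records)))  # [['A1', 'B7'], ['B9']]
```

Because attribute names are ignored, “name” and “full_name” never need to be aligned first, which is what makes this style of resolution useful for loosely structured web data.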

The tool supports both schema-aware and schema-agnostic entity resolution. Schema-agnostic resolution is particularly useful when dealing with semi-structured data from the web, where the attributes of an entity might not be clearly defined. JEDAI also includes advanced features like progressive entity resolution, which provides the most likely matches first, allowing for real-time applications. Its modular architecture allows users to swap different algorithms for each step of the process, making it a favorite for large-scale integration projects in sectors like finance, e-commerce, and government registry management.

5. BigGorilla for Data Preparation

BigGorilla is an open-source ecosystem that provides modular components for data preparation. Instead of being a single monolithic “app,” it offers a collection of tools for data acquisition, schema matching, and entity matching, all designed to work seamlessly within the Python data science stack.

This modular approach is perfect for data scientists who want to pick and choose specific components to integrate into their existing pipelines. For example, you might use BigGorilla’s “FlexMatcher” for automated schema mapping, which uses machine learning to suggest how columns from a new dataset should map to your master schema. This is significantly faster than manual mapping, especially when dealing with hundreds of source files.
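FlexMatcher learns mappings from both column names and data values. The Python sketch below swaps in something much simpler, plain name similarity from the standard library’s `difflib`, just to show the shape of a mapping suggester. The column names and the 0.6 cutoff are hypothetical, and this is not BigGorilla’s API.

```python
from difflib import SequenceMatcher


def normalize(name: str) -> str:
    return name.lower().replace("_", " ").replace("-", " ").strip()


def suggest_mappings(source_cols: list, target_cols: list, min_score: float = 0.6) -> dict:
    """Suggest a target column for each source column by name similarity alone."""
    suggestions = {}
    for src in source_cols:
        score, best = max((SequenceMatcher(None, normalize(src), normalize(tgt)).ratio(), tgt)
                          for tgt in target_cols)
        if score >= min_score:
            suggestions[src] = (best, round(score, 2))
    return suggestions


master_schema = ["patient_id", "date_of_birth", "postal_code"]
new_file = ["PatientID", "DOB", "Postal-Code", "notes"]
print(suggest_mappings(new_file, master_schema))
# {'PatientID': ('patient_id', 0.95), 'Postal-Code': ('postal_code', 1.0)}
```

“DOB” gets no suggestion, which is exactly where a learned matcher that also inspects column values (dates look like dates regardless of the header) earns its keep.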

BigGorilla emphasizes reusability and community collaboration. It provides a platform for researchers to share their data preparation workflows, allowing teams to build custom solutions without reinventing the wheel for every new dataset. The ecosystem integrates with popular Python libraries like Pandas and Scikit-learn, making it easy to incorporate into existing machine learning workflows. By focusing on the “data preparation” phase of the pipeline, BigGorilla addresses the most time-consuming part of the data science lifecycle, enabling faster transitions from raw data to model training.

6. DataHarmonizer for Clinical Templates

DataHarmonizer is a web-based tool originally developed for genomic epidemiology (specifically during the COVID-19 pandemic). It provides a spreadsheet-like interface but with a powerful twist: it enforces strict validation against standardized templates in real-time. This is known as “prospective harmonization,” where data is standardized at the point of entry rather than after it has been collected.

It is particularly useful for ensuring that data collected at the source—such as in a clinic or lab—is already “harmonized” before it ever reaches a central database. The tool uses the LinkML (Link Modeling Language) framework to define schemas, which allows for complex validation rules including controlled vocabularies, regular expressions, and conditional logic. For example, if a user enters “Positive” for a COVID test result, DataHarmonizer can require that a “Ct value” also be provided.
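A minimal Python sketch of this kind of template validation follows. The slot names, the two-letters-plus-six-digits sample ID pattern, and the controlled vocabulary are hypothetical, and the dictionary only imitates what a LinkML schema can express; DataHarmonizer itself runs in the browser and reads real LinkML schemas.

```python
import re

# Hypothetical template that imitates what a LinkML schema can express:
# a controlled vocabulary, a regex pattern, and (below) a conditional rule.
TEMPLATE = {
    "test_result": {"required": True, "enum": {"Positive", "Negative", "Inconclusive"}},
    "sample_id": {"required": True, "pattern": r"[A-Z]{2}-\d{6}"},
    "ct_value": {"required": False},
}


def validate_row(row: dict) -> list:
    """Return human-readable errors for one spreadsheet row (empty list = valid)."""
    errors = []
    for slot, rule in TEMPLATE.items():
        value = row.get(slot)
        if value in (None, ""):
            if rule["required"]:
                errors.append(f"{slot}: required")
            continue
        if "enum" in rule and value not in rule["enum"]:
            errors.append(f"{slot}: {value!r} is not in the controlled vocabulary")
        if "pattern" in rule and not re.fullmatch(rule["pattern"], str(value)):
            errors.append(f"{slot}: {value!r} does not match the required pattern")
    # Conditional logic: a positive result must come with a Ct value.
    if row.get("test_result") == "Positive" and row.get("ct_value") in (None, ""):
        errors.append("ct_value: required when test_result is Positive")
    return errors


print(validate_row({"sample_id": "ON-000123", "test_result": "Positive"}))
# ['ct_value: required when test_result is Positive']
print(validate_row({"sample_id": "on123", "test_result": "positive", "ct_value": 24.1}))
# ["test_result: 'positive' is not in the controlled vocabulary", "sample_id: 'on123' does not match the required pattern"]
```

Running these checks as each row is entered, rather than in a later batch job, is what turns cleaning into prevention: the person typing “positive” sees the problem while the sample is still in front of them.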

This approach saves hundreds of hours of cleaning later on and significantly improves data quality. During the pandemic, DataHarmonizer was used by public health labs globally to standardize the metadata associated with SARS-CoV-2 genomic sequences, ensuring that researchers could compare viral variants across different geographic regions. Its ability to work offline in a browser makes it ideal for use in field clinics or labs with intermittent internet connectivity, ensuring that data standardization is never interrupted.

7. OMOP Common Data Model (CDM) Frameworks

The OMOP Common Data Model, covered in depth in our complete OMOP guide, is perhaps the most significant standardization effort in health data today. Managed by the OHDSI (Observational Health Data Sciences and Informatics) community, the OMOP CDM is a standardized framework that allows different healthcare databases, such as Electronic Health Records (EHRs) and insurance claims, to be transformed into a common format.

The power of OMOP lies in its Standardized Vocabularies. It maps local source codes (like a hospital’s internal code for “chest pain”) to international standards like SNOMED-CT, LOINC, and RxNorm. This allows researchers to run the same analytical code across dozens of different hospitals or countries simultaneously. This was famously used by the N3C initiative to harmonize COVID-19 data from four different CDMs into a single OMOP structure for rapid research, creating one of the largest clinical datasets in history.
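To show what mapping local codes to a standard buys you, here is a minimal Python sketch. The site names, the local code `CP01`, and the concept ID `1001` are placeholders (real IDs come from the OHDSI Standardized Vocabularies). The output dictionaries mirror a subset of the columns in OMOP’s `condition_occurrence` table, and unmapped codes get concept ID 0, following OMOP convention.

```python
from datetime import date

# Hypothetical lookup in the spirit of OMOP's SOURCE_TO_CONCEPT_MAP table.
# 1001 is a PLACEHOLDER, not a real OMOP concept_id; real IDs come from the
# OHDSI Standardized Vocabularies.
SOURCE_TO_CONCEPT = {
    ("HOSP_A", "CP01"): 1001,   # hospital A's internal code for "chest pain"
    ("HOSP_B", "R07.9"): 1001,  # ICD-10-CM R07.9, "Chest pain, unspecified"
}


def to_condition_occurrence(site: str, person_id: int, code: str, when: date) -> dict:
    """Build a row with a subset of the columns of OMOP's condition_occurrence table."""
    return {
        "person_id": person_id,
        # Unmapped source codes get concept_id 0, following OMOP convention.
        "condition_concept_id": SOURCE_TO_CONCEPT.get((site, code), 0),
        "condition_start_date": when,
        "condition_source_value": code,  # keep the original code for provenance
    }


rows = [
    to_condition_occurrence("HOSP_A", 1, "CP01", date(2024, 1, 2)),
    to_condition_occurrence("HOSP_B", 2, "R07.9", date(2024, 1, 3)),
    to_condition_occurrence("HOSP_B", 3, "XYZ", date(2024, 1, 4)),
]
# The same query now works across both sites because both codes share one concept.
chest_pain = [r["person_id"] for r in rows if r["condition_concept_id"] == 1001]
print(chest_pain)  # [1, 2]
```

Keeping the original code in `condition_source_value` preserves lineage: an analyst can always trace a standardized concept back to what the hospital actually recorded.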

If you are looking to align EHR data, we recommend reading our NIAID data harmonization guide for OMOP or taking advantage of our free OMOP mapping offer to get started. The OHDSI community also provides a suite of open-source tools, such as “White Rabbit” for data profiling and “Usagi” for semi-automated code mapping, which simplify the complex ETL (Extract, Transform, Load) process into the OMOP format. By adopting OMOP, organizations can participate in global research networks, vastly increasing the statistical power of their studies and accelerating the discovery of new medical insights.

Leading Commercial Data Harmonization Tools and Platforms

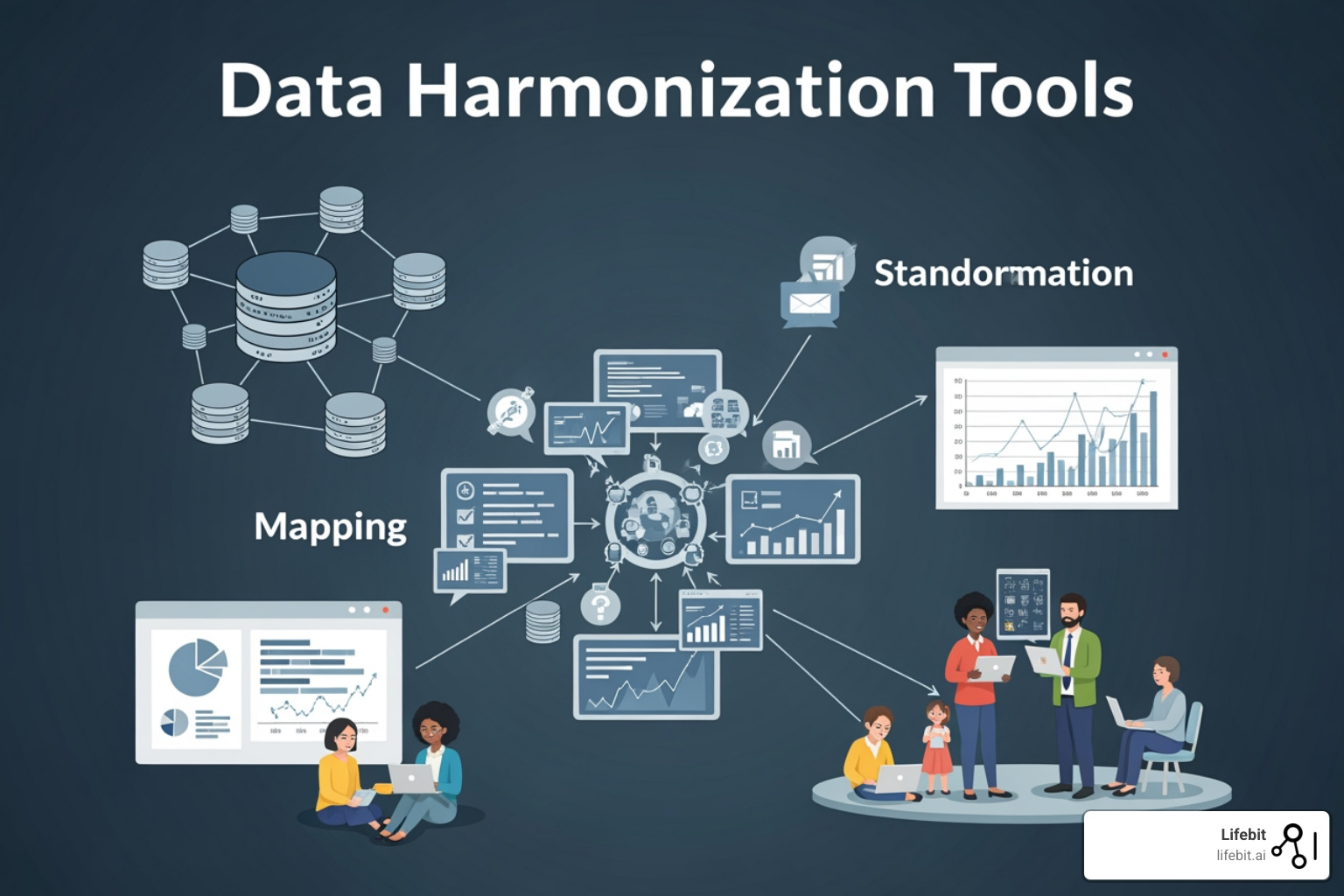

While open-source tools are fantastic for specific tasks, enterprise-level research often requires the heavy lifting of commercial platforms. These tools prioritize automation, real-time sync, and no-code interfaces that allow non-technical stakeholders, such as clinicians and policy makers, to participate in the harmonization process. Commercial platforms often include built-in connectors for hundreds of data sources, from Salesforce and SAP to specialized laboratory information management systems (LIMS).

An end-to-end analysis of health data standardisation suggests that commercial platforms can deliver a higher ROI for large organizations. By automating much of the manual labor involved in data mapping and cleaning, teams can redirect their most expensive assets, data scientists and clinicians, toward actual discovery and patient care. Key benefits of health data standardisation include improved data quality, faster time-to-insight, better regulatory compliance, and the ability to leverage advanced AI analytics.

Choosing the Right Data Harmonization Tools for Your Organization

Selecting a tool isn’t just about features; it’s about fit. You must consider several critical factors:

- Scalability: Can the tool handle your data volume as it grows from gigabytes to petabytes? Enterprise tools often use distributed computing frameworks like Spark to process data in parallel.

- Ease of Use: Will your team need a PhD in computer science to run it, or are there intuitive, drag-and-drop interfaces? The “democratization of data” requires tools that are accessible to domain experts.

- Specific Use Cases: Does the tool understand your domain? For example, VITO’s HBM data harmonization service is specifically tailored for human biomonitoring, while other tools might focus on financial transactions or supply chain logistics.

- Governance & Compliance: Does it meet GDPR, HIPAA, or ISO standards for data protection? In healthcare, data lineage (the ability to track exactly how a piece of data was transformed from source to destination) is often expected by regulators and auditors.

- Interoperability: Does the tool support industry standards like FHIR (Fast Healthcare Interoperability Resources) or the OMOP CDM? Avoid tools that lock your data into a proprietary format.

Addressing clinical challenges in health data standardisation requires a tool that balances power with strict security controls. Modern platforms now offer “Data Clean Rooms,” where multiple parties can collaborate on harmonized data without ever seeing the raw, sensitive records of the other participants.

Future Trends: AI, Federated Learning, and CIDM

The future of data harmonization tools is being shaped by “Agentic AI” and privacy-preserving technologies. Our complete guide to AI for data harmonization explores how machine learning can now “learn” to map metadata, reducing manual intervention even further. Agentic AI refers to autonomous agents that can explore a new dataset, identify its structure, and suggest the most appropriate harmonization strategy based on historical patterns.

Generative AI is also reshaping real-world evidence built on OMOP, making it possible to create “digital twins” or synthetic data to fill gaps in datasets. If a clinical trial is missing data for a specific demographic, generative models can create statistically realistic synthetic patients to help keep the analysis robust. We are also seeing the rise of Common Image Data Models (CIDM) to standardize pathology and radiology images, allowing AI models to be trained on diverse imaging data from around the world.

Perhaps the most exciting trend is Federated Learning. This allows AI models to be trained across multiple secure sites without the data ever leaving its original location. Instead of moving the data to the model (which raises massive privacy and security concerns), the model is moved to the data. Harmonization is still critical here; for federated learning to work, the data at every site must be harmonized to the same standard so the model can learn consistently. This approach is currently being used to train AI for early cancer detection across networks of hospitals while patient records remain inside each hospital. As these technologies mature, the focus will shift from “how do we clean this data?” to “how do we most effectively use this harmonized knowledge?”
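Federated averaging (FedAvg) is one common way to implement this. The Python sketch below trains a one-parameter linear model at two hypothetical hospitals and shares only the fitted weight with a coordinating server. The data, learning rate, and round counts are invented for illustration, and this is not Lifebit’s implementation.

```python
# Hypothetical sites: each holds (x, y) pairs that never leave the site.
SITES = {
    "hospital_a": [(1.0, 2.1), (2.0, 3.9), (3.0, 6.2)],
    "hospital_b": [(1.5, 3.0), (2.5, 5.1)],
}


def local_update(w: float, data: list, lr: float = 0.05, epochs: int = 20) -> float:
    """Fit y = w * x on one site's data by gradient descent; only w is returned."""
    for _ in range(epochs):
        grad = sum(2 * (w * x - y) * x for x, y in data) / len(data)
        w -= lr * grad
    return w


def federated_averaging(rounds: int = 5) -> float:
    w_global = 0.0
    n_total = sum(len(d) for d in SITES.values())
    for _ in range(rounds):
        # The model (w) is sent to each site; the raw records stay where they are.
        local = {site: local_update(w_global, data) for site, data in SITES.items()}
        # The server averages the returned weights, weighted by each site's sample count.
        w_global = sum(local[s] * len(SITES[s]) / n_total for s in SITES)
    return w_global


print(round(federated_averaging(), 2))
```

It also shows why harmonization still matters: if one site recorded `x` in different units, averaging the two weights would produce a meaningless model.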

Frequently Asked Questions about Data Harmonization Tools

What is the difference between data integration and data harmonization?

Data integration is the process of combining data from different sources into a single view (the “plumbing”). Data harmonization is the process of ensuring that the data from those different sources is consistent, comparable, and uses the same “language” (the “translation”).

How do AI and machine learning improve the harmonization process?

AI can automate the most tedious parts of harmonization, such as detecting duplicate records (entity resolution), suggesting mappings between different schemas, and even translating clinical notes into structured codes using Natural Language Processing (NLP).

Why is the OMOP CDM important for healthcare data harmonization?

OMOP provides a “Universal Translator” for health data. By converting diverse EHR systems into the OMOP format, researchers can run the same analytical code across dozens of different hospitals or countries simultaneously, vastly increasing the power of their studies.

Conclusion: Stop Cleaning, Start Discovering

The era of spending 80% of your time on data janitorial work is over. Whether you choose an open-source tool like OpenRefine or a comprehensive framework like OMOP, the goal is the same: to turn fragmented data into a strategic asset.

At Lifebit, we believe that the most valuable data shouldn’t be the hardest to use. Lifebit’s data standardisation approach uses federated AI to provide secure, real-time access to global biomedical data. By integrating data harmonization tools directly into our platform, we enable researchers to move from raw data to actionable insights in record time.

Explore our data harmonization use case to see how we help global biopharma and government agencies conquer data silos. Ready to transform your research? Secure your data with Lifebit today.