Cracking the Code on Analyzing EHR Data for Better Research Outcomes

Why Integrating and Analyzing EMR/EHR for Research Is Changing Medicine Today

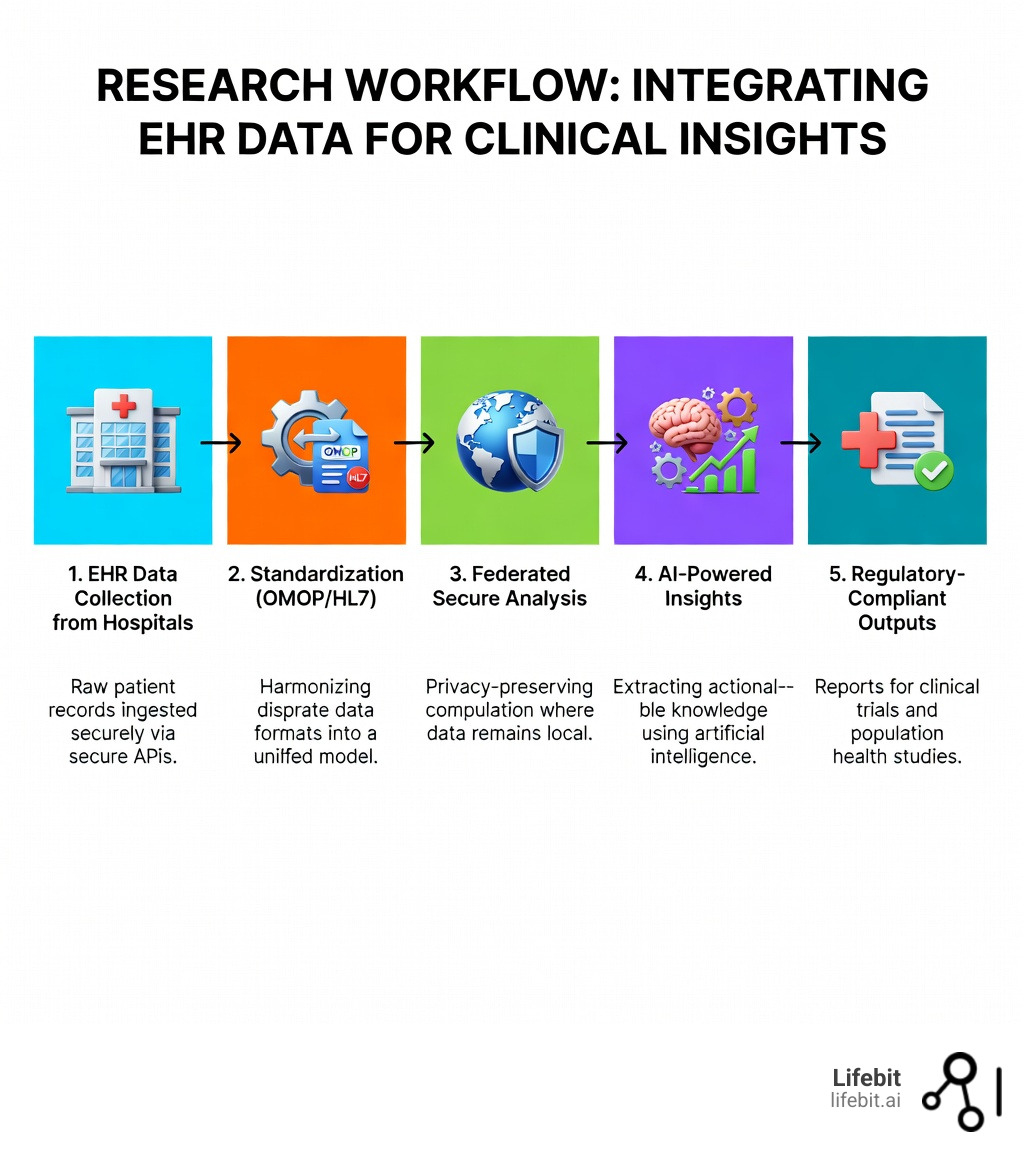

Integrating and analyzing EMR/EHR for research opens up massive datasets that can accelerate clinical trials, improve patient outcomes, and cut research costs by up to 30%. Here’s what you need to know:

- Start with standards: Use HL7, FHIR, or OMOP to ensure interoperability across systems

- Leverage automation: AI and NLP tools can extract insights from unstructured data 50% faster

- Mind the regulations: HIPAA, Common Rule, and IRB approval are non-negotiable for secondary use

- Focus on data quality: Address completeness, consistency, and bias before analysis

- Build for scale: Federated platforms let you analyze data in situ without moving sensitive records

Electronic health records have evolved from simple digital filing cabinets into powerful research engines. Over 80% of U.S. hospitals now use EMR systems, creating an unprecedented opportunity to study real-world patient populations. The HITECH Act of 2009 accelerated this shift, turning routine clinical data into a goldmine for pharmacovigilance, cohort identification, and treatment optimization.

But raw EHR data is messy. It lives in siloed systems, comes in dozens of formats, and often contains errors that propagate across visits. Researchers face real barriers: data entry mistakes in 15.3% of transferred records, inconsistent coding practices, and privacy regulations that require careful navigation. The promise is huge—automated recruitment, real-time monitoring, reduced trial costs—but only if you get the infrastructure right.

I’m Maria Chatzou Dunford, CEO of Lifebit, where I’ve spent over 15 years building platforms that make integrating and analyzing EMR/EHR for research practical and secure for global pharma, public health agencies, and academic institutions. My work bridges computational biology, AI, and federated analytics to open up insights from siloed health data without compromising patient privacy.


The Evolution of Digital Records: From Simple Filing to Research Powerhouses

We’ve come a long way since the days of handwritten notes stuffed into manila folders. As “From papyrus to the electronic tablet: A brief history of the clinical medical record” shows, the goal of recording patient care has remained the same for 3,500 years, but the medium has fundamentally shifted.

The real change began in the 1960s with prototypes like the Problem-Oriented Medical Information System. Institutions like the Mayo Clinic and the U.S. Department of Veterans Affairs (with its VistA system) pioneered the transition to digital. However, adoption remained a slow burn for decades. It wasn’t until the HITECH Act in 2009 that the “wave finally broke.” By 2011, more than half of physicians were using electronic systems, and by 2021, hospital adoption in the U.S. soared past 80%.

This evolution isn’t just about going paperless; it’s about the “secondary use” of data. EMRs (Electronic Medical Records) were originally digital versions of a single practice’s charts. Today’s EHRs (Electronic Health Records) are designed to follow the patient across different providers and systems. For us as researchers, this means access to longitudinal data—a continuous story of a patient’s health over years, rather than a single snapshot. This historical depth is what allows us to identify rare disease cohorts, like Kaiser Permanente did for membranous nephropathy, or predict disease progression with unprecedented accuracy.

Overcoming the Infrastructure Barrier: Standards for Integrating and Analyzing EMR/EHR for Research

If you’ve ever tried to merge data from two different hospitals, you know the “interoperability nightmare.” One system uses custom codes for lab results; another stores everything as a scanned PDF. To make integrating and analyzing EMR/EHR for research work, we have to speak a common language.

We rely on international standards to bridge these gaps:

- HL7 & FHIR: The gold standard for exchanging health information. FHIR (Fast Healthcare Interoperability Resources) is the modern, API-based evolution that allows for real-time data streaming.

- DICOM: Essential for medical imaging, ensuring that a CT scan from London can be analyzed by a researcher in New York without losing metadata.

- OMOP Common Data Model: This is our secret weapon for large-scale analysis. By transforming diverse EHR data into the OMOP format, we can run the same analytical code across dozens of different hospital databases simultaneously.

- BPMN & UML: These help us model the complex clinical workflows so we can understand how the data was captured in the first place.
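To make the FHIR-to-OMOP idea concrete, here is a minimal Python sketch that flattens a single FHIR Observation (a lab result) into a row shaped like a subset of the OMOP MEASUREMENT table. It assumes the Observation carries a LOINC coding and a `valueQuantity`. The `LOINC_TO_CONCEPT` lookup and its concept IDs are hypothetical placeholders (real mappings come from the OHDSI standardized vocabularies), and a production ETL would also need to handle components, unit conversion, and multiple codings.

```python
import json
from datetime import date

# Hypothetical lookup: LOINC code -> OMOP standard concept_id.
# The IDs below are placeholders, NOT real OMOP concept IDs.
LOINC_TO_CONCEPT = {
    "2345-7": 1001,  # Glucose [Mass/volume] in Serum or Plasma
    "718-7": 1002,   # Hemoglobin [Mass/volume] in Blood
}
LOINC_SYSTEM = "http://loinc.org"


def fhir_observation_to_measurement(resource: dict, person_id: int) -> dict:
    """Flatten one FHIR Observation into an OMOP-style MEASUREMENT row (subset of columns)."""
    if resource.get("resourceType") != "Observation":
        raise ValueError("expected a FHIR Observation resource")
    # FHIR allows several codings per concept; take the first LOINC one.
    loinc = next(
        (c["code"] for c in resource["code"]["coding"] if c.get("system") == LOINC_SYSTEM),
        None,
    )
    if loinc is None:
        raise ValueError("Observation has no LOINC coding")
    quantity = resource["valueQuantity"]
    return {
        "person_id": person_id,
        # OMOP uses concept_id 0 for source codes with no standard mapping.
        "measurement_concept_id": LOINC_TO_CONCEPT.get(loinc, 0),
        "measurement_source_value": loinc,
        "measurement_date": date.fromisoformat(resource["effectiveDateTime"][:10]),
        "value_as_number": float(quantity["value"]),
        "unit_source_value": quantity.get("unit"),
    }


# Example: a glucose result as it might arrive from a FHIR API.
observation = json.loads("""
{
  "resourceType": "Observation",
  "status": "final",
  "code": {"coding": [{"system": "http://loinc.org", "code": "2345-7",
                       "display": "Glucose [Mass/volume] in Serum or Plasma"}]},
  "effectiveDateTime": "2023-04-01T09:30:00Z",
  "valueQuantity": {"value": 105, "unit": "mg/dL"}
}
""")
row = fhir_observation_to_measurement(observation, person_id=42)
print(row)
```

Keeping the original LOINC code in `measurement_source_value` alongside the mapped concept lets the same analysis code run across sites while preserving a trail back to the source data.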

| Feature | EMR (Medical Record) | EHR (Health Record) |
|---|---|---|
| Scope | Single practice/clinic | Multi-institutional/National |
| Interoperability | Low (Proprietary) | High (Standards-based) |
| Research Utility | Limited to specific cohorts | Population-level longitudinal studies |
| Patient Access | Usually restricted | Integrated patient portals |

The structure of the healthcare system itself also plays a role. In “Beveridge model” systems (like the UK’s NHS), data is often more centralized, making it easier to build national research databases. In “Bismarck model” systems (common in parts of Europe), data can be more fragmented across multiple insurers and providers. That fragmentation calls for more robust clinical source data production and quality control (see “Clinical source data production and quality control in real-world studies”) to ensure the data is “research-ready.”

Best Practices for Integrating and Analyzing EMR/EHR for Research in Trial Design

When we design trials today, we’re no longer limited to the “traditional” model. Integrating and analyzing EMR/EHR for research allows us to use Real-World Data (RWD) to create External Control Arms. Instead of recruiting hundreds of patients for a placebo group, we can sometimes use historical EHR data from patients who received the standard of care. This doesn’t just save money—it’s often more ethical when testing life-saving treatments for rare diseases.
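A real external control arm is usually built with propensity scores and extensive sensitivity analyses. As a much-simplified stand-in, the sketch below pairs each trial participant with one historical EHR patient: exact match on sex and a hypothetical disease-stage field, then the nearest age within a caliper, with each control used at most once. The `Patient` fields, the 5-year caliper, and the greedy strategy are all illustrative assumptions.

```python
from dataclasses import dataclass
from typing import Dict, List, Optional


@dataclass(frozen=True)
class Patient:
    pid: str
    age: int
    sex: str
    stage: int  # hypothetical disease-stage field


def match_external_controls(
    treated: List[Patient], historical: List[Patient], caliper: int = 5
) -> Dict[str, Optional[str]]:
    """Greedy 1:1 matching without replacement.

    Exact match on sex and stage, then the closest age within `caliper` years.
    Returns treated pid -> matched control pid, or None if nobody qualifies.
    """
    available = list(historical)
    pairs: Dict[str, Optional[str]] = {}
    for t in treated:
        candidates = [
            h for h in available
            if h.sex == t.sex and h.stage == t.stage and abs(h.age - t.age) <= caliper
        ]
        if not candidates:
            pairs[t.pid] = None
            continue
        best = min(candidates, key=lambda h: abs(h.age - t.age))
        pairs[t.pid] = best.pid
        available.remove(best)  # each historical patient is used at most once
    return pairs


treated = [Patient("T1", 54, "F", 2), Patient("T2", 67, "M", 3)]
historical = [
    Patient("H1", 52, "F", 2),
    Patient("H2", 70, "M", 3),
    Patient("H3", 55, "F", 2),
    Patient("H4", 40, "M", 3),
]
print(match_external_controls(treated, historical))
```

Greedy matching is order-dependent, and participants left unmatched (returned as `None`) are exactly the cases where the historical population may not be comparable to the trial population.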

Pragmatic trials are another innovation. These trials happen within the normal clinical workflow. By using the EHR for automated data collection, we reduce the burden on site staff and capture how a drug works in a diverse, “real-world” population, rather than just the highly screened patients found in traditional RCTs.

Technical Requirements for Integrating and Analyzing EMR/EHR for Research

To get under the hood, your infrastructure needs three things:

- Data Linkage Capabilities: You must be able to link EHR data with other sources—like genomic data or pharmacy claims—without re-identifying the patient.

- Harmonization Engines: Tools that automatically map local hospital codes (like “BP Low”) to standardized concepts (like LOINC codes for blood pressure).

- Certification: Using systems certified under the ONC Health IT Certification Program (earlier, CCHIT) or similar schemes helps ensure the data you’re pulling is reliable and audit-ready for regulatory bodies like the FDA or EMA.
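One common way to link records “without re-identifying the patient” is for each data holder to replace direct identifiers with a keyed hash (HMAC) computed under a secret key that is never published. The sketch below assumes exact matching on normalized name and date of birth; the key, the field choices, and the example values are hypothetical. Exact-match tokens break on typos, which is why probabilistic privacy-preserving linkage methods also exist.

```python
import hashlib
import hmac
import unicodedata


def normalize(value: str) -> str:
    """Lowercase and strip accents and whitespace so formatting differences still link."""
    decomposed = unicodedata.normalize("NFKD", value)
    without_accents = "".join(ch for ch in decomposed if not unicodedata.combining(ch))
    return "".join(without_accents.lower().split())


def linkage_token(secret_key: bytes, *fields: str) -> str:
    """HMAC-SHA256 over normalized identifiers; only this token needs to be shared."""
    message = "|".join(normalize(f) for f in fields).encode("utf-8")
    return hmac.new(secret_key, message, hashlib.sha256).hexdigest()


# Hypothetical key, distributed out of band to both data holders (never hard-code in practice).
KEY = b"hypothetical-shared-linkage-key"

ehr = {linkage_token(KEY, "José García", "1980-02-14"): {"hba1c_pct": 7.9}}
claims = {linkage_token(KEY, "jose  garcia", "1980-02-14"): {"metformin_fills": 6}}

# The research environment joins on tokens only.
linked = {tok: {**ehr[tok], **claims[tok]} for tok in ehr.keys() & claims.keys()}
print(linked)
```

Tokens like these are pseudonyms rather than anonymous data, so the linked output still belongs inside a governed environment.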

Accelerating Clinical Trials: Recruitment, RWD, and External Control Arms

One of the most painful parts of any trial is recruitment. We’ve all seen trials stall because they can’t find enough eligible patients. Integrating and analyzing EMR/EHR for research changes the math. In gynecologic oncology, for example, AI-enabled patient matching using EMRs has increased enrollment efficiency by over 50%.

Instead of research coordinators manually sifting through paper charts, we can run automated queries across millions of records to find patients who meet specific inclusion criteria. This doesn’t just speed things up; it makes recruitment more equitable by identifying eligible patients in community clinics, not just big academic centers.
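An automated eligibility query boils down to evaluating inclusion and exclusion criteria against structured fields. The sketch below uses made-up criteria (adults 18 to 75 with type 2 diabetes coded as ICD-10-CM E11.*, a most recent HbA1c under LOINC 4548-4 of at least 7.5%, and no CKD stage 4 or 5 coded as N18.4/N18.5) on a hypothetical in-memory `Record` type. A real deployment would more likely run equivalent logic as a query over an OMOP or FHIR data store.

```python
from dataclasses import dataclass, field
from datetime import date
from typing import Dict, Set


@dataclass
class Record:
    pid: str
    birth_date: date
    diagnoses: Set[str] = field(default_factory=set)      # ICD-10-CM codes
    labs: Dict[str, float] = field(default_factory=dict)  # LOINC code -> latest value


def age_on(birth: date, today: date) -> int:
    """Whole years of age on `today`."""
    return today.year - birth.year - ((today.month, today.day) < (birth.month, birth.day))


def is_eligible(r: Record, today: date) -> bool:
    """Hypothetical criteria, for illustration only."""
    inclusion = (
        18 <= age_on(r.birth_date, today) <= 75
        and any(code.startswith("E11") for code in r.diagnoses)  # type 2 diabetes
        and r.labs.get("4548-4", 0.0) >= 7.5                      # HbA1c (%)
    )
    exclusion = bool(r.diagnoses & {"N18.4", "N18.5"})            # CKD stage 4 or 5
    return inclusion and not exclusion


cohort = [
    Record("P1", date(1970, 5, 1), {"E11.9"}, {"4548-4": 8.1}),
    Record("P2", date(1960, 1, 1), {"E11.65", "N18.4"}, {"4548-4": 9.0}),
    Record("P3", date(2010, 1, 1), {"E11.9"}, {"4548-4": 8.0}),
    Record("P4", date(1980, 3, 3), {"I10"}, {"4548-4": 7.9}),
]
eligible = [r.pid for r in cohort if is_eligible(r, today=date(2024, 6, 1))]
print(eligible)
```

Because the criteria are plain functions, the same screen can be re-run whenever new results arrive, and each exclusion reason can be logged for audit.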

Furthermore, “Electronic health records to facilitate clinical research” shows that integration reduces “administrative drag.” When primary care and specialist systems are synced, wait times for referrals can drop by an average of 16.49 days. For a clinical trial, that means faster screening, faster enrollment, and ultimately, getting treatments to patients sooner.

Supercharging Data Interpretation with AI, ML, and NLP

EHR data is a “treasure trove,” but it’s also a mess. About 80% of useful clinical information is buried in “unstructured” text—doctor’s notes, discharge summaries, and radiology reports. This is where Artificial Intelligence (AI) and Natural Language Processing (NLP) come in.

We use NLP to “read” these notes at scale. For instance, in a study of Interstitial Lung Disease (ILD), NLP was used to detect specific patterns in radiology reports with 94.29% accuracy. This allowed researchers to identify patients who had the disease but lacked the proper diagnostic code in their file.
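The source does not describe how the ILD study’s NLP pipeline worked. Purely to illustrate the general idea, here is a rule-based sketch in the spirit of NegEx: it looks for finding terms in each sentence of a report and ignores a term when a negation cue appears earlier in the same sentence. The term and cue lists are hypothetical, and the ICD-10 category `J84` (other interstitial pulmonary diseases) is used only to show how NLP hits can flag patients who lack a matching diagnosis code.

```python
import re

# Hypothetical lexicons for illustration; real studies use curated, validated lists.
FINDING_TERMS = ["honeycombing", "traction bronchiectasis", "reticular opacities", "ground-glass"]
NEGATION_CUES = ["no", "without", "negative for", "absence of"]
SENTENCE_END = re.compile(r"(?<=[.!?])\s+")


def asserted_findings(report: str) -> set:
    """Finding terms whose first mention in a sentence has no preceding negation cue."""
    found = set()
    for sentence in SENTENCE_END.split(report.lower()):
        for term in FINDING_TERMS:
            idx = sentence.find(term)
            if idx == -1:
                continue
            preceding = sentence[:idx]
            negated = any(re.search(rf"\b{re.escape(cue)}\b", preceding) for cue in NEGATION_CUES)
            if not negated:
                found.add(term)
    return found


def needs_review(report: str, diagnosis_codes: set) -> bool:
    """Flag patients whose reports suggest ILD but who carry no J84.* code."""
    has_code = any(code.startswith("J84") for code in diagnosis_codes)
    return bool(asserted_findings(report)) and not has_code


report = "Bibasilar reticular opacities with honeycombing. No evidence of traction bronchiectasis."
print(asserted_findings(report))
print(needs_review(report, {"I10"}))
```

Heuristics like this miss negations that follow the term (“honeycombing is absent”) and hedged language, which is one reason published pipelines are validated against manually reviewed charts.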

Machine Learning (ML) takes it a step further by predicting outcomes. We’ve seen ML models use VA EHR data to predict acute kidney injury up to 48 hours before it occurs. Clinical decision support tools like this (see “Clinical decision support tools in the electronic medical record”) aren’t just for research; they’re a direct path to saving lives in real time.

Leveraging AI when Integrating and Analyzing EMR/EHR for Research

AI helps us solve the “missing data” problem. If a patient’s race is missing from their record (which happens in up to 40% of encounters in some studies), AI can often infer missing values by looking at patterns across the rest of the dataset. It also helps with automated screening, flagging patients for trials the moment a relevant lab result is uploaded to the system.

Enhancing Accuracy when Integrating and Analyzing EMR/EHR for Research

The “garbage in, garbage out” rule applies here. We use AI to detect error propagation. If a wrong diagnosis is entered once, it often gets copied and pasted into every subsequent note. Our advanced algorithms can spot these inconsistencies and flag them for review, ensuring that our research is based on the most accurate “truth set” possible.
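One simple, well-known signal of copy-forward is near-duplicate text between consecutive notes. The sketch below compares notes using word 5-gram “shingles” and Jaccard similarity, and flags any note that is at least 80% similar to the one before it. The threshold and shingle size are illustrative assumptions, and this is not a description of Lifebit’s algorithms; a flagged note still needs human review, because copied text is not necessarily wrong.

```python
from typing import List, Set, Tuple


def shingles(text: str, k: int = 5) -> Set[Tuple[str, ...]]:
    """Word k-grams; very short notes yield a single shingle of all their words."""
    words = text.lower().split()
    return {tuple(words[i:i + k]) for i in range(max(len(words) - k + 1, 1))}


def jaccard(a: set, b: set) -> float:
    union = a | b
    return len(a & b) / len(union) if union else 0.0


def flag_copy_forward(notes: List[str], threshold: float = 0.8) -> List[int]:
    """Indices of notes that are near-duplicates of the note just before them."""
    return [
        i for i in range(1, len(notes))
        if jaccard(shingles(notes[i - 1]), shingles(notes[i])) >= threshold
    ]


notes = [
    "Patient reports chest pain radiating to left arm. Assessment: unstable angina. Plan: cardiology consult.",
    "Patient reports chest pain radiating to left arm. Assessment: unstable angina. Plan: cardiology consult.",
    "Chest pain resolved after catheterization. Assessment: stable, ambulating well. Plan: discharge home tomorrow.",
]
print(flag_copy_forward(notes))
```

Comparing every pair of notes is quadratic; at scale, techniques such as MinHash approximate the same Jaccard similarity far more cheaply.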

Navigating the Ethical and Regulatory Minefield of Secondary Data Use

We can’t talk about integrating and analyzing EMR/EHR for research without talking about privacy. Patients trust us with their most sensitive information, and we take that responsibility seriously.

The regulatory landscape, including HIPAA in the U.S., the GDPR in Europe, and the Common Rule, provides the framework for what we can and cannot do. Key to this is de-identification. We use techniques like “date shifting” (moving every date in a patient’s record by the same random number of days) to protect anonymity while preserving the temporal relationships in the data.
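A minimal date-shifting sketch, assuming one offset per patient: every date in that patient’s record moves by the same number of days, so intervals between events survive. Here the offset is derived from a keyed hash of the patient ID instead of a fresh random draw, an assumption that keeps extracts reproducible while leaving offsets unpredictable without the key. The ±365-day range and the key are hypothetical.

```python
import hashlib
import hmac
from datetime import date, timedelta
from typing import List


def patient_offset(secret: bytes, patient_id: str, max_days: int = 365) -> int:
    """Deterministic per-patient offset in [-max_days, max_days], derived from a secret key."""
    digest = hmac.new(secret, patient_id.encode("utf-8"), hashlib.sha256).digest()
    return int.from_bytes(digest[:8], "big") % (2 * max_days + 1) - max_days


def shift_dates(secret: bytes, patient_id: str, dates: List[date]) -> List[date]:
    """Move every date for one patient by the same offset, so intervals are preserved."""
    offset = timedelta(days=patient_offset(secret, patient_id))
    return [d + offset for d in dates]


visits = [date(2021, 3, 1), date(2021, 3, 15), date(2021, 6, 1)]
shifted = shift_dates(b"hypothetical-site-secret", "MRN-001", visits)
gaps_before = [(b - a).days for a, b in zip(visits, visits[1:])]
gaps_after = [(b - a).days for a, b in zip(shifted, shifted[1:])]
print(gaps_before, gaps_after)  # the two lists are identical
```

In practice you would also decide how to handle an offset of zero and keep the key inside the trusted environment.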

But de-identification involves trade-offs. HIPAA’s “Safe Harbor” method removes 18 specified categories of identifiers, including most date elements, which can strip out detail that complex research needs. In those cases, researchers often work with limited data sets governed by Data Use Agreements (DUAs) that strictly limit who can see the data and for what purpose. This is why “Toward a national framework for the secondary use of health data” is so critical. We are moving toward a world where data isn’t “given away” but accessed securely through Trusted Research Environments (TREs).
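HIPAA’s Safe Harbor method specifies 18 categories of identifiers to remove. The sketch below applies a few of its rules to a flat record: drop direct identifiers (only some of the 18 are listed), truncate ZIP codes to three digits and report “000” when that three-digit area has 20,000 people or fewer, reduce other dates to the year, and group ages over 89 into a single 90+ category (dropping the birth date, whose year would reveal the age). The field names and the `restricted_zip3` set are hypothetical; the real list of restricted prefixes must be derived from current Census data.

```python
from datetime import date

# Only a few of Safe Harbor's 18 identifier categories, for illustration.
DIRECT_IDENTIFIERS = {"name", "phone", "email", "ssn", "mrn"}


def safe_harbor_generalize(record: dict, restricted_zip3: set) -> dict:
    """Return a generalized copy of `record`; assumes hypothetical field names."""
    over_89 = isinstance(record.get("age"), int) and record["age"] > 89
    out = {}
    for key, value in record.items():
        if key in DIRECT_IDENTIFIERS:
            continue  # drop direct identifiers entirely
        if key == "zip":
            zip3 = value[:3]
            # Low-population three-digit areas must be reported as "000".
            out["zip3"] = "000" if zip3 in restricted_zip3 else zip3
        elif key == "age":
            out["age"] = "90+" if over_89 else value
        elif key == "birth_date" and over_89:
            continue  # even the birth year would reveal an age over 89
        elif isinstance(value, date):
            out[key] = value.year  # keep only the year of other dates
        else:
            out[key] = value
    return out


patient = {
    "name": "Ada Example", "mrn": "A-1001", "zip": "02139", "age": 93,
    "birth_date": date(1931, 5, 2), "admit_date": date(2024, 1, 10),
    "diagnosis": "J84.10",
}
print(safe_harbor_generalize(patient, restricted_zip3={"123"}))  # hypothetical set
```

Safe Harbor also requires that the data holder has no actual knowledge that the remaining information could identify someone, which no field-level transformation can check on its own.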

At Lifebit, we use a federated approach. Instead of moving sensitive patient data to a central server (which creates a massive security risk), we “bring the analysis to the data.” The data stays behind the hospital’s firewall, and only aggregated, anonymous results are shared. This design is built to satisfy even strict regulators and keeps patient trust intact.
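To show what “bring the analysis to the data” can mean for a simple statistic, here is a sketch in which each site computes a count, a sum, and a sum of squares behind its own firewall and releases them only if the count meets a minimum cell size. A coordinator then pools the released summaries into an overall mean and sample variance. The 10-patient threshold and the function names are hypothetical, and this is not a description of Lifebit’s platform.

```python
from dataclasses import dataclass
from typing import Iterable, Optional, Tuple

MIN_CELL = 10  # hypothetical disclosure threshold; real values are set by data governance


@dataclass(frozen=True)
class SiteSummary:
    n: int
    total: float
    total_sq: float


def local_summary(values: Iterable[float]) -> Optional[SiteSummary]:
    """Runs inside a site's firewall; returns None instead of releasing small counts."""
    vals = list(values)
    if len(vals) < MIN_CELL:
        return None
    return SiteSummary(len(vals), sum(vals), sum(v * v for v in vals))


def pooled_mean_variance(summaries: Iterable[Optional[SiteSummary]]) -> Tuple[int, float, float]:
    """Runs at the coordinator; sees only the released aggregates."""
    released = [s for s in summaries if s is not None]
    n = sum(s.n for s in released)
    if n < 2:
        raise ValueError("not enough released data")
    total = sum(s.total for s in released)
    total_sq = sum(s.total_sq for s in released)
    mean = total / n
    variance = (total_sq - n * mean * mean) / (n - 1)  # sample variance
    return n, mean, variance


site_a = local_summary(range(1, 11))     # 10 values: released
site_b = local_summary([2.0] * 12)       # 12 values: released
site_c = local_summary([5.0, 6.0, 7.0])  # 3 values: withheld
n, mean, variance = pooled_mean_variance([site_a, site_b, site_c])
print(n, mean, variance)
```

Sums of squares are fine for a sketch but can lose precision on large values; pairwise formulas for combining per-site means and variances are more numerically robust.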

Frequently Asked Questions about EMR/EHR Research

How does EMR integration reduce clinical trial costs?

Integration has been linked to a 7.8% decrease in overall bill size and a 30.4% drop in redundant procedures. By allowing researchers to see which tests have already been performed, we eliminate the need to repeat expensive radiographs or lab panels, significantly lowering the per-patient cost of a trial.

What are the biggest challenges in EHR data quality?

The “big three” are entry errors, lack of interoperability, and unstructured text. Research shows that 15.3% of transferred EMRs contain at least one major error. Furthermore, many systems still use proprietary formats that don’t “talk” to each other, making multi-center studies difficult without heavy data cleaning.
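A first pass at quantifying these problems is a simple per-field profile: how often each field is filled in (completeness) and how often its values fall outside a plausible range (a cheap proxy for entry errors). The field names and plausibility ranges below are hypothetical; real ranges should come from clinical review, and checks for coding consistency or bias need more than this.

```python
from typing import Dict, List, Optional, Tuple

# Hypothetical plausibility ranges; real limits should come from clinical review.
PLAUSIBLE: Dict[str, Tuple[float, float]] = {
    "heart_rate": (20, 250),
    "temp_c": (30.0, 45.0),
}


def profile(records: List[dict], fields: List[str]) -> Dict[str, dict]:
    """Completeness and out-of-range counts per field."""
    report = {}
    for f in fields:
        present = [r[f] for r in records if r.get(f) is not None]
        bounds: Optional[Tuple[float, float]] = PLAUSIBLE.get(f)
        implausible = 0
        if bounds is not None:
            low, high = bounds
            implausible = sum(1 for v in present if not low <= v <= high)
        report[f] = {
            "completeness": len(present) / len(records) if records else 0.0,
            "implausible": implausible,
        }
    return report


vitals = [
    {"heart_rate": 72, "temp_c": 36.8},
    {"heart_rate": 720, "temp_c": None},  # likely a keying error for 72
    {"heart_rate": 88},
    {"temp_c": 37.2},
]
print(profile(vitals, ["heart_rate", "temp_c"]))
```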

How does the HITECH Act impact current medical research?

The 2009 HITECH Act provided over $34 billion in incentives for hospitals to adopt certified EHR technology. This created the massive, standardized digital footprint we use today. Without it, we wouldn’t have the population-level datasets required for modern AI training or large-scale pharmacovigilance.

Conclusion

Integrating and analyzing EMR/EHR for research is no longer a “nice-to-have” experiment—it is the foundation of 21st-century medicine. By moving from siloed paper records to standardized, AI-ready digital ecosystems, we are cutting years off drug development timelines and uncovering insights that were previously invisible.

The path forward requires a commitment to standardized protocols (like FHIR and OMOP), collaborative infrastructure, and ethical governance. We must continue to build systems that respect patient privacy while maximizing the “altruistic value” of their data.

At Lifebit, we are proud to be at the forefront of this shift, providing the federated AI platforms that make this complex work possible. Whether you are identifying a rare disease cohort or running a global phase III trial, the code to better research outcomes is written in the EHR. We just need the right tools to crack it.