Beyond the Spreadsheet: The Best Data Match Software for Every Need

Data Match Software: Best Solutions for 2025

Why Data Match Software is Critical for Modern Organizations

In today’s data-driven world, organizations are drowning in information. Data flows in from every direction—customer interactions, sales transactions, marketing campaigns, social media, and countless third-party sources. While this data holds immense potential, it often exists in fragmented, inconsistent, and duplicated states across various systems. This is where data match software becomes not just a tool, but a strategic necessity. It is the technology that identifies, links, and merges related records across disparate datasets, transforming scattered information into a single, unified, and trustworthy source of truth.

If you’re evaluating solutions, it’s crucial to understand that not all tools are created equal. The market offers a range of options tailored to different needs, scales, and technical capabilities:

Top Data Match Software Categories:

- Enterprise Solutions: These are robust, all-in-one platforms designed for large-scale operations. They offer advanced features for data quality, governance, and master data management (MDM). Companies like Informatica, IBM, and Talend provide comprehensive suites that can handle massive volumes of data and complex integration scenarios. These are ideal for large corporations with dedicated IT and data management teams.

- No-Code/Low-Code Platforms: Catering to business users and data analysts, these tools offer intuitive, visual interfaces that simplify the matching process. They often use drag-and-drop functionality and pre-built templates, allowing non-technical users to clean, match, and merge data without writing a single line of code. Examples include WinPure and OpenRefine.

- Federated & Privacy-Enhancing Solutions: In industries like healthcare and finance, data privacy and security are paramount. Federated data matching solutions allow organizations to analyze and link data across different locations without ever moving or centralizing the raw data. This is achieved by sending queries to the data sources and only returning aggregated, anonymized results. Lifebit’s federated technology is a prime example, enabling secure research on sensitive patient data.

- Specialized & Niche Tools: Some tools are built for very specific purposes. For instance, there are solutions designed exclusively for mainframe data migration, customer data integration (CDI) for marketing, or product information management (PIM) for e-commerce. These tools excel in their specific domain but may lack the versatility of broader platforms.
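The federated approach described in the list above (send the query to the data, return only aggregates) can be sketched in a few lines of Python. This is a minimal illustration under stated assumptions, not Lifebit’s implementation: the `Site` class, the `federated_count` helper, and the policy of suppressing any count below 5 are all hypothetical. Each site evaluates the query against its own records and returns only a number; the raw rows never leave the site.

```python
from dataclasses import dataclass, field
from typing import Callable, Dict, List, Optional, Tuple

Record = Dict[str, str]


@dataclass
class Site:
    """A hypothetical data holder (e.g., a hospital) whose raw records stay local."""
    name: str
    records: List[Record] = field(default_factory=list)

    def run_count_query(self, predicate: Callable[[Record], bool],
                        min_cell_size: int = 5) -> Optional[int]:
        # The query runs locally; only an aggregate count is returned.
        count = sum(1 for r in self.records if predicate(r))
        # Suppress small counts so individuals cannot be singled out (assumed policy).
        return count if count >= min_cell_size else None


def federated_count(sites: List[Site], predicate: Callable[[Record], bool],
                    min_cell_size: int = 5) -> Tuple[int, Dict[str, Optional[int]]]:
    """Send the same query to every site and combine only the returned aggregates."""
    per_site = {s.name: s.run_count_query(predicate, min_cell_size) for s in sites}
    total = sum(c for c in per_site.values() if c is not None)
    return total, per_site


if __name__ == "__main__":
    hospital_a = Site("hospital_a", [{"diagnosis": "T2D"}] * 7 + [{"diagnosis": "CKD"}] * 2)
    hospital_b = Site("hospital_b", [{"diagnosis": "T2D"}] * 3)
    total, per_site = federated_count([hospital_a, hospital_b],
                                      lambda r: r["diagnosis"] == "T2D")
    print(total, per_site)  # hospital_b's count of 3 is suppressed
```

One design consequence worth noting: because suppressed counts are left out of the total, the combined figure can understate the true number, so a real deployment needs an explicit policy for how suppressed cells are reported.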

The stakes couldn’t be higher. Organizations today generate massive amounts of data across multiple systems—from customer records scattered across CRM, e-commerce, and marketing automation platforms to patient data spread across different hospitals, labs, and electronic health record (EHR) systems. Without proper data matching, you’re left with a fragmented, unreliable view of your operations. This leads to costly inefficiencies, poor customer experiences, and flawed business intelligence.

Consider this reality: Gartner estimates that poor data quality costs organizations an average of $12.9 million every year. This isn’t just about wasted marketing spend; it’s about missed sales opportunities, compliance risks, and strategic decisions based on faulty information. Conversely, organizations that invest in robust data management and matching technologies see tangible returns. Vendors report that modern entity resolution can cut the time spent on business partner consolidation during major system migrations by up to 75%, and organizations using advanced data matching report match accuracy as high as 96% along with significant operational cost reductions, giving analytics and AI initiatives a more reliable foundation.

As Maria Chatzou Dunford, CEO and Co-founder of Lifebit, with over 15 years in computational biology and biomedical data integration, I’ve seen firsthand how data match software transforms organizations. It’s the key to unlocking the true value of data, whether it’s for enabling precision medicine breakthroughs by linking genomic and clinical data, or for powering seamless, personalized customer experiences across all touchpoints. The challenge is choosing a solution that aligns not just with your data, but with your specific business goals, regulatory requirements, and long-term vision.

What is Data Matching Software and Why is it Your Secret Weapon?

Picture this: you’re trying to solve a 10,000-piece jigsaw puzzle, but the pieces are scattered across different rooms, some are duplicates, some are slightly different shades of the same color, and others are missing entirely. That’s what managing data in a modern organization feels like. Data match software is your expert puzzle-solver, using sophisticated algorithms to find all the right pieces, fit them together, and reveal the complete, coherent picture you need to make informed decisions.

In today’s digital economy, data is the lifeblood of your business. Every customer interaction, every transaction, every click on your website generates a piece of this puzzle. The problem is, this data rarely lives in one neat, tidy place. It’s spread across your CRM, ERP, marketing automation platform, billing system, and countless other applications. Each system might have its own way of recording information. For example, “John Smith” in one system might be “J. Smith” in another, and “Jonathan Smyth” in a third. His address might be listed as “123 Main St” in one database and “123 Main Street, Apt 4B” in another.

Without a way to reconcile these differences, you’re operating with a fragmented and often inaccurate view of your own business. You might send three identical marketing emails to the same person, frustrating a valuable customer. Your sales team might not know that a prospect they’re calling has an open support ticket, leading to an awkward and unproductive conversation. Your financial forecasts could be based on inflated customer numbers, leading to poor strategic planning. This is where data match software becomes your secret weapon.

It’s the technology that tackles the fundamental challenges of data quality and data consistency. By systematically identifying and linking related records, it enables:

- Deduplication: Eliminating redundant entries to create a clean, single source of truth.

- Entity Resolution: Understanding that “John Smith,” “J. Smith,” and “Jonathan Smyth” at the same address are, in fact, the same person.

- Data Integration: Seamlessly combining data from different systems to build a comprehensive, 360-degree view of your customers, products, or partners.

The result is a dramatic improvement in operational efficiency, more accurate analytics, and a foundation for true data-driven decision-making. For a deeper dive into how this all works, check out our insights on Data Linkage and our Guide to Data Matching Best Practices.

How Data Matching Algorithms Work

The magic behind data match software lies in its sophisticated algorithms, which go far beyond simple, exact comparisons. These algorithms are designed to mimic human intuition but with the speed and scale of a machine, allowing them to find connections in vast and messy datasets.

Here’s a look at the main types of matching algorithms:

- Deterministic Matching: This is the most straightforward approach, often called “rules-based matching.” It works by comparing records based on a set of predefined rules and unique identifiers. For example, a rule might state: “If the Social Security Number and Date of Birth are identical, the records represent the same person.” This method is fast, transparent, and highly accurate when you have reliable, unique identifiers like a customer ID, email address, or government-issued number. However, its rigidity is also its weakness. It fails when there are slight variations, typos, or missing data (e.g., “Jon Smith” vs. “John Smith” would not be matched). It’s best used for initial, high-confidence matching before more advanced techniques are applied.

- Probabilistic Matching: Sometimes loosely called “fuzzy matching,” this is a more advanced and flexible approach. Instead of relying on exact matches, it calculates a probability or a “match score” that indicates the likelihood of two records referring to the same entity. The concept of record linkage goes back to Halbert L. Dunn, and the probabilistic framework was formalized by statisticians Ivan Fellegi and Alan Sunter in 1969. It uses a series of weighted comparisons across multiple fields (e.g., name, address, phone number).

For example, a match on a rare last name might be given a higher weight than a match on a common first name like “John.” The algorithm then sums these weights to produce an overall score. If the score is above a certain threshold, the records are considered a match. If it’s below another threshold, they are a non-match. Records falling in between are flagged for manual review. This approach is highly effective at finding non-obvious duplicates and handling the inconsistencies inherent in real-world data.

- Phonetic and String Similarity Algorithms: These are often used within a probabilistic matching framework to handle variations in spelling and transcription.

  - Phonetic Algorithms like Soundex, Metaphone, and Double Metaphone work by encoding words based on how they sound. For instance, “Smith” and “Smyth” would have the same Soundex code, allowing the system to identify them as a potential match despite the different spelling. This is invaluable for names, street names, and other fields prone to phonetic errors.

  - String Similarity Algorithms (or “string-distance metrics”) measure the difference between two text strings. Common examples include:

    - Levenshtein Distance: Calculates the minimum number of single-character edits (insertions, deletions, or substitutions) required to change one word into the other. For example, the Levenshtein distance between “kitten” and “sitting” is 3.

    - Jaro-Winkler Distance: A more sophisticated metric that gives more favorable scores to strings that have matching characters at the beginning of the string. This is particularly useful for matching names, where typos often occur towards the end.

    - N-grams: This technique breaks down strings into overlapping sequences of characters (n-grams). For example, the 2-grams (or bigrams) for “apple” are “ap”, “pp”, “pl”, and “le”. The algorithm then compares the set of n-grams between two strings to determine their similarity. This is effective at handling transposed letters and other common typing errors.

- AI and Machine Learning: The latest evolution in data matching involves using artificial intelligence (AI) and machine learning (ML). These systems can be trained on a sample dataset to “learn” what constitutes a match.

  - Supervised Learning: A data steward manually labels a set of record pairs as “match,” “non-match,” or “possible match.” The algorithm learns from these examples to build a predictive model that can then be applied to the entire dataset.

  - Unsupervised Learning: The algorithm identifies clusters of similar records without pre-labeled data, grouping them based on inherent patterns.

  - Active Learning: This is a hybrid approach where the model identifies the most ambiguous or uncertain pairs and presents them to a human for review. This feedback is then used to continuously refine and improve the model’s accuracy over time.

AI-powered matching is particularly powerful for handling complex, unstructured data and can adapt to new data sources and evolving patterns, significantly reducing the need for manual rule-tuning and improving overall match accuracy.
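To make the deterministic and probabilistic approaches described above concrete, here is a minimal Fellegi-Sunter-style scoring sketch in Python. Everything specific in it is an assumption for illustration: the field names, the `m`/`u` probabilities (m is the chance a field agrees when two records truly match, u the chance it agrees by coincidence), and the upper and lower thresholds. Agreement on a field adds log2(m/u) to the score, disagreement adds log2((1 - m)/(1 - u)), and a missing value contributes nothing. A deterministic customer-ID rule runs first, mirroring the “high-confidence matching first” ordering.

```python
import math
from typing import Dict, Optional, Tuple

Record = Dict[str, Optional[str]]

# Hypothetical (m, u) probabilities per field:
#   m = P(field agrees | the records are a true match)
#   u = P(field agrees | the records are not a match)
# A lower u (coincidental agreement is rare) yields a larger agreement weight.
FIELD_PROBS: Dict[str, Tuple[float, float]] = {
    "last_name": (0.95, 0.01),
    "first_name": (0.90, 0.05),
    "zip_code": (0.85, 0.02),
    "phone": (0.80, 0.001),
}


def deterministic_match(a: Record, b: Record) -> bool:
    """Rules-based check: identical, non-empty customer IDs mean the same entity."""
    return bool(a.get("customer_id")) and a.get("customer_id") == b.get("customer_id")


def match_score(a: Record, b: Record) -> float:
    """Sum of log2 agreement/disagreement weights across the compared fields."""
    total = 0.0
    for name, (m, u) in FIELD_PROBS.items():
        va, vb = a.get(name), b.get(name)
        if not va or not vb:
            continue  # missing data is treated as neutral evidence
        if va == vb:
            total += math.log2(m / u)
        else:
            total += math.log2((1 - m) / (1 - u))
    return total


def classify(a: Record, b: Record, upper: float = 10.0, lower: float = 0.0) -> str:
    """Return 'match', 'non-match', or 'review' for a candidate record pair."""
    if deterministic_match(a, b):
        return "match"
    score = match_score(a, b)
    if score >= upper:
        return "match"
    if score <= lower:
        return "non-match"
    return "review"  # between the thresholds: route to a data steward
```

For simplicity this sketch compares fields by exact equality; in practice the name comparison would typically use a string-similarity or phonetic measure. Production systems also often estimate m and u from the data (for example with the EM algorithm) and make u value-specific, so that agreeing on a rare surname earns more weight than agreeing on “John.”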
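The two string-distance metrics named in the algorithm list, Levenshtein and Jaro-Winkler, can be written in plain Python. This is a textbook sketch rather than the code any particular product uses; the Jaro-Winkler prefix scale of 0.1 and the four-character prefix cap are the conventional defaults from Winkler’s formulation.

```python
def levenshtein(a: str, b: str) -> int:
    """Minimum number of single-character insertions, deletions, or substitutions."""
    prev = list(range(len(b) + 1))  # distances from "" to each prefix of b
    for i, ca in enumerate(a, start=1):
        curr = [i]
        for j, cb in enumerate(b, start=1):
            curr.append(min(prev[j] + 1,                 # delete ca
                            curr[j - 1] + 1,             # insert cb
                            prev[j - 1] + (ca != cb)))   # substitute (free if equal)
        prev = curr
    return prev[-1]


def jaro(s1: str, s2: str) -> float:
    """Jaro similarity in [0, 1]."""
    if s1 == s2:
        return 1.0
    len1, len2 = len(s1), len(s2)
    if len1 == 0 or len2 == 0:
        return 0.0
    # Characters only count as matching if they are within this distance of each other.
    window = max(max(len1, len2) // 2 - 1, 0)
    matched1, matched2 = [False] * len1, [False] * len2
    matches = 0
    for i, ch in enumerate(s1):
        for j in range(max(0, i - window), min(i + window + 1, len2)):
            if not matched2[j] and s2[j] == ch:
                matched1[i] = matched2[j] = True
                matches += 1
                break
    if matches == 0:
        return 0.0
    # Count matched characters that appear in a different order (half-transpositions).
    half_transpositions, k = 0, 0
    for i in range(len1):
        if matched1[i]:
            while not matched2[k]:
                k += 1
            if s1[i] != s2[k]:
                half_transpositions += 1
            k += 1
    t = half_transpositions / 2
    return (matches / len1 + matches / len2 + (matches - t) / matches) / 3


def jaro_winkler(s1: str, s2: str, p: float = 0.1, max_prefix: int = 4) -> float:
    """Boost the Jaro score for strings that share a common prefix (up to 4 chars)."""
    j = jaro(s1, s2)
    prefix = 0
    for c1, c2 in zip(s1[:max_prefix], s2[:max_prefix]):
        if c1 != c2:
            break
        prefix += 1
    return j + prefix * p * (1 - j)
```

For example, `levenshtein("kitten", "sitting")` returns 3, matching the figure in the text, and `jaro_winkler("MARTHA", "MARHTA")` comes out at roughly 0.961 because the shared “MAR” prefix lifts a Jaro score of about 0.944.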
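For the phonetic and n-gram techniques in the algorithm list, here is a compact sketch of American Soundex and bigram set similarity. The Soundex rules are the standard ones (keep the first letter, code the remaining consonants, collapse adjacent duplicates, pad to four characters). Measuring bigram overlap with the Jaccard index is one common choice among several (the Dice coefficient is another), picked here for simplicity.

```python
from typing import Set

# American Soundex digit classes; vowels, H, W, and Y have no code.
_SOUNDEX_CODES = {
    **dict.fromkeys("BFPV", "1"),
    **dict.fromkeys("CGJKQSXZ", "2"),
    **dict.fromkeys("DT", "3"),
    "L": "4",
    **dict.fromkeys("MN", "5"),
    "R": "6",
}


def soundex(name: str) -> str:
    """Encode a name as a letter plus three digits, e.g. 'Smith' -> 'S530'."""
    letters = [c for c in name.upper() if c.isalpha()]
    if not letters:
        return ""
    code = letters[0]
    prev = _SOUNDEX_CODES.get(letters[0], "")
    for c in letters[1:]:
        digit = _SOUNDEX_CODES.get(c, "")
        if digit and digit != prev:
            code += digit
        # Vowels separate repeated codes; H and W do not.
        if c not in "HW":
            prev = digit
    return (code + "000")[:4]


def bigrams(text: str) -> Set[str]:
    """Overlapping two-character sequences, e.g. 'apple' -> {'ap', 'pp', 'pl', 'le'}."""
    t = text.lower()
    return {t[i:i + 2] for i in range(len(t) - 1)}


def bigram_similarity(a: str, b: str) -> float:
    """Jaccard overlap of the two bigram sets (1.0 = identical sets)."""
    ga, gb = bigrams(a), bigrams(b)
    if not ga and not gb:
        return 1.0
    return len(ga & gb) / len(ga | gb)
```

Both “Smith” and “Smyth” encode to `S530`, so a phonetic comparison treats them as equal, while `bigram_similarity("smith", "smyth")` gives a partial score of 1/3 (they share “sm” and “th”). In practice the two signals are often combined rather than used alone.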
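The active-learning loop described above hinges on one step: choosing which pairs to show a human. A common strategy is uncertainty sampling, which picks the pairs whose predicted match probability is closest to 0.5. The sketch below assumes some model has already produced a match probability for each candidate pair; the function name, pair IDs, and scores are hypothetical.

```python
from typing import Iterable, List, Set, Tuple

ScoredPair = Tuple[str, float]  # (pair_id, model's probability that the pair is a match)


def select_for_review(scored: Iterable[ScoredPair], already_labeled: Set[str],
                      batch_size: int = 2) -> List[str]:
    """Return the unlabeled pairs the model is least sure about."""
    candidates = [(pid, p) for pid, p in scored if pid not in already_labeled]
    # Distance from 0.5 measures confidence; the smallest distance is the most ambiguous.
    candidates.sort(key=lambda item: abs(item[1] - 0.5))
    return [pid for pid, _ in candidates[:batch_size]]


if __name__ == "__main__":
    scores = [("p1", 0.98), ("p2", 0.52), ("p3", 0.10), ("p4", 0.45), ("p5", 0.70)]
    print(select_for_review(scores, already_labeled=set()))
```

Here the steward would be shown `p2` and `p4`. The rest of the loop (adding the steward’s labels to the training set and retraining the model) is not shown and depends on the model being used.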

The Core Benefits: From Deduplication to a Single Customer View

The most immediate and tangible benefit of implementing data match software is duplicate removal. By identifying and merging redundant records, you instantly clean up your database, reduce storage costs, and eliminate the confusion caused by having multiple versions of the same entity. This process, often called record linkage or entity resolution, is the foundational step toward creating a single, reliable source of truth.
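Once pairwise match decisions exist, duplicates still have to be grouped into one cluster per real-world entity before they can be merged. A minimal way to do that is union-find over the matched pairs, sketched below with hypothetical record IDs. Note the design choice: clustering is transitive, so if A matches B and B matches C, all three land in one cluster even if A and C were never compared. That is convenient, but a single false-positive pair can chain unrelated records together.

```python
from typing import Dict, Hashable, Iterable, List, Tuple


def cluster_duplicates(record_ids: Iterable[Hashable],
                       matched_pairs: Iterable[Tuple[Hashable, Hashable]]) -> List[List[Hashable]]:
    """Group records into duplicate clusters from pairwise match decisions (union-find)."""
    ids = list(record_ids)
    parent: Dict[Hashable, Hashable] = {r: r for r in ids}

    def find(x: Hashable) -> Hashable:
        # Walk up to the cluster root, halving the path as we go.
        while parent[x] != x:
            parent[x] = parent[parent[x]]
            x = parent[x]
        return x

    def union(a: Hashable, b: Hashable) -> None:
        root_a, root_b = find(a), find(b)
        if root_a != root_b:
            parent[root_b] = root_a

    for a, b in matched_pairs:
        union(a, b)

    clusters: Dict[Hashable, List[Hashable]] = {}
    for r in ids:
        clusters.setdefault(find(r), []).append(r)
    return list(clusters.values())


if __name__ == "__main__":
    ids = ["crm-1", "shop-7", "mkt-3", "crm-2"]
    pairs = [("crm-1", "shop-7"), ("shop-7", "mkt-3")]
    print(cluster_duplicates(ids, pairs))
    # [['crm-1', 'shop-7', 'mkt-3'], ['crm-2']]
```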

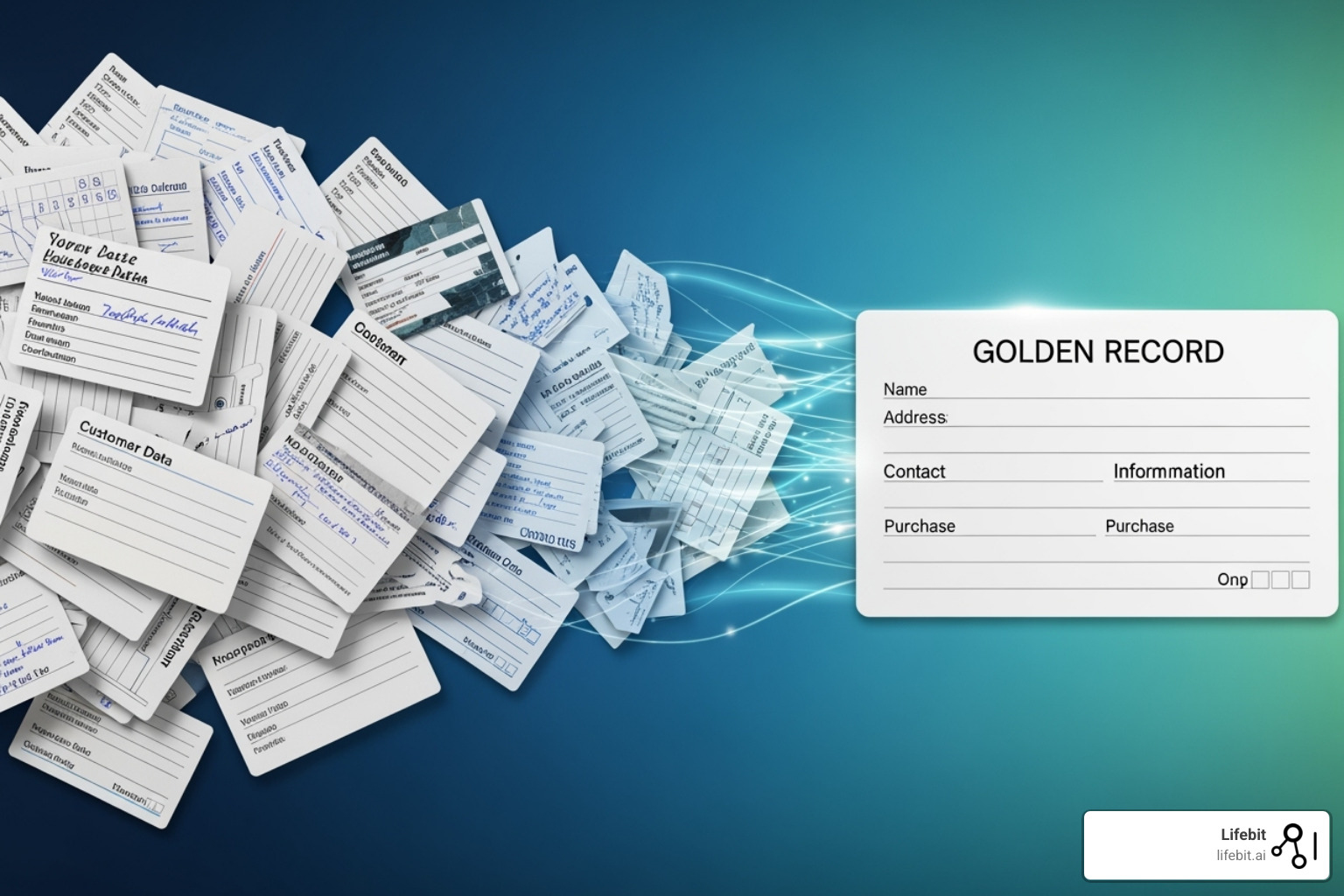

But the advantages go far beyond just a tidy database. Effective data matching is the cornerstone of a robust Master Data Management (MDM) strategy. MDM is the discipline of creating a single, authoritative “golden record” for each critical business entity—be it a customer, patient, product, or supplier. This golden record consolidates the best, most up-to-date information from all your disparate systems into one comprehensive profile.
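Building a golden record means deciding, field by field, which source value survives. The sketch below shows one way to express survivorship rules as small functions; the two rules (“most recently updated wins” and “longest value wins”), the field names, and the sample records are all illustrative assumptions rather than a standard.

```python
from datetime import date
from typing import Any, Callable, Dict, List, Tuple

Record = Dict[str, Any]
Candidate = Tuple[Any, Record]  # (field value, the source record it came from)


def most_recent(candidates: List[Candidate]) -> Any:
    """Survivorship rule: the value from the most recently updated record wins."""
    return max(candidates, key=lambda c: c[1]["updated"])[0]


def most_complete(candidates: List[Candidate]) -> Any:
    """Survivorship rule: the longest (assumed most complete) value wins."""
    return max(candidates, key=lambda c: len(str(c[0])))[0]


def build_golden_record(records: List[Record],
                        rules: Dict[str, Callable[[List[Candidate]], Any]]) -> Record:
    golden: Record = {}
    for field_name, rule in rules.items():
        # Ignore empty values so they never overwrite real data.
        candidates = [(r[field_name], r) for r in records
                      if r.get(field_name) not in (None, "")]
        if candidates:
            golden[field_name] = rule(candidates)
    return golden


if __name__ == "__main__":
    duplicates = [
        {"source": "crm", "updated": date(2024, 1, 10), "name": "Jonathan Smyth",
         "email": "jon@example.com", "address": ""},
        {"source": "billing", "updated": date(2024, 6, 2), "name": "J. Smith",
         "email": None, "address": "123 Main Street, Apt 4B"},
        {"source": "marketing", "updated": date(2023, 11, 5), "name": "John Smith",
         "email": "john.smith@example.com", "address": "123 Main St"},
    ]
    rules = {"name": most_complete, "email": most_recent, "address": most_complete}
    print(build_golden_record(duplicates, rules))
```

With these rules the golden record takes the fullest name and address but the most recently confirmed email. Real MDM tools typically add source-trust rankings and tie-breakers on top of rules like these.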

Achieving this “single source of truth” unlocks a cascade of benefits across the entire organization:

- A True 360-Degree Customer View: Imagine your sales, marketing, and customer service teams all working from the same, complete customer profile. Sales can see recent support tickets, marketing can personalize campaigns based on purchase history, and service agents have the full context of a customer’s relationship with your company. This unified view is essential for delivering a seamless and personalized customer experience.

- Enhanced Business Intelligence and Analytics: When your data is clean, consistent, and de-duplicated, your analytics become far more reliable. You can trust the numbers in your reports, uncover more accurate trends, and build more reliable predictive models. This leads to smarter, data-driven decisions at every level of the organization, from strategic planning in the boardroom to operational adjustments on the front lines.

- Improved Operational Efficiency: Inaccurate or duplicate data creates a tremendous amount of friction and wasted effort. Think of the time spent manually cleaning lists, the cost of sending multiple marketing mailings to the same household, or the frustration of a support agent trying to find a customer’s history across three different systems. By automating the process of data consolidation, data match software frees up valuable employee time and reduces operational overhead. Some organizations using advanced data matching report operational cost reductions of up to 40%.

- Strengthened Compliance and Risk Management: In regulated industries like finance and healthcare, maintaining accurate and auditable data is not just a best practice; it’s a legal requirement. Data matching is critical for complying with regulations like GDPR (General Data Protection Regulation) and CCPA (California Consumer Privacy Act), which give individuals the right to access and erase their data: to honor such a request, you first have to find every record that belongs to that person across all of your systems. It’s also essential for Know Your Customer (KYC) and Anti-Money Laundering (AML) checks, where accurately identifying individuals across different datasets is crucial for preventing fraud and financial crime.

- Increased Data Trust and Governance: When everyone in the organization knows they are working from a single, reliable source of truth, it builds confidence in the data. This fosters a data-driven culture where decisions are based on evidence, not guesswork. A robust data matching process is a key component of any successful data governance framework, ensuring that data is managed as a valuable enterprise asset.

Top-tier solutions can achieve impressive results; some vendors report match accuracy as high as 96%. At that level of accuracy, you can rely on your unified data to drive critical business decisions, enhance customer relationships, and unlock new opportunities for growth.
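Because “match accuracy” can be defined in different ways, it is worth measuring it yourself on a hand-labeled sample of record pairs. The sketch below, using made-up pair IDs, computes precision (how many proposed matches are real) and recall (how many real matches were found). These standard metrics keep false positives (wrongly merged records) and false negatives (missed duplicates) separate, which a single accuracy figure can hide.

```python
from typing import Dict, Hashable, Iterable, Tuple

Pair = Tuple[Hashable, Hashable]


def evaluate_matches(predicted: Iterable[Pair], truth: Iterable[Pair]) -> Dict[str, float]:
    """Compare predicted match pairs with a hand-labeled ground truth."""
    # frozenset makes (a, b) and (b, a) count as the same pair.
    pred = {frozenset(p) for p in predicted}
    actual = {frozenset(p) for p in truth}
    tp = len(pred & actual)   # correctly linked pairs
    fp = len(pred - actual)   # false positives: records wrongly merged
    fn = len(actual - pred)   # false negatives: duplicates that were missed
    precision = tp / len(pred) if pred else 0.0
    recall = tp / len(actual) if actual else 0.0
    f1 = 2 * precision * recall / (precision + recall) if precision + recall else 0.0
    return {"precision": precision, "recall": recall, "f1": f1,
            "false_positives": fp, "false_negatives": fn}


if __name__ == "__main__":
    predicted = [("a", "b"), ("c", "d"), ("e", "f")]
    labeled = [("b", "a"), ("c", "d"), ("g", "h")]
    print(evaluate_matches(predicted, labeled))
```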

Core Features to Demand in Your Data Matching Solution

When evaluating data match software, it’s tempting to focus solely on the core matching algorithm. But that’s like judging a car by its engine alone—you’re missing the transmission, suspension, and steering that actually make it a usable vehicle. A truly effective solution is a comprehensive platform that supports the entire data quality lifecycle, from initial assessment to ongoing governance.

Here’s a breakdown of the essential features to look for:

| Feature Category | Description | Why It’s Important |
|---|---|---|
| Advanced Matching Algorithms | The software should offer a combination of deterministic, probabilistic (fuzzy), and machine learning-based matching techniques. This includes phonetic algorithms (like Soundex, Metaphone), string similarity metrics (like Jaro-Winkler, Levenshtein), and the ability to create custom, complex matching rules. | No single algorithm is perfect for all data types. A flexible, multi-faceted approach allows you to handle everything from simple, structured data to complex, unstructured text, ensuring the highest possible accuracy and minimizing both false positives and false negatives. |
| Data Profiling and Standardization | Before you can match data, you need to understand it. Look for tools that can automatically scan your source data to identify its structure, quality, and completeness. This includes features for parsing, standardizing (e.g., converting “St.” to “Street”), and validating data elements like addresses, phone numbers, and email formats. | Profiling reveals the hidden problems in your data, such as inconsistent formatting or missing values. Standardization ensures that you’re comparing apples to apples, dramatically improving the accuracy of your matching process. |
| Scalability and Performance | The solution must be able to handle your data volumes, both now and in the future. This means efficient processing of millions or even billions of records without a significant drop in performance. Look for features like parallel processing, distributed architecture, and optimized memory management. | Data volumes are growing exponentially. A solution that works for 1 million records today may fail at 100 million tomorrow. Scalability ensures the software remains a long-term asset, not a short-term fix. |
| Connectivity and Integration | A good data matching tool should seamlessly integrate with your existing IT ecosystem. This requires a robust library of pre-built connectors for common databases (SQL, NoSQL), data warehouses (Snowflake, Redshift), CRM systems (Salesforce), ERPs (SAP), and data lakes. A well-documented REST API is also crucial for custom integrations. | Data is rarely in one place. Strong connectivity eliminates the need for complex, manual data extraction and loading processes, allowing you to create automated, end-to-end data quality pipelines. |
| User-Friendly Interface and Workflow Management | The software should empower both technical and non-technical users. A visual, drag-and-drop interface for building matching workflows, clear dashboards for monitoring results, and an intuitive review/stewardship console are essential. | Data quality is a team sport. An accessible UI allows business analysts and data stewards, who have the best contextual understanding of the data, to actively participate in the matching and cleansing process, leading to better outcomes. |
| Master Data Management (MDM) Capabilities | Beyond just identifying duplicates, a top-tier solution should help you create and manage a “golden record” or a single source of truth. This includes features for merging records, survivorship rules (defining which data wins in a conflict), and maintaining a persistent, unified view of your key entities (customers, products, etc.). | MDM is the ultimate goal of most data matching projects. It provides a trusted, 360-degree view that can be used to drive consistent and accurate business operations, from marketing personalization to regulatory reporting. |
| Security and Compliance | In an era of stringent data privacy regulations (like GDPR, CCPA, HIPAA), security is non-negotiable. The software must offer features like role-based access control (RBAC), data encryption both in transit and at rest, and detailed audit logs of all data access and modifications. | A data breach or compliance failure can result in massive fines and reputational damage. Robust security features are essential for protecting sensitive information and ensuring you meet your legal and ethical obligations. |
| Federated and Hybrid Deployment | For organizations dealing with highly sensitive or geographically distributed data, the ability to perform matching without centralizing the data is critical. A federated architecture allows the analysis to happen locally, with only anonymized, non-sensitive results being shared. | This approach is crucial for multi-national corporations, healthcare providers, and research consortia that need to collaborate without violating data sovereignty or privacy regulations. It enables powerful insights while keeping raw data secure in its original location. |
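The standardization step described in the profiling row of the table can be sketched with Python’s standard `re` module. The abbreviation table is a tiny hypothetical sample; commercial tools rely on much larger postal reference data, and a blind token mapping has pitfalls (for example, “St” can mean “Saint” as well as “Street”).

```python
import re

# Tiny, hypothetical abbreviation table; real tools use full postal reference data.
ADDRESS_ABBREVIATIONS = {"st": "street", "ave": "avenue", "rd": "road", "apt": "apartment"}


def standardize_address(raw: str) -> str:
    """Lowercase, drop punctuation, and expand common abbreviations token by token."""
    tokens = re.findall(r"[a-z0-9]+", raw.lower())
    return " ".join(ADDRESS_ABBREVIATIONS.get(t, t) for t in tokens)


def standardize_phone(raw: str) -> str:
    """Keep digits only, so '(555) 010-0100' and '555.010.0100' compare equal."""
    return re.sub(r"\D", "", raw)


def standardize_email(raw: str) -> str:
    """Trim whitespace and lowercase (assumes the provider ignores case)."""
    return raw.strip().lower()


if __name__ == "__main__":
    print(standardize_address("123 Main St."))             # 123 main street
    print(standardize_address("123 Main Street, Apt 4B"))  # 123 main street apartment 4b
```

After this step, “123 Main St.” and “123 Main Street” compare as identical strings, so even a simple deterministic rule can match them, and fuzzy comparisons only have to deal with genuine differences such as the apartment number.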

Introduction

What is Data Matching Software and Why is Your Secret Weapon?

Data match software is the technology that identifies and links related records across datasets, changing scattered information into a unified view of your data. If you’re evaluating solutions, here are the key types to consider:

Top Data Match Software Categories:

- Enterprise Solutions – For large-scale operations with complex data migrations (DataMatch Enterprise, Syniti)

- No-Code Platforms – For business users requiring intuitive interfaces (WinPure, Match Data Pro)

- Federated Solutions – For regulated industries requiring secure, distributed analysis (Lifebit)

- Specialized Tools – For specific use cases like mainframe migrations (DataMatch) or analytics (DataDelta)

The stakes couldn’t be higher. Organizations today generate massive amounts of data across multiple systems – from customer records scattered across CRM and billing platforms to patient data spread across clinical and claims databases. Without proper data matching, you’re left with duplicate records, incomplete customer views, and decisions based on fragmented information.

Consider this reality: Entity resolution with modern solutions can reduce time spent on business partner consolidation by 75% during major system migrations. Meanwhile, organizations using advanced data matching report 96% match accuracy and significant operational cost reductions – sometimes up to 40%.

The challenge isn’t just technical – it’s strategic. Poor data quality costs organizations millions in lost revenue, compliance failures, and missed opportunities. But the right data match software doesn’t just solve problems; it open ups potential you didn’t know existed.

As Maria Chatzou Dunford, CEO and Co-founder of Lifebit with over 15 years in computational biology and biomedical data integration, I’ve seen how data match software transforms organizations – from enabling precision medicine breakthroughs to powering regulatory compliance across federated healthcare networks. The key is choosing a solution that matches not just your data, but your specific operational needs and regulatory requirements.

At its heart, data match software is a sophisticated tool designed to bring order to the chaos of disparate data. Imagine your data as a puzzle where pieces are scattered across many boxes, some pieces look similar but aren’t the same, and some are missing vital parts. Data match software helps us find the right pieces, put them together, and ensure they form a complete, accurate picture.

Why is this essential? Because businesses today run on data. Every customer interaction, every product sale, every patient record generates valuable information. However, this data often resides in different systems, entered in varying formats, leading to inconsistencies and duplicates. Without effective data matching, we face significant data quality issues. This means we might be sending duplicate emails to the same customer, misidentifying a patient due to a minor spelling error, or failing to gain a complete view of our supply chain.

Data match software is our secret weapon for achieving data consistency, enabling robust deduplication, and performing powerful entity resolution. It facilitates seamless data integration across systems, leading to vastly improved operational efficiency. For more on how data is linked, you can explore our insights on Data Linkage.

How Data Matching Algorithms Work

The magic behind data match software lies in its sophisticated algorithms, which employ various techniques to identify and link records. These aren’t just simple exact matches; they dig into the nuances of data to find connections even when things aren’t perfectly aligned.

Here’s a look at the main types of matching algorithms:

- Deterministic Matching: This is the most straightforward approach. It relies on exact identifiers, like a unique customer ID or a perfect match on name and date of birth. If two records share these exact values, they are considered a match. It’s fast and highly accurate for clean data but falls short when data is messy or lacks unique identifiers.

- Probabilistic Matching: When exact matches aren’t possible, probabilistic matching steps in. This method assigns a probability score to each potential match based on the likelihood that two records refer to the same entity. It considers multiple attributes (e.g., name, address, phone number) and how closely they match, assigning weights to each attribute’s importance. This allows us to find matches even with minor discrepancies.

- Fuzzy Matching: A crucial component of probabilistic matching, fuzzy matching handles variations, typos, and inconsistencies in data. It uses algorithms that measure the “distance” or similarity between strings, rather than demanding an exact match.

- Jaro-Winkler and Levenshtein distance are common algorithms that calculate string similarity based on character differences, transpositions, or insertions/deletions. For instance, “John Smith” and “Jon Smythe” might be identified as a match due to their high similarity score.

- Phonetic Algorithms like Soundex and Metaphone focus on how names or words sound, rather than how they are spelled. This is incredibly useful for matching names like “Smith” and “Smyth” which sound alike but are spelled differently.

- AI and Machine Learning: Modern data match software increasingly leverages artificial intelligence and machine learning. These advanced techniques can learn from patterns in historical data, adapt to new data variations, and even identify matches that human rules might miss. They are excellent at reducing false positives and false negatives, making the matching process more intelligent and adaptive.

The Core Benefits: From Deduplication to a Single Customer View

The immediate and most visible benefit of data match software is its ability to perform robust deduplication. Imagine the relief of knowing you no longer have multiple, fragmented records for the same customer or patient! This process, often called record linkage, is foundational to creating a clean, reliable dataset.

Beyond simple duplicate removal, these solutions enable us to build a Master Data Management (MDM) strategy. This means creating a “golden record” for each entity – a single, authoritative source of truth that combines all relevant information from various systems. This golden record forms the basis of a comprehensive 360-degree view of our customers, partners, or patients.

The benefits ripple outwards:

- Improved Accuracy and Consistency: With duplicates eliminated and records linked, our data becomes inherently more accurate and consistent. This leads to better reporting and more reliable insights.

- Data Enrichment: A unified view allows us to enrich our data by combining information that was previously siloed. For example, merging sales data with customer support interactions can reveal a more complete picture of customer behavior.

- Improved Decision-Making: When our data is clean, accurate, and comprehensive, our decisions are smarter. We can personalize customer experiences, identify fraud more effectively, or make more informed strategic business choices.

- Operational Efficiency: Eliminating duplicates reduces wasted effort and resources. Think of the savings from not sending multiple marketing emails to the same person, or the improved efficiency in customer service when agents have a complete view of a customer’s history.

- High Match Accuracy: Top-tier data match software is advertised to achieve impressive results, with some solutions boasting up to 96% match accuracy. This level of precision ensures that our data unification efforts are highly effective.

Core Features to Demand in Your Data Matching Solution

When we’re evaluating data match software, we need to look beyond just the ability to find duplicates. A truly effective solution should offer a comprehensive suite of features that support the entire data quality lifecycle.

Here’s a table outlining key features we should demand:

| Feature Category | Description We know you’re looking for solutions beyond the spreadsheet – something that can truly handle the complexities of modern data. That’s why we’re diving into data match software, exploring how these tools can transform your organization, from small businesses to global enterprises. We’ll explore the critical features, benefits, and common use cases that make this software indispensable.

What is Data Matching Software and Why is Your Secret Weapon?

At its heart, data match software is a sophisticated tool designed to bring order to the chaos of disparate data. Imagine your data as a puzzle where pieces are scattered across many boxes, some pieces look similar but aren’t the same, and some are missing vital parts. Data match software helps us find the right pieces, put them together, and ensure they form a complete, accurate picture.

Why is this essential? Because businesses today run on data. Every customer interaction, every product sale, every patient record generates valuable information. However, this data often resides in different systems, entered in varying formats, leading to inconsistencies and duplicates. Without effective data matching, we face significant data quality issues. This means we might be sending duplicate emails to the same customer, misidentifying a patient due to a minor spelling error, or failing to gain a complete view of our supply chain.

Data match software is our secret weapon for achieving data consistency, enabling robust deduplication, and performing powerful entity resolution. It facilitates seamless data integration across systems, leading to vastly improved operational efficiency. For more on how data is linked, you can explore our insights on Data Linkage.

How Data Matching Algorithms Work

The magic behind data match software lies in its sophisticated algorithms, which employ various techniques to identify and link records. These aren’t just simple exact matches; they dig into the nuances of data to find connections even when things aren’t perfectly aligned.

Here’s a look at the main types of matching algorithms:

- Deterministic Matching: This is the most straightforward approach. It relies on exact identifiers, like a unique customer ID or a perfect match on name and date of birth. If two records share these exact values, they are considered a match. It’s fast and highly accurate for clean data but falls short when data is messy or lacks unique identifiers.

- Probabilistic Matching: When exact matches aren’t possible, probabilistic matching steps in. This method assigns a probability score to each potential match based on the likelihood that two records refer to the same entity. It considers multiple attributes (e.g., name, address, phone number) and how closely they match, assigning weights to each attribute’s importance. This allows us to find matches even with minor discrepancies.

- Fuzzy Matching: A crucial component of probabilistic matching, fuzzy matching handles variations, typos, and inconsistencies in data. It uses algorithms that measure the “distance” or similarity between strings, rather than demanding an exact match.

  - Levenshtein distance counts the single-character insertions, deletions, and substitutions needed to turn one string into another. Jaro-Winkler scores similarity from shared characters and transpositions, giving extra weight to a common prefix. For instance, “John Smith” and “Jon Smythe” might be identified as a match because of their high similarity score.

  - Phonetic algorithms like Soundex and Metaphone focus on how names or words sound, rather than how they are spelled. This is particularly useful for matching names like “Smith” and “Smyth”, which sound alike but are spelled differently.

- AI and Machine Learning: Modern data match software increasingly leverages artificial intelligence and machine learning. Trained on past match decisions, these models can adapt to new data variations and identify matches that hand-written rules might miss. With good training data, they can reduce both false positives (records wrongly merged) and false negatives (true matches missed), making the matching process more adaptive.
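To make the fuzzy and phonetic techniques above concrete, here is a minimal Python sketch of two of them: Levenshtein edit distance and American Soundex. These are textbook versions written for illustration, not the code any particular product uses. Production tools typically rely on optimized libraries, and Jaro-Winkler is omitted here to keep the example short.

```python
def levenshtein(a: str, b: str) -> int:
    """Minimum number of single-character insertions, deletions,
    and substitutions needed to turn a into b."""
    # prev[j] holds the distance between the processed prefix of a and b[:j]
    prev = list(range(len(b) + 1))
    for i, ca in enumerate(a, start=1):
        curr = [i]
        for j, cb in enumerate(b, start=1):
            cost = 0 if ca == cb else 1
            curr.append(min(prev[j] + 1,          # deletion
                            curr[j - 1] + 1,      # insertion
                            prev[j - 1] + cost))  # substitution
        prev = curr
    return prev[-1]


def soundex(name: str) -> str:
    """American Soundex: first letter plus three digits encoding consonant sounds."""
    codes = {}
    for group, digit in (("BFPV", "1"), ("CGJKQSXZ", "2"), ("DT", "3"),
                         ("L", "4"), ("MN", "5"), ("R", "6")):
        for ch in group:
            codes[ch] = digit
    letters = [ch for ch in name.upper() if ch.isalpha()]
    if not letters:
        return ""
    result = letters[0]
    last_code = codes.get(letters[0], "")
    for ch in letters[1:]:
        code = codes.get(ch, "")
        # Vowels, H, W, Y have no code; adjacent repeats of a code collapse
        if code and code != last_code:
            result += code
        # A vowel separates repeated codes; H and W do not
        if ch not in "HW":
            last_code = code
    return (result + "000")[:4]


print(levenshtein("Smith", "Smyth"))      # 1
print(soundex("Smith"), soundex("Smyth"))  # S530 S530
```

Edit distance catches typos, and the phonetic code groups names that sound alike. Many tools use both signals together.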

The Core Benefits: From Deduplication to a Single Customer View

The immediate and most visible benefit of data match software is its ability to perform robust deduplication. Imagine the relief of knowing you no longer have multiple, fragmented records for the same customer or patient! Deduplication is closely related to record linkage (which connects records across datasets), and both are foundational to creating a clean, reliable dataset.
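Pairwise matching only tells you that record A resembles record B. To deduplicate, those pairs must be grouped into entities: if A matches B and B matches C, all three are usually treated as one entity. Below is a minimal sketch of that grouping step using a union-find (disjoint-set) structure. It assumes the matched pairs come from an earlier comparison step, and the record IDs are hypothetical. Transitive grouping can chain together records that never matched each other directly, so unusually large clusters are worth reviewing.

```python
def cluster_matches(record_ids, matched_pairs):
    """Group records into entities by taking the transitive closure of matched pairs."""
    parent = {rid: rid for rid in record_ids}

    def find(x):
        # Walk up to the root, halving the path as we go to keep trees shallow
        while parent[x] != x:
            parent[x] = parent[parent[x]]
            x = parent[x]
        return x

    def union(a, b):
        root_a, root_b = find(a), find(b)
        if root_a != root_b:
            parent[root_b] = root_a

    for a, b in matched_pairs:
        union(a, b)

    clusters = {}
    for rid in record_ids:
        clusters.setdefault(find(rid), []).append(rid)
    return sorted(clusters.values())


ids = ["r1", "r2", "r3", "r4", "r5"]
pairs = [("r1", "r2"), ("r2", "r3"), ("r4", "r5")]
print(cluster_matches(ids, pairs))  # [['r1', 'r2', 'r3'], ['r4', 'r5']]
```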

Beyond simple duplicate removal, these solutions underpin a Master Data Management (MDM) strategy. This means creating a “golden record” for each entity: a single, authoritative source of truth that combines all relevant information from various systems. This golden record forms the basis of a comprehensive 360-degree view of our customers, partners, or patients.
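How a golden record is assembled depends on survivorship rules, which each MDM tool configures in its own way. As an illustration only, the sketch below applies one assumed rule rather than one taken from any product. For each field, it keeps the most recently updated non-empty value and records which source system supplied it. The field names (`updated`, `source`, and the sample attributes) are hypothetical.

```python
from datetime import date


def build_golden_record(records, fields):
    """Merge a cluster of matched records into one golden record.

    Assumed survivorship rule: for each field, take the non-empty value
    from the most recently updated record, and keep its source for lineage.
    """
    # Newest records first, so the first non-empty value found wins
    ordered = sorted(records, key=lambda r: r["updated"], reverse=True)
    golden, lineage = {}, {}
    for field in fields:
        for rec in ordered:
            value = rec.get(field)
            if value not in (None, ""):
                golden[field] = value
                lineage[field] = rec["source"]
                break
    return golden, lineage


cluster = [
    {"source": "crm", "updated": date(2024, 3, 1),
     "name": "Jon Smith", "email": "jon@example.com", "phone": ""},
    {"source": "billing", "updated": date(2024, 9, 15),
     "name": "John Smith", "email": "", "phone": "555-0100"},
]
golden, lineage = build_golden_record(cluster, ["name", "email", "phone"])
# golden  -> {'name': 'John Smith', 'email': 'jon@example.com', 'phone': '555-0100'}
# lineage -> {'name': 'billing', 'email': 'crm', 'phone': 'billing'}
```

Keeping the lineage alongside the merged values makes it possible to explain, and later revise, where each field of the golden record came from.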

The benefits ripple outwards:

- Improved Accuracy and Consistency: With duplicates eliminated and records linked, our data becomes more accurate and consistent. This leads to better reporting and more reliable insights.

- Data Enrichment: A unified view allows us to enrich our data by combining information that was previously siloed. For example, merging sales data with customer support interactions can reveal a more complete picture of customer behavior.

- Improved Decision-Making: When our data is clean, accurate, and comprehensive, our decisions are smarter. We can personalize customer experiences, identify fraud more effectively, or make more informed strategic business choices.

- Operational Efficiency: Eliminating duplicates reduces wasted effort and resources. Think of the savings from not sending multiple marketing emails to the same person, or the improved efficiency in customer service when agents have a complete view of a customer’s history.

- High Match Accuracy: Top-tier data match software is advertised as achieving impressive results, with some vendors claiming up to 96% match accuracy. Treat such figures as vendor claims, because results depend heavily on the data a tool is tested on. Validate any shortlisted tool against a labeled sample of your own records.
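A single "accuracy" percentage can hide very different error profiles, because true non-matches vastly outnumber true matches among all possible record pairs. Two more informative measures are precision (the share of proposed matches that are correct) and recall (the share of true matches that were found). The following is a minimal sketch of computing both against a hand-labeled sample of pairs, and the record IDs are hypothetical.

```python
def evaluate_matches(predicted_pairs, true_pairs):
    """Compare predicted match pairs with labeled ground truth.

    Pairs are unordered, so ("a", "b") and ("b", "a") count as the same pair.
    """
    predicted = {frozenset(p) for p in predicted_pairs}
    truth = {frozenset(p) for p in true_pairs}
    true_positives = len(predicted & truth)
    precision = true_positives / len(predicted) if predicted else 0.0
    recall = true_positives / len(truth) if truth else 0.0
    f1 = (2 * precision * recall / (precision + recall)
          if precision + recall else 0.0)
    return precision, recall, f1


labeled = [("r1", "r2"), ("r3", "r4"), ("r5", "r6"), ("r7", "r8")]
predicted = [("r2", "r1"), ("r3", "r4"), ("r5", "r9")]
precision, recall, f1 = evaluate_matches(predicted, labeled)
print(f"precision={precision:.2f} recall={recall:.2f} f1={f1:.2f}")
```

In this toy sample, two of the three proposed matches are correct (precision about 0.67), and two of the four true matches are found (recall 0.5).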

Core Features to Demand in Your Data Matching Solution

When evaluating data match software, it’s tempting to focus solely on duplicate detection. But that’s like judging a car by its ability to start the engine – you’re missing the bigger picture. A truly effective solution needs to support your entire data quality journey, from initial profiling through final governance.

Think of data matching algorithms as the heart of your system. You’ll want solutions that offer multiple approaches: deterministic matching for clean, exact matches; probabilistic matching for handling real-world data messiness; and fuzzy matching capabilities that can handle typos, abbreviations, and variations. The best platforms combine all three approaches, automatically selecting the right method for each situation.
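One way to combine these approaches is a tiered comparison. First, try a deterministic rule on a trusted identifier. If no identifier is available, fall back to a weighted probabilistic score, using fuzzy comparison only on fields where it makes sense. The sketch below uses Fellegi-Sunter-style weights. Each field adds log2(m/u) when it agrees and log2((1-m)/(1-u)) when it disagrees, where m is the probability that the field agrees for a true match and u is the probability for a non-match. The result is a log-likelihood score, not a probability. The m/u values, field names, and 0.85 cutoff are made-up placeholders. Real systems estimate such parameters from data, and Python's `difflib.SequenceMatcher` ratio stands in here for a dedicated string-similarity measure.

```python
import math
from difflib import SequenceMatcher

# Hypothetical per-field parameters: m = P(agree | true match),
# u = P(agree | non-match). Placeholders, not estimates from real data.
FIELD_PARAMS = {
    "name": {"m": 0.95, "u": 0.01, "fuzzy": True},
    "dob":  {"m": 0.98, "u": 0.003, "fuzzy": False},
    "zip":  {"m": 0.90, "u": 0.05, "fuzzy": False},
}


def fields_agree(a: str, b: str, fuzzy: bool, cutoff: float = 0.85) -> bool:
    """Exact comparison, or a fuzzy similarity ratio at or above a cutoff."""
    a, b = a.strip().lower(), b.strip().lower()
    if not fuzzy:
        return a == b
    return SequenceMatcher(None, a, b).ratio() >= cutoff


def match_score(rec_a: dict, rec_b: dict):
    """Tiered comparison: deterministic ID check, then summed log2 weights."""
    # Tier 1: deterministic match on a trusted unique identifier
    id_a, id_b = rec_a.get("customer_id"), rec_b.get("customer_id")
    if id_a and id_a == id_b:
        return "deterministic", math.inf
    # Tier 2: Fellegi-Sunter-style score, one weight per compared field
    total = 0.0
    for field, p in FIELD_PARAMS.items():
        va, vb = rec_a.get(field) or "", rec_b.get(field) or ""
        if not va or not vb:
            continue  # missing value: no evidence either way
        if fields_agree(va, vb, p["fuzzy"]):
            total += math.log2(p["m"] / p["u"])
        else:
            total += math.log2((1 - p["m"]) / (1 - p["u"]))
    return "probabilistic", total


a = {"customer_id": None, "name": "John Smith", "dob": "1980-04-12", "zip": "02139"}
b = {"customer_id": None, "name": "Jon Smith", "dob": "1980-04-12", "zip": "02139"}
c = {"customer_id": None, "name": "Mary Jones", "dob": "1975-01-01", "zip": "94105"}
print(match_score(a, b))  # positive score: evidence for the same person
print(match_score(a, c))  # negative score: evidence for different people
```

Dates and postal codes are compared exactly here because a near-miss like "1980-04-12" versus "1980-04-21" is likely to be a different value, not a typo. This is why fuzzy comparison is enabled per field rather than globally.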

Scalability becomes critical when you’re dealing with large datasets. Your solution should handle everything from thousands of records to terabyte-scale workloads. Look for platforms that can grow with your needs: what starts as a small customer database today might become a massive multi-source data warehouse tomorrow.
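Much of what makes matching scale is avoiding a naive all-pairs comparison, whose cost grows quadratically: a million records yield roughly 500 billion pairs. A standard technique is blocking, which compares only records that share a cheap key. The sketch below is illustrative, and its key (ZIP code plus the first letter of the surname) is a hypothetical choice. Choosing a key is a trade-off, because true matches that land in different blocks are never compared. One common mitigation is to run several passes with different keys.

```python
from collections import defaultdict
from itertools import combinations


def blocking_key(rec):
    """Hypothetical blocking key: ZIP code plus first letter of the surname."""
    return (rec["zip"], rec["surname"][:1].upper())


def candidate_pairs(records):
    """Yield only pairs of record IDs that share a blocking key."""
    blocks = defaultdict(list)
    for rec in records:
        blocks[blocking_key(rec)].append(rec["id"])
    for ids in blocks.values():
        yield from combinations(ids, 2)


records = [
    {"id": 1, "surname": "Smith", "zip": "02139"},
    {"id": 2, "surname": "Smyth", "zip": "02139"},
    {"id": 3, "surname": "Jones", "zip": "02139"},
    {"id": 4, "surname": "Smith", "zip": "94105"},
]
pairs = list(candidate_pairs(records))
print(pairs)  # [(1, 2)]: one comparison instead of the six an all-pairs scan needs
```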

Integration capabilities can make or break your implementation. Your data match software should offer robust APIs, preferably RESTful, along with pre-built connectors to common systems. Whether you need batch processing for large overnight jobs or real-time sync for live customer interactions, the platform should handle both seamlessly.

The user interface matters more than you might think. Data matching isn’t just for technical teams anymore. Business users need intuitive, visual workflows that don’t require a computer science degree to navigate. Drag-and-drop functionality and pre-built templates can dramatically reduce implementation time and training needs.

Data profiling and cleansing features should be built right in. Before you can match records effectively, you need to understand what you’re working with. Look for solutions that automatically analyze your data quality, identify patterns, and suggest standardization rules. Address verification capabilities are particularly valuable for customer data.
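Standardization is worth doing before any comparison, because many apparent mismatches are purely cosmetic. Here is a minimal sketch of the idea for names and street addresses. It strips accents through Unicode normalization, then applies case folding, punctuation removal, whitespace collapsing, and a small abbreviation table. That table is a tiny hypothetical sample. Real address standardization relies on much larger, country-specific reference data.

```python
import re
import unicodedata

# Tiny hypothetical sample; real tools use country-specific reference data
ABBREVIATIONS = {"st": "street", "rd": "road", "ave": "avenue", "apt": "apartment"}


def standardize(text: str) -> str:
    """Normalize a name or address so cosmetic differences do not block a match."""
    # Decompose accented characters and drop the combining marks (é -> e)
    text = unicodedata.normalize("NFKD", text)
    text = "".join(ch for ch in text if not unicodedata.combining(ch))
    text = text.casefold()
    # Replace punctuation with spaces, then split on any run of whitespace
    text = re.sub(r"[^\w\s]", " ", text)
    return " ".join(ABBREVIATIONS.get(tok, tok) for tok in text.split())


print(standardize("12 Main St."))      # 12 main street
print(standardize("12  MAIN Street"))  # 12 main street
print(standardize("José Müller"))      # jose muller
```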

Workflow automation transforms data match software from a one-time tool into an ongoing solution. The platform should handle routine tasks automatically while flagging exceptions for human review. This keeps your data clean without requiring constant manual intervention.
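A common way to automate routine decisions while flagging exceptions is to set two thresholds on a normalized match score. Pairs above the upper threshold are merged automatically, and pairs below the lower one are treated as distinct. Pairs in the band between them go to a human review queue. The 0.70 and 0.92 values below are arbitrary placeholders. In practice, thresholds are tuned against labeled samples to balance false merges against review workload.

```python
from enum import Enum


class Decision(Enum):
    AUTO_MERGE = "auto_merge"
    REVIEW = "review"
    NO_MATCH = "no_match"


def route(score: float, lower: float = 0.70, upper: float = 0.92) -> Decision:
    """Route a candidate pair by its match score (placeholder thresholds)."""
    if lower > upper:
        raise ValueError("lower threshold must not exceed upper threshold")
    if score >= upper:
        return Decision.AUTO_MERGE
    if score >= lower:
        return Decision.REVIEW
    return Decision.NO_MATCH


scored_pairs = [(("r1", "r2"), 0.97), (("r3", "r4"), 0.81), (("r5", "r6"), 0.40)]
review_queue = [pair for pair, score in scored_pairs
                if route(score) is Decision.REVIEW]
print(review_queue)  # [('r3', 'r4')]
```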

Compliance support has become non-negotiable in today’s regulatory environment. Your solution should provide built-in audit trails, support for data governance frameworks, and features that help with GDPR, CCPA, and HIPAA compliance. This includes capabilities for handling Subject Access Requests and maintaining detailed processing logs.
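What an audit trail must contain varies by product and by regulation, so treat the following as an assumed, illustrative shape rather than a compliance recipe. Every merge decision is appended to a log with a timestamp, the records involved, the rule and score behind it, and who or what made the call. Each entry is one JSON object per line, which keeps the log easy to append to and to search. That helps with questions such as which records were merged for a given person. All field names here are hypothetical.

```python
import json
import os
import tempfile
from datetime import datetime, timezone


def log_merge_decision(log_path, record_ids, decision, rule, score, actor):
    """Append one merge decision to a JSON Lines audit log (hypothetical schema)."""
    entry = {
        "timestamp": datetime.now(timezone.utc).isoformat(),
        "record_ids": sorted(record_ids),
        "decision": decision,  # e.g. "auto_merge", "review", "no_match"
        "rule": rule,          # which rule or model produced the decision
        "score": score,
        "actor": actor,        # "system" or the reviewer's user ID
    }
    # Append mode adds to the end of the file without rewriting earlier entries
    with open(log_path, "a", encoding="utf-8") as f:
        f.write(json.dumps(entry) + "\n")
    return entry


def decisions_for_record(log_path, record_id):
    """Return every logged decision that involved a given record ID."""
    with open(log_path, encoding="utf-8") as f:
        return [e for e in map(json.loads, f) if record_id in e["record_ids"]]


path = os.path.join(tempfile.mkdtemp(), "audit.jsonl")
log_merge_decision(path, ["r2", "r1"], "auto_merge", "score>=0.92", 0.97, "system")
log_merge_decision(path, ["r3", "r4"], "review", "score_band", 0.81, "system")
print(decisions_for_record(path, "r1"))
```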

For organizations working with sensitive biomedical data, federated architecture capabilities become essential. This allows data matching and analysis to occur across distributed datasets without centralizing the information, preserving privacy and governance controls while still delivering comprehensive insights.
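Privacy-preserving matching can be built in several ways. One widely used building block is keyed-hash tokenization. Each site normalizes its identifiers and computes an HMAC with a secret key shared only among the participating sites, and only the resulting tokens are compared. The sketch below is a generic illustration of that technique, not a description of Lifebit's or any vendor's implementation. The key, the choice of surname plus date of birth, and the normalization are all assumptions. Exact tokens give up fuzzy matching, because a one-letter typo yields a completely different token. More elaborate schemes, such as Bloom-filter encodings, exist partly for that reason.

```python
import hashlib
import hmac

# Hypothetical shared secret, distributed to participating sites out of band
SHARED_KEY = b"replace-with-a-securely-distributed-secret"


def match_token(surname: str, dob: str, key: bytes = SHARED_KEY) -> str:
    """Keyed hash of normalized identifiers; only this token leaves the site."""
    normalized = f"{surname.strip().lower()}|{dob.strip()}"
    return hmac.new(key, normalized.encode("utf-8"), hashlib.sha256).hexdigest()


# Each site tokenizes locally and shares only {token: local_record_id}
site_a = {match_token("Smith", "1980-04-12"): "A-17",
          match_token("Jones", "1975-01-01"): "A-42"}
site_b = {match_token(" SMITH", "1980-04-12"): "B-03",
          match_token("Brown", "1990-07-30"): "B-08"}

# The linking step sees only tokens and local IDs, never names or birth dates
links = [(site_a[t], site_b[t]) for t in site_a.keys() & site_b.keys()]
print(links)  # [('A-17', 'B-03')]
```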