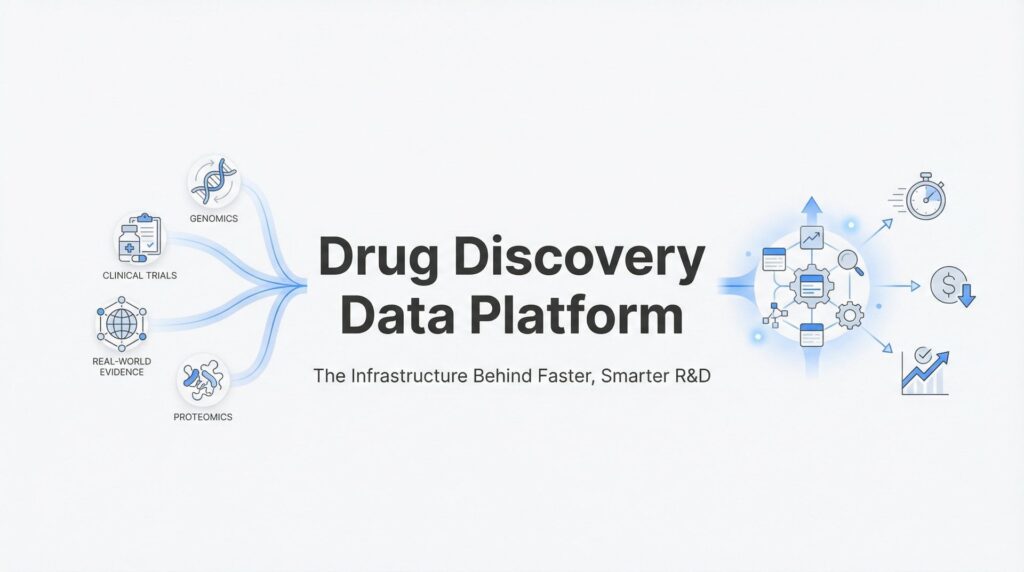

Drug Discovery Data Platform: The Infrastructure Behind Faster, Smarter R&D

Drug discovery is broken. Not because of bad science—the opposite, actually. We’ve never had better tools, smarter researchers, or more promising targets. The problem is data.

Bringing a single new drug to market takes 10-15 years and, by widely cited industry estimates, costs upward of $2.6 billion. Yet roughly 90% of candidates that enter clinical trials fail somewhere in the pipeline. The culprit isn’t the biology. It’s the infrastructure.

Genomic data sits in one system. Clinical trial results in another. Real-world evidence scattered across hospitals and registries. Proteomics data locked in a vendor’s proprietary format. Each dataset could hold the answer to your next breakthrough—but they can’t talk to each other. Your researchers spend 60-80% of their time wrangling data into usable formats instead of actually discovering drugs.

A drug discovery data platform changes this equation. It’s the infrastructure layer that unifies disparate data sources, makes them analysis-ready in days instead of months, and enables secure collaboration across teams and partners without creating compliance nightmares. This isn’t about storing more data. It’s about making the data you already have actually useful.

Here’s what you need to know to evaluate whether your organization needs one—and how to choose the right platform for your pipeline.

The Data Problem Killing Drug Discovery Timelines

Let’s start with the reality on the ground. Your organization probably has genomic sequencing data from thousands of patients. Clinical trial data spanning multiple phases. Real-world evidence from hospital systems. Proteomics, metabolomics, imaging data. Each dataset represents millions of dollars in research investment.

And none of them can talk to each other.

This fragmentation isn’t an accident. It’s the result of decades of siloed research efforts, different data standards (OMOP, FHIR, proprietary formats), and systems that were never designed to work together. Genomics platforms speak one language. Electronic health records speak another. Multi-omics data comes in formats that require specialized tools just to open.

The result? Analysis paralysis. Before your computational biologists can even begin target identification, they need to harmonize datasets that were collected using different protocols, stored in incompatible formats, and governed by conflicting access policies. This process typically consumes 60-80% of researcher time. Not hypothesis testing. Not discovery. Data janitorial work.

Then add compliance into the mix. HIPAA requirements mean you can’t just copy patient data to a shared drive. GDPR regulations restrict how European patient data can be processed. GxP validation requirements mean every data transformation needs to be documented and auditable. Traditional infrastructure forces you to choose: either move data (creating compliance risks and delays) or keep it siloed (making cross-dataset analysis nearly impossible).

The opportunity cost is staggering. While your team spends months preparing data for analysis, your competitors are already testing hypotheses. The target you’re validating? Someone else might file a patent while you’re still merging datasets. In an industry where first-to-market advantage can mean billions in revenue, data infrastructure delays aren’t just inconvenient—they’re existential threats.

This is where drug discovery data platforms enter the picture. Not as another place to store data, but as the infrastructure layer that makes fragmented data actually usable for discovery.

Core Capabilities That Define a Drug Discovery Data Platform

A real drug discovery data platform does three things exceptionally well: ingests heterogeneous data fast, enables secure analysis without moving sensitive information, and integrates AI/ML pipelines at scale. Let’s break down what that actually means.

Data Ingestion and Harmonization: This is where most platforms fail. Ingestion isn’t just uploading files. It’s transforming genomic VCF files, clinical FHIR records, DICOM imaging data, and proprietary assay results into a unified, queryable structure. The best platforms handle this transformation in days, not months. They understand the OMOP common data model, can parse FHIR resources, and automatically map proprietary formats to standardized ontologies. When a platform claims “48-hour harmonization,” that’s not marketing: it’s the difference between starting analysis this week and starting it next quarter.
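
To make “unified, queryable structure” concrete, here is a minimal sketch that turns one VCF data line and one FHIR Observation into the same record shape. The `UnifiedRecord` fields, the function names, the default genome build, and the sample data are all hypothetical illustrations, not OMOP or any vendor’s schema; a production pipeline would also handle multi-allelic sites, every FORMAT field, and full FHIR validation.

```python
import json
from dataclasses import dataclass, asdict
from typing import List, Optional


@dataclass(frozen=True)
class UnifiedRecord:
    """Hypothetical common record shape (not OMOP or any vendor's actual schema)."""
    patient_id: str
    domain: str            # "genomic" or "measurement" in this sketch
    concept_system: str    # vocabulary or reference the code comes from
    concept_code: str
    value: str
    unit: Optional[str] = None


def records_from_vcf(header_line: str, data_line: str, genome_build: str = "GRCh38") -> List[UnifiedRecord]:
    """Turn one VCF data line into one genotype record per sample column."""
    header = header_line.lstrip("#").rstrip("\n").split("\t")
    fields = data_line.rstrip("\n").split("\t")
    chrom, pos, _variant_id, ref, alt = fields[:5]
    format_keys = fields[8].split(":")
    records = []
    # Sample columns start at index 9, after the eight fixed columns plus FORMAT.
    for sample_name, sample_value in zip(header[9:], fields[9:]):
        sample = dict(zip(format_keys, sample_value.split(":")))
        records.append(UnifiedRecord(
            patient_id=sample_name,
            domain="genomic",
            concept_system=genome_build,  # assumed; in practice read from the file's ##reference metadata
            concept_code=f"{chrom}:{pos}:{ref}>{alt}",
            value=sample.get("GT", "."),
        ))
    return records


def record_from_fhir_observation(resource: dict) -> UnifiedRecord:
    """Map a FHIR Observation that carries a valueQuantity to a unified record."""
    if resource.get("resourceType") != "Observation":
        raise ValueError("expected a FHIR Observation resource")
    coding = resource["code"]["coding"][0]  # first coding only, for brevity
    quantity = resource["valueQuantity"]
    return UnifiedRecord(
        patient_id=resource["subject"]["reference"].split("/")[-1],  # "Patient/p001" -> "p001"
        domain="measurement",
        concept_system=coding["system"],
        concept_code=coding["code"],
        value=str(quantity["value"]),
        unit=quantity.get("unit"),
    )


# Made-up inputs: one two-sample VCF line and one FHIR Observation.
vcf_header = "#CHROM\tPOS\tID\tREF\tALT\tQUAL\tFILTER\tINFO\tFORMAT\tp001\tp002"
vcf_line = "chr1\t1234567\t.\tG\tA\t50\tPASS\t.\tGT\t0/1\t0/0"
observation = json.loads(
    '{"resourceType": "Observation", "subject": {"reference": "Patient/p001"},'
    ' "code": {"coding": [{"system": "http://loinc.org", "code": "2345-7"}]},'
    ' "valueQuantity": {"value": 97, "unit": "mg/dL"}}'
)

unified = records_from_vcf(vcf_header, vcf_line) + [record_from_fhir_observation(observation)]
# Genotype and lab value for patient p001 now share one queryable shape.
p001_view = [asdict(r) for r in unified if r.patient_id == "p001"]
```

Once both sources land in one shape, a cross-dataset question (“which carriers of this variant have elevated lab values?”) becomes a simple filter or join instead of a bespoke script per format.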

The technical reality: traditional ETL pipelines require teams of data engineers to write custom transformation scripts for each new data source. Modern platforms use AI-powered mapping that learns from your existing data standards and automatically suggests transformations. You review, approve, and move forward. No six-month professional services engagement required.
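
The “suggest, review, approve” loop can be sketched in a few lines. This example deliberately swaps the AI-powered mapping for plain string similarity (Python’s `difflib.get_close_matches`), so treat it as an illustration of the workflow, not of how any particular platform scores candidates. The concept names and `STD-000x` IDs are hypothetical placeholders for a real standard vocabulary.

```python
import difflib
from typing import Dict, List, Tuple

# Hypothetical target vocabulary: standard concept name -> placeholder concept ID.
STANDARD_CONCEPTS: Dict[str, str] = {
    "systolic blood pressure": "STD-0001",
    "diastolic blood pressure": "STD-0002",
    "body mass index": "STD-0003",
    "hemoglobin a1c": "STD-0004",
}


def suggest_mappings(source_labels: List[str], cutoff: float = 0.6) -> Dict[str, List[Tuple[str, str]]]:
    """Suggest up to three candidate standard concepts per source column label."""
    names = list(STANDARD_CONCEPTS)
    suggestions: Dict[str, List[Tuple[str, str]]] = {}
    for label in source_labels:
        normalized = label.lower().replace("_", " ").strip()
        matches = difflib.get_close_matches(normalized, names, n=3, cutoff=cutoff)
        suggestions[label] = [(m, STANDARD_CONCEPTS[m]) for m in matches]
    return suggestions


def apply_approved(rows: List[dict], approved: Dict[str, str]) -> List[dict]:
    """Rename only the columns a reviewer approved; leave the rest for follow-up."""
    return [{approved.get(k, k): v for k, v in row.items()} for row in rows]


# A reviewer inspects the suggestions, then approves the ones that are right.
proposals = suggest_mappings(["Body_Mass_Index", "Systolic_BP", "XYZ"])
approved = {"Body_Mass_Index": "STD-0003"}
harmonized = apply_approved([{"Body_Mass_Index": 22.1, "site": "A"}], approved)
```

Labels with no credible candidate (here `"XYZ"`) come back with an empty suggestion list, which is exactly where the human review step earns its keep.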

Secure Compute Environments: Here’s where federated approaches separate leaders from laggards. Traditional platforms force you to centralize data: copy everything to a data warehouse, then analyze it there. This creates three problems: massive data transfer times, compliance risk every time regulated data moves, and vendor lock-in when your data lives in someone else’s infrastructure.

Federated platforms flip this model. The data stays where it lives—in your cloud, your data center, your partner’s secure environment. The analysis comes to the data, not the other way around. You deploy secure compute workspaces (often called Trusted Research Environments or TREs) that can query distributed datasets without creating copies. Researchers get the insights. Compliance teams get audit trails showing data never moved. Regulators get documentation that satisfies HIPAA, GDPR, and GxP requirements simultaneously.

Think of it like this: instead of asking every research partner to mail you their data (slow, risky, expensive), you run queries that execute locally on their systems and return only aggregated results. The raw data never leaves its secure environment. The insights flow freely.
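
The pattern in the analogy above can be sketched as a coordinator that asks each site for aggregates only. In this minimal in-process stand-in, each `Site` object plays the role of a partner’s secure environment, and the site names and rows are made up. Passing a Python function to the site is a simplification; real federated platforms send vetted query definitions and apply disclosure controls before anything is returned.

```python
from typing import Callable, Dict, List

Row = dict


class Site:
    """Stands in for one partner's secure environment; its rows never leave this object."""

    def __init__(self, name: str, rows: List[Row]) -> None:
        self.name = name
        self._rows = rows  # private: the coordinator only ever calls run_local_count

    def run_local_count(self, predicate: Callable[[Row], bool]) -> Dict[str, int]:
        # Executes next to the data; only two integers are returned.
        matched = sum(1 for row in self._rows if predicate(row))
        return {"matched": matched, "total": len(self._rows)}


def federated_prevalence(sites: List[Site], predicate: Callable[[Row], bool]) -> float:
    """Coordinator: combine per-site aggregates without ever receiving row-level data."""
    results = [site.run_local_count(predicate) for site in sites]
    matched = sum(r["matched"] for r in results)
    total = sum(r["total"] for r in results)
    return matched / total if total else 0.0


# Made-up example: two hospitals, each holding its own patient rows.
hospital_a = Site("hospital_a", [{"age": 61, "responder": True}, {"age": 47, "responder": False}, {"age": 70, "responder": False}])
hospital_b = Site("hospital_b", [{"age": 55, "responder": True}, {"age": 39, "responder": False}])

share = federated_prevalence([hospital_a, hospital_b], lambda row: row["responder"])
```

The coordinator learns that 2 of 5 patients across both sites are responders, but never sees which patients they are.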

AI/ML Integration: This is where platforms prove their value beyond data management. Built-in pipelines for target identification, biomarker discovery, and predictive modeling mean your data scientists spend time refining models, not configuring infrastructure. The platform handles compute scaling, experiment tracking, and result reproducibility automatically.

Practical example: your team wants to identify genetic variants associated with drug response in a rare disease population. A mature platform lets you query federated genomic databases, run GWAS analyses across distributed cohorts, integrate clinical outcome data, and validate findings against real-world evidence—all within the same environment. No data movement. No compliance delays. Just analysis.
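
For a single variant, the federated idea extends naturally to association testing: each cohort shares only four allele counts, and the coordinator runs the test on the pooled table. The sketch below uses a basic 1-degree-of-freedom allelic chi-square test as a stand-in. Production GWAS typically uses regression with covariates, or per-site analysis followed by meta-analysis, because naive pooling ignores differences between cohorts such as ancestry. The function name and counts are hypothetical.

```python
import math
from typing import Iterable, Tuple

# Per-site summary for one variant: (case_alt, case_ref, control_alt, control_ref) allele counts.
SiteCounts = Tuple[int, int, int, int]


def pooled_allelic_chi2(site_counts: Iterable[SiteCounts]) -> Tuple[float, float]:
    """Pool allele counts from all sites and run a 1-df allelic chi-square test."""
    case_alt = case_ref = ctrl_alt = ctrl_ref = 0
    for ca, cr, ka, kr in site_counts:  # only these aggregates ever leave a site
        case_alt += ca
        case_ref += cr
        ctrl_alt += ka
        ctrl_ref += kr
    n = case_alt + case_ref + ctrl_alt + ctrl_ref
    cases, controls = case_alt + case_ref, ctrl_alt + ctrl_ref
    alt_total, ref_total = case_alt + ctrl_alt, case_ref + ctrl_ref
    if 0 in (cases, controls, alt_total, ref_total):
        raise ValueError("degenerate 2x2 table")
    chi2 = n * (case_alt * ctrl_ref - case_ref * ctrl_alt) ** 2 / (cases * controls * alt_total * ref_total)
    # Survival function of chi-square with 1 df: P(X > chi2) = erfc(sqrt(chi2 / 2)).
    p_value = math.erfc(math.sqrt(chi2 / 2))
    return chi2, p_value


# Two made-up cohorts; neither shares genotypes, only counts.
chi2, p = pooled_allelic_chi2([(15, 35, 5, 45), (15, 35, 5, 45)])
```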

The infrastructure handles the complexity: spinning up compute clusters for intensive calculations, managing data access permissions across federated sources, tracking every transformation for audit purposes, and ensuring results are reproducible six months later when reviewers ask questions.

Where These Platforms Accelerate the Discovery Pipeline

Theory is nice. ROI is better. Here’s where drug discovery data platforms deliver measurable acceleration across your pipeline.

Target Identification: Traditional target validation requires manually cross-referencing genomic variants with phenotypic data, literature searches, and pathway analyses. It’s a months-long process involving multiple tools and manual data integration. A unified platform changes the timeline dramatically. You query genomic databases for variants associated with your disease of interest, cross-reference against protein interaction networks, validate against clinical phenotype data, and prioritize targets based on druggability scores—all in a single analysis workflow. What used to take research teams months now happens in days.
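
The last step of that workflow, prioritizing targets once the evidence is assembled, can be as simple as a weighted score. The evidence fields, the 0-to-1 scaling, the weights, and the gene placeholders below are all hypothetical; real teams calibrate or learn these, and often use richer models than a linear sum.

```python
from dataclasses import dataclass
from typing import Dict, List, Tuple


@dataclass
class TargetEvidence:
    gene: str
    genetic_association: float  # normalized variant-disease association strength, 0..1
    network_support: float      # proximity to known disease genes in a protein interaction network, 0..1
    phenotype_support: float    # concordance with clinical phenotype data, 0..1
    druggability: float         # druggability score, 0..1


# Hypothetical weights for illustration only.
WEIGHTS: Dict[str, float] = {
    "genetic_association": 0.4,
    "network_support": 0.2,
    "phenotype_support": 0.2,
    "druggability": 0.2,
}


def prioritize(candidates: List[TargetEvidence]) -> List[Tuple[str, float]]:
    """Rank candidate targets by a weighted sum of their evidence scores, best first."""
    scored = []
    for target in candidates:
        score = sum(getattr(target, field) * weight for field, weight in WEIGHTS.items())
        scored.append((target.gene, round(score, 3)))
    return sorted(scored, key=lambda item: item[1], reverse=True)


ranking = prioritize([
    TargetEvidence("GENE_B", 0.2, 0.5, 0.3, 0.9),
    TargetEvidence("GENE_A", 0.9, 0.6, 0.7, 0.4),
    TargetEvidence("GENE_C", 0.0, 0.0, 0.0, 0.0),
])
```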

The competitive advantage compounds. When you can validate 10 potential targets in the time it previously took to validate one, your expected number of winners grows tenfold and your chance of finding at least one rises sharply. You’re not just faster; you’re exploring more of the opportunity space. Organizations leveraging secure data solutions for drug target identification are seeing dramatic improvements in validation timelines.
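
It helps to separate two quantities here: the expected number of successful targets scales linearly with how many you test, while the probability of at least one success climbs quickly but with diminishing returns. A minimal sketch, assuming each target independently has the same success probability (the 10% used below is an arbitrary illustrative value, not a benchmark):

```python
from typing import Tuple


def validation_odds(p_success: float, n_targets: int) -> Tuple[float, float]:
    """Return (expected number of winners, probability of at least one winner)."""
    expected_winners = n_targets * p_success
    p_at_least_one = 1 - (1 - p_success) ** n_targets  # complement of "every target fails"
    return expected_winners, p_at_least_one


one_target = validation_odds(0.10, 1)    # (0.1, 0.1)
ten_targets = validation_odds(0.10, 10)  # expected winners 1.0, at-least-one about 0.65
```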

Patient Stratification: This is where platforms prevent the most expensive failures. Most drug candidates don’t fail because they don’t work—they fail because they don’t work in the patient population you tested them on. Precision medicine promises to solve this by identifying responder populations before trials begin. But that requires integrating genomic data, biomarker profiles, clinical history, and real-world evidence across thousands of patients.

Platforms enable this integration at scale. You can identify genetic signatures that predict drug response, stratify patient populations based on multi-omic profiles, and design trials around likely responders. The result: smaller, faster trials with higher success rates. Instead of enrolling 1,000 patients hoping 15% respond, you enroll 300 pre-selected likely responders and can potentially reach statistical significance in half the time.
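
Why enrichment shrinks trials so dramatically can be seen with the standard two-arm sample-size formula for comparing means. Under simplifying assumptions (only responders benefit, non-responders show zero effect, outcome variance is the same in both groups, and screening costs are ignored), enrolling an unselected population dilutes the average effect to `f × effect`, so the required sample size grows by roughly `1/f²`. With the paragraph’s 15% responder rate that is about 44-fold. The 0.5-SD responder effect is a hypothetical value.

```python
import math
from statistics import NormalDist


def n_per_arm(effect: float, sd: float, alpha: float = 0.05, power: float = 0.8) -> int:
    """Patients per arm for a two-sided z-test comparing two means (normal approximation)."""
    z = NormalDist()
    z_alpha = z.inv_cdf(1 - alpha / 2)
    z_beta = z.inv_cdf(power)
    return math.ceil(2 * ((z_alpha + z_beta) * sd / effect) ** 2)


responder_effect = 0.5      # hypothetical effect in true responders, in SD units
responder_fraction = 0.15   # share of an unselected population that responds

n_enriched = n_per_arm(responder_effect, sd=1.0)
n_unselected = n_per_arm(responder_effect * responder_fraction, sd=1.0)
```

Under these assumptions the enriched trial needs a few dozen patients per arm, while the unselected trial needs thousands; real heterogeneity in response usually makes the unselected case worse, not better.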

The math works in your favor. Phase III trial costs often exceed $100 million. If better patient stratification increases your success rate from 10% to 30%, you’ve just saved tens of millions in failed trials—not to mention the opportunity cost of pursuing dead-end candidates.
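
The paragraph’s own illustrative figures can be worked through directly. Per Phase III trial, expected spend on failure falls from $90M to $70M (the “tens of millions”); per approved drug, the expected Phase III bill falls from about $1B to about $333M. This sketch assumes a flat $100M per trial and independent trials, both simplifications.

```python
from typing import Dict


def trial_economics(cost_per_trial_musd: float, success_rate: float) -> Dict[str, float]:
    """Expected Phase III economics, in millions of USD, for a given success rate."""
    return {
        "expected_failed_spend_per_trial": cost_per_trial_musd * (1 - success_rate),
        "expected_trials_per_success": 1 / success_rate,
        "expected_cost_per_success": cost_per_trial_musd / success_rate,
    }


baseline = trial_economics(100, 0.10)
stratified = trial_economics(100, 0.30)
saved_per_trial = baseline["expected_failed_spend_per_trial"] - stratified["expected_failed_spend_per_trial"]
```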

Real-World Evidence Integration: Here’s where platforms extend value beyond initial approval. Post-market surveillance data, electronic health records, and insurance claims databases contain signals about drug safety, effectiveness in diverse populations, and potential new indications. But accessing this data traditionally requires partnerships, data use agreements, and months of negotiation.

Federated platforms enable real-world evidence analysis without data transfer. You can query hospital systems, insurance databases, and patient registries to identify safety signals, validate effectiveness in real-world populations, and discover new indications—all while the data remains in its original secure environment. The hospital never sends you patient records. You never create compliance risk. But you get the insights needed to expand labels, identify at-risk populations, and support new indication filings.

Organizations using this approach have identified new indications years faster than traditional methods. The data was always there. The platform made it accessible.

Security and Compliance: Non-Negotiables for Regulated Data

Let’s address the elephant in every procurement meeting: security and compliance aren’t features—they’re the foundation. If your platform can’t satisfy regulators, nothing else matters.

Why Traditional Cloud Storage Fails Pharma: Standard cloud platforms offer storage and compute. What they don’t offer is the audit trail, access control granularity, and data provenance tracking that regulated research demands. When a regulator asks “who accessed patient 1847’s genomic data on March 15th, what analysis did they run, and what were the results?”, you need answers. Generic cloud storage gives you “someone accessed a file.” That doesn’t pass audit.
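
The difference between “someone accessed a file” and an auditable answer is mostly about what each log entry captures. Below is a minimal sketch of a structured audit event and the regulator’s question expressed as a query. The field names, users, and result references are hypothetical, and the year is an assumption because the scenario only says March 15th. A real system would also make the log append-only and tamper-evident.

```python
from dataclasses import dataclass
from datetime import date, datetime
from typing import List


@dataclass(frozen=True)
class AuditEvent:
    """One access event; field names are illustrative, not a standard schema."""
    timestamp: datetime
    user: str
    subject_id: str   # pseudonymous patient identifier
    dataset: str
    action: str       # e.g. "query" or "export_denied"
    analysis: str     # name and version of the analysis that ran
    result_ref: str   # pointer to (or hash of) the stored result


def events_for(log: List[AuditEvent], subject_id: str, dataset: str, day: date) -> List[AuditEvent]:
    """Who touched this subject's data in this dataset on this day, and what ran?"""
    return [
        e for e in log
        if e.subject_id == subject_id and e.dataset == dataset and e.timestamp.date() == day
    ]


log = [
    AuditEvent(datetime(2024, 3, 15, 9, 12), "alice", "1847", "genomics", "query", "variant_counts v1.2", "results/8f3a"),
    AuditEvent(datetime(2024, 3, 15, 14, 40), "bob", "1847", "genomics", "export_denied", "raw_export", "-"),
    AuditEvent(datetime(2024, 3, 16, 10, 5), "alice", "1847", "genomics", "query", "variant_counts v1.2", "results/9b71"),
    AuditEvent(datetime(2024, 3, 15, 11, 0), "carol", "2210", "genomics", "query", "gwas_pipeline v3.0", "results/c02d"),
]

for event in events_for(log, "1847", "genomics", date(2024, 3, 15)):
    print(event.timestamp.isoformat(), event.user, event.action, event.analysis, event.result_ref)
```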

GxP validation requirements add another layer. Every data transformation must be documented. Every analysis must be reproducible. Every result must be traceable to source data. Traditional infrastructure requires your team to build this documentation layer manually—spreadsheets tracking data lineage, logs recording access, procedures documenting every transformation. It’s brittle, error-prone, and doesn’t scale.
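
Automated lineage replaces those spreadsheets by recording, for every transformation, what went in, what came out, and with which parameters, then proving reproducibility by replaying the steps and comparing hashes. This is a minimal sketch, assuming data small enough to serialize as JSON; `LineageLog`, the step names, and the glucose example are hypothetical, and a real platform would also pin code versions, environments, and signatures.

```python
import hashlib
import json
from typing import Any, Callable, Dict, List


def fingerprint(obj: Any) -> str:
    """Stable SHA-256 of JSON-serializable data (sorted keys, so dict order doesn't matter)."""
    return hashlib.sha256(json.dumps(obj, sort_keys=True).encode("utf-8")).hexdigest()


class LineageLog:
    def __init__(self) -> None:
        self.entries: List[Dict[str, Any]] = []

    def run(self, step: str, version: str, fn: Callable, data: Any, **params: Any) -> Any:
        """Apply one transformation and record its inputs, outputs, and parameters."""
        output = fn(data, **params)
        self.entries.append({
            "step": step,
            "version": version,
            "params": params,
            "input_sha256": fingerprint(data),
            "output_sha256": fingerprint(output),
        })
        return output

    def verify(self, fn_by_step: Dict[str, Callable], source: Any) -> bool:
        """Replay every recorded step from the source data; True only if all hashes match."""
        data = source
        for entry in self.entries:
            if fingerprint(data) != entry["input_sha256"]:
                return False
            data = fn_by_step[entry["step"]](data, **entry["params"])
            if fingerprint(data) != entry["output_sha256"]:
                return False
        return True


def drop_missing(rows, field):
    return [r for r in rows if r.get(field) is not None]


def to_mmol_per_l(rows, field, divisor):
    return [{**r, field: r[field] / divisor} for r in rows]


steps = {"drop_missing": drop_missing, "to_mmol_per_l": to_mmol_per_l}
source = [{"id": "p1", "glucose": 90}, {"id": "p2", "glucose": None}, {"id": "p3", "glucose": 126}]

lineage = LineageLog()
clean = lineage.run("drop_missing", "1.0", drop_missing, source, field="glucose")
final = lineage.run("to_mmol_per_l", "1.0", to_mmol_per_l, clean, field="glucose", divisor=18.0)
```

Six months later, `lineage.verify(steps, source)` either reproduces every recorded hash or pinpoints that something changed.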

Federated Analysis as the Compliance Solution: Here’s where federated architectures solve problems that centralized approaches can’t. When data never moves from its original secure environment, entire categories of compliance risk disappear. No data transfer means no HIPAA breach risk from data in transit. No centralized storage means no single point of failure for data security. No copies mean no data lifecycle management complexity. Understanding HIPAA-compliant data analytics is essential for any organization handling protected health information.

The technical implementation matters. True federated platforms deploy compute environments that execute analysis locally on data sources, returning only aggregated results. Access controls operate at the query level—researchers can run approved analyses but can’t export raw data. Audit trails capture every query, every result, and every access attempt. When regulators audit your research, you show them a complete chain of custody without moving any data.
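
Query-level access control can be pictured as a gateway in front of the data: only allowlisted analyses run, only aggregates come back, small counts are suppressed so rare cases can’t be singled out, and every attempt is logged whether or not it succeeds. In this minimal sketch the allowlist, the `count_by` analysis name, and the threshold of 5 are assumptions; real thresholds are set by each data owner’s governance rules.

```python
from collections import Counter
from typing import Dict, List, Optional

MIN_CELL_SIZE = 5                 # assumed disclosure-control threshold
APPROVED_ANALYSES = {"count_by"}  # hypothetical allowlist of aggregate analyses


class SiteGateway:
    """Runs approved aggregate analyses on local rows and logs every request."""

    def __init__(self, rows: List[dict]) -> None:
        self._rows = rows
        self.audit: List[dict] = []

    def submit(self, user: str, analysis: str, field: Optional[str] = None) -> Dict[object, Optional[int]]:
        allowed = analysis in APPROVED_ANALYSES
        self.audit.append({"user": user, "analysis": analysis, "field": field, "allowed": allowed})
        if not allowed:
            raise PermissionError(f"analysis {analysis!r} is not approved at this site")
        if field is None:
            raise ValueError("count_by needs a field to group on")
        counts = Counter(row[field] for row in self._rows)
        # Suppress small cells rather than returning exact counts.
        return {value: (n if n >= MIN_CELL_SIZE else None) for value, n in counts.items()}


gateway = SiteGateway([{"diagnosis": "A"}] * 6 + [{"diagnosis": "B"}] * 2)
summary = gateway.submit("alice", "count_by", "diagnosis")  # B's count is suppressed
```

A request like `gateway.submit("bob", "export_rows")` raises `PermissionError`, and the denied attempt still lands in `gateway.audit`.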

Organizations managing data across borders find this particularly valuable. European patient data can be analyzed without leaving EU infrastructure, satisfying GDPR requirements. US healthcare data remains in HIPAA-compliant environments. Asian datasets stay within local regulatory boundaries. Yet researchers can run unified analyses across all three regions simultaneously. The platform handles the complexity of distributed compute and federated query execution.

Certification Requirements to Look For: Not all compliance claims are equal. When evaluating platforms, verify these certifications: FedRAMP authorization (required for cloud services used by US federal agencies, including federal health programs), ISO/IEC 27001 certification (the international information security standard), HIPAA Business Associate Agreements (required when a vendor handles protected health information on behalf of a US covered entity), and GxP validation capabilities (needed when analyses support regulatory submissions). These aren’t checkboxes; they’re evidence that the platform was designed for regulated research from day one.

Ask about audit capabilities specifically. Can the platform generate complete audit trails for regulatory submissions? Does it track data lineage from source through transformation to result? Can it prove that an analysis run six months ago is reproducible today? These capabilities determine whether your platform accelerates regulatory approval or creates documentation headaches.

Evaluating Platforms: Questions That Separate Vendors from Partners

Procurement conversations in pharma often focus on features and pricing. The better questions focus on outcomes and partnership. Here’s what to ask.

Data Harmonization Speed: Don’t accept vague timelines. Ask: “If we give you our genomic data in VCF format, clinical data in FHIR, and real-world evidence in custom CSV exports, how long until we can run cross-dataset queries?” The answer reveals whether they have production-ready harmonization pipelines or will need months of custom development. Platforms claiming 48-hour harmonization should be able to show you examples with similar data complexity. If they hedge or suggest a “discovery phase,” you’re likely looking at a six-month professional services engagement, not a platform.

Follow up with: “What happens when we add a new data source in six months?” If the answer involves another lengthy integration project, you’re buying custom development, not scalable infrastructure. The right platform handles new data sources through configuration, not coding. Understanding the broader cloud-based drug discovery platforms market can help you benchmark vendor capabilities.

Deployment Flexibility: This question cuts to control and lock-in. Ask: “Where does this platform run, and who owns the infrastructure?” Three models exist: vendor-hosted (they control everything), customer-hosted (you deploy in your cloud), and hybrid (components in both environments). Each has tradeoffs.

Vendor-hosted is fastest to deploy but creates dependency. If you need to switch vendors or bring capabilities in-house, you’re starting from scratch. Customer-hosted gives you control but requires internal infrastructure expertise. Hybrid offers flexibility but adds complexity.

The critical question: “If we terminate our contract, what happens to our data and our analysis capabilities?” If the answer is “you lose access to everything,” you’re building on rented land. Better platforms deploy in your infrastructure—you own the data, the compute environment, and the analysis history. The vendor provides software and support, not control.

Scalability Proof Points: Pilot projects are easy. Production scale is hard. Ask: “What’s the largest dataset you’ve successfully deployed this platform on, and what was the use case?” Look for evidence of national-scale implementations, multi-petabyte datasets, and complex federated queries across dozens of data sources. If their largest deployment is a 100-patient pilot, they haven’t proven production readiness.

Specific questions that reveal capability: “How many concurrent users can run analyses simultaneously? How do you handle compute scaling when 50 researchers launch intensive ML jobs at once? What’s your largest federated query—how many data sources, how much data, what was the response time?”

Organizations managing national health programs or multi-country research consortia need platforms proven at scale. Ask for references from similar-scale deployments. Talk to their technical teams about challenges they encountered and how the platform handled them. Vendor marketing says everything scales. Customer references tell you what actually works.

Building Your Data-Driven Discovery Strategy

You’re convinced a drug discovery data platform could accelerate your pipeline. Now comes the hard part: implementation. Here’s how to approach it without creating a multi-year IT project.

Start with Your Highest-Friction Data Problem: Don’t try to migrate your entire data ecosystem on day one. Identify the single biggest bottleneck in your discovery process. Is it target validation taking six months because genomic and clinical data can’t be cross-referenced? Is it patient stratification requiring manual chart review because multi-omic data isn’t integrated? Is it collaboration with external partners stalling because data sharing agreements take months?

Pick one problem. Solve it completely. Prove value. Then expand. Organizations that succeed with these platforms start with a well-defined use case, demonstrate ROI in weeks (not years), and use that success to drive broader adoption. Those that fail try to boil the ocean—migrating everything, retraining everyone, and transforming all processes simultaneously. That’s a recipe for stalled projects and skeptical stakeholders.

Prioritize Platforms That Reduce Time-to-Analysis: Storage is cheap. Compute is cheap. Researcher time is expensive. When evaluating platforms, calculate ROI based on how much time you’re giving back to your scientists. If harmonizing a new dataset currently takes your team three months and the platform reduces that to three days, you’ve given back nearly three months of team time per dataset; for a four-person team, that approaches a full researcher-year. Multiply that across your organization.

The same logic applies to analysis workflows. If your computational biologists currently spend two weeks configuring infrastructure before running a single experiment, and the platform reduces that to two hours, you’ve cut setup time roughly 40-fold (two working weeks is about 80 hours). How much that raises experimental throughput depends on how long the experiments themselves take, but every experiment now starts weeks sooner. More experiments mean more shots on goal. More shots on goal mean higher probability of breakthrough discoveries. The evolution toward AI-powered drug discovery is making these efficiency gains even more pronounced.
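
The 40-fold figure applies to setup time. Whether experimental throughput rises by the same factor depends on how long each experiment runs once it is set up, the same reasoning as Amdahl’s law. This sketch assumes about 80 working hours for two weeks of setup, 2 hours after the platform, and a few hypothetical run times.

```python
def throughput_speedup(setup_before_h: float, setup_after_h: float, run_h: float) -> float:
    """How many times more experiments fit in the same time when only setup time shrinks."""
    return (setup_before_h + run_h) / (setup_after_h + run_h)


for run_hours in (0, 8, 40, 160):  # hypothetical compute or wet-lab time per experiment
    speedup = throughput_speedup(80, 2, run_hours)
    print(f"run time {run_hours:>3} h -> {speedup:.1f}x more experiments")
```

The full 40x appears only when run time is negligible; with a 40-hour run it is closer to 3x, which is still a large gain in shots on goal.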

Calculate the value of speed: What’s a one-month acceleration in target validation worth to your pipeline? What’s the value of identifying a promising drug candidate six months earlier than your competitors? These aren’t hypothetical questions—they’re the business case for infrastructure investment.

The ROI Calculation: Here’s the math that matters. Traditional drug discovery data management requires: data engineers to build ETL pipelines (months of work per data source), compliance specialists to document every transformation (ongoing overhead), IT infrastructure to manage storage and compute (capital and operational costs), and researcher time spent on data wrangling instead of discovery (the biggest hidden cost).

A mature platform eliminates most of these costs. Harmonization happens in days through automated pipelines. Compliance documentation generates automatically. Infrastructure scales on demand without dedicated IT staff. Researchers spend 80% of their time on analysis instead of 20%.
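
The researcher-time part of the ROI calculation is straightforward to model. This minimal sketch uses the analysis shares from the paragraph above (20% before, 80% after, which is a best case) plus two hypothetical inputs, 20 researchers and 1,800 working hours per year; substitute your own figures.

```python
from typing import Dict


def analysis_capacity_gain(researchers: int, hours_per_year: float,
                           analysis_share_before: float, analysis_share_after: float) -> Dict[str, float]:
    """Annual analysis hours before and after, and the gain in full-time-equivalent researchers."""
    total_hours = researchers * hours_per_year
    before = total_hours * analysis_share_before
    after = total_hours * analysis_share_after
    return {
        "analysis_hours_before": before,
        "analysis_hours_after": after,
        "hours_gained": after - before,
        "fte_equivalent": (after - before) / hours_per_year,
    }


gain = analysis_capacity_gain(researchers=20, hours_per_year=1800,
                              analysis_share_before=0.20, analysis_share_after=0.80)
```

Under these assumptions, the team gains roughly the analysis capacity of 12 additional full-time researchers without hiring anyone.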

The competitive advantage compounds over time. Every week saved in data preparation is a week gained in discovery. Every month of acceleration in target validation is a month closer to clinical trials. In an industry where patent cliffs and competitor pipelines create existential threats, infrastructure that accelerates discovery isn’t a nice-to-have—it’s a strategic imperative.

The Infrastructure Decision That Defines Your Pipeline

A drug discovery data platform isn’t another software purchase. It’s the infrastructure layer that determines whether your organization discovers drugs in years or decades. The science is hard enough without data infrastructure making it harder.

The winners in pharma R&D will be organizations that can harmonize data in days, analyze it securely across organizational boundaries, and scale insights across their entire pipeline. They’ll validate targets faster, stratify patients more precisely, and leverage real-world evidence to expand indications years ahead of competitors. The losers will continue spending 60-80% of researcher time on data wrangling while their pipelines stall.

The choice isn’t whether to invest in data infrastructure. That decision was made the moment you committed to precision medicine, multi-omics research, or real-world evidence integration. The choice is whether you build that infrastructure on federated platforms designed for regulated research or try to retrofit generic cloud tools that were never built for your compliance requirements.

For organizations managing sensitive biomedical data at scale—whether you’re a biopharma R&D team under pressure to accelerate pipelines, a government health agency building national precision medicine programs, or an academic consortium handling regulated data across institutions—the platform architecture you choose determines what’s possible.

Explore how Lifebit’s federated platform approach enables analysis without data movement. Get started for free and see how fast your data can become discovery-ready when the infrastructure is built for regulated research from day one.