How to Supercharge Your Findings with AI Powered Research

Why AI Powered Research Is Transforming Scientific Discovery

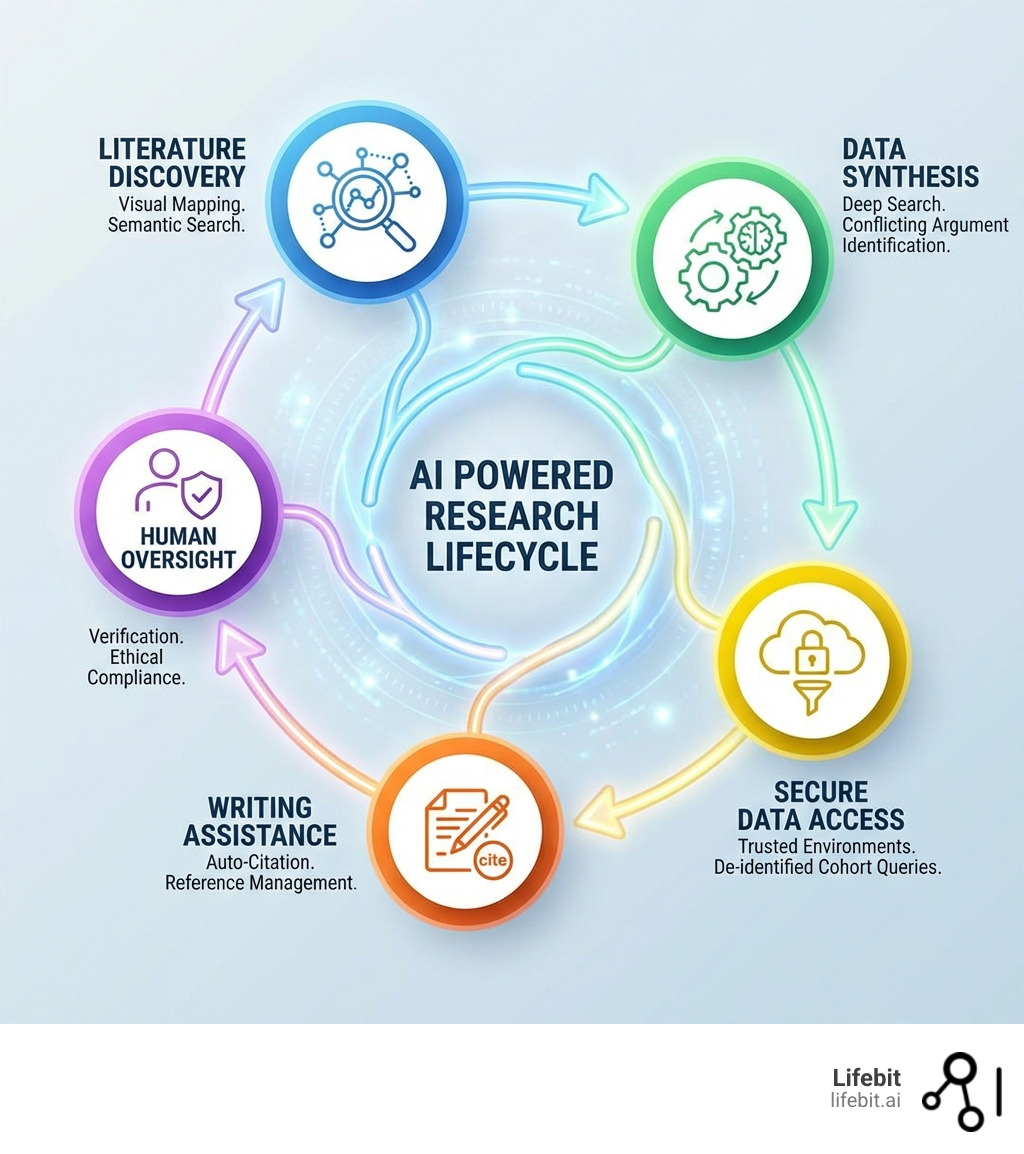

AI powered research is fundamentally changing how scientists discover, synthesize, and act on information—turning weeks of literature review into minutes, unlocking patterns buried in massive datasets, and bridging the widening gap between what’s published and what’s understood.

What AI powered research delivers:

- Automated literature discovery that surfaces relevant papers from millions of sources in seconds

- Intelligent synthesis tools that extract key findings, identify conflicting evidence, and map citation networks

- Real-time data analytics that enable feasibility queries, cohort characterization, and secure access to sensitive datasets

- Writing and citation assistants that draft research documents, manage references, and auto-cite in 2,600+ styles

- Secure, federated environments that analyze data in place without compromising privacy or compliance

Science is exploding in volume. The gap between “published” and “understood” continues to grow. Traditional research methods—manual literature reviews, siloed data repositories, and weeks-long cohort identification—can’t keep pace with the flood of new discoveries across genomics, clinical trials, and real-world evidence.

AI powered research tools are closing that gap. They enable researchers to visualize academic fields, identify patterns faster than any human, and collaborate across secure, compliant platforms. From literature mapping tools like Connected Papers to deep search engines like Consensus, these technologies are making discovery more human, more collaborative, and more creatively charged.

I’m Maria Chatzou Dunford, CEO and Co-founder of Lifebit, where I’ve spent over 15 years advancing AI powered research through federated data analysis, genomics platforms, and secure computational environments that empower precision medicine and biomedical discovery. This guide will show you how to responsibly integrate AI into every stage of your research lifecycle—from initial discovery to final publication.


The Evolution of AI Powered Research Tools

In the “old days” (which were actually just five years ago), literature review meant wrestling with complex Boolean keyword strings in PubMed or Google Scholar and hoping you didn’t miss a seminal paper because of a synonym or a slight variation in terminology. Traditional research was linear, manual, and often siloed, relying heavily on the researcher’s ability to recall specific authors or journals. Today, AI powered research has shifted the paradigm from “searching for keywords” to “discovering concepts.”

This evolution is driven by Natural Language Processing (NLP) and Large Language Models (LLMs) that utilize vector embeddings. Instead of matching characters, these tools represent words and concepts as mathematical coordinates in a multi-dimensional space. This allows the AI to understand that “neoplasm” and “tumor” are semantically related, even if the words themselves share no letters. Modern tools like Connected Papers allow us to visualize academic fields as living ecosystems. By entering a single “seed” paper, you can generate a visual graph that shows how that paper relates to others in the field based on co-citation and bibliographic coupling. This helps researchers identify the “ancestry” of a theory and find the “bridge papers” that connect disparate sub-fields.
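To make the “mathematical coordinates” idea concrete, here is a minimal sketch of how semantic closeness can be scored with cosine similarity. The three-dimensional vectors are hand-picked, hypothetical values for illustration only; real embedding models produce vectors with hundreds or thousands of dimensions, and the `most_similar` helper is our own name, not part of any tool mentioned here.

```python
import math

def cosine_similarity(a, b):
    """Cosine of the angle between two equal-length vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)

# Hypothetical toy embeddings, chosen by hand (not produced by a real model).
embeddings = {
    "neoplasm": [0.9, 0.8, 0.1],
    "tumor": [0.85, 0.75, 0.2],
    "caffeine": [0.1, 0.2, 0.95],
}

def most_similar(term):
    """Return the other term whose vector points in the closest direction."""
    others = (t for t in embeddings if t != term)
    return max(others, key=lambda t: cosine_similarity(embeddings[term], embeddings[t]))

print(most_similar("neoplasm"))  # prints "tumor"
```

“Neoplasm” and “tumor” share no letters, yet their vectors point in nearly the same direction, which is what lets a semantic search treat them as related.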

Similarly, Semantic Scholar uses AI to provide a “TL;DR” for papers, helping us decide in seconds whether a study is worth a full read. This isn’t just about speed; it’s about depth. These tools analyze citation networks to tell us not just who cited a paper, but how—whether they supported the findings, offered a contrasting view, or simply mentioned the methodology. This level of nuance was previously only possible through hours of manual reading.

Automating Literature Discovery with AI Powered Research

The sheer volume of published science is overwhelming, with thousands of new papers uploaded to repositories like arXiv and PubMed every single day. Tools like Research Rabbit function like a “Spotify for Papers,” learning your interests and suggesting new research based on your “collections.” It leverages massive databases like OpenAlex and Semantic Scholar to create interactive visualizations of research networks.

Key features of AI-driven discovery include:

- Visual Mapping: Seeing the evolution of a theory through citation graphs, allowing you to spot the most influential papers in a cluster instantly.

- Relationship Analysis: Finding papers that are semantically similar even if they don’t share keywords, which is vital for interdisciplinary research where terminology varies.

- Contextual Awareness: Tools like Keenious analyze your entire document to recommend relevant sources as you write, acting as a proactive research assistant that works in the background.

- Agentic Exploration: Some newer tools use “agents” that can autonomously browse the web, follow citation trails, and summarize the findings of an entire niche topic without human intervention.
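The citation-graph relationships behind this kind of visual mapping, co-citation and bibliographic coupling, are simple to compute once you have a list of who cites whom. The sketch below uses a tiny hypothetical citation graph; the paper IDs and function names are illustrative assumptions, not the method of any specific tool.

```python
# Hypothetical citation data: each paper maps to the set of papers it cites.
cites = {
    "A": {"X", "Y"},
    "B": {"X", "Y", "Z"},
    "C": {"A", "B"},
    "D": {"A", "B", "X"},
}

def bibliographic_coupling(p, q):
    """Number of references that papers p and q have in common."""
    return len(cites.get(p, set()) & cites.get(q, set()))

def co_citation(p, q):
    """Number of papers whose reference lists contain both p and q."""
    return sum(1 for refs in cites.values() if p in refs and q in refs)

print(bibliographic_coupling("A", "B"))  # 2 (both cite X and Y)
print(co_citation("A", "B"))             # 2 (C and D cite both)
```

Bibliographic coupling looks backward (shared references), while co-citation looks forward (shared citers), so the two measures can surface different neighbors for the same seed paper.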

Enhancing Data Synthesis through AI Powered Research

Once you have the papers, the next hurdle is synthesis. How do you reconcile five different studies with five different results? Consensus uses “Deep Search” to turn days of literature review into minutes. It builds a comprehensive search strategy that expands key terms and identifies conflicting arguments across millions of peer-reviewed papers.

By using Consensus, we can ask a plain-language question like “Does caffeine improve long-term memory?” and receive a synthesized answer grounded in scholarly claims. The platform provides a “Consensus Meter” that shows the prevailing scientific opinion (e.g., 70% of papers say yes, 20% are neutral, 10% say no). This quantitative approach to qualitative literature review is a game-changer for evidence-based medicine and policy-making.
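The arithmetic behind a meter like this is easy to picture. The following is a minimal sketch, assuming each paper has already been labeled with a stance; it is not Consensus’s actual algorithm, and the labels and the `stance_shares` function are hypothetical.

```python
from collections import Counter

def stance_shares(stances):
    """Share of papers per stance label, as whole-number percentages."""
    if not stances:
        return {}
    counts = Counter(stances)
    total = len(stances)
    return {label: round(100 * n / total) for label, n in counts.items()}

# Hypothetical stance labels for ten papers.
labels = ["yes"] * 7 + ["neutral"] * 2 + ["no"] * 1
print(stance_shares(labels))  # {'yes': 70, 'neutral': 20, 'no': 10}
```

The hard part in practice is the labeling step that comes before this tally, which is where a human should spot-check the underlying papers.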

Key Categories of AI Tools for Modern Researchers

The landscape of AI powered research is diverse, ranging from general-purpose Large Language Models (LLMs) to highly specialized agentic assistants designed for specific scientific domains. Understanding which tool to use at which stage of the research lifecycle is critical for maximizing efficiency.

| Tool Category | Primary Function | Examples |
|---|---|---|
| Literature Discovery | Visualizing networks and finding similar papers | Connected Papers, Research Rabbit |
| Synthesis & Search | Answering questions based on evidence | Consensus, Elicit |
| Writing Assistance | Drafting, auto-citing, and library syncing | Jenni AI, Scholarcy |
| Reading Assistants | Summarizing and extracting data from PDFs | ArXiv Intelligence, JSTOR AI |
| Data Extraction | Converting unstructured text into structured tables | Elicit, Iris.ai |

Writing and Citation Assistance

Writing is often the biggest bottleneck in the research lifecycle. Tools like Jenni AI are designed specifically for this, offering “Agentic AI Chat” that consults both the latest research and your uploaded PDFs. It features a one-click sync with reference managers like Zotero and Mendeley, allowing you to import collections (like a “Machine Learning” folder with 185 sources) in seconds.

These assistants don’t just “write” for you; they help you draft grounded content by suggesting the next sentence based on the evidence in your library. With auto-cite functionality supporting over 2,600 styles (APA, MLA, Chicago, Vancouver, etc.), the risk of manual citation errors—and the subsequent headaches during peer review—is significantly reduced. Furthermore, these tools often include plagiarism checkers and AI-detection filters to ensure the integrity of the final manuscript.

Visual Mapping and Relationship Analysis

For a broader view, Elicit and Undermind help researchers navigate the “published vs. understood” gap. Elicit can analyze thousands of papers simultaneously, extracting data into tables to compare methodologies, sample sizes, and outcomes at scale. This level of relationship analysis is critical for systematic reviews and meta-analyses, where researchers must synthesize data from dozens of disparate trials.

Advanced tools are now incorporating “Reasoning Engines” that don’t just summarize text but actually evaluate the strength of the evidence. For example, an AI might flag a study for having a small sample size or a potential conflict of interest, providing a layer of critical appraisal that previously required senior-level expertise.

Benefits of Integrating AI into Your Workflow

Integrating AI powered research tools isn’t just a “nice to have”; it’s a competitive necessity in an era where scientific output is doubling every few years. The primary benefits include:

- Increased Efficiency: Automating the “grunt work” of searching, formatting, and summarizing allows researchers to focus on high-level hypothesis generation and experimental design.

- Deeper Insights: Identifying patterns across disparate datasets that human eyes might miss. AI can spot correlations between a protein in oncology and a pathway in neurology that were previously siloed in different journals.

- Accelerated Discovery: Moving from hypothesis to evidence-backed conclusion faster than ever before. In drug discovery, AI can simulate how billions of molecules interact with a target protein, narrowing down candidates in days rather than years.

For a deeper dive, see our AI-Powered Research Ultimate Guide and explore how these tools specifically impact AI for Medical Research.

Shrinking the Understanding Gap

As science explodes in volume, the bottleneck is no longer access to information—it’s the capacity to understand it. We are currently in a state of “information obesity,” where we have more data than we can possibly process. Tools like ArXiv Intelligence aim to make discovery more human-centric. By providing faster insights and fostering collaboration, these platforms help us move from simply “reading” to truly “understanding” the state of the art.

This is particularly important for interdisciplinary teams. A bioinformatician and a clinical oncologist might use the same AI tool to bridge their knowledge gap, using the AI to translate complex technical jargon into actionable clinical insights. This “semantic translation” is one of the most underrated benefits of AI in the research environment.

Real-Time Evidence and Analytics

In specialized fields like medicine, the stakes are even higher. Our AI-Powered Medical Research Guide highlights how AI can assist in real-time data discovery. Researchers can run feasibility queries and interact with AI agents to characterize cohorts without ever exposing sensitive patient details.

For example, a researcher could ask, “How many patients in the UK Biobank have both Type 2 Diabetes and a specific genetic variant, and what are their average HbA1c levels?” In a traditional setting, this query could take weeks of data access requests and manual cleaning. With AI-powered federated analytics, the answer can be generated in minutes. This is the core of “Data Discovery”: finding the right data quickly while patient-level records stay private and within regulatory requirements.
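A feasibility query like the one above can be sketched as a federated aggregate: each site computes a count and a sum locally, and only those aggregates travel to a coordinator. This is a simplified illustration under stated assumptions, not a description of any production platform; the site data, field names, and the `local_summary` and `combine` functions are all hypothetical, and real deployments typically add further disclosure controls such as suppressing very small counts.

```python
# Hypothetical records held at two separate sites; rows never leave their site.
SITE_A = [
    {"t2d": True, "variant": True, "hba1c": 7.0},
    {"t2d": True, "variant": False, "hba1c": 6.5},
    {"t2d": True, "variant": True, "hba1c": 8.0},
]
SITE_B = [
    {"t2d": True, "variant": True, "hba1c": 7.5},
    {"t2d": False, "variant": True, "hba1c": 5.5},
]

def local_summary(records):
    """Runs inside a site: returns only a count and a sum, no row-level data."""
    matched = [r["hba1c"] for r in records if r["t2d"] and r["variant"]]
    return {"n": len(matched), "hba1c_sum": sum(matched)}

def combine(summaries):
    """Runs at the coordinator: merges per-site aggregates into one answer."""
    n = sum(s["n"] for s in summaries)
    total = sum(s["hba1c_sum"] for s in summaries)
    return {"n": n, "mean_hba1c": total / n if n else None}

result = combine([local_summary(SITE_A), local_summary(SITE_B)])
print(result)  # {'n': 3, 'mean_hba1c': 7.5}
```

The coordinator can report the cohort size and the pooled mean without ever seeing an individual patient’s value.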

Navigating Risks: Ethics, Privacy, and Human Oversight

While the benefits are massive, AI powered research is not without its pitfalls. As we integrate these tools into the scientific method, we must address the “elephant in the room”: data privacy, accuracy, and the potential for algorithmic bias.

- Hallucination: AI models, particularly LLMs, can sometimes confidently present false information or “hallucinate” citations that do not exist. This is why tools grounded in specific databases (like Consensus or Elicit) are safer than general-purpose chatbots.

- Data Privacy: Sending sensitive research data, proprietary formulas, or patient information to public LLMs can violate institutional policies and international laws like GDPR or HIPAA.

- Algorithmic Bias: If the training data for an AI is biased—for example, if it primarily includes studies on Western populations—the research suggestions and summaries will reflect that bias, potentially leading to skewed scientific conclusions.

- The Black Box Problem: Many AI models are “black boxes,” meaning it’s difficult to understand how they reached a specific conclusion. In science, the “how” is often as important as the “what.”

Check our AI Clinical Research Guide 2025 for a detailed breakdown of these risks in regulated environments.

Secure Data Access and Governance

When dealing with biomedical data, security is paramount. Modern platforms use “de-identified” data and strict retention policies to mitigate risk. For instance, OpenAI’s API data usage policies state that data sent via API is not used for training by default and is retained for up to 30 days. However, for many institutions, even this is not enough.

At Lifebit, we advocate for the use of “Honest Brokers”—automated systems that manage data queries so that researchers can understand data lineage and patterns without ever accessing identifiable information. This approach ensures that the data remains within its original secure environment (data residency) while the “insights” are what move. Our Clinical Research AI Complete Guide explains how this governance framework protects both patients and researchers from data breaches.

The Necessity of Human Oversight and the Reproducibility Crisis

AI is a co-pilot, not the captain. Always verify AI-generated claims against primary sources. The most effective researchers use AI to find the “needle in the haystack” but use their own critical thinking to analyze the needle. Ethical compliance requires that the human researcher remains responsible for the final output.

There is also a growing concern regarding the “reproducibility crisis” in AI-assisted research. If an AI tool generates a summary or a data visualization today, will it generate the exact same one tomorrow? Researchers must document their AI prompts and the specific versions of the tools used to ensure that their work can be audited and reproduced by others in the scientific community.
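One lightweight way to document prompts and tool versions is to write a small provenance record for every AI-assisted step. The sketch below is one possible shape for such a record, not a standard; the field names, the `provenance_record` function, and the tool name and version are hypothetical.

```python
import hashlib
import json
from datetime import datetime, timezone

def provenance_record(tool, version, prompt, output):
    """Build a JSON-serializable audit entry for one AI-assisted step."""
    return {
        "tool": tool,
        "version": version,
        "prompt": prompt,
        # Hash the output so later readers can check it was not altered.
        "output_sha256": hashlib.sha256(output.encode("utf-8")).hexdigest(),
        "timestamp_utc": datetime.now(timezone.utc).isoformat(),
    }

record = provenance_record(
    tool="example-summarizer",  # hypothetical tool name
    version="1.2.0",            # hypothetical version string
    prompt="Summarize the methods section in three sentences.",
    output="The study enrolled adult participants and measured recall.",
)
print(json.dumps(record, indent=2))
```

Storing these records alongside your analysis code gives reviewers a trail to follow even when the tool itself produces a different answer tomorrow.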

Best Practices for Responsible AI Implementation

To truly supercharge your findings and maintain the highest standards of scientific integrity, follow these best practices for AI powered research:

- Verify Everything: Never copy-paste an AI summary without cross-referencing it with the original PDF. Use the AI to locate the information, but use the source to validate it.

- Use Specialized Tools: Choose tools like scite that provide citation context (supporting vs. contrasting) rather than relying only on general LLMs like ChatGPT, which are not grounded by default in a curated index of the scientific literature.

- Prioritize FAIR Data: Ensure your data is Findable, Accessible, Interoperable, and Reusable. AI thrives on FAIR data; the better structured your input, the more accurate the AI’s output will be.

- Disclose AI Usage: Many journals now require a statement disclosing which AI tools were used during the research and writing process. Transparency is key to maintaining trust.

Explore our insights on AI in Drug Discovery to see these practices in action.

Building a Scalable Research Infrastructure

For large-scale projects, you need more than just a browser extension or a standalone app. You need a Trusted Research Environment (TRE). A TRE provides a secure, cloud-aligned workspace where teams can collaborate on sensitive data without the risk of data egress. This infrastructure is essential for AI-Driven Drug Discovery, where security and scalability must go hand-in-hand.

A robust TRE allows for the integration of various AI models directly into the data environment. This means you can bring the model to the data, rather than moving the data to the model. This “federated” approach is the gold standard for modern, large-scale genomic and clinical research.

Optimizing the Deep Learning Stack

For the tech-savvy researcher, the “under the hood” details matter. The performance of AI tools depends on the underlying architecture. Models like DBRX use a “sparse mixture-of-experts” (MoE) architecture, in which only a subset of the model’s experts runs for each token. This allows for high-quality outputs with 36B active parameters (out of 132B total) while remaining computationally efficient.
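The core trick in a sparse mixture-of-experts layer is routing: a small router scores every expert, but only the top few actually run. Here is a toy, pure-Python sketch of top-k routing in general; it is not DBRX’s implementation, and the scalar “experts,” the router scores, and the function names are hypothetical stand-ins for what would be neural network layers in a real model.

```python
import math

def softmax(scores):
    """Numerically stable softmax over a list of floats."""
    m = max(scores)
    exps = [math.exp(s - m) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]

def route_top_k(router_scores, k):
    """Pick the k highest-scoring experts and renormalize their weights."""
    probs = softmax(router_scores)
    top = sorted(range(len(probs)), key=lambda i: probs[i], reverse=True)[:k]
    kept = sum(probs[i] for i in top)
    return {i: probs[i] / kept for i in top}

def moe_output(x, experts, router_scores, k=2):
    """Weighted sum of the selected experts' outputs; the others never run."""
    weights = route_top_k(router_scores, k)
    return sum(w * experts[i](x) for i, w in weights.items())

# Hypothetical toy experts: each one is just a scalar function.
experts = [lambda x: x + 1, lambda x: 2 * x, lambda x: x * x, lambda x: -x]
scores = [0.1, 2.0, 2.0, -1.0]  # hypothetical router scores for one input

print(route_top_k(scores, k=2))            # experts 1 and 2, weight 0.5 each
print(moe_output(3.0, experts, scores))    # 0.5 * 6.0 + 0.5 * 9.0 = 7.5
```

Because only `k` of the experts execute per input, compute cost tracks the “active” parameters rather than the total parameter count.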

Tools like LLM Foundry and Composer allow researchers to fine-tune these models on their own specific datasets—such as a library of rare disease papers or proprietary chemical structures. This fine-tuning optimizes performance for niche scientific domains where general models might struggle with specialized terminology or unique data formats. By building a custom “knowledge base” on top of a foundation model, researchers can create a bespoke AI assistant that understands their specific field better than any general tool.

Frequently Asked Questions about AI Research

How do researchers access and pay for these AI tools?

Most tools offer a “Freemium” model designed to lower the barrier to entry. Semantic Scholar is completely free, supported by the Allen Institute for AI. Tools like Consensus and scite offer free tiers with limited searches, alongside paid subscriptions for advanced features like API access, unlimited searches, or integration with reference managers. Many top-tier universities and research institutions now provide enterprise-level subscriptions for their faculty and students, recognizing these tools as essential infrastructure.

What are the primary data sources for AI research assistants?

Most academic AI tools pull from massive, interconnected open databases. Semantic Scholar and OpenAlex are the “big two” that power many other apps, containing metadata for over 200 million papers. These are often supplemented by specialized repositories like PubMed (for medicine), JSTOR (for humanities), and the user’s own uploaded PDF libraries. Some tools also crawl preprint servers like bioRxiv and medRxiv to provide the very latest, though not yet peer-reviewed, findings.

How does AI handle sensitive biomedical data?

In a professional AI powered research environment, data is never “shared” in the traditional sense. Instead, we use federated platforms where the AI “travels” to the data. Sensitive info is de-identified (removing names, IDs, and specific dates), and “secure enclaves” ensure that even the AI provider cannot see the raw, identifiable data. This allows for global collaboration on sensitive topics like rare diseases or pandemic response without compromising individual patient privacy.
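As a rough illustration of the de-identification step described above, the sketch below drops direct identifiers, replaces the patient ID with a salted pseudonym, and shifts dates by a fixed offset. It is a teaching example under simplifying assumptions, not a compliance-grade procedure; the field names, the `deidentify` function, the salt, and the shift value are all hypothetical.

```python
import hashlib
from datetime import date, timedelta

# Hypothetical set of fields treated as direct identifiers.
DIRECT_IDENTIFIERS = {"name", "patient_id"}

def deidentify(record, salt, shift_days):
    """Drop direct identifiers, add a salted pseudonym, and shift dates."""
    clean = {}
    for key, value in record.items():
        if key in DIRECT_IDENTIFIERS:
            continue  # names and raw IDs are removed entirely
        if isinstance(value, date):
            value = value - timedelta(days=shift_days)  # obscure exact dates
        clean[key] = value
    digest = hashlib.sha256((salt + str(record["patient_id"])).encode("utf-8"))
    clean["pseudonym"] = digest.hexdigest()[:12]
    return clean

record = {
    "name": "Jane Doe",
    "patient_id": "P-001",
    "admitted": date(2024, 3, 15),
    "hba1c": 7.2,
}
print(deidentify(record, salt="keep-this-secret", shift_days=10))
```

Using the same salt and shift for all of a patient’s records keeps them linkable to each other for analysis while hiding who the patient is.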

Can AI replace the peer-review process?

While AI is being used to assist peer reviewers by checking for statistical errors, image manipulation, or plagiarism, it cannot replace the human judgment required to assess the novelty and significance of a study. AI is a tool for verification and synthesis, but the final evaluation of scientific merit remains a human responsibility.

Is AI-generated content considered plagiarism?

If an AI generates text and a researcher presents it as their own without attribution, it is generally considered a form of academic misconduct. However, using AI to summarize papers or help draft sections based on the researcher’s own data is increasingly accepted, provided the use of the tool is disclosed. Most journals have specific guidelines on AI-authored text; always check the “Instructions for Authors” before submitting.

Conclusion: The Future is Federated

The era of siloed, manual research is over. AI powered research is the key to unlocking the mysteries of our universe—from ending disease to exploring new frontiers. By integrating tools that automate discovery, synthesize complex evidence, and secure sensitive data, we can finally keep pace with the explosion of scientific knowledge.

At Lifebit, we believe the most powerful breakthroughs happen when researchers can access global data securely. Our federated AI platform enables real-time access to multi-omic data, powering large-scale research and pharmacovigilance without moving a single byte of sensitive information.

Ready to take your research to the next level? Supercharge your findings with Lifebit Federation and join the thousands of researchers at top institutions who are already redefining what’s possible.