Why every lab needs an AI-powered pharmacovigilance monitoring system

The Data Tidal Wave: Why Traditional Safety Monitoring is Failing

AI-powered pharmacovigilance monitoring is the use of artificial intelligence — including machine learning, NLP, and generative AI — to automate and enhance drug safety surveillance across the entire post-market lifecycle.

Here is what it does in practice:

- Processes adverse event reports from millions of structured and unstructured sources automatically

- Detects safety signals faster and with fewer false positives than manual review

- Monitors literature, social media, and EHRs in real time, not in batches

- Automates case intake, MedDRA coding, and narrative generation to reduce manual workload

- Flags emerging risks before they reach critical thresholds

The stakes are high. In 2010, the FDA’s FAERS database received around 672,000 adverse event reports. By 2024, that number had exploded past 20 million. Meanwhile, experts estimate that 90% of adverse events still go unreported through traditional spontaneous reporting channels. This phenomenon, often referred to as the “under-reporting iceberg,” means that for every serious side effect captured in a database, nine others may be circulating in patient forums, physician notes, or social media threads, invisible to regulators.

Manual processes were not built for this volume. They were not built for unstructured data pouring in from patient forums, call centers, and electronic health records either. The gap between the data that exists and the data that gets acted on is widening, and that gap costs lives.

Historically, pharmacovigilance was a reactive discipline, born out of tragedies like the thalidomide crisis of the 1960s. For decades, the industry relied on the “Yellow Card” system or similar spontaneous reporting forms. While these were revolutionary at the time, they are fundamentally ill-equipped for the era of Big Data. Today, a single pharmaceutical company might receive over a million Individual Case Safety Reports (ICSRs) annually. Processing these manually requires an army of safety scientists, yet the sheer volume ensures that subtle, low-frequency signals are often missed until they become widespread public health issues.

AI does not just speed things up. It fundamentally changes what is possible to monitor, at what scale, and how fast. It allows us to move from a sampling-based approach to a comprehensive, population-wide surveillance model.

I’m Dr. Maria Chatzou Dunford, CEO and Co-founder of Lifebit, and over 15 years of work in computational biology, federated data platforms, and AI-driven health research has shown me why AI-powered pharmacovigilance monitoring is no longer optional for organizations serious about drug safety. In the sections ahead, I’ll walk you through exactly how these systems work, what the evidence says about their impact, and what responsible adoption actually looks like.

For decades, pharmacovigilance (PV) relied on a relatively simple, albeit reactive, model: a patient or physician noticed a problem, filled out a form, and sent it to a regulator or manufacturer. This “spontaneous reporting” system served us well in the 20th century, but it is buckling under the weight of the 21st. The complexity of modern medicine, including biologics, gene therapies, and personalized treatments, means that adverse events are no longer just “rashes” or “headaches”; they are complex interactions involving genomic markers and multi-drug regimens.

The numbers are staggering. As mentioned, the FDA’s Adverse Event Reporting System (FAERS) has seen a near-exponential rise in volume. We’ve gone from roughly 672,640 reports in 2010 to over 20 million in 2024. This isn’t just because more people are getting sick; it’s because we are finally looking in the right places—social media, patient support programs, and electronic health records (EHRs). However, looking is not the same as seeing. When 90% of adverse events (AEs) go unreported in traditional systems, we are essentially trying to navigate an iceberg by looking only at the tip. Traditional PV is plagued by manual bottlenecks where highly trained safety scientists spend 60% to 70% of their time on administrative data entry rather than the actual “science of safety.”

This is where scientific research on AI in pharmacovigilance highlights a critical shift. We are moving from a world of “batch processing,” where reports are reviewed in weekly or monthly cycles, to a world of real-time pharmacovigilance. Without AI, your lab is essentially trying to drink from a firehose while using a cocktail straw. The transition to AI-powered systems allows for the ingestion of “Dark Data”: information in medical journals, conference abstracts, and patient forums that was previously too labor-intensive to monitor systematically.

Core Technologies Powering AI-Powered Pharmacovigilance Monitoring

If the data is the firehose, AI is the advanced filtration system. We use a combination of technologies to make sense of this “tidal wave.” The architecture of a modern PV system is multi-layered, involving specialized algorithms designed to handle the nuances of medical terminology and the noise of real-world data.

- Natural Language Processing (NLP): This is the workhorse of PV. It allows computers to “read” unstructured text—like a doctor’s handwritten note or a frantic tweet—and extract key entities like the drug name, the dosage, and the suspected reaction. Advanced NLP models, such as those based on the Transformer architecture (like BERT or BioBERT), are trained specifically on medical corpora to understand that “MI” stands for Myocardial Infarction in a cardiology report, not Michigan. These models can perform Named Entity Recognition (NER) and Relation Extraction to link a specific drug to a specific adverse event within a complex sentence.

- Generative AI (GenAI) and Large Language Models (LLMs): These go a step further. They don’t just extract data; they can summarize thousands of pages of medical literature or draft a coherent case narrative that meets regulatory standards. GenAI can take raw, fragmented data points from an ICSR and weave them into a clinical summary that a human reviewer can quickly validate. This reduces the “blank page” problem for safety scientists, allowing them to act as editors rather than authors.

- Pattern Recognition and Machine Learning (ML): These algorithms look for “disproportionality,” a statistical signal that a specific drug-event pair is reported more often than would be expected by chance. A disproportionality signal flags a reporting pattern for review; it does not by itself show that the drug caused the event. Traditional methods like the Proportional Reporting Ratio (PRR) are being augmented by Bayesian neural networks that can account for confounding factors, such as the patient’s age, underlying conditions, and concomitant medications.
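To make the disproportionality idea concrete, here is a minimal sketch of the Proportional Reporting Ratio computed from a 2x2 table of report counts, with an approximate 95% confidence interval on the log scale. The function names and the example counts are invented for illustration; they do not come from FAERS or from any real product.

```python
import math
from typing import Tuple


def prr(a: int, b: int, c: int, d: int) -> float:
    """Proportional Reporting Ratio from a 2x2 table of report counts.

    a: reports with the drug of interest AND the event of interest
    b: reports with the drug of interest and any other event
    c: reports with any other drug AND the event of interest
    d: reports with any other drug and any other event
    """
    return (a / (a + b)) / (c / (c + d))


def prr_ci95(a: int, b: int, c: int, d: int) -> Tuple[float, float]:
    """Approximate 95% confidence interval, computed on the log scale."""
    se = math.sqrt(1 / a - 1 / (a + b) + 1 / c - 1 / (c + d))
    point = prr(a, b, c, d)
    return point * math.exp(-1.96 * se), point * math.exp(1.96 * se)


# Invented counts, for illustration only.
a, b, c, d = 20, 980, 100, 98_900
low, high = prr_ci95(a, b, c, d)
print(f"PRR = {prr(a, b, c, d):.1f}, 95% CI = ({low:.1f}, {high:.1f})")
```

With these made-up counts the PRR is about 19.8. Many teams treat a lower confidence bound above 1 as a reason to look closer, but the threshold is a policy choice, not a property of the formula.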

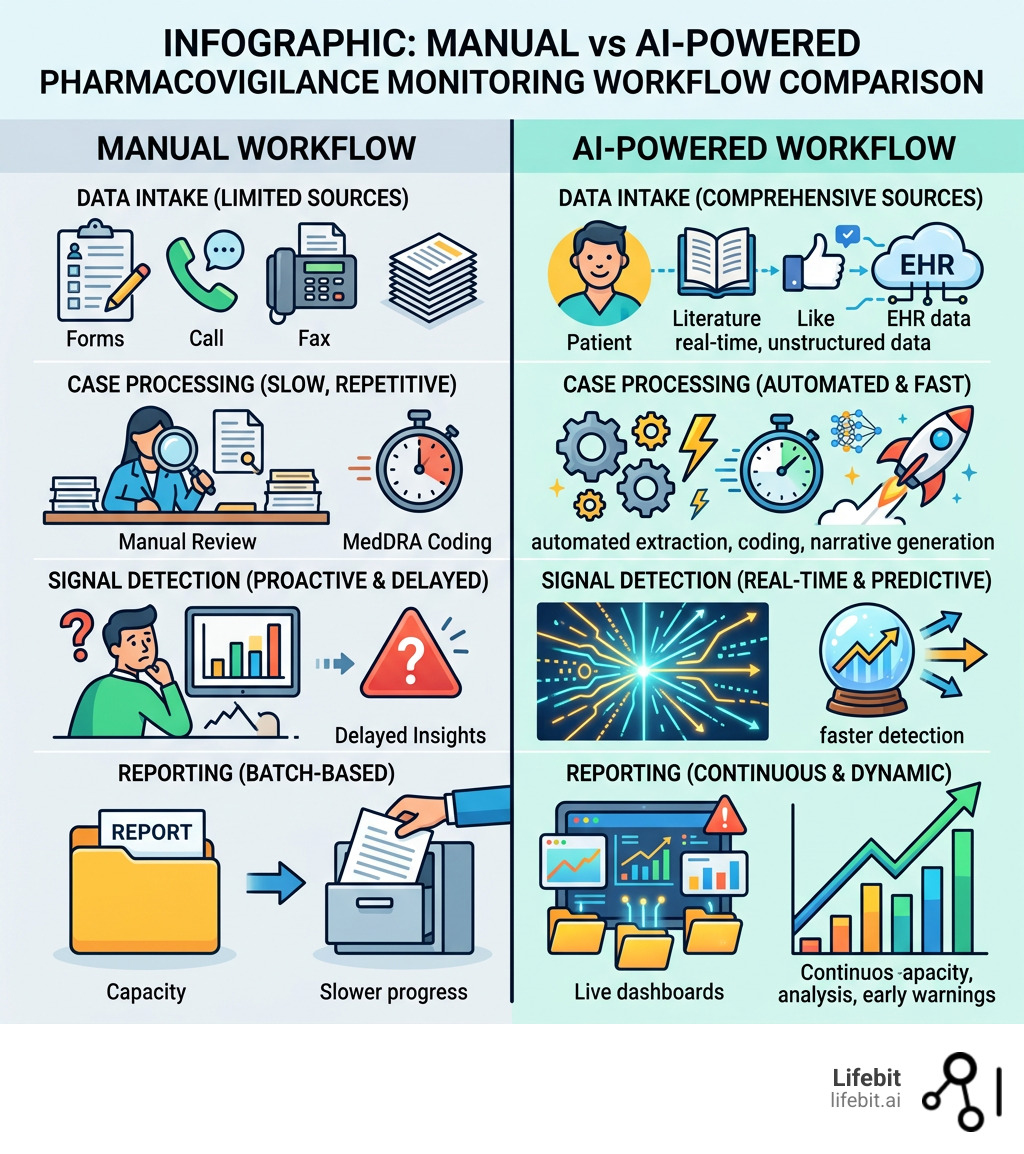

To understand the impact, look at how the workflow changes:

| Task | Manual Workflow | AI-Automated Workflow |
|---|---|---|
| Case Intake | Hours of manual data entry from PDFs/emails | Seconds; AI extracts data directly from source |
| Literature Review | Weekly manual searches and reading | Daily automated scans with 50% time reduction |
| MedDRA Coding | Human lookup of thousands of medical terms | Instantaneous, high-accuracy auto-coding |
| Signal Detection | Reactive review of batch reports | Proactive, real-time monitoring of global data |
| Narrative Writing | 30-60 minutes per complex case | 2-5 minutes for AI draft + human review |
| Data Cleaning | Manual reconciliation of duplicate reports | Automated deduplication using fuzzy matching |
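The “fuzzy matching” in the Data Cleaning row can be sketched with nothing more than Python’s standard library. This is an illustrative assumption about how such a step might look, not a description of any particular product: the field names (`id`, `drug`, `event`, `onset`), the 0.85 threshold, and the sample reports are all hypothetical.

```python
from difflib import SequenceMatcher
from itertools import combinations
from typing import Dict, List, Tuple


def report_key(report: Dict[str, str]) -> str:
    """Join the compared fields into one lowercase string."""
    return "|".join(report[f] for f in ("drug", "event", "onset")).lower()


def likely_duplicates(
    reports: List[Dict[str, str]], threshold: float = 0.85
) -> List[Tuple[str, str]]:
    """Return ID pairs whose keys are at least `threshold` similar."""
    pairs = []
    for left, right in combinations(reports, 2):
        score = SequenceMatcher(None, report_key(left), report_key(right)).ratio()
        if score >= threshold:
            pairs.append((left["id"], right["id"]))
    return pairs


# Hypothetical reports: the first two describe the same case with small typos.
reports = [
    {"id": "A1", "drug": "Atorvastatin 20 mg", "event": "muscle pain", "onset": "2024-03-01"},
    {"id": "B7", "drug": "atorvastatin 20mg", "event": "muscle pains", "onset": "2024-03-01"},
    {"id": "C3", "drug": "Metformin 500 mg", "event": "nausea", "onset": "2024-05-12"},
]
print(likely_duplicates(reports))
```

Comparing every pair is fine for a demonstration but scales quadratically. A real pipeline would typically group reports by product or date first and leave borderline pairs for a human to reconcile.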

For a deeper dive into these mechanics, check out our AI for pharmacovigilance ultimate guide.

Automating Case Intake with AI-Powered Pharmacovigilance Monitoring

The most labor-intensive part of PV is often the initial intake. A single case might involve a 50-page hospital discharge summary, three lab reports, and a physician’s email. AI-powered pharmacovigilance monitoring uses NLP to scan these “unstructured” documents and automatically populate the safety database. This process, known as “Auto-extraction,” involves identifying the four pillars of a valid safety report: an identifiable patient, an identifiable reporter, a suspect product, and an adverse event.
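Once the NLP step has produced candidate fields, the four-pillar check itself is simple to express. The sketch below assumes a hypothetical `ExtractedCase` record filled in by an upstream extractor; the class and function names are ours, for illustration only.

```python
from dataclasses import dataclass
from typing import List, Optional


@dataclass
class ExtractedCase:
    """Fields an upstream extractor might populate (hypothetical schema)."""
    patient: Optional[str] = None
    reporter: Optional[str] = None
    suspect_product: Optional[str] = None
    adverse_event: Optional[str] = None


# Maps each field to the criterion it satisfies, in reporting order.
CRITERIA = {
    "patient": "identifiable patient",
    "reporter": "identifiable reporter",
    "suspect_product": "suspect product",
    "adverse_event": "adverse event",
}


def missing_criteria(case: ExtractedCase) -> List[str]:
    """List the minimum criteria that are absent or blank."""
    return [
        label
        for field_name, label in CRITERIA.items()
        if not (getattr(case, field_name) or "").strip()
    ]


def is_valid_report(case: ExtractedCase) -> bool:
    """A report is valid only when all four criteria are present."""
    return not missing_criteria(case)
```

A case with gaps would be routed to follow-up rather than discarded, so returning the list of missing criteria is more useful than a bare true/false.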

One of the most exciting developments is in narrative generation. Writing the “story” of an adverse event is a high-skill task. Recent pilots show that LLMs can improve the efficiency of creating these narratives by nearly 30% for standard cases and up to 48% for complex ones. This allows your safety team to focus on the interpretation of the event rather than the typing of it. This is the essence of Drug safety AI. Furthermore, AI can assist in MedDRA (Medical Dictionary for Regulatory Activities) coding. MedDRA contains over 80,000 terms; AI can suggest the most appropriate Lowest Level Term (LLT) based on the clinical context, significantly reducing the variability between different human coders.

Enhancing Signal Detection through AI-Powered Pharmacovigilance Monitoring

Signal detection is the “detective work” of drug safety. Traditionally, this involved looking at internal databases. Today, we must cast a wider net. The challenge is that signals are often buried in “noise.” For example, if a drug is known to cause nausea, how do you detect if it is also causing a rare liver enzyme elevation in a specific sub-population?

AI allows us to monitor social media, where patients often discuss side effects long before they tell their doctors. It allows us to scan EHRs and insurance claims data to see how drugs perform in the “real world,” in patients with multiple conditions who weren’t included in clinical trials. With the approach described in our AI pharmacovigilance guide 2025, labs can identify a potential safety signal in less than 24 hours, rather than waiting weeks for a pattern to emerge in spontaneous reports. This proactive approach is particularly vital for “orphan drugs” or specialized therapies where the patient population is small and every single data point is critical for understanding the safety profile.

Efficiency Gains: Measuring the Impact of AI in the Lab

We often get asked: “Does this actually work in a real-world lab setting?” The data says yes, emphatically. The implementation of AI is not just a marginal improvement; it represents a step-change in operational capacity. When we look at the Return on Investment (ROI) for AI in pharmacovigilance, we look at three pillars: speed, quality, and cost.

- Literature Review: AI tools have demonstrated a 50% reduction in the time required to screen medical journals for safety reports. In a traditional setting, a safety scientist might spend hours every Monday morning scrolling through PubMed or Embase. AI does this 24/7, flagging only the relevant articles for human review and automatically extracting the ICSR data from the abstract.

- Expectedness Assessment: When a new AE comes in, we have to check if it’s already on the drug’s label (the Investigator’s Brochure or the Summary of Product Characteristics). AI tools can reduce the time spent on this by 30% while simultaneously cutting errors by 60%. By cross-referencing the reported event against a digital library of product labels, the AI ensures that “unexpected” events are escalated immediately.

- Case Processing: Some organizations have reported an 80% improvement in overall case processing efficiency when moving to an AI-first model. This doesn’t mean they fired 80% of their staff; it means their staff could finally handle the 300% increase in report volume without burning out or missing critical deadlines.
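The expectedness check described in the list above boils down to a lookup against the product’s labeled events. Here is a minimal sketch, assuming a hypothetical in-memory “label library” keyed by product name; the product and the terms are placeholders, not real labeling.

```python
from typing import Dict, Set


def normalize(term: str) -> str:
    """Lowercase and collapse whitespace so trivial variants match."""
    return " ".join(term.lower().split())


def assess_expectedness(product: str, event: str, labels: Dict[str, Set[str]]) -> str:
    """Classify an event as expected or unexpected for a product."""
    listed = labels.get(product)
    if listed is None:
        return "no label on file"
    known = {normalize(t) for t in listed}
    return "expected" if normalize(event) in known else "unexpected"


# Hypothetical label library for a placeholder product.
label_library = {"Drug X": {"Nausea", "Headache", "Dizziness"}}

print(assess_expectedness("Drug X", "  nausea ", label_library))
print(assess_expectedness("Drug X", "Hepatic enzyme increased", label_library))
```

Exact string matching is deliberately naive. In practice the comparison would be made on coded terms and reviewed by a safety professional, since a reported event can be more specific or more severe than what the label lists.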

Beyond just “going faster,” AI increases the quality of the data. Human beings get tired. After reviewing 500 reports, a reviewer might overlook a “missed pregnancy” report or a subtle drug-drug interaction. AI doesn’t get tired. It applies the same criteria to the first report and the five-hundredth, around the clock. As explored in research on AI opportunities and challenges in drug safety, the goal isn’t just to save money. It’s to ensure that not a single “needle” is lost in the data haystack.

Furthermore, the cost of a single late regulatory submission can be astronomical, ranging from heavy fines to the suspension of marketing authorization. AI acts as a fail-safe, tracking submission deadlines in real time and ensuring that “7-day expedited reports” for fatal or life-threatening suspected unexpected serious adverse reactions (SUSARs) are prioritized and processed without delay. This level of operational rigor is simply impossible to maintain manually at the current scale of global drug distribution.
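Deadline tracking is one of the easier pieces to illustrate. The sketch below assumes the commonly used clocks of 7 calendar days for fatal or life-threatening cases and 15 calendar days for other serious unexpected cases, counted from the day the organization becomes aware of the case. Those numbers and the `Case` fields are assumptions for this example; confirm the timelines against the regulations that apply to you.

```python
from datetime import date, timedelta
from typing import List, NamedTuple


class Case(NamedTuple):
    case_id: str
    awareness_date: date
    fatal_or_life_threatening: bool


def due_date(case: Case) -> date:
    """Reporting deadline under the assumed 7-day and 15-day clocks."""
    days = 7 if case.fatal_or_life_threatening else 15
    return case.awareness_date + timedelta(days=days)


def work_queue(cases: List[Case]) -> List[Case]:
    """Order cases so the earliest deadline is handled first."""
    return sorted(cases, key=due_date)


cases = [
    Case("C1", date(2024, 3, 1), fatal_or_life_threatening=False),
    Case("C2", date(2024, 3, 5), fatal_or_life_threatening=True),
]
for case in work_queue(cases):
    print(case.case_id, due_date(case).isoformat())
```

Sorting by due date rather than arrival date is the point: the later-arriving case C2 jumps ahead of C1 because its clock is shorter.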

Responsible Adoption: Governance and Regulatory Compliance

With great power comes great regulatory scrutiny. You cannot simply “turn on” an AI and walk away. Organizations like the FDA, EMA, and CIOMS have been very clear: the human must remain in the loop. The regulatory framework for AI in PV is evolving rapidly, with the EMA’s “Reflection paper on the use of artificial intelligence in the medicinal product lifecycle” providing a roadmap for how these technologies should be validated.

At Lifebit, we advocate for a risk-based approach to AI adoption. This means:

- Human-in-the-Loop (HITL): AI suggests the MedDRA code or drafts the narrative, but a qualified safety professional must review and “sign off” on the final decision. The AI acts as a co-pilot, providing the evidence and the draft, while the human provides the clinical judgment and accountability. This is especially critical for causality assessment, where the AI might find a correlation, but the human must determine if the drug actually caused the event.

- Transparency and Explainability (XAI): You must be able to show why the AI made a certain decision. “Black box” algorithms are a no-go in drug safety. If an AI flags a signal, it must be able to point to the specific data points—the specific sentences in a medical journal or the specific clusters in a database—that led to that conclusion. This “audit trail” is essential for regulatory inspections.

- Validation: AI systems must be validated just like any other GxP (Good Practice) system. This includes side-by-side testing against human experts to ensure the AI’s “clinical judgment” is sound. This process, often called Computer System Validation (CSV), involves rigorous testing of the algorithm’s performance across different datasets to ensure it doesn’t exhibit bias or drift over time.

- Data Privacy: With the rise of GDPR and similar laws, protecting patient identity is paramount. This is why our Pharmacovigilance compliance solution focuses on federated AI—bringing the analysis to the data, rather than moving sensitive patient data across borders. Federated learning allows an AI model to be trained on data from multiple hospitals or countries without the raw data ever leaving its original, secure location. This solves the “data residency” problem that has long hindered global safety monitoring.
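The human-in-the-loop and explainability points above can be combined in a single record: the AI’s suggestion, the evidence it points to, and the reviewer who accepted or overrode it. This is a minimal sketch with hypothetical names, not a validated GxP design.

```python
from dataclasses import dataclass
from typing import List, Optional


@dataclass
class CodingSuggestion:
    """An AI suggestion that stays provisional until a human signs off."""
    case_id: str
    suggested_term: str
    evidence: List[str]              # source sentences the model relied on
    reviewer: Optional[str] = None
    final_term: Optional[str] = None

    @property
    def is_final(self) -> bool:
        return self.reviewer is not None

    def sign_off(self, reviewer: str, final_term: Optional[str] = None) -> None:
        """Record the human decision; the reviewer may override the term."""
        if not self.evidence:
            raise ValueError("no supporting evidence recorded; cannot review")
        self.reviewer = reviewer
        self.final_term = final_term or self.suggested_term


suggestion = CodingSuggestion(
    case_id="CASE-001",
    suggested_term="Nausea",
    evidence=["Patient reported feeling sick after the second dose."],
)
suggestion.sign_off(reviewer="reviewer_a")
print(suggestion.is_final, suggestion.final_term)
```

Refusing sign-off when no evidence is attached is one way to make the audit trail a precondition rather than an afterthought.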

Adhering to GVP (Good Pharmacovigilance Practices) Module IX is no longer just about having a process; it’s about having a verifiable process. AI provides the digital footprint necessary to prove to regulators that every report was screened, every signal was evaluated, and every decision was based on the totality of available evidence.

Frequently Asked Questions about AI in Drug Safety

Can AI fully replace human safety experts in pharmacovigilance?

Absolutely not. While AI is incredible at pattern recognition and data extraction, it lacks “clinical common sense.” It can flag a correlation, but it takes a human expert to determine causality. For instance, an AI might notice a spike in reports of “dizziness” for a drug, but a human expert might realize that the spike coincides with a change in the drug’s packaging that makes it harder to open, causing patient frustration rather than a physiological side effect. Our goal is to augment the expert, not replace them—turning safety scientists into “safety pilots” who oversee automated systems.

How does AI handle data privacy and GDPR compliance?

This is a major headwind for many labs. Traditional AI requires moving data to a central server, which is a nightmare for GDPR and HIPAA compliance. Lifebit solves this through a federated architecture. We send the “questions” (the algorithms) to where the data lives (the hospital or the national database). The data never moves, but the insights do. This ensures that Personally Identifiable Information (PII) remains behind the hospital’s firewall while still contributing to global safety knowledge.
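To illustrate the “send the question, not the data” idea in its simplest form, the sketch below has each site compute a 2x2 table of counts locally and share only those four numbers with a coordinator. This is federated aggregation rather than full federated model training, and it is a toy illustration with hypothetical record fields, not a description of Lifebit’s implementation.

```python
from typing import Dict, Iterable, List, Tuple

Counts = Tuple[int, int, int, int]  # (a, b, c, d) cells of the 2x2 table


def local_counts(records: Iterable[Dict[str, str]], drug: str, event: str) -> Counts:
    """Runs inside a site: raw records never leave this function."""
    a = b = c = d = 0
    for record in records:
        on_drug = record["drug"] == drug
        had_event = record["event"] == event
        if on_drug and had_event:
            a += 1
        elif on_drug:
            b += 1
        elif had_event:
            c += 1
        else:
            d += 1
    return a, b, c, d


def pool(site_counts: List[Counts]) -> Counts:
    """Runs at the coordinator: sums the per-site aggregates cell by cell."""
    a, b, c, d = (sum(cell) for cell in zip(*site_counts))
    return a, b, c, d


# Hypothetical records held at two separate sites.
site_a = [
    {"drug": "X", "event": "rash"},
    {"drug": "X", "event": "nausea"},
    {"drug": "Y", "event": "rash"},
]
site_b = [
    {"drug": "Y", "event": "nausea"},
    {"drug": "X", "event": "rash"},
]
print(pool([local_counts(site_a, "X", "rash"), local_counts(site_b, "X", "rash")]))
```

Even aggregate counts can be revealing when they are very small, so a real deployment would add safeguards such as minimum cell sizes before anything leaves a site.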

What are the main challenges to implementing AI in safety workflows?

The biggest hurdles are often cultural and technical. Legacy IT systems are frequently “siloed,” making it hard for AI to access the data it needs. There is also a “trust gap”—safety professionals need to see the AI work consistently before they are comfortable relying on it. Start with small, high-impact pilots (like literature review) to build that trust. Another challenge is “data quality”; if the input data is poor, the AI’s output will be poor. Cleaning and standardizing data before it enters the AI pipeline is a critical first step.

How does AI handle multi-lingual adverse event reports?

Modern NLP models are multi-lingual by design. They can process reports in Spanish, Mandarin, or French and map them to the English-based MedDRA hierarchy. This is a game-changer for global pharmaceutical companies who previously had to employ local translation services for every non-English report, adding days to the processing timeline.

Can AI detect signals in very small patient populations?

Yes. In fact, this is where AI shines. In rare diseases, where there might only be 500 patients globally, traditional statistical methods often fail because the sample size is too small. AI can use “transfer learning”—applying knowledge gained from larger, similar drug classes—to identify potential risks in small cohorts much earlier than traditional disproportionality analysis.

Conclusion: From Reactive to Proactive Safety

The future of drug safety isn’t just about reporting what happened; it’s about predicting what might happen. By 2030, we expect AI-powered pharmacovigilance monitoring to enable “in silico” safety trials—using digital twins and genomic data to predict adverse reactions before a drug is even tested in humans. Imagine a world where we can run a simulation of a new drug against 10 million “digital patients,” each with their own unique genetic profile and medical history, to identify who is at risk of a side effect before the first pill is ever pressed.

We are moving from a reactive “vigilance” model to a proactive “prevention” model. This shift will fundamentally change the relationship between pharmaceutical companies, regulators, and patients. Instead of a drug being “safe” or “unsafe” for the general population, we will understand its safety profile at the individual level. This is the promise of precision medicine, and it is only possible with a robust, AI-driven safety infrastructure.

Our Lifebit R.E.A.L. (Real-time Evidence & Analytics Layer) platform is designed for exactly this transition, providing the federated, secure infrastructure needed to monitor drug safety across global populations in real time. By breaking down data silos and enabling secure collaboration between researchers and clinicians, we are building the foundation for a more responsive healthcare system.

The data tidal wave is already here. You can either be swept away by it, or you can use AI to build a better, safer lighthouse. The transition may seem daunting, but the cost of staying with manual, legacy systems is far higher—both financially and in terms of patient safety.

AI-driven pharmacovigilance solutions are the new standard for patient protection. The technology has matured, the regulatory path is clearing, and the need has never been greater. Start a conversation with us today to explore how our AI-powered pharmacovigilance solutions can enhance your safety practices and help you move from simply managing data to truly protecting lives.