Your AI Powerhouse: Why Databricks is the Platform for Enterprise AI

Why Enterprise AI Needs a Unified Intelligence Platform

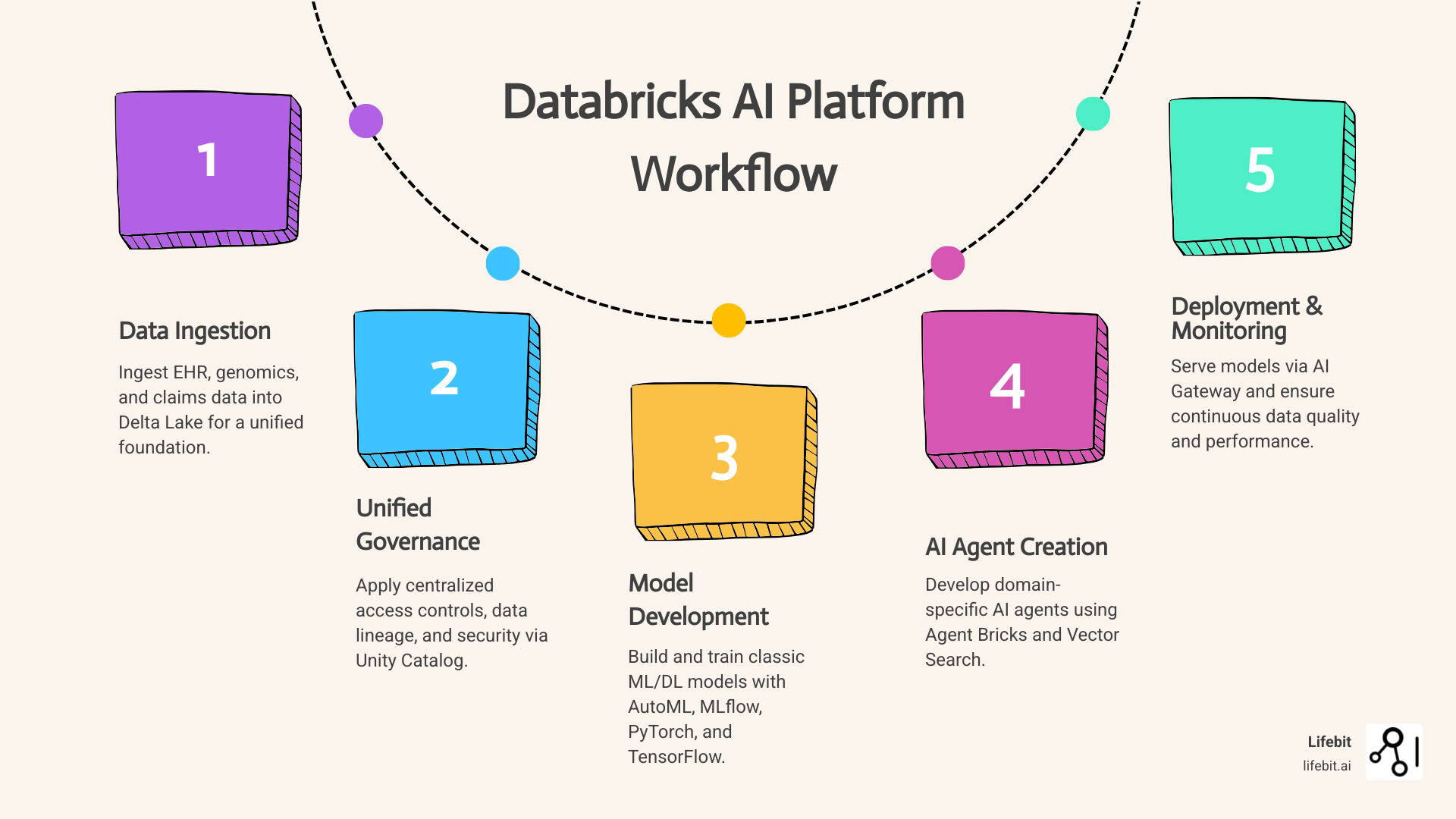

The Databricks AI platform is changing how global pharma, public sector, and research organizations build, deploy, and govern AI at scale. Unlike fragmented toolchains that force teams to juggle separate systems for data storage, model training, and deployment, Databricks unifies the entire AI lifecycle—from raw EHR and genomics data to production-ready AI agents—on a single lakehouse architecture.

What the Databricks AI Platform Delivers:

- Unified Data Intelligence: Lakehouse architecture combining data warehousing and advanced analytics with built-in governance

- End-to-End AI Lifecycle: Integrated tools for data prep, model training (AutoML, PyTorch, TensorFlow), deployment, and monitoring

- Generative AI at Scale: Agent Bricks, Vector Search, and AI Gateway for building domain-specific AI agents on proprietary data

- Enterprise Governance: Unity Catalog providing centralized access controls, data lineage, and FedRAMP-authorized security

- Proven ROI: Customers report 44% accuracy improvements, $10M productivity gains, and 10x cost reductions

The platform solves critical pain points for organizations struggling with siloed data, slow AI deployment cycles, and compliance bottlenecks. By eliminating the need to move data across systems, Databricks enables real-time pharmacovigilance, cohort analysis, and federated analytics—all while maintaining regulatory compliance.

Why this matters for biomedical research: Traditional AI platforms force you to choose between speed and security. The Databricks AI platform delivers both. With native support for multi-omic data integration, secure compute on sensitive patient records, and no-code tools that empower clinical teams alongside data scientists, it’s built for the complexity of modern healthcare and life sciences workflows.

As Dr. Maria Chatzou Dunford, CEO of Lifebit with over 15 years in computational biology and AI, I’ve seen how the Databricks AI platform accelerates precision medicine by unifying genomics pipelines, federated data analysis, and compliant AI deployment in one secure environment. Our partnership enables pharma and public sector organizations to move from variant discovery to AI-powered target identification without compromising patient privacy or data sovereignty.


Stop Juggling Tools: How to Cut AI Costs by 10x with One Unified Platform

In our experience working across 5 continents, from London to Singapore, the biggest barrier to AI success isn’t a lack of talent; it’s the “tool tax.” Most enterprises are buried under a mountain of disconnected subscriptions for data storage, compute, and model serving. The Databricks AI platform removes this complexity by offering a single, cohesive environment.

Databricks isn’t just a software company; it’s a powerhouse founded by the creators of Apache Spark. They reported more than $1.6 billion in revenue for the fiscal year ending January 31, 2024, and have been on an acquisition spree to solidify their lead. By acquiring MosaicML for $1.3 billion and the data-management companies Tabular and Neon for a reported $1 billion or so each, they’ve built what we consider the “Swiss Army Knife” of AI.

The secret sauce is the Data Intelligence Engine. Unlike generic AI that reads the public internet and hallucinates about your proprietary research, this platform understands the unique semantics of your data. It learns from your SQL queries, your pipelines, and your metadata to provide insights that are actually relevant to your business.

Unifying the Lifecycle with the Databricks AI Platform

We often tell our partners in Canada and the USA that AI is 10% model and 90% data plumbing. The Databricks AI platform handles that plumbing through its unified lifecycle tools:

- MLflow: The world’s leading open-source solution for MLOps. It tracks every experiment, so when you find a model that works, you can actually reproduce it.

- Unity Catalog: This is the “brain” of the operation. It provides a single permission model for structured and unstructured data, ensuring that your sensitive multi-omic data is only seen by those with the right clearances.

- Delta Lake: It brings ACID transactions to your data lake, meaning your data is always reliable, even if a massive ingestion job crashes halfway through.

By consolidating these into one platform, organizations avoid the “code rot” that happens when one tool updates and breaks the rest of the chain.
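To make the Delta Lake point concrete, here is a minimal sketch of the idea behind a transaction log: data files only become visible once a commit entry is published atomically, so a job that crashes halfway leaves nothing half-visible. This is plain standard-library Python, not Delta Lake’s actual implementation or file format; the `_log` directory, the `ingest` and `committed_files` helpers, and the `crash_after` switch are hypothetical names chosen for illustration.

```python
import json
import os
import tempfile


def committed_files(table_dir):
    """Return the data files referenced by published commits, in commit order."""
    log_dir = os.path.join(table_dir, "_log")
    files = []
    for name in sorted(os.listdir(log_dir)):
        with open(os.path.join(log_dir, name)) as fh:
            files.extend(json.load(fh)["add"])
    return files


def ingest(table_dir, version, batches, crash_after=None):
    """Write one data file per batch, then publish them all in a single commit."""
    written = []
    for i, rows in enumerate(batches):
        if crash_after is not None and i == crash_after:
            raise RuntimeError("simulated crash mid-ingestion")
        name = f"part-{version}-{i}.json"
        with open(os.path.join(table_dir, name), "w") as fh:
            json.dump(rows, fh)
        written.append(name)
    # Stage the commit entry outside the log, then move it in with one atomic rename.
    staged = os.path.join(table_dir, f"commit-{version}.tmp")
    with open(staged, "w") as fh:
        json.dump({"add": written}, fh)
    os.replace(staged, os.path.join(table_dir, "_log", f"{version:08d}.json"))


# Hypothetical table directory, created fresh for the demo.
table = tempfile.mkdtemp()
os.mkdir(os.path.join(table, "_log"))

ingest(table, 0, [[{"id": 1}], [{"id": 2}]])  # succeeds and commits
try:
    # Simulated failure after the first of two files has been written.
    ingest(table, 1, [[{"id": 3}], [{"id": 4}]], crash_after=1)
except RuntimeError:
    pass

visible = committed_files(table)
orphan_exists = os.path.exists(os.path.join(table, "part-1-0.json"))
print(visible, orphan_exists)
```

Readers of this toy table see only the two files from the first commit. The file left behind by the failed job still sits on disk, but no commit references it, so it never shows up in a query.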

Scaling Generative AI using the Databricks AI Platform

Generative AI is the shiny new object, but for a pharma company in Europe or a government agency in Israel, “shiny” isn’t enough—it has to be secure. Databricks’ Mosaic AI allows us to build domain-specific applications using state-of-the-art models like Meta’s Llama 3, Anthropic’s Claude 3, and OpenAI’s GPT-4.

Through a $100 million partnership with Anthropic and a similar deal with OpenAI, Databricks has integrated these LLMs directly into the workflow. But here’s the kicker: your data never leaves the secure environment. You get the power of GPT-4 with the privacy of a zero-trust architecture.

Tools like Agent Bricks and Vector Search allow us to create “RAG” (Retrieval-Augmented Generation) systems. This means your AI agent doesn’t just guess; it “reads” your internal documents and provides answers grounded in your specific enterprise data.
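The retrieval step is what keeps an agent from guessing, so a small sketch may help. The toy example below ranks documents with bag-of-words cosine similarity as a stand-in for an embedding-based vector index, then builds a prompt from the best match. It does not use the Vector Search or Agent Bricks APIs; the documents, the question, and every function name are invented for illustration.

```python
import math
import re
from collections import Counter


def tokenize(text):
    """Lowercase a string and split it into word tokens."""
    return re.findall(r"[a-z0-9]+", text.lower())


def cosine(a, b):
    """Cosine similarity between two bag-of-words Counters."""
    dot = sum(a[t] * b[t] for t in a if t in b)
    norm_a = math.sqrt(sum(v * v for v in a.values()))
    norm_b = math.sqrt(sum(v * v for v in b.values()))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0


def retrieve(question, documents, k=1):
    """Return the k documents most similar to the question."""
    q = Counter(tokenize(question))
    ranked = sorted(
        documents,
        key=lambda d: cosine(q, Counter(tokenize(d))),
        reverse=True,
    )
    return ranked[:k]


def build_prompt(question, documents, k=1):
    """Put the retrieved context in front of the question."""
    context = "\n".join(retrieve(question, documents, k))
    return f"Answer using only this context:\n{context}\n\nQuestion: {question}"


# Hypothetical internal documents, invented for this example.
docs = [
    "Adverse event reports are reviewed by the safety team within 24 hours.",
    "Genomic samples are stored in the secure research environment.",
    "Expense claims must be submitted by the end of each month.",
]
question = "How quickly are adverse event reports reviewed?"
top = retrieve(question, docs)
prompt = build_prompt(question, docs)
print(prompt)
```

A production system would swap the word counts for learned embeddings and send the prompt to a model, but the shape of the flow stays the same: retrieve first, then generate.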

Watch how Block built an AI agent system using Mosaic AI to automate operations for their sellers

From Raw Data to Production: Mastering the AI Lifecycle

The journey from a raw CSV file to a production-ready AI model is usually a nightmare. On the Databricks AI platform, it’s a streamlined process. Whether you are training a classic linear regression for lead scoring or a deep learning model for image processing, the platform scales with you.

| Feature | Classic Machine Learning | Generative AI (GenAI) |
|---|---|---|
| Primary Tool | AutoML | Mosaic AI Agent Framework |
| Data Type | Structured (Tables) | Unstructured (Text, Images, PDF) |
| Governance | Model Registry | Unity Catalog + AI Gateway |
| Efficiency | Automated Hyperparameter Tuning | 10x reduction in cost via fine-tuning |
| Accuracy | High for forecasting | 96% accuracy with RAG systems |

Training Classic and Deep Learning Models

For those who don’t want to write thousands of lines of code, AutoML is a lifesaver. It automatically selects the best algorithms and tunes hyperparameters, providing a high-quality model in minutes.
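For readers curious what “selects the best algorithms and tunes hyperparameters” boils down to, here is a deliberately tiny sketch of the loop: fit several candidates, score each on held-out data, keep the winner. It is not the Databricks AutoML API. The candidate models, the `lam` penalty values, and the toy data are all assumptions made for illustration.

```python
from statistics import mean


def fit_mean(xs, ys):
    """Baseline: always predict the training mean."""
    m = mean(ys)
    return lambda x: m


def make_ridge(lam):
    """One-feature ridge regression with penalty `lam` (0 is ordinary least squares)."""
    def fit(xs, ys):
        mx, my = mean(xs), mean(ys)
        sxy = sum((x - mx) * (y - my) for x, y in zip(xs, ys))
        sxx = sum((x - mx) ** 2 for x in xs)
        slope = sxy / (sxx + lam)
        return lambda x: my + slope * (x - mx)
    return fit


def mse(model, xs, ys):
    """Mean squared error of a fitted model on the given points."""
    return mean((model(x) - y) ** 2 for x, y in zip(xs, ys))


def auto_select(candidates, train, valid):
    """Fit every candidate on train, score on valid, return the best name and all scores."""
    scores = {name: mse(fit(*train), *valid) for name, fit in candidates.items()}
    return min(scores, key=scores.get), scores


# Toy data: roughly y = 2x, split into training and validation sets.
train = ([1, 2, 3, 4], [2.1, 3.9, 6.2, 7.8])
valid = ([5, 6], [10.1, 11.9])

candidates = {
    "mean": fit_mean,
    "ridge(lam=0)": make_ridge(0.0),
    "ridge(lam=10)": make_ridge(10.0),
}
best, scores = auto_select(candidates, train, valid)
print(best)
```

On this data the unpenalized line wins, because the relationship really is linear and the heavy penalty flattens the slope too much. Real AutoML tools search far larger spaces, but the select-by-validation-score principle is the same.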

For deep learning, the platform provides pre-configured runtimes for PyTorch, TensorFlow, and Keras. Need to train a massive model? Databricks offers serverless GPU compute. You don’t have to manage the underlying infrastructure; you just focus on the science. Integration with Ray and DeepSpeed allows for distributed training across hundreds of nodes, which is essential for the large-scale research we perform at Lifebit.

Deploying and Serving at Scale

Once a model is trained, it needs a home. Model Serving on Databricks allows you to deploy models as scalable REST endpoints. These endpoints support automatic scaling—if your traffic spikes, the platform spins up more resources; when it drops, it scales down to save you money.
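The scaling behavior described above can be pictured as a simple sizing rule. The sketch below is our own illustration, not the logic Databricks Model Serving actually uses; `capacity_per_replica`, `min_replicas`, and `max_replicas` are hypothetical parameters.

```python
import math


def desired_replicas(requests_per_second, capacity_per_replica,
                     min_replicas=0, max_replicas=10):
    """Replicas needed to serve the load, clamped to the configured bounds."""
    if capacity_per_replica <= 0:
        raise ValueError("capacity_per_replica must be positive")
    needed = math.ceil(requests_per_second / capacity_per_replica)
    return max(min_replicas, min(max_replicas, needed))


idle = desired_replicas(0, capacity_per_replica=50)        # no traffic
normal = desired_replicas(120, capacity_per_replica=50)    # everyday load
spike = desired_replicas(10_000, capacity_per_replica=50)  # capped by max_replicas
print(idle, normal, spike)
```

The upper bound matters as much as the lower one: it is what stops a traffic spike from turning into an unbounded bill.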

The AI Gateway adds a crucial layer of management for GenAI. It allows you to track usage, log payloads for auditing, and set rate limits so one rogue developer doesn’t accidentally spend your entire AI budget in a weekend.
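Rate limiting is easier to reason about with a concrete mechanism in mind. A common one is the token bucket, sketched below with an injected clock so the behavior is deterministic. This is a generic technique shown for illustration, not a description of how the AI Gateway enforces its limits; the class name and its parameters are assumptions.

```python
class TokenBucket:
    """Allow bursts of up to `capacity` calls, refilled at `rate` tokens per second."""

    def __init__(self, capacity, rate, now=0.0):
        self.capacity = capacity
        self.rate = rate
        self.tokens = float(capacity)
        self.last = now

    def allow(self, now):
        """Return True and spend a token if the call is within budget."""
        # Refill according to the time elapsed since the previous call.
        elapsed = now - self.last
        self.tokens = min(self.capacity, self.tokens + elapsed * self.rate)
        self.last = now
        if self.tokens >= 1:
            self.tokens -= 1
            return True
        return False


# One bucket per developer: two calls of burst, one new call per second.
bucket = TokenBucket(capacity=2, rate=1.0)
results = [
    bucket.allow(0.0),  # first call in the burst
    bucket.allow(0.0),  # second call in the burst
    bucket.allow(0.0),  # budget exhausted
    bucket.allow(1.0),  # one token refilled after a second
]
print(results)
```

Keeping one bucket per user or per endpoint is what prevents a single runaway script from consuming everyone else’s budget.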

Governance and Security: Protecting Your Proprietary Intelligence

In the biomedical and public health sectors, security isn’t a “nice to have”; it’s a legal requirement. The Databricks AI platform is built with a “security-first” mindset. It is FedRAMP Authorized, making it suitable for even the most sensitive government contracts in the USA and beyond.

Monitoring and Productionizing ML Workflows

Productionizing AI isn’t a “set it and forget it” task. You need to know if your model is starting to drift or if the data quality is dropping. Data Quality Monitoring and Governance tools in Databricks detect anomalies and track data freshness automatically.
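As a rough picture of what such checks do, the sketch below flags drift when a feature’s mean moves too far from its training-time mean, and flags staleness when a table has not been updated recently. It is a simplified stand-in, not the monitoring logic Databricks ships; the thresholds, function names, and sample values are assumptions.

```python
from datetime import datetime, timedelta
from statistics import mean, stdev


def mean_shift(reference, current):
    """Distance between the two means, in reference standard deviations."""
    spread = stdev(reference)
    if spread == 0:
        raise ValueError("reference values have no spread")
    return abs(mean(current) - mean(reference)) / spread


def has_drifted(reference, current, threshold=2.0):
    """Flag drift when the mean moves more than `threshold` deviations."""
    return mean_shift(reference, current) > threshold


def is_fresh(last_updated, now, max_age=timedelta(hours=24)):
    """A table is fresh if its last update is no older than `max_age`."""
    return now - last_updated <= max_age


# Hypothetical feature values seen at training time and in production.
reference = [50, 52, 48, 51, 49, 50]
stable_flag = has_drifted(reference, [49, 51, 50, 50])
shifted_flag = has_drifted(reference, [58, 60, 59, 61])

now = datetime(2024, 1, 2, 12, 0)
fresh_flag = is_fresh(datetime(2024, 1, 2, 6, 0), now)
stale_flag = is_fresh(datetime(2023, 12, 30, 6, 0), now)
print(stable_flag, shifted_flag, fresh_flag, stale_flag)
```

A mean-shift test is the bluntest possible drift detector. It misses changes in shape or variance, which is why production monitors usually compare whole distributions.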

With Lakeflow Jobs, we can orchestrate complex data preparation pipelines that feed directly into our models. This integration ensures that the data your model sees in production is formatted exactly like the data it was trained on, preventing the “training-serving skew” that kills most AI projects.
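One cheap guard against training-serving skew is to record the schema the model was trained on and compare every serving row against it. The sketch below covers only that schema check, not distribution differences, and it is not a Lakeflow or Unity Catalog API; the column names and helper functions are invented for illustration.

```python
def schema_of(row):
    """Map each column name to the name of its Python type."""
    return {col: type(val).__name__ for col, val in row.items()}


def schema_mismatches(training_schema, serving_row):
    """List every difference between the training schema and a serving row."""
    serving = schema_of(serving_row)
    problems = []
    for col, expected in training_schema.items():
        if col not in serving:
            problems.append(f"missing column: {col}")
        elif serving[col] != expected:
            problems.append(f"{col}: expected {expected}, got {serving[col]}")
    for col in serving:
        if col not in training_schema:
            problems.append(f"unexpected column: {col}")
    return problems


# Hypothetical rows: the schema is captured once from training data.
training_schema = schema_of({"age": 54, "dose_mg": 12.5})

good = schema_mismatches(training_schema, {"age": 61, "dose_mg": 10.0})
bad = schema_mismatches(training_schema, {"age": "61", "weight_kg": 70.2})
print(good)
print(bad)
```

Running the same check in the pipeline that feeds training and in the one that feeds serving catches the classic failure where a number quietly arrives as a string.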

Unity Catalog provides the ultimate audit trail. You can see exactly which user accessed which data point to train which model. This level of lineage is vital for regulatory compliance in pharmacovigilance and clinical trials.

Why Legacy Data Warehouses are Dead: The 12x Price-Performance Advantage

If you are still using a traditional cloud data warehouse, you are likely overpaying. Databricks’ lakehouse architecture offers 12x better price/performance for SQL and BI workloads. This isn’t just a marketing stat; it’s a result of the platform’s ability to run high-performance queries directly on your data lake without needing to move or replicate data.

Real-World Impact: How Industry Leaders Achieve 44% Better Accuracy

The results speak for themselves. One financial data provider used the Databricks AI platform to build a text-to-code agent that improved response accuracy by 44%. A payments company automated seller operations, resulting in $10M in productivity gains.

Even in the media world, companies have seen a 10x reduction in costs for personalized viewer experiences by switching to Databricks for their ML workloads.

Democratizing AI Across the Organization

One of our favorite features is the AI Playground. It allows non-technical team members to test different LLMs side-by-side using a simple chat interface. They can adjust parameters and prompts without writing a single line of Python.

This democratization is key. When your clinical researchers, marketing teams, and data scientists all speak the same “data language” on the same platform, innovation happens faster. You move from being a company that uses AI to being a “Data + AI company.”

Frequently Asked Questions about Databricks AI

What is the difference between Mosaic AI and traditional MLflow?

While MLflow is an open-source tool for managing the machine learning lifecycle (tracking, models, registry), Mosaic AI is the broader, integrated suite within Databricks. Mosaic AI includes MLflow but adds enterprise-grade features like Model Serving, the AI Gateway, Vector Search, and the Agent Framework specifically designed for Generative AI.

How does Agent Bricks simplify AI agent development?

Agent Bricks is a no-code/low-code builder that allows you to create AI agents grounded in your enterprise data. It handles the complex “RAG” plumbing for you, connecting your data sources to your models and providing built-in evaluation tools (using “AI judges”) to ensure the agent’s answers are accurate and safe.

Can the Databricks AI platform handle multi-cloud deployments?

Yes! One of the biggest advantages of the Databricks AI platform is its “cloud-agnostic” nature. It runs natively on AWS, Azure, and Google Cloud. This reduces vendor lock-in and allows global organizations to maintain a consistent data strategy across different regions and providers.

Conclusion: Start Your AI Transformation Today

The Databricks AI platform is more than just a collection of tools; it is a unified foundation for the future of enterprise intelligence. By combining the scale of a data lake with the performance of a data warehouse and the cutting-edge capabilities of Mosaic AI, it empowers organizations to turn raw data into a competitive advantage.

At Lifebit, we take this a step further. Our next-generation federated AI platform integrates seamlessly with the Databricks ecosystem, enabling secure, real-time access to global biomedical and multi-omic data. Whether you are in London, New York, or Singapore, we help you power large-scale, compliant research through our Trusted Data Lakehouse (TDL) and R.E.A.L. analytics layer.

Don’t let your data stay siloed. Stop struggling with fragmented AI strategies and start building on a platform that scales with your ambition.

Learn more about Lifebit’s federated AI services