Ultimate Checklist for Large-Scale Biopharma Data Software

Why Biopharma Data Software Is Make-or-Break for Your R&D Pipeline

What are the best biopharma data software solutions for large-scale research? The top solutions include:

- Unified data integration platforms that harmonize multi-omics, clinical trial data, and real-world evidence across siloed systems.

- AI-ready environments with federated analytics for secure, compliant analysis without moving sensitive data.

- Enterprise-grade platforms offering scalable cloud infrastructure, automated workflows, and full regulatory audit trails.

- Trusted Research Environments (TREs) that enable real-time collaboration across global teams while maintaining data sovereignty.

- End-to-end solutions like Lifebit that integrate the entire R&D lifecycle from discovery to manufacturing.

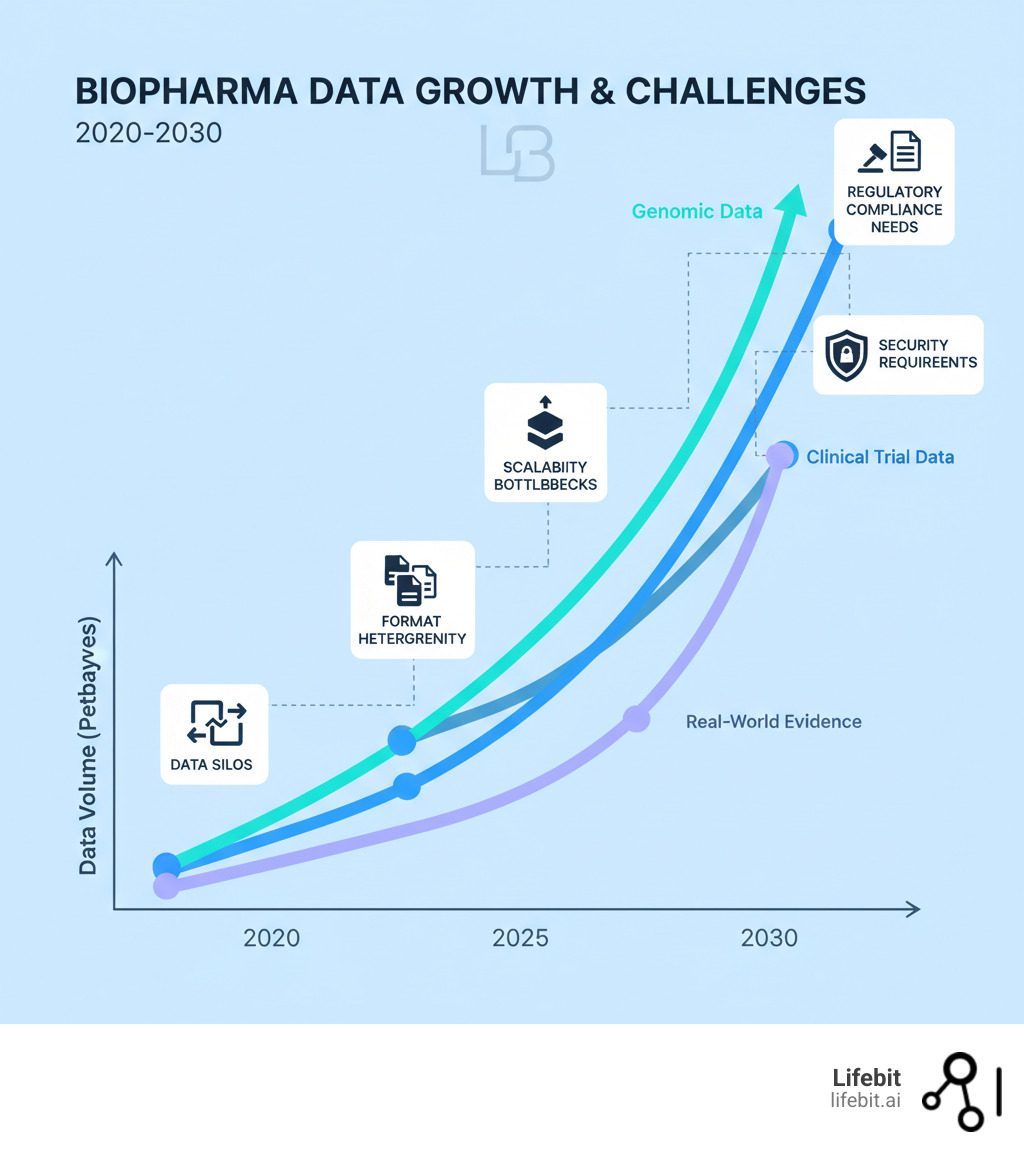

The biopharma industry is drowning in data. The global biotechnology market is projected to reach $3.88 trillion by 2030, and more than 85 percent of biopharma executives intend to increase investment in data, AI, and digital tools in 2025. Yet most organizations still struggle with the basics: getting genomic data, proteomics results, and real-world evidence to talk to each other.

The problem isn’t lack of data—it’s lack of infrastructure.

Large-scale research generates terabytes of multi-omic data daily, but when your bioinformatics team needs weeks to harmonize data formats, you’re falling behind competitors. Traditional lab software wasn’t built for this scale. Most platforms handle one data type well but crumble when you need to link genomics with clinical outcomes or federate analysis across institutions without moving sensitive patient data. The result? Data silos, compliance headaches, and AI initiatives that never leave the pilot phase.

This guide cuts through the noise. We’ll show you what features separate enterprise-grade biopharma data platforms from glorified spreadsheets and how unified platforms accelerate R&D. You’ll get a practical checklist for evaluating solutions that can handle population-level genomics, automated compliance, and real-time pharmacovigilance.

As Maria Chatzou Dunford, CEO and Co-founder of Lifebit with over 15 years in computational biology and federated genomic data platforms, I’ve worked with global pharma, regulatory agencies, and research institutions to solve this exact problem. This article distills that experience into actionable criteria you can use today.

The Data Tsunami: Why Standard Lab Software Fails at Scale

Most biopharma companies are sitting on goldmines of data they can’t actually use. Your teams generate terabytes of information daily, but traditional lab software is drowning in this data tsunami.

The systems that worked for small-scale experiments weren’t built for modern research demands. They crack under the pressure of population-level genomics and multi-omics integration, costing you time and money.

- Data silos are the first villain. Your genomics, clinical, and real-world evidence teams work in separate systems that don’t communicate. This blocks insights by preventing a complete view of a patient’s journey or the connection between genetic markers and treatment response.

- Heterogeneous formats are a nightmare. Raw sequencing reads, EHRs, and proteomics results don’t speak the same language. Without sophisticated data harmonization tools, your team wastes weeks manually wrangling formats before analysis can even begin.

- Scalability bottlenecks hit when you try to grow. Software that handled a hundred samples grinds to a halt with ten thousand. Systems crash, performance degrades, and cloud costs explode because the infrastructure is inefficient.

- Security vulnerabilities are a constant threat. Standard lab software often lacks enterprise-grade security, putting sensitive patient data and proprietary research at risk. A single breach destroys trust and can derail entire programs.

- Regulatory compliance (FDA 21 CFR Part 11, GxP, HIPAA, GDPR) is non-negotiable. Many traditional tools weren’t designed for these stringent requirements, leading to manual workarounds, incomplete audit trails, and regulatory risk.

The primary challenges in large-scale biopharma data management

Managing petabytes of diverse data from genomics, proteomics, clinical trials, and real-world sources is an infrastructure problem, not just a storage one.

- Genomic data federation is essential for integrating vast datasets from multiple institutions while respecting data sovereignty rules. You need to analyze data where it lives, which traditional software can’t do.

- Clinical trial data adds complexity with its mix of patient demographics, treatment responses, and lab results from multiple sites. Harmonizing this information is critical for comparing outcomes.

- Real-world data integration is vital for understanding treatment performance outside of controlled trials. But RWD from EHRs and claims databases is messy and unstructured, requiring powerful automation to become research-ready.

- The lack of interoperability ties all these problems together. When systems can’t communicate, data gets lost in translation, and collaboration becomes a logistical nightmare.

This is why the question “What are the best biopharma data software solutions for large-scale research?” matters. The right platform breaks down silos, harmonizes formats, scales seamlessly, and ensures security and compliance. The wrong one becomes the bottleneck for your entire R&D pipeline.

What are the best biopharma data software solutions for large-scale research? A Feature-Based Checklist

When evaluating biopharma data software, we need a robust checklist that goes beyond basic storage. We’re looking for solutions that accelerate discovery, optimize development, and ensure compliance across the R&D pipeline.

Here are the core functionalities that define a ‘best-in-class’ solution for large-scale research:

1. Unified Data Integration and Harmonization

An effective solution must act as a central hub for all R&D data, breaking down the silos that cripple analysis. This begins with Automated Data Ingestion. A modern platform uses robust Instrument Connectors and APIs to pull data directly from its source, including high-throughput sequencers (e.g., Illumina BaseSpace), mass spectrometers, and existing LIMS or ELN databases. The platform should ingest a vast array of formats—from raw FASTQ files to structured clinical data—and automatically trigger quality control and processing pipelines.

Once ingested, the data must be standardized. This is where a comprehensive Data Integration Platform becomes indispensable. A critical feature is automated Data Harmonization to OMOP (Observational Medical Outcomes Partnership). By mapping disparate data sources to this common data model, the platform translates proprietary terms into a universal language. For example, a patient’s diagnosis coded as “Type 2 DM” in one EHR and “E11.9” in another are both mapped to the same standard OMOP concept ID. This enables researchers to run a single query across multiple datasets from different hospitals or countries and get consistent, comparable results.
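The mapping step described above can be sketched in a few lines of Python. This is a minimal illustration, not a real vocabulary service: the lookup table, the source-system names, and the concept ID value are all placeholders, and a production pipeline would resolve codes against the OMOP standardized vocabulary tables instead of a hand-written dictionary.

```python
from typing import Optional

# Placeholder concept ID for type 2 diabetes (an assumption for this sketch;
# the real value must be looked up in the OMOP vocabulary tables).
T2DM_CONCEPT_ID = 1001

# Hypothetical source-to-standard map keyed by (source vocabulary, source code).
SOURCE_TO_CONCEPT = {
    ("hospital_a_local", "type 2 dm"): T2DM_CONCEPT_ID,
    ("icd10cm", "e11.9"): T2DM_CONCEPT_ID,
}


def to_concept_id(vocabulary: str, code: str) -> Optional[int]:
    """Return the standard concept ID for a source code, or None if unmapped."""
    key = (vocabulary.strip().lower(), code.strip().lower())
    return SOURCE_TO_CONCEPT.get(key)


records = [
    {"site": "A", "vocabulary": "hospital_a_local", "code": "Type 2 DM"},
    {"site": "B", "vocabulary": "ICD10CM", "code": "E11.9"},
    {"site": "B", "vocabulary": "ICD10CM", "code": "UNKNOWN-CODE"},
]

# Attach a standard concept ID to every record; unmapped codes stay None.
harmonized = [
    {**r, "concept_id": to_concept_id(r["vocabulary"], r["code"])}
    for r in records
]

# A single filter now finds the same diagnosis at both sites.
t2dm_sites = sorted(
    r["site"] for r in harmonized if r["concept_id"] == T2DM_CONCEPT_ID
)

# Unmapped records are kept for manual review rather than silently dropped.
unmapped = [r for r in harmonized if r["concept_id"] is None]
```

Keeping unmapped records visible matters in practice, because a code that silently fails to map disappears from every downstream cohort query.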

Finally, this harmonized data needs a secure, scalable home. A simple data lake leads to a “data swamp,” while a traditional data warehouse is too rigid for complex biological data. The answer is a Trusted Data Lakehouse Architecture. This hybrid model combines the low-cost, flexible storage of a data lake for raw data with the performance and governance features of a data warehouse for structured, analysis-ready data. This architecture ensures that all data is cataloged, versioned, and governed by a single set of rules, providing automatable data unification and full audit trails for regulatory compliance.

2. Advanced AI and Machine Learning Environment

The future of biopharma R&D is inextricably linked to AI/ML, and a leading data solution must be an active workbench for machine learning. This means providing an integrated environment that supports the entire MLOps lifecycle. This includes native support for AI for Genomics, enabling researchers to apply deep learning models like DeepVariant for superior variant calling or use graph neural networks to uncover hidden patterns in protein-protein interaction networks.

Beyond genomics, the platform must offer powerful Predictive Modeling Tools. These tools allow clinical development teams to optimize trial design by identifying patient cohorts most likely to respond to a therapy, or even create synthetic control arms from harmonized real-world data to accelerate trials. For early-stage research, the platform must support AI-Powered Biomarker Discovery and Target Validation. By integrating multi-omics data with clinical outcomes at scale, AI models can identify novel digital biomarkers or validate a target’s link to disease progression, significantly de-risking drug development. The ability to integrate Large Language Models (LLMs) further enhances this, allowing researchers to extract structured information from unstructured clinical notes or scientific literature.

Crucially, for large-scale research, all of this must be done reproducibly. Reproducible ML Pipelines are paramount. A best-in-class platform incorporates MLOps principles, automatically versioning not just the code, but also the exact dataset, model parameters, and software environment used for training. This creates a complete, auditable lineage from raw data to predictive model, ensuring that an experiment can be perfectly replicated by a colleague or a regulator years later. This is the difference between a one-off AI project and a scalable, enterprise-wide AI capability.
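As a rough sketch of what "versioning the dataset, parameters, and environment" can mean, the Python below builds a run manifest from content hashes. The function and field names are hypothetical, and a real MLOps stack would also capture container image digests and dependency lock files; the point is only that identical inputs yield an identical, checkable run ID.

```python
import hashlib
import json
import platform


def sha256_hex(data: bytes) -> str:
    """Hex-encoded SHA-256 digest of a byte string."""
    return hashlib.sha256(data).hexdigest()


def build_run_manifest(dataset: bytes, params: dict, code_version: str) -> dict:
    """Record everything needed to identify a training run (illustrative fields)."""
    manifest = {
        "dataset_sha256": sha256_hex(dataset),      # exact data used
        "params": params,                           # model hyperparameters
        "code_version": code_version,               # e.g. a git commit hash
        "python_version": platform.python_version(),
    }
    # Hash a canonical JSON form so key order cannot change the run ID.
    canonical = json.dumps(manifest, sort_keys=True).encode("utf-8")
    manifest["run_id"] = sha256_hex(canonical)[:16]
    return manifest


run_a = build_run_manifest(b"sample,label\n1,0\n", {"lr": 0.01}, "abc123")
run_b = build_run_manifest(b"sample,label\n1,0\n", {"lr": 0.01}, "abc123")
run_c = build_run_manifest(b"sample,label\n1,1\n", {"lr": 0.01}, "abc123")
```

Here `run_a` and `run_b` share a run ID, while `run_c` differs because a single label changed in the dataset.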

3. Enterprise-Grade Security and Federated Governance

Handling sensitive patient and proprietary data demands a “zero trust” security posture. A top-tier solution is built on a foundation of Enterprise-Grade Security that goes far beyond simple passwords. This means a defense-in-depth strategy: robust encryption for data both in transit (TLS 1.3+) and at rest (AES-256), network isolation using virtual private clouds (VPCs), and tight integration with enterprise identity providers for single sign-on (SSO) and multi-factor authentication (MFA). The platform must enforce Granular Access Controls, allowing administrators to define precisely who can see what data and what actions they can perform based on their role, project, and data attributes (Attribute-Based Access Control).
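To make attribute-based access control concrete, here is a minimal Python sketch of a default-deny policy check. The roles, sensitivity labels, and rules are invented for illustration; a real platform would load policies from a governed policy store and take user attributes from the identity provider.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class User:
    role: str              # e.g. "analyst" or "data_steward" (hypothetical roles)
    projects: frozenset    # projects the user is assigned to
    mfa_verified: bool     # whether this session passed multi-factor authentication


@dataclass(frozen=True)
class Dataset:
    project: str
    sensitivity: str       # "deidentified" or "identifiable" (hypothetical labels)


def is_allowed(user: User, dataset: Dataset, action: str) -> bool:
    """Default-deny check combining role, project, and data attributes."""
    if not user.mfa_verified:
        return False
    if dataset.project not in user.projects:
        return False
    if action == "read":
        if dataset.sensitivity == "deidentified":
            return user.role in {"analyst", "data_steward"}
        return user.role == "data_steward"
    if action == "export":
        return user.role == "data_steward" and dataset.sensitivity == "deidentified"
    return False  # unknown actions are denied
```

The decision depends on attributes of the user, the data, and the action together, which is what separates this from a plain role list.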

This security model is extended to collaboration through Federated Governance and Trusted Research Environments (TREs). A TRE is a highly controlled virtual workspace where researchers can analyze sensitive data without being able to export it. The environment has no general internet access, uses whitelisted software tools, and all analysis outputs are subject to an “airlock” protocol, where they are reviewed for disclosive information before release. This enables secure analysis of distributed datasets without centralizing the data, preserving data sovereignty and enhancing privacy.
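One check an airlock review might automate is small-count suppression, where any group with too few individuals is held back because it could identify someone. The Python sketch below assumes a threshold of 5 and a simple table of group counts; both are illustrative choices, and actual disclosure rules are set by each data custodian.

```python
from typing import Dict, List, Tuple

MIN_CELL_COUNT = 5  # assumed threshold; real policies vary by data custodian


def review_output(
    table: Dict[str, int], min_count: int = MIN_CELL_COUNT
) -> Tuple[Dict[str, int], List[str]]:
    """Split an aggregate table into releasable cells and cells held for review."""
    released: Dict[str, int] = {}
    held: List[str] = []
    for group, count in table.items():
        if count < min_count:
            held.append(group)       # too few individuals: potentially disclosive
        else:
            released[group] = count
    return released, held


counts = {"variant_carriers_site_1": 42, "variant_carriers_site_2": 3}
released, held = review_output(counts)
```

In this example only the first cell is released; the second, with a count of 3, is held for a human reviewer.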

Federated governance allows an organization to apply a single, consistent set of rules across multiple TREs, even if they are in different countries. A central policy can dictate data access rights, and the platform enforces it everywhere. Every action, from login to query execution, is logged in Full Audit Trails, creating an immutable, timestamped record that provides transparency and demonstrates adherence to regulatory standards such as HIPAA, GDPR, FDA 21 CFR Part 11, and GxP guidelines. This automated compliance is non-negotiable.
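One common way to make an audit log tamper-evident is to chain entries with hashes, so that altering any past record invalidates everything after it. The Python sketch below illustrates that idea only; the field names are hypothetical, and it is not a description of how any particular platform stores its audit trail.

```python
import hashlib
import json
from typing import Dict, List

GENESIS_HASH = "0" * 64  # placeholder "previous hash" for the first entry


def _digest(body: Dict[str, str]) -> str:
    """SHA-256 over a canonical JSON encoding of an entry body."""
    return hashlib.sha256(json.dumps(body, sort_keys=True).encode("utf-8")).hexdigest()


def append_event(log: List[Dict[str, str]], actor: str, action: str, timestamp: str) -> None:
    """Append an entry whose hash covers its content and the previous entry's hash."""
    prev_hash = log[-1]["hash"] if log else GENESIS_HASH
    body = {"actor": actor, "action": action, "timestamp": timestamp, "prev_hash": prev_hash}
    log.append({**body, "hash": _digest(body)})


def verify_log(log: List[Dict[str, str]]) -> bool:
    """Recompute every hash and check that each entry links to its predecessor."""
    prev_hash = GENESIS_HASH
    for entry in log:
        body = {k: v for k, v in entry.items() if k != "hash"}
        if entry["prev_hash"] != prev_hash or _digest(body) != entry["hash"]:
            return False
        prev_hash = entry["hash"]
    return True


audit_log: List[Dict[str, str]] = []
append_event(audit_log, "user_1", "login", "2025-01-01T09:00:00Z")
append_event(audit_log, "user_1", "run_query", "2025-01-01T09:05:00Z")
```

Calling `verify_log(audit_log)` returns `True` for the untouched log and `False` once any stored field is edited after the fact.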

4. Scalable, Collaborative, and Reproducible Analytics

Large-scale research is a team sport that generates immense computational demands. A leading solution must be built for both. Scalability is achieved through Cloud-agnostic deployment, giving organizations the flexibility to run on their preferred cloud provider (AWS, Azure, GCP) or on-premise HPC clusters. This hybrid approach avoids vendor lock-in and allows teams to optimize for cost and performance, bursting to the public cloud for massive computations while keeping highly sensitive data on-premise. The platform should intelligently manage resources, spinning up and down compute clusters as needed to run analyses on petabytes of data without manual intervention.

Reproducibility is the bedrock of good science, and it must be built into the platform’s DNA. This is achieved by supporting modern workflow managers like Nextflow Pipelines and leveraging containerization technologies like Docker and Singularity. The workflow manager defines each pipeline step, and containers package that step with all its software dependencies into a portable image. Pinning both means a complex genomic analysis runs on the same software stack whether it’s run today or a year from now by a collaborator, a key component of Bioinformatics Platform Best Practices.

Finally, the platform must enable seamless teamwork through Shared Workspaces. These are more than just shared folders; they are integrated collaborative environments. They provide shared data catalogs, code repositories, and interactive analysis tools like JupyterLab and RStudio, allowing multiple scientists to co-develop analyses in real time. With secure, role-based permissions, organizations can confidently grant access to external partners, CROs, and academic collaborators, accelerating the pace of innovation by bringing the best minds together in a single, governed environment.

The Platform Advantage: Unifying R&D vs. Juggling Multiple Tools

Why does it take months to get your genomics data talking to your clinical trial results? The answer is usually tool sprawl.

Most biopharma organizations use a patchwork of specialized software: one for sequencing, another for clinical data, a third for real-world evidence. Each works in isolation, but making them work together requires months of custom integrations and debugging. It’s like trying to build a house where the electrician and plumber use incompatible materials and refuse to share blueprints. The result is delays, cost overruns, and frustrated scientists.

A unified platform changes the game. Instead of duct-taping tools together, you get a single source of truth where all data can be analyzed together. When your genomics team finds a promising biomarker, your clinical colleagues can immediately overlay patient outcomes—no three-week data reformatting process required.

This approach slashes IT complexity. Your team no longer maintains separate security protocols and compliance audits for a dozen vendors. Updates don’t break integrations, and new team members learn one platform instead of five. When regulators ask for audit trails, you have end-to-end traceability built in. Faster onboarding extends to external partners, who can access what they need through one secure interface. For more on this unified approach, explore the platform advantage.

How enterprise platforms accelerate large-scale biopharma R&D

The difference between lab tools and enterprise platforms becomes clear at population scale. Enterprise platforms are built to scale to population-level data and to handle petabytes of multi-omic information.

Large organizations need centralized data governance to ensure every team follows the same quality and compliance standards. When global teams work in one governed environment, you eliminate the chaos of conflicting data definitions.

A consistent user experience means scientists work faster with fewer errors because they aren’t context-switching between different interfaces. This consistency also maximizes your training investment.

Perhaps most compelling is the lower total cost of ownership. While enterprise platforms require upfront investment, the ROI from faster cycle times, reduced IT overhead, and higher success rates is typically realized within 12-18 months. When evaluating the best biopharma data software solutions for large-scale research, the platform versus point-solution question is about whether you want your team integrating tools or making discoveries.

Implementation Roadmap: Adopting Your Large-Scale Data Platform

Adopting a large-scale biopharma data solution is a strategic change, not just a technical upgrade. It’s about building a new foundation for how your teams discover and deliver therapies.

The journey starts with Change Management. A powerful platform will fail if your people don’t accept it. We start by listening: what frustrates your teams about current workflows? When we frame the new platform as the solution to their problems, adoption becomes natural, not forced.

Stakeholder Alignment means getting R&D scientists, IT, legal, regulatory, and leadership in the same room. Each group has different priorities, and the platform must serve them all. This collaborative approach is essential for designing clinical trials for optimal success, ensuring the data infrastructure supports the entire research lifecycle.

A Phased Rollout beats a “big bang” launch. Start with a pilot program in one department—perhaps harmonizing clinical trial data or automating a bioinformatics pipeline. Iron out the kinks, celebrate early wins, and let success stories spread organically. When one team sees another analyzing data 15 times faster, they’ll be asking for access.

Finally, User Training can’t be an afterthought. It requires custom programs for different user types: hands-on workshops for bioinformaticians, focused sessions for clinicians, and strategic overviews for leadership. Ongoing support and internal platform champions are key to making everyone feel confident.

How to measure the ROI of your biopharma data software

Measuring the ROI of a biopharma data platform goes beyond a simple cost-benefit analysis. It’s about fundamentally accelerating scientific discovery.

Accelerated R&D Timelines are the primary benefit. When compound screening analysis drops from days to hours, it’s a competitive advantage. Some organizations have slashed assay time for NGS workflows by 90%, accelerating time to insight by up to 100x. This allows scientists to test more hypotheses and move candidates through the pipeline faster.

Reduced Analysis Costs appear in unexpected ways. Eliminating over 90% of manual data processing for mass spectrometry workflows means your PhD-level scientists can focus on science, not spreadsheets. Cutting chromatography data analysis time by over 15x frees up computational resources and reduces cloud costs.

But the metric that truly matters is Increased Successful Drug Candidates. Advanced AI/ML can improve drug behavior prediction and optimize trial design, leading to a 20% improvement in success rates. In an industry where most candidates fail, even a modest improvement translates to billions in value.

The ultimate measure is how the platform transforms your entire Drug Discovery and Development pipeline. Track metrics like time from target identification to candidate selection and speed of regulatory submission. When your teams can finally do the science they trained for, that’s ROI you can feel—and measure.

Frequently Asked Questions about Biopharma Data Software

How do top-tier platforms ensure regulatory compliance?

Regulatory compliance is a core architectural principle in top-tier platforms, not an afterthought. They are built to meet FDA 21 CFR Part 11 for electronic records and signatures, ensuring all digital data is trustworthy and traceable.

These platforms also adhere to GxP (Good Laboratory, Clinical, and Manufacturing Practices) guidelines, maintaining data integrity throughout the R&D lifecycle. For patient data protection, they are designed to handle HIPAA in the USA and GDPR in Europe, with features like data de-identification and granular access controls. Our HIPAA Analytics Best Practices guide offers more detail.

What truly sets enterprise platforms apart are their Automated Audit Trails. Every action is logged automatically, creating an immutable record for regulatory scrutiny. Finally, comprehensive Validation Documentation comes standard, demonstrating that the software performs as intended and significantly reducing the burden during audits.

What is federated analysis and why is it crucial for large-scale research?

Federated analysis enables you to analyze data from multiple locations without ever moving it. Instead of centralizing data—which is often impossible due to privacy and sovereignty laws—the analytical algorithms are brought to the data. The code travels to where the data lives, processes it locally, and returns only aggregated, privacy-preserving insights. The raw data never leaves its secure environment.
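The "code travels to the data" pattern can be illustrated with a pooled mean, sketched below in Python. Each site runs the same summary function locally and returns only a count and a sum; the coordinator combines those aggregates without ever seeing individual values. The site names and measurements are made up, and real federated systems add safeguards such as minimum cohort sizes and output review.

```python
from typing import Dict, List


def local_summary(values: List[float]) -> Dict[str, float]:
    """Runs inside a site's secure environment; returns aggregates only."""
    return {"n": len(values), "total": sum(values)}


def pooled_mean(summaries: List[Dict[str, float]]) -> float:
    """Runs at the coordinator; combines per-site aggregates into one mean."""
    n = sum(s["n"] for s in summaries)
    total = sum(s["total"] for s in summaries)
    if n == 0:
        raise ValueError("no observations across sites")
    return total / n


# Hypothetical per-site measurements that never leave their site.
site_a_values = [5.0, 7.0, 9.0]
site_b_values = [6.0, 8.0, 4.0, 10.0]

# Only these small summaries cross institutional boundaries.
summaries = [local_summary(site_a_values), local_summary(site_b_values)]
overall_mean = pooled_mean(summaries)
```

The pooled result equals the mean computed over all seven values together, which is why simple statistics federate cleanly; more complex models need iterative schemes built on the same principle.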

This approach is crucial because it solves a major challenge: Preserving Patient Data Privacy while enabling powerful, population-level analysis. It allows you to maintain strict compliance with GDPR, HIPAA, and other regulations while unlocking the potential of previously inaccessible datasets.

For large-scale research, this means you can access global datasets for studying rare diseases, analyzing real-world evidence, or validating biomarkers across diverse populations. A Federated Trusted Research Environment enables secure collaboration, with each institution retaining full control over its data. This overcomes the data sovereignty issues that have historically blocked international research.

What are the best biopharma data software solutions for large-scale research that can handle diverse data types like multi-omics and RWD?

The best solutions are platforms that seamlessly integrate the full spectrum of modern research data, and this capability is essential.

They excel at multi-omics integration, bringing together genomics, proteomics, metabolomics, and more into a unified analytical environment. These platforms have specialized tools and data models to reveal connections between molecular layers, building a comprehensive biological picture. Our Multi-Omics Integration approach is designed for this purpose.

The real breakthrough comes from integrating these molecular insights with real-world data (RWD) from EHRs, claims databases, and patient registries. Understanding the difference between Real-World Data vs. Evidence is critical. The best platforms transform raw RWD into actionable real-world evidence.

Using sophisticated Data Linking Software, these solutions connect a patient’s genomic profile, clinical history, treatment responses, and outcomes. This holistic patient view is invaluable for precision medicine, powering everything from biomarker discovery and target validation to clinical trial optimization and pharmacovigilance.

Conclusion: Building a Future-Proof, AI-Ready R&D Data Strategy

The biopharma organizations that win in the next decade will be those that treat data as a strategic asset, not a byproduct of research. The data tsunami isn’t slowing down, and AI is now a fundamental requirement for competitive R&D.

The old way of juggling specialized tools and manually harmonizing data no longer works. Data silos don’t just slow you down; they prevent the integrated insights that lead to breakthrough discoveries.

A modern data strategy requires:

- Unified data integration for a single source of truth.

- Advanced AI/ML environments to turn data into insights.

- Enterprise-grade security with federated governance for secure, global collaboration.

- Scalable, collaborative analytics that grow with your research.

The shift to a data-centric, AI-ready R&D strategy is about empowering your scientists with tools that work together, not against each other. This is what a federated platform delivers: secure, real-time access to global biomedical data, built-in harmonization, and advanced AI analytics that respect data sovereignty.

What are the best biopharma data software solutions for large-scale research? The answer lies in solutions that unify your R&D pipeline, accelerate insights through AI, and maintain the security and compliance that biopharma demands. Organizations that have made this shift are analyzing data in hours instead of weeks and using AI to find biomarkers that would have taken years to identify manually.

Ready to see what’s possible when your data infrastructure matches your scientific ambition? Discover the Lifebit Platform and explore how federated AI can transform your R&D operations.