Matching Made Clear: Deterministic vs. Probabilistic Approaches

Deterministic Matching: Cut False Positives to Near Zero — Here’s How

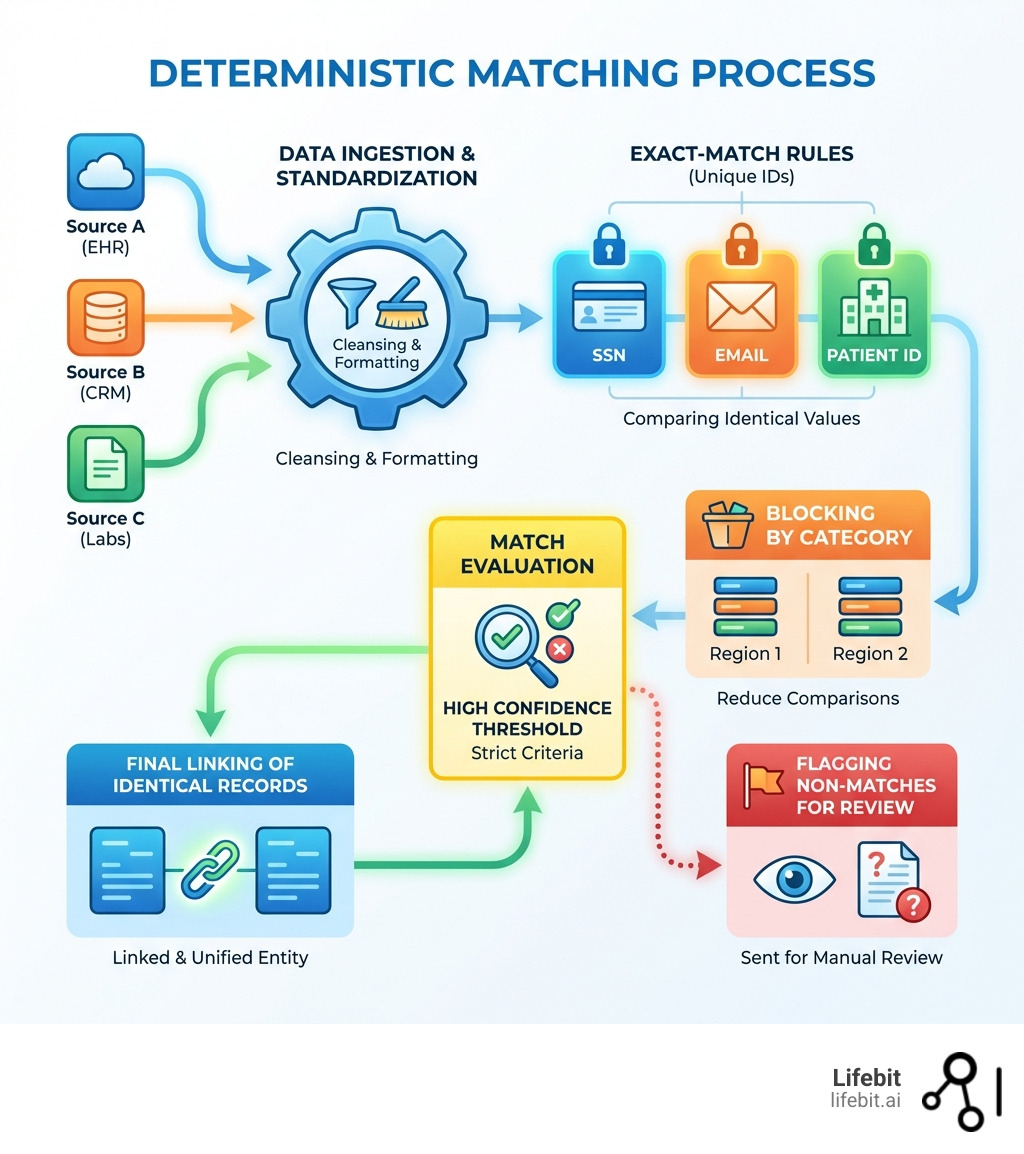

Deterministic matching is a rules-based approach to identifying identical records across datasets by comparing exact values of specific data fields like email addresses, national IDs, or patient identifiers. When two records share matching values for these key fields, they’re linked together—no guesswork, no probability scores. In the landscape of entity resolution, it represents the most conservative and precise method of data linkage, serving as the foundational layer for any robust data integration strategy.

Historically, record linkage was a manual, labor-intensive process. Clerks would pore over physical ledgers, looking for identical names or addresses. As data migrated to digital databases, the need for automated, high-speed matching became paramount. Deterministic matching emerged as the first logical step in this evolution, leveraging the binary nature of computer logic: either two strings are identical, or they are not. While modern data science has introduced more complex probabilistic models, the deterministic approach remains the “gold standard” for scenarios where the cost of a false match is catastrophic.

What you need to know:

- How it works: Compares unique identifiers or combinations of fields (name, address, DOB) using exact-match rules. It operates on a Boolean logic (True/False) rather than a spectrum of likelihood.

- Accuracy: Delivers 80-90% accuracy overall, with high precision and few false positives; most of the shortfall comes from missed matches rather than wrong ones. In well-maintained databases, precision can reach 99.9%.

- Best for: Medical records, financial transactions, government registries, and any scenario where unique, verified IDs exist.

- Key limitation: Struggles with incomplete, inconsistent, or messy data—leading to more false negatives (missed matches). It cannot inherently recognize that “Robert” and “Bob” are the same person without pre-defined synonym tables.

- Computational cost: Low overhead and fast processing compared to probabilistic methods. It is highly scalable, making it suitable for real-time processing of millions of records.

The challenge? Most organizations struggle with data that’s almost clean. A missing character, a typo, or a formatting difference can break a deterministic match—leaving critical records unlinked and hidden from analysis. This is especially painful when working with siloed EHR systems, genomics databases, or multi-source clinical datasets where every lost record represents a missed opportunity for a breakthrough or a potential risk to patient safety.

I’m Maria Chatzou Dunford, CEO and Co-founder of Lifebit, where we’ve spent over 15 years building federated platforms that enable secure, compliant analysis across genomic and biomedical data. Throughout my work powering global healthcare research, I’ve seen how deterministic matching serves as the bedrock for reliable entity resolution—and where it needs support from advanced techniques to handle real-world data complexity. In the world of precision medicine, we cannot afford to guess; we must know. Deterministic matching provides that certainty.

What is Deterministic Matching and How Does It Work?

At its simplest, deterministic matching is the “if-then” logic of the data world. We tell the system: “If Record A and Record B have the exact same Social Security Number, then they are the same person.” It relies on observed, factual data rather than statistical inferences. This method is binary; it does not calculate a score or a weight. It simply checks for equality across a set of pre-defined criteria.

In our work at Lifebit, we often deal with massive datasets across the UK, Europe, and North America. In these environments, deterministic matching works by searching through datasets to link profiles using common, high-quality identifiers. These identifiers, often called Personally Identifiable Information (PII), include:

- National ID numbers: SSN in the US, NHS number in the UK, or Social Insurance Numbers in Canada. These are the strongest deterministic keys because they are designed to be unique to a single individual.

- Email addresses: The most common unique identifier in digital environments. While people may have multiple emails, a shared email is a very strong indicator of identity.

- Phone numbers: Often used as a secondary key, though they can change over time or be shared within a household.

- Full names combined with Date of Birth (DOB): When unique IDs are missing, this combination serves as a “composite key.” While not as strong as a national ID, the probability of two different people having the exact same name and birth date is statistically low in smaller populations.

The process is inherently “all or nothing.” If the rules are met, it’s a match. If there’s even a tiny discrepancy, like “Jon” vs. “John,” the system traditionally says “No.” This is why deterministic matching is known for its high precision (when it says it’s a match, it almost certainly is) but can suffer from low recall (it misses real matches because of typos or missing data).
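As a minimal sketch of this all-or-nothing behavior, the snippet below compares two records on a single field. The record layout and the `exact_match` helper are hypothetical, made up purely for illustration.

```python
def exact_match(a: dict, b: dict, key: str) -> bool:
    """Return True only when both records hold the same non-empty value for `key`."""
    value_a, value_b = a.get(key), b.get(key)
    # A missing or empty identifier can never produce a deterministic link.
    return value_a not in (None, "") and value_a == value_b


# Hypothetical records; the SSN values are placeholders, not real identifiers.
rec_a = {"ssn": "123-45-6789", "first_name": "John"}
rec_b = {"ssn": "123-45-6789", "first_name": "Jon"}
rec_c = {"ssn": None, "first_name": "John"}

print(exact_match(rec_a, rec_b, "ssn"))         # True: identical identifier
print(exact_match(rec_a, rec_b, "first_name"))  # False: "John" != "Jon"
print(exact_match(rec_a, rec_c, "ssn"))         # False: missing value
```

Note that the helper returns a plain Boolean. There is no score to tune, which is what makes the result easy to audit and also what makes it brittle.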

Key Components of Deterministic Matching Implementation

Implementing this isn’t just about clicking a “match” button. It involves several technical layers to ensure we aren’t merging records that don’t belong together. A professional-grade deterministic matching pipeline usually follows these five stages:

Data Cleansing and Standardization: Before we even look for matches, we have to make the data speak the same language. This is the most critical and time-consuming step. It involves:

- Normalization: Converting all text to lowercase or uppercase to avoid case-sensitivity issues.

- Formatting: Ensuring all dates follow the ISO 8601 standard (YYYY-MM-DD).

- Parsing: Breaking down address strings into components (Street Number, Street Name, City, Zip Code).

- Standardization: Mapping variations like “St.” to “Street” or “Apt” to “Apartment.”
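The cleansing steps above can be sketched in a few lines of Python. The abbreviation table and the list of accepted date formats are assumptions chosen for the example; a production pipeline would need far larger, domain-specific versions of both.

```python
import re
from datetime import datetime

# Assumed lookup table and input formats, for illustration only.
ABBREVIATIONS = {"st": "street", "ave": "avenue", "apt": "apartment"}
DATE_FORMATS = ("%Y-%m-%d", "%d/%m/%Y", "%B %d, %Y")


def normalize_text(value: str) -> str:
    """Lowercase, trim, drop punctuation, and expand known abbreviations."""
    value = value.strip().lower()
    value = re.sub(r"[^\w\s]", " ", value)  # punctuation becomes whitespace
    return " ".join(ABBREVIATIONS.get(token, token) for token in value.split())


def normalize_date(value: str) -> str:
    """Return the date as ISO 8601 (YYYY-MM-DD), trying each assumed format in turn."""
    for fmt in DATE_FORMATS:
        try:
            return datetime.strptime(value.strip(), fmt).date().isoformat()
        except ValueError:
            continue
    raise ValueError(f"Unrecognized date format: {value!r}")


print(normalize_text("  12 Main St. "))  # 12 main street
print(normalize_date("03/02/1990"))      # 1990-02-03 (read as day/month/year)
```

Ambiguous dates such as 03/02/1990 show why the format list has to be agreed per source: the same string means a different day under a month-first convention.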

Blocking Rules: Imagine trying to compare 5 million patient records. Comparing every record to every other record would result in trillions of comparisons ($O(n^2)$ complexity). We use blocking to group similar records (e.g., everyone born in the same year or everyone with the same first three digits of a Zip Code) and only search for matches within those blocks. This drastically reduces the computational load.
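To make the saving concrete, here is a small sketch of blocking on birth year. The `candidate_pairs` and `full_comparisons` helpers and the sample records are hypothetical; the point is only that pairs are generated inside each block instead of across the whole dataset.

```python
from collections import defaultdict
from itertools import combinations


def full_comparisons(n: int) -> int:
    """Number of unordered pairs when every record is compared with every other."""
    return n * (n - 1) // 2


def candidate_pairs(records, block_key):
    """Yield record pairs that share a blocking key, skipping all cross-block pairs."""
    blocks = defaultdict(list)
    for record in records:
        blocks[block_key(record)].append(record)
    for block in blocks.values():
        yield from combinations(block, 2)


records = [
    {"id": 1, "dob": "1980-01-15"},
    {"id": 2, "dob": "1980-07-02"},
    {"id": 3, "dob": "1975-03-09"},
    {"id": 4, "dob": "1980-01-15"},
]

# Block on birth year: the first four characters of an ISO 8601 date.
pairs = [(a["id"], b["id"]) for a, b in candidate_pairs(records, lambda r: r["dob"][:4])]

print(pairs)                        # [(1, 2), (1, 4), (2, 4)]
print(full_comparisons(4))          # 6 pairs without blocking
print(full_comparisons(5_000_000))  # 12499997500000 pairs for 5 million records
```

The trade-off is that a true match whose blocking key is wrong (for example, a mistyped birth year) is never compared at all, so the blocking key should be one of the more reliable fields.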

Exact Comparison: The core engine checks for equality across the chosen fields. This can be a single-field match (e.g., NHS Number) or a multi-field match (e.g., Last Name + First Name + DOB).

Match Relevancy: Many data management and entity resolution systems implement a match relevancy concept. In a deterministic context, this means defining which fields are “mandatory” and which are “optional.” For example, a rule might state that the National ID must match, but the Phone Number can match to add confidence.

Non-match Relevancy: Conversely, if values are present but do not match, it can contribute negatively. For instance, if the names match but the genders are different, the system might disqualify the pair even if other fields align, preventing “false positives” caused by family members sharing names.
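One way to express match and non-match relevancy is a rule with three field lists: mandatory, optional, and disqualifying. The sketch below is an illustration under that assumption; the `evaluate_pair` function and the field names are hypothetical and not taken from any particular product.

```python
def _both_present(a: dict, b: dict, field: str) -> bool:
    """True when both records carry a non-empty value for the field."""
    return a.get(field) not in (None, "") and b.get(field) not in (None, "")


def evaluate_pair(a, b, mandatory, optional=(), disqualifying=()):
    """Return (is_match, supporting_fields) for one deterministic rule."""
    # Non-match relevancy: a present-but-different value vetoes the pair.
    for field in disqualifying:
        if _both_present(a, b, field) and a[field] != b[field]:
            return False, []
    # Match relevancy: every mandatory field must be present and equal.
    for field in mandatory:
        if not _both_present(a, b, field) or a[field] != b[field]:
            return False, []
    # Optional fields never block a match; they only add supporting evidence.
    supporting = [f for f in optional if _both_present(a, b, f) and a[f] == b[f]]
    return True, supporting


alex_1 = {"last_name": "morgan", "first_name": "alex", "phone": "555-0100", "gender": "F"}
alex_2 = {"last_name": "morgan", "first_name": "alex", "phone": "555-0100", "gender": "F"}
alex_3 = {"last_name": "morgan", "first_name": "alex", "phone": "555-0100", "gender": "M"}

rule = dict(mandatory=("last_name", "first_name"), optional=("phone",), disqualifying=("gender",))

print(evaluate_pair(alex_1, alex_2, **rule))  # (True, ['phone'])
print(evaluate_pair(alex_1, alex_3, **rule))  # (False, [])
```

Checking the disqualifying fields first keeps the veto cheap and makes the reason for a rejection easy to log.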

At Lifebit, our Trusted Research Environment (TRE) can support these steps within a secure, federated architecture, helping teams apply consistent matching logic while keeping data governed in place.

How Data Quality Impacts Deterministic Matching Effectiveness

We have a saying in data science: “Garbage in, garbage out.” This is never truer than with deterministic matching. Because this method relies on exactness, its effectiveness is entirely dependent on data quality. If your source data is riddled with errors, deterministic matching will fail to find the majority of links.

- Null Values: If a “Unique ID” field is empty in one record, a deterministic rule cannot link it. In many clinical datasets, up to 30% of records may have missing identifiers.

- Character Standardization: Is it “10th Ave” or “Tenth Avenue”? Without standardization, these can look like two different entities to an exact-match rule. Even a trailing space at the end of a string can break a match.

- Data Decay: People move, change their names after marriage, or get new phone numbers. Deterministic matching is a “snapshot in time.” Without a strategy to handle historical data, these changes lead to fragmented records.

- Accuracy Rates: Precision can be very high when identifiers are truly unique and consistently captured. But if the data is messy, the number of false negatives (missed matches) increases sharply, leading to an incomplete view of the subject.
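If you have a hand-labeled sample of true matches, the effect of data quality can be measured directly as precision and recall. The sketch below assumes matches are represented as sets of ID pairs; the `precision_recall` helper and the numbers are made up for illustration.

```python
def precision_recall(predicted: set, truth: set) -> tuple:
    """Compute precision and recall from sets of predicted and true match pairs."""
    true_positives = len(predicted & truth)
    precision = true_positives / len(predicted) if predicted else 0.0
    recall = true_positives / len(truth) if truth else 0.0
    return precision, recall


# Hypothetical labeled sample: four real matches, of which exact rules find two.
truth = {(1, 2), (3, 4), (5, 6), (7, 8)}
predicted = {(1, 2), (3, 4)}

print(precision_recall(predicted, truth))  # (1.0, 0.5)
```

This is the typical deterministic profile: every link that was made is correct, but half of the real links were missed.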

Deterministic vs. Probabilistic Matching: Which Should You Choose?

While deterministic matching demands an exact match, probabilistic matching (often based on the Fellegi-Sunter model) uses statistical evidence and field-level weights to estimate how likely it is that two records represent the same real-world entity. It assigns a “match score” based on the frequency of certain values. For example, a match on a rare surname like “Quizenberry” is weighted more heavily than a match on a common surname like “Smith.”

| Feature | Deterministic Matching | Probabilistic Matching |
|---|---|---|
| Logic | Rules-based (Exact) | Evidence-based (Statistical) |
| Precision | Very High (Few False Positives) | Moderate to High |
| Recall | Low (Many False Negatives) | High (Catches variations) |
| Computational Cost | Low / Cheap | High / Expensive |
| Best Use Case | Unique IDs available | Messy, incomplete data |
| Thresholds | Fixed (Yes/No) | Customizable (Score-based) |
| Transparency | High (Easy to audit) | Low (Black-box algorithms) |

For a deeper dive into these strategies, you might want to check out Robin Linacre’s Blog on Probabilistic Data Linkage.

When Deterministic Matching is the Most Appropriate Choice

We generally recommend deterministic matching when the stakes are high and the data is structured. In these scenarios, the risk of a false positive (linking two different people) outweighs the risk of a false negative (missing a link).

- Medical Records and Clinical Safety: You do not want to “guess” if two patient records belong to the same person when prescribing medication or reviewing allergy history. A false match here could lead to a patient receiving the wrong treatment, which is a critical safety violation.

- Financial Transactions and AML: In banking, accuracy is non-negotiable. Anti-Money Laundering (AML) checks require exact matches against watchlists to ensure compliance. “Close enough” is not acceptable in a regulatory audit.

- Low-Latency Requirements: Because it is computationally “cheap,” deterministic matching is often faster for real-time applications. If you need to match a user profile in milliseconds during a web session, deterministic rules are the way to go.

- Unique ID Availability: If your dataset is rich with SSNs, NHS numbers, or verified email logins, deterministic is often the safest and most efficient baseline. Why use complex statistics when you have a unique key?

Limitations of Deterministic Matching in Complex Data

The main drawback with deterministic matching is its rigidity. It cannot easily account for realistic variation across systems, such as nicknames, formatting differences, missing fields, or changes over time. It lacks the “nuance” required for human-centric data.

In probabilistic matching, practitioners often use concepts like m and u probabilities:

- m probability: The chance that two records share a value because they are the same entity (e.g., the probability that two records for the same person both list the correct DOB).

- u probability: The chance that two records share a value by coincidence (e.g., the probability that two different people share the same first name).

Deterministic matching ignores these nuances. It treats a match on “First Name” with the same weight regardless of whether the name is “John” or “Xylophone.” This lack of weighting is why it often falls short in settings where there is no universal “golden ID” and data is frequently incomplete or entered by different people across different systems.
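For contrast, this is how the Fellegi-Sunter model turns m and u into field weights: agreement on a field contributes log2(m/u) and disagreement contributes log2((1-m)/(1-u)). The probabilities below are invented for illustration and are not estimates from any real dataset.

```python
import math


def agreement_weight(m: float, u: float) -> float:
    """Weight added when a field agrees: log2(m / u)."""
    return math.log2(m / u)


def disagreement_weight(m: float, u: float) -> float:
    """Weight added (usually negative) when a field disagrees: log2((1 - m) / (1 - u))."""
    return math.log2((1 - m) / (1 - u))


# Illustrative values only.
m = 0.95           # chance a true match agrees on first name
u_common = 0.02    # chance two different people share a common name
u_rare = 0.0001    # chance two different people share a very rare name

print(round(agreement_weight(m, u_common), 2))  # smaller positive weight
print(round(agreement_weight(m, u_rare), 2))    # much larger positive weight
print(round(disagreement_weight(m, u_common), 2))  # negative weight
```

A deterministic rule effectively collapses all of these weights into a single yes or no, which is why a rare name and a common name count the same.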

Real-World Use Cases and Advanced Hybrid Strategies

In the real world, we rarely use just one method. Most sophisticated platforms, including our R.E.A.L. (Real-time Evidence & Analytics Layer), use a mix of deterministic and probabilistic logic to achieve the best of both worlds.

Cross-Device Tracking in Digital Marketing:

In advertising, deterministic matching is used when a user logs in on both a phone and a desktop with the same email. This allows for accurate “people-based” IDs. However, because not everyone logs in everywhere, it lacks scale. Marketers often use deterministic matches to “seed” their probabilistic models, using the known matches to train the system on how to recognize likely matches among anonymous users.

Master Data Management (MDM) for Retail:

Organizations use deterministic rules to link records from online purchases, registration forms, and social media to create a “Single Customer View.” If a customer uses the same credit card number across three different transactions, the system deterministically links them to one profile, allowing for personalized marketing and better inventory management.

Privacy-Preserving Record Linkage (PPRL)

A major advancement in deterministic matching is Privacy-Preserving Record Linkage (PPRL). In sensitive fields like genomics, we often cannot share raw PII (like names or SSNs) due to GDPR or HIPAA regulations. PPRL uses cryptographic hashing (like SHA-256) to turn PII into a unique string of characters (a “token”).

If two different hospitals hash the same NHS number using the same algorithm and the same secret salt (or key), they will produce the same token. They can then match their records deterministically using these tokens without ever seeing the underlying sensitive data. The salt has to stay secret: identifiers such as NHS numbers come from a small, structured space, so an unsalted hash could be reversed simply by hashing every possible value. This is a cornerstone of the work we do at Lifebit, enabling researchers to link datasets across international borders while keeping patient identifiers private.
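A minimal sketch of token-based matching follows. It uses HMAC-SHA256 from the Python standard library as the keyed hash, which is our substitution for the generic "hash plus salt" described above. The `tokenize` helper, the key, and the sample identifier are placeholders for illustration.

```python
import hashlib
import hmac


def tokenize(identifier: str, secret_key: bytes) -> str:
    """Turn an identifier into a keyed SHA-256 token (hex string)."""
    # Normalize first, so formatting differences do not produce different tokens.
    normalized = "".join(identifier.split()).upper()
    return hmac.new(secret_key, normalized.encode("utf-8"), hashlib.sha256).hexdigest()


# Placeholder key: in practice this would be generated and exchanged securely.
shared_key = b"example-key-agreed-out-of-band"

# Each site tokenizes locally; only the tokens are compared.
token_site_a = tokenize("943 476 5919", shared_key)
token_site_b = tokenize("9434765919", shared_key)

print(token_site_a == token_site_b)                        # True
print(token_site_a == tokenize("9434765919", b"another"))  # False: different key
```

Because the comparison is still plain equality on tokens, everything said earlier about standardization applies before hashing: one stray character yields a completely different token.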

Combining Deterministic Matching with Machine Learning

The future of record linkage is cascading mixed heuristic matching. This basically means we apply deterministic rules first (to catch the easy, high-confidence matches) and then use probabilistic or Machine Learning (ML) models to sort through the remaining “maybe” pairs. This “waterfall” approach ensures that the most certain matches are handled quickly and cheaply, while the complex cases get the computational attention they deserve.
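The waterfall can be sketched as a two-stage function. The second stage here uses `difflib.SequenceMatcher` from the standard library purely as a stand-in for a real probabilistic or ML model, and the 0.85 threshold is an arbitrary assumption.

```python
from difflib import SequenceMatcher


def deterministic_pass(a: dict, b: dict) -> bool:
    """Stage 1: exact match on a strong identifier (email in this sketch)."""
    return bool(a.get("email")) and a.get("email") == b.get("email")


def similarity_score(a: dict, b: dict) -> float:
    """Stage 2 stand-in: string similarity on names, between 0 and 1."""
    return SequenceMatcher(None, a["name"].lower(), b["name"].lower()).ratio()


def cascade(a: dict, b: dict, review_threshold: float = 0.85) -> str:
    """Apply the cheap exact rule first; only unresolved pairs reach the scorer."""
    if deterministic_pass(a, b):
        return "match"
    if similarity_score(a, b) >= review_threshold:
        return "review"
    return "non-match"


r1 = {"name": "John Smith", "email": "j.smith@example.com"}
r2 = {"name": "Jon Smith", "email": "j.smith@example.com"}
r3 = {"name": "Jon Smith", "email": None}
r4 = {"name": "Alice Wong", "email": None}

print(cascade(r1, r2))  # match: resolved by the exact rule
print(cascade(r1, r3))  # review: no shared email, but the names are close
print(cascade(r1, r4))  # non-match
```

Routing close pairs to "review" rather than auto-merging them keeps the high-precision guarantee of the first stage intact.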

We use tools like Zingg, an open-source ML library, to help scale this. ML models can learn from “labeled pairs” (records humans have already confirmed as matches) to identify patterns that a simple rule might miss. For example, an ML model might learn that in a specific dataset, a match on “Last Name” and “Zip Code” is 95% likely to be a match even if the “First Name” is missing. This “active learning” approach allows the system to get smarter over time, reducing the need for manual review by data stewards.

Handling Time-Series Data with Dynamic Time Warping

Sometimes, “matching” isn’t about two static records, but two patterns over time. This is where Dynamic Time Warping (DTW) comes in. This is a more advanced form of deterministic pattern matching.

Imagine comparing two heart rate monitors or two sets of sales data. The patterns might be identical, but one is slightly faster or shifted in time. DTW allows us to “warp” the time axis to find the best possible alignment between these sequences. This is a critical component of our data harmonization efforts at Lifebit, especially when aligning multi-omic data or longitudinal patient records that may have been collected at different intervals across different clinical sites.
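For readers who want to see the mechanics, here is a textbook dynamic-programming version of DTW on two numeric sequences, using absolute difference as the local cost. It is a plain O(n·m) sketch with made-up data, not the implementation used in any particular platform.

```python
def dtw_distance(x, y) -> float:
    """Classic DTW: minimum cumulative cost of aligning sequence x with sequence y."""
    n, m = len(x), len(y)
    inf = float("inf")
    # cost[i][j] = best alignment cost of x[:i] with y[:j]
    cost = [[inf] * (m + 1) for _ in range(n + 1)]
    cost[0][0] = 0.0
    for i in range(1, n + 1):
        for j in range(1, m + 1):
            local = abs(x[i - 1] - y[j - 1])
            cost[i][j] = local + min(
                cost[i - 1][j],      # x advances while y holds
                cost[i][j - 1],      # y advances while x holds
                cost[i - 1][j - 1],  # both advance together
            )
    return cost[n][m]


signal = [0, 1, 2, 3, 2, 1, 0]
shifted = [0, 0, 1, 2, 3, 2, 1, 0]   # same shape, delayed by one step
different = [3, 3, 3, 3, 3, 3, 3]

print(dtw_distance(signal, shifted))    # 0.0
print(dtw_distance(signal, different))  # 12.0
```

The shifted series aligns at zero cost because DTW lets one point in the first series map to two points in the second, which is exactly the "warping" described above.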

Frequently Asked Questions about Record Linkage

Why does deterministic matching have more false negatives?

Because it is rigid. If your rule says “First Name + Last Name + ZIP must match,” and a user moves to a new house (new ZIP), the system will fail to link them. It prioritizes precision (being right) over recall (finding everyone). In a deterministic system, a single character difference (like a hyphenated name vs. a non-hyphenated one) is enough to break the link. This is why data standardization is so vital; it attempts to minimize these “artificial” differences before the matching begins.

What are the computational costs of deterministic matching?

They are very low. Since the system is simply checking for “A = B,” it requires minimal CPU cycles compared to the complex statistical calculations, weightings, and string-distance algorithms (like Levenshtein distance) required for probabilistic matching. This makes it highly scalable for massive datasets. You can run deterministic matching on billions of rows in a fraction of the time it would take to run a full probabilistic model, making it the ideal first pass in any data pipeline.

How do privacy regulations affect data matching?

With the rise of GDPR in Europe and the FTC’s focus in the US, many identifiers (like IP addresses and device IDs) are now classed as PII. This makes traditional deterministic matching harder because you cannot simply move data to a central location.

At Lifebit, we solve this through federated governance. Instead of moving sensitive PII to a central server for matching, our platform allows the matching to happen within a secure Trusted Data Lakehouse (TDL) at the data source. We use the PPRL techniques mentioned earlier to match records without exposing the raw data, ensuring compliance with local privacy laws while still delivering global insights.

Can deterministic matching handle “Fuzzy” logic?

Strictly speaking, no. Deterministic matching is exact. However, many people use “deterministic” to describe a system that uses a series of exact rules to simulate flexibility. For example, you might have 10 different deterministic rules (Rule 1: Match on SSN; Rule 2: Match on Email; Rule 3: Match on Name + DOB). If any of these rules are met, it’s a match. While this increases recall, each individual rule is still deterministic. True “fuzzy” matching is the domain of probabilistic models.
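The multi-rule idea can be sketched as an ordered list of exact rules where the first one that fires wins. The rule names and field names below are hypothetical.

```python
# Ordered from strongest to weakest evidence; each rule is a tuple of fields
# that must all be present and exactly equal.
RULES = [
    ("ssn", ("ssn",)),
    ("email", ("email",)),
    ("name_dob", ("first_name", "last_name", "dob")),
]


def first_matching_rule(a: dict, b: dict, rules=RULES):
    """Return the name of the first rule satisfied by the pair, or None."""
    for name, fields in rules:
        if all(a.get(f) not in (None, "") and a.get(f) == b.get(f) for f in fields):
            return name
    return None


a = {"ssn": None, "email": "pat@example.com", "first_name": "pat", "last_name": "lee", "dob": "1990-02-03"}
b = {"ssn": None, "email": "pat.lee@example.org", "first_name": "pat", "last_name": "lee", "dob": "1990-02-03"}
c = {"ssn": None, "email": None, "first_name": "patricia", "last_name": "lee", "dob": "1990-02-03"}

print(first_matching_rule(a, b))  # name_dob
print(first_matching_rule(a, c))  # None
```

Recording which rule fired is useful for auditing: a link made on an SSN deserves more trust than one made on name plus date of birth.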

What is a “Golden Record” in deterministic matching?

In Master Data Management, a “Golden Record” (or Single Source of Truth) is the master version of a data entity created by merging all linked records. Deterministic matching is the primary tool used to create these. Once the system identifies that five different records across five different databases all belong to “Patient X,” it merges them into one Golden Record that contains the most recent and accurate information from all sources.
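One simple survivorship policy is "most recent non-empty value wins" for each field. The sketch below assumes every linked record carries an ISO 8601 `updated_at` timestamp; that field name and the policy itself are assumptions for illustration, and real MDM systems usually apply richer, per-field rules.

```python
def build_golden_record(linked_records: list) -> dict:
    """Merge linked records, letting newer non-empty values overwrite older ones."""
    golden = {}
    # ISO 8601 timestamps sort correctly as plain strings.
    for record in sorted(linked_records, key=lambda r: r["updated_at"]):
        for field, value in record.items():
            if value not in (None, ""):
                golden[field] = value
    return golden


linked = [
    {"patient_id": "X", "phone": "555-0100", "address": "12 main street", "updated_at": "2021-05-01"},
    {"patient_id": "X", "phone": None, "address": "7 oak avenue", "updated_at": "2023-09-12"},
]

print(build_golden_record(linked))
# {'patient_id': 'X', 'phone': '555-0100', 'address': '7 oak avenue', 'updated_at': '2023-09-12'}
```

The older phone number survives because the newer record left that field empty, while the address is taken from the most recent update.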

Conclusion

Deterministic matching is the reliable, no-nonsense backbone of data integration. It provides the high-precision links that researchers and clinicians need when there is zero room for error. In an era where “big data” is often synonymous with “messy data,” the certainty provided by exact-match rules is more valuable than ever. It serves as the first line of defense against data fragmentation, ensuring that the most obvious and critical connections are made with the highest confidence the underlying identifiers allow.

However, as we’ve seen, its reliance on “perfect” data means it often needs to be part of a broader, more sophisticated strategy. By combining deterministic matching with probabilistic models, machine learning, and privacy-preserving technologies like hashing, organizations can build a comprehensive view of their data without sacrificing accuracy or security.

At Lifebit, we believe that the most powerful insights come from harmonizing diverse, complex datasets without compromising security. Whether you are linking patient records across continents or trying to find patterns in multi-omic data, our federated AI platform provides the tools to make those connections accurately and securely. We bridge the gap between siloed data and unified discovery.

Ready to see how we can unify your siloed data and implement a world-class entity resolution strategy? Explore the Lifebit Platform and find out how we’re making secure, large-scale research a reality.