Stop Collecting and Start Analyzing with Federated Data Analytics

Why 97% of Hospital Data Stays Locked Away—And How to Fix It

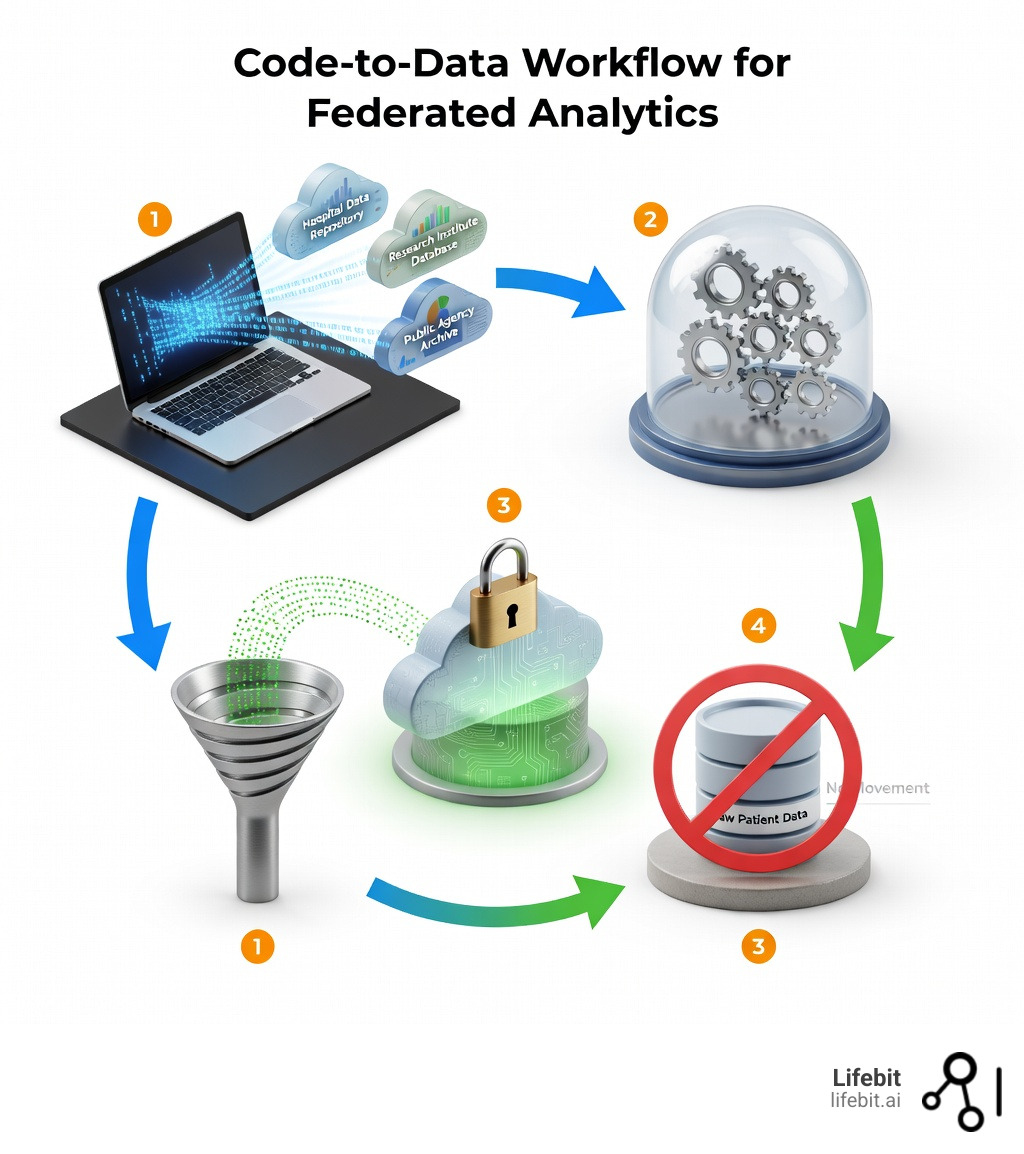

Federated data analytics is a privacy-preserving approach that enables organizations to run statistical queries and generate insights across multiple data sources—hospitals, research centers, government agencies—without moving or centralizing sensitive information. Instead of collecting data into a single warehouse, analytics code travels to where the data lives, processes it locally, and returns only aggregated results.

Key characteristics of federated data analytics:

- Code-to-data approach: Analysis scripts execute at each data location, not the other way around

- No data movement: Raw patient records, genomic files, and clinical data never leave their secure environments

- Privacy by design: Techniques like differential privacy and secure multiparty computation protect individual records

- Real-time access: Query fresh data without waiting for ETL pipelines or batch transfers

- Regulatory compliance: Meets GDPR, HIPAA, and data sovereignty requirements by keeping data in its original jurisdiction

Right now, 97% of hospital data goes unused—not because it lacks value, but because privacy regulations, institutional silos, and transfer costs make centralization impossible. During the COVID-19 pandemic, federated genomics projects analyzed data from nearly 60,000 individuals across institutions without compromising patient privacy. Federated approaches can boost sample sizes by 10-fold or more, dramatically improving variant classification for underrepresented populations in rare disease research.

The traditional model (extract, transform, load) is broken. A single whole-genome sequence generates 100-200 GB of data. Multiply that by thousands of patients across dozens of hospitals, and you’re looking at petabytes of data to move, months of legal negotiations, and data that’s already stale by the time it reaches your analytics platform.

I’m Maria Chatzou Dunford, CEO and co-founder of Lifebit, where we’ve spent over a decade building federated data analytics infrastructure for genomics and biomedical research across secure, compliant environments. Our work with public sector institutions and pharmaceutical organizations has shown that the shift from “move data to analysis” to “bring analysis to data” isn’t just technically feasible—it’s the only scalable path forward.


What is Federated Data Analytics and Why Does It Beat Centralization?

In the old days of data science, we were like kids trying to build the world’s biggest LEGO castle, but we insisted that every single brick had to be shipped to our bedroom first. If a brick was too heavy, too fragile, or belonged to a friend whose parents didn’t trust us, we just couldn’t use it. That’s centralization. This model worked when data was measured in megabytes, but in the era of petabyte-scale genomics and high-resolution medical imaging, the physics of data movement has become a barrier to progress.

Federated data analytics flips the script. It uses data virtualization to create a unified view of information scattered across the globe. Instead of moving the “bricks” (the data), we send our “instructions” (the code) to each site. This approach is gaining massive traction because it solves the “data gravity” problem. Coined by Dave McCrory, data gravity describes the idea that as data sets grow in size, they become harder to move, eventually pulling applications and services toward them. Leaving data in place also supports more sustainable bioinformatics: it eliminates redundant storage and the carbon footprint associated with large data transfers.

Furthermore, the historical shift from on-premise servers to the cloud was supposed to solve accessibility, but it actually created new “cloud silos.” Federated analytics acts as the bridge between these clouds, allowing a researcher to query an AWS bucket in the US and an Azure instance in the UK simultaneously without the data ever crossing the Atlantic.

As we explain in our complete guide to federated data analysis, the benefits are clear:

- Reduced Data Movement: You save on egress fees and storage costs. Egress fees alone can account for up to 20% of a research project’s cloud budget; federation reduces this to near zero.

- Real-Time Access: You aren’t analyzing a backup from six months ago; you’re querying the live database. This is critical for infectious disease monitoring where a week’s delay can cost lives.

- Security: The data owner maintains physical control, reducing the “attack surface” of a massive central honeypot. If a central warehouse is breached, everything is lost. In a federated model, a breach at one site does not compromise the entire network.

How Federated Data Analytics Differs from Federated Learning

While people often use these terms interchangeably, they are actually cousins, not twins. Understanding the distinction is vital for choosing the right architecture for your project.

- Federated Analytics (FA): Think of this as “collaborative data science.” It’s about computing descriptive statistics—like finding the average blood pressure of patients across ten hospitals or identifying the most frequent genetic variants in a population. It answers “What is happening right now?” FA is often used for feasibility studies, cohort discovery, and quality control. It involves simpler mathematical operations but requires high precision.

- Federated Learning (FL): This is “collaborative machine learning.” It’s about training complex AI models. Each site trains a local version of a model, and only the “learnings” (model weights) are sent back to be merged. It answers “Can we predict what will happen next?” FL is computationally intensive and often requires multiple rounds of communication between the central server and the local nodes.

According to research on federated computation concepts, FA is often the prerequisite for FL. You need to understand the distribution of your data through analytics before you can effectively train a model on it. Without FA, you might train a model on biased or poorly harmonized data, leading to “garbage in, garbage out” on a global scale.
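To make the FA side concrete, here is a minimal sketch of the “average blood pressure across hospitals” example. The site names, readings, and the `LocalSummary` type are hypothetical, and a real deployment would add the governance and privacy controls discussed later. The point is simply that each site releases a sum and a count, never a patient row.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class LocalSummary:
    """The only thing a site releases: aggregates, no row-level values."""
    total: float
    count: int


def summarize_locally(readings: list[float]) -> LocalSummary:
    # Runs inside the hospital; only the sum and the count leave the site.
    return LocalSummary(total=sum(readings), count=len(readings))


def federated_mean(summaries: list[LocalSummary]) -> float:
    # Pooling sums and counts reproduces the mean over all records.
    # Averaging the per-site means instead would over-weight small sites.
    n = sum(s.count for s in summaries)
    if n == 0:
        raise ValueError("no records reported by any site")
    return sum(s.total for s in summaries) / n


# Hypothetical systolic readings (mmHg) held at three hospitals.
site_data = {
    "hospital_a": [120.0, 130.0],
    "hospital_b": [110.0],
    "hospital_c": [140.0, 150.0, 160.0],
}
summaries = [summarize_locally(rows) for rows in site_data.values()]
global_mean = federated_mean(summaries)
```

Federated learning follows the same pattern, except that the released object is a set of model weights and the exchange repeats over many rounds.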

Comparing Traditional Data Federation and Modern Analytics

“Wait,” you might say, “hasn’t data federation existed for decades?” Yes, but modern federated data analytics is a different beast. Traditional federation, often called “Enterprise Information Integration” (EII), focused on “SQL pushdown”—sending a simple query to a remote database. It worked for small tables but choked on complex analytics or unstructured data like genomic sequences.

Modern systems, as detailed in our ultimate guide to federated analytics, use “virtual schemas” and containerized compute. This allows us to run heavy-duty Python scripts, R-based statistical models (like DataSHIELD), and even complex bioinformatic pipelines right where the data sits. We’ve moved from simple “Lookups” to “Deep Insights.” This evolution is powered by technologies like Docker and Kubernetes, which allow us to package the entire analysis environment and deploy it consistently across diverse institutional infrastructures.

Core Architecture: How Federated Systems Work Without Data Transfer

If you peek under the hood of a system like Lifebit’s, you’ll find a sophisticated orchestration layer. This is the “brain” of the operation. It manages the workflow, ensuring that the right code goes to the right place at the right time, while maintaining a strict audit trail of every action taken.

The architecture typically consists of four critical components:

- Orchestration Engine: Coordinates the distribution of analysis tasks. It breaks down a global query into sub-tasks, schedules them across the network, and monitors their execution. If one site is offline, the engine manages the retry logic or adjusts the final calculation accordingly.

- Data Connectors: Standardized bridges (like vantage6) that speak to local databases (SQL, NoSQL, or Object Storage). These connectors abstract away the underlying database complexity, presenting a uniform interface to the researcher regardless of whether the data is in a legacy Oracle database or a modern S3 bucket.

- Security Gateways: These act as digital bouncers. They use “allow-lists” of approved algorithms and perform static code analysis to ensure that the incoming analytics script isn’t trying to perform unauthorized actions, such as exfiltrating raw data or scanning the local network.

- Result Aggregator: Combines the local answers into a final, global insight. This isn’t just a simple addition; it often involves complex statistical weighting to account for different sample sizes and data distributions across sites.

This “compute-to-data” model is the heart of a federated research environment. It ensures that the researcher never sees the raw data, only the mathematical result. This shift is fundamental: we are moving from a world of “data sharing” to a world of “insight sharing.”
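The four components can be sketched in a few dozen lines. This is an illustration under stated assumptions, not how Lifebit or any specific platform implements them: `SiteNode`, `APPROVED_TASKS`, and `orchestrate` are hypothetical names, the “network” is an in-process function call, and the gateway is reduced to a simple allow-list lookup.

```python
from typing import Callable, Optional


def count_and_sum(rows: list[float]) -> dict[str, float]:
    """An approved analysis: returns aggregates only, never the rows."""
    return {"n": float(len(rows)), "sum": float(sum(rows))}


# Security gateway: only analyses registered here may run at a site.
APPROVED_TASKS: dict[str, Callable[[list[float]], dict[str, float]]] = {
    "count_and_sum": count_and_sum,
}


class SiteNode:
    """Hypothetical stand-in for one institution's federated node."""

    def __init__(self, name: str, rows: list[float], online: bool = True):
        self.name = name
        self._rows = rows  # raw data stays private to the node
        self.online = online

    def run(self, task_name: str) -> dict[str, float]:
        if not self.online:
            raise ConnectionError(f"{self.name} is unreachable")
        if task_name not in APPROVED_TASKS:
            raise PermissionError(f"{task_name!r} is not on the allow-list")
        return APPROVED_TASKS[task_name](self._rows)


def orchestrate(task_name: str, nodes: list[SiteNode]) -> dict:
    """Dispatch one task to every node, then pool the partial results."""
    partials, skipped = [], []
    for node in nodes:
        try:
            partials.append(node.run(task_name))
        except ConnectionError:
            skipped.append(node.name)  # offline site: report it and carry on
    n = sum(p["n"] for p in partials)
    # Pooling sums and counts weights each site by its sample size.
    mean: Optional[float] = sum(p["sum"] for p in partials) / n if n else None
    return {"mean": mean, "n": int(n), "sites_skipped": skipped}


nodes = [
    SiteNode("site_a", [4.0, 6.0]),
    SiteNode("site_b", [11.0]),
    SiteNode("site_c", [1.0, 2.0, 3.0], online=False),
]
result = orchestrate("count_and_sum", nodes)
```

The result reports which sites were skipped, so whoever reads the number knows it covers two sites rather than three. A task name that is not on the allow-list raises `PermissionError` at the node before any data is touched.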

Handling Horizontal vs. Vertical Partitioning in Federated Data Analytics

Data isn’t always split up in the same way, and the architecture must adapt to these different “shapes” of distributed data.

- Horizontal Partitioning: This is the most common. Different sites have the same type of data for different people. For example, Hospital A and Hospital B both have columns for “Age,” “Diagnosis,” and “Genotype,” but they have different sets of patients. This is the primary use case for boosting sample sizes in rare disease research, where no single hospital has enough patients to reach statistical significance.

- Vertical Partitioning: This is the “hard mode.” Different sites have different information about the same people. For example, Hospital A has clinical records (history, medications), while a specialized lab has the genomic data for the same patient. To analyze this, the system must perform “Private Set Intersection” (PSI) to link the records without revealing the identities of the patients who don’t overlap between the datasets.

Handling vertical partitioning requires advanced “entity resolution” and adherence to genomics standards like those from the Global Alliance for Genomics and Health (GA4GH). This ensures we are linking the right records using cryptographic hashes rather than names or social security numbers.
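As a rough illustration of linking on cryptographic hashes, the sketch below has each site replace its patient identifiers with a keyed hash (HMAC-SHA256) before anything is compared. This is a deliberately simplified stand-in and not a true Private Set Intersection protocol: anyone holding the shared key could test guessed identifiers, which real PSI is designed to prevent. The identifiers, field values, and key are all hypothetical.

```python
import hashlib
import hmac


def pseudonymize(identifier: str, shared_key: bytes) -> str:
    # Normalize first so "NHS-001" and " nhs-001 " map to the same token.
    normalized = identifier.strip().lower().encode("utf-8")
    return hmac.new(shared_key, normalized, hashlib.sha256).hexdigest()


def tokenize(records: dict[str, dict], shared_key: bytes) -> dict[str, dict]:
    """Run at each site: swap the patient identifier for a keyed hash."""
    return {pseudonymize(pid, shared_key): rec for pid, rec in records.items()}


def link(clinical: dict[str, dict], genomic: dict[str, dict]) -> dict[str, dict]:
    """Join two tokenized datasets on the tokens they have in common."""
    shared_tokens = clinical.keys() & genomic.keys()
    return {t: {**clinical[t], **genomic[t]} for t in sorted(shared_tokens)}


# Hypothetical key, agreed out of band between the two data holders.
KEY = b"example-linkage-key"

hospital = tokenize(
    {"NHS-001": {"diagnosis": "condition_x"}, "NHS-002": {"diagnosis": "condition_y"}},
    KEY,
)
lab = tokenize(
    {" nhs-001 ": {"variant": "variant_1"}, "NHS-003": {"variant": "variant_2"}},
    KEY,
)
linked = link(hospital, lab)
```

Only the one patient present at both sites appears in `linked`, keyed by a token rather than by name or ID number.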

Steps for Implementing a Federated System

You can’t just flip a switch and be “federated.” It requires a strategic roadmap that balances technical, legal, and cultural shifts:

- Governance Agreements: Define who can ask what. This is often the longest step, involving “Data Use Agreements” (DUAs) that specify the purpose of the research and the limitations on data access.

- Data Harmonization: Ensuring “Male/Female” at Site A matches “M/F” at Site B. This often involves mapping local data to a Common Data Model (CDM) like OMOP or HL7 FHIR. Our federated data governance guide covers this in depth.

- Technical Standards: Adopting tools like RO-Crate for data packaging and GA4GH WES (Workflow Execution Service) for running standardized pipelines.

- Pilot Deployment: Start with synthetic data to test the “plumbing.” This allows you to verify that the orchestration engine and security gateways are functioning correctly without risking real patient information.

- Production Scaling: Connecting the real, high-value datasets and opening the platform to the broader research community.
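The Data Harmonization step is where many pilots stall, so here is a small sketch of what “Male/Female at Site A matches M/F at Site B” looks like in code. The mapping table and the `UNKNOWN` fallback are assumptions for illustration; a real project would map to the concept identifiers of its chosen Common Data Model rather than to these ad hoc codes.

```python
from collections import Counter
from typing import Optional

# Hypothetical local spellings mapped onto one shared vocabulary.
CANONICAL_SEX = {"male": "M", "m": "M", "female": "F", "f": "F"}


def harmonize_sex(raw: Optional[str]) -> str:
    if raw is None:
        return "UNKNOWN"
    return CANONICAL_SEX.get(raw.strip().lower(), "UNKNOWN")


def harmonize_site(rows: list[dict]) -> tuple[list[dict], Counter]:
    """Return harmonized rows plus a tally of values that failed to map."""
    harmonized, unmapped = [], Counter()
    for row in rows:
        code = harmonize_sex(row.get("sex"))
        if code == "UNKNOWN":
            unmapped[str(row.get("sex"))] += 1
        harmonized.append({**row, "sex": code})
    return harmonized, unmapped


site_a = [{"id": 1, "sex": "Male"}, {"id": 2, "sex": "Female"}]
site_b = [{"id": 7, "sex": "M"}, {"id": 8, "sex": "f"}, {"id": 9, "sex": "x"}]

rows_a, unmapped_a = harmonize_site(site_a)
rows_b, unmapped_b = harmonize_site(site_b)
```

Returning the tally of unmapped values alongside the data gives each site a quality report to act on before real queries start running.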

Privacy-Preserving Techniques: Securing Distributed Data Sources

How do we guarantee that a clever hacker can’t reverse-engineer a patient’s identity from an average score? If a query returns the average age of patients with a rare disease in a small town, and only one person has that disease, their privacy is gone. To prevent this, we use a “toolbox of mitigations” known as Privacy-Enhancing Technologies (PETs).

- Differential Privacy (DP): This is the gold standard for statistical privacy. We add a mathematically calculated amount of “noise” to the results. It’s like looking at a photo through a slightly frosted window—you can see the crowd, but you can’t recognize a specific face. The “privacy budget” (epsilon) determines the balance between data accuracy and privacy protection. A lower epsilon means more privacy but less precision.

- Secure Multiparty Computation (SMPC): Data is split into encrypted fragments called “secret shares.” No single party can see the whole picture, but they can still do math on the pieces together. It’s like three people wanting to know their average salary without telling each other their individual pay. They each split their salary into three random numbers that add up to that salary, keep one piece, and send one piece to each of the other two. Each person then adds up the pieces they hold and announces only that subtotal. Adding the three subtotals gives the combined salary, and therefore the average, without anyone’s individual pay being revealed.

- Trusted Execution Environments (TEEs): Think of this as a “black box” inside the computer’s processor (e.g., Intel SGX). The data is decrypted and analyzed only inside this secure enclave. Even the system administrator with root access to the server cannot see what is happening inside the TEE. This provides a hardware-based layer of security that complements software-based encryption.

- Homomorphic Encryption (HE): This is the “holy grail” of data privacy. It allows you to perform calculations on encrypted data without ever decrypting it. While historically too slow for practical use, recent breakthroughs in “Fully Homomorphic Encryption” (FHE) are making it viable for specific genomic calculations, such as searching for specific DNA sequences.
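To show how the epsilon trade-off in differential privacy plays out, here is a minimal sketch of the Laplace mechanism applied to a patient count. It is an illustration only: the function names are ours, the sensitivity of 1 assumes a simple counting query, and production systems use hardened noise samplers rather than plain floating-point arithmetic.

```python
import random


def laplace_noise(scale: float, rng: random.Random) -> float:
    # The difference of two independent exponential draws with mean `scale`
    # follows a Laplace distribution centered on zero with that scale.
    return rng.expovariate(1.0 / scale) - rng.expovariate(1.0 / scale)


def dp_count(true_count: int, epsilon: float, rng: random.Random) -> float:
    """Release a count with Laplace noise calibrated to epsilon."""
    if epsilon <= 0:
        raise ValueError("epsilon must be positive")
    sensitivity = 1.0  # adding or removing one patient moves a count by 1
    return true_count + laplace_noise(sensitivity / epsilon, rng)


rng = random.Random(42)  # seeded only to make the demo repeatable
strict = dp_count(100, epsilon=0.1, rng=rng)   # more privacy, more noise
relaxed = dp_count(100, epsilon=1.0, rng=rng)  # less privacy, less noise
```

With epsilon at 0.1 the typical error is around ten patients; at 1.0 it is around one. That is the accuracy-versus-privacy dial described above.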

Research into private summation and other techniques ensures that we can maintain a high level of accuracy while providing ironclad privacy. For a deep dive, check out our federated data sharing guide.
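Private summation is easy to sketch with additive secret sharing, the idea behind the salary example above. The code below simulates all parties in one process, which a real protocol would never do, and the modulus and salary figures are assumptions chosen for illustration.

```python
import secrets

# Public modulus; it only needs to be larger than any possible true sum.
MODULUS = 2**61 - 1


def split_into_shares(value: int, n_parties: int) -> list[int]:
    """Split a value into n random-looking shares that sum to it (mod MODULUS)."""
    shares = [secrets.randbelow(MODULUS) for _ in range(n_parties - 1)]
    last = (value - sum(shares)) % MODULUS
    return shares + [last]


def secure_sum(private_values: list[int]) -> int:
    n = len(private_values)
    # Row i holds the shares party i generated; column j is what party j holds.
    share_matrix = [split_into_shares(v, n) for v in private_values]
    # Each party adds up the shares it holds and publishes only that subtotal.
    subtotals = [
        sum(share_matrix[i][j] for i in range(n)) % MODULUS for j in range(n)
    ]
    return sum(subtotals) % MODULUS


salaries = [52_000, 61_000, 70_000]  # hypothetical, known only to each owner
total = secure_sum(salaries)
average = total / len(salaries)
```

Each individual share is a uniformly random number, so a party that sees only the pieces sent to it learns nothing about anyone else’s salary. The published subtotals reveal only the combined total.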

Addressing Regulatory Compliance and Data Sovereignty

For many organizations, federated data analytics isn’t just a “nice to have”—it’s a legal requirement. Under GDPR (Europe), HIPAA (USA), and LGPD (Brazil), moving sensitive health data across borders is a regulatory nightmare. Many countries are now implementing “Data Sovereignty” laws that strictly forbid the export of genomic data from their territory.

Federation solves this by respecting these boundaries. The data stays in its home country, satisfying residency laws, while the insights travel. We follow the “Five Safes” framework to ensure compliance:

- Safe Projects: Is the research project approved by an ethics committee and legally permitted?

- Safe People: Has the researcher undergone identity verification and training?

- Safe Settings: Is the compute environment (the federated node) secure and audited?

- Safe Data: Has the data been de-identified or pseudonymized according to local standards?

- Safe Outputs: Are the final results checked for “disclosure risk” before being shown to the researcher?

This rigorous approach is why federated learning applications are becoming the gold standard for international consortia like the European Health Data Space (EHDS). By providing a technical solution to a legal problem, federation allows science to move at the speed of thought rather than the speed of litigation.
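The “Safe Outputs” check is the easiest of the five to automate. A minimal sketch, assuming a simple minimum-cell-size rule: any group smaller than a threshold is withheld before results leave the node. The threshold of 10 and the group names are hypothetical, and this sketch does not handle complementary suppression, where a withheld cell could be recovered by subtracting the others from a published total.

```python
from typing import Optional

MIN_CELL_SIZE = 10  # hypothetical; in practice set by each data owner's policy


def suppress_small_cells(
    counts: dict[str, int], min_cell_size: int = MIN_CELL_SIZE
) -> dict[str, Optional[int]]:
    """Replace any count below the threshold with None before release."""
    released: dict[str, Optional[int]] = {}
    for group, n in counts.items():
        released[group] = n if n >= min_cell_size else None
    return released


site_counts = {"age_18_40": 42, "age_41_65": 57, "age_over_65": 3}
safe_output = suppress_small_cells(site_counts)
```

Here the three patients over 65 are withheld, which addresses the “one person in a small town” problem described earlier.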

Real-World Impact: From Genomics to Global Security

The impact of federated data analytics is most visible in life sciences, where the “n=1” problem is a constant challenge. In rare disease research, a single hospital might only see one patient with a specific genetic mutation every five years. By federating data across 100 hospitals, researchers can find 20 patients in a single afternoon, accelerating the path to a diagnosis and treatment.

In a landmark study of BRCA1/BRCA2 (breast cancer genes), federated approaches boosted sample sizes by 10-fold. This is a game-changer for people of non-European ancestries. Historically, genomic databases have been heavily biased toward European populations. When a person of African or Asian descent gets a genetic test, they are more likely to receive a “Variant of Uncertain Significance” (VUS) result because there isn’t enough centralized data to compare them against. Federation allows us to tap into diverse datasets globally, closing the “genomic divide” and ensuring that precision medicine works for everyone, not just a privileged few.

Beyond healthcare, we see applications in:

- Cybersecurity: Organizations like Splunk use federated analytics to hunt for threats across different cloud environments. Instead of moving terabytes of log files to a central SIEM (Security Information and Event Management) system, they query the logs where they are generated, identifying patterns of a coordinated cyberattack in seconds.

- Finance: Banks use it to detect money laundering patterns across different institutions. Privacy and bank-secrecy rules require banks to keep customer data private, while Anti-Money Laundering (AML) rules require them to spot suspicious activity, and criminals often move money between multiple banks to hide their tracks. Federated analytics allows banks to collaborate on “suspicious activity” detection without sharing private customer lists.

- Public Sector: Government agencies use federation to analyze social trends across different departments (e.g., education, housing, and health) to improve urban planning while respecting the privacy of citizens.

According to a federated analytics survey, the ability to analyze “siloed institutional entities” is the biggest driver of innovation in the next decade. The survey highlights that 70% of data leaders believe that distributed data access will be more important than centralized storage by 2030.

Success Stories in Healthcare and Beyond

One of our favorite success stories involves the BY-COVID initiative. During the pandemic, the world needed answers fast. By connecting data from across Europe, researchers were able to identify host genetic factors that influenced COVID-19 severity. This required harmonizing data from dozens of different hospitals, each with its own local rules and languages. Federated analytics provided the only framework capable of handling this complexity in real time.

In the field of oncology, the “Federated Tumor Segmentation” (FeTS) initiative has used these techniques to train AI models on brain tumor images from 30 different institutions across three continents. The resulting model was significantly more accurate and generalizable than any model trained on data from a single hospital. As we discuss in federated learning in healthcare, this is literally saving lives by accelerating drug discovery and safety surveillance. It allows us to detect rare side effects of new medications much faster than traditional reporting methods.

Frequently Asked Questions about Federated Analytics

What are the main challenges of federated analytics?

It’s not all sunshine and rainbows. The biggest hurdle is Data Harmonization. If one hospital records blood pressure in mmHg and another uses a different scale, or if one uses ICD-9 codes and another uses ICD-10, your results will be nonsense. This requires a robust “semantic layer” to translate between different data dialects.

You also have to deal with System Heterogeneity—some sites might have lightning-fast GPU clusters, while others are running on older hardware with limited bandwidth. The orchestration engine must be “resource-aware” to prevent the slowest node from bottlenecking the entire analysis. Finally, the Governance Complexity of getting twenty legal departments to sign one contract is a feat of human endurance that often requires specialized “federation managers” to navigate.

How does it reduce data engineering costs?

Traditional data engineering is expensive and brittle. You need a small army to build and maintain ETL (Extract, Transform, Load) pipelines. In fact, some organizations report that 86% of their analysts are using out-of-date data because they’re waiting on engineering resources to update the central warehouse.

Federation reduces this by:

- Eliminating ETL: You don’t need to transform and move data constantly. You map the data once at the source, and it’s ready for any future query.

- Reduced Storage: No need for a “second copy” of the data in a warehouse, which can double your storage costs and create synchronization errors.

- Config-Driven Pipelines: Systems like Databricks have shown that a config-driven data platform can allow two engineers to do the work of 100 by automating the connections and monitoring the health of the federated nodes.

Is federated analytics compatible with data lakehouses?

Absolutely. In fact, they are a perfect match. A “Federated Data Lakehouse” combines the flexibility of a data lake (storing raw genomic files) with the performance and ACID transactions of a data warehouse (querying clinical tables). This allows for unified governance across all your data sources. In this model, the lakehouse acts as the local storage engine for each federated node, providing the high-performance compute needed to process complex queries locally before sending the results back to the central coordinator.

What about network latency and reliability?

This is a common concern. If you are running a query across 50 sites, what happens if the internet goes down at one site? Modern federated systems use “asynchronous execution.” The central coordinator sends out the tasks and waits for results to trickle back. If a site is slow, the system can provide a “partial result” with a confidence interval, or it can wait until the site comes back online to finalize the report. Because we are only moving small result sets (kilobytes) rather than raw data (terabytes), the actual time spent on the network is usually negligible compared to the time spent on local computation.
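Asynchronous execution with partial results can be sketched with Python’s standard `asyncio` library. Everything here is simulated: `query_site` sleeps instead of making a network call, and the site names, delays, and deadline are made up. The sketch reports which sites are missing rather than computing a confidence interval.

```python
import asyncio


async def query_site(name: str, delay_s: float, n: int, total: float) -> dict:
    # Stand-in for a remote call; the sleep simulates network and compute time.
    await asyncio.sleep(delay_s)
    return {"site": name, "n": n, "sum": total}


async def gather_with_deadline(site_specs: list[tuple], deadline_s: float) -> dict:
    tasks = {asyncio.create_task(query_site(*spec)): spec[0] for spec in site_specs}
    done, pending = await asyncio.wait(list(tasks), timeout=deadline_s)
    for task in pending:
        task.cancel()  # stop waiting on sites that missed the deadline
    await asyncio.gather(*pending, return_exceptions=True)
    results = [task.result() for task in done]
    n = sum(r["n"] for r in results)
    return {
        "mean": sum(r["sum"] for r in results) / n if n else None,
        "sites_reporting": sorted(r["site"] for r in results),
        "sites_missing": sorted(tasks[task] for task in pending),
    }


specs = [
    ("site_a", 0.01, 2, 250.0),
    ("site_b", 0.01, 1, 110.0),
    ("site_c", 5.0, 3, 450.0),  # simulated slow or offline site
]
report = asyncio.run(gather_with_deadline(specs, deadline_s=0.5))
```

The coordinator returns after half a second with a mean based on two sites and an explicit note that the third has not reported. A production system could instead keep the slow task alive and update the report once it lands.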

Conclusion: The Future of Distributed Intelligence

The era of “hoarding” data is ending. The future belongs to those who can analyze data wherever it resides. At Lifebit, we believe that federated data analytics is the key to unlocking the 97% of data that is currently sitting dark in hospital silos.

By bringing the analysis to the data, we aren’t just saving money on cloud storage or egress fees. We are enabling a new kind of global collaboration—one where a researcher in New York can securely query a dataset in London or Singapore to find the cure for a rare disease, all while the patient’s privacy remains perfectly intact.

The technology is here. The standards are ready. It’s time to stop collecting and start analyzing.