How AI-Driven Research Is Solving the World’s Hardest Math Problems

AI-Driven Research Is Changing Science Faster Than Most Labs Can Keep Up

AI-driven research is reshaping how scientists discover, analyze, and publish knowledge — and the gap between those using it and those who aren’t is widening fast.

Here’s what the data shows at a glance:

| What AI Does for Researchers | Impact |
|---|---|
| Publication output | 3x more papers published |
| Citation impact | ~5x more citations over a career |
| Career speed | Leadership roles ~1.5 years earlier |
| Junior researcher retention | Less likely to drop out of academia |
| Abstract screening time | Up to 99.8% reduction |
| Eligibility screening time | ~72% faster than manual review |

But it’s not all upside. A large-scale analysis of over 41 million scientific papers found that AI-assisted research covers 4.6% less topic territory than conventional studies — and generates 22% less engagement across the broader literature. Individual researchers win. The collective scientific ecosystem? That’s a more complicated story.

The stakes are especially high in biomedical and life sciences research, where fragmented datasets, regulatory constraints, and siloed institutions already slow progress. AI promises to compress timelines from months to minutes — but only when deployed responsibly, with the right infrastructure.

I’m Dr. Maria Chatzou Dunford, CEO and Co-founder of Lifebit and a computational biologist with over 15 years working at the intersection of genomics, federated data systems, and AI-driven research. My work building compliant, federated platforms for drug discovery and precision medicine has given me a front-row seat to both the transformative potential — and the real limitations — of AI in science.


3x More Papers: Why AI-Driven Research Users Dominate the Field

If you feel like your colleagues are suddenly publishing at a superhuman pace, you aren’t imagining it. Recent studies have confirmed a growing “AI divide” in the natural sciences. Researchers who embrace machine learning and generative models aren’t just slightly more productive—they are dominating the leaderboard. This productivity gap is not merely a matter of writing speed; it represents a fundamental shift in how scientific value is created and synthesized.

According to a massive analysis of 41 million papers, scientists using AI published 3.02 times as many papers over their careers compared to their “AI-free” peers. But it isn’t just about quantity. These AI-boosted papers received 4.84 times more citations, suggesting that AI is helping researchers hit the “sweet spot” of high-impact, highly visible topics. By leveraging large language models (LLMs) and graph neural networks, these scientists can synthesize information across disparate fields—such as connecting a breakthrough in material science to a problem in vascular biology—at a scale impossible for the human mind alone.

For junior scientists, the impact is even more profound. Those using AI-driven research tools reached leadership positions nearly 1.5 years faster and were significantly less likely to drop out of the grueling academic rat race. By automating the “drudge work” of science (formatting bibliographies, summarizing vast literature pools, and generating initial code for data analysis), AI allows these researchers to focus on high-level strategy and experimental design. This is particularly vital in AI for medical research, where the complexity of human biology requires every bit of cognitive bandwidth a researcher can muster. The use of AI has also been shown to reduce the “time-to-first-publication” for PhD students by an average of 14 months, fundamentally altering the career trajectory of the next generation of innovators.

Accelerating Discovery with AI-Driven Research Tools

The secret sauce for these high-performing researchers often starts with how they handle existing knowledge. The traditional literature review—spending weeks or months reading through PDFs—is being replaced by dynamic literature mapping. This shift from “search” to “synthesis” allows researchers to identify “white spaces”—areas where research is lacking but potential impact is high.

Tools like Litmaps allow researchers to visualize citation networks, instantly identifying research gaps and potential collaborators. Instead of a linear search, you get a “galaxy map” of how ideas have evolved over time. This visualization helps prevent “reinventing the wheel” and ensures that new studies are built on the most robust existing evidence.

The efficiency gains are staggering when we look at the numbers. In systematic reviews, which are the gold standard for evidence-based medicine, AI is turning a marathon into a sprint:

| Research Task | Manual Time | AI-Assisted Time | Time Saved |
|---|---|---|---|
| Abstract Review | ~41.5 Hours | ~11.8 Hours | 72% |
| Qualitative Coding | 100% (Baseline) | 25% | 75% |
| Data Extraction | Weeks | Minutes | ~99% |
| Hypothesis Generation | Days | Seconds | ~99.9% |
| Peer Review Simulation | Hours | Minutes | ~90% |
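
The “Time Saved” column is a relative reduction against the manual baseline. As a quick check, here is a minimal Python sketch that recomputes the two rows with exact figures; the function name `percent_time_saved` is an illustrative choice, not part of any tool mentioned above.

```python
def percent_time_saved(manual: float, assisted: float) -> float:
    """Percentage reduction in time relative to the manual baseline."""
    if manual <= 0:
        raise ValueError("manual baseline must be positive")
    return 100.0 * (manual - assisted) / manual


# Figures taken from the table above.
abstract_review = percent_time_saved(41.5, 11.8)      # hours
qualitative_coding = percent_time_saved(100.0, 25.0)  # percent of baseline

print(f"Abstract review: {abstract_review:.1f}% saved")
print(f"Qualitative coding: {qualitative_coding:.1f}% saved")
```

The abstract review row rounds to the 72% shown in the table. The rows expressed as “Weeks” or “Minutes” cannot be recomputed this way without exact durations.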

Measuring Success with New Efficiency Metrics

How do we actually prove these tools are making science “better” and not just “faster”? We use sophisticated economic and statistical models like Data Envelopment Analysis (DEA) and Stochastic Frontier Analysis (SFA). These metrics allow research organizations to quantify the relationship between inputs (funding, hours, data) and outputs (discoveries, papers, patents). SFA, in particular, helps labs identify how close they are to their “production frontier”—the maximum possible output given their current resources.
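
To make the “production frontier” idea concrete, here is a minimal sketch of the simplest DEA case: one input, one output, and constant returns to scale. In that special case a lab's efficiency score reduces to its output-per-input ratio divided by the best ratio in the group. Full DEA with several inputs and outputs requires solving a linear program per lab, and SFA requires fitting a statistical model, so treat this only as an illustration. The lab names and numbers are invented.

```python
from dataclasses import dataclass


@dataclass
class Lab:
    name: str
    funding_musd: float  # input: funding in millions of USD (hypothetical)
    papers: float        # output: papers published (hypothetical)


def dea_efficiency(labs):
    """Single-input, single-output efficiency under constant returns to scale.

    Each lab is scored against the most productive lab, which defines
    the frontier and receives a score of 1.0.
    """
    ratios = {lab.name: lab.papers / lab.funding_musd for lab in labs}
    frontier = max(ratios.values())
    return {name: ratio / frontier for name, ratio in ratios.items()}


labs = [
    Lab("A", funding_musd=2.0, papers=10),
    Lab("B", funding_musd=4.0, papers=30),
    Lab("C", funding_musd=5.0, papers=25),
]
scores = dea_efficiency(labs)
for name, score in scores.items():
    print(f"Lab {name}: efficiency {score:.2f}")
```

A score below 1.0 indicates how far a lab sits from the frontier set by its most productive peer; it says nothing about the quality of the output.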

Recent research on AI efficiency suggests that AI isn’t just a linear improvement; it’s a shift in the production frontier itself. By using transfer learning and hyperparameter optimization, researchers can take a model trained on one set of data and “fine-tune” it for a completely different problem in record time. This “recycling” of intelligence is a hallmark of modern AI-driven research, allowing labs to pivot to new viral threats or therapeutic targets with unprecedented agility.

The “Monocropping” Risk: Is AI Narrowing the Scope of Human Inquiry?

While individual scientists are thriving, the scientific “forest” might be getting a bit less diverse. Think of it like industrial farming: if every farmer only plants the one corn variety that the AI says will grow fastest, the whole ecosystem becomes vulnerable. This is what experts call “epistemic monocropping.” Because AI models are trained on existing data, they tend to steer researchers toward “popular” problems that already have massive datasets.

The result? AI-driven papers cover 4.6% less topic territory. We are getting very, very good at answering the same types of questions, but we might be losing the “weird,” heterodox ideas that lead to true scientific revolutions. This creates a scientific “Matthew Effect,” where certain well-documented pathways receive even more attention, while high-risk, high-reward “blue sky” research is sidelined because it lacks the massive training datasets AI requires. If the AI doesn’t see enough data on a niche topic, it may categorize it as “noise” rather than a potential breakthrough.

Furthermore, AI papers tend to orbit “superstar” papers, creating feedback loops where 80% of citations go to less than 25% of the literature. This reduces the interconnectedness of science, making it harder for a discovery in physics to spark an idea in biology. At Lifebit, we believe the solution lies in privacy-preserving AI that allows researchers to “see” into diverse, siloed datasets without compromising security. By accessing the “long tail” of scientific data—the data currently locked away in small hospital systems or niche research labs—we can break these feedback loops and encourage a more diverse research landscape.
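
Citation concentration of this kind is easy to measure on any corpus. The sketch below computes the share of all citations captured by the most-cited fraction of papers. The citation counts are made up for illustration and are not drawn from the 41-million-paper analysis.

```python
def top_share(citations, top_fraction):
    """Share of all citations received by the most-cited `top_fraction` of papers."""
    if not 0 < top_fraction <= 1:
        raise ValueError("top_fraction must be in (0, 1]")
    ranked = sorted(citations, reverse=True)
    k = max(1, round(len(ranked) * top_fraction))  # number of papers in the top group
    total = sum(ranked)
    return sum(ranked[:k]) / total if total else 0.0


# Hypothetical citation counts for twelve papers.
citations = [120, 80, 40, 10, 8, 6, 5, 4, 4, 3, 2, 2]
share = top_share(citations, 0.25)
print(f"Top 25% of papers hold {share:.0%} of citations")
```

Tracking this share over time for a field is one simple way to see whether a feedback loop around “superstar” papers is tightening.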

Safeguarding Diversity in AI-Driven Research

To prevent science from becoming a giant echo chamber, the community needs to implement structural safeguards. We can’t just hope for diversity; we have to fund it and build it into our methodologies. This involves a conscious effort to champion “Small Data” AI—models designed to find patterns in sparse, noisy, or unconventional datasets that foundation models might ignore.

- Funding Diversification: Grant agencies should set aside “protected shares” of funding for projects that use non-computational or “high-risk” methodologies. This ensures that the “human element” of intuition and physical experimentation remains a core part of the scientific process.

- Methodological Rotation: Researchers should be encouraged to “triangulate” their findings—using AI for the heavy lifting but rotating back to qualitative, ethnographic, or wet-lab methods to validate the “why” behind the data. This prevents the “black box” problem where a result is accepted without understanding the underlying mechanism.

- Editorial Evolution: Journals need to reward originality and theoretical depth over the sheer speed and scale that AI provides. Peer review processes should include specific checks for “AI-generated homogeneity” to ensure that the literature remains a vibrant tapestry of ideas.

- Algorithmic Auditing: Research institutions should regularly audit the AI models they use to ensure they aren’t inadvertently biasing research toward specific demographics or established theories.

This is especially critical in fields like AI for genomics, where the “most likely” answer isn’t always the “right” answer for a specific patient with a rare disease. In precision medicine, the outliers are often the most important data points, and an AI trained only on the “average” will fail those who need it most.
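
One basic form of algorithmic auditing is to compare a model's accuracy across subgroups and flag any group that lags the best-performing one. The sketch below assumes predictions are already available as `(group, true_label, predicted_label)` records. The group names, labels, and the 0.1 gap threshold are hypothetical choices for illustration.

```python
from collections import defaultdict


def accuracy_by_group(records):
    """Compute accuracy per group from (group, y_true, y_pred) records."""
    correct = defaultdict(int)
    total = defaultdict(int)
    for group, y_true, y_pred in records:
        total[group] += 1
        correct[group] += int(y_true == y_pred)
    return {group: correct[group] / total[group] for group in total}


def flag_gaps(accuracy, max_gap=0.1):
    """Return groups whose accuracy trails the best group by more than max_gap."""
    best = max(accuracy.values())
    return sorted(g for g, acc in accuracy.items() if best - acc > max_gap)


# Hypothetical predictions: the model does well on common variants, poorly on rare ones.
records = [
    ("common_variant", 1, 1), ("common_variant", 0, 0),
    ("common_variant", 1, 1), ("common_variant", 0, 0),
    ("rare_variant", 1, 0), ("rare_variant", 1, 1),
    ("rare_variant", 0, 1), ("rare_variant", 0, 0),
]
accuracy = accuracy_by_group(records)
flagged = flag_gaps(accuracy)
print(accuracy, flagged)
```

A real audit would use more robust metrics and larger samples, but even this check makes it visible when a model trained on the “average” underperforms on the outliers.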

Scale or Fail: How High Performers Achieve Enterprise-Wide AI Benefits

There is a massive gap between organizations that “do AI” and those that “are AI-driven.” While 88% of organizations report using AI in at least one function, nearly two-thirds are stuck in the “pilot phase” graveyard. These organizations often treat AI as a shiny new tool rather than a fundamental shift in their operational DNA.

So, what do the “AI high performers” (the top 6% of organizations) do differently?

- Workflow Redesign: They don’t just sprinkle AI on top of old processes. They rebuild the workflow from the ground up to be “AI-first.” This means rethinking how data is collected, how teams are structured, and how success is measured.

- Heavy Investment: These leaders often invest more than 20% of their digital budgets into AI capabilities. This isn’t just for software; it’s for the infrastructure, the talent, and the cultural change management required to sustain an AI-driven ecosystem.

- Leadership Commitment: AI isn’t just a “tech project” relegated to the IT department; it’s a core business strategy driven by the C-suite. Leaders in these organizations understand that AI is a competitive necessity, not an optional upgrade.

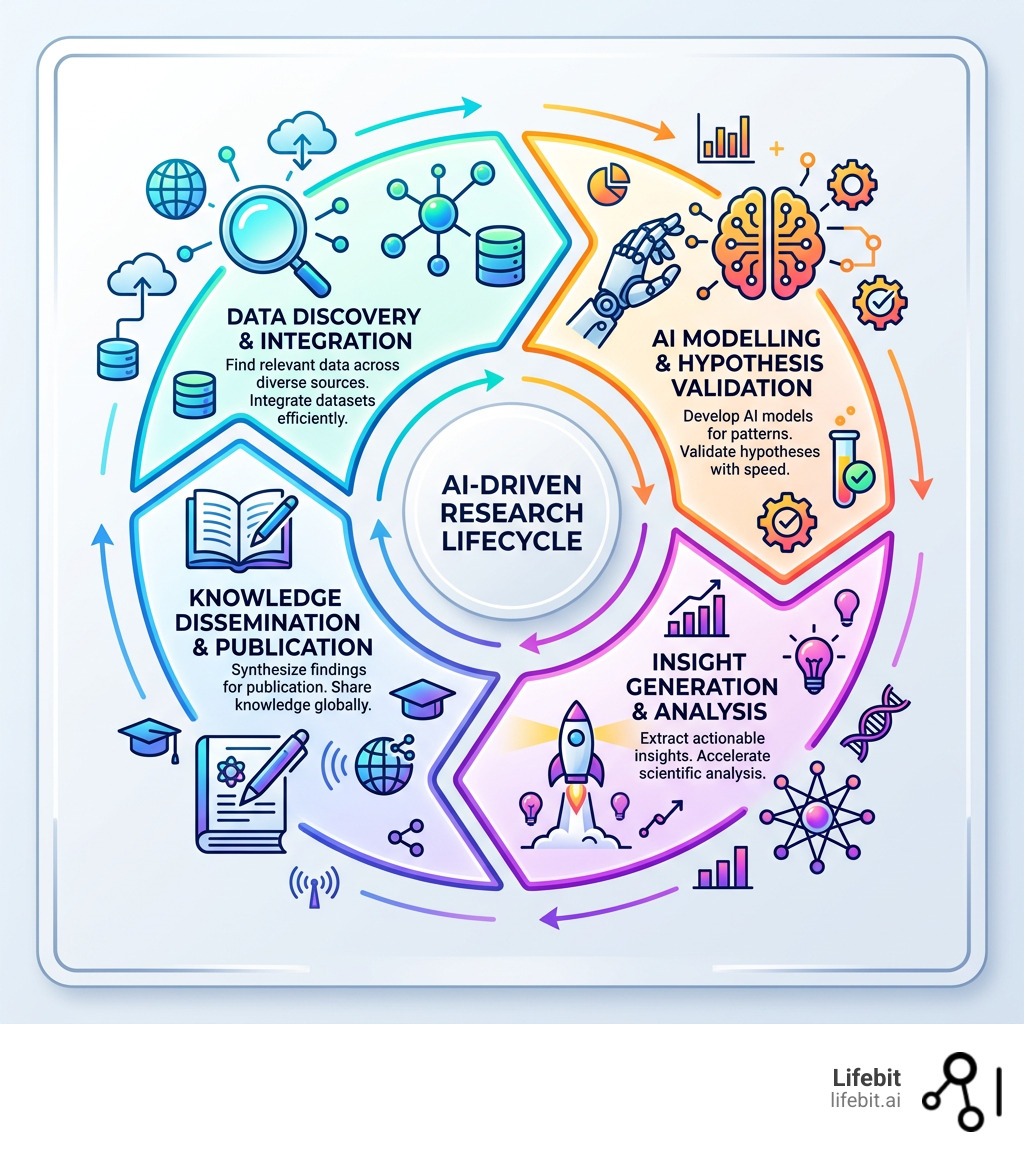

In AI-driven drug discovery, these high performers are moving beyond simple data crunching and toward “agentic” systems that can reason through complex problems. Unlike traditional AI, which requires a human to prompt every step, agentic systems are given a goal and then autonomously plan and execute the necessary steps to achieve it.

The Rise of Autonomous AI Agents in the Lab

We are entering the era of the “AI Scientist.” Systems like those developed by Sakana AI are now capable of automating the entire research lifecycle. This is not just automation; it is a closed-loop system where the AI acts as a collaborator. Imagine an AI agent that can:

- Generate a novel hypothesis: By scanning millions of papers and identifying unexplored chemical or biological interactions.

- Write the code to test it: Automatically generating the Python or R scripts needed for simulation or data analysis.

- Run the experiment: In a simulated environment or by interfacing with a “Self-Driving Lab” (SDL) where robotic arms perform physical wet-lab experiments.

- Visualize the results: Creating complex 3D models or heatmaps to explain the findings.

- Write a full scientific manuscript: Drafting the introduction, methods, results, and discussion sections.

- Perform its own peer review: Using a separate LLM-based agent to critique the work and suggest improvements before a human ever sees it.

In one instance, an AI co-scientist independently rediscovered a bacterial gene transfer mechanism that had taken human researchers years to uncover. These agents aren’t just tools; they are becoming essential partners in the quest for knowledge. This shift is already proving vital for generating real-world evidence with AI, where the sheer volume of patient data is too large for any human team to process manually. The ROI for these agentic systems is seen in the 10x reduction in the cost of identifying viable drug leads and the massive compression of discovery timelines.
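
The closed loop described in the list above (propose, test, review, revise) can be sketched in a few lines. This is not how Sakana AI or any specific system is implemented. The `propose`, `experiment`, and `review` functions are hypothetical stubs standing in for LLM calls and simulations, and the dose example is invented.

```python
from dataclasses import dataclass, field
from typing import Callable, List, Tuple


@dataclass
class Draft:
    hypothesis: str
    result: float
    review_notes: List[str] = field(default_factory=list)
    approved: bool = False


def run_research_loop(
    propose: Callable[[str], str],
    experiment: Callable[[str], float],
    review: Callable[[Draft], Tuple[bool, str]],
    max_rounds: int = 3,
) -> List[Draft]:
    """Iterate propose -> experiment -> review until approval or max_rounds."""
    history: List[Draft] = []
    feedback = ""
    for _ in range(max_rounds):
        hypothesis = propose(feedback)          # agent 1: generate a hypothesis
        result = experiment(hypothesis)         # agent 2: run code or simulation
        draft = Draft(hypothesis, result)
        draft.approved, note = review(draft)    # agent 3: automated peer review
        draft.review_notes.append(note)
        history.append(draft)
        if draft.approved:
            break
        feedback = note                         # reviewer feedback drives the next round
    return history


# Hypothetical stubs; a real system would call models and lab equipment here.
def propose(feedback: str) -> str:
    return "dose=2" if "increase" in feedback else "dose=1"


def experiment(hypothesis: str) -> float:
    return float(hypothesis.split("=")[1]) * 0.4


def review(draft: Draft) -> Tuple[bool, str]:
    if draft.result >= 0.5:
        return True, "effect size acceptable"
    return False, "effect too small; increase dose"


history = run_research_loop(propose, experiment, review)
for draft in history:
    print(draft.hypothesis, draft.result, draft.approved)
```

Capping the loop with `max_rounds` and keeping the full `history` gives a human reviewer a record of every rejected attempt, not just the final approved draft.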

Overcoming the Ethical and Technical Barriers of Machine Intelligence

Despite the excitement, we must address the “elephant in the server room.” AI-driven research faces significant hurdles that could derail progress if ignored. These barriers are not just technical; they are deeply ethical and sociological.

- Algorithmic Bias: If an AI is trained on data from only one demographic, its “discoveries” might only apply to that group. This is a life-or-death issue in healthcare, where clinical trials have historically underrepresented women and ethnic minorities. AI can amplify these biases if not carefully monitored.

- Data Privacy and Sovereignty: How do we train models on sensitive patient data without leaking that data? This is where federated learning in healthcare becomes the essential “middle way”—allowing AI to learn from data without the data ever leaving its secure home. This ensures compliance with strict regulations like GDPR and HIPAA while still enabling global-scale research.

- Hallucination and the “Black Box”: LLMs are notorious for “confident lying.” In science, a hallucinated citation or a made-up chemical property can lead to wasted years and millions of dollars. Furthermore, the lack of interpretability in deep learning models means we often don’t know why an AI reached a certain conclusion. In genomics, knowing a variant is associated with a disease is useless if we don’t understand the biological mechanism.

- The Reproducibility Crisis: AI has a dual role here. It can help identify inconsistencies in published data, but the complexity of the models themselves can make experiments harder to replicate if the exact model version, weights, and training data aren’t shared transparently.

- Skill Shortages: We need a new generation of “bilingual” researchers who understand both the biology and the Bayesian math behind the models. There is currently a massive talent gap for PhDs who are as comfortable with CRISPR gene editing as they are with transformer architectures.

To mitigate these risks, “Human-in-the-loop” (HITL) systems are essential. These systems use AI to generate possibilities but require human experts to validate the logic at critical checkpoints. This ensures that the speed of AI is balanced by the judgment and ethical nuance of human scientists.
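
The federated learning idea mentioned above can be illustrated with federated averaging: each site trains on its own data and shares only model weights, which a coordinator combines in proportion to sample counts. The sketch below is a toy simulation with a one-parameter linear model and invented data, not a description of any production platform. Real deployments add secure aggregation, access controls, and auditing on top.

```python
def local_update(w, data, lr=0.01, epochs=20):
    """Gradient descent on y = w * x using only this site's data."""
    for _ in range(epochs):
        grad = sum(2 * (w * x - y) * x for x, y in data) / len(data)
        w -= lr * grad
    return w


def federated_average(site_weights, site_sizes):
    """Combine site weights, weighted by each site's sample count."""
    total = sum(site_sizes)
    return sum(w * n for w, n in zip(site_weights, site_sizes)) / total


def train(sites, rounds=30):
    """Simulate training rounds; only weights cross the site boundary."""
    w = 0.0
    for _ in range(rounds):
        # In a real deployment each call below would run inside the site's
        # own environment; the coordinator would see only the returned weight.
        updates = [local_update(w, data) for data in sites]
        w = federated_average(updates, [len(data) for data in sites])
    return w


# Hypothetical data at two sites, both following y = 2x.
sites = [
    [(1.0, 2.0), (2.0, 4.0)],
    [(3.0, 6.0), (4.0, 8.0), (5.0, 10.0)],
]
w = train(sites)
print(f"Learned weight: {w:.4f}")
```

The global model recovers the shared relationship even though neither site's records are pooled, which is the property that makes the approach attractive under regulations like GDPR and HIPAA.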

Frequently Asked Questions about AI in Research

Can AI replace human researchers?

No. While AI is incredible at pattern recognition and data synthesis, it lacks human judgment, ethical nuance, and “out of the box” creativity. AI cannot decide which scientific questions are most worth asking or understand the societal implications of a discovery. Think of AI as a high-powered telescope: it helps you see further, but it doesn’t decide where to point or what the stars mean. We see it as augmentation, not replacement. The most successful labs will be those that combine human intuition with machine scale.

What are the best tools for literature reviews?

For mapping connections and visualizing the field, Litmaps is a standout. Semantic Scholar uses NLP to understand the intent behind citations, while Scopus remains a gold standard for high-quality, indexed data. For the “screening” phase, AI-driven assistants like Rayyan or Covidence can now reduce the time spent on titles and abstracts by over 90%, allowing researchers to focus on the full-text analysis of the most relevant papers.

How does AI impact research citations?

In the 41-million-paper analysis, AI-assisted papers received roughly 5x (4.84 times) more citations. This is partly due to “visibility gains”: AI helps researchers identify and write about trending, high-impact topics. However, there is a risk of “superstar” bias, where a few AI-generated “hits” drown out smaller, equally important studies. Researchers should make sure AI steers them not only toward what is popular but also toward what is scientifically necessary.

Is AI-driven research only for wealthy institutions?

While the initial costs of building custom AI infrastructure are high, the long-term effect of AI is actually democratizing. Cloud-based AI tools and federated data platforms allow smaller labs to access the same computational power and data insights as “Big Pharma.” By using open-source models and shared data environments, the barrier to entry is lowering, provided labs have a clear strategic approach to implementation.

How does AI impact the reproducibility of science?

AI can improve reproducibility by automating data collection and analysis, reducing human error. However, it also introduces challenges; if the specific AI model and its training parameters aren’t shared, other researchers cannot replicate the results. This is why “Open AI Science”—the practice of sharing models, code, and data together—is becoming the new gold standard for scientific integrity.
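
One lightweight way to share models, code, and data together is to publish a manifest alongside the paper that pins the exact model version and fingerprints the weights and training data. The sketch below uses Python's standard `hashlib` and `json` modules. The field names, the model name `variant-classifier`, and the placeholder byte strings are illustrative assumptions, not an established standard.

```python
import hashlib
import json


def sha256_hex(payload: bytes) -> str:
    """Return the SHA-256 fingerprint of a byte payload."""
    return hashlib.sha256(payload).hexdigest()


def build_manifest(model_name, model_version, weights, training_data, hyperparameters):
    """Record what another lab needs to confirm it is running the same setup."""
    return {
        "model_name": model_name,
        "model_version": model_version,
        "weights_sha256": sha256_hex(weights),
        "training_data_sha256": sha256_hex(training_data),
        "hyperparameters": hyperparameters,
    }


# Placeholder bytes; in practice these would be read from the actual files.
manifest = build_manifest(
    model_name="variant-classifier",
    model_version="1.2.0",
    weights=b"placeholder weights",
    training_data=b"placeholder training data",
    hyperparameters={"seed": 42, "learning_rate": 0.001},
)
print(json.dumps(manifest, sort_keys=True, indent=2))
```

If a replication attempt produces different fingerprints, the mismatch is visible before anyone spends time debugging divergent results.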

Conclusion: The Future of the Federated Lab

The future of AI-driven research isn’t just about faster computers; it’s about better connections. As we move toward autonomous AI agents and “self-driving” labs, the bottleneck will no longer be “how much can we compute?” but “how much data can we safely access?”

At Lifebit, we are building the infrastructure for this new era. Our federated platform allows researchers to run advanced AI/ML analytics across global, multi-omic datasets while maintaining the highest standards of security and compliance. We believe that by solving the data access problem, we can empower the next generation of AI Scientists to solve the world’s hardest problems—from climate change to curing rare diseases.

Secure your research with Lifebit’s Federated Data Platform and join the ranks of the high performers who are defining the future of science.