How to Use an AI Research Platform Without Becoming a Robot

2026 Guide: How to Choose an AI Research Platform Without Sacrificing Rigor

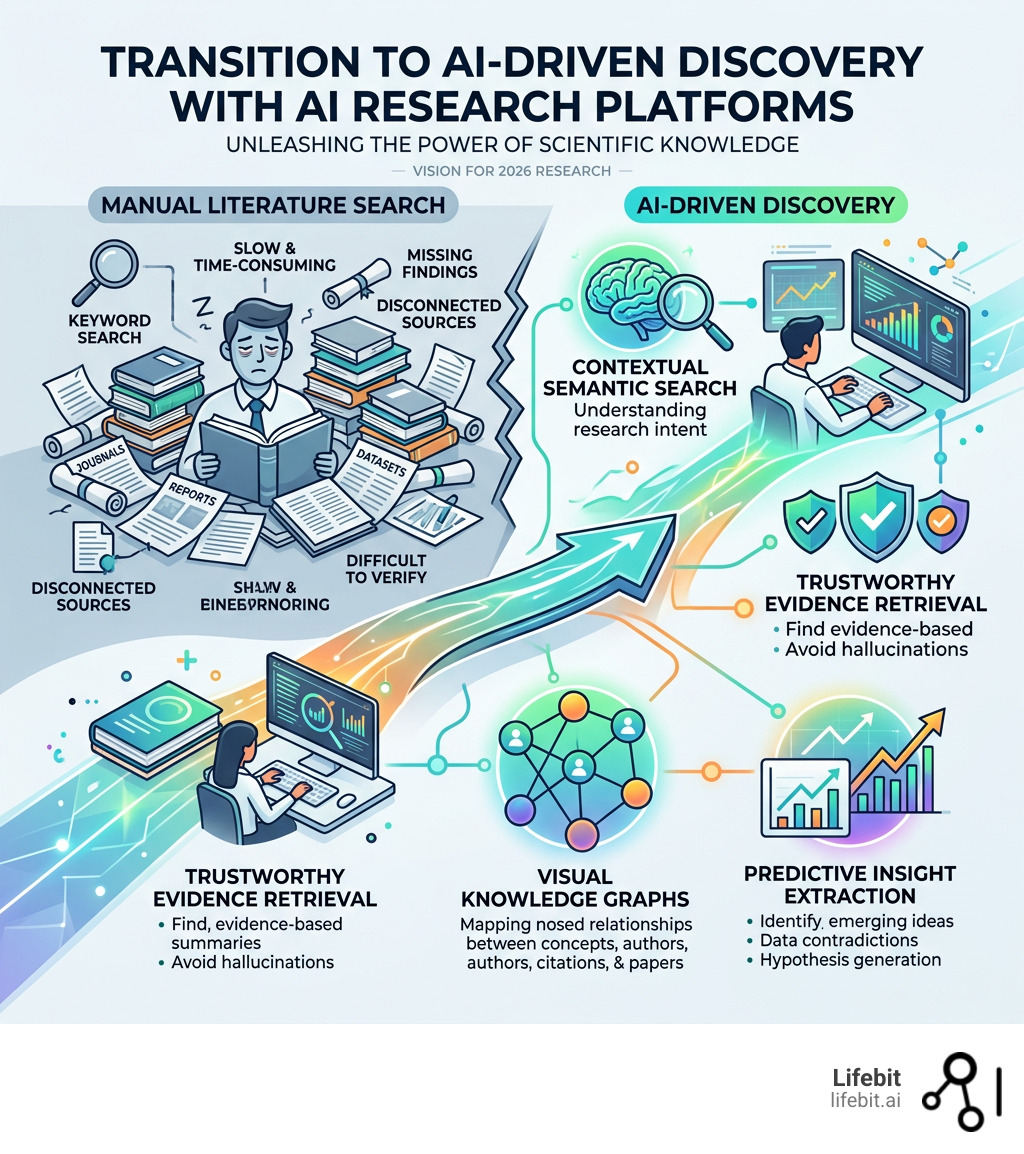

An AI research platform is a tool that uses artificial intelligence to help researchers find, analyze, and organize scientific literature, data, and evidence — faster and more accurately than traditional methods.

Research has never moved faster — and that’s the problem. Millions of new papers, preprints, and datasets are published every year. No human can keep up. Traditional keyword searches return endless lists that take hours to sort. Important findings get buried. Contradictory studies go unnoticed.

That’s why over 10 million researchers, students, and clinicians have turned to AI research platforms to cut through the noise — without sacrificing academic rigor.

But not every platform works the same way. Some specialize in citation analysis. Others shine in genomics, federated data, or model benchmarking. Picking the wrong one wastes time and can introduce real risks — like AI-generated summaries that aren’t grounded in actual evidence.

I’m Maria Chatzou Dunford, CEO and Co-founder of Lifebit and a computational biologist with over 15 years of experience building AI research platforms for biomedical discovery. In this guide, I’ll walk you through the features that actually move the needle — and how to choose the right AI research platform for your specific workflow.


Beyond the Search Bar: Core Features of a Modern AI Research Platform

When we talk about a modern AI research platform, we aren’t just talking about a “Google for Science.” We are talking about ecosystems that understand the context of human knowledge. The shift from simple keyword matching to semantic understanding has birthed features that were previously impossible.

One of the most transformative features is Smart Citations. Traditional citation counts are a vanity metric; they tell you how many people cited a paper, but not why. Modern platforms now analyze and classify billions of citations, telling you if a subsequent study supported, mentioned, or contradicted the original findings. This adds a layer of verifiable evidence to every search. For instance, if a researcher is looking at a controversial study on CRISPR-Cas9 off-target effects, the platform can instantly flag if the most recent 2025 studies have successfully replicated those results or if they have been largely refuted by the community.

Furthermore, these platforms provide:

- Full-text search: Moving beyond abstracts to index the entire body of 280M+ articles, books, and patents. This is critical because key data points, such as specific reagent concentrations or negative results, are often buried in the supplementary materials or the methods section, which traditional search engines ignore.

- SOTA Benchmarks: For those in technical fields, tracking State-of-the-Art (SOTA) performance across tens of thousands of benchmarks ensures you aren’t reinventing the wheel. This includes automated leaderboards for computer vision, NLP, and even specialized biological models.

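- Non-hallucinated insights: By grounding LLMs in real-world papers, these tools provide answers backed by direct links to the source material. This is achieved through a process called Retrieval-Augmented Generation (RAG): the system first retrieves relevant passages, then instructs the model to answer from those passages and cite a source for every claim it makes. Grounding reduces hallucinations rather than eliminating them, which is why the links back to the source text matter.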

- Knowledge Graph Visualization: Instead of a linear list of results, modern platforms map out the “intellectual landscape.” You can see how a discovery in physics in 2010 led to a breakthrough in medical imaging in 2024, identifying the key authors and institutions that bridged the gap.

Enhancing Discovery with an AI Research Platform

Traditional search engines are great if you know exactly what you’re looking for. But real research is often about following a trail of breadcrumbs. Advanced platforms have pioneered “curiosity-driven search.” Instead of looking at a static list, you start with one paper and the platform maps out related works, emerging topics, and author networks. This serendipitous discovery is vital for interdisciplinary research, where the solution to a problem in oncology might actually lie in a methodology used in marine biology.

This approach transforms literature review from a chore into an exploration. By visualizing how papers connect, you can spot “knowledge gaps”—areas where two fields overlap but no collaborative research has been published yet. For a deeper dive into how these tools fit into a broader ecosystem, check out our AI research platform guide.
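To make the "knowledge gap" idea concrete, here is a minimal sketch. It assumes each paper is a dictionary with hypothetical `fields` and `keywords` entries, and it treats a gap as two fields that share a keyword but have never appeared together on the same paper. Real platforms work from far richer graphs; this is only an illustration of the principle.

```python
from itertools import combinations


def knowledge_gaps(papers):
    """Return (field_a, field_b, shared_keywords) for fields that share
    keywords but never co-occur on a single paper."""
    field_keywords = {}  # field -> set of keywords seen in that field
    linked = set()       # pairs of fields that already share a paper
    for paper in papers:
        for field in paper["fields"]:
            field_keywords.setdefault(field, set()).update(paper["keywords"])
        for pair in combinations(sorted(paper["fields"]), 2):
            linked.add(frozenset(pair))

    gaps = []
    for a, b in combinations(sorted(field_keywords), 2):
        shared = field_keywords[a] & field_keywords[b]
        if shared and frozenset((a, b)) not in linked:
            gaps.append((a, b, sorted(shared)))
    return gaps


# Synthetic example data, invented for illustration only.
papers = [
    {"fields": ["oncology"], "keywords": ["biofilm imaging", "apoptosis"]},
    {"fields": ["marine biology"], "keywords": ["biofilm imaging", "coral"]},
    {"fields": ["oncology", "genomics"], "keywords": ["apoptosis"]},
]
print(knowledge_gaps(papers))
```

In this toy data, oncology and marine biology both use the same imaging method but share no paper, so that pair is reported as a candidate gap.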

Verifiable Evidence and Citation Analysis

The “reproducibility crisis” in science is real. To combat this, an AI research platform must offer more than just summaries; it must offer proof. Classification models now evaluate the sentiment of a citation with high confidence. This means the platform can distinguish between a paper that cites a study to build upon it and one that cites it to point out a flaw in its statistical power.

When you see a claim, the platform shows you the exact snippet of text from the citing paper. This allows you to see if a finding regarding, for example, colon cancer missegregation, has been affirmed by later proteomic comparisons. This level of transparency is essential for clinical researchers who are making decisions that affect patient lives. Before you fully commit to a tool, it is wise to research your AI research tools to ensure they meet the ethical standards of your institution, particularly regarding data provenance and algorithmic bias.
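A rough sketch of how classified citations might be stored and summarized is shown below. The `CitationStatement` class, its field names, and the three label strings are assumptions made for this example, not the schema of any particular platform. The classification itself (the hard part) is assumed to have already happened upstream.

```python
from collections import Counter
from dataclasses import dataclass

LABELS = ("supporting", "mentioning", "contradicting")


@dataclass(frozen=True)
class CitationStatement:
    citing_paper: str  # identifier of the paper that makes the citation
    snippet: str       # exact sentence in which the citation appears
    label: str         # one of LABELS, assigned by an upstream classifier


def summarize_citations(statements):
    """Count how many citing statements fall under each label."""
    counts = Counter(s.label for s in statements)
    return {label: counts.get(label, 0) for label in LABELS}


def evidence_for(statements, label):
    """Return (paper, snippet) pairs so a reader can check the claim."""
    return [(s.citing_paper, s.snippet) for s in statements if s.label == label]


# Synthetic statements, invented for illustration only.
statements = [
    CitationStatement("paper-A", "Our results replicate the original effect.", "supporting"),
    CitationStatement("paper-B", "We could not reproduce this finding.", "contradicting"),
    CitationStatement("paper-C", "See also earlier work on this topic.", "mentioning"),
    CitationStatement("paper-D", "This agrees with the reported values.", "supporting"),
]
print(summarize_citations(statements))
print(evidence_for(statements, "contradicting"))
```

Keeping the snippet next to the label is the point: the count tells you where to look, and the snippet lets you judge the evidence yourself.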

The Science of Speed: Hybrid Retrieval and LLM Embeddings

Why is one AI research platform faster or more accurate than another? It comes down to the retrieval architecture. Historically, we used BM25, a sparse lexical ranking function. It’s fast and great for keyword matching, but it’s “blind” to meaning. If you search for “feline,” BM25 on its own gives no credit to a paper that only uses the word “cat,” because the two share no terms.
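For readers who want to see the "blindness" directly, here is a small sketch of the standard Okapi BM25 formula in plain Python. The whitespace tokenizer and the parameter values `k1=1.5` and `b=0.75` are common defaults chosen for this example, not settings taken from any specific platform, and the function assumes a non-empty list of documents.

```python
import math
from collections import Counter


def bm25_scores(query, docs, k1=1.5, b=0.75):
    """Score each document against the query with Okapi BM25."""
    tokenized = [doc.lower().split() for doc in docs]
    n_docs = len(tokenized)
    avg_len = sum(len(tokens) for tokens in tokenized) / n_docs
    # Number of documents containing each term.
    doc_freq = Counter(term for tokens in tokenized for term in set(tokens))

    scores = []
    for tokens in tokenized:
        term_freq = Counter(tokens)
        score = 0.0
        for term in query.lower().split():
            if term not in term_freq:
                continue  # no lexical match means no contribution at all
            idf = math.log((n_docs - doc_freq[term] + 0.5) / (doc_freq[term] + 0.5) + 1)
            tf = term_freq[term]
            length_norm = k1 * (1 - b + b * len(tokens) / avg_len)
            score += idf * tf * (k1 + 1) / (tf + length_norm)
        scores.append(score)
    return scores


docs = ["the feline slept on the mat", "the cat slept on the mat"]
print(bm25_scores("feline", docs))
```

The second document scores exactly zero for the query "feline" even though it is about the same animal, which is the gap semantic retrieval is meant to close.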

Modern platforms use LLM-based vector embeddings for semantic retrieval. This maps words into a multi-dimensional space where “cat” and “feline” sit right next to each other. This allows the system to understand intent. If a researcher asks, “What are the side effects of this drug?”, the system understands that “adverse events,” “toxicities,” and “contraindications” are all semantically relevant terms.
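The geometry behind this can be sketched with cosine similarity. The three-dimensional vectors below are made up by hand for illustration; a real embedding model produces vectors with hundreds or thousands of dimensions, learned from text.

```python
import math


def cosine_similarity(a, b):
    """Cosine of the angle between two vectors of equal length."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)


# Hand-made toy vectors, NOT output from a real embedding model.
TOY_EMBEDDINGS = {
    "cat": [0.90, 0.80, 0.10],
    "feline": [0.85, 0.82, 0.12],
    "spreadsheet": [0.05, 0.10, 0.95],
}


def nearest(term, embeddings):
    """Return the other term whose vector is closest to `term`."""
    query = embeddings[term]
    scored = [
        (cosine_similarity(query, vector), name)
        for name, vector in embeddings.items()
        if name != term
    ]
    return max(scored)[1]


print(nearest("feline", TOY_EMBEDDINGS))
```

Because similarity is computed on vectors rather than on spelling, "feline" lands next to "cat" even though the two strings have nothing in common.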

| Feature | BM25 (Sparse) | LLM Embeddings (Dense) |
|---|---|---|
| Primary Signal | Keyword frequency | Semantic meaning |
| Speed | Extremely fast (milliseconds) | Slower (requires vector search) |
| Best For | Exact terms, IDs, acronyms | Natural language, “How-to” queries |
| Accuracy Metric | High precision for keywords | Higher Recall@k and nDCG@10 |
| Context Awareness | Low (word-to-word) | High (sentence-to-concept) |
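The Recall@k and nDCG@10 figures named in the table are standard ranking metrics, and both fit in a few lines. This sketch uses the common linear-gain form of nDCG; the function names and the shape of the inputs (a ranked list of IDs plus either a set of relevant IDs or a dictionary of graded gains) are choices made for this example.

```python
import math


def recall_at_k(ranked_ids, relevant_ids, k):
    """Fraction of all relevant documents that appear in the top k."""
    if not relevant_ids:
        return 0.0
    hits = sum(1 for doc_id in ranked_ids[:k] if doc_id in relevant_ids)
    return hits / len(relevant_ids)


def ndcg_at_k(ranked_ids, gains, k=10):
    """Normalized discounted cumulative gain with graded relevance.

    `gains` maps a document ID to its relevance grade; missing IDs count as 0.
    """
    def dcg(values):
        # Position 0 is discounted by log2(2) = 1, position 1 by log2(3), etc.
        return sum(g / math.log2(pos + 2) for pos, g in enumerate(values))

    actual = dcg([gains.get(doc_id, 0.0) for doc_id in ranked_ids[:k]])
    ideal = dcg(sorted(gains.values(), reverse=True)[:k])
    return actual / ideal if ideal > 0 else 0.0
```

Recall@k only asks whether the relevant papers made the cut, while nDCG also rewards putting the most relevant ones near the top, so the two are usually reported together.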

The “gold standard” today is hybrid retrieval, which combines both methods to ensure you don’t miss a specific gene ID while still capturing the broad conceptual context of the study. This is particularly relevant in scientific research on diffusion models, where scaling laws require precise data retrieval across massive, unstructured datasets.
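One common way to combine a sparse ranking and a dense ranking is reciprocal rank fusion (RRF). The sketch below assumes RRF with the conventional constant `k=60`; the article does not say which fusion method any given platform uses, so treat this as one plausible option rather than the method.

```python
from collections import defaultdict


def reciprocal_rank_fusion(rankings, k=60):
    """Merge several ranked lists of document IDs into one.

    Each document earns 1 / (k + rank) from every list it appears in.
    Ties are broken alphabetically so the output is deterministic.
    """
    fused = defaultdict(float)
    for ranking in rankings:
        for rank, doc_id in enumerate(ranking, start=1):
            fused[doc_id] += 1.0 / (k + rank)
    return sorted(fused, key=lambda doc_id: (-fused[doc_id], doc_id))


# Hypothetical result lists from a keyword search and a vector search.
sparse_hits = ["gene_id_paper", "review", "methods"]
dense_hits = ["review", "concept_paper", "gene_id_paper"]
print(reciprocal_rank_fusion([sparse_hits, dense_hits]))
```

Documents that both retrievers agree on rise to the top, while a paper found by only one of them (the exact gene ID match, or the conceptually related study) still stays in the list.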

Improving Accuracy on Your AI Research Platform

To reach production-grade accuracy, top platforms don’t just stop at the first search. They use a multi-stage pipeline designed to filter out noise and prioritize high-impact evidence:

- Query Rewriting: Expanding your shorthand or fixing typos. If you type “p53,” the system may expand this to include “TP53” or “tumor protein 53” to ensure comprehensive coverage.

- Bi-encoders: Rapidly narrowing down millions of papers to the top 1,000 candidates using vector similarity. This is the “coarse-grained” filtering stage.

- Cross-encoders: A more compute-heavy “reranking” that picks the absolute best top 20 results. Unlike bi-encoders, cross-encoders look at the query and the document simultaneously to determine the exact relevance score.

- Contextual Reranking: Factoring in the user’s previous research history or the specific domain (e.g., prioritizing clinical trials over theoretical physics if the user is a doctor).

This process balances latency vs. accuracy. It also allows for domain adaptation—ensuring that a search for “T-cells” in a medical context doesn’t return results about “cell phones.” For more on how data is structured for these tasks, see our data intelligence platform guide.
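The shape of such a pipeline can be sketched end to end with toy components. Everything here is a stand-in: the `SYNONYMS` table is hypothetical, the bag-of-words `encode` function plays the role of a bi-encoder (query and document are encoded separately), and `joint_score` plays the role of a cross-encoder (it looks at the query and the document together). Real systems use trained neural models at both stages.

```python
import math
from collections import Counter

# Hypothetical expansion table; a real system would use a curated ontology.
SYNONYMS = {"p53": ["tp53", "tumor protein 53"]}


def rewrite_query(query):
    """Stage 1: expand shorthand terms with known synonyms."""
    terms = query.lower().split()
    extra = [alt for term in terms for alt in SYNONYMS.get(term, [])]
    return " ".join(terms + extra)


def encode(text):
    """Bi-encoder stand-in: each text is encoded on its own."""
    return Counter(text.lower().split())


def cosine(a, b):
    dot = sum(a[term] * b[term] for term in a)
    norm = math.sqrt(sum(v * v for v in a.values())) * math.sqrt(sum(v * v for v in b.values()))
    return dot / norm if norm else 0.0


def joint_score(query, doc):
    """Cross-encoder stand-in: rewards query word pairs found in the doc."""
    q, d = query.lower().split(), doc.lower().split()
    doc_bigrams = set(zip(d, d[1:]))
    bigram_hits = sum(1 for pair in zip(q, q[1:]) if pair in doc_bigrams)
    return bigram_hits + cosine(encode(query), encode(doc))


def search(query, docs, n_candidates=3, n_results=2):
    expanded = rewrite_query(query)
    query_vec = encode(expanded)
    # Stage 2: cheap, coarse filtering over the whole collection.
    candidates = sorted(docs, key=lambda d: cosine(query_vec, encode(d)), reverse=True)[:n_candidates]
    # Stage 3: more expensive reranking over the short list only.
    return sorted(candidates, key=lambda d: joint_score(expanded, d), reverse=True)[:n_results]


docs = [
    "tumor protein 53 regulates apoptosis",
    "tp53 mutation in colon cancer",
    "protein folding dynamics in budding yeast cells",
    "cell phones and radiation",
]
print(search("p53", docs))
```

The design point is the funnel: the cheap scorer touches every document, and the expensive scorer only ever sees the handful of survivors, which is how the latency budget is kept under control.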

Specialized Workflows: From Life Sciences to Embodied AI

A general-purpose AI won’t cut it when you’re dealing with 800,000+ patient genomic records or training a robot to navigate a 3D scene. Specialized platforms are emerging to handle these high-stakes workflows. In these environments, the cost of an error isn’t just a bad search result; it’s a failed clinical trial or a safety hazard.

In embodied AI, platforms are now using 3D reasoning to help robots understand “who-knows-what” in a scene, improving theory-of-mind performance. This requires the AI to process spatial data alongside textual research. Meanwhile, in the lab, researchers use AI for medical research to bridge the gap between bench work and data science. If you are handling sensitive biological data, see our essential guide to AI platforms for biomedical data.

Accelerating Breakthroughs in the Life Sciences

In life sciences, the bottleneck isn’t finding a paper; it’s finding the data. Teams often spend months trying to build a patient cohort from siloed EHR (Electronic Health Record) and phenotypic data. The data is often messy, stored in different formats (HL7, FHIR, or custom CSVs), and spread across different hospital systems.

An AI research platform like Lifebit solves this by:

- Reducing time-to-insight: Turning weeks of manual cohort building into minutes of natural language querying. Instead of writing complex SQL queries, a researcher can simply ask, “Find all female patients over 50 with a history of hypertension and a specific BRCA1 mutation.”

- Unifying Silos: Syncing EHR data across multiple institutions to create a unified research commons without the need to physically move the data, which is often prohibited by law.

- Multi-omic Integration: Joining genotype and phenotype data automatically. This allows scientists to perform Genome-Wide Association Studies (GWAS) at scale, identifying the genetic drivers of complex diseases like Alzheimer’s or Type 2 Diabetes.

- Automated Data Harmonization: Using AI to map disparate data fields to a common data model (like OMOP), ensuring that “heart attack” in one database and “myocardial infarction” in another are recognized as the same clinical event.
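Harmonization and cohort building can be illustrated together in a short sketch. The `CONCEPT_MAP` dictionary is a hypothetical stand-in for a common data model such as OMOP, the record fields are invented for this example, and the patients are synthetic. A production system would map to standard concept identifiers and translate a natural language question into a filter like this one.

```python
# Hypothetical mapping from local terms to one shared term per concept.
CONCEPT_MAP = {
    "heart attack": "myocardial infarction",
    "mi": "myocardial infarction",
    "high blood pressure": "hypertension",
}


def harmonize(record):
    """Return a copy of the record with diagnoses mapped to shared terms."""
    diagnoses = set()
    for raw in record["diagnoses"]:
        term = raw.strip().lower()
        diagnoses.add(CONCEPT_MAP.get(term, term))
    return {**record, "diagnoses": diagnoses}


def build_cohort(records, sex, min_age, diagnosis, variant_gene):
    """Select patient IDs matching every criterion after harmonization."""
    cohort = []
    for record in map(harmonize, records):
        if (
            record["sex"] == sex
            and record["age"] > min_age
            and diagnosis in record["diagnoses"]
            and variant_gene in record["variant_genes"]
        ):
            cohort.append(record["patient_id"])
    return cohort


# Synthetic records from two imaginary hospital systems.
records = [
    {"patient_id": "H1-001", "sex": "F", "age": 62,
     "diagnoses": ["High blood pressure"], "variant_genes": {"BRCA1"}},
    {"patient_id": "H2-417", "sex": "F", "age": 57,
     "diagnoses": ["hypertension", "MI"], "variant_genes": {"BRCA1", "TP53"}},
    {"patient_id": "H2-533", "sex": "F", "age": 44,
     "diagnoses": ["hypertension"], "variant_genes": {"BRCA1"}},
    {"patient_id": "H1-090", "sex": "M", "age": 70,
     "diagnoses": ["high blood pressure"], "variant_genes": {"BRCA1"}},
]
print(build_cohort(records, sex="F", min_age=50, diagnosis="hypertension", variant_gene="BRCA1"))
```

Without the harmonization step, the first patient would be missed because her record says "High blood pressure" rather than "hypertension".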

This is the core of an AI-powered biomedical data platform, where the goal is to bring life-changing medicines to patients faster by removing the administrative and technical friction of data science.

Benchmarking and Evaluation Practices

How do we know if these AI models are actually getting smarter? We use benchmarks like the Evaluation Gauntlet or the suites published by MLCommons. Evaluations of this kind stress-test models on everything from sparse autoencoders to their ability to follow hard instructions, alongside simple overlap metrics such as BLEU. For example, the MLCommons MLPerf Training benchmark provides a time-to-solution metric: how long a system takes to train a model to a target quality on large GPU clusters. These benchmarks are essential for ensuring that the AI research platform you choose is actually state-of-the-art and not just using outdated models behind a fancy interface.

Security, Compliance, and the Future of Federated Research

As we move toward 2026, the biggest hurdle for an AI research platform isn’t the AI—it’s the security. When dealing with 3 million diagnoses or sensitive genomic sequences, you cannot simply “upload” data to a public cloud. The risk of a data breach is too high, and the regulatory penalties are too severe.

This is where federated research comes in. Instead of moving the data to the AI, we move the AI to the data. This ensures:

- Compliance: Meeting HIPAA, GDPR, SOC 2 Type II, and NIST 800-171 standards. By keeping data within its original jurisdiction (e.g., a hospital’s private server), organizations can comply with strict data sovereignty laws.

- Unified Governance: A single layer that manages who has access to what, across multiple clouds and countries. This allows for “auditable research,” where every action taken by an AI or a human researcher is logged and verifiable.

- Fine-grained access: Ensuring a researcher can see the analysis results (e.g., a p-value or a trend line) without ever seeing the raw, identifying patient information. This is often supported by technologies like Differential Privacy, which adds calibrated mathematical “noise” to query results so that no individual patient’s contribution can be singled out.

- Trusted Research Environments (TREs): These are secure, highly controlled digital spaces where researchers can access sensitive data. A modern AI research platform integrates directly with TREs to provide a seamless experience that is as secure as it is powerful.
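The "move the AI to the data" idea and the differential privacy idea can both be shown in miniature. In this sketch, each site computes only a count and a sum locally, and the coordinator combines those aggregates. The noisy count uses the Laplace mechanism for a counting query (sensitivity 1, so the noise scale is `1 / epsilon`). The function names and the blood pressure values are invented for illustration, and a real deployment would add authentication, auditing, and a privacy budget.

```python
import random


def local_summary(values):
    """Runs inside each site. Only these aggregates leave the site."""
    return {"count": len(values), "total": sum(values)}


def federated_mean(summaries):
    """Runs at the coordinator, which never sees row-level data."""
    count = sum(s["count"] for s in summaries)
    total = sum(s["total"] for s in summaries)
    return total / count


def laplace_noise(scale, rng):
    """Sample Laplace(0, scale) as the difference of two exponentials."""
    return rng.expovariate(1 / scale) - rng.expovariate(1 / scale)


def private_count(true_count, epsilon, rng):
    """Laplace mechanism for a counting query (sensitivity 1)."""
    return true_count + laplace_noise(1.0 / epsilon, rng)


# Synthetic systolic blood pressure readings held at two sites.
site_a = [120, 130]
site_b = [140]
summaries = [local_summary(site_a), local_summary(site_b)]
print(federated_mean(summaries))

rng = random.Random(0)
print(private_count(len(site_a) + len(site_b), epsilon=1.0, rng=rng))
```

A smaller `epsilon` means more noise and stronger privacy; a larger one means a more accurate count and weaker protection. Choosing that trade-off is a governance decision as much as a technical one.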

For a deep dive into how this architecture works, read our federated data platform guide.

The Rise of Agentic Ecosystems and Open Coding

The future of the AI research platform is agentic. We are seeing the rise of AI assistants that don’t just chat, but actually write and execute code within private repositories. These agents can perform complex tasks like:

- Scanning a repository for existing analysis scripts.

- Modifying those scripts to fit a new dataset.

- Running the analysis and generating a summary report.

- Flagging any statistical anomalies for human review.

These agents are part of a broader “agentic ecosystem” that unites researchers, benchmarks, and resources to automate the most repetitive parts of the scientific process, allowing humans to focus on high-level hypothesis generation and experimental design.
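As a very small illustration of the last two steps in that list (run the analysis, then flag anomalies for a human), here is a sketch that summarizes a set of measurements and marks any value more than three standard deviations from the mean. The z-score rule and the threshold of 3.0 are assumptions chosen for simplicity; an actual agent would apply whatever checks the analysis script defines.

```python
import statistics


def run_analysis(values, z_threshold=3.0):
    """Summarize the data and list (index, value) pairs that look anomalous."""
    mean = statistics.fmean(values)
    stdev = statistics.stdev(values)  # sample standard deviation, needs 2+ values
    flagged = [
        (index, value)
        for index, value in enumerate(values)
        if stdev > 0 and abs(value - mean) / stdev > z_threshold
    ]
    return {
        "n": len(values),
        "mean": mean,
        "stdev": stdev,
        "needs_human_review": flagged,
    }


# Synthetic measurements with one obviously suspicious reading at the end.
measurements = [10, 11, 9, 10] * 5 + [50]
report = run_analysis(measurements)
print(report["needs_human_review"])
```

The agent does the repetitive part, and the flagged list is where the human comes back in: deciding whether the reading is an instrument error or a real finding.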

Frequently Asked Questions about AI Research Platforms

How do AI research platforms prevent hallucinations?

Prevention starts with grounding. Unlike general chatbots, a dedicated research platform uses Retrieval-Augmented Generation (RAG). It extracts context from peer-reviewed sources first and then generates an answer based only on that text. If the evidence isn’t there, the system should tell you it doesn’t know. Furthermore, many platforms use “Chain of Verification” (CoVe) techniques, where the AI double-checks its own reasoning before presenting it to the user. For related background, see our explainer on what a federated data platform is.
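The grounding step can be sketched without calling any model at all. In the example below, retrieval is a simple word-overlap match (a stand-in for the hybrid retrieval described earlier), and the function returns `None` when nothing relevant is found so that the caller can say "no supporting evidence" instead of guessing. The corpus, the source IDs, and the prompt wording are all invented for this illustration.

```python
def retrieve(question, corpus, min_overlap=2):
    """Return (source_id, text) pairs sharing enough words with the question."""
    question_terms = set(question.lower().split())
    hits = []
    for source_id, text in corpus.items():
        overlap = len(question_terms & set(text.lower().split()))
        if overlap >= min_overlap:
            hits.append((overlap, source_id, text))
    return [(source_id, text) for _, source_id, text in sorted(hits, reverse=True)]


def build_grounded_prompt(question, corpus):
    """Build a prompt restricted to retrieved passages, or None if there are none."""
    passages = retrieve(question, corpus)
    if not passages:
        return None  # caller should report that no evidence was found
    context = "\n".join(f"[{source_id}] {text}" for source_id, text in passages)
    return (
        "Answer using only the passages below and cite the bracketed IDs. "
        "If the passages do not contain the answer, say so.\n\n"
        f"{context}\n\nQuestion: {question}"
    )


# Synthetic corpus, invented for illustration only.
corpus = {
    "doe2024": "drug x caused nausea in 12 percent of patients",
    "lee2023": "graphene conductivity at low temperature",
}
print(build_grounded_prompt("what side effects did drug x cause in patients", corpus))
print(build_grounded_prompt("quantum gravity", corpus))
```

The abstain path matters as much as the prompt: a system that always produces an answer has nowhere to go when the literature is silent.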

Can these platforms handle paywalled academic content?

Yes, many do. Leading platforms have direct indexing agreements with major publishers like Elsevier, Springer Nature, and Wiley. This allows the AI to “read” and analyze full-text articles that might be behind a paywall for a regular user, providing you with the insights while respecting copyright. This ensures that your literature review is based on the highest quality, peer-reviewed data rather than just open-access preprints. For more on this, see our guide on the biomedical research data platform.

What is the difference between sparse and dense retrieval?

Sparse retrieval (BM25) looks for exact word matches. It’s like looking for a specific name in a phone book. Dense retrieval (vector embeddings) looks for “mathematical similarity” in meaning, so it can match a query to a passage that uses different words for the same idea. A hybrid model uses both to ensure you get the precision of a keyword search with the breadth of semantic understanding. This is crucial for scientific research where terminology can be highly specific but also highly varied.

How do these platforms integrate with existing lab workflows?

Most modern platforms offer robust APIs and integrations with tools like Jupyter Notebooks, RStudio, and Electronic Lab Notebooks (ELNs). This allows researchers to pull AI-generated insights directly into their existing data analysis pipelines, ensuring that the AI research platform acts as an extension of their current toolkit rather than a separate, siloed application.

Is my data used to train the AI models?

For enterprise-grade platforms, the answer is typically no. Most platforms offer “Private AI” deployments where your data and queries are never used to train the underlying foundation models. This is a critical requirement for pharmaceutical companies and government agencies that must protect their intellectual property and sensitive findings.

Conclusion: Choosing the Right Partner for Scientific Discovery

The era of “robotic” research—sorting through endless lists and manual data cleaning—is coming to an end. An AI research platform is no longer a luxury; it is a necessity for staying competitive in a world of 280M+ articles and massive multi-omic datasets.

At Lifebit, we believe that the most powerful research happens when data is secure and accessible. Our Lifebit platform provides a federated AI environment that empowers biopharma and government agencies to collaborate across borders without compromising privacy. By combining real-time insights with a Trusted Research Environment, we help you focus on what matters: the next big breakthrough.

Ready to see how federated AI can transform your workflow? Explore the Lifebit platform today.