Understanding government access and security for personal data

Why Government Data Security Is a Matter of Public Safety — Not Just Compliance

Government data security is one of the most critical challenges facing public institutions today. Governments hold some of the most sensitive personal information that exists — health records, financial data, criminal justice files, and the identities of individuals whose safety depends on that data staying private. In an era where data is the lifeblood of public service delivery, the protection of this asset is no longer a back-office IT concern; it is a fundamental pillar of national security and social stability.

A single breach can have consequences far beyond a leaked spreadsheet. Consider two scenarios: a domestic abuse survivor’s new address is exposed through a government system, or a serving judge’s home address is revealed to someone they convicted. In either case, the results can be life-threatening. These are not hypothetical edge cases; they are real risks that shape how governments must think about data protection. The financial impact is also substantial. Industry reports indicate that the cost of public sector breaches has been rising, driven by the complexity of legacy systems and the high value of the datasets targeted by state-sponsored actors and organized cybercriminals.

The Evolving Threat Landscape

Today’s threat landscape is characterized by “Ransomware-as-a-Service” (RaaS) and sophisticated phishing campaigns that target the human element of government operations. Public sector organizations are often viewed as “soft targets” due to budget constraints and the sheer volume of data they manage. This makes a robust government data security strategy essential for maintaining the “social contract” between the state and its citizens. When citizens provide their data to the government, they do so with the implicit trust that it will be used for their benefit and protected with the highest level of rigor.

Here is what government data security covers at a glance:

| Area | What It Means |
|---|---|
| Legal compliance | Following UK GDPR, the Data Protection Act 2018, and sector-specific rules |
| Data protection principles | Lawful, fair, transparent use, with strict limits on retention and access |
| Zero Trust security | Treating every access request as potentially hostile, whether it originates outside or inside the organization |
| Protecting vulnerable individuals | Extra safeguards for those in witness protection, sensitive roles, or at risk of harm |
| Supply chain and third-party risk | Ensuring processors and vendors meet the same security standards |
| Data lifecycle management | Securing data from the moment it is created to the moment it is destroyed |

The stakes are high. And the frameworks to manage them — from the UK’s National Cyber Security Centre (NCSC) guidance to NIST SP 800-53 and the CISA Zero Trust Maturity Model — are increasingly detailed and demanding. These frameworks provide a roadmap for agencies to transition from a reactive posture to one of proactive resilience.

I’m Dr. Maria Chatzou Dunford, CEO and Co-founder of Lifebit, and I have spent over 15 years working at the intersection of sensitive biomedical data, federated computing, and secure public sector environments — areas where government data security is not optional, but foundational. Through Lifebit’s work with governments and health institutions globally, I have seen what separates organisations that get this right from those that don’t.


Core Principles of Government Data Security and Personal Privacy

At its heart, government data security is built on the “CIA triad”: Confidentiality, Integrity, and Availability. In the public sector, this means ensuring that only authorized personnel see sensitive files (Confidentiality), that the information remains accurate and untampered with (Integrity), and that services remain online and accessible when citizens need them most (Availability). A failure in any of these three pillars can lead to a breakdown in public service delivery, from delayed benefit payments to the inability to access critical health records during an emergency.

To achieve this, organizations must adhere to the seven data protection principles set out in Article 5 of UK GDPR and explained by the ICO:

- lawfulness, fairness and transparency
- purpose limitation
- data minimisation
- accuracy
- storage limitation
- integrity and confidentiality (security)
- accountability

Together, these principles require data to be processed lawfully, fairly, and transparently, and kept only as long as necessary. The accountability principle goes further. Agencies must not only comply with the law but also be able to demonstrate that compliance through rigorous documentation and auditing. We believe that understanding these data privacy regulations is the first step for any agency looking to modernize its infrastructure.

Modern government strategy has shifted toward a risk-driven approach. This involves Secure by Design principles, where security isn’t a “bolt-on” at the end of a project but is baked into the very architecture of every digital service. By adopting data protection by design and default, agencies ensure that privacy settings are at their highest level from day one, minimizing the chance of human error leading to a leak. This approach also includes “Data Minimization,” ensuring that only the specific data required for a task is collected and processed, thereby reducing the potential impact of any future breach.

Protecting Vulnerable Individuals from Targeted Risks

While a standard data breach is bad for anyone, for “at-risk” individuals, it can be catastrophic. The UK NCSC emphasizes that government datasets often contain information on people in witness protection, victims of domestic abuse, or staff in sensitive national security roles. For these individuals, data security is synonymous with physical security.

If these identities are compromised, the “Ripple Effect” can be devastating. A single leaked address can lead to physical harm or compromise an ongoing criminal investigation. We must treat this data through a highly protective lens. This means implementing stricter access controls, such as Just-In-Time (JIT) access, and ensuring that even within an organization, only those with a specific, verified “need to know” can view these high-stakes records. Agencies must also consider the risk of “Mosaic Effect” attacks, where several datasets that are individually non-sensitive are combined to re-identify individuals in data that was meant to be anonymous.
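One way to make the Mosaic Effect concrete is to measure k-anonymity. This is a standard re-identification metric that we introduce here; it is not a method prescribed by the frameworks above. The sketch assumes a hypothetical release in which names have been removed but postcode district, birth year, and sex remain. The records and the `group_sizes`, `k_anonymity`, and `unsafe_groups` helpers are illustrative only, and a high k on its own is not proof that data is anonymous.

```python
from collections import Counter

# Hypothetical "de-identified" release: names are gone, but quasi-identifiers remain.
RECORDS = [
    {"postcode": "SW1A", "birth_year": 1985, "sex": "F", "service": "housing"},
    {"postcode": "SW1A", "birth_year": 1985, "sex": "F", "service": "benefits"},
    {"postcode": "SW1A", "birth_year": 1990, "sex": "M", "service": "housing"},
    {"postcode": "LS1", "birth_year": 1972, "sex": "F", "service": "refuge"},
]
QUASI_IDENTIFIERS = ("postcode", "birth_year", "sex")


def group_sizes(rows, keys):
    """Count how many rows share each combination of quasi-identifier values."""
    return Counter(tuple(row[k] for k in keys) for row in rows)


def k_anonymity(rows, keys):
    """k is the size of the smallest group; k == 1 means someone is unique."""
    return min(group_sizes(rows, keys).values())


def unsafe_groups(rows, keys, k):
    """Combinations shared by fewer than k rows: easy re-identification targets."""
    return [combo for combo, n in group_sizes(rows, keys).items() if n < k]


print(k_anonymity(RECORDS, QUASI_IDENTIFIERS))  # 1
print(unsafe_groups(RECORDS, QUASI_IDENTIFIERS, k=2))
```

Two people in this release are unique on postcode, birth year, and sex alone. Joining the release with another dataset that holds those same attributes would single them out. Generalising values (for example, birth decade instead of birth year) or suppressing small groups raises k.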

Legal Frameworks: UK GDPR and the Data Protection Act

In the UK, the legal backbone of government data security is the UK General Data Protection Regulation (UK GDPR) and the Data Protection Act 2018. These laws aren’t just red tape. They provide the mandate for how we handle “special category data” and criminal offence data. Special category data includes information such as health, racial or ethnic origin, and political opinions. It requires an even higher level of protection and a specific “Article 9” condition for processing, such as reasons of public interest in the area of public health.

For agencies, this means every processing activity must have a “lawful basis.” Whether the basis is performing a public task or protecting someone’s vital interests, the reason for holding data must be clear. For those handling complex biological or research information, following GDPR-compliant data practices is essential to keep large-scale analytics within these strict legal requirements. This is particularly relevant for international data transfers. These require UK adequacy regulations or appropriate safeguards, such as the International Data Transfer Agreement (IDTA) or the UK Addendum to the EU’s Standard Contractual Clauses (SCCs), so that personal data remains protected even when processed abroad.

Implementing Zero Trust: Why Data is the New Perimeter

The old way of securing data was like a castle: a thick wall (the firewall) around everything. But once someone got through the gate, they had the run of the place. This “lateral movement” is how many major breaches escalate from a single compromised account to a full-scale incident. Government data security is now moving toward Zero Trust, a model that assumes the network is already compromised and that threats can originate from both outside and inside the organization.

In a Zero Trust architecture, the “perimeter” isn’t the network edge; it’s the data itself. Every single request for access is verified, regardless of where it comes from. This aligns with the CISA Zero Trust Maturity Model (ZTMM). The ZTMM guides agencies through four maturity stages (Traditional, Initial, Advanced, and Optimal) across five key pillars: Identity, Device, Network, Application Workload, and Data.

The Five Pillars of Zero Trust Maturity:

- Identity: Moving beyond simple passwords to phishing-resistant Multi-Factor Authentication (MFA) and continuous identity verification.

- Device: Ensuring that only healthy, managed devices can access government resources, regardless of whether they are on-premises or remote.

- Network: Implementing micro-segmentation to prevent lateral movement. If one part of the network is breached, the attacker is contained within a small “cell.”

- Application Workload: Securing the entire software development lifecycle and ensuring that applications are only accessible through secure proxies.

- Data: The ultimate goal. Data must be encrypted at rest and in transit, categorized by sensitivity, and protected by granular access controls.

One of the most effective ways to manage this is through sophisticated access control models. Here is how they compare:

| Access Model | How It Works | Best Use Case |
|---|---|---|
| RBAC (Role-Based) | Access based on the roles assigned to a user, typically reflecting their job function. | Simple internal tools with clear hierarchies. |
| ABAC (Attribute-Based) | Access based on attributes of the user, the resource, and the environment (e.g., a “Senior Doctor” requesting records from their own department). | Dynamic environments with many different roles. |
| CBAC (Context-Based) | Access based on user, device, location, and time. | Zero Trust: ensures a user isn’t logging in from a high-risk location. |
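The following minimal sketch evaluates one request against each of the three models in turn, to show how they differ in practice. The role table, attributes, allowed country, working-hours window, and function names are all hypothetical. Production systems would typically express such rules in a dedicated policy engine rather than inline code.

```python
from dataclasses import dataclass, replace

# Hypothetical policy data for illustration only.
ROLE_PERMISSIONS = {"caseworker": {"case_file"}, "auditor": {"audit_log"}}
ALLOWED_COUNTRIES = {"GB"}
WORKING_HOURS = range(7, 20)  # 07:00-19:59


@dataclass(frozen=True)
class AccessRequest:
    roles: frozenset      # RBAC input
    user_attrs: dict      # ABAC input: who is asking
    resource_attrs: dict  # ABAC input: what is being accessed
    device_managed: bool  # CBAC inputs: the context of this request
    country: str
    hour: int


def rbac_allows(req, resource_type):
    """Role-based: does any of the user's roles grant this resource type?"""
    return any(resource_type in ROLE_PERMISSIONS.get(r, set()) for r in req.roles)


def abac_allows(req):
    """Attribute-based: same department and enough clearance for the data.
    A resource with no sensitivity attribute defaults to 99, i.e. deny."""
    return (req.user_attrs.get("department") == req.resource_attrs.get("department")
            and req.user_attrs.get("clearance", 0) >= req.resource_attrs.get("sensitivity", 99))


def cbac_allows(req):
    """Context-based: managed device, expected location, expected time."""
    return req.device_managed and req.country in ALLOWED_COUNTRIES and req.hour in WORKING_HOURS


def decide(req, resource_type):
    """Zero Trust style: all checks are evaluated on every single request."""
    return rbac_allows(req, resource_type) and abac_allows(req) and cbac_allows(req)


base = AccessRequest(frozenset({"caseworker"}),
                     {"department": "housing", "clearance": 2},
                     {"department": "housing", "sensitivity": 2},
                     device_managed=True, country="GB", hour=10)
print(decide(base, "case_file"))                   # True
print(decide(replace(base, hour=3), "case_file"))  # False: outside working hours
```

Layering the models this way means a valid role alone is never enough. The attributes and the live context must also agree each time.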

By following the Federal Zero Trust Data Security Guide, agencies can move toward a data-centric security posture. For those working with US federal agencies, achieving FedRAMP authorization is often a mandatory step in proving that your cloud environment meets these rigorous standards. FedRAMP provides a standardized approach to security assessment, authorization, and continuous monitoring for cloud products and services.

Building a Comprehensive Inventory for Government Data Security

You cannot protect what you don’t know you have. Many agencies struggle with “shadow data”—information sitting in forgotten databases, local spreadsheets, or unauthorized cloud storage. Creating an Information Asset Register (IAR) or a robust data catalog is the first step in documenting service assets. This inventory should include not just the data itself, but its provenance, its sensitivity level, and its designated “Data Owner.”

Agencies should prioritize “High Value Assets” (HVAs)—those datasets that are essential to the nation’s primary functions or whose loss would cause significant impact. Automated discovery tools can help “tag” data as it’s created, ensuring it is categorized by sensitivity level (e.g., OFFICIAL-SENSITIVE) and that appropriate controls are applied automatically. This automated tagging is crucial for maintaining security at scale, as manual classification is often prone to error and cannot keep pace with the volume of data generated by modern digital services.
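The following minimal sketch shows rule-based tagging at the point of creation. It assumes that simple pattern detectors are enough to illustrate the idea. The detector names, the simplified National Insurance number pattern, and the rule that any detected identifier escalates the marking to OFFICIAL-SENSITIVE are all illustrative assumptions, not official classification policy.

```python
import re

# Hypothetical detectors. Real discovery tools use many more, with validation.
DETECTORS = {
    # Simplified UK National Insurance number shape (e.g. "QQ 12 34 56 C");
    # it does not apply the full prefix-allocation rules.
    "national_insurance_number": re.compile(r"\b[A-Z]{2}\s?\d{2}\s?\d{2}\s?\d{2}\s?[A-D]\b"),
    "email_address": re.compile(r"\b[\w.+-]+@[\w-]+(?:\.[\w-]+)+\b"),
}


def tag(text):
    """Return a handling label plus the kinds of personal data detected."""
    found = sorted(name for name, pattern in DETECTORS.items() if pattern.search(text))
    # Illustrative rule: any detected personal identifier escalates the marking.
    label = "OFFICIAL-SENSITIVE" if found else "OFFICIAL"
    return {"label": label, "detected": found}


print(tag("Claimant QQ 12 34 56 C, contact via j.smith@example.gov.uk"))
print(tag("Agenda for the quarterly estates meeting"))
```

In practice the label would be written into the asset's metadata (for example, its Information Asset Register entry) so that downstream controls can key off it automatically.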

Identity, Credential, and Access Management (ICAM) in Government Data Security

ICAM is the engine of Zero Trust. It ensures that the right people have access to the right data at the right time for the right reason. The principle of “least privilege” is key: users should only have the minimum level of access required to do their jobs. This reduces the “blast radius” of any potential account compromise.
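Just-In-Time access is one practical way to apply least privilege. Nobody holds standing access to the most sensitive datasets; instead, each grant is scoped, justified, and expires on its own. The class below is a minimal in-memory sketch under that assumption. `JitAccessBroker`, its 60-minute cap, and the dataset name are hypothetical. A real deployment would persist grants and write every decision to an audit log.

```python
from datetime import datetime, timedelta, timezone


class JitAccessBroker:
    """Hypothetical broker: no standing access, only short-lived, justified grants."""

    def __init__(self, max_minutes=60):
        self.max_minutes = max_minutes
        self._grants = {}  # (user, dataset) -> (expiry, justification)

    def grant(self, user, dataset, justification, minutes, now=None):
        if not justification.strip():
            raise ValueError("a recorded justification is required")
        if minutes > self.max_minutes:
            raise ValueError("requested window exceeds policy maximum")
        now = now or datetime.now(timezone.utc)
        expiry = now + timedelta(minutes=minutes)
        self._grants[(user, dataset)] = (expiry, justification)
        return expiry

    def can_access(self, user, dataset, now=None):
        """Access exists only while an unexpired grant exists for this exact dataset."""
        now = now or datetime.now(timezone.utc)
        grant = self._grants.get((user, dataset))
        return grant is not None and now < grant[0]


t0 = datetime(2025, 1, 6, 9, 0, tzinfo=timezone.utc)
broker = JitAccessBroker()
broker.grant("alice", "protected_addresses", "Safeguarding review", minutes=15, now=t0)
print(broker.can_access("alice", "protected_addresses", now=t0 + timedelta(minutes=10)))  # True
print(broker.can_access("alice", "protected_addresses", now=t0 + timedelta(minutes=16)))  # False
```

If Alice's account is later compromised, the attacker inherits no access to this dataset unless a fresh, justified grant happens to be open. That is the reduced "blast radius" in practice.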

Following NIST SP 800-171r3 standards, we recommend:

- Multi-factor authentication (MFA): Combining two or more independent factors, such as “something you know” (password), “something you have” (security key), and “something you are” (biometrics), to verify identity.

- Replay-resistant techniques: Using hardware security keys (like YubiKeys) to prevent hackers from intercepting and reusing login credentials, a common tactic in modern phishing attacks.

- Separation of duties: Ensuring that no single person has enough power to both initiate and hide a fraudulent or malicious action. For example, the person who grants access to a database should not be the same person who manages the data within it.
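Separation of duties can be checked mechanically. The sketch below assumes a hypothetical list of conflicting role pairs and flags any user who holds both roles in a pair. It also enforces a simple two-person rule for approvals. The role names and functions are illustrative.

```python
# Hypothetical pairs of roles that must never be held by the same person.
CONFLICTING_ROLES = [
    frozenset({"access_admin", "data_manager"}),
    frozenset({"payment_initiator", "payment_approver"}),
]


def sod_violations(assignments):
    """Return (user, role_pair) for every user who holds both roles of a pair."""
    violations = []
    for user, roles in assignments.items():
        for pair in CONFLICTING_ROLES:
            if pair <= set(roles):
                violations.append((user, tuple(sorted(pair))))
    return sorted(violations)


def approve(initiator, approver):
    """Two-person rule: nobody may approve an action they initiated."""
    if initiator == approver:
        raise PermissionError("initiator cannot approve their own request")
    return True


staff = {
    "alice": {"access_admin", "data_manager"},
    "bob": {"data_manager"},
    "carol": {"payment_initiator"},
}
print(sod_violations(staff))  # [('alice', ('access_admin', 'data_manager'))]
```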

Managing the Data Lifecycle: From Creation to Secure Disposal

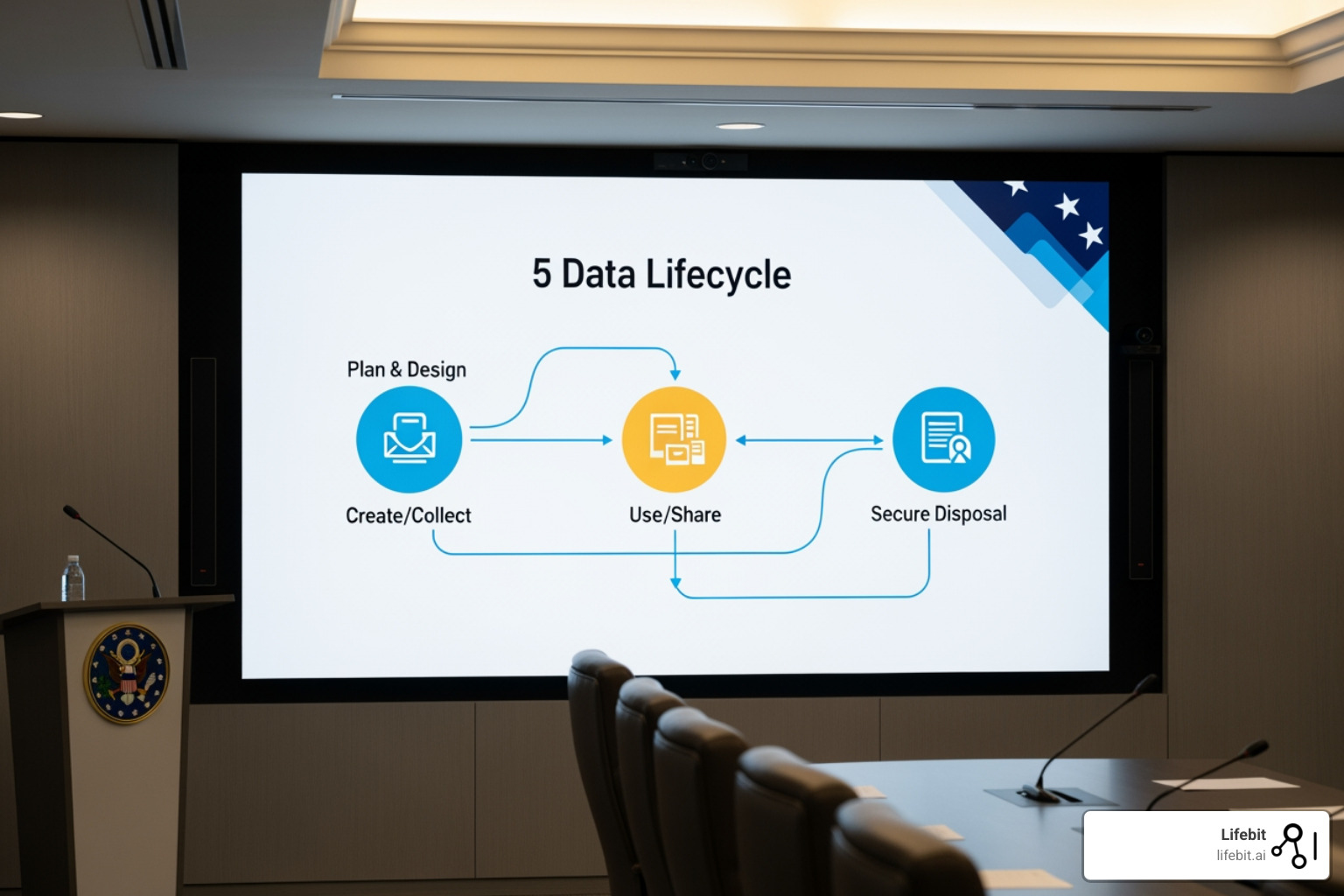

Data has a lifecycle, and security must be embedded in every phase. This requires a team effort involving Data Stewards (who manage the data’s quality and use), the Chief Data Officer (CDO), and the Chief Information Security Officer (CISO). Effective governance ensures that data is treated as a strategic asset rather than a liability.

- Plan & Design: Identify what data is needed and conduct a Data Protection Impact Assessment (DPIA). This phase should also define the data retention policy — how long the data is needed and why.

- Create/Collect: Ensure data is gathered lawfully and tagged correctly. This includes providing clear privacy notices to citizens at the point of collection.

- Use/Share: Apply access controls and monitor for unusual activity. Use audit logs to track who accessed what data and when, providing a clear trail for forensic investigations.

- Store/Archive: Encrypt data at rest using strong encryption standards (e.g., AES-256) and maintain backups that meet recovery time objectives (RTO) and recovery point objectives (RPO). Backups must be stored securely and ideally “air-gapped” to protect against ransomware.

- Dispose: When data is no longer needed, it must undergo secure sanitisation to ensure it cannot be recovered. This is a critical step that is often overlooked, leading to data leaks from decommissioned hardware.
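Retention enforcement is the part of this lifecycle that lends itself most readily to automation. The sketch below assumes a hypothetical retention schedule and record structure. It only identifies records that have passed their retention period, skipping any under legal hold, and it surfaces records that have no schedule at all. The secure sanitisation itself would be a separate, controlled step.

```python
from dataclasses import dataclass
from datetime import date, timedelta

# Hypothetical retention schedule (days per record type), set during Plan & Design.
RETENTION_DAYS = {"web_access_log": 90, "benefit_claim": 6 * 365}


@dataclass
class Record:
    record_id: str
    record_type: str
    created: date
    legal_hold: bool = False  # e.g. data preserved for litigation or an inquiry


def disposal_review(records, today):
    """Split records into those past retention and those with no schedule at all."""
    due, unscheduled = [], []
    for r in records:
        limit = RETENTION_DAYS.get(r.record_type)
        if limit is None:
            unscheduled.append(r.record_id)  # needs an owner decision, not deletion
        elif not r.legal_hold and today - r.created > timedelta(days=limit):
            due.append(r.record_id)
    return due, unscheduled


records = [
    Record("r1", "web_access_log", date(2024, 1, 1)),
    Record("r2", "web_access_log", date(2024, 5, 20)),
    Record("r3", "web_access_log", date(2023, 1, 1), legal_hold=True),
    Record("r4", "scanned_letter", date(2020, 3, 1)),
]
print(disposal_review(records, today=date(2024, 6, 1)))  # (['r1'], ['r4'])
```

Surfacing unscheduled records, rather than silently keeping or deleting them, is what prevents shadow data from quietly accumulating.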

At Lifebit, our solutions for government are designed to manage this lifecycle automatically, ensuring that data retention policies are enforced without manual intervention, reducing the risk of “data hoarding,” which only increases the potential attack surface.

Minimizing the Attack Surface During Data Sharing

The most dangerous moment for data is when it moves. Whether sharing with another agency or a private contractor, the “attack surface” increases. Data minimization is your best friend here: if you only need to share a user’s age to verify eligibility for a service, don’t send their full date of birth, address, and National Insurance number.
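The following minimal sketch shows minimisation at the point of sharing. Rather than forwarding the whole citizen record, the sending service computes the one fact the recipient needs. It goes a step further than sharing an age and returns only a yes/no answer. The field names, the case reference, and the `eligibility_payload` helper are hypothetical.

```python
from datetime import date


def age_on(date_of_birth, on):
    """Whole years of age on a given date."""
    had_birthday = (on.month, on.day) >= (date_of_birth.month, date_of_birth.day)
    return on.year - date_of_birth.year - (0 if had_birthday else 1)


def eligibility_payload(citizen, min_age, on):
    """Build the outbound message: a yes/no answer instead of the raw record."""
    return {
        "case_reference": citizen["case_reference"],
        "meets_minimum_age": age_on(citizen["date_of_birth"], on) >= min_age,
    }


citizen = {
    "case_reference": "CASE-0001",
    "name": "Example Person",
    "address": "1 Example Street",
    "ni_number": "QQ123456C",
    "date_of_birth": date(2006, 6, 15),
}
print(eligibility_payload(citizen, min_age=18, on=date(2024, 6, 14)))
# {'case_reference': 'CASE-0001', 'meets_minimum_age': False}
```

The name, address, National Insurance number, and date of birth never leave the sending system, so they cannot leak from the recipient's.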

When working with third parties, Article 28 of UK GDPR requires a binding contract with specific mandatory terms whenever a processor handles personal data on your behalf. You remain responsible for your supply chain. This means managing third-party product security risks by auditing your vendors and ensuring they use secure APIs and encrypted transit protocols like TLS 1.3. Agencies should also demand a Software Bill of Materials (SBOM) from vendors to understand the underlying components of the software they are using and identify any known vulnerabilities in the supply chain.
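On the client side, insisting on TLS 1.3 can be a single setting. This sketch uses Python's standard `ssl` module. The function names are hypothetical, and no real vendor host is assumed.

```python
import socket
import ssl


def vendor_tls_context():
    """Client TLS settings for calls to a third-party processor's API."""
    ctx = ssl.create_default_context()            # verifies certificates and hostnames
    ctx.minimum_version = ssl.TLSVersion.TLSv1_3  # refuse TLS 1.2 and older
    return ctx


def open_vendor_connection(host, port=443, timeout=10):
    """Open a TLS 1.3 connection; the handshake fails on anything weaker."""
    sock = socket.create_connection((host, port), timeout=timeout)
    try:
        return vendor_tls_context().wrap_socket(sock, server_hostname=host)
    except Exception:
        sock.close()
        raise


ctx = vendor_tls_context()
print(ctx.minimum_version, ctx.verify_mode, ctx.check_hostname)
```

Starting from `create_default_context()` rather than a bare `SSLContext` keeps certificate and hostname verification switched on. Without those checks, a strong protocol version alone does not stop an impersonated endpoint.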

Advanced Controls: PETs, Anonymization, and Risk Assessment

To balance the need for research and policy-making with the need for individual privacy, we use advanced tools like Privacy-Enhancing Technologies (PETs). These allow us to gain insights from data without ever actually “seeing” the raw personal details. PETs are becoming essential for cross-departmental collaboration and public-private partnerships.

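- Anonymisation: Transforming data so that individuals can no longer be identified by any means reasonably likely to be used. Once truly anonymous, the data is no longer subject to UK GDPR. However, true anonymisation is increasingly difficult to achieve in the age of Big Data, because removing names is rarely enough. Combinations of attributes such as postcode, date of birth, and sex can still single people out.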

- Pseudonymisation: Replacing identifiers (like names) with codes. This is a great security measure, but the data is still legally “personal data” because it could be re-linked with the original identifiers.

- Pairwise Pseudonymous Identifiers (PPIDs): A clever technique where different partners receive different IDs for the same person, preventing organizations from “colluding” to track an individual across different databases without authorization.

- Federated Analytics: A model where the data stays in its secure home, and only the analytical queries (and their aggregate results) move. This eliminates the need for mass data transfers, which are a major source of large-scale breach risk.
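Pairwise identifiers are commonly derived with a keyed hash. The sketch below assumes HMAC-SHA256 as that function, which is one reasonable choice rather than a mandated one. The key, partner names, and identifiers are hypothetical. Because the issuer can always re-derive the link, the outputs remain pseudonymised personal data, not anonymous data.

```python
import hashlib
import hmac


def pairwise_id(issuer_key: bytes, partner_id: str, internal_id: str) -> str:
    """Derive a stable pseudonym for one person that differs for every partner.

    Only the issuing agency holds issuer_key, so partners cannot compute each
    other's IDs or link their records without the issuer's involvement.
    """
    # A separator byte prevents ambiguity such as ("ab", "c") vs ("a", "bc").
    message = f"{partner_id}\x1f{internal_id}".encode("utf-8")
    return hmac.new(issuer_key, message, hashlib.sha256).hexdigest()


key = b"example-key-held-only-by-the-issuer"  # hypothetical; use a managed secret
id_for_tax = pairwise_id(key, "partner-tax", "person-123")
id_for_health = pairwise_id(key, "partner-health", "person-123")
print(id_for_tax != id_for_health)                                  # True
print(id_for_tax == pairwise_id(key, "partner-tax", "person-123"))  # True
```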

Before any major data project, a Data Protection Impact Assessment (DPIA) should be completed. Under Article 35 of UK GDPR, it is a legal requirement whenever processing is likely to result in a high risk to individuals. This is especially true for health data, where the risks to individuals are highest. A DPIA helps identify and minimize the data protection risks of a project, ensuring that privacy is considered from the very beginning.

Using the Cyber Assessment Framework (CAF) for Resilience

For organizations managing critical national infrastructure, the NCSC’s Cyber Assessment Framework (CAF) provides a structured way to measure cyber resilience. It moves beyond simple checklists to focus on “outcomes” and on the ability of an organization to maintain essential services during a cyber attack.

Key activities include:

- Threat Modelling: Proactively “attacking” your own system on paper to find weak spots. This involves identifying potential attackers, their motivations, and the methods they might use.

- Performing a security risk assessment: Assigning a likelihood and impact score to every potential threat. This allows agencies to prioritize their security investments where they will have the most impact.

- Vulnerability Management: Ensuring systems are patched and that “legacy” software isn’t creating a back door for hackers. Legacy systems are a major challenge in government, often requiring specialized “compensating controls” when they cannot be easily replaced.

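- Incident Response Planning: Knowing exactly who to call and what to do in the first 72 hours of a breach. UK GDPR requires notifiable personal data breaches to be reported to the ICO without undue delay and, where feasible, within 72 hours of becoming aware of them. This includes communication plans for notifying the ICO and affected citizens, as well as technical plans for containing the breach and restoring services.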
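The likelihood-and-impact scoring described above is often run as a simple 5×5 matrix. In the sketch below, the scales, the HIGH/MEDIUM/LOW thresholds, and the example threats are hypothetical. The CAF does not prescribe these numbers.

```python
from dataclasses import dataclass


@dataclass
class Risk:
    threat: str
    likelihood: int  # 1 (rare) to 5 (almost certain)
    impact: int      # 1 (minimal) to 5 (severe)

    def __post_init__(self):
        for name in ("likelihood", "impact"):
            if not 1 <= getattr(self, name) <= 5:
                raise ValueError(f"{name} must be between 1 and 5")

    @property
    def score(self):
        return self.likelihood * self.impact

    @property
    def rating(self):
        if self.score >= 15:
            return "HIGH"
        if self.score >= 8:
            return "MEDIUM"
        return "LOW"


def prioritise(risks):
    """Highest score first, so investment goes where it has the most impact."""
    return sorted(risks, key=lambda r: r.score, reverse=True)


register = [
    Risk("Lost unencrypted laptop", likelihood=2, impact=3),
    Risk("Ransomware via unpatched legacy system", likelihood=4, impact=5),
    Risk("Insider misuse of protected records", likelihood=2, impact=5),
    Risk("Credential phishing without MFA", likelihood=4, impact=4),
]

for risk in prioritise(register):
    print(f"{risk.score:>2} {risk.rating:<6} {risk.threat}")
```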

Frequently Asked Questions about Government Data Security

What is the impact of a government data breach?

The impact can be catastrophic. Beyond financial loss or identity theft, breaches can lead to a total loss of public trust in digital services. For “at-risk” individuals, such as those in witness protection or sensitive national security roles, a data leak can result in direct physical danger or the compromise of vital intelligence operations. It can also lead to significant regulatory fines and the cost of providing credit monitoring services to millions of affected citizens.

Can government data be stored in the cloud overseas?

Yes, but with strict conditions. UK government data classified at the OFFICIAL level (including information marked OFFICIAL-SENSITIVE) can be stored in overseas data centers or cloud regions. However, this is only permissible if a thorough risk assessment is completed and satisfactory legal and security protections are confirmed to be in place. This includes assessing the legal jurisdiction of the host country and ensuring that the data is protected from unauthorized access by foreign governments. There is no “blanket” requirement for all UK data to stay physically within the UK, but the security standards must follow the data wherever it goes.

How do agencies balance data sharing with security?

Agencies use a “balanced risk” approach. Not sharing data can sometimes be as dangerous as sharing it (e.g., missing a public safety threat or failing to identify a vulnerable child). To share safely, agencies use data minimization, PETs, and strict contractual agreements with third-party processors. Using federated data models—where the data stays put and only the “analysis” moves—is becoming the gold standard for secure sharing, as it provides the benefits of data collaboration without the risks of data movement.
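The “data stays put, analysis moves” pattern can be illustrated with a pooled mean. In this minimal sketch, each data holder runs `local_summary` inside its own environment and releases only a count and a sum. A coordinator then combines those aggregates. The function names and the minimum group size of 10 are hypothetical. Real federated platforms typically add authentication, auditing, and further disclosure controls that are not shown here.

```python
from dataclasses import dataclass

MIN_GROUP_SIZE = 10  # hypothetical threshold to avoid releasing tiny aggregates


@dataclass(frozen=True)
class SiteSummary:
    site: str
    count: int
    total: float


def local_summary(site, values):
    """Runs inside each data holder's environment; raw values never leave it."""
    if len(values) < MIN_GROUP_SIZE:
        raise ValueError(f"{site}: too few records to release an aggregate")
    return SiteSummary(site, len(values), float(sum(values)))


def pooled_mean(summaries):
    """Runs at the coordinator, which only ever sees counts and sums."""
    n = sum(s.count for s in summaries)
    return sum(s.total for s in summaries) / n


site_a = local_summary("agency-a", [3.0, 5.0, 4.0, 6.0, 2.0, 5.0, 4.0, 3.0, 6.0, 2.0])
site_b = local_summary("agency-b", [7.0] * 20)
print(pooled_mean([site_a, site_b]))  # 6.0
```

Sharing a count and a sum, rather than per-site means, lets the coordinator weight each site correctly without seeing a single row.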

How does legacy IT impact government data security?

Legacy systems are one of the biggest hurdles. Many government databases were built decades ago, before many of today’s threats emerged. These systems often cannot support modern encryption or Multi-Factor Authentication. The strategy here is “Modernization through Isolation”: wrapping legacy systems in modern security layers (like Zero Trust proxies) while systematically migrating data to secure, cloud-native environments.

What is the role of AI in government data defense?

AI is a double-edged sword. While attackers use AI to create more convincing phishing emails, governments use AI for “User and Entity Behavior Analytics” (UEBA). These systems learn what “normal” looks like for a specific user and can automatically flag or block access if that user suddenly starts downloading massive amounts of sensitive data at 3 AM from an unusual location.
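The following much-simplified sketch illustrates the baseline idea. It scores a user's daily download volume against their own history using a z-score, and it also flags activity outside working hours. Location signals are omitted. The threshold, working-hours window, and function names are hypothetical, and commercial UEBA systems use far richer models.

```python
from statistics import mean, stdev

WORKING_HOURS = range(7, 20)  # hypothetical: 07:00-19:59 local time


def volume_zscore(history_mb, today_mb):
    """How many standard deviations today's download is above this user's norm."""
    mu, sigma = mean(history_mb), stdev(history_mb)  # stdev needs >= 2 values
    if sigma == 0:
        return 0.0 if today_mb <= mu else float("inf")
    return (today_mb - mu) / sigma


def ueba_flags(history_mb, today_mb, hour, z_threshold=3.0):
    """Return the reasons (if any) to flag or block this activity."""
    reasons = []
    if volume_zscore(history_mb, today_mb) > z_threshold:
        reasons.append("unusual_volume")
    if hour not in WORKING_HOURS:
        reasons.append("unusual_time")
    return reasons


history = [40, 55, 38, 60, 47, 52, 44]  # MB downloaded on previous days
print(ueba_flags(history, today_mb=50, hour=10))  # []
print(ueba_flags(history, today_mb=900, hour=3))  # ['unusual_volume', 'unusual_time']
```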

Conclusion: Why 2026 Is the Year for Zero Trust Maturity

As we look toward the future of government data security, the goal is clear: moving from reactive “firefighting” to proactive resilience. Public sector organizations must embrace data maturity, utilizing frameworks like the CAF and the Zero Trust roadmap to protect the citizens they serve. By 2026, the expectation is that Zero Trust will no longer be an aspirational goal but the standard operating procedure for all government agencies.

At Lifebit, we are committed to this mission. Our Lifebit Platform provides the federated infrastructure that allows governments to unlock the power of sensitive data for public good—without ever compromising on security or privacy. By keeping data in its original, secure location and bringing the analysis to the data, we eliminate the risks of mass data movement and ensure that privacy is truly “by design.”

The path to secure, modern government services is challenging, but with the right principles and technologies in place, we can build a digital future that is both innovative and safe. The focus must remain on the ultimate goal: ensuring that the data entrusted to the government by its citizens remains a tool for progress, not a source of peril.