The Great Debate: Harmonization vs Standardization

Why Data Harmonization vs Data Standardization Matters for Global Health Research

Data harmonization vs data standardization is not just a technical debate: it’s the difference between waiting six months to analyze research data and getting insights in weeks. The wrong choice can mean excluding up to 30% of your dataset, wasting millions in healthcare spending, or missing critical patient populations entirely.

Quick Answer: What’s the Difference?

| Aspect | Data Standardization | Data Harmonization |
|---|---|---|
| Approach | Uniform methodology applied prospectively | Flexible integration of disparate data retrospectively |
| Use Case | Clinical trials, new data collection | Multi-site research, legacy datasets |
| Timeline | Enforced before collection | Applied after data exists |
| Flexibility | Rigid, consistent formats | Adaptable to existing structures |
| Best For | Compliance, future studies | Pooling historical data, rare diseases |

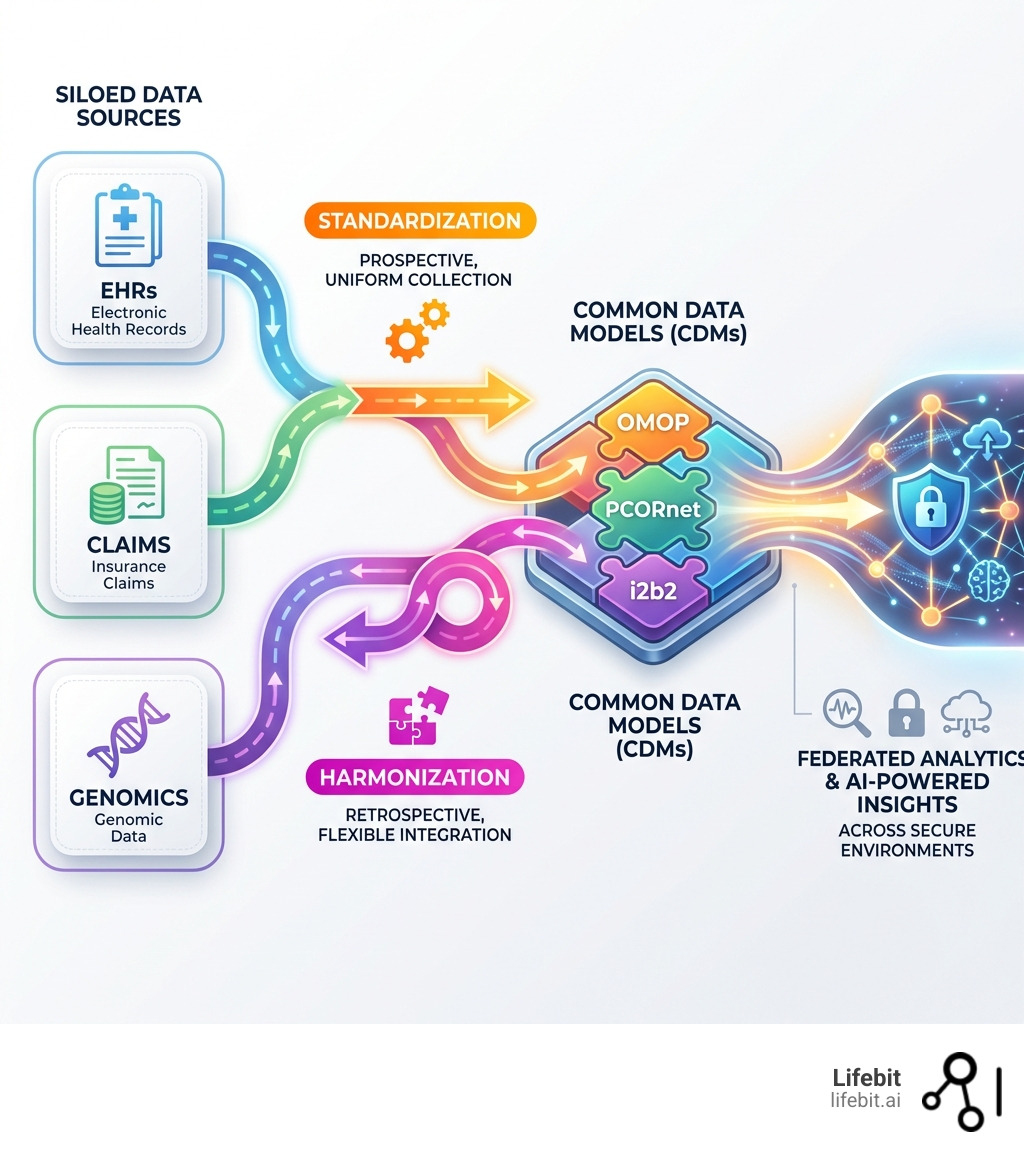

Here’s what you need to know: Standardization means collecting data the same way from the start—think uniform protocols in clinical trials. Harmonization means taking data that already exists in different formats and making it comparable—like pooling electronic health records from 80 institutions that use different systems.

The stakes are high. Research shows that harmonization projects can take up to six months to complete, with approximately 30% of data deemed impossible to harmonize and excluded from final datasets. Meanwhile, healthcare organizations waste billions annually due to data silos that prevent cross-institutional analysis.

But there’s good news: networks like PCORnet have already transformed data from over 80 institutions into a Common Data Model, the OMOP CDM is used at over 90 sites worldwide, and the ACT Network provides access to nearly 40 million patient records through a harmonized approach.

The real question isn’t which approach is “better”—it’s which one solves your specific problem. Need to launch a new multi-country clinical trial? Standardization ensures consistency from day one. Trying to pool decades of cancer registry data from hospitals across Europe? Harmonization is your only path forward.

As Maria Chatzou Dunford, CEO and Co-founder of Lifebit with over 15 years in computational biology and biomedical data integration, I’ve seen how the choice between data harmonization vs data standardization can make or break global research initiatives. This guide will help you make the right decision for your organization’s data strategy.


Why 30% of Your Data Is Wasted: Data Harmonization vs Data Standardization

In big data, the bottleneck isn’t usually a lack of information—it’s the “fragmentation tax.” When we look at data harmonization vs data standardization, we are looking at two different philosophical and technical answers to the same question: How do we make this data useful?

Data aggregation, the compiling of information from databases into combined datasets, is often stalled by heterogeneous data. Research highlights that data harmonization is an essential prerequisite for reconciling various types, levels, and sources of data into comparable formats. Without these processes, we are left with “data graveyards”: vast silos of information that cannot talk to one another. This fragmentation is particularly acute in multi-omic research, where genomic sequences, phenotypic data, and environmental factors are often stored in incompatible formats across different biobanks.

To measure success in these initiatives, organizations often look at specific KPIs:

- Data Completeness: The percentage of data retained after the integration process. In retrospective harmonization, the risk of losing up to 30% of the dataset is a critical concern for statistical power. This loss often occurs because variables collected in one study (e.g., specific biomarker concentrations) may not have a corresponding field in another study, or the units of measurement are fundamentally incompatible.

- Time-to-Insight: The duration from initial data acquisition to a queryable state. Standardization typically shortens this for future data, while harmonization requires significant upfront investment for legacy data. For global health initiatives, this can be the difference between responding to an outbreak in real-time versus conducting a post-mortem analysis months later.

- Semantic Accuracy: The degree to which the original clinical meaning is preserved. This is the most difficult KPI to maintain when mapping between different coding systems, such as translating local hospital codes into international standards like SNOMED CT.
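The Data Completeness KPI is a simple ratio, and much of the loss it measures comes from variables that exist in one study but not in another. The sketch below computes both figures; the study names and variable lists are hypothetical, chosen only to illustrate the calculation.

```python
def data_completeness(records_before: int, records_after: int) -> float:
    """Percentage of records retained after integration (the Data Completeness KPI)."""
    if records_before <= 0:
        raise ValueError("records_before must be positive")
    return 100.0 * records_after / records_before


def shared_variables(study_fields: dict) -> set:
    """Variables present in every study; only these can be pooled directly."""
    field_sets = list(study_fields.values())
    return set.intersection(*field_sets) if field_sets else set()


# Hypothetical example: study_b never measured the CRP biomarker.
studies = {
    "study_a": {"age", "sex", "bmi", "crp_mg_l"},
    "study_b": {"age", "sex", "bmi"},
}
print(sorted(shared_variables(studies)))  # ['age', 'bmi', 'sex']
print(data_completeness(10_000, 7_000))   # 70.0
```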

Defining Data Standardization

At its core, data standardization is the process of establishing a standardized set of concepts and designations for a subject area. It relies on a controlled vocabulary—a set of terms selected and defined to promote consistency.

Standardization involves three primary layers that must be addressed simultaneously:

- Syntactic Standardization: Ensuring the format is consistent. This involves technical specifications such as choosing between JSON and XML, or enforcing specific date formats like ISO 8601 (YYYY-MM-DD). Without syntactic consistency, automated scripts will fail to parse the data correctly.

- Structural Standardization: Defining the schema or the “architecture” of the data. This ensures every patient record has exactly the same fields in the same order. For example, ensuring that every “Patient” object contains a “Unique Identifier,” “Date of Birth,” and “Primary Diagnosis” field.

- Semantic Standardization: This is the most complex layer, involving universal coding systems. It ensures that “Heart Attack” in one system is coded exactly the same as “Myocardial Infarction” in another. Key standards include SNOMED CT for clinical findings, LOINC for laboratory observations, and RxNorm for medications.

Think of standardization as a strict “house style” for data. If we decide that “Sex” must only be recorded as “M,” “F,” or “U,” we are standardizing. It provides a fixed meaning and eliminates ambiguity before the data is even recorded. This is the bedrock of health data standardization.
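Because standardization is enforced at the point of entry, its rules can be checked mechanically. The sketch below validates one record against a hypothetical schema: required fields (structural), ISO 8601 dates (syntactic), and the M/F/U controlled vocabulary for sex. The field names are assumptions for illustration; full semantic standardization would also check codes against SNOMED CT, LOINC, or RxNorm, which this sketch does not attempt.

```python
import re
from datetime import date

REQUIRED_FIELDS = ("patient_id", "date_of_birth", "sex", "primary_diagnosis")  # hypothetical schema
SEX_VOCABULARY = {"M", "F", "U"}                                             # controlled vocabulary
ISO_DATE = re.compile(r"^\d{4}-\d{2}-\d{2}$")                                 # YYYY-MM-DD


def validate_record(record: dict) -> list:
    """Return a list of standardization errors; an empty list means the record conforms."""
    errors = [f"missing field: {f}" for f in REQUIRED_FIELDS if f not in record]  # structural
    dob = record.get("date_of_birth")
    if dob is not None:  # syntactic: ISO 8601 shape and a real calendar date
        if not isinstance(dob, str) or not ISO_DATE.match(dob):
            errors.append(f"date_of_birth not ISO 8601: {dob!r}")
        else:
            try:
                date.fromisoformat(dob)
            except ValueError:
                errors.append(f"date_of_birth is not a real date: {dob!r}")
    sex = record.get("sex")
    if sex is not None and sex not in SEX_VOCABULARY:  # vocabulary check
        errors.append(f"sex not in {sorted(SEX_VOCABULARY)}: {sex!r}")
    return errors


ok = {"patient_id": "P1", "date_of_birth": "1985-02-14", "sex": "F", "primary_diagnosis": "I21"}
bad = {"patient_id": "P2", "date_of_birth": "14/02/1985", "sex": "Female"}
print(validate_record(ok))        # []
print(len(validate_record(bad)))  # 3
```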

Defining Data Harmonization

If standardization is a strict rulebook, data harmonization is a skilled translator. It is the process of bringing together data of different formats, metadata, and structures, often from disparate sources, and combining it so users can query or view it as a single, cohesive set.

Harmonization doesn’t demand that everyone change how they collect data. Instead, it creates a “crosswalk” between existing datasets. It resolves conflicts in syntax (format), structure (schema), and semantics (meaning). For example, it can reconcile one dataset that defines “young adults” as ages 18-25 with another that defines them as 18-30, allowing for meaningful cross-study analysis.
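A crosswalk for the “young adult” conflict can be sketched as follows, assuming (hypothetically) that the pooled analysis adopts 18-25 as the target definition. When raw ages exist, both sources can be recoded directly; when only the original flag survives, some records can still be resolved by logic, and the rest cannot be derived at all. Those unresolvable cases are exactly where harmonization loses data.

```python
from typing import Optional

# Inclusive age bounds each source used for "young adult".
SOURCE_DEFINITIONS = {"dataset_a": (18, 25), "dataset_b": (18, 30)}
# Hypothetical target definition agreed for the pooled analysis.
TARGET_YOUNG_ADULT = (18, 25)


def harmonize_young_adult(age: Optional[int], source_flag: Optional[bool], source: str) -> Optional[bool]:
    """Return the harmonized 'young adult' flag, or None when it cannot be derived."""
    lo, hi = TARGET_YOUNG_ADULT
    if age is not None:
        return lo <= age <= hi  # raw age available: recode against the target directly
    if source_flag is None:
        return None
    src_lo, src_hi = SOURCE_DEFINITIONS[source]
    if source_flag and lo <= src_lo and src_hi <= hi:
        return True   # source band sits inside the target, so "yes" still holds
    if not source_flag and src_lo <= lo and hi <= src_hi:
        return False  # target sits inside the source band, so "no" still holds
    return None       # ambiguous: a "yes" under 18-30 might be a 28-year-old


print(harmonize_young_adult(28, None, "dataset_b"))    # False
print(harmonize_young_adult(None, True, "dataset_a"))  # True
print(harmonize_young_adult(None, True, "dataset_b"))  # None
```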

This process typically follows a rigorous Harmonization Workflow:

- Data Profiling: Analyzing the source data to understand its quality, structure, and content.

- Mapping: Creating the logic that links source data fields to a target model (e.g., mapping a local “Lab_Result” field to a standard LOINC code).

- Transformation (ETL): The technical process of Extracting, Transforming, and Loading the data into the new format. This often involves unit conversions, such as converting pounds to kilograms or Celsius to Fahrenheit.

- Validation: Testing the harmonized data to ensure that the transformation didn’t introduce errors or change the clinical meaning of the original records.
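The four workflow steps can be sketched end to end on a toy dataset. Everything here is a hypothetical illustration: the source field names, the plausibility ranges, and the choice of targets. The LOINC codes (29463-7 body weight, 8310-5 body temperature) and UCUM units (kg, Cel) are used as examples and should be checked against current releases before reuse.

```python
# Hypothetical source rows from one site: weight in pounds, temperature in Fahrenheit.
SOURCE_ROWS = [
    {"pt": "A1", "wt_lb": 154.0, "temp_f": 98.6},
    {"pt": "A2", "wt_lb": None, "temp_f": 101.3},
]

# Mapping step: local field -> (LOINC code, UCUM unit, conversion into that unit).
MAPPING = {
    "wt_lb": ("29463-7", "kg", lambda lb: lb * 0.45359237),   # body weight
    "temp_f": ("8310-5", "Cel", lambda f: (f - 32) * 5 / 9),  # body temperature
}

# Hypothetical plausibility ranges used in the validation step.
PLAUSIBLE = {"29463-7": (0.5, 400.0), "8310-5": (25.0, 45.0)}


def profile(rows):
    """Profiling: share of non-missing values per source field."""
    fields = sorted({k for r in rows for k in r})
    return {f: sum(r.get(f) is not None for r in rows) / len(rows) for f in fields}


def transform(rows):
    """Transformation: apply the mapping and unit conversions, one observation per value."""
    out = []
    for r in rows:
        for field, (loinc, unit, convert) in MAPPING.items():
            value = r.get(field)
            if value is not None:  # missing values are skipped, not invented
                out.append({"person": r["pt"], "loinc": loinc,
                            "value": round(convert(value), 2), "unit": unit})
    return out


def validate(observations):
    """Validation: return observations outside the plausible range for their concept."""
    return [o for o in observations
            if not PLAUSIBLE[o["loinc"]][0] <= o["value"] <= PLAUSIBLE[o["loinc"]][1]]


observations = transform(SOURCE_ROWS)
print(profile(SOURCE_ROWS))    # {'pt': 1.0, 'temp_f': 1.0, 'wt_lb': 0.5}
print(validate(observations))  # []
```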

6 Critical Differences Between Harmonization and Standardization

Choosing between these two approaches requires understanding the trade-offs in time, cost, and data integrity. The decision often hinges on whether you are looking at the data you will collect or the data you already have.

| Feature | Data Standardization | Data Harmonization |
|---|---|---|
| Direction | Prospective (Forward-looking) | Retrospective (Backward-looking) |
| Flexibility | Low (Strict adherence to rules) | High (Adaptable to existing data) |
| Data Loss | Minimal (Data is born clean) | Significant (Up to 30% may be excluded) |
| Implementation | Fast at the point of entry | Slow (Requires complex mapping/ETL) |
| Scalability | Hard (Requires everyone to agree) | Easier (Connects existing disparate nodes) |
| Cost Profile | High upfront (Training/System changes) | High ongoing (Maintenance of mappings) |

When to Prioritize Standardization

Standardization is your best friend when you are starting fresh. It is the gold standard for:

- New Clinical Trials: Where fixed protocols and uniform data collection are mandatory for regulatory compliance. Without standardization, the FDA or EMA may reject the trial results due to data quality concerns.

- Internal System Overhauls: When an organization wants to ensure all future internal data is consistent across departments, such as merging two hospital wings into a single EHR system.

- High-Compliance Environments: Where data must satisfy strict regulatory requirements (such as HIPAA or GDPR) from the moment of creation to ensure auditability.

When to Choose Harmonization

Harmonization is the hero of “messy” real-world data. We recommend this approach for:

- Retrospective Studies: When you need to analyze data that was collected years ago using different standards. For instance, comparing 1990s cardiovascular data with modern records.

- Multi-Center Research: When integrating clinical and epidemiologic data across different hospitals or countries that will not change their internal systems. It is often politically and financially impossible to force 50 global hospitals to change their primary EHR software.

- Rare Disease Research: Where the patient population is so small that you must pool data from dozens of different global sources to achieve statistical power. In these cases, the risk of 30% data loss is often acceptable because the remaining 70% is still more data than any single institution could provide.

- Real-World Evidence (RWE): Using insurance claims, pharmacy records, and wearable data to supplement clinical trial results. These sources are inherently non-standardized and require harmonization to be useful.
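The rare disease argument is simple arithmetic. The sketch below uses hypothetical patient counts for twelve sites and applies the upper-end 30% exclusion rate to the pooled total; even after that loss, the pooled cohort is several times larger than the biggest single site.

```python
# Hypothetical patient counts for one rare disease at 12 contributing sites.
site_counts = [40, 35, 60, 25, 80, 30, 45, 20, 55, 50, 38, 42]
EXCLUSION_PERCENT = 30  # upper-end share of records lost in retrospective harmonization

pooled_raw = sum(site_counts)
pooled_retained = pooled_raw * (100 - EXCLUSION_PERCENT) // 100  # integer arithmetic, no float rounding
largest_single_site = max(site_counts)

print(pooled_raw, pooled_retained, largest_single_site)  # 520 364 80
```

In practice exclusion rates differ by site and by variable, so a real estimate would apply per-site rates rather than one pooled percentage.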

Stop Rebuilding Models: How CDMs Open up 40 Million Patient Records

One of the most effective ways to bridge the data harmonization vs data standardization gap is through Common Data Models (CDMs). Instead of every institution building their own model from scratch—a massive waste of resources—they map their local data to a shared, proven structure. This effectively standardizes the output of the harmonization process, creating a unified layer for analysis.

The Power of OMOP and PCORnet

The OMOP CDM (Observational Medical Outcomes Partnership), maintained by the OHDSI community, is perhaps the most influential model today. It is used at over 90 sites worldwide. The brilliance of OMOP lies in its standardized vocabularies; it maps local source codes (like a specific hospital’s internal code for “chest pain”) to a standard concept ID. This allows a researcher in Tokyo and a researcher in London to run the exact same R script on their respective data and get comparable results.

OMOP organizes data into specific tables such as:

- Person: Demographic information.

- Observation Period: The time span during which data was recorded.

- Drug Exposure: Details on medications prescribed or dispensed.

- Condition Occurrence: Diagnoses and clinical findings.
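The mapping from local codes to standard concepts, and the way an analysis then sees only standard IDs, can be sketched in a few lines. The local codes and concept IDs below are placeholders, not real OHDSI vocabulary entries; only a subset of the CONDITION_OCCURRENCE columns is modeled, and the convention that concept_id 0 means “no matching concept” is the one OMOP uses.

```python
from collections import Counter
from dataclasses import dataclass
from datetime import date

# Hypothetical site-specific map from local codes to standard concept IDs.
# The IDs are placeholders; real values are looked up in the OHDSI vocabularies.
SOURCE_TO_CONCEPT = {
    ("HOSP_A", "CP01"): 1001,  # local code for "chest pain"
    ("HOSP_A", "MI"): 1002,    # local code for "myocardial infarction"
}
NO_MATCHING_CONCEPT = 0  # OMOP uses concept_id 0 for source values with no standard mapping


@dataclass
class ConditionOccurrence:
    """A subset of the columns in the OMOP CONDITION_OCCURRENCE table."""
    condition_occurrence_id: int
    person_id: int
    condition_concept_id: int
    condition_start_date: date
    condition_source_value: str  # the original local code is kept for traceability


def to_condition_occurrences(site: str, rows: list) -> list:
    """Map (person_id, local_code, start_date) rows into CONDITION_OCCURRENCE records."""
    out = []
    for i, (person_id, local_code, start) in enumerate(rows, start=1):
        concept_id = SOURCE_TO_CONCEPT.get((site, local_code), NO_MATCHING_CONCEPT)
        out.append(ConditionOccurrence(i, person_id, concept_id, start, local_code))
    return out


def count_by_concept(occurrences: list) -> Counter:
    """The 'same script everywhere' step: it only ever sees standard concept IDs."""
    return Counter(o.condition_concept_id for o in occurrences)


rows = [(1, "CP01", date(2023, 1, 5)), (2, "MI", date(2023, 2, 9)), (3, "XYZ", date(2023, 3, 1))]
print(count_by_concept(to_condition_occurrences("HOSP_A", rows)))  # XYZ lands under concept 0
```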

The PCORnet Common Data Model is another prime example, focusing on patient-centered outcomes. Over 80 institutions have already transformed their data into this model, enabling research at an unprecedented scale. Similarly, i2b2 (Informatics for Integrating Biology & the Bedside) is used at over 200 sites, primarily for cohort discovery and clinical research.

These models allow for federated queries. Instead of moving sensitive patient data to a central location—which raises massive security and privacy hurdles—researchers can send the query to the data. The ACT Network, for instance, provides access to nearly 40 million patient records through this harmonized approach.
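The idea of sending the query to the data can be illustrated with a toy coordinator. This is not the protocol of ACT or any other network; it is a minimal sketch in which each site runs the same predicate locally and returns only an aggregate count. The small-cell suppression threshold of 11 is an assumption for illustration, and the sites and records are hypothetical.

```python
from dataclasses import dataclass, field
from typing import Callable, Optional

MIN_CELL_SIZE = 11  # assumed small-cell suppression threshold; networks set their own


@dataclass
class Site:
    """A data partner whose patient-level rows never leave its own environment."""
    name: str
    patients: list = field(default_factory=list)

    def run_count(self, predicate: Callable[[dict], bool]) -> Optional[int]:
        """Execute the query locally and return only an aggregate count."""
        count = sum(1 for p in self.patients if predicate(p))
        return count if count >= MIN_CELL_SIZE else None  # suppress small cells


def federated_count(sites: list, predicate: Callable[[dict], bool]) -> tuple:
    """Send the same query to every site and combine the returned aggregates."""
    results = {s.name: s.run_count(predicate) for s in sites}
    reported_total = sum(c for c in results.values() if c is not None)
    return results, reported_total


# Hypothetical sites that already share a common data model (same field names).
site_a = Site("site_a", [{"t2d": i % 3 == 0} for i in range(60)])
site_b = Site("site_b", [{"t2d": i % 3 == 0} for i in range(15)])
print(federated_count([site_a, site_b], lambda p: p["t2d"]))
# ({'site_a': 20, 'site_b': None}, 20)
```

Because suppressed sites contribute nothing to the sum, the reported total is a lower bound; that trade-off is the price of never exposing small, potentially identifying counts.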

The Role of FHIR in Data Harmonization vs Data Standardization

Modern interoperability relies heavily on HL7 FHIR (Fast Healthcare Interoperability Resources). FHIR acts as a bridge, providing native EHR API access that simplifies data exchange. Unlike older standards, FHIR uses modern web technologies (RESTful APIs) and is designed to be easy for developers to implement.

FHIR is built on “Resources”—the basic building blocks of all information exchange. Examples include Patient, Practitioner, Organization, and Observation. Each resource can be extended through “Profiles” to meet specific research needs. The HL7 FHIR Accelerator for research, known as Vulcan, is specifically designed to ensure that translational research needs are met. Vulcan bridges the gap between clinical care and clinical research by standardizing how data flows from an EHR into a research database.
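To show how a FHIR resource feeds a research table, the sketch below flattens one Observation into a row keyed by its LOINC code. The resource is hand-written to resemble what an EHR might return for a search such as `GET [base]/Observation?patient=example&code=http://loinc.org|29463-7`; no network call is made, the patient ID is hypothetical, and the parser assumes a simple Quantity-valued Observation. Real resources may carry components, several codings, or profile-specific extensions that a production parser would need to handle.

```python
import json

# A minimal, hand-written FHIR R4 Observation (body weight, coded in LOINC, unit in UCUM).
RAW_OBSERVATION = """
{
  "resourceType": "Observation",
  "status": "final",
  "code": {"coding": [{"system": "http://loinc.org", "code": "29463-7", "display": "Body weight"}]},
  "subject": {"reference": "Patient/example"},
  "effectiveDateTime": "2024-03-01",
  "valueQuantity": {"value": 72.5, "unit": "kg", "system": "http://unitsofmeasure.org", "code": "kg"}
}
"""

LOINC_SYSTEM = "http://loinc.org"


def observation_to_row(resource: dict) -> dict:
    """Flatten one Observation into a research-table row keyed by LOINC code."""
    if resource.get("resourceType") != "Observation":
        raise ValueError("expected an Observation resource")
    loinc = next((c["code"] for c in resource["code"]["coding"]
                  if c.get("system") == LOINC_SYSTEM), None)
    if loinc is None:
        raise ValueError("Observation has no LOINC coding")
    quantity = resource["valueQuantity"]
    return {
        "patient": resource["subject"]["reference"].split("/", 1)[1],
        "loinc": loinc,
        "value": quantity["value"],
        "ucum_unit": quantity["code"],
        "date": resource["effectiveDateTime"],
    }


print(observation_to_row(json.loads(RAW_OBSERVATION)))
```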

FHIR is a game-changer because it moves us closer to real-time data harmonization, allowing systems to “talk” to each other without the need for massive, static ETL projects that take months to update. It enables a “plug-and-play” approach to health data integration.

Why Data Harmonization vs Data Standardization Matters for AI

If you want to train a deep learning model on electronic health records (EHRs), your data must be harmonized. AI is notoriously sensitive to “garbage in, garbage out.” If the training data contains inconsistencies, the model will fail to generalize to new populations.

Deep learning with EHRs requires scalable and accurate data. If one hospital records blood pressure in mmHg and another in kilopascals (kPa), or stores it in a different format, the AI will learn the “noise” of the data format rather than the clinical signals of the patient.
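Where sources report blood pressure in different units (for example mmHg and kPa), a normalization step before training removes that source of noise. The sketch below converts to mmHg using 1 kPa ≈ 7.50062 mmHg and deliberately raises an error on unrecognized units rather than guessing; the function name is hypothetical.

```python
MMHG_PER_KPA = 7.50062  # 1 kPa is approximately 7.50062 mmHg


def normalize_bp_to_mmhg(value: float, unit: str) -> float:
    """Convert a blood pressure reading to mmHg, refusing units it does not recognize."""
    u = unit.strip().lower()
    if u in ("mmhg", "mm[hg]"):  # "mm[Hg]" is the UCUM code for mmHg
        return value
    if u == "kpa":
        return value * MMHG_PER_KPA
    raise ValueError(f"unrecognized blood pressure unit: {unit!r}")


print(normalize_bp_to_mmhg(120, "mmHg"))            # 120
print(round(normalize_bp_to_mmhg(16.0, "kPa"), 1))  # 120.0
```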

Harmonization suppresses cross-platform bias and batch effects—statistical variations caused by different laboratory conditions, equipment, or data entry protocols. This is critical for AI-driven data harmonization. For example, in genomic research, batch effects can lead an AI to incorrectly identify a genetic variant as a disease marker when it is actually just an artifact of the sequencing machine used at a specific site. Without rigorous harmonization, an AI might correctly identify a disease in Hospital A but fail completely in Hospital B simply because the data structure changed.
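A deliberately simple illustration of batch-effect adjustment is to rescale each site’s values to zero mean and unit variance. This is a swapped-in technique chosen for clarity, not the method any particular platform uses. It assumes the sites’ underlying populations are comparable; if they are not, it also removes genuine biological differences, which is why empirical Bayes methods such as ComBat, which can protect covariates of interest, are generally preferred for genomic data.

```python
from statistics import mean, pstdev


def standardize_within_site(values_by_site: dict) -> dict:
    """Z-score each site's values separately, removing site-level shifts in mean and spread."""
    adjusted = {}
    for site, values in values_by_site.items():
        mu, sigma = mean(values), pstdev(values)
        if sigma == 0:
            raise ValueError(f"site {site!r} has zero variance; cannot rescale")
        adjusted[site] = [(v - mu) / sigma for v in values]
    return adjusted


# Hypothetical marker where site_b's instrument reads 10 units higher across the board.
raw = {"site_a": [1.0, 2.0, 3.0], "site_b": [11.0, 12.0, 13.0]}
adjusted = standardize_within_site(raw)
print([round(x, 3) for x in adjusted["site_a"]])  # [-1.225, 0.0, 1.225]
print([round(x, 3) for x in adjusted["site_b"]])  # [-1.225, 0.0, 1.225]
```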

Implementing a Clean Core Strategy in Enterprise Systems

In the enterprise world, particularly with large ERP systems like SAP, the concept of a “Clean Core” is gaining massive traction. SAP defines clean core as keeping the digital core of the ERP free from custom code and modifications. This philosophy is directly applicable to data management in life sciences.

How does this relate to data? A clean core requires that data flowing into the system is already harmonized and standardized. If you pump “dirty” or mismatched data into your central ERP or research platform, you are forced to write custom code to handle the exceptions. This creates “technical debt”—a burden of complex, fragile code that makes future changes nearly impossible.

By standardizing data outside the core and using harmonization layers, you can:

- Support smooth system upgrades: Because you don’t have to re-write custom “data fixes” every time the software updates. The core remains standard, while the harmonization happens in a separate, agile layer.

- Enable seamless interoperability: Between your ERP, CRM, and research platforms. When data is harmonized to a common standard, it can flow between these systems without manual intervention.

- Reduce technical debt: By eliminating the “spaghetti code” often used to patch together disparate datasets. This makes the entire IT infrastructure more resilient and easier to maintain.

- Improve metadata management: A clean core strategy encourages the use of robust metadata (data about the data). This ensures that every data point has a clear lineage, showing where it came from and how it was transformed.
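Lineage can be as simple as each value carrying its origin plus an append-only log of transformations. The sketch below is a hypothetical data structure (the class names, the source system label, and the field name are illustrative), not a description of any specific metadata product.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone
from typing import Callable, List


@dataclass
class LineageStep:
    operation: str
    detail: str
    timestamp: str


@dataclass
class DataPoint:
    value: float
    source_system: str   # where the value came from
    source_field: str    # the original field name in that system
    lineage: List[LineageStep] = field(default_factory=list)

    def apply(self, operation: str, detail: str, fn: Callable[[float], float]) -> "DataPoint":
        """Transform the value and append a record of what was done and when (UTC)."""
        self.value = fn(self.value)
        self.lineage.append(LineageStep(operation, detail, datetime.now(timezone.utc).isoformat()))
        return self


dp = DataPoint(154.0, "EHR_SITE_A", "wt_lb")
dp.apply("unit_conversion", "lb to kg (x 0.45359237)", lambda v: v * 0.45359237)
dp.apply("rounding", "2 decimal places", lambda v: round(v, 2))
print(dp.value)                                 # 69.85
print([step.operation for step in dp.lineage])  # ['unit_conversion', 'rounding']
```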

Best Practices for Multi-System Integration

To achieve this, organizations should follow a structured maturity model. The Data Harmonization Index provides insights into an organization’s maturity across several categories:

- Governance: Who makes the decisions about data definitions? A central data governance committee is essential to prevent “standardization silos” where different departments create competing standards. This committee should include both IT professionals and clinical subject matter experts.

- Sustainability: Is there ongoing financial support for data maintenance? Harmonization is not a one-time project; as source systems change (e.g., an EHR update), the “crosswalks” must be updated. Organizations must budget for the long-term maintenance of these data pipelines.

- Workforce: Do you have the data architects and engineers needed? This requires a blend of clinical knowledge (to understand the data’s meaning) and technical skill (to build the ETL pipelines). There is currently a global shortage of “bioinformaticians” who possess this dual expertise.

- Infrastructure: Do you have the Trusted Research Environments to host this data securely? Security is paramount when dealing with harmonized datasets that may contain sensitive patient information from multiple sources. Modern infrastructure must support encryption at rest and in transit, as well as robust access controls.

Assessing Your Readiness: When to Harmonize vs. Standardize

Before diving into a massive data project, you must assess your readiness. It isn’t just a technical challenge; it’s an organizational one. Organizations often face significant barriers to data sharing, including legal restrictions, lack of financial incentives, and technical debt.

The “30% Exclusion Risk” and Data Quality

We cannot stress this enough: retrospective harmonization can lead to data loss of up to roughly 30%. This happens because some data is simply too different to be reconciled. For example, if one hospital records “Smoking Status” as a simple Yes/No, and another records it as “Packs per Day,” you can harmonize them into a Yes/No format, but you lose the granular detail of the second dataset.

If your research depends on that granularity—for instance, studying the dose-response relationship of nicotine—you cannot rely on retrospective harmonization. In such cases, you must prioritize standardization through prospective collection, ensuring every site uses the same “Packs per Day” metric from the start.
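The smoking example is a many-to-one mapping, which is why the detail cannot come back. The sketch below collapses packs per day into Yes/No under a labeled assumption: that “Yes” in the first hospital means “currently smokes.” Whether it actually means current or ever smoking is itself a semantic question the crosswalk has to settle.

```python
from typing import Optional


def smoking_to_yes_no(packs_per_day: Optional[float]) -> Optional[str]:
    """Collapse a granular packs-per-day value into the coarser Yes/No target."""
    if packs_per_day is None:
        return None  # missing stays missing
    if packs_per_day < 0:
        raise ValueError("packs_per_day cannot be negative")
    return "Yes" if packs_per_day > 0 else "No"  # assumes Yes means "currently smokes"


site_b_values = [0.0, 0.5, 1.0, 2.0]
harmonized = [smoking_to_yes_no(v) for v in site_b_values]
print(harmonized)  # ['No', 'Yes', 'Yes', 'Yes']
```

Half a pack and two packs both become “Yes,” and no inverse function can recover the original dose, so any dose-response analysis has to rely on prospectively standardized collection.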

The FAIR Data Principles

A key framework for assessing readiness is the FAIR Principles, which state that data should be:

- Findable: Easy to find by both humans and computers, with rich metadata and unique identifiers.

- Accessible: Stored in a way that it can be accessed under appropriate authorizations.

- Interoperable: Ready to be integrated with other data through common languages and vocabularies (the core of harmonization).

- Reusable: Well-documented so that it can be used in future research beyond its original purpose.

Challenges in Data Integration

The road to integrated data is paved with problems that require both technical and strategic solutions:

- Data Silos: Information trapped in legacy systems that don’t have APIs. These often require manual data extraction, which is prone to error and slow. Breaking these silos requires a combination of modern middleware and organizational willpower.

- Wasted Spending: Estimates suggest a significant percentage of healthcare spending is wasted due to the inability to share data effectively. This includes redundant tests because a doctor cannot see a patient’s results from another hospital. Effective harmonization can save billions by reducing these redundancies.

- Semantic Interoperability: The “Young Adult” problem—where two systems use the same word but mean different things. This is one of the biggest clinical challenges in health data. Solving this requires deep clinical expertise to ensure that the mapping doesn’t change the medical intent of the data.

- Legal and Ethical Hurdles: Harmonizing data across international borders (e.g., between the US and EU) requires navigating different privacy laws like HIPAA and GDPR. This often necessitates a federated approach where data stays in its country of origin (data sovereignty) and only the analysis results are shared.

A Decision Framework

To decide your path, ask these three questions:

- Is the data already collected? If yes, harmonization is your only option. If no, standardization is the goal.

- What is the required level of precision? If you need 100% accuracy and zero data loss, you must standardize the collection process.

- What is the budget for maintenance? Standardization has high upfront costs (training, system changes) but lower maintenance. Harmonization requires ongoing effort to keep mappings current as source systems evolve.
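The three questions can be encoded as a small decision function. This is a hypothetical sketch of the framework above, not a formal scoring method; the field names and the exact wording of the recommendations are illustrative.

```python
from dataclasses import dataclass


@dataclass
class Project:
    data_already_collected: bool
    requires_full_granularity: bool  # e.g. dose-response analyses, no tolerance for data loss
    can_fund_ongoing_mapping: bool   # budget for maintaining crosswalks as source systems change


def recommend(p: Project) -> str:
    """Apply the three framework questions in order."""
    if not p.data_already_collected:
        return "standardize"
    if p.requires_full_granularity:
        # Harmonization cannot restore detail that was never captured consistently.
        return "harmonize + standardize new collection"
    if not p.can_fund_ongoing_mapping:
        return "harmonize (budget for mapping maintenance first)"
    return "harmonize"


print(recommend(Project(False, True, True)))   # standardize
print(recommend(Project(True, False, True)))   # harmonize
print(recommend(Project(True, True, False)))   # harmonize + standardize new collection
```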

Frequently Asked Questions

Can you have harmonization without standardization?

Yes. In fact, most retrospective research is harmonization without standardization. You are taking data that was collected without a shared standard and using technical “crosswalks” to make it comparable after the fact. However, it is much harder and carries a higher risk of data loss.

What are the benefits of using the OMOP Common Data Model?

OMOP allows for large-scale analytics across disparate datasets. Because it’s used at over 90 sites worldwide, it provides a “lingua franca” for health data. It enables researchers to run the same analytic code across multiple different institutions without moving the data, ensuring both privacy and scale.

How long does a typical data harmonization project take?

For complex research projects, data harmonization can require up to 6 months to complete. This includes the time needed to profile sources, define the target schema, write transformation rules, and validate the results. Using automated platforms can significantly reduce this timeline.

Conclusion: Powering the Future of Federated AI

The debate of data harmonization vs data standardization isn’t about choosing a winner—it’s about building a strategy that uses both. At Lifebit, we believe the future of research is federated.

Our next-generation federated AI platform enables secure, real-time access to global biomedical and multi-omic data. By utilizing our Trusted Research Environment (TRE) and Trusted Data Lakehouse (TDL), organizations can overcome the problems of data silos without compromising security. Whether you are standardizing new clinical trial data or harmonizing decades of legacy records, our R.E.A.L. (Real-time Evidence & Analytics Layer) delivers the insights you need to drive the next medical breakthrough.

Ready to turn your data silos into a unified research engine? Learn more about Lifebit’s data solutions and how we can help you navigate the complexities of data integration at scale.