A Guide to Harmonizing Data with the Biomedical Lakehouse Paradigm

Biomedical Data Lakehouse: Stop Losing 30% of Global Data to Silos

A biomedical data lakehouse is a unified data architecture that combines the scalability and flexibility of data lakes with the governance and performance of data warehouses—purpose-built to handle diverse healthcare data types including genomics, electronic health records (EHRs), medical imaging, and real-time wearable device streams. It enables:

- Unified storage for structured (lab results), semi-structured (clinical notes), and unstructured data (DICOM images) in open formats like Parquet

- Advanced access controls including RBAC, PBAC, and ABAC to meet HIPAA, GDPR, and IRB compliance requirements

- Real-time analytics alongside batch processing for population health insights and AI/ML workloads

- Semantic integration through metadata enrichment and ontological frameworks for cross-dataset interoperability

- Cost efficiency, with up to 10x smaller storage footprints than raw CSV files and roughly 50% faster analytical queries when data is stored in columnar formats like Parquet

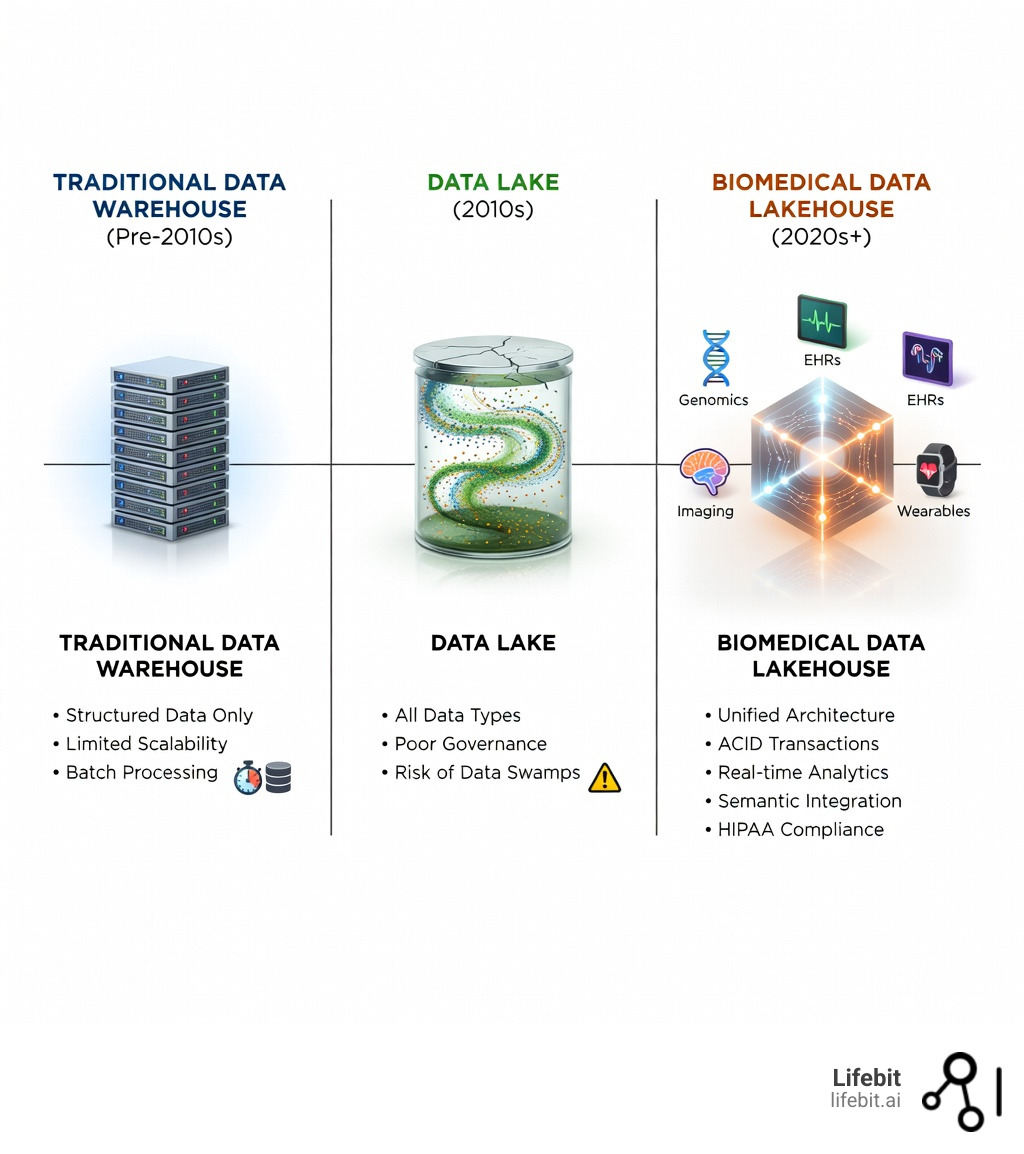

Healthcare organizations face an unprecedented data explosion. Roughly 30% of the world’s data volume now comes from the healthcare industry, and that volume is expected to grow at a compound annual growth rate (CAGR) of 36% through 2025. Yet most of this information remains trapped in disconnected silos. Traditional data warehouses struggle with unstructured medical images and genomic sequences, while data lakes often devolve into ungoverned “data swamps” lacking the reliability required for clinical decision-making.

Legacy systems force researchers to wait weeks for data processing that should take hours. They prevent clinicians from accessing real-time insights from wearable devices when patients need immediate interventions. They create barriers between genomic data and clinical records, making it nearly impossible to achieve the holistic patient view required for precision medicine. For example, a researcher looking to correlate a specific genetic variant with long-term cardiovascular outcomes might have to manually request data from three different departments, wait for ETL (Extract, Transform, Load) processes to finish, and then spend days cleaning the data before a single analysis can begin.

The biomedical data lakehouse paradigm solves these challenges by unifying diverse data types under a single architecture that scales to petabytes while maintaining strict governance. Organizations like Regeneron have reduced genotype-phenotype query times from 30 minutes to 3 seconds across 1.5 million exomes. Others have applied machine learning to over 17 million electronic health records to find new treatment indications—workloads that were impossible under traditional architectures.

I’m Maria Chatzou Dunford, CEO and Co-founder of Lifebit, where I’ve spent over 15 years building computational biology and AI platforms that power secure, compliant biomedical data lakehouse implementations for pharmaceutical organizations and public sector institutions worldwide. Before founding Lifebit, I contributed to breakthrough tools like Nextflow at the Centre for Genomic Regulation, giving me deep expertise in the technical and regulatory challenges of harmonizing multi-omic datasets at scale.

Biomedical Data Lakehouse: Query 1.5M Exomes in 3 Seconds, Not 30 Minutes

To understand the biomedical data lakehouse, we must first look at the “identity crisis” healthcare data has faced for decades. Historically, we had two choices. On one hand, we had the Data Warehouse: great for structured lab results and billing data, but it would choke on a whole-genome sequence or a high-resolution MRI scan. On the other, we had the Data Lake: a vast reservoir where you could dump anything, but finding a specific patient record was like looking for a needle in a haystack—if the haystack was also underwater.

A biomedical data lakehouse is the “best of both worlds” evolution. It implements data warehouse-like structures and management functions directly on top of the low-cost, flexible storage used by data lakes. For us in the life sciences, this means we can finally store heterogeneous data—multi-omics, EHRs, digital pathology, and clinical notes—in one place without sacrificing the ACID (Atomicity, Consistency, Isolation, Durability) transactions needed for data integrity. ACID compliance is critical in healthcare; it ensures that if a clinician updates a patient’s allergy record while a researcher is running a query, the data remains consistent and reliable for both parties.
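
The allergy-record scenario above can be sketched with Python's built-in `sqlite3` module standing in for a lakehouse table format. This is only an illustration of atomicity, not how Delta Lake or any specific lakehouse implements it; the table names, the `update_allergy` helper, and the sample patient are all hypothetical.

```python
import sqlite3

# In-memory SQLite database used as a stand-in for an ACID-compliant table.
conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE allergies (patient_id TEXT PRIMARY KEY, allergy TEXT NOT NULL)")
conn.execute("CREATE TABLE audit_log (patient_id TEXT, note TEXT)")
conn.execute("INSERT INTO allergies VALUES ('P001', 'penicillin')")
conn.commit()


def update_allergy(conn, patient_id, new_allergy):
    """Write the audit entry and the allergy update as one atomic unit."""
    try:
        with conn:  # commits on success, rolls back if any statement fails
            conn.execute(
                "INSERT INTO audit_log VALUES (?, ?)",
                (patient_id, "allergy updated"),
            )
            conn.execute(
                "UPDATE allergies SET allergy = ? WHERE patient_id = ?",
                (new_allergy, patient_id),
            )
        return True
    except sqlite3.IntegrityError:
        return False


def read_allergy(conn, patient_id):
    """What a concurrent reader would see: only committed data."""
    row = conn.execute(
        "SELECT allergy FROM allergies WHERE patient_id = ?", (patient_id,)
    ).fetchone()
    return row[0]


ok = update_allergy(conn, "P001", "penicillin, latex")
# A NULL allergy violates the NOT NULL constraint, so the whole transaction,
# including the audit entry written just before it, is rolled back.
failed = update_allergy(conn, "P001", None)
```

After the failed second update, the record still reads `penicillin, latex` and the audit log holds a single entry, so a researcher querying the table never sees a half-applied change.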

| Feature | Data Warehouse | Data Lake | Biomedical Data Lakehouse |
|---|---|---|---|
| Data Types | Structured only | All (Structured to Unstructured) | All (Optimized for Multi-omics/Imaging) |
| Schema | Schema-on-write | Schema-on-read | Decoupled (Flexible & Governed) |
| Performance | High for SQL | Low for SQL | High for SQL & AI/ML |
| Governance | Strong | Weak (“Data Swamps”) | Advanced (RBAC, PBAC, ABAC) |
| Cost | High | Low | Low (Open formats like Parquet) |

By adopting this architecture, we eliminate the need to move data between disparate systems for different tasks. You can run a SQL query for patient demographics and a Python-based machine learning model for genomic variant analysis on the exact same data footprint. This “zero-copy” approach is a game-changer for large-scale research. If you’re curious about the foundational shift, check out our deep dive on what is a data lakehouse.

Scaling biomedical data lakehouse workloads for population genomics

The true test of any architecture is its performance at the “mega-biobank” scale. Consider the work of Regeneron. Managing genomic data for 1.5 million exomes is a Herculean task that typically grinds traditional systems to a halt. In a traditional setup, genomic data is often stored in flat files (like VCFs) that are difficult to query alongside clinical phenotypes. Researchers would have to extract data into a temporary environment, join it with clinical records, and then run their analysis.

By moving to a lakehouse-style architecture, they achieved a staggering reduction in processing time: what used to take 3 weeks was compressed into just 5 hours. This was achieved by leveraging distributed computing frameworks like Apache Spark to process genomic variants in parallel across thousands of nodes.

Even more impressive is the query speed. Genotype-phenotype queries, the bread and butter of drug discovery, dropped from 30 minutes to just 3 seconds, a 600-fold speedup. This isn’t just a technical win; it’s a scientific one. When researchers get answers in seconds rather than minutes, they can test hypotheses interactively and pivot their research based on immediate feedback. For a more academic look at these structures, you can explore this research on medical big data warehouse architecture.

Real-time analytics and streaming data integration

In modern healthcare, data isn’t just sitting in a file; it’s flowing. Wearable devices, such as the continuous glucose monitors provided by Livongo, stream data in real-time. A traditional batch-processed warehouse can’t help a patient whose glucose levels are spiking right now.

A biomedical data lakehouse supports streaming data integration through technologies like Apache Spark Structured Streaming or Apache Flink. This allows for immediate interventions, such as alerting a care team when a patient’s vitals deviate from their baseline. This capability extends to hospital operations, such as real-time ICU capacity management and sepsis prediction. By unifying streaming data with historical records, we can move from descriptive analytics (“What happened?”) to predictive insights (“What will happen next?”). For instance, a lakehouse can combine a patient’s historical EHR data with their current heart rate stream to predict the likelihood of a cardiac event within the next 12 hours. Learn more about the application of data lakehouses in life sciences to see how this transforms patient care.
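
As a rough sketch of the "deviates from baseline" idea, the snippet below keeps a rolling window of recent readings and flags any value that falls too far from the window's mean. It is plain Python rather than a streaming engine, and the `BaselineMonitor` class, the window size, the threshold, and the sample glucose readings are all assumptions made for illustration, not clinical guidance.

```python
from collections import deque
from statistics import mean, stdev


class BaselineMonitor:
    """Flags readings that deviate strongly from a rolling baseline."""

    def __init__(self, window=5, threshold=3.0):
        self.history = deque(maxlen=window)  # most recent normal readings
        self.threshold = threshold           # allowed deviation, in standard deviations

    def observe(self, value):
        """Return True if the reading should raise an alert."""
        alert = False
        if len(self.history) == self.history.maxlen:
            baseline = mean(self.history)
            spread = stdev(self.history)
            if spread > 0 and abs(value - baseline) > self.threshold * spread:
                alert = True
        if not alert:
            # Only normal readings update the baseline, so one spike
            # does not distort what counts as typical for this patient.
            self.history.append(value)
        return alert


# Simulated stream of glucose readings (mg/dL); the sixth value is a spike.
readings = [100, 102, 98, 101, 99, 180, 100]
monitor = BaselineMonitor(window=5, threshold=3.0)
alerts = [monitor.observe(r) for r in readings]
```

In a real deployment the same per-patient logic would typically run inside a streaming job, with the baseline seeded from the patient's historical records rather than from the first few readings.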

Biomedical Data Lakehouse: Cut Storage Costs by 90% with Parquet

How does it actually work under the hood? We often use the Medallion Architecture to describe the data’s journey through the lakehouse, ensuring that data quality improves as it moves through the pipeline:

- Bronze (Raw): This is the landing zone. Whether it’s a VCF file from a sequencer, a JSON blob from an EHR, or a raw DICOM image from a CT scanner, it lands here in its raw form. This layer preserves the original data for auditability and re-processing if needed.

- Silver (Validated): Here, we perform the “cleanup.” Data is filtered, cleaned, and augmented. This is where we apply data lakehouse best practices to ensure the data is research-ready. In a biomedical context, this involves mapping local clinical codes to international standards like SNOMED-CT, LOINC, or the OMOP Common Data Model (CDM).

- Gold (Enriched): This is the “business-level” data, optimized for specific use cases like drug target identification or clinical reporting. Data in the Gold layer is often aggregated or joined into “feature tables” that are ready for machine learning models.
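
The three layers above can be sketched in a few lines of plain Python. This is a toy pipeline under stated assumptions: the records, the `LOCAL_TO_STANDARD` mapping, and the `STD:GLUCOSE` identifier are placeholders rather than real SNOMED-CT, LOINC, or OMOP content, and a production pipeline would run on a distributed engine rather than on in-memory lists.

```python
from collections import defaultdict

# Bronze: raw records exactly as they arrived (every value is still a string).
bronze = [
    {"patient_id": "P001", "local_code": "GLU", "value": "5.4", "unit": "mmol/L"},
    {"patient_id": "P001", "local_code": "GLU", "value": "6.1", "unit": "mmol/L"},
    {"patient_id": "P002", "local_code": "GLU", "value": "n/a", "unit": "mmol/L"},
    {"patient_id": "P002", "local_code": "XYZ", "value": "1.0", "unit": "mmol/L"},
    {"patient_id": "P002", "local_code": "GLU", "value": "7.0", "unit": "mmol/L"},
]

# Hypothetical mapping from one site's local lab codes to a shared vocabulary.
LOCAL_TO_STANDARD = {"GLU": "STD:GLUCOSE"}


def to_silver(raw_rows):
    """Silver: keep rows that parse cleanly and map to a standard code."""
    silver = []
    for row in raw_rows:
        standard_code = LOCAL_TO_STANDARD.get(row["local_code"])
        if standard_code is None:
            continue  # unmapped code: the raw row remains available in Bronze
        try:
            value = float(row["value"])
        except ValueError:
            continue  # unparseable measurement
        silver.append({
            "patient_id": row["patient_id"],
            "code": standard_code,
            "value": value,
            "unit": row["unit"],
        })
    return silver


def to_gold(silver_rows):
    """Gold: one aggregated feature (mean value) per patient and code."""
    grouped = defaultdict(list)
    for row in silver_rows:
        grouped[(row["patient_id"], row["code"])].append(row["value"])
    return {key: sum(values) / len(values) for key, values in grouped.items()}


silver = to_silver(bronze)
gold = to_gold(silver)
```

Because Bronze is never modified, rows rejected at the Silver step can be re-processed later, for example once the mapping table gains an entry for the unmapped code.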

A key component here is the Apache Parquet format. Parquet is a columnar storage format that often cuts storage requirements by an order of magnitude (roughly 10x) compared to raw CSV files. Because it’s optimized for analytical queries, we’ve seen PySpark query performance improve by 50% on massive datasets. Unlike row-based formats, Parquet allows the system to read only the specific columns needed for a query, which is essential when dealing with genomic tables that might have thousands of columns. Combined with technologies like Delta Lake, which brings reliability and ACID transactions to the lakehouse, we finally have a stable foundation for the FAIR Guiding Principles for data management: making data Findable, Accessible, Interoperable, and Reusable.
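
To make the column-pruning point concrete, here is a toy comparison of row-oriented and column-oriented layouts in plain Python. It does not read or write Parquet, and it ignores compression and encoding; the sample table and helper names are invented purely to count how many values each layout has to touch.

```python
# A small table in row-oriented form: one dict per sample.
rows = [
    {"sample_id": f"S{i}", "gene_a": i % 3, "gene_b": i % 2, "age": 40 + i}
    for i in range(4)
]


def to_columnar(rows):
    """Pivot row-oriented records into one list per column."""
    columns = {}
    for row in rows:
        for name, value in row.items():
            columns.setdefault(name, []).append(value)
    return columns


def values_read_row_layout(rows):
    """A row-oriented scan touches every field of every row."""
    return sum(len(row) for row in rows)


def values_read_columnar(columns, wanted):
    """A columnar scan touches only the requested columns."""
    return sum(len(columns[name]) for name in wanted)


columns = to_columnar(rows)
row_cost = values_read_row_layout(rows)               # 4 rows x 4 fields
column_cost = values_read_columnar(columns, ["age"])  # 4 values from one column
```

With four columns the difference is 16 values versus 4; with thousands of genomic columns and a query that needs only a handful, the gap grows accordingly.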

Metadata management and the CURIE Knowledge Graph

Data without context is just noise. In a biomedical data lakehouse, metadata management is what turns a file into an insight. Semantic integration involves resolving differences in nomenclature and formats across disparate sources. For example, one hospital might record a lab result as “Glucose,” while another uses “Blood Sugar.” A robust lakehouse uses a metadata layer to map both to the same LOINC code.

For instance, a top-5 global pharmaceutical company recently built a company-specific CURIE Biomedical Knowledge Graph. By integrating thousands of datasets into their lakehouse, they mapped over a billion proprietary relationships. This allows researchers to see how a specific gene variant might interact with a protein, which in turn might be linked to a specific clinical symptom recorded in an EHR—all through a unified semantic framework. This graph-based approach enables “discovery by association,” where researchers can find hidden links between diseases and potential drug targets that would be invisible in a standard relational database.
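
The "discovery by association" idea can be sketched as a path search over subject-predicate-object triples. The identifiers, predicates, and helper functions below are hypothetical and are not taken from the knowledge graph described above; a real graph at that scale would live in a dedicated graph store rather than a Python dictionary.

```python
from collections import deque

# Hypothetical triples: (subject, predicate, object).
TRIPLES = [
    ("variant:V1", "affects", "protein:P1"),
    ("protein:P1", "associated_with", "symptom:S1"),
    ("variant:V2", "affects", "protein:P2"),
]


def build_adjacency(triples):
    """Index triples by subject for fast neighbor lookup."""
    adjacency = {}
    for subject, predicate, obj in triples:
        adjacency.setdefault(subject, []).append((predicate, obj))
    return adjacency


def find_path(adjacency, start, goal):
    """Breadth-first search; returns the chain of entities and predicates, or None."""
    queue = deque([(start, [start])])
    visited = {start}
    while queue:
        node, path = queue.popleft()
        if node == goal:
            return path
        for predicate, neighbor in adjacency.get(node, []):
            if neighbor not in visited:
                visited.add(neighbor)
                queue.append((neighbor, path + [predicate, neighbor]))
    return None


graph = build_adjacency(TRIPLES)
linked = find_path(graph, "variant:V1", "symptom:S1")
unlinked = find_path(graph, "variant:V2", "symptom:S1")
```

Here `linked` traces the variant through the protein to the symptom, while `unlinked` is `None` because no chain of relationships connects the second variant to that symptom.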

Ensuring HIPAA compliance within a biomedical data lakehouse

Security in healthcare isn’t optional; it’s the prerequisite. A biomedical data lakehouse must ensure compliance with regulations like HIPAA, GDPR, and strict IRB protocols. We move beyond simple Role-Based Access Control (RBAC) to more sophisticated models:

- PBAC (Policy-Based Access Control): Permissions are based on specific policies (e.g., “Researchers can only access de-identified data between 9 AM and 5 PM”).

- ABAC (Attribute-Based Access Control): Access is granted based on attributes of the user (e.g., their certification level), the data (e.g., its sensitivity level), and the environment (e.g., the IP address of the request).

This allows for fine-grained permissions where two researchers on the same project might see different data levels based on their specific IRB approvals. For example, a lead investigator might see full clinical notes, while a data scientist only sees de-identified structured data. For a deeper dive into how we handle these complexities, explore our guide on data lakehouse governance.
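
A minimal ABAC check might look like the sketch below, where the decision depends on attributes of the user, the data, and the request environment. The attribute names, role labels, and rules are assumptions chosen to mirror the lead investigator and data scientist example; real deployments usually express such rules in a policy engine rather than in application code.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class User:
    role: str
    irb_approvals: frozenset  # protocol IDs this user is approved for


@dataclass(frozen=True)
class Resource:
    sensitivity: str   # "deidentified" or "identified"
    required_irb: str  # protocol ID that governs this dataset


@dataclass(frozen=True)
class Context:
    on_trusted_network: bool


def is_allowed(user, resource, context):
    """Grant access only if user, data, and environment attributes all line up."""
    if not context.on_trusted_network:
        return False
    if resource.required_irb not in user.irb_approvals:
        return False
    if resource.sensitivity == "identified":
        return user.role == "lead_investigator"
    return True


lead = User(role="lead_investigator", irb_approvals=frozenset({"IRB-001"}))
scientist = User(role="data_scientist", irb_approvals=frozenset({"IRB-001"}))
clinical_notes = Resource(sensitivity="identified", required_irb="IRB-001")
structured_data = Resource(sensitivity="deidentified", required_irb="IRB-001")
inside = Context(on_trusted_network=True)
outside = Context(on_trusted_network=False)
```

Both users share the same project approval, yet only the lead investigator can open the identified clinical notes, and neither can read anything from outside the trusted network.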

Biomedical Data Lakehouse: Find New Drug Targets Across 17M Records

The ultimate goal of a biomedical data lakehouse is to fuel the AI revolution in medicine. By providing a unified, high-quality data source, we can train more accurate models for several critical areas:

1. Biomarker Identification

Sifting through multi-omic data to find early signs of disease is a massive computational challenge. A lakehouse allows researchers to combine genomic, proteomic, and metabolomic data to identify “signatures” of disease long before symptoms appear. For example, by analyzing longitudinal blood samples alongside genomic data, researchers could search for protein markers that predict the onset of Alzheimer’s disease years in advance.

2. Disease Prediction and Risk Stratification

Using longitudinal EHR data to predict patient outcomes is essential for population health management. Machine learning models can be trained on the lakehouse to identify patients at high risk for chronic conditions like Type 2 diabetes or heart failure. This allows healthcare providers to intervene early with personalized wellness plans, significantly reducing the long-term cost of care and improving patient quality of life.

3. Digital Pathology and Medical Imaging

Automating the classification of medical images at scale requires massive amounts of labeled data. A lakehouse can store millions of Whole Slide Images (WSIs) alongside their corresponding pathology reports. AI models can then be trained to detect cancerous cells with a level of precision and speed that rivals or exceeds human pathologists. This is particularly vital in regions with a shortage of specialized medical professionals.

4. Drug Repurposing

One remarkable example involved applying machine learning to 17 million+ electronic health records to identify new treatment indications for existing, approved therapies. By looking for “off-label” success stories in the data, researchers found that a drug originally approved for hypertension showed significant promise in treating a specific type of autoimmune disorder. This type of population-level analysis requires the massive scale and high-performance compute that only a lakehouse can provide. For more on how these biobanks are changing the game, see the research on mega-biobanks for genetic influences and our AI data lakehouse ultimate guide.

Biomedical Data Lakehouse: 6 Critical Questions Answered for Researchers

How does a lakehouse handle unstructured medical imaging?

The lakehouse uses specialized libraries like pydicom and python-gdcm to process DICOM images at scale. By integrating these with Spark, we can parallelize metadata extraction and thumbnail generation, storing the results in Delta Lake tables. This allows researchers to query imaging metadata using standard SQL, effectively making unstructured images “searchable” alongside clinical data. For example, a researcher can query for “all CT scans of patients over 65 with a history of smoking and a specific genetic mutation.”
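
The cohort query described above could look roughly like this once imaging metadata has been extracted into a table. The sketch uses Python's built-in `sqlite3` module in place of Spark SQL over Delta Lake tables, and the schema, column names, and sample rows are hypothetical; the metadata is assumed to have been pulled from DICOM headers in an earlier step.

```python
import sqlite3

conn = sqlite3.connect(":memory:")
conn.executescript("""
CREATE TABLE patients (
    patient_id TEXT PRIMARY KEY,
    age INTEGER,
    smoking_history INTEGER,  -- 1 = yes, 0 = no
    variant TEXT
);
CREATE TABLE imaging_metadata (
    study_id TEXT PRIMARY KEY,
    patient_id TEXT,
    modality TEXT,            -- DICOM modality code, e.g. CT or MR
    body_part TEXT
);
""")
conn.executemany("INSERT INTO patients VALUES (?, ?, ?, ?)", [
    ("P001", 71, 1, "VAR_X"),
    ("P002", 68, 0, "VAR_X"),
    ("P003", 59, 1, "VAR_X"),
    ("P004", 80, 1, "VAR_Y"),
])
conn.executemany("INSERT INTO imaging_metadata VALUES (?, ?, ?, ?)", [
    ("ST01", "P001", "CT", "CHEST"),
    ("ST02", "P001", "MR", "BRAIN"),
    ("ST03", "P002", "CT", "CHEST"),
    ("ST04", "P003", "CT", "CHEST"),
    ("ST05", "P004", "CT", "ABDOMEN"),
])
conn.commit()


def ct_scans_for_cohort(conn, min_age, variant):
    """CT studies for smokers older than min_age who carry the given variant."""
    query = """
        SELECT i.study_id
        FROM imaging_metadata AS i
        JOIN patients AS p ON p.patient_id = i.patient_id
        WHERE i.modality = 'CT'
          AND p.age > ?
          AND p.smoking_history = 1
          AND p.variant = ?
        ORDER BY i.study_id
    """
    return [row[0] for row in conn.execute(query, (min_age, variant))]


matching_studies = ct_scans_for_cohort(conn, 65, "VAR_X")
```

Only the study identifiers come back from the query; the pixel data itself stays in object storage and is fetched afterwards for the matching studies.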

What are the primary ingestion methods for clinical data?

A robust biomedical data lakehouse supports several methods:

- Batch FHIR: For standardized exchange of health information using the HL7 FHIR standard.

- NGS Integrations: Direct pipelines from sequencing platforms and genomic testing providers (e.g., Illumina, Tempus, Foundation Medicine) to ingest raw FASTQ or VCF files.

- Direct Database Connections: Real-time or scheduled pulls from EHR systems like Epic or Cerner using JDBC/ODBC connectors.

- API-Automated Uploads: For custom research pipelines and external data partners, ensuring that data from clinical trials can be ingested as soon as it is collected.
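
As one example of the ingestion step, a FHIR `Observation` resource can be flattened into a single tabular row before it lands in the lakehouse. The sample payload below is made up for illustration, and the `flatten_observation` helper and its output column names are assumptions; only the FHIR field names (`code.coding`, `subject.reference`, `valueQuantity`, `effectiveDateTime`) come from the standard.

```python
import json

# Made-up example payload following the FHIR Observation structure.
observation_json = """
{
  "resourceType": "Observation",
  "status": "final",
  "code": {
    "coding": [
      {
        "system": "http://loinc.org",
        "code": "2345-7",
        "display": "Glucose [Mass/volume] in Serum or Plasma"
      }
    ]
  },
  "subject": {"reference": "Patient/example"},
  "effectiveDateTime": "2024-01-15T08:30:00Z",
  "valueQuantity": {"value": 95, "unit": "mg/dL"}
}
"""


def flatten_observation(resource):
    """Turn one nested Observation into a flat row; missing fields become None."""
    if resource.get("resourceType") != "Observation":
        raise ValueError("expected a FHIR Observation resource")
    codings = resource.get("code", {}).get("coding", [])
    first_coding = codings[0] if codings else {}
    quantity = resource.get("valueQuantity", {})
    return {
        "patient_ref": resource.get("subject", {}).get("reference"),
        "code_system": first_coding.get("system"),
        "code": first_coding.get("code"),
        "value": quantity.get("value"),
        "unit": quantity.get("unit"),
        "effective": resource.get("effectiveDateTime"),
    }


row = flatten_observation(json.loads(observation_json))
```

A batch job would apply the same flattening to every resource in a bulk export, writing the raw JSON to the landing zone and the flattened rows to a validated table.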

How do lakehouses support FAIR data principles?

By centralizing data and metadata in open formats, lakehouses naturally support the FAIR principles (see also the key features of a federated data lakehouse). They provide:

- Findability: Through rich, searchable metadata catalogs that describe every dataset in the lakehouse.

- Accessibility: Through secure, auditable access protocols that ensure only authorized users can access sensitive data.

- Interoperability: By harmonizing data to common standards like OMOP CDM or CDISC for clinical trials.

- Reusability: By maintaining data provenance and lineage, ensuring researchers know exactly where a data point came from and how it was processed.

Can a lakehouse support Federated Learning?

Yes. A modern biomedical data lakehouse is often the foundation for federated learning, where AI models are trained across multiple institutions without the raw data ever leaving its original location. This is crucial for rare disease research, where no single hospital has enough patients to train a robust model. The lakehouse provides the local infrastructure to manage the data, while a federated layer coordinates the model training.
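
The coordination pattern can be sketched with federated averaging on a deliberately tiny model. In the snippet below each site fits a single weight for `y = weight * x` on its own data and shares only the updated weight and its sample count; the site datasets, learning rate, and function names are all illustrative assumptions, and real federated systems add secure aggregation and much larger models.

```python
def local_update(weight, data, lr=0.01):
    """One gradient-descent step on a site's private data for y = weight * x."""
    gradient = sum(2 * (weight * x - y) * x for x, y in data) / len(data)
    return weight - lr * gradient, len(data)


def federated_average(updates):
    """Combine site weights, weighting each by its number of samples."""
    total_samples = sum(count for _, count in updates)
    return sum(weight * count for weight, count in updates) / total_samples


def train(sites, rounds=50, lr=0.01):
    """Coordinator loop: broadcast the weight, collect updates, average them."""
    weight = 0.0
    for _ in range(rounds):
        # Only (weight, sample_count) pairs leave each site, never the raw rows.
        updates = [local_update(weight, data, lr) for data in sites]
        weight = federated_average(updates)
    return weight


# Two hospitals holding private (x, y) pairs that follow y = 2 * x.
site_a = [(1.0, 2.0), (2.0, 4.0)]
site_b = [(3.0, 6.0), (4.0, 8.0), (5.0, 10.0)]
global_weight = train([site_a, site_b])
```

After 50 rounds the shared weight settles very close to 2, the value neither site could have estimated as reliably from its own handful of records.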

How does the lakehouse handle data versioning?

Through technologies like Delta Lake, the lakehouse supports “Time Travel” or data versioning. This allows researchers to access previous versions of a dataset, which is essential for reproducibility in science. If a study was conducted six months ago, a researcher can query the data exactly as it existed at that time, even if the underlying records have since been updated.
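
The idea behind versioned reads can be illustrated with a small in-memory table that keeps a snapshot per commit. This is a conceptual sketch only: Delta Lake tracks versions through a transaction log over data files rather than by copying whole snapshots, and the `VersionedTable` class and sample records are invented for the example.

```python
class VersionedTable:
    """Keeps every committed state so earlier versions stay readable."""

    def __init__(self):
        self._versions = [{}]  # version 0 is the empty table

    def commit(self, changes):
        """Apply changes on top of the latest snapshot; return the new version number."""
        snapshot = dict(self._versions[-1])
        snapshot.update(changes)
        self._versions.append(snapshot)
        return len(self._versions) - 1

    def read(self, version=None):
        """Read the latest state, or the state as of a given version."""
        if version is None:
            version = len(self._versions) - 1
        return dict(self._versions[version])


table = VersionedTable()
study_version = table.commit({"P001": "HbA1c 6.9%", "P002": "HbA1c 5.4%"})
table.commit({"P001": "HbA1c 6.2%"})  # record updated after the study ran

as_analyzed = table.read(version=study_version)
current = table.read()
```

Re-running the original analysis against `study_version` reproduces the inputs the study actually used, even though the current table now shows a different value for the same patient.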

What is the cost impact of moving from a warehouse to a lakehouse?

Most organizations see a significant reduction in Total Cost of Ownership (TCO). By using low-cost cloud object storage (like Amazon S3 or Azure Blob Storage) and open-source formats, organizations can avoid the high licensing fees associated with proprietary data warehouses. Furthermore, the ability to decouple storage from compute means you only pay for the processing power you use during active analysis.

Biomedical Data Lakehouse: Start Turning Siloed Data Into Life-Saving Discoveries

Building a biomedical data lakehouse is not without its challenges. It requires careful cost management, a commitment to data freshness, and a robust governance framework. Organizations must also invest in the cultural shift required to move away from data silos and toward a collaborative, open-data environment. However, the outcomes—faster drug discovery, more accurate disease prediction, and a truly 360-degree view of patient health—are worth the journey.

As we look to the future, the integration of Large Language Models (LLMs) with the biomedical data lakehouse will further revolutionize the field. Imagine a researcher asking a natural language question like, “Find all patients in the lakehouse who might be eligible for our new oncology trial based on their genomic profile and recent lab results,” and receiving a curated list in seconds. This is the level of intelligence that a unified architecture makes possible.

At Lifebit, we provide a next-generation federated AI platform featuring a Trusted Data Lakehouse (TDL). Our mission is to enable secure, real-time access to global multi-omic and clinical data while ensuring the strictest regulatory compliance. By removing the silos that have traditionally hampered research, we’re helping the world’s leading institutions turn data into life-saving discoveries.

Ready to see how a unified architecture can transform your research? Explore Lifebit’s federated trusted research environment and join the new era of biomedical intelligence.