The Ultimate Guide to AI Data Governance Providers for Biomedical Research

Turn Months into Minutes: End Data Silos and Slash Compliance Risk with AI Governance

Biomedical research now generates data at an unprecedented scale. Petabytes of genomic sequences, high-resolution imaging, and real-time electronic health records (EHRs) hold the keys to the next generation of cures. Yet despite this data explosion, the industry faces a stark productivity paradox: roughly $300 billion is spent on R&D every year, while returns stagnate. Why? Because this invaluable data is overwhelmingly locked away in disconnected silos. Manual, human-driven processes for data access, cleaning, and analysis slow everything to a crawl, and complex, ever-evolving privacy regulations such as HIPAA in the US and GDPR in Europe create compliance minefields that foster a culture of risk aversion, further inhibiting collaboration.

Traditional data governance frameworks, designed for a pre-AI era of structured, centralized data, simply can’t keep pace. They rely on manual review boards, cumbersome data transfer agreements, and brittle, hard-coded rules that are incapable of managing the volume, velocity, and variety of modern biomedical data. This isn’t just an IT problem or a line item on a budget—it’s a fundamental patient outcomes problem. When a researcher has to wait six months for a data access request to be approved, or spends 80% of their time manually harmonizing datasets from different hospitals, drug discovery slows. Clinical trials are delayed. Promising therapeutic hypotheses go untested. In short, life-saving breakthroughs get delayed by months or even years, a cost measured not just in dollars, but in lives.

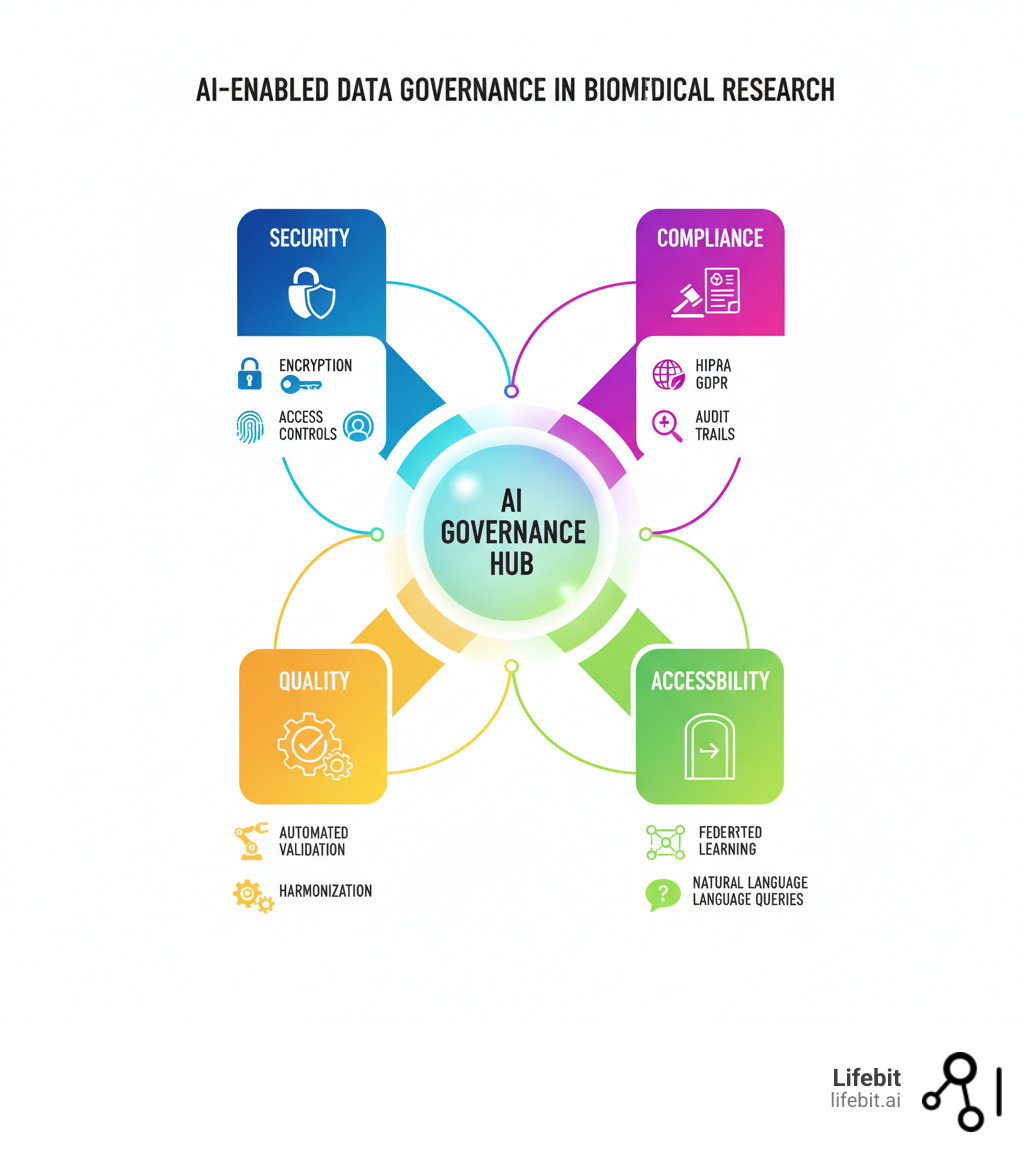

But there’s good news. A new paradigm of AI-enabled data governance is changing the game. These intelligent platforms are designed to automate the vast majority of non-scientific work that clogs research pipelines—discovering relevant datasets, cleaning and harmonizing disparate data formats, building patient cohorts, and ensuring continuous compliance. Crucially, they enable advanced privacy-preserving techniques like federated learning, allowing institutions to collaborate on sensitive data without ever moving it from its secure, local environment. They provide complete, immutable audit trails and real-time compliance monitoring, transforming governance from a reactive, punitive function into a proactive, enabling one. They turn months of frustrating manual work into minutes of automated, reproducible analysis.

As Maria Chatzou Dunford, CEO and Co-founder of Lifebit with over 15 years in computational biology and health-tech, I’ve seen firsthand how the right platform transforms fragmented, insecure data ecosystems into powerful, secure, and collaborative research environments. This is the essential key to unlocking the next wave of biomedical breakthroughs and finally realizing the full promise of precision medicine.

Your Research Data is Killing Patients: Fix These 5 Failures Before HIPAA Fines Hit

Biomedical research today generates an avalanche of information—EHRs, genomic sequences, medical imaging, wearable data, proteomics, and more—that holds the promise of life-saving breakthroughs. But managing this data securely, ethically, and effectively has become a monumental challenge that keeps research leaders, CIOs, and compliance officers up at night. The failure to do so doesn’t just risk fines; it actively hinders medical progress.

Here are the five critical failure points that modern AI governance must solve:

- Data Volume and Variety: The scale of data is staggering. The raw sequencing data for a single whole genome can exceed 100 gigabytes, and large-scale studies involve thousands of participants. This data is also deeply multimodal, spanning unstructured clinical notes, structured lab results, high-resolution pathology slides, and real-time data streams from IoT devices. Traditional relational databases and data warehouses were never designed to integrate these diverse sources coherently, creating a technical barrier to a holistic view of patient health.

- Regulatory Complexity: The legal landscape is a minefield. Regulations like the Health Insurance Portability and Accountability Act (HIPAA) in the United States and the General Data Protection Regulation (GDPR) in Europe demand strict compliance, and penalties for violations can run into the millions (GDPR fines, for example, can reach €20 million or 4% of global annual turnover, whichever is higher). GDPR’s ‘right to be forgotten’ and its strict rules on cross-border data transfers alone can make multinational clinical trials a logistical nightmare. These legal requirements are a primary driver for seeking automated, auditable governance solutions that can enforce policies at scale.

- Patient Privacy and Trust: Every data point represents a person who has placed their trust in the research institution. Proper de-identification, anonymization, and granular access controls are not just legal requirements; they are fundamental ethical obligations. A single data breach can cause irreparable harm to patients and shut down research programs overnight. The risk of re-identification, where supposedly anonymized datasets are linked with other data sources to unmask individuals, is a growing concern that requires advanced technological safeguards beyond simple data masking.

- Data Quality and Bias: The adage “garbage in, garbage out” is dangerously true in healthcare. AI models are exquisitely sensitive to the quality of their training data. If a model is trained on data that is biased (e.g., sourced predominantly from a single demographic), incomplete (missing key variables), or inaccurate (containing data entry errors), it will produce flawed, inequitable, and potentially harmful insights. An algorithm for detecting skin cancer trained primarily on light-skinned individuals may fail catastrophically on darker skin tones. Ensuring data is clean, complete, and representative is a massive, ongoing challenge that is critical for developing safe and effective AI.

- AI-Specific Pressures: AI introduces a new layer of governance complexity. Many advanced models, like deep neural networks, operate as “black boxes,” making decisions that are difficult for humans to interpret or explain. This lack of transparency is a major barrier to clinical adoption and regulatory approval. Furthermore, AI models can suffer from “model drift,” where their performance degrades over time as real-world data changes. This creates an urgent need for ethical frameworks that prioritize transparent, explainable AI (XAI) and continuous monitoring to ensure models remain fair, accurate, and robust long after they are deployed.
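To make “continuous monitoring” for model drift concrete, here is a minimal Python sketch of one widely used drift statistic, the Population Stability Index (PSI), which compares a feature’s distribution at training time with the distribution a deployed model currently sees. The function name, the ten equal-width bins, and the small `eps` floor for empty bins are illustrative assumptions, not part of any specific product.

```python
import math
from typing import Sequence


def population_stability_index(
    expected: Sequence[float],
    actual: Sequence[float],
    bins: int = 10,
    eps: float = 1e-6,
) -> float:
    """Compare a feature's training-time distribution (expected) with its
    production distribution (actual). Larger values mean more drift."""
    lo, hi = min(expected), max(expected)
    width = (hi - lo) / bins or 1.0  # guard against a constant feature

    def proportions(values: Sequence[float]) -> list[float]:
        counts = [0] * bins
        for v in values:
            # Values outside the training range are clamped into the edge bins.
            idx = int((v - lo) / width)
            counts[min(max(idx, 0), bins - 1)] += 1
        # eps avoids log(0) when a bin is empty.
        return [max(c / len(values), eps) for c in counts]

    p_exp, p_act = proportions(expected), proportions(actual)
    return sum((a - e) * math.log(a / e) for e, a in zip(p_exp, p_act))
```

A commonly quoted rule of thumb treats PSI below 0.1 as stable and above 0.25 as a significant shift worth investigating, but thresholds should be chosen per feature and per use case.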

Why Traditional Governance Falls Short

Traditional data governance, with its reliance on manual processes, siloed systems, and human review committees, simply cannot keep up. Information gets trapped within departmental, institutional, and geographic boundaries, making a complete, longitudinal picture of a patient or population impossible. Manual data access requests, which can take months to process through ethics boards and IT departments, create massive bottlenecks that slow research to a crawl. Human-driven workflows for data cleaning and integration are not only slow but also error-prone and impossible to scale. As data volumes explode and AI models demand ever more data, these legacy systems buckle, and without real-time monitoring, compliance violations can go undetected for months, turning non-compliance from a possibility into an inevitability.

Cut Data Risk by 90%: How Lifebit’s Federated AI Turns 6-Week Projects into 2-Hour Tasks

When research institutions grapple with data chaos, they’re really asking a fundamental question: How do we turn our fragmented, siloed, and risk-laden data assets into secure, collaborative insights—without getting buried in compliance nightmares and technical debt?

That’s exactly the problem we’ve solved at Lifebit. Our next-generation federated AI platform represents a complete paradigm shift in how biomedical organizations access, govern, and analyze sensitive data. While traditional governance attempts to control data by centralizing and locking it down, we enable researchers to securely analyze data in-place through a sophisticated federated architecture. The data never moves. The analysis and insights do. This simple but powerful change dramatically reduces risk, simplifies compliance, and accelerates research.
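The “data never moves, the analysis does” pattern can be illustrated with a federated average: each site computes a local summary inside its own environment, and a coordinator combines only those summaries. The sketch below is a generic Python illustration under simple assumptions (a single numeric variable, and a hypothetical minimum cohort size of 10 before a site releases anything); the class and function names are hypothetical and this is not Lifebit’s API.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class SiteSummary:
    """The only thing a site releases: aggregate statistics, never rows."""
    n: int
    total: float


def local_summary(values: list[float], min_n: int = 10) -> SiteSummary:
    """Runs inside each site's secure environment, next to the raw data."""
    if len(values) < min_n:
        # Refuse to release aggregates computed over very small cohorts.
        raise ValueError(f"cohort too small to release (n={len(values)})")
    return SiteSummary(n=len(values), total=sum(values))


def pooled_mean(summaries: list[SiteSummary]) -> float:
    """Runs at the coordinator, which only ever sees SiteSummary objects."""
    n = sum(s.n for s in summaries)
    return sum(s.total for s in summaries) / n
```

Because the pooled mean is built from per-site counts and sums, it equals the mean that centralizing all the rows would have produced, which is why simple statistics federate so cleanly.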

Building a Secure, Scalable Data Foundation

You can’t build groundbreaking research on a shaky infrastructure. Our platform starts with a robust, cloud-native foundation designed specifically for the rigors of healthcare and life sciences. We provide HIPAA-eligible services, backed by Business Associate Agreements (BAAs), and support for achieving HITRUST certification, a rigorous framework that harmonizes HIPAA, GDPR, and other standards. This ensures your data environment meets the highest global benchmarks for security and privacy, allowing you to collaborate across borders with confidence. Our elastically scalable architecture grows with your needs, effortlessly handling everything from a few hundred patient records to petabytes of multi-omics data, and integrates cutting-edge generative AI capabilities to accelerate every workflow, from cohort discovery to clinical trial reporting.


Advanced AI and NLP for Biomedical Data

Our platform doesn’t just store data; it understands it. Using advanced Natural Language Processing (NLP), we transform unstructured data, such as physicians’ clinical notes and pathology reports, into a rich source of research-ready information while rigorously protecting patient privacy. Our de-identification-at-scale capabilities can automatically redact the 18 types of Protected Health Information (PHI) listed in the HIPAA Safe Harbor standard from millions of records. We build explainable AI (XAI) into the core of our platform, so you can understand why a model made a specific prediction, a critical feature for clinical validation and trust. This is augmented by human-in-the-loop validation workflows, in which subject matter experts review and confirm AI-driven insights, creating a feedback loop that continuously improves model accuracy and reliability.

Data Curation and Harmonization for AI Readiness

Industry surveys frequently report that data scientists and researchers spend up to 80% of their time finding, cleaning, and organizing data. We flip that equation. Our platform automates the painstaking work of data cleaning and harmonization, transforming raw, messy multi-omics (genomics, transcriptomics, proteomics), EHR, and imaging data into standardized, searchable, AI-ready datasets. We use agentic AI systems (autonomous agents that can understand goals and execute complex, multi-step tasks) to process vast biological datasets: automatically annotating files, checking for quality control issues, and uncovering hidden relationships. This frees your team from low-value manual labor so they can focus on discovery and innovation. It isn’t just about efficiency; it’s about creating high-quality, interoperable data at the speed and scale of modern research.
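As a minimal illustration of what harmonization involves, the Python sketch below maps records from two hypothetical hospitals, which use different column names, glucose units, and sex codes, onto one common schema. The site names, field names, and mapping format are all assumptions for illustration; production pipelines typically target a common data model such as OMOP-CDM with a controlled vocabulary rather than ad hoc strings.

```python
from typing import Any

# Hypothetical per-site mapping specs: local column name -> common field name.
SITE_SPECS: dict[str, dict[str, Any]] = {
    "hospital_a": {
        "columns": {"pt_id": "patient_id", "gluc_mgdl": "glucose", "sex": "sex"},
        "glucose_unit": "mg/dL",
        "sex_codes": {"M": "male", "F": "female"},
    },
    "hospital_b": {
        "columns": {"id": "patient_id", "glucose_mmol": "glucose", "gender": "sex"},
        "glucose_unit": "mmol/L",
        "sex_codes": {1: "male", 2: "female"},
    },
}

MG_DL_PER_MMOL_L = 18.0  # approximate conversion factor for glucose


def harmonize(site: str, record: dict[str, Any]) -> dict[str, Any]:
    """Rename columns, convert glucose to mmol/L, and map sex codes."""
    spec = SITE_SPECS[site]
    out = {common: record[local] for local, common in spec["columns"].items()}
    if spec["glucose_unit"] == "mg/dL":
        out["glucose"] = round(out["glucose"] / MG_DL_PER_MMOL_L, 2)
    # Unknown codes become None so downstream quality checks can flag them.
    out["sex"] = spec["sex_codes"].get(out["sex"])
    out["source_site"] = site  # keep provenance for lineage tracking
    return out
```

Keeping each site’s mapping as data rather than code makes every transformation reviewable and reproducible, and the `source_site` field preserves provenance.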


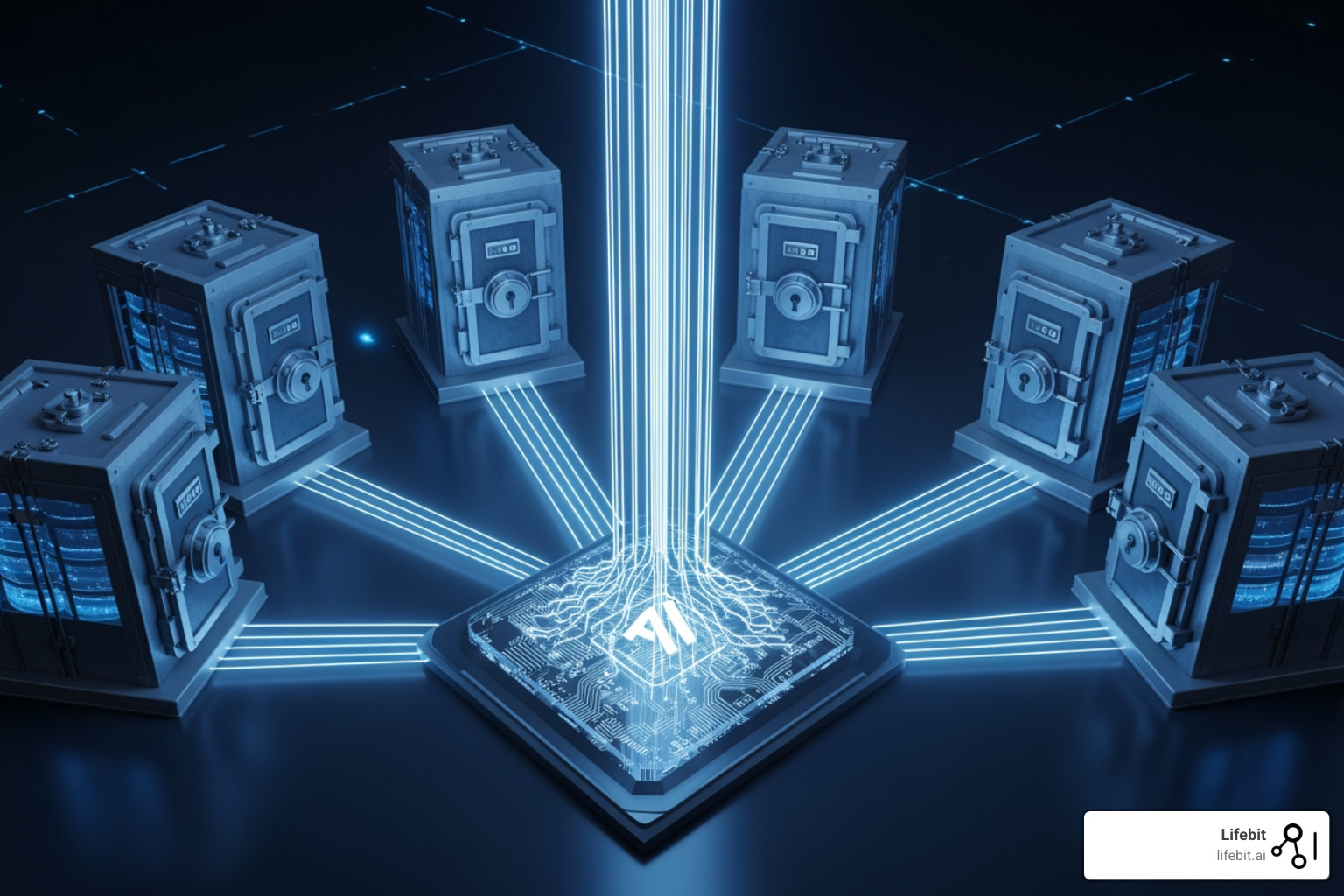

Federated AI and Secure Collaboration

How do you enable multi-institutional studies on rare diseases or diverse populations without the legal, ethical, and security nightmare of physically sharing patient data? Our answer is federated learning. This revolutionary approach allows AI models to be trained on decentralized data sources without the data ever leaving its original, secure location. You can train a single, powerful model on data from hospitals in London, New York, and Tokyo—without any raw patient data ever crossing a single border or firewall.

We deliver this capability through Trusted Research Environments (TREs) and data clean rooms. These are highly secure, access-controlled virtual environments that provide the tools for in-place analysis with full, immutable audit trails. We bring the analysis to the data, not the data to the analysis. This approach dramatically reduces risk, simplifies compliance with data sovereignty laws, and builds patient trust. With complete data lineage and provenance, our platform tracks every dataset, transformation, and analysis, meeting the highest standards of accountability and reproducibility, including the stringent requirements for FDA Real-World Evidence (RWE) submissions.
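An audit trail is only as trustworthy as its resistance to tampering. One common way to make a log tamper-evident is hash chaining, in which each entry includes the SHA-256 hash of the previous entry. The Python class below is a hypothetical, minimal sketch of that idea; a production system would also need durable write-once storage, synchronized clocks, and access controls on the log itself.

```python
import hashlib
import json
import time


class AuditLog:
    """Append-only log in which each entry commits to the previous entry's
    hash, so editing or deleting any past entry breaks verification."""

    GENESIS = "0" * 64

    def __init__(self) -> None:
        self.entries: list[dict] = []

    @staticmethod
    def _digest(body: dict) -> str:
        # Canonical JSON (sorted keys) so identical content hashes identically.
        return hashlib.sha256(json.dumps(body, sort_keys=True).encode()).hexdigest()

    def append(self, user: str, action: str, resource: str) -> dict:
        prev = self.entries[-1]["hash"] if self.entries else self.GENESIS
        body = {"ts": time.time(), "user": user, "action": action,
                "resource": resource, "prev": prev}
        entry = {**body, "hash": self._digest(body)}
        self.entries.append(entry)
        return entry

    def verify(self) -> bool:
        prev = self.GENESIS
        for entry in self.entries:
            body = {k: v for k, v in entry.items() if k != "hash"}
            if entry["prev"] != prev or self._digest(body) != entry["hash"]:
                return False
            prev = entry["hash"]
        return True
```

Hash chaining detects tampering rather than preventing it: anyone able to rewrite the entire chain could recompute every hash, which is why such logs are usually anchored in separately controlled storage.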


De-ID 10,000 Records in 2 Hours (Not 2 Weeks): The AI Stack That Saves Your Research

The convergence of artificial intelligence with data governance is ushering in a new era of speed, security, and scale for biomedical research. At Lifebit, we’ve harnessed several breakthrough AI technologies that don’t just incrementally improve governance—they fundamentally reshape how research institutions operate, collaborate, and discover.

How Lifebit AI Automates De-identification and Compliance

One of the biggest and most error-prone bottlenecks in preparing data for research is the manual de-identification of patient records. A human reviewer might spend weeks carefully redacting names, dates, and locations from a few thousand clinical notes. Our AI-powered solution, which leverages state-of-the-art Natural Language Processing (NLP) and Named Entity Recognition (NER) models, transforms this timeline from weeks to hours. Our models are trained on vast corpora of biomedical text to precisely identify and classify all 18 PHI identifiers listed in HIPAA’s Safe Harbor method, including information that human reviewers might easily miss, such as clinicians’ names or specific dates.
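For contrast with the NER-based approach described above, the Python sketch below shows the simplest possible baseline: regular expressions for a few highly structured identifiers (dates, phone numbers, email addresses, and a hypothetical `MRN:` format). The patterns are illustrative assumptions. Rules like these cannot reliably catch names, locations, or free-text dates, which is exactly why learned NER models are needed.

```python
import re

# Hypothetical patterns for a few structured identifiers. Names, locations and
# free-text dates generally need an NER model; regexes alone will miss them.
PHI_PATTERNS = [
    ("DATE", re.compile(r"\b\d{1,2}/\d{1,2}/\d{2,4}\b")),
    ("PHONE", re.compile(r"\b\d{3}[-.\s]\d{3}[-.\s]\d{4}\b")),
    ("EMAIL", re.compile(r"\b[\w.+-]+@[\w-]+\.[\w.-]+\b")),
    ("MRN", re.compile(r"\bMRN[:#]?\s*\d{6,10}\b", re.IGNORECASE)),
]


def redact(note: str) -> tuple[str, dict[str, int]]:
    """Replace each match with a typed placeholder and count what was removed."""
    counts: dict[str, int] = {}
    for label, pattern in PHI_PATTERNS:
        note, n = pattern.subn(f"[{label}]", note)
        if n:
            counts[label] = n
    return note, counts
```

Typed placeholders such as `[DATE]` keep the note readable for downstream NLP, and the per-type counts give reviewers a quick quality signal.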

Beyond de-identification, our platform embeds governance into the fabric of the research environment. We perform automated, real-time compliance checks against HIPAA, GDPR, and other regulatory requirements for every query and action. Potential policy violations trigger immediate alerts for compliance officers. This is enabled by a policy-as-code approach, where governance rules (e.g., “user group X can only access de-identified data from project Y for 90 days”) are written as machine-readable code. This code is then automatically and consistently enforced by the platform, eliminating the risk of human error and providing a detailed, immutable audit trail that documents every action for regulators.
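Here is a minimal Python sketch of policy-as-code for the example rule quoted above (“user group X can only access de-identified data from project Y for 90 days”). The `Policy` class, its fields, and the `is_permitted` helper are hypothetical names chosen for illustration; real platforms typically use a dedicated policy engine and language, but the principle of evaluating every request against machine-readable rules is the same.

```python
from dataclasses import dataclass
from datetime import date, timedelta


@dataclass(frozen=True)
class Policy:
    """Machine-readable form of: 'user group X can only access
    de-identified data from project Y for 90 days'."""
    group: str
    project: str
    deidentified_only: bool
    granted_on: date
    valid_days: int

    def allows(self, user_groups: set[str], project: str,
               dataset_deidentified: bool, on: date) -> bool:
        expires = self.granted_on + timedelta(days=self.valid_days)
        return (
            self.group in user_groups
            and project == self.project
            and (dataset_deidentified or not self.deidentified_only)
            and self.granted_on <= on < expires
        )


def is_permitted(policies: list[Policy], **request) -> bool:
    # Default deny: access is granted only if some policy explicitly allows it.
    return any(p.allows(**request) for p in policies)
```

Default deny is the key design choice: an empty or misconfigured policy set blocks access rather than silently granting it, and every decision can be written to the audit trail.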

What are the benefits of federated learning and data clean rooms?

Federated learning and data clean rooms solve a frustrating paradox: groundbreaking research requires collaboration on diverse data, but sharing that data is a legal and security nightmare. Instead of the traditional, high-risk approach of moving sensitive data to a central location, federated learning brings the AI model to the data. Each participating institution trains its own copy of the shared global model on its local data, behind its own firewall. Only the resulting model updates (the “learnings”), never the raw patient records, are sent back and aggregated to improve the global model. This is privacy-preserving analytics at its finest, enabling the training of more robust, accurate, and generalizable AI models on diverse, decentralized patient data while dramatically reducing data transfer risks and simplifying compliance with data sovereignty laws like GDPR.
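The mechanics of federated learning can be sketched with federated averaging (FedAvg), one standard aggregation scheme. In the Python example below, each hypothetical site runs a few epochs of gradient descent on a tiny linear model using only its local data, and the coordinator averages the resulting weights in proportion to each site’s sample count. The model, learning rate, and round counts are illustrative assumptions. Because model updates can still leak information about the training data, production systems usually add protections such as secure aggregation or differential privacy.

```python
def local_update(weights, data, lr=0.1, epochs=20):
    """Runs at one site: gradient descent on mean squared error for y ≈ w*x + b,
    using local (x, y) pairs only. Returns new weights and the sample count."""
    w, b = weights
    n = len(data)
    for _ in range(epochs):
        grad_w = sum(2 * (w * x + b - y) * x for x, y in data) / n
        grad_b = sum(2 * (w * x + b - y) for x, y in data) / n
        w, b = w - lr * grad_w, b - lr * grad_b
    return (w, b), n


def federated_average(updates):
    """Runs at the coordinator: sample-weighted average of the sites' weights."""
    total = sum(n for _, n in updates)
    w = sum(weights[0] * n for weights, n in updates) / total
    b = sum(weights[1] * n for weights, n in updates) / total
    return (w, b)


def train(sites, rounds=30):
    """Each round: broadcast the global model, update locally, then aggregate."""
    weights = (0.0, 0.0)
    for _ in range(rounds):
        # In a real deployment each call would execute inside a different site.
        updates = [local_update(weights, data) for data in sites]
        weights = federated_average(updates)
    return weights
```

Only the `(w, b)` pairs and sample counts cross site boundaries; the `(x, y)` records stay where they are.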

Data clean rooms extend this principle of secure collaboration. They are neutral, secure environments where two or more parties can bring their datasets for joint analysis without either party seeing the other’s raw data. Using advanced cryptographic techniques, the clean room allows for specific, pre-approved queries to be run across the combined dataset, revealing only the aggregated insights. This unlocks immense value from previously inaccessible partnered datasets, accelerating research in areas like drug discovery, clinical trial recruitment, and post-market surveillance.
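A common clean-room question is “how many patients appear in both datasets?” The Python sketch below answers it with keyed hashing (HMAC-SHA256): each party tokenizes its identifiers locally with a shared secret key, and the clean room releases only the size of the overlap, suppressing small results. This is a deliberately simplified stand-in for the cryptographic protocols (such as private set intersection) that production clean rooms may use; keyed hashes are pseudonymous rather than anonymous, since anyone holding the key can recompute them. The function names and the threshold of 10 are assumptions.

```python
import hashlib
import hmac
from typing import Iterable, Optional


def tokenize(ids: Iterable[str], key: bytes) -> set[str]:
    """Each party runs this locally; only keyed hashes leave its environment."""
    return {
        hmac.new(key, i.strip().lower().encode(), hashlib.sha256).hexdigest()
        for i in ids
    }


def overlap_count(tokens_a: set[str], tokens_b: set[str],
                  min_count: int = 10) -> Optional[int]:
    """The only pre-approved query: how many individuals appear in both
    datasets. Small overlaps are suppressed (None) rather than revealed."""
    n = len(tokens_a & tokens_b)
    return n if n >= min_count else None
```

Normalizing identifiers (trimming and lowercasing) before hashing matters: otherwise trivially different spellings of the same ID would never match.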

The Role of Synthetic Data Generation (SDG)

Even with advanced privacy techniques, accessing real patient data can be slow and complex. Synthetic data offers a powerful and practical alternative. It uses generative AI models (such as GANs or transformers) to create artificial datasets that mimic the statistical properties and complex correlations of real patient data without containing records of actual patients, although generators should still be checked to confirm they have not memorized individual training records. This is transformative for several reasons:

- Training AI Models Safely: Developers and data scientists can build, test, and refine algorithms on high-fidelity synthetic data without needing to go through lengthy data access approvals, dramatically shortening development cycles.

- Augmenting Rare Disease Data: For diseases with only a few hundred known patients, it’s often impossible to train effective AI models. We can generate thousands of realistic synthetic patient profiles that augment the limited real data, giving AI models enough examples to learn meaningful patterns and enabling research that was previously out of reach.

- Overcoming Data Access Barriers: It allows researchers, students, and collaborators to explore and experiment with data that realistically represents large, diverse populations without navigating complex data sharing agreements or privacy risks.

- Software Testing and Validation: Synthetic data provides a safe, scalable way to test data pipelines, analytics platforms, and software for bugs and performance issues without ever touching sensitive production data.

By creating high-quality, FAIRified (Findable, Accessible, Interoperable, Reusable) synthetic data, we can democratize access to realistic health data, increasing the availability of interoperable datasets while maintaining the highest standards of trust and transparency that biomedical research demands.
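To show the core idea behind synthetic data generation without a deep learning framework, the Python sketch below swaps in a much simpler technique than GANs or transformers: it fits a multivariate Gaussian (mean vector and covariance matrix) to numeric columns and samples new rows via a Cholesky factorization. All function names are hypothetical. This preserves means and linear correlations only; it will not capture skewed distributions, categorical variables, or non-linear relationships, and any generator’s output still needs a privacy evaluation before release.

```python
import math
import random


def fit_gaussian(rows):
    """Estimate the mean vector and sample covariance matrix of numeric columns."""
    n, d = len(rows), len(rows[0])
    mean = [sum(r[j] for r in rows) / n for j in range(d)]
    cov = [[sum((r[i] - mean[i]) * (r[j] - mean[j]) for r in rows) / (n - 1)
            for j in range(d)] for i in range(d)]
    return mean, cov


def cholesky(a):
    """Lower-triangular L with L @ L.T == a (a must be positive definite)."""
    d = len(a)
    L = [[0.0] * d for _ in range(d)]
    for i in range(d):
        for j in range(i + 1):
            s = sum(L[i][k] * L[j][k] for k in range(j))
            if i == j:
                L[i][j] = math.sqrt(a[i][i] - s)
            else:
                L[i][j] = (a[i][j] - s) / L[j][j]
    return L


def sample_synthetic(rows, n_samples, seed=0):
    """Draw synthetic rows sharing the real data's means and linear correlations."""
    mean, cov = fit_gaussian(rows)
    L = cholesky(cov)
    rng = random.Random(seed)
    d = len(mean)
    out = []
    for _ in range(n_samples):
        z = [rng.gauss(0.0, 1.0) for _ in range(d)]  # independent standard normals
        out.append([mean[i] + sum(L[i][k] * z[k] for k in range(i + 1))
                    for i in range(d)])
    return out
```

Multiplying independent standard normals by the Cholesky factor is what reintroduces the correlation structure (for example, between age and blood pressure) that a column-by-column generator would lose.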

Stop $2M HIPAA Fines: 6 Features Your AI Partner Must Have (Or You’re Dead)

Choosing the right AI data governance partner is one of the most critical strategic decisions a modern research institution can make. It’s not just about procuring software; it’s about finding a partner who deeply understands the high stakes of healthcare research, respects the sanctity of patient privacy, and can provide a scalable, future-proof platform for innovation. The right choice accelerates research, protects patients, and creates a competitive advantage. The wrong one leads to spiraling costs, compliance nightmares, stalled projects, and irreparable reputational damage.

Key Considerations for Your Partner

When evaluating potential partners, look beyond slick marketing claims and focus on these six non-negotiable fundamentals:

- Compliance and Certifications: This is the absolute baseline. The platform must demonstrate adherence to HIPAA (including signing a BAA), GDPR, and other relevant regulations. Look for a partner who has achieved or can support HITRUST certification. HITRUST is a prescriptive framework built on an ‘assess once, report many’ approach, and certification demonstrates that a robust, independently audited security posture is in place.

- Scalability and Interoperability: The platform must be built on a cloud-native, microservices-based architecture that can handle exploding data volumes and fluctuating computational demands. It must also rest on open standards to avoid vendor lock-in, offering robust APIs, support for data standards such as OMOP and FHIR, and alignment with the FAIR principles, so that it can integrate with your existing and future systems.

- Support for Federated Architecture: In the modern era of global collaboration and strict data sovereignty laws, a platform that requires data centralization is already obsolete. A modern partner must enable federated learning and provide secure Trusted Research Environments (TREs), allowing you to analyze data in-place without moving it. This is the only viable long-term strategy for multi-institutional research.

- Automated Data Harmonization Capabilities: A key value driver is the ability to rapidly transform disparate, raw data into standardized, analysis-ready formats. Your partner should demonstrate powerful, AI-driven tools for mapping data to common data models (like OMOP-CDM) and automating the tedious 80% of work that currently bogs down data science teams.

- Ethical AI Framework: Black-box AI has no place in healthcare. Demand a partner with a clear, transparent, and robust framework for ethical AI. This includes tools for explainability (XAI) to understand model predictions, active bias detection and mitigation to ensure fairness, and continuous monitoring to prevent model drift and performance degradation.

- Total Cost of Ownership (TCO): Look beyond the initial license fee and consider the long-term ROI. A platform that automates the 80% of manual work in research pipelines delivers enormous value. Calculate the cost of inaction: the salary costs of data wrangling, the opportunity cost of delayed research, the financial and reputational cost of a data breach, and the multimillion-dollar fines for non-compliance.
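As one example of the “active bias detection” called for in the Ethical AI Framework criterion, the Python sketch below compares a classifier’s sensitivity (true-positive rate) across patient subgroups, the kind of check that would expose a skin-cancer model that performs poorly on darker skin tones. The function names and the 0.1 maximum gap are hypothetical; which fairness metric and tolerance to use is a clinical and ethical decision, not a purely technical one.

```python
from collections import defaultdict


def sensitivity_by_group(y_true, y_pred, groups):
    """True-positive rate (sensitivity) of a binary classifier within each subgroup."""
    tp, pos = defaultdict(int), defaultdict(int)
    for t, p, g in zip(y_true, y_pred, groups):
        if t == 1:  # only actual positives count toward sensitivity
            pos[g] += 1
            tp[g] += int(p == 1)
    return {g: tp[g] / pos[g] for g in pos}


def flag_disparity(rates, max_gap=0.1):
    """Flag the model if sensitivity differs across groups by more than max_gap."""
    gap = max(rates.values()) - min(rates.values())
    return gap > max_gap, round(gap, 3)
```

Running such a check on every retrained model version, not just once before launch, is what turns it into continuous monitoring.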

Evaluating Security and Privacy Features

Patient data is sacred. Ensure your partner’s platform provides defense-in-depth protection:

- End-to-End Encryption: Data must be encrypted at all times: in transit (using TLS 1.2+), at rest (using AES-256), and, where possible, during analysis (using confidential computing).

- Granular Access Controls: The platform must enforce the principle of least privilege. This goes beyond simple Role-Based Access Controls (RBAC) to include Attribute-Based Access Controls (ABAC), which allow for dynamic, context-aware permissions (e.g., “Allow Dr. Smith to access de-identified data for Project X, but only from a hospital IP address and only for the next 30 days”).

- Auditability and Data Lineage: Every single action—every login, query, analysis, and export—must be logged in an immutable, timestamped audit trail. Full data lineage must track data from its source through every transformation to ensure regulatory compliance and scientific reproducibility.

- Support for Data Sovereignty: The platform must be architected to respect global legal landscapes, ensuring data remains within its required geographic and legal boundaries (e.g., EU data stays within the EU).

- Advanced Re-identification Prevention: The system must use state-of-the-art anonymization and de-identification techniques, coupled with strict controls on query outputs, to make the re-identification of individuals from the data practically impossible.
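Controls on query outputs often start with small-cell suppression: aggregate counts below a threshold are withheld so that rare combinations cannot single out individuals. The Python sketch below is a minimal illustration; the function name and the threshold of 11 are assumptions, and real release policies set their own thresholds and also guard against recovering suppressed cells by subtracting them from published totals.

```python
from collections import Counter


def release_counts(records, by, min_cell=11):
    """Aggregate counts for release from a secure environment, replacing any
    cell smaller than min_cell with a '<min_cell' marker instead of its value."""
    counts = Counter(r[by] for r in records)
    return {k: (v if v >= min_cell else f"<{min_cell}") for k, v in counts.items()}
```

Applying the rule at the environment’s export boundary, rather than trusting each analyst to apply it, is what makes it an enforceable control.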

At Lifebit, we’ve built our platform on these principles from the ground up, giving researchers the freedom to innovate while giving CIOs and patients the protection they deserve. Discover Lifebit’s next-generation federated AI platform and see how we’re changing the standard for biomedical data governance.

Cut 80% of Research Time Today: How AI Governance Gets Drugs to Market Faster

The path through the complex landscape of biomedical data governance doesn’t have to be a maze of manual processes, compliance risks, and research bottlenecks. The promise of AI-enabled governance is here now, empowering leading institutions to move faster, collaborate more securely, and innovate more responsibly.

When you get governance right, everything changes. It ceases to be a restrictive gatekeeper and becomes a strategic enabler. Improved data quality, driven by automated harmonization and cleaning, means your AI models produce trustworthy, reproducible insights you can count on. Proactive compliance, achieved through real-time monitoring and automated de-identification, means you can confidently meet and exceed the standards of HIPAA and GDPR, transforming audits from a source of dread into a simple validation of good practice. Dramatically reduced risk flows naturally from this foundation; by keeping data in place with federated learning and enforcing granular, policy-as-code access controls, you protect your institution’s reputation, your research funding, and most importantly, your patients’ trust.

But what truly matters is the impact on your core mission: accelerated research timelines. Our platform is designed to automate the 80% of manual, non-scientific analysis work that currently clogs R&D pipelines, cutting complex cohort queries from weeks to minutes and data preparation from months to days. Your most valuable assets—your brilliant scientists—can finally spend their time on science, not on data wrangling. Insights arrive faster, drug discovery accelerates, clinical trials become more efficient, and life-saving breakthroughs reach patients sooner.

The future of biomedical research is collaborative, secure, and fast. It’s a future where data is no longer a liability but a powerful, fluid asset. It’s a future powered by AI that is not just powerful, but also explainable, ethical, and fair. It’s about turning data from a compliance headache into the engine of strategic advantage.

This isn’t science fiction. It’s the reality we’re building every day with our federated AI platform. The tools exist today to transform how you work with sensitive health data, to break down silos, and to unleash the full potential of your research teams. The only question is whether you’re ready to lead the way.