Federated Governance: The Blueprint for Data Democracy

Why Organizations Need a New Approach to Data Governance

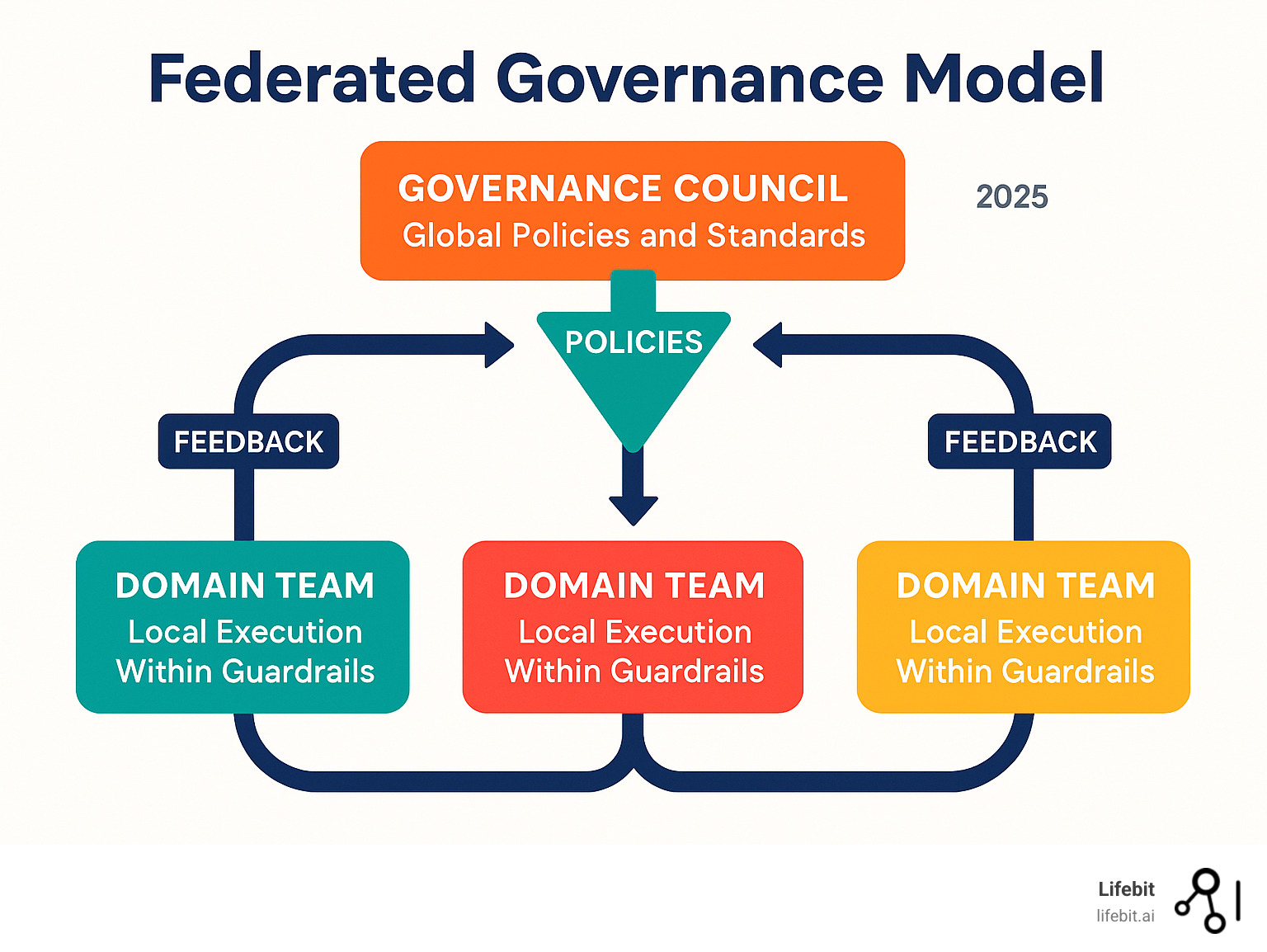

A federated governance model is a hybrid approach combining centralized policy-setting with decentralized execution. It allows organizations to maintain data standards while empowering domain teams to act autonomously within established guardrails. This model is defined by central oversight for global policies, domain autonomy for local management, shared accountability, and a scalable structure that prevents bottlenecks.

In today’s data landscape, organizations often face a choice between control and speed. Centralized governance creates bottlenecks, while decentralized approaches risk chaos. The federated governance model emerged as the solution, balancing enterprise-wide standards with domain-level agility.

This need is critical as data volumes explode and architectures like data mesh gain adoption. For example, Autodesk scaled to 60 domain teams using a federated approach, and The Very Group uses a hub-and-spoke structure to balance central policy with departmental flexibility.

My 15 years leading Lifebit’s federated biomedical data platform have shown me how this model enables secure, compliant data sharing in complex healthcare ecosystems. Successful federation requires both the right technical infrastructure and a significant cultural change.

The Spectrum of Data Governance: Centralized vs. Federated vs. Decentralized

Choosing a data governance approach depends on your organization’s unique situation, including its size, culture, regulatory environment, and data maturity. The models exist on a spectrum from full central control to complete domain autonomy, and understanding the trade-offs is the first step toward selecting the right fit.

Centralized data governance is a top-down model where a single, central team (often led by a Chief Data Officer or a governance committee) makes all data-related decisions. This approach is common in smaller organizations or highly regulated industries where consistency and control are paramount. It ensures uniform application of policies, simplifies auditing, and minimizes risk. However, as an organization scales, this model reveals its weaknesses. The central team, lacking deep business context for every domain, can create one-size-fits-none policies. It quickly becomes a bottleneck, with data users stuck in long queues waiting for approvals, access, or new data sources. This slows down innovation and creates a perception of the governance team as an “ivory tower” of gatekeepers.

On the other end of the spectrum, decentralized governance grants complete freedom to individual business units or teams. Each team defines its own rules, selects its own tools, and manages its own data. This model maximizes speed and agility, as there are no bureaucratic hurdles. However, this autonomy often leads to a “Wild West” environment that devolves into chaos. Without coordination, you get multiple, conflicting definitions for the same key metric (e.g., “customer churn”), rampant duplication of data, and inconsistent data quality. This creates a “data swamp” where analysts spend more time reconciling data than analyzing it. Furthermore, it poses significant security and compliance risks, as there is no unified view of where sensitive data resides or who has access to it.

This is where the federated governance model offers a practical, hybrid solution that balances the extremes. A central governance body establishes a set of non-negotiable, enterprise-wide policies, focusing on areas that require global consistency: security classifications, privacy rules (like GDPR and HIPAA), data ethics, and core interoperability standards. Domain teams—the business units that are closest to the data—are then empowered to implement these standards in ways that best suit their specific needs and use cases. The central team’s role shifts from being a gatekeeper to an enabler, providing a self-serve platform, reusable templates, and expert guidance to help domains succeed. This creates structured flexibility that scales with the organization, fostering both accountability and innovation.

| Dimension | Centralized Governance | Federated Governance | Decentralized Governance |
|---|---|---|---|
| Speed | Slow; all decisions flow through a central bottleneck. | Balanced; domains are agile within centrally defined guardrails. | Fast; teams operate with full autonomy, no approvals needed. |
| Consistency | High across all areas, but can be rigid and impractical. | High for global standards; flexible for local implementation. | Low; standards and quality vary widely by team, creating chaos. |
| Scalability | Poor; the central team becomes overwhelmed as the organization grows. | High; governance scales organically as new domains are added. | High speed for individual teams, but poor coordination at scale. |
| Ownership | A single central team owns all governance decisions. | Shared between the central council (global rules) and domain teams (local execution). | Individual teams have complete ownership, leading to silos. |
| Compliance | Strong but rigid; slow to adapt to new regulations or business needs. | Strong and adaptable; global policies ensure compliance, local teams adapt as needed. | Weak and high-risk; difficult to enforce or audit compliance consistently. |
| Innovation | Stifled by bureaucratic approvals and lack of domain context. | Empowered within clear boundaries; domains can experiment safely. | High but uncoordinated and often duplicative; reinvention of the wheel. |

Ultimately, the federated governance model is particularly effective in data-intensive organizations aiming to build a data-driven culture. For example, in genomic research, central policies can ensure patient privacy and data security standards are met across all studies, while individual research domains have the freedom to quickly adapt analytical pipelines for their specific research questions. This balanced approach transforms data governance from a roadblock into a strategic enabler for innovation.

Core Principles and Components of a Federated Governance Model

A successful federated governance model isn’t just an org chart; it’s a system built on clear principles that define how decisions are made and who is responsible. This creates a framework where central oversight and domain autonomy can coexist productively.

- Domain-oriented ownership: This core tenet of data mesh places data ownership with the business domains that create, understand, and use the data. This puts accountability and expertise where they belong, ensuring data is managed by those with the most context.

- Data as a product: This principle dictates that data should be treated not as a technical byproduct but as a valuable asset. Each data asset (or “data product”) should have a designated owner and meet clear quality, documentation, and accessibility standards. A good data product is:

  - Discoverable: Easily found through a central data catalog with rich metadata.

  - Addressable: Has a permanent, unique location and a reliable access mechanism.

  - Trustworthy: Comes with clear lineage, quality metrics, and freshness guarantees.

  - Self-describing: Includes documentation, schema definitions, and semantic context.

  - Interoperable: Uses standard formats and protocols to connect with other data products.

  - Secure: Has clear access control policies and classifications applied.

- Global policies with local implementation: This is the heart of the federated balance. A central body defines non-negotiable global policies (e.g., privacy, security), while domains have autonomy on local implementation. As noted in ‘Building an Event-Driven Data Mesh’, the key is to sort out the decisions that should remain at the local level from those that must be made globally. This balance is central to Federated Data Governance.

- Clear decision rights: To avoid confusion, the model must explicitly define what is decided centrally (e.g., enterprise data strategy, technology standards) versus what is decided locally (e.g., domain-specific quality rules, data product roadmaps). A RACI matrix (Responsible, Accountable, Consulted, Informed) is an invaluable tool for clarifying these roles for key governance processes.
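A RACI matrix can also be kept in machine-readable form so that gaps are caught automatically. The sketch below is only an illustration: the process names and role assignments are hypothetical, and the single check it performs (exactly one Accountable role per process) is a common RACI convention rather than a rule stated in this article.

```python
# Hypothetical RACI matrix: process -> role -> R/A/C/I code.
RACI = {
    "define_privacy_policy": {
        "Data Governance Council": "A",
        "Chief Data Officer": "C",
        "Domain Owner": "C",
        "Data Steward": "I",
    },
    "set_domain_quality_rules": {
        "Domain Owner": "A",
        "Data Steward": "R",
        "Data Governance Council": "I",
    },
}


def validate_raci(matrix):
    """Return the processes that do not have exactly one Accountable role."""
    problems = []
    for process, assignments in matrix.items():
        accountable = [role for role, code in assignments.items() if code == "A"]
        if len(accountable) != 1:
            problems.append(process)
    return problems


# An empty list means every process has a single accountable owner.
unowned = validate_raci(RACI)
```

Running a check like this whenever the matrix changes keeps ownership disputes visible before they reach the CDO for arbitration.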

Key Roles and Responsibilities

A federated model requires a well-defined team with distinct responsibilities:

- Chief Data Officer (CDO): The executive sponsor who sets the overall vision, champions the cultural shift towards data as a product, secures funding, and acts as the final arbiter in cross-domain disputes.

- Data Governance Council: A central group of senior stakeholders from business domains, IT, security, legal, and compliance. This council is responsible for defining, ratifying, and maintaining the global policies, standards, and ethical guidelines. They are the legislative branch of data governance.

- Domain Owners: Senior business leaders (e.g., VP of Sales, Head of Logistics) who are ultimately accountable for the data within their specific domains. They ensure their domain’s data strategy aligns with business objectives and sponsor data product initiatives.

- Data Product Owner: A role inspired by agile product management, this person is responsible for the lifecycle of one or more data products. They define the product’s roadmap, prioritize features, and work to meet the needs of their data consumers, bridging the gap between technical teams and business users.

- Data Stewards: Domain-level subject matter experts who are the guardians of day-to-day data management. They define data quality rules, curate metadata, resolve data issues, and approve access requests, ensuring data is fit for purpose.

- Data Custodians: The technical teams (e.g., data engineers, platform engineers) who handle the implementation and maintenance of the data infrastructure. They are responsible for security, storage, backup, and recovery, ensuring the platform runs reliably.

Designing Effective Data Domains

Well-designed data domains are the foundation of a scalable federated model. They should be designed to be MECE (Mutually Exclusive, Collectively Exhaustive) to avoid ownership conflicts and ensure all critical data has a clear home. Effective domains typically:

- Align with business functions: Grouping data by business capability (e.g., Marketing, Finance, Supply Chain) is a natural starting point, as it aligns data ownership with business accountability.

- Group data that changes together: Domains should be cohesive, grouping data assets that are logically related and that tend to change and be used together, which simplifies management and integration.

- Have end-to-end ownership: A domain should own its data products from creation to consumption, including the pipelines that produce them and the interfaces for accessing them.

- Use data contracts: To ensure reliable integration between domains, producers and consumers should agree on data contracts. These are formal, machine-readable agreements defining the schema, service level agreements (SLAs), quality metrics, and semantic meaning of a data product. They act as a stable API for data.

- Adhere to global interoperability standards: While domains have autonomy, they must follow centrally defined standards for data formats, API protocols, and contract schemas. This ensures that data products from different domains can be easily combined for cross-functional analysis, which is vital for work like our Federated Architecture in Genomics.
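To make the idea of a machine-readable data contract concrete, here is a minimal sketch. The `DataContract` class, its field names, and the `sales.orders` product are hypothetical and chosen for illustration; real contract formats typically carry far more detail (semantics, ownership, versioning).

```python
from dataclasses import dataclass


@dataclass
class DataContract:
    """Hypothetical, minimal contract for one data product."""
    product: str
    schema: dict               # column name -> expected Python type
    max_staleness_hours: int   # freshness SLA
    min_completeness: float    # required share of non-null values, 0..1


def check_batch(contract, rows, age_hours):
    """Return a list of contract violations for a batch (empty means compliant)."""
    violations = []
    if age_hours > contract.max_staleness_hours:
        violations.append(f"stale: {age_hours}h > {contract.max_staleness_hours}h")
    total = len(rows) * len(contract.schema)
    non_null = 0
    for i, row in enumerate(rows):
        for column, expected in contract.schema.items():
            value = row.get(column)
            if value is None:
                continue  # counted against completeness below
            non_null += 1
            if not isinstance(value, expected):
                violations.append(f"row {i}: {column} is not {expected.__name__}")
    if total and non_null / total < contract.min_completeness:
        violations.append("completeness below contract minimum")
    return violations


orders = DataContract(
    product="sales.orders",
    schema={"order_id": str, "amount": float},
    max_staleness_hours=24,
    min_completeness=0.95,
)
```

A producer could run `check_batch` before publishing, and a consumer could run the same check on receipt, so both sides test against one shared definition.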

Enabling Modern Data Architectures and AI

The federated governance model is not just an organizational theory; it is the essential backbone that makes modern, distributed data architectures practical and safe. It enables a self-serve data platform where domain teams can independently develop, deploy, and manage their own data products within enterprise guardrails. This is achieved through computational governance, which automates policy enforcement directly within the data platform.

By embedding policies into data pipelines as policy-as-code, governance “shifts left” in the development lifecycle, catching issues early rather than after the fact. For example, a pipeline can automatically fail if it tries to join a dataset containing PII with an unapproved public dataset. This automated approach relies on a strong technology foundation of robust data catalogs for discovery, comprehensive metadata management for context, and detailed data lineage for traceability. This foundation is critical for enabling Federated Data Analysis across distributed teams.
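The PII join rule mentioned above could be expressed as policy-as-code roughly as follows. This is a sketch under stated assumptions: the `Dataset` tags (`contains_pii`, `is_public`, `approved_for_pii_joins`) are hypothetical metadata fields that a catalog would need to supply, and a production platform would more likely use a dedicated policy engine.

```python
from dataclasses import dataclass


class PolicyViolation(Exception):
    """Raised to fail a pipeline step before any data is joined."""


@dataclass
class Dataset:
    name: str
    contains_pii: bool = False
    is_public: bool = False
    approved_for_pii_joins: bool = False  # hypothetical approval flag


def check_join(left, right):
    """Fail if a PII dataset would be joined with an unapproved public one."""
    # Check both orderings so the rule does not depend on argument position.
    for pii_side, other in ((left, right), (right, left)):
        if pii_side.contains_pii and other.is_public and not other.approved_for_pii_joins:
            raise PolicyViolation(
                f"{pii_side.name} contains PII; {other.name} is public and not approved"
            )


patients = Dataset("patients", contains_pii=True)
census = Dataset("census_extract", is_public=True)
# check_join(patients, census) would raise PolicyViolation and stop the pipeline.
```

Because the check runs at pipeline build or submit time, the violation surfaces during development rather than in a later audit.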

Why the federated governance model is essential for data mesh

Data mesh architecture is built on four principles: domain-oriented ownership, data as a product, self-serve infrastructure, and federated computational governance. Without the fourth principle, a data mesh risks becoming a distributed data swamp. The decentralized nature of domain ownership and self-serve infrastructure can lead to chaos, with inconsistent standards, poor interoperability, and a lack of trust between teams.

The federated governance model provides the necessary coordination layer that makes data mesh work at scale. It establishes the “rules of the road” that all domains must follow, creating a social contract between data producers and consumers. By standardizing interoperability (e.g., common data contract formats, standard API protocols) and automating policy enforcement, it builds the trust required for data consumers to confidently use data products from other domains. It also creates an incentive for domains to produce high-quality, reliable data products, because a product’s value is measured by its adoption across the organization.

Supporting AI, ML, and Responsible AI

For artificial intelligence and machine learning, where data quality and bias can have profound real-world consequences, a robust governance framework is non-negotiable. The federated governance model is uniquely suited to support Responsible AI initiatives by providing structure and accountability throughout the MLOps lifecycle.

- Data Lineage for Models: Federated governance, powered by a central data catalog, ensures end-to-end traceability from raw data sources to the features used in a model and, finally, to the predictions it generates. This is crucial for debugging, explainability, and meeting regulatory expectations such as the GDPR provisions on automated decision-making, often described as a “right to explanation.”

- Bias Detection and Mitigation: Global policies can mandate fairness standards and require bias testing for models used in sensitive applications (e.g., credit scoring, clinical diagnostics). Domain teams, with their deep data context, are then responsible for identifying and mitigating biases specific to their datasets, such as historical biases in training data.

- Privacy Preservation: The model enables fine-grained access controls and automated privacy-enhancing techniques (PETs) like data masking, differential privacy, and federated learning. This allows data scientists to develop AI models on sensitive information without exposing the raw data, a critical capability in healthcare and finance.

- Model Governance as a Product: The same “as a product” thinking applied to data is extended to AI models. Models are versioned, documented in a model registry, and monitored for performance drift, concept drift, and fairness degradation over time. Each model has a clear owner responsible for its lifecycle, ensuring accountability for its outcomes.

These capabilities are vital in the biomedical space. Our Lifebit Federated Biomedical Data Platform operationalizes this model, enabling secure, compliant AI and machine learning on the world’s most sensitive health data. By embedding governance into the platform, we ensure every process is secure, traceable, and compliant by design.
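As one illustration of the bias-testing policy described in the list above, a global fairness gate might compare positive-prediction rates across groups. The sketch below uses demographic parity difference as the metric; the choice of metric and the `MAX_GAP` threshold are assumptions for illustration, not requirements taken from this article, and real programs usually combine several fairness measures.

```python
from collections import defaultdict

MAX_GAP = 0.2  # hypothetical threshold set by the central council


def positive_rates(predictions, groups):
    """Share of positive (1) predictions per group."""
    counts = defaultdict(lambda: [0, 0])  # group -> [positives, total]
    for pred, group in zip(predictions, groups):
        counts[group][0] += pred
        counts[group][1] += 1
    return {g: pos / total for g, (pos, total) in counts.items()}


def demographic_parity_gap(predictions, groups):
    """Largest difference in positive-prediction rate between any two groups."""
    rates = positive_rates(predictions, groups)
    return max(rates.values()) - min(rates.values())


def passes_fairness_gate(predictions, groups, max_gap=MAX_GAP):
    """True if the model's parity gap is within the globally allowed limit."""
    return demographic_parity_gap(predictions, groups) <= max_gap
```

The central council would own the threshold, while each domain team would decide which group attributes are relevant to its own datasets.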

A Pragmatic Roadmap to Implementation

Adopting a federated governance model is a significant organizational transformation, not just a technical project. It’s a journey best taken in deliberate phases to build momentum, manage change, and demonstrate value along the way.

The crawl-walk-run maturity roadmap is a proven method for navigating this transition:

- Crawl (Months 1-6): Establish the Foundation. The goal is to prove the concept and secure early wins.

  - Activities: Conduct a data maturity assessment. Secure executive sponsorship and form a preliminary Data Governance Council. Select a pilot domain that is high-value, has strong leadership, and is of manageable complexity. Define the initial set of core principles and roles. Implement a foundational data catalog for the pilot domain.

  - Outcomes: A documented governance charter. A successful pilot demonstrating reduced time-to-insight for a key use case. A clear business case for further investment.

- Walk (Months 6-18): Scale and Socialize. Focus on expanding the model and embedding the new culture.

  - Activities: Onboard 2-3 additional high-impact domains. Refine global policies based on lessons from the pilot. Develop a robust change management and communication plan. Establish a ‘Community of Practice’ for data stewards and product owners to share knowledge. Begin automating policy enforcement (policy-as-code) for data classification and quality checks.

  - Outcomes: Multiple domains operating under the federated model. A thriving community that fosters collaboration. Measurable improvements in data quality and accessibility. Increased trust in data across the organization.

- Run (Months 18+): Optimize and Automate. The model becomes the standard way of operating, with a focus on continuous improvement.

  - Activities: The federated model is embedded in daily operations and the default for new data initiatives. Fully automate the onboarding of new domains and data products. Implement advanced capabilities like FinOps for cost governance and automated lineage tracking. Establish a formal feedback loop for refining global policies.

  - Outcomes: A scalable, self-sustaining data governance program. Data is treated as a product across the enterprise. Governance is seen as an enabler, not a blocker, to innovation.

Avoid common pitfalls: losing executive sponsorship over time, treating governance as a one-off IT project, under-investing in change management, and failing to address resistance to change. Overcoming cultural hurdles requires a deliberate effort to show teams “what’s in it for them”: less time on data wrangling, more time on high-value analysis.

Measuring the success of your federated governance model

To justify the investment and guide improvements, track a balanced set of metrics:

- Efficiency Metrics:

  - Time-to-access data: Reduction in the time from a user needing data to them using it in an analysis.

  - Time-to-market for data products: How quickly new, trusted datasets can be made available to consumers.

- Quality & Trust Metrics:

  - Data quality scores: Tangible improvements in data accuracy, completeness, and consistency within governed domains.

  - Data Product NPS: Net Promoter Score from data consumers on their satisfaction with data products.

- Risk & Compliance Metrics:

  - Risk incidents reduction: Fewer data breaches, compliance violations, or audit findings.

  - Policy adoption rates: Percentage of data products that are compliant with global policies.

- Value & ROI Metrics:

  - ROI of data products: The measurable business value (e.g., increased revenue, cost savings) generated by governed data products.

  - FinOps for cost governance: Visibility and optimization of data storage and processing costs across domains.
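Two of the metrics above, policy adoption rate and time-to-access, are simple enough to compute directly from catalog and access-request records. The sketch below assumes hypothetical record shapes (a `compliant` flag per data product and a pair of request and grant dates per access request); how those records are collected will depend on the tooling in use.

```python
from datetime import date
from statistics import median


def policy_adoption_rate(products):
    """Percentage of data products flagged as compliant with global policies."""
    if not products:
        return 0.0
    compliant = sum(1 for p in products if p["compliant"])
    return 100.0 * compliant / len(products)


def median_time_to_access_days(requests):
    """Median number of days between an access request and its approval."""
    return median((granted - requested).days for requested, granted in requests)


# Hypothetical sample records for illustration only.
products = [
    {"name": "sales.orders", "compliant": True},
    {"name": "marketing.leads", "compliant": True},
    {"name": "finance.ledger", "compliant": True},
    {"name": "ops.sensors", "compliant": False},
]
requests = [
    (date(2024, 1, 1), date(2024, 1, 4)),
    (date(2024, 1, 2), date(2024, 1, 3)),
    (date(2024, 1, 5), date(2024, 1, 10)),
]
```

Tracking the median rather than the mean keeps a single long-running request from masking an overall improvement.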

Operating and Funding Models

A hybrid funding model is often the most effective and sustainable approach. Central funding from the enterprise budget covers shared functions and platforms, such as the data catalog, policy definition by the council, and the core platform engineering team. This encourages the adoption of standard tools and practices. Chargeback to domains handles domain-specific costs, such as the development and storage of their data products. This creates direct accountability, encouraging domains to be efficient and to build products that provide real value, justifying their cost.

Addressing Compliance and Security in a Federated World

In a federated model, distributing data ownership does not mean diluting accountability for security and compliance. Instead, it creates a more scalable and effective framework for managing risk. The central governance council sets the mandatory security and compliance policies, and the domains are responsible for implementing them, with enforcement often automated by the platform.

Regulatory compliance becomes more manageable. Instead of a central team trying to interpret and apply regulations like GDPR or HIPAA to every dataset, they create global policy frameworks. For example, a global GDPR policy might mandate that all data products containing personal data must have a documented legal basis for processing and support data subject access requests (DSARs). The domain owner is then responsible for implementing this for their specific data products, with the process often facilitated by the self-serve platform. This approach simplifies auditing, as the central data catalog provides a comprehensive, searchable audit trail of data lineage, access history, and policy application, even though the data itself is managed decentrally.
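The example GDPR policy above could be audited against catalog metadata along these lines. The metadata keys (`contains_personal_data`, `legal_basis`, `supports_dsar`) are hypothetical catalog fields; the six legal bases listed are those in Article 6 of the GDPR. This is a sketch of a metadata check only and does not establish legal compliance.

```python
# The six lawful bases for processing under GDPR Article 6.
LEGAL_BASES = {
    "consent",
    "contract",
    "legal_obligation",
    "vital_interests",
    "public_task",
    "legitimate_interests",
}


def gdpr_gaps(product):
    """List the global policy requirements a data product's metadata fails to meet."""
    if not product.get("contains_personal_data", False):
        return []  # the policy only applies to products holding personal data
    gaps = []
    if product.get("legal_basis") not in LEGAL_BASES:
        gaps.append("missing or unrecognized legal basis")
    if not product.get("supports_dsar", False):
        gaps.append("DSAR support not declared")
    return gaps


def audit_catalog(catalog):
    """Map each non-compliant product name to its list of gaps."""
    report = {}
    for product in catalog:
        gaps = gdpr_gaps(product)
        if gaps:
            report[product["name"]] = gaps
    return report
```

The central team maintains the rule once, and each domain owner sees only the gaps for the products they are accountable for.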

Advanced Access Control: RBAC and ABAC

Access control in a federated model typically combines Role-Based Access Control (RBAC) and Attribute-Based Access Control (ABAC) for fine-grained security.

- RBAC is broad: It assigns permissions based on a user’s role (e.g., ‘Data Scientist’, ‘Marketing Analyst’).

- ABAC is contextual: It adds dynamic rules based on attributes of the user, the data, and the environment.

For example, in a healthcare setting:

- RBAC might state: “A ‘Researcher’ role can query clinical trial data.”

- ABAC adds critical context: “A ‘Researcher’ role can query clinical trial data, but only for the specific trial they are assigned to, only from an approved IP address, and only for patients who have provided consent for secondary research.”

This combination allows for powerful, context-aware security policies that can be defined centrally but enforced automatically at scale. For sensitive data handling, this is coupled with a systematic approach to data classification and tagging, which enables automated masking, encryption, and access control. Our Federated Trusted Research Environment was built to implement these precise controls for highly sensitive biomedical data.
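The healthcare example above can be sketched as a two-stage check, with RBAC as the coarse gate and ABAC as the contextual refinement. All names here (`ROLE_PERMISSIONS`, `APPROVED_NETWORKS`, the `User` and `Record` fields, and the example network range) are hypothetical; this is not a description of how any particular platform implements access control.

```python
from dataclasses import dataclass, field
from ipaddress import ip_address, ip_network

# RBAC: role -> permitted actions (hypothetical).
ROLE_PERMISSIONS = {"Researcher": {"query_clinical_trial_data"}}
# ABAC environment attribute: networks queries may originate from (hypothetical).
APPROVED_NETWORKS = [ip_network("10.20.0.0/16")]


@dataclass
class User:
    role: str
    assigned_trials: set = field(default_factory=set)


@dataclass
class Record:
    trial_id: str
    consent_secondary_research: bool


def rbac_allows(user, action):
    """Coarse check: does the user's role include this action?"""
    return action in ROLE_PERMISSIONS.get(user.role, set())


def abac_allows(user, record, source_ip):
    """Contextual check on user, data, and environment attributes."""
    on_approved_network = any(ip_address(source_ip) in net for net in APPROVED_NETWORKS)
    return (
        record.trial_id in user.assigned_trials
        and on_approved_network
        and record.consent_secondary_research
    )


def can_query(user, record, source_ip):
    """Access requires both the role permission and every attribute condition."""
    return rbac_allows(user, "query_clinical_trial_data") and abac_allows(user, record, source_ip)
```

Keeping the two checks separate means the role catalog can stay small and stable while the attribute rules evolve with consent models and network policy.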

Handling Multinational and Cross-Border Data Challenges

For global organizations, data residency, sovereignty, and privacy laws (e.g., GDPR in the EU, CCPA in California, and various national laws) create immense complexity. Moving data across borders for analysis is often legally restricted, slow, and risky. The federated model, when combined with federated technology, provides a powerful solution.

This is achieved through a “code-to-data” paradigm. Instead of moving sensitive data to a central location for analysis (a data-to-code approach), the analytical query or machine learning model is securely sent to the distributed datasets where they reside. The analysis is performed locally, within the data’s jurisdictional boundary, and only the aggregated, non-sensitive results are returned to the researcher. This technique, often called federated analytics or federated learning, is invaluable in fields like Federated Technology in Population Genomics, where large, sensitive patient datasets must remain within specific hospitals or countries. It enables groundbreaking global collaboration and research while ensuring legal compliance and data security by design.
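The code-to-data pattern can be illustrated with a toy federated count. In this sketch, each `Site` runs the query against its own records and releases only an aggregate; the `MIN_COHORT` small-count suppression threshold and all class and function names are assumptions added for illustration. Real deployments add authentication, query vetting, and stronger disclosure controls.

```python
MIN_COHORT = 5  # hypothetical threshold below which a site withholds its count


class Site:
    """A data holder; its records never leave this object."""

    def __init__(self, name, records):
        self.name = name
        self._records = records

    def run(self, predicate):
        """Execute the query locally; return a count, or None if too small to release."""
        n = sum(1 for r in self._records if predicate(r))
        return n if n >= MIN_COHORT else None


def federated_count(sites, predicate):
    """Send the query to every site and combine only the released aggregates."""
    total, suppressed = 0, []
    for site in sites:
        result = site.run(predicate)
        if result is None:
            suppressed.append(site.name)
        else:
            total += result
    return total, suppressed


uk = Site("uk_hospital", [{"age": a} for a in range(40, 50)])
de = Site("de_hospital", [{"age": 70}, {"age": 30}])
total, suppressed = federated_count([uk, de], lambda r: r["age"] >= 45)
```

The researcher receives a combined count and a list of sites that withheld results, but never sees a row-level record from either jurisdiction.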

Frequently Asked Questions about Federated Governance

Here are answers to common questions about implementing a federated governance model.

What business problems does federated governance solve?

The federated governance model resolves the tension between central control and domain speed. It addresses key business problems by:

- Breaking down data silos: It accelerates data availability by empowering domain teams to manage their own data within clear guardrails.

- Eliminating analytics bottlenecks: Data scientists and analysts spend more time on analysis and less time on request forms.

- Improving data quality: Domain experts take ownership of data quality, leading to more trustworthy information.

- Managing compliance risks: It provides a robust, adaptable framework for managing regulations like GDPR and HIPAA without slowing down the business.

What is the difference between federated data governance, data federation, and data virtualization?

These terms are often confused but are distinct:

- Federated data governance is an organizational model for decision-making, defining the balance between central oversight and domain autonomy.

- Data federation is a technology that allows users to query multiple data sources as a single system without moving the data.

- Data virtualization is a related technology that creates a virtual abstraction layer to hide the complexity of underlying data sources.

In short, federated governance is the organizational blueprint, while data federation and virtualization are technical tools that can operate within that framework.

What are some real-world examples of federated governance?

Many organizations have successfully adopted this model:

- Autodesk: This software company scaled to 60 domain data teams using a federated model with a data mesh architecture, eliminating its data backlog and enabling self-service access.

- The Very Group: The UK online retailer uses a hub-and-spoke governance structure, where a central hub sets policies and departmental spokes execute locally, balancing consistency with flexibility.

- Avista: The energy company uses a federated “data octopus” model where different functional areas manage their own domains while connecting to a central data catalog.

- Brainly: The global education app uses federated governance supported by a modern data catalog to manage data products across 35 countries, reducing collaboration friction.

These examples show the model’s adaptability across industries, delivering agility, accountability, and improved data quality.

Conclusion

The federated governance model is the essential framework for organizations seeking to unlock their data’s potential. This balanced approach delivers enterprise-wide consistency without the bottlenecks of centralized control, enabling agility, scale, and trust. It fosters true data democracy, where data is a shared asset driving innovation.

Adopting this model requires a cultural and organizational shift from data gatekeeping to data enablement. This change is particularly critical in biomedical research, where analyzing complex datasets quickly and safely can save lives. Traditional methods of moving sensitive health data are no longer viable at the scale and speed required for modern research.

At Lifebit, our platform is built to power this federated future. Our next-generation federated AI platform provides secure, real-time access to global biomedical data without the complexities of data movement. With built-in harmonization, advanced AI/ML analytics, and robust federated governance, we empower researchers, biopharma, and governments to collaborate at scale.

Our platform components—the Trusted Research Environment (TRE), Trusted Data Lakehouse (TDL), and R.E.A.L. (Real-time Evidence & Analytics Layer)—deliver real-time insights and secure collaboration across hybrid data ecosystems. This is federated governance in action, enabling breakthrough research while keeping sensitive data secure.

Ready to transform your data governance and unlock the power of federated analytics? Explore Lifebit’s federated platform to see how we make secure, large-scale research practical.