How to Master Healthcare Data Integration

Why Healthcare Data Integration Is Broken — And What It’s Costing You

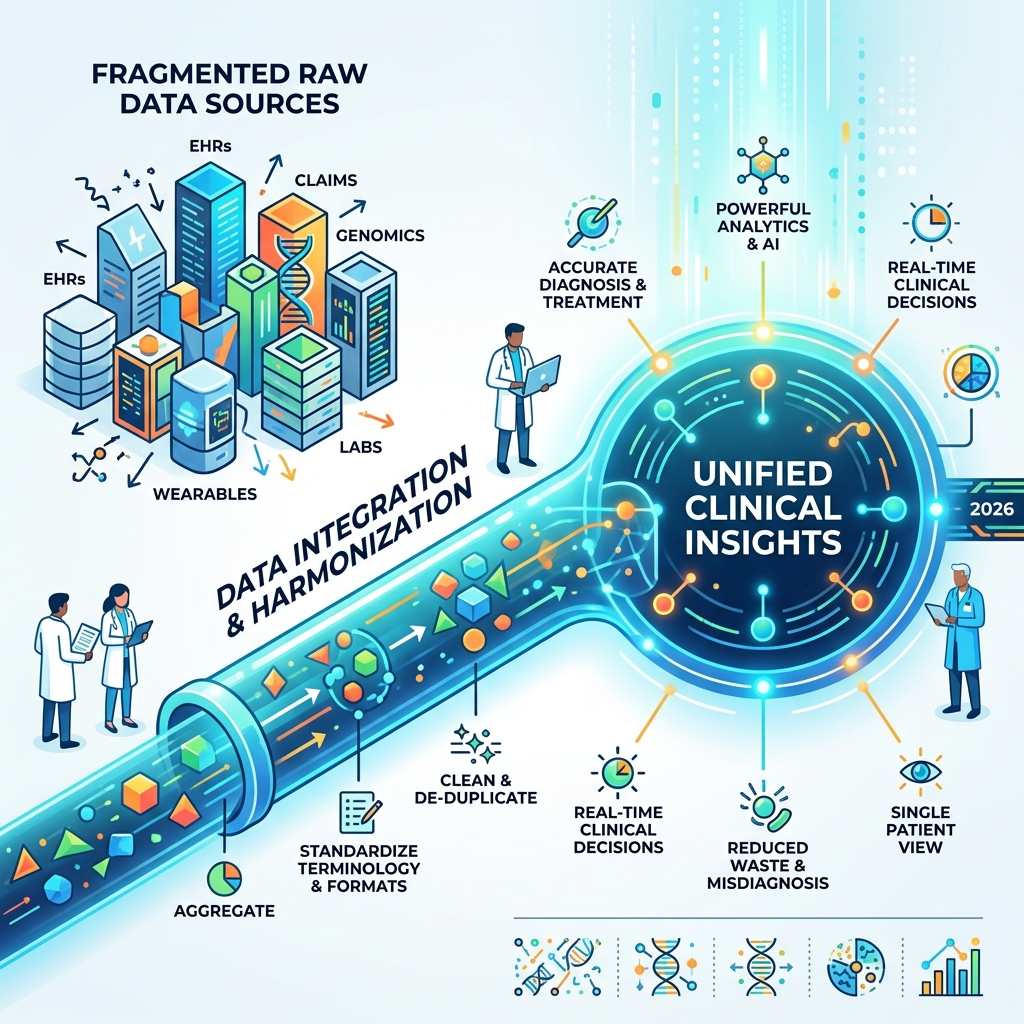

Healthcare data integration is the process of aggregating, harmonizing, and unifying health data from multiple disconnected sources — EHRs, claims, genomics, labs, wearables, and more — into a single, usable foundation for clinical and research decisions.

Here’s a quick breakdown of what it means and why it matters:

| What It Is | Why It Matters |
|---|---|
| Aggregating data from EHRs, labs, imaging, wearables | Eliminates dangerous data silos |
| Harmonizing formats and terminology across systems | Enables accurate diagnosis and treatment |
| Creating a unified record for each patient | Reduces fraud, waste, and misdiagnosis |
| Feeding clean data into analytics and AI tools | Powers real-time clinical and research insights |

The Zettabyte Era: A Deluge of Unusable Data

The scale of the problem is hard to overstate. Healthcare generated an estimated 2.3 zettabytes of data in 2020 alone. To put that in perspective, one zettabyte is equal to a trillion gigabytes. The average patient’s EHR grows by roughly 80MB every year, fueled by high-resolution medical imaging, continuous monitoring from wearables, and the increasing prevalence of genomic sequencing. Yet the majority of that data sits locked in silos — incompatible, incomplete, or simply unreachable.

This is what we call “Dark Data”: information that is collected and stored but never used because it is trapped in proprietary formats or legacy systems. In a clinical setting, this lack of visibility can be lethal. Between 40,000 and 80,000 people die annually in US hospitals due to misdiagnosis. Over 35% of Medicare patients see five or more physicians per year, with no guarantee those physicians share a common view of the patient. When a specialist doesn’t know what the primary care physician prescribed, or a surgeon is unaware of a patient’s recent lab results from an urgent care clinic, the risk of adverse drug events and surgical complications rises sharply.

The Economic Toll of Fragmentation

Meanwhile, the US spends nearly $4 trillion on healthcare annually — with an estimated $600 billion lost to fraud, waste, and abuse, much of it driven by fragmented, unverified data. This waste includes redundant testing (ordering a second MRI because the first one couldn’t be transferred between systems) and administrative overhead required to manually reconcile records.

The irony? EHR adoption in US hospitals went from 9% to 96% between 2008 and 2015. The infrastructure exists. The data exists. The problem is that it doesn’t talk. We have digitized the paper records, but we have created digital islands that are just as isolated as the filing cabinets they replaced.

I’m Dr. Maria Chatzou Dunford, CEO and Co-founder of Lifebit, and I’ve spent over 15 years working at the intersection of computational biology, AI, and federated healthcare data integration — from building genomic analysis tools at the Centre for Genomic Regulation to leading Lifebit’s work with global pharma and public sector institutions. In this guide, I’ll walk you through everything you need to know to evaluate, implement, and future-proof your data integration strategy.


Healthcare Data Integration vs. Interoperability: Why the Difference Matters

In many boardrooms, “integration” and “interoperability” are used interchangeably. This is a mistake that can lead to expensive architectural dead ends. Think of it this way: integration is the plumbing that connects two different buildings, while interoperability is the ability for the people inside those buildings to speak the same language and exchange ideas.

Healthcare data integration involves the technical process of moving data from one system (like a legacy lab database) to another (like a modern data lakehouse). It requires structural mapping—ensuring that the “Patient Name” field in System A matches the “LastName, FirstName” format in System B. It is the foundational layer of data movement and consolidation.
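As a minimal sketch of the structural mapping described above, the snippet below reshapes a free-text name from one system into the "LastName, FirstName" format expected by another. The field names (`Patient Name`, `patient_name`) and the helper functions are hypothetical, and the naive whitespace split is an assumption that will not hold for multi-word surnames.

```python
def to_last_first(full_name: str) -> str:
    """Convert 'First [Middle] Last' into 'Last, First [Middle]'."""
    parts = full_name.strip().split()
    if len(parts) < 2:
        # Too little information to split safely; surface it for manual review.
        raise ValueError(f"cannot split name: {full_name!r}")
    first, last = " ".join(parts[:-1]), parts[-1]
    return f"{last}, {first}"


def map_record(source: dict) -> dict:
    """Map a System A record (hypothetical layout) to System B's layout."""
    return {"patient_name": to_last_first(source["Patient Name"])}


print(map_record({"Patient Name": "Ada Lovelace"}))
```

In practice a mapping like this would be one entry in a larger, versioned field map, with records that fail to convert routed to a review queue rather than silently dropped.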

Interoperability, specifically clinical data interoperability, is the higher-level goal. It ensures that once the data is moved, it retains its clinical meaning across different contexts. This relies heavily on modern standards like HL7 FHIR, which provides a consistent framework for resources like “Patient,” “Observation,” and “Medication.”
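To make the FHIR resources mentioned above more concrete, here is a rough sketch of a Patient and a heart-rate Observation built as plain Python dictionaries and serialized to JSON. The helper names and the sample patient are made up for illustration, and the resources are deliberately minimal: they have not been validated against any FHIR profile, which would typically require additional fields.

```python
import json


def make_patient(patient_id: str, family: str, given: str, birth_date: str) -> dict:
    """Build a minimal FHIR-style Patient resource."""
    return {
        "resourceType": "Patient",
        "id": patient_id,
        "name": [{"family": family, "given": [given]}],
        "birthDate": birth_date,  # ISO 8601 date string
    }


def make_heart_rate_observation(patient_id: str, bpm: float) -> dict:
    """Build a minimal FHIR-style Observation that points at a Patient."""
    return {
        "resourceType": "Observation",
        "status": "final",
        "code": {
            "coding": [
                {"system": "http://loinc.org", "code": "8867-4", "display": "Heart rate"}
            ]
        },
        "subject": {"reference": f"Patient/{patient_id}"},
        "valueQuantity": {
            "value": bpm,
            "unit": "beats/minute",
            "system": "http://unitsofmeasure.org",
            "code": "/min",
        },
    }


patient = make_patient("example-1", "Rivera", "Ana", "1980-04-12")
observation = make_heart_rate_observation(patient["id"], 72)
print(json.dumps(observation, indent=2))
```

The point of the structure is that the measurement carries its own coding system (LOINC) and unit system (UCUM), so a receiving system does not have to guess what "72" means.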

The Four Levels of Interoperability

To truly master healthcare data integration, one must understand the four levels of interoperability as defined by HIMSS:

- Foundational Interoperability: This is the most basic level, where one system can send data to another, but the receiving system does not necessarily understand the data. It’s like receiving a letter in a language you don’t speak; you have the paper, but not the message.

- Structural Interoperability: This defines the format and syntax of the data exchange. It ensures that the data is organized in a way that the receiving system can detect specific fields (like ‘Date of Birth’).

- Semantic Interoperability: This is the “holy grail.” It ensures that the meaning of the data is preserved. If one hospital codes a heart attack as “Myocardial Infarction” and another uses a specific ICD-10 code, semantic interoperability ensures both systems recognize them as the same clinical event. This is where health data interoperability becomes truly powerful.

- Organizational Interoperability: This involves the non-technical aspects, such as legal agreements, consent management, and workflow integration between different healthcare entities.

The Role of Semantic Interoperability and Harmonization

Moving data is useless if the meaning is lost in translation. By using standard vocabularies like LOINC for lab results and SNOMED CT for clinical findings, we can achieve data harmonization. This allows researchers to query “all patients with Type 2 Diabetes” and get accurate results, regardless of how the original clinician entered the data.
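The harmonization idea above can be sketched as a small crosswalk that maps whatever the source system recorded onto a single SNOMED CT concept, so that one query finds every matching patient. The crosswalk entries, record layout, and function names are illustrative assumptions; a production system would draw its mappings from a maintained terminology service rather than a hard-coded dictionary.

```python
from typing import Optional

# Illustrative crosswalk: (source system, source value) -> SNOMED CT concept id.
CROSSWALK = {
    ("ICD-10-CM", "E11.9"): "44054006",              # Type 2 diabetes mellitus
    ("local-text", "type 2 diabetes"): "44054006",
    ("local-text", "t2dm"): "44054006",
    ("ICD-10-CM", "I21.9"): "22298006",              # Myocardial infarction
}


def to_snomed(system: str, value: str) -> Optional[str]:
    """Normalize the source value and look up its harmonized concept."""
    cleaned = value.strip()
    cleaned = cleaned.lower() if system == "local-text" else cleaned.upper()
    return CROSSWALK.get((system, cleaned))


def patients_with(records: list, concept_id: str) -> list:
    """Return sorted patient ids whose record maps to the given concept."""
    return sorted(
        {r["patient_id"] for r in records if to_snomed(r["system"], r["value"]) == concept_id}
    )


records = [
    {"patient_id": "p1", "system": "ICD-10-CM", "value": "E11.9"},
    {"patient_id": "p2", "system": "local-text", "value": "Type 2 Diabetes"},
    {"patient_id": "p3", "system": "local-text", "value": "T2DM"},
    {"patient_id": "p4", "system": "ICD-10-CM", "value": "I21.9"},
]
TYPE_2_DIABETES = "44054006"
print(patients_with(records, TYPE_2_DIABETES))
```

Three different ways of recording the same diagnosis resolve to one concept, while the myocardial infarction record is correctly excluded.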

Without healthcare data standardisation, your analytics will fail. The benefits of health data standardisation include improved patient safety, reduced costs, and the ability to use AI at scale. Without this foundation, your healthcare organization is just a collection of expensive, isolated digital islands.

Solving the $4 Trillion Crisis with Real-Time Healthcare Data Integration

The financial stakes of fragmented data are astronomical. The US spends roughly $4 trillion annually on healthcare—nearly double that of any other nation—yet life expectancy has actually dropped in recent years. A staggering 15% of this spend, or $600 billion, is attributed to fraud, waste, and abuse. Much of this is preventable through better data visibility.

Consider the human cost: misdiagnosis leads to 40,000 to 80,000 deaths in US hospitals every year. When a physician doesn’t have a patient’s complete history because the data is trapped in a different health system, the risk of error skyrockets. For example, a patient might be allergic to a specific contrast dye used in imaging, but if that allergy was recorded in a different hospital’s EHR, the current provider might proceed with a life-threatening procedure.

Improving Clinical Trial Efficiency and Drug Discovery

In drug development, the lack of healthcare data integration is a billion-dollar bottleneck. Currently, 100% source data verification (SDV)—the manual process of checking trial data against original medical records—can account for over 50% of a trial’s total budget. This manual labor is not only expensive but prone to human error.

By following a clinical trial data integration guide, sponsors can move toward “eSource” models where data flows directly from the EHR to the electronic data capture (EDC) system. This is particularly critical in oncology, where 95% of trials use medical imaging, generating massive datasets that manual processes simply cannot handle. Furthermore, integrated data allows for the use of Real-World Evidence (RWE), enabling pharmaceutical companies to monitor how drugs perform in diverse, real-world populations rather than just controlled trial environments.

Enhancing Value-Based Care and Patient Safety

Value-based care relies on the ability to monitor patient outcomes in real time. However, over 35% of Medicare beneficiaries see five or more different physicians annually. Without integration of EHR and claims data, it is impossible to see the “whole patient.”

Integrated data allows for “closing the loop” on care gaps. For example, if a patient picks up a prescription (pharmacy data) but misses their follow-up appointment (scheduling data), an integrated system can trigger an automated alert to the care coordinator. This proactive approach prevents potential readmissions and ensures that chronic conditions like hypertension or diabetes are managed effectively before they escalate into acute crises. The transition from volume-based to value-based care is impossible without a unified data layer that tracks the patient journey across every touchpoint.
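The care-gap example above can be sketched as a join between two feeds. The record types, field names, and alert wording below are hypothetical; the sketch only assumes that pharmacy and scheduling data share a patient identifier once integrated.

```python
from dataclasses import dataclass
from datetime import date


@dataclass(frozen=True)
class Pickup:
    patient_id: str
    picked_up_on: date


@dataclass(frozen=True)
class Appointment:
    patient_id: str
    scheduled_for: date
    attended: bool


def care_gap_alerts(pickups: list, appointments: list, today: date) -> list:
    """Flag patients who collected a prescription but missed a past follow-up."""
    picked_up = {p.patient_id for p in pickups}
    alerts = []
    for appt in appointments:
        missed = appt.scheduled_for < today and not appt.attended
        if missed and appt.patient_id in picked_up:
            alerts.append(
                f"Follow up with {appt.patient_id}: "
                f"missed appointment on {appt.scheduled_for.isoformat()}"
            )
    return alerts


pickups = [Pickup("p1", date(2024, 3, 1)), Pickup("p2", date(2024, 3, 2))]
appointments = [
    Appointment("p1", date(2024, 3, 15), attended=False),
    Appointment("p2", date(2024, 3, 16), attended=True),
]
print(care_gap_alerts(pickups, appointments, today=date(2024, 3, 20)))
```

Neither feed alone can produce this alert, which is the practical argument for a unified data layer.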

Overcoming Technical Barriers in Clinical Data Aggregation

If integration were easy, we would have solved it decades ago. The reality is that we are fighting a “fragmentation problem” built on legacy architecture. Many hospital systems still run on software designed in the 1990s—systems that were built for billing and administrative tasks, not for big data analytics, genomic research, or AI-driven diagnostics.

Research shows that up to 54% of clinical data requires advanced cleaning before it can be used for secondary research. Common issues include “machine-unreadable” notes, missing timestamps, and software design flaws that allow clinicians to enter impossible vital signs (like a blood pressure of “9” instead of “90”). Furthermore, much of the most valuable clinical data is trapped in unstructured formats, such as PDF pathology reports or dictated physician notes, which require Natural Language Processing (NLP) to extract and normalize.
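A first line of defence against the issues above is a plausibility check at ingestion time. The sketch below flags missing timestamps and out-of-range vital signs; the field names and numeric ranges are assumptions chosen for illustration, not clinical reference values. Note that it flags a suspicious reading rather than guessing that "9" was meant to be "90".

```python
# Hypothetical plausibility limits; real limits should come from clinical governance.
PLAUSIBLE_RANGES = {
    "systolic_bp_mmhg": (50, 300),
    "heart_rate_bpm": (20, 300),
}


def find_issues(record: dict) -> list:
    """Return a list of human-readable data quality issues for one record."""
    issues = []
    if not record.get("recorded_at"):
        issues.append("missing timestamp")
    for field, (low, high) in PLAUSIBLE_RANGES.items():
        value = record.get(field)
        if value is None:
            continue  # absence of a measurement is not treated as an error here
        if not low <= value <= high:
            issues.append(f"{field}={value} outside plausible range {low}-{high}")
    return issues


record = {"recorded_at": None, "systolic_bp_mmhg": 9, "heart_rate_bpm": 72}
print(find_issues(record))
```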

Standardizing Formats for Seamless Healthcare Data Integration

To make data usable, we must align it with global standards. In clinical research, this means the CDISC standards, including SDTM (Study Data Tabulation Model) and ADaM (Analysis Data Model). These standards ensure that regulatory bodies like the FDA can review trial data efficiently, reducing the time it takes to bring life-saving therapies to market.

For broader healthcare applications, the industry is moving toward the OMOP Common Data Model. Mapping disparate datasets to OMOP allows researchers to run the same analytical code across different databases worldwide without moving the raw data. This is a core component of our data integration standards healthcare guide. By adopting a common model, we eliminate the need for custom ETL (Extract, Transform, Load) processes for every new research question.
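The "same code, different databases" benefit can be illustrated with two in-memory SQLite databases standing in for two sites. This is a simplified sketch: the table keeps only two columns of OMOP's `condition_occurrence` table, the site data is invented, and the concept id used for type 2 diabetes (201826) should be checked against the vocabulary version in use.

```python
import sqlite3

# One query, written once against the common model.
COHORT_SQL = """
    SELECT COUNT(DISTINCT person_id)
    FROM condition_occurrence
    WHERE condition_concept_id = ?
"""


def make_site_db(rows: list) -> sqlite3.Connection:
    """Create an in-memory stand-in for one site's OMOP-shaped database."""
    conn = sqlite3.connect(":memory:")
    conn.execute(
        "CREATE TABLE condition_occurrence (person_id INTEGER, condition_concept_id INTEGER)"
    )
    conn.executemany("INSERT INTO condition_occurrence VALUES (?, ?)", rows)
    return conn


def count_patients(conn: sqlite3.Connection, concept_id: int) -> int:
    """Run the shared cohort query against one site."""
    return conn.execute(COHORT_SQL, (concept_id,)).fetchone()[0]


T2DM_CONCEPT_ID = 201826  # assumed standard concept id for type 2 diabetes
site_a = make_site_db([(1, 201826), (1, 201826), (2, 201826), (3, 4329847)])
site_b = make_site_db([(10, 201826), (11, 4329847)])

for name, conn in [("site_a", site_a), ("site_b", site_b)]:
    print(name, count_patients(conn, T2DM_CONCEPT_ID))
```

Because both sites expose the same table and concept ids, the query needs no per-site rewriting; only the connection changes.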

Navigating Privacy Laws and Healthcare Data Integration Security

Data privacy is often cited as the biggest barrier to integration. Regulations like HIPAA in the US and the GDPR in Europe are designed to protect patients, but they can also create “data silos” if not managed correctly. The 21st Century Cures Act specifically targets “information blocking,” mandating that patients have easy access to their electronic health information and that providers do not intentionally interfere with data sharing.

To comply while maintaining security, organizations must implement robust DHA data governance. This includes using technologies like federated access, which allows researchers to analyze data where it lives, rather than copying it into a central (and vulnerable) repository. This “code-to-data” model ensures that sensitive Protected Health Information (PHI) never leaves the secure environment of the hospital or clinic, while still allowing for large-scale collaborative research.
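A toy version of the federated pattern, in which the analysis travels to each site and only aggregates travel back, might look like the following. The function names, the minimum cell size of 5, and the sample values are all assumptions for illustration; real federated platforms add authentication, auditing, and stronger disclosure controls.

```python
from typing import Optional


def local_summary(values: list, min_count: int = 5) -> Optional[dict]:
    """Runs inside a site's environment; returns aggregates only, never rows."""
    n = len(values)
    if n < min_count:
        return None  # suppress small cells that could identify individuals
    return {"n": n, "sum": float(sum(values))}


def pooled_mean(summaries: list) -> float:
    """Runs at the coordinator; combines per-site aggregates into one mean."""
    usable = [s for s in summaries if s is not None]
    total_n = sum(s["n"] for s in usable)
    if total_n == 0:
        raise ValueError("no site returned a usable summary")
    return sum(s["sum"] for s in usable) / total_n


# Each list stays at its own site; only the summaries are shared.
site_a_hba1c = [6.0, 6.0, 6.0, 6.0, 6.0]
site_b_hba1c = [8.0, 8.0, 8.0, 8.0, 8.0]
site_c_hba1c = [9.5, 10.1]  # too few patients, so this site is suppressed

summaries = [local_summary(v) for v in (site_a_hba1c, site_b_hba1c, site_c_hba1c)]
print(pooled_mean(summaries))
```

The coordinator never sees an individual measurement, yet it can still compute a pooled statistic across sites.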

Best Practices for Implementing a Scalable Data Infrastructure

Building a scalable infrastructure for healthcare data integration requires moving away from “point-to-point” connections. If you connect 10 systems individually, you end up with a “spaghetti” architecture that breaks every time a vendor updates their software. This technical debt becomes unmanageable as the number of data sources grows.
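The growth of point-to-point connections is easy to quantify. Assuming every system must exchange data with every other system, n systems need n(n-1)/2 links, whereas a shared integration layer needs one connection per system. The function names below are illustrative.

```python
def point_to_point_links(n_systems: int) -> int:
    """Number of pairwise links when every system connects to every other."""
    return n_systems * (n_systems - 1) // 2


def shared_layer_links(n_systems: int) -> int:
    """Number of connections when each system plugs into one shared layer."""
    return n_systems


for n in (5, 10, 20):
    print(n, point_to_point_links(n), shared_layer_links(n))
```

Under that assumption, 10 systems already require 45 pairwise links and 20 systems require 190, while the shared layer grows linearly.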

Instead, modern organizations are adopting the Data Fabric approach. A data fabric is a unified layer that sits across all your data sources, providing a consistent way to access, govern, and analyze information. Unlike a traditional warehouse, a data fabric doesn’t necessarily move all the data into one place; it creates a virtualized view that makes disparate sources appear as one.

Transitioning from Legacy Warehouses to Modern Fabrics

The old way of doing things—the centralized Clinical Data Warehouse (CDW)—is struggling to keep up with the volume of modern data. These warehouses are often “black boxes” that make it hard to track data lineage (where the data came from and how it was changed).

The future lies in transitioning from legacy EHR warehouses to FHIR. By using API-first designs and real-time streaming, you can move from batch processing (where data is 24-48 hours old) to real-time insights. Tools like data linking software help bridge the gap between clinical records and social determinants of health (SDOH), such as housing stability and food security, providing a fuller view of patient wellness.

Leveraging AI and Synthetic Data for Future-Proofing

AI is not just the “end goal” of integration; it is also a powerful tool for the process itself. AI for data harmonization can automate the tedious work of mapping legacy codes to modern standards, reducing the “human-in-the-loop” requirement by up to 80%. Machine learning models can identify patterns in data entry errors and suggest corrections, significantly improving data quality over time.
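As a deliberately simple stand-in for the machine learning approaches described above, the sketch below uses fuzzy string matching from Python's standard library (`difflib`) to suggest a standard code for a legacy label. This is not a trained model, and the tiny vocabulary, the 0.8 cutoff, and the function name are assumptions; the pattern it shows is the workflow of generating suggestions for a human to confirm.

```python
import difflib
from typing import Optional

# Tiny illustrative vocabulary: normalized display name -> LOINC code.
STANDARD_TERMS = {
    "hemoglobin a1c": "4548-4",
    "heart rate": "8867-4",
    "body weight": "29463-7",
}


def suggest_code(legacy_label: str, cutoff: float = 0.8) -> Optional[tuple]:
    """Suggest the closest standard term and code, or None if nothing is close."""
    normalized = legacy_label.strip().lower()
    matches = difflib.get_close_matches(
        normalized, list(STANDARD_TERMS), n=1, cutoff=cutoff
    )
    if not matches:
        return None  # leave for a human mapper
    term = matches[0]
    return term, STANDARD_TERMS[term]


for label in ["Hemoglobin A1C", "hart rate", "xyz"]:
    print(repr(label), "->", suggest_code(label))
```

Labels with no close match fall through to manual review, which is where the remaining human effort in the mapping process concentrates.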

We are also seeing the rise of synthetic data. By creating statistically representative “twins” of patient datasets, researchers can develop and test algorithms without touching sensitive protected health information (PHI). This is a game-changer for innovation, as it allows third-party developers to build tools for a health system without the massive legal and security hurdles of accessing real patient records, and it greatly reduces the risk of a data breach during the development phase. Furthermore, the use of Trusted Research Environments (TREs) provides a secure space where researchers can collaborate on integrated datasets under strict governance, ensuring that every access and analysis is audited and approved.

Frequently Asked Questions about Healthcare Data Integration

What is the difference between healthcare data integration and interoperability?

Integration is the technical “how”—connecting systems and moving data. Interoperability is the “what”—the ability for different systems to exchange and use information meaningfully. You need integration to achieve interoperability.

How does the 21st Century Cures Act impact data sharing?

The Act includes “Information Blocking” rules that prohibit healthcare providers and IT vendors from interfering with the access, exchange, or use of electronic health information. It effectively mandates a move toward more open, standardized data sharing and gives patients more control over their own health data.

Which data standards are most important for clinical research?

CDISC (SDTM/ADaM) is the gold standard for regulatory submissions to the FDA. However, HL7 FHIR is increasingly used for real-time data exchange between clinical systems, and OMOP is becoming the preferred model for large-scale observational research and real-world evidence (RWE) generation.

What is a Trusted Research Environment (TRE)?

A TRE is a secure, highly controlled digital environment that allows researchers to access and analyze sensitive health data without the data ever leaving its original secure location. It is a key technology for maintaining privacy in large-scale data integration projects.

Can AI help with data cleaning?

Yes, AI and machine learning are increasingly used to automate the normalization of unstructured data, such as physician notes, and to identify inconsistencies or errors in large clinical datasets, making the integration process faster and more accurate.

Conclusion: How to Stop Drowning in Data and Start Driving Results

The era of the “monolithic EHR” is over. To survive in a world of 2.3 zettabytes of information, healthcare organizations must shift their focus from simply storing data to actively integrating and activating it. The goal is no longer just to have the data, but to make it actionable at the point of care and the point of discovery.

At Lifebit, we believe the answer isn’t to build bigger central databases that become tomorrow’s legacy silos. Instead, we provide a next-generation federated AI platform that enables secure, real-time access to global biomedical and multi-omic data. Our platform—including the Trusted Research Environment (TRE) and Trusted Data Lakehouse (TDL)—allows you to harmonize fragmented data and run advanced AI/ML analytics where the data resides, ensuring maximum security and compliance.

Whether you are a biopharma company looking to slash clinical trial costs, a researcher hunting for the next breakthrough in precision medicine, or a public health agency aiming to improve patient safety, mastering healthcare data integration is your most critical business imperative. The data is already there, hidden in the noise. It’s time to make it talk.

Experience the Lifebit Platform and see how we unify the world’s most complex health data.