How to stop worrying and love your secure analytics environment

Secure Analytics Environment: Stop Data Silos and Cut Research Risks by 90%

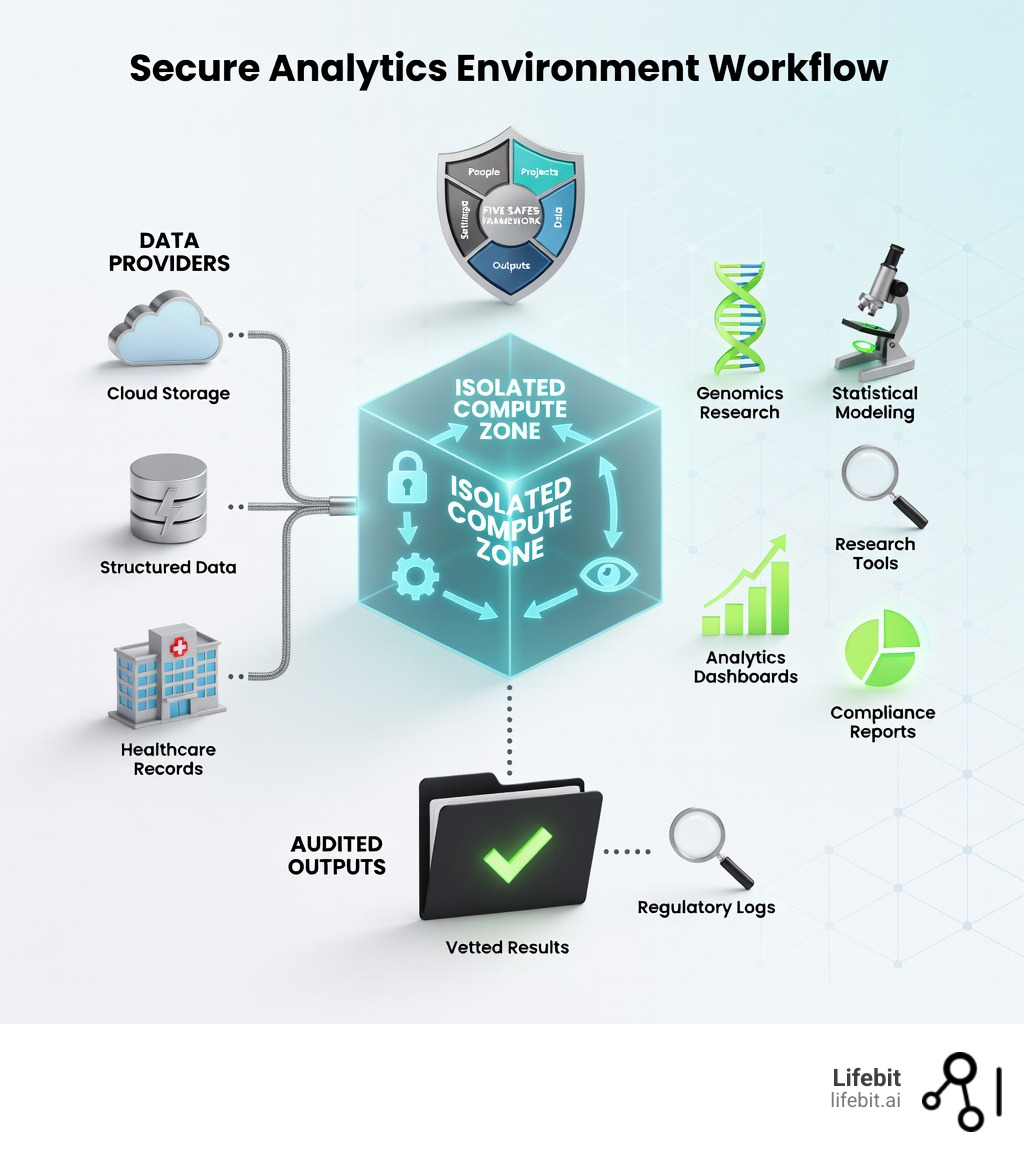

A secure analytics environment (SAE) is a highly controlled, specialized computing infrastructure designed to allow approved researchers to analyze sensitive data—such as patient health records, genomic sequences, or proprietary financial information—without ever exposing the raw datasets to the risk of unauthorized extraction or violating stringent privacy regulations. These environments are not merely “secure servers”; they are comprehensive ecosystems that combine advanced encryption, granular access controls, and continuous auditing to protect data at rest, in transit, and, crucially, during active use.

In the current landscape of biomedical research, we face a “Data Paradox.” We have more data than ever before, yet 80% of it remains locked in silos due to security concerns. This “dark data” represents a massive opportunity cost in the search for cures for rare diseases and cancer. A secure analytics environment bridges this gap by providing a “Data Safe Haven” where the utility of the data can be extracted without compromising the privacy of the individuals behind the data. By implementing these environments, organizations can effectively reduce the risk of data breaches and regulatory non-compliance by up to 90%, as the primary vector for leaks—the physical movement of data—is eliminated.

Furthermore, the economic impact of these silos is staggering. Research suggests that the inability to share data efficiently adds years to drug development timelines and billions to R&D costs. An SAE mitigates this by creating a standardized, secure interface for collaboration. It allows for the “democratization of data” while maintaining the highest levels of “data sovereignty.” This means that a hospital in Germany can collaborate with a research institute in the US without the data ever leaving German soil, adhering to both GDPR and local data residency laws.

Key features of a secure analytics environment:

- Isolated compute: Dedicated virtual machines (VMs) or containers that operate in a “walled garden,” preventing unauthorized data extraction through the internet, USB ports, or clipboards. These environments are often “air-gapped” from the public internet to ensure no data can be exfiltrated via hidden scripts.

- The Five Safes Framework: A holistic governance model that ensures safety across five dimensions: safe people, safe projects, safe settings, safe data, and safe outputs. This framework is the bedrock of modern data stewardship.

- Compliance-ready: Architectures specifically built to meet and exceed HIPAA, GDPR, ISO-27001, and SOC2 Type II standards, often featuring automated compliance reporting that can be shared with regulators at the touch of a button.

- Flexible access modes: Support for various research workflows, ranging from full interactive analysis workspaces (Tinker Mode) to automated, blind script submission (Blind Mode), ensuring that the level of access matches the sensitivity of the data.

- Comprehensive Audit Trails: Every single action—from a login attempt to a specific line of code executed—is logged and timestamped for regulatory review and forensic analysis. This provides a “black box” recorder for all research activities.

Privacy, copyright, and competition barriers have traditionally limited the sharing of sensitive data for scientific purposes. Traditional data sharing creates immense risk: a single breach can trigger catastrophic regulatory fines, permanent damage to institutional reputation, and the immediate halting of vital research programs. Secure analytics environments solve this by flipping the script: they keep the data where it lives (in-situ) and bring the analysis code to the data—not the other way around.

As Dr. Maria Chatzou Dunford, CEO of Lifebit, I’ve spent over 15 years building secure analytics infrastructure for genomics and biomedical research. I have seen firsthand how federated platforms, trusted by public sector institutions like Genomics England and pharmaceutical giants globally, can transform research. A well-designed secure analytics environment doesn’t just protect data—it accelerates discovery by removing the bureaucratic and technical barriers that keep researchers locked out of the datasets they need to cure diseases.

Why Traditional Data Sharing is Dead—And What’s Replacing It

In the modern research landscape, data is the most valuable currency we have. However, it is often locked away in silos, guarded by legal teams and IT departments who are rightfully terrified of the consequences of a leak. Why is this the case? Because the risks of sharing it—data leaks, intellectual property theft, and privacy violations—are perceived as significantly higher than the rewards of collaboration. This is where a secure analytics environment (SAE) changes the game by decoupling data access from data ownership.

An SAE is more than just a locked folder or a password-protected cloud bucket; it is a secure computing environment built to offer safe remote access to sensitive data while keeping that data private. For data providers, such as hospitals or biobanks, it offers a way to monetize or share assets without losing control or physical possession. For researchers, it provides the “keys to the kingdom”—access to high-fidelity, real-world data (RWD) that was previously off-limits due to its sensitivity. This shift from “data sharing” to “data visiting” is the most significant evolution in research infrastructure in the last decade.

The Core Pillars of a Secure Analytics Environment

We don’t just “trust” that an environment is secure; we verify it using the Five Safes Framework. This gold-standard model, originally developed by the UK Office for National Statistics, ensures that every aspect of the research process is de-risked through a multi-layered approach:

- Safe People: Only researchers with the right credentials, verified institutional affiliations, and specific ethical training (such as GCP or HIPAA certification) are granted entry. This often involves a rigorous vetting process by a Data Access Committee (DAC) that reviews the researcher’s track record and institutional standing.

- Safe Projects: The research must have a clear, approved purpose that benefits the public or scientific community. This prevents “fishing expeditions” where data is used for purposes outside the original consent. Every project must have a defined scope, and any deviation from that scope requires a new approval cycle.

- Safe Settings: This is the “environment” itself—a closed-off virtual machine where data cannot be copied, pasted, or emailed out. It often includes “air-gapping” techniques where the environment has no outbound internet access. The user interface is typically a Virtual Desktop Infrastructure (VDI) that only transmits pixels, not raw data, to the researcher’s local screen.

- Safe Data: Data is de-identified, pseudonymized, or even synthetically enhanced before the researcher even sees it, ensuring that individual identities are protected even if a researcher were to act maliciously. This includes the removal of direct identifiers like names and social security numbers, as well as the masking of indirect identifiers like birth dates or specific geographic locations.

- Safe Outputs: Before any results, charts, or statistical summaries leave the environment, they are vetted by data stewards to ensure no “re-identification” of individuals is possible through residual disclosure. This process, known as Statistical Disclosure Control (SDC), ensures that small cell counts or outliers do not inadvertently reveal a patient’s identity.
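To make the "Safe Data" step more concrete, here is a minimal sketch of pseudonymization and masking in Python. It is an illustration under stated assumptions, not a description of any particular platform: the field names (`name`, `ssn`, `birth_date`, `postcode`), the `pseudonymize` helper, and the demo key are all hypothetical.

```python
import hashlib
import hmac
from datetime import date

# Hypothetical field names; a real dataset defines its own schema.
DIRECT_IDENTIFIERS = {"name", "ssn"}


def pseudonymize(record: dict, secret_key: bytes) -> dict:
    """Return a copy of `record` with direct identifiers removed and
    indirect identifiers coarsened. The input record is not modified."""
    # Keyed hash: the same patient always maps to the same token, but the
    # token cannot be recomputed without the key held by the data steward.
    token = hmac.new(secret_key, record["ssn"].encode(), hashlib.sha256).hexdigest()

    # Drop direct identifiers such as names and social security numbers.
    cleaned = {k: v for k, v in record.items() if k not in DIRECT_IDENTIFIERS}
    cleaned["patient_token"] = token[:16]

    # Mask the birth date by keeping only the year.
    cleaned["birth_year"] = cleaned.pop("birth_date").year

    # Generalize location by keeping only the first three postcode characters.
    cleaned["postcode_prefix"] = cleaned.pop("postcode")[:3]
    return cleaned


record = {
    "name": "Jane Doe",
    "ssn": "000-00-0000",
    "birth_date": date(1984, 3, 17),
    "postcode": "CB21TN",
    "diagnosis": "E11.9",
}
safe = pseudonymize(record, secret_key=b"demo-key-not-for-production")
print(safe)
```

A keyed hash is used rather than a plain hash so that someone who knows a patient's identifier cannot simply recompute the token. How coarse the masking needs to be (year versus decade, three postcode characters versus two) is a governance decision, not a coding one.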

Why Traditional Data Sharing Fails

The “old way” of sharing data involved physical transfers, encrypted hard drives sent via courier, or insecure FTP sites. This is a nightmare for compliance officers and a goldmine for hackers. Under regulations like GDPR and HIPAA, a single leaked record can lead to millions in fines and the loss of public trust. Furthermore, the “copy-and-send” model creates “Data Gravity” issues; when datasets reach petabyte scale, moving them becomes physically and economically impossible.

A secure analytics environment replaces this outdated model with a “visit-and-analyze” model. Instead of the data moving to the researcher, the researcher moves to the data. This eliminates the need for data movement entirely, drastically reducing the attack surface and ensuring that the data provider maintains 100% sovereignty over their assets at all times. This shift is not just a technical upgrade; it is a fundamental change in the philosophy of data stewardship, moving from a model of “custody” to a model of “governance.”

Stop Data Leaks: How Lifebit Secures Your Most Sensitive Research

At Lifebit, we believe you shouldn’t have to choose between data security and research speed. Our platform uses isolated virtual machines that act as a “digital clean room.” Within this space, researchers can use the tools they love—like R, Python, and Jupyter Notebooks—while the data stays firmly behind the provider’s firewall. This approach ensures that the data never leaves its original jurisdiction, which is critical for complying with national data sovereignty laws.

Our infrastructure is built on a foundation of ISO-27001 and SOC2 certified services and undergoes rigorous, regular third-party penetration testing. We ensure that the “trust boundary” is as small as possible, protecting your data even from the cloud administrators themselves. By utilizing advanced Identity and Access Management (IAM) and Multi-Factor Authentication (MFA), we ensure that only the right eyes ever see the data. This “Zero Trust” architecture means that no user or system is trusted by default, regardless of whether they are inside or outside the network perimeter.

Flexible Access: Choose the Right Secure Analytics Mode

Not every research project requires the same level of access. Depending on the sensitivity of the data and the goals of the study, we typically see two main modes of operation, often referred to in the community as “Tinker” and “Blind”:

- Tinker Mode (Interactive Analysis): Ideal for exploratory analysis and hypothesis generation. Researchers work directly within a virtual desktop environment. They can see the data, clean it, and manipulate it using standard libraries, but they cannot extract it. Every output is manually or automatically screened for privacy leaks using statistical disclosure control (SDC) methods. This mode is essential for the “messy” early stages of research where data cleaning and feature engineering are required.

- Blind Mode (Non-Interactive Analysis): Used when the data is so sensitive that even looking at it is a risk (e.g., rare disease data where a single patient might be identifiable). Researchers submit their analysis scripts (code) via a secure portal. The system runs the script against the data in a “black box” environment and returns only the final, aggregated results. This is often combined with “Differential Privacy” to add mathematical noise to the results, further protecting individual privacy. This mode is the gold standard for high-stakes clinical trials and genomic studies.
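The Blind Mode idea of returning only noisy aggregates can be sketched in a few lines of Python. This is a simplified illustration of the Laplace mechanism for a counting query, not a production differential privacy library; the `run_blind_count` function, the `cohort` records, and the choice of epsilon are assumptions made for the example.

```python
import random


def noisy_count(true_count: int, epsilon: float, rng: random.Random) -> float:
    """Add Laplace noise calibrated to a counting query.

    Adding or removing one person changes a count by at most 1
    (sensitivity 1), so the Laplace scale is 1 / epsilon.
    """
    scale = 1.0 / epsilon
    # The difference of two independent exponential draws with mean `scale`
    # follows a Laplace(0, scale) distribution.
    noise = rng.expovariate(1.0 / scale) - rng.expovariate(1.0 / scale)
    return true_count + noise


def run_blind_count(rows, predicate, epsilon: float, rng: random.Random) -> float:
    """Run a submitted predicate against the data and release only a noisy count.

    The caller never sees `rows`; only the aggregate leaves the function.
    """
    true_count = sum(1 for row in rows if predicate(row))
    return noisy_count(true_count, epsilon, rng)


# Hypothetical cohort held inside the environment.
cohort = [{"age": 30 + i % 40, "carrier": i % 7 == 0} for i in range(500)]

rng = random.Random()
result = run_blind_count(cohort, lambda r: r["carrier"] and r["age"] > 50,
                         epsilon=1.0, rng=rng)
print(round(result, 1))
```

Smaller values of epsilon mean more noise and stronger privacy. A real deployment would also track the cumulative privacy budget across queries, which this sketch leaves out.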

The Role of Cloud Infrastructure in Scalability

One of the biggest problems in modern biomedical research is the sheer size of the data. Genomic files are massive, often reaching hundreds of gigabytes per individual. A secure analytics environment powered by cloud infrastructure, like our federated biomedical data platform, can scale compute and storage elastically to match.

Traditional on-premise servers often crash when faced with large-scale GWAS (Genome-Wide Association Studies) or deep learning workloads. In contrast, a cloud-native SAE can spin up thousands of nodes in minutes. Whether you need a single CPU for a week of data cleaning or a thousand GPUs for an afternoon of deep learning, the environment expands to meet your needs and shrinks to save costs when you’re done. This “elasticity” ensures that research is never bottlenecked by hardware limitations, allowing scientists to focus on discovery rather than infrastructure management. Furthermore, by using containerization (Docker/Kubernetes), we ensure that the research environment is perfectly reproducible, a key requirement for scientific integrity.

The Five Safes: Your 2026 Blueprint for Bulletproof Data Governance

Implementing a secure analytics environment isn’t just a technical task; it’s a governance task. By following the Five Safes, organizations can securely connect rare disease ecosystems, as seen in projects like the rare disease cures accelerator data analytics platform (RDCA-DAP). This framework provides a common language for legal, IT, and research teams to agree on risk thresholds. It transforms security from a “no” department into an “enabler” department.

This framework ensures that even the most complex multi-omic datasets, which combine genomics, proteomics, and clinical data, remain protected. For example, “Safe Outputs” often involves “disclosure control,” where statistical noise might be added to results for small patient groups to prevent anyone from being identified by their unique combination of traits. If a researcher tries to export a table where a specific cell has a count of fewer than five, the system can automatically redact that cell to prevent re-identification. This is critical in rare disease research, where a specific combination of age, gender, and diagnosis could easily identify a single individual.
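The small-cell rule described above can be sketched as a simple output check. The threshold of five comes from the text; the `suppress_small_cells` helper and the example table are hypothetical.

```python
from collections import Counter

MIN_CELL_COUNT = 5  # threshold taken from the text above


def suppress_small_cells(counts: dict, threshold: int = MIN_CELL_COUNT) -> dict:
    """Replace any cell count below `threshold` with None (redacted)."""
    return {cell: (n if n >= threshold else None) for cell, n in counts.items()}


# Hypothetical export request: patients per (age band, diagnosis) cell.
patients = (
    [("40-49", "E11.9")] * 12
    + [("50-59", "E11.9")] * 7
    + [("50-59", "G71.0")] * 2   # rare combination, below the threshold
)
table = Counter(patients)
released = suppress_small_cells(table)

for cell, n in sorted(released.items()):
    print(cell, n if n is not None else "<5")
```

This covers primary suppression only. If row or column totals are released alongside the table, a redacted cell can sometimes be recovered by subtraction, so real disclosure control also applies secondary suppression or rounding.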

Ensuring Data Security During Active Analysis

Security doesn’t stop once a researcher logs in. In a modern SAE, we employ several layers of protection that operate in the background to ensure that the data remains secure even during the most intensive computations:

- Encryption at Rest and in Transit: Data is encrypted with AES-256 while stored, and all communication between the researcher’s browser and the environment is protected by TLS 1.3. This ensures that even if a data packet is intercepted, it is unreadable.

- Runtime Protection: We monitor the “memory” of the computer to ensure no unauthorized processes are trying to peek at the data while it’s being analyzed. This prevents “side-channel” attacks where a malicious user might try to infer data values by monitoring CPU usage or memory access patterns.

- Egress Filtering: The environment is strictly prohibited from making outbound connections. This means a researcher cannot “ping” an external server or use a hidden script to send data to a personal cloud storage account. All software libraries and dependencies are pre-vetted and stored in a local, secure mirror.

- Audit Logs: We record every command. If a researcher tries to run an unusual query—such as attempting to list all patient names or accessing a table they aren’t authorized for—the system flags it immediately and can automatically terminate the session. These logs are immutable and stored in a separate security account to prevent tampering.
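One common way to make an audit trail tamper-evident is to chain each entry to the hash of the previous one. The sketch below illustrates that idea only; the `AuditLog` class and its fields are hypothetical and say nothing about how any specific platform stores its logs.

```python
import hashlib
import json

GENESIS_HASH = "0" * 64  # placeholder "previous hash" for the first entry


def _digest(body: dict) -> str:
    """Hash an entry body deterministically (sorted keys, UTF-8)."""
    return hashlib.sha256(json.dumps(body, sort_keys=True).encode()).hexdigest()


class AuditLog:
    """Append-only log in which every entry commits to the one before it."""

    def __init__(self):
        self.entries = []

    def append(self, user: str, command: str, timestamp: str) -> None:
        prev_hash = self.entries[-1]["hash"] if self.entries else GENESIS_HASH
        body = {"user": user, "command": command,
                "timestamp": timestamp, "prev_hash": prev_hash}
        self.entries.append({**body, "hash": _digest(body)})

    def verify(self) -> bool:
        """Recompute the chain; any edited or removed entry breaks it."""
        prev_hash = GENESIS_HASH
        for entry in self.entries:
            body = {k: v for k, v in entry.items() if k != "hash"}
            if entry["prev_hash"] != prev_hash or _digest(body) != entry["hash"]:
                return False
            prev_hash = entry["hash"]
        return True


log = AuditLog()
log.append("researcher_42", "SELECT COUNT(*) FROM cohort", "2025-01-01T09:00:00Z")
log.append("researcher_42", "SELECT name FROM patients", "2025-01-01T09:05:00Z")
print(log.verify())
```

A hash chain detects tampering but does not prevent it, which is why the text also mentions storing logs in a separate security account.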

Comparing Secure Analytics Environments and Data Clean Rooms

| Feature | Secure Analytics Environment (SAE) | Data Clean Room | Trusted Research Environment (TRE) |
|---|---|---|---|
| Primary Goal | Deep analysis, ML & Discovery | Marketing, Attribution & Matching | Academic & Clinical Research |
| Data Movement | None (Federated/In-Situ) | Minimal (Shared Views/Joins) | None (Data Stays In-Situ) |
| User Tools | R, Python, SAS, SQL, Julia | SQL, Query Templates | R, Python, Stata, Julia |
| Security Model | Five Safes / TEEs / IAM | Differential Privacy / K-Anonymity | Five Safes Framework |
| Best For | Drug discovery, AI, Genomics | Retail, Advertising, FinTech | Public Health, Population Studies |
| Compute Power | High (HPC/GPU Support) | Moderate (SQL Engines) | High (Virtual Desktops) |
| Audit Level | Full Command-Level Logging | Query-Level Logging | Full Session Recording |

How UK Biobank and YODA Cut Research Bottlenecks Using Secure Environments

The impact of these environments is already being felt globally, moving from theoretical frameworks to essential research infrastructure. The UK Biobank is a prime example. It contains de-identified genomic information, imaging data, and health records from 500,000 individuals. In the past, researchers had to download subsets of this data, which was slow, expensive, and risky. Today, it provides a secure cloud-based environment (the Research Analysis Platform) where global experts can run analyses safely on the entire dataset without a single byte of raw data leaving the UK Biobank’s control. This has led to a 10x increase in the number of research projects being conducted on the platform.

Similarly, projects like the Yale University Open Data Access (YODA) project and the EPIC-Norfolk study use these frameworks to share clinical trial and lifestyle data. By providing a Secure Research Computing Platform, they ensure that 30,000+ people’s health history helps cure cancer without ever risking their personal privacy. These platforms have reduced the time it takes to grant data access from months to just days, accelerating the pace of scientific discovery.

Why Federated Learning Is the Future of Secure Analytics

We are moving toward a world of “Data Sovereignty.” Organizations want to collaborate, but they don’t want to move their data across borders due to legal restrictions like the European Health Data Space (EHDS) or China’s Data Security Law. Federated Learning is the answer, and it is the most advanced form of a secure analytics environment. It allows for the training of AI models on decentralized data without the data ever being pooled.

In a federated secure analytics environment, the AI model travels to the data, rather than the data traveling to a central server. The process works like this:

- A central “global” model is sent to multiple local sites (e.g., Hospital A, Hospital B, and Hospital C).

- The model “learns” from the local dataset at each hospital, improving its parameters based on the local data. This happens within a secure enclave at each site.

- Only the “gradients” (the mathematical updates to the model) are sent back to the central server. These gradients do not contain any raw patient data.

- The central server aggregates these updates to create a smarter global model, which is then sent back out for another round of learning.

At no point is raw patient data ever shared or moved. This allows for the training of incredibly powerful AI models on global datasets that could never be centralized due to legal or technical constraints. This is how we will eventually train AI to detect rare diseases that no single hospital sees enough of to build a model on its own. It is the ultimate solution for multi-center clinical trials and international research consortia.
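The four steps above can be sketched as a toy federated averaging loop. This is a deliberately small illustration in plain Python, assuming a one-feature linear model and three hypothetical hospital datasets; the function names `local_update` and `aggregate` are ours. Each site returns its weight delta (the accumulated effect of its local gradient steps) rather than raw gradients, which is a common variant of the scheme.

```python
def local_update(weights, data, lr=0.05, epochs=20):
    """Train on one site's data and return only the weight delta.

    `data` is a list of (x, y) pairs that never leaves this function.
    """
    w, b = weights
    for _ in range(epochs):
        # Gradients of mean squared error for the model y = w * x + b.
        grad_w = sum(2 * (w * x + b - y) * x for x, y in data) / len(data)
        grad_b = sum(2 * (w * x + b - y) for x, y in data) / len(data)
        w -= lr * grad_w
        b -= lr * grad_b
    return [w - weights[0], b - weights[1]], len(data)


def aggregate(weights, updates):
    """Combine site deltas, weighting each by its number of samples."""
    total = sum(n for _, n in updates)
    return [
        wi + sum(delta[i] * n for delta, n in updates) / total
        for i, wi in enumerate(weights)
    ]


# Hypothetical local datasets; every point lies on y = 2x + 1.
sites = {
    "hospital_a": [(0.0, 1.0), (1.0, 3.0), (2.0, 5.0)],
    "hospital_b": [(1.5, 4.0), (2.5, 6.0)],
    "hospital_c": [(0.5, 2.0), (3.0, 7.0), (1.0, 3.0), (2.0, 5.0)],
}

global_weights = [0.0, 0.0]
for _ in range(50):  # federated rounds
    updates = [local_update(global_weights, data) for data in sites.values()]
    global_weights = aggregate(global_weights, updates)

print(global_weights)
```

Only the deltas and sample counts cross the site boundary. In practice, model updates can still leak some information about the training data, which is why federated setups are often paired with secure aggregation or differential privacy.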

Overcoming Infrastructure Complexity and Cost

Setting up a bespoke SAE used to be a multi-million dollar project requiring a team of specialized security engineers and months of configuration. Today, cloud-native architectures and platforms like Lifebit have slashed these costs and timelines. A typical SANE (Secure ANalysis Environment), for instance, can cost as little as €25 to €300 per month depending on the specific compute needs. By using an integrated platform, organizations avoid “tool sprawl,” the expensive and risky habit of buying ten different software packages for encryption, logging, and compute when one integrated platform can cover all three with a smaller attack surface. This allows even small research teams and startups to access the same level of security as global pharmaceutical giants.

Beyond 2025: How Confidential Computing Fixes the ‘Data in Use’ Gap

The next frontier in the secure analytics environment space is Confidential Computing. Traditionally, data was protected in two states: “at rest” (on a hard drive) and “in transit” (moving across the network). However, data was always vulnerable when it was “in use” (being processed in the RAM). If a hacker or a rogue administrator had access to the server’s memory, they could see the raw data as the CPU worked on it. This was the “final mile” of data security that remained unaddressed for decades.

Confidential Computing uses hardware-based trusted execution environments (TEEs) to create a “safe room” inside the processor itself. This technology, pioneered by Intel (SGX) and AMD (SEV), keeps data encrypted in memory even while it is being processed. It provides a hardware-level guarantee of privacy that is independent of the software stack.

Closing the Security Gap with Confidential Computing

With TEEs, even if a hacker gains “root access” to the entire operating system or the cloud provider’s hypervisor, they cannot see the data inside the TEE. The hardware itself prevents any process outside the “enclave” from reading the memory. TEEs also provide “attestation”: a cryptographic proof that the environment is running the exact code it says it is, and that the hardware is genuine and secure. This gives the data provider verifiable assurance that their data is processed only by the approved code.
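The logic of an attestation check can be sketched in Python, with one large caveat: real TEEs sign their reports with hardware-rooted asymmetric keys that are verified against the chip vendor's certificates. The sketch below substitutes a shared HMAC key for that hardware key purely to show the flow, and the names `make_report` and `verify_report` are hypothetical.

```python
import hashlib
import hmac


def measure(code: bytes) -> str:
    """A 'measurement' is a hash of the exact code loaded into the enclave."""
    return hashlib.sha256(code).hexdigest()


def make_report(code: bytes, hardware_key: bytes) -> dict:
    """Enclave side: report the measurement, signed by the (stand-in) hardware key."""
    measurement = measure(code)
    signature = hmac.new(hardware_key, measurement.encode(), hashlib.sha256).hexdigest()
    return {"measurement": measurement, "signature": signature}


def verify_report(report: dict, hardware_key: bytes, approved: set) -> bool:
    """Data provider side: release data only if both checks pass."""
    expected = hmac.new(hardware_key, report["measurement"].encode(),
                        hashlib.sha256).hexdigest()
    signature_ok = hmac.compare_digest(expected, report["signature"])
    code_ok = report["measurement"] in approved
    return signature_ok and code_ok


# Stand-in for a key fused into the processor; an assumption for this demo.
HARDWARE_KEY = b"demo-hardware-key"

approved_code = b"def analysis(df): return df.groupby('arm').size()"
approved = {measure(approved_code)}

print(verify_report(make_report(approved_code, HARDWARE_KEY), HARDWARE_KEY, approved))
print(verify_report(make_report(b"def analysis(df): return df", HARDWARE_KEY),
                    HARDWARE_KEY, approved))
```

The first check passes because the measurement matches the approved code; the second fails because even a small change to the code produces a different hash.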

This technology is a core component of how we secure the most sensitive multi-omic workloads at Lifebit. It allows pharmaceutical companies to run proprietary algorithms on third-party datasets with the absolute certainty that their IP is protected and the data provider’s privacy is maintained. It is the final piece of the puzzle in creating a truly “zero-trust” research environment. As we move into 2026, we expect Confidential Computing to become a standard requirement for all high-sensitivity data processing.

Scaling Global Research with a Secure Analytics Environment

As we look toward 2026 and beyond, the goal is to achieve “FAIR” data: Findable, Accessible, Interoperable, and Reusable. A secure analytics environment is the engine that makes FAIR data possible. By integrating an SAE with federated AI, we enable real-time insights across five continents simultaneously. This allows for “real-time evidence generation,” where researchers can see the impact of a new treatment across global populations in days rather than years.

Whether it’s the BeginNGS organization using advanced database technologies like TileDB for newborn sequencing or pharma companies seizing what McKinsey calls a “once-in-a-century opportunity” for AI-driven drug discovery, the foundation is always the same: a secure, trusted space for data to meet code. We are moving away from a world of data hoarding and toward a world of data collaboration, where the security of the environment is the catalyst for the next generation of medical breakthroughs. The future of science is not just open; it is securely open.

Secure Analytics Environment: 4 Questions You Need Answered Now

What is the difference between a Secure Analytics Environment and a Trusted Research Environment?

In practice, the terms are often used interchangeably, but they have different origins and slightly different focuses. A Trusted Research Environment (TRE) is the term most common in the UK and European public health sectors, popularized by the NHS and the Goldacre Review. It often focuses on academic and clinical research. A Secure Analytics Environment is a broader term often used in the private sector, biopharma, and government agencies like Statistics Canada’s Collaborative Analytics Environment. Both aim to provide a “Data Safe Haven” that adheres to the Five Safes Framework, but an SAE often places a heavier emphasis on supporting advanced machine learning, AI workloads, and commercial drug discovery pipelines.

How does a secure analytics environment prevent data leaks from malicious researchers?

It uses a “walled garden” approach that assumes a “Zero Trust” posture. Internet access is disabled by default, USB ports are blocked, and the clipboard (copy/paste) is restricted so data cannot be moved to the local machine. Furthermore, any file that a researcher wants to take out (like a final graph or a summary table) must go through a “Managed Transfer Process” (MTP). During this process, a human data steward or an automated AI scanner reviews the file to ensure it contains no sensitive personal information or individual-level data. This “human-in-the-loop” system, combined with automated Statistical Disclosure Control (SDC), is the ultimate safeguard against data exfiltration. Even if a researcher tries to take a photo of the screen, the low-resolution pixel streaming and watermarking make it difficult to extract usable raw data.

Can I use standard tools like R and Python in these environments, or am I restricted to proprietary software?

Yes, you can use standard tools! In fact, that is the main benefit of a modern SAE. Unlike older “Data Clean Rooms” that might only allow limited SQL queries or basic spreadsheets, a modern secure analytics environment like Lifebit’s allows for full Azure Databricks or Azure Machine Learning integration. Researchers can bring their own custom scripts, use familiar libraries (like Pandas, Scikit-learn, or Tidyverse), and even use IDEs like VS Code or JupyterLab. This ensures that the security of the environment does not come at the cost of scientific productivity. The environment provides a “standard” experience for the researcher, while the security layers operate invisibly in the background.

How do these environments handle data from multiple different sources?

Modern SAEs use “Data Harmonization” layers to solve the problem of data heterogeneity. When data comes from different hospitals or biobanks, it often uses different formats, coding systems (e.g., ICD-9 vs. ICD-10), or terminologies. A robust secure analytics environment includes tools to map these disparate datasets to a Common Data Model (CDM) like OMOP (Observational Medical Outcomes Partnership). This allows researchers to run a single script across multiple datasets simultaneously, significantly increasing the statistical power of their studies without needing to manually clean every individual dataset. This interoperability is what allows for the creation of “Virtual Biobanks” that span multiple countries and institutions.
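As a rough illustration of what a harmonization layer does, the sketch below maps records from two sites, one coding diagnoses in ICD-9 and one in ICD-10, onto a single shared shape. The target fields are a hypothetical stand-in for a common data model, not the actual OMOP tables, and the two-entry crosswalk is for demonstration only; production systems rely on maintained vocabulary mappings.

```python
# Illustrative crosswalk only: type 2 diabetes and essential hypertension.
ICD9_TO_ICD10 = {"250.00": "E11.9", "401.9": "I10"}


def harmonize(record: dict, site: str) -> dict:
    """Map a site-specific record onto a shared (hypothetical) schema."""
    if site == "hospital_a":      # ICD-9 codes, field names: pid / dx
        code = ICD9_TO_ICD10[record["dx"]]
        patient_id = record["pid"]
    elif site == "hospital_b":    # ICD-10 codes, field names: patient / icd10
        code = record["icd10"]
        patient_id = record["patient"]
    else:
        raise ValueError(f"no mapping defined for site {site!r}")
    return {
        "source": site,
        "patient_id": patient_id,
        "condition_code": code,
        "vocabulary": "ICD10",
    }


raw = [
    ("hospital_a", {"pid": "A-001", "dx": "250.00"}),
    ("hospital_a", {"pid": "A-002", "dx": "401.9"}),
    ("hospital_b", {"patient": "B-917", "icd10": "E11.9"}),
]
harmonized = [harmonize(rec, site) for site, rec in raw]

# One query now works across both sites.
diabetes = [r["patient_id"] for r in harmonized if r["condition_code"] == "E11.9"]
print(diabetes)
```

Once every source is expressed in the same vocabulary and field names, the same analysis script can run unchanged against each dataset.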

Start Focusing on Discovery, Not Data Risks

The era of “hiding” data to keep it safe is officially over. In a world facing global health crises and the need for personalized medicine, we can no longer afford to let valuable data sit idle in silos. To solve the world’s biggest health challenges, we must share data—but we must do it responsibly, ethically, and securely. By adopting a secure analytics environment, you can stop worrying about the catastrophic risks of a data breach and start focusing on the immense rewards of scientific discovery.

At Lifebit, we are proud to power this transition with our federated AI platform. We provide the infrastructure that allows biopharma, governments, and researchers to collaborate securely across the globe, ensuring that the next medical breakthrough is only a query away. From real-time evidence layers to trusted data lakehouses, we are building the foundation for a more collaborative and secure future in science. The transition to secure analytics is not just a technical requirement; it is a moral imperative to ensure that every patient’s data can contribute to the next generation of life-saving treatments.

Ready to open up your data’s potential?

Learn more about Lifebit’s federated platform and how we can secure your research today.