Singularity and Beyond: Navigating the World of Decentralized AI

Stop Losing Data Control: Why a Decentralized AI Platform is Your Only Choice in 2026

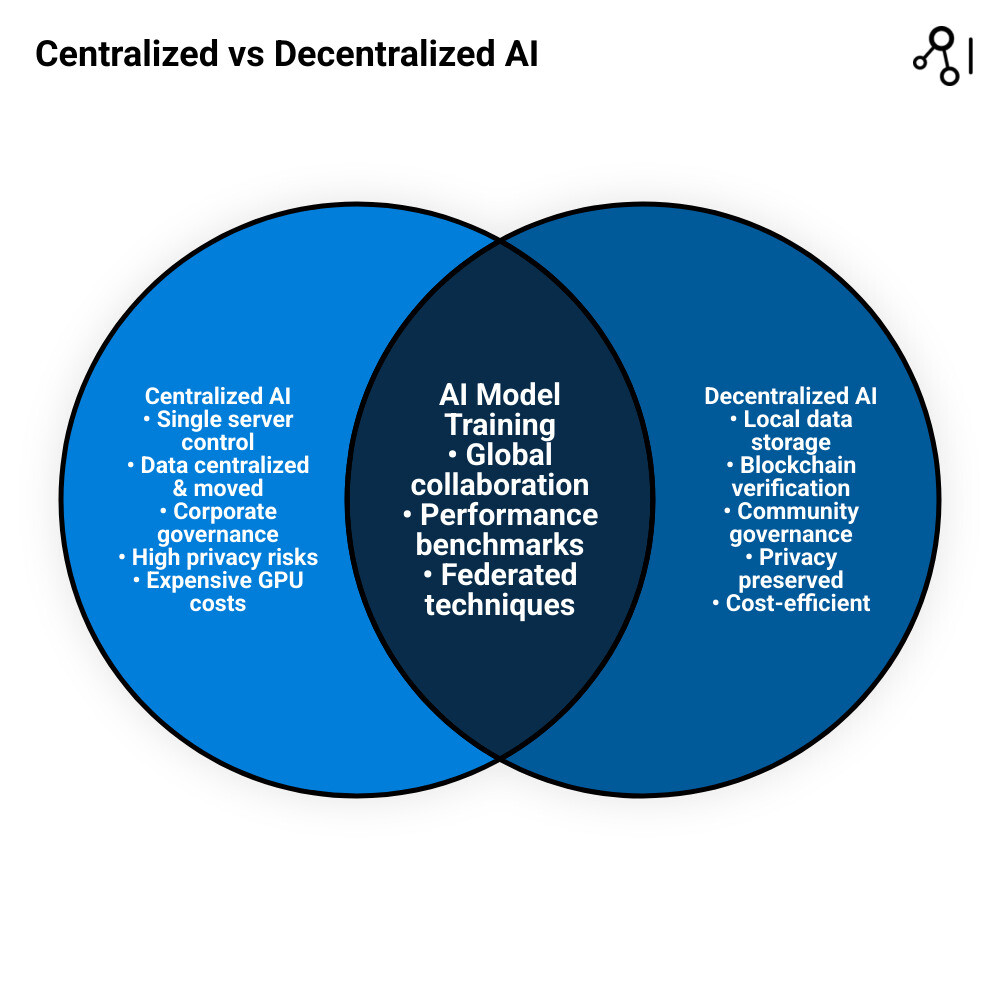

A decentralized AI platform distributes AI capabilities across a network of independent nodes rather than concentrating them in centralized servers controlled by single entities. As we approach 2026, the urgency for this shift has reached a breaking point. The traditional model of AI development, in which a handful of “Big Tech” firms own the infrastructure, the algorithms, and the data, is creating a digital bottleneck that stifles innovation and compromises individual sovereignty.

In the current landscape, data is often treated as the “new oil,” but the refineries are owned by a select few. This leads to a “Data Control Crisis” where organizations must choose between utilizing powerful AI tools or maintaining the privacy of their proprietary information. A decentralized AI platform eliminates this forced compromise by fundamentally re-architecting how intelligence is generated and shared. Here’s what sets these platforms apart:

Key Features of Decentralized AI Platforms:

- Data stays local — AI models learn via federated techniques without moving sensitive data, ensuring that raw information never leaves its original jurisdiction or secure environment.

- Blockchain verification — Provides a transparent, immutable ledger for trustless validation of AI contributions, ensuring that every training step and model update is verifiable.

- Privacy-preserving computation — Employs advanced cryptographic methods like zero-knowledge proofs (ZKPs), Secure Multi-Party Computation (SMPC), and encrypted processing to protect intellectual property.

- Tokenized incentives — Creates a circular economy that rewards contributors for providing high-quality data, idle compute power, or algorithmic improvements, democratizing the wealth generated by AI.

- Open governance — Community-driven decisions via DAOs (Decentralized Autonomous Organizations) replace opaque corporate boardrooms, ensuring the AI’s evolution aligns with broader human interests.

The shift away from centralized AI is accelerating due to a perfect storm of privacy concerns and economic necessity. ChatGPT alone produces 100 billion words daily, while major tech platforms track nearly half of all users’ web browsing. Meanwhile, 49.6% of web traffic now comes from bots, and over 80% of people believe external forces manipulate the news they see. This concentration of AI power in a few hands creates data monopolies, amplifies algorithmic bias, and locks smaller organizations out of the innovation cycle. By 2026, the cost of data breaches and regulatory pressure from laws like the EU AI Act are likely to make centralized storage of sensitive training data a massive liability.

Decentralized AI platforms solve these problems by keeping data where it belongs, in secure, local environments, while still enabling global collaboration. The market is responding: decentralized AI is predicted to grow at a 40% CAGR between 2023 and 2030, and reported systems have already achieved 95% accuracy, 94% precision, and 96% recall in real-world applications. This isn’t just a theoretical improvement; it is a functional necessity for industries that handle high-stakes information.

For healthcare and life sciences organizations, this matters enormously. Clinical data, genomics, and patient records can’t be centralized without massive privacy and regulatory risks. Yet AI models need diverse datasets to deliver accurate, unbiased results. Decentralized platforms bridge this gap through federated learning, blockchain-backed governance, and secure computation across distributed networks.

I’m Maria Chatzou Dunford, CEO and Co-founder of Lifebit, where I’ve spent over 15 years building federated platforms that enable secure biomedical data analysis without moving data. Throughout this guide, I’ll show you how decentralized AI platforms are reshaping healthcare, finance, and research, and how your organization can adopt them to unlock innovation while protecting privacy.

How a Decentralized AI Platform Cuts GPU Costs by 80% and Ends Data Monopolies

Let’s be honest: the current AI landscape is a bit of a “walled garden” situation, and the walls are getting higher. When we rely on a centralized AI model, we aren’t just using a service; we are handing over the keys to our most valuable asset—our data. This creates a dependency loop where the more we use these services, the more powerful the provider becomes, and the less control we retain over our own digital destiny.

Centralized AI systems create massive data monopolies. Because these models require data to be moved into a central “brain” for training, sensitive information becomes siloed within corporate servers. This creates a single point of failure and a massive target for breaches. Furthermore, “one-size-fits-all” models often struggle with diverse real-world scenarios, leading to outcomes that can be both inaccurate and unfair. When a model is trained on a narrow, centralized dataset, it inevitably inherits the biases of that data, which can have devastating consequences in fields like medical diagnostics or credit scoring.

Then there is the cost. Building and running massive AI models is eye-wateringly expensive. The “GPU squeeze” is a real phenomenon where the demand for high-end compute power far outstrips the supply, allowing cloud giants to charge a premium. However, a decentralized AI platform can change the math. By leveraging a global network of contributors, ranging from data centers with idle capacity to individual researchers with high-end workstations, these platforms create a competitive marketplace for compute.

Decentralized infrastructure projects have demonstrated that they can cut GPU costs by up to 80% by eliminating the “middleman tax” charged by cloud giants. Instead of paying for the overhead of a massive corporation, users pay directly for the compute they use, often in the form of utility tokens. This democratizes access to high-performance computing, allowing startups and academic institutions to compete with tech titans on a level playing field.

The performance results speak for themselves. Scientific research on AI performance shows decentralized AI achieving 95% accuracy, 94% precision, and 96% recall. These numbers suggest that distributing intelligence doesn’t have to mean sacrificing quality. In fact, distributed architectures can often yield more robust models because they are trained on a wider, more diverse range of real-world data. This diversity helps guard against overfitting and helps the model perform reliably across different demographics and environments.
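If those three metrics feel abstract, here is a minimal sketch of how they are computed from a confusion matrix. The counts below are hypothetical, chosen only so the arithmetic lands near the figures quoted above; they are not taken from the cited research.

```python
def classification_metrics(tp: int, fp: int, fn: int, tn: int):
    """Return (accuracy, precision, recall) from confusion-matrix counts."""
    accuracy = (tp + tn) / (tp + fp + fn + tn)  # share of all predictions that were right
    precision = tp / (tp + fp)                  # share of positive predictions that were right
    recall = tp / (tp + fn)                     # share of actual positives that were found
    return accuracy, precision, recall


# Hypothetical counts for illustration only (not real study data).
accuracy, precision, recall = classification_metrics(tp=96, fp=6, fn=4, tn=94)
print(f"accuracy={accuracy:.2f} precision={precision:.2f} recall={recall:.2f}")
# accuracy=0.95 precision=0.94 recall=0.96
```

Reading the three together matters: a model can post high accuracy while still missing many true cases, which is why recall is reported alongside it.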

| Feature | Centralized AI | Decentralized AI Platform |
|---|---|---|
| Data Location | Centralized Cloud | Stays Local (Sovereign) |
| GPU Costs | High (Corporate Pricing) | Up to 80% Lower |
| Transparency | Black Box APIs | Verifiable & Open |
| Privacy | High Risk (Data Transfer) | High Security (Local Training) |
| Governance | Corporate-Led | Community/DAO-Led |
| Resilience | Single Point of Failure | Distributed & Fault-Tolerant |
| Incentives | Profit-Driven | Contribution-Driven |

The Tech Stack: How to Build a Decentralized AI Platform Without Moving Sensitive Data

How do we actually build a brain that lives everywhere at once? It isn’t magic; it’s a clever combination of several cutting-edge technologies. A decentralized AI platform relies on a “tech stack” that prioritizes security, scalability, and collaboration over central control. This stack is designed to solve the “Privacy-Utility Trade-off,” allowing us to extract value from data without ever exposing the data itself.

At its heart, this ecosystem uses distributed computing to spread the heavy lifting across thousands of nodes. Instead of one giant supercomputer, we use a global network. To keep this network honest and efficient, we use three main pillars: Federated Learning, Blockchain, and Privacy-Preserving Computation (including Zero-Knowledge Proofs).

How Federated Learning Secures a Decentralized AI Platform

If you’ve ever used a smartphone that predicts your next word without sending your private texts to a server, you’ve used federated learning. In a decentralized AI platform, federated learning is the secret sauce for data sovereignty. It flips the traditional data science workflow on its head.

Instead of bringing the data to the model, we bring the model to the data. Each node (like a hospital, a research lab, or even an edge device) trains the model locally on its own private dataset. Then, only the “insights”—mathematical updates or gradients that contain no raw data—are shared back to the global network. These updates are aggregated to improve the global model, which is then sent back to the nodes for the next round of training. This is a game-changer for federated learning in healthcare, where patient privacy is non-negotiable. It allows us to gain global insights while ensuring that sensitive records never leave their secure home. You can learn more about how this works in our federated data platform ultimate guide.
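To make the train-locally, share-updates, aggregate loop concrete, here is a minimal sketch of one training round using weighted averaging (the approach commonly called federated averaging). The tiny linear model and the function names `local_update`, `federated_average`, and `run_round` are assumptions for illustration, not the API of any particular platform.

```python
from typing import List, Tuple

Weights = List[float]  # [slope, intercept] of a toy model y = w * x + b


def local_update(weights: Weights, data: List[Tuple[float, float]],
                 lr: float = 0.1) -> Weights:
    """Train on one node's private data; the raw (x, y) pairs never leave this function."""
    w, b = weights
    for x, y in data:
        err = (w * x + b) - y
        w -= lr * err * x
        b -= lr * err
    return [w, b]


def federated_average(updates: List[Weights], sizes: List[int]) -> Weights:
    """Combine node updates, weighting each node by how many samples it trained on."""
    total = sum(sizes)
    return [sum(u[i] * n for u, n in zip(updates, sizes)) / total
            for i in range(len(updates[0]))]


def run_round(global_weights: Weights,
              node_datasets: List[List[Tuple[float, float]]]) -> Weights:
    """One round: send the model out, train locally, aggregate only the weights."""
    updates = [local_update(list(global_weights), d) for d in node_datasets]
    sizes = [len(d) for d in node_datasets]
    return federated_average(updates, sizes)
```

Notice that the aggregator only ever sees the weight vectors and the sample counts. A production system would add secure aggregation and many rounds, but the shape of the loop is the same.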

Blockchain and Tokenomics in a Decentralized AI Platform

If federated learning is the “how,” blockchain is the “who and why.” A decentralized system needs a way to verify that contributors are actually doing the work they claim to do and that the data they provide is high-quality. Blockchain provides a trustless verification layer that replaces the need for a central authority.

Professor Ramesh Raskar highlighted in his talk at EmTech Digital in May 2024 that unlocking AI’s true potential requires collaborative, verifiable frameworks. Blockchain makes this possible through:

- Smart Contracts: These are self-executing contracts with the terms of the agreement directly written into code. They automatically handle payments, permissions, and model versioning without needing a human administrator.

- Incentivization (Tokenomics): This is the economic engine of the platform. Tokenomics rewards people for providing high-quality data or compute power. By creating a financial incentive for honesty and contribution, the platform becomes a self-sustaining ecosystem.

- DAO Governance: Decentralized Autonomous Organizations allow the community to vote on the roadmap, ethical guidelines, and protocol updates. This ensures the platform serves its users, not just shareholders.
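As a rough illustration of the governance piece, here is a sketch of token-weighted voting with a quorum, written in plain Python rather than as an on-chain smart contract. The function name `tally_proposal`, the 50% quorum, and the rule that ties are rejected are all assumptions; real DAOs choose their own rules.

```python
from typing import Dict


def tally_proposal(votes: Dict[str, bool], balances: Dict[str, int],
                   quorum: float = 0.5) -> str:
    """Tally a yes/no proposal where each voter's weight is their token balance."""
    total_supply = sum(balances.values())
    weight_for = sum(balances.get(voter, 0)
                     for voter, choice in votes.items() if choice)
    weight_against = sum(balances.get(voter, 0)
                         for voter, choice in votes.items() if not choice)
    turnout = (weight_for + weight_against) / total_supply
    if turnout < quorum:
        return "no_quorum"  # too few tokens voted for the result to count
    return "passed" if weight_for > weight_against else "rejected"


balances = {"hospital_a": 50, "lab_b": 30, "researcher_c": 20}
print(tally_proposal({"hospital_a": True, "lab_b": False}, balances))  # passed
```

On a real platform the same logic would live in a smart contract so that no single party can alter the count after the fact.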

Zero-Knowledge Proofs and Secure Computation

To further enhance security, a decentralized AI platform often incorporates Zero-Knowledge Proofs (ZKPs). ZKPs allow one party to prove to another that a statement is true (e.g., “I have trained this model on valid data”) without revealing the underlying data itself. This is crucial for verifying that a node hasn’t “cheated” during the federated learning process. Additionally, technologies like Trusted Execution Environments (TEEs), which are secure areas of a processor, ensure that the AI model code cannot be tampered with while it is running on a remote node. By using federated learning applications, we can create an incentivized marketplace for intelligence where everyone wins.
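Proving a statement about model training takes heavy machinery, but the core idea of a ZKP fits in a few lines. The sketch below is a toy Schnorr identification protocol: the prover convinces the verifier that it knows a secret `x` behind a public value `y` without ever sending `x`. It proves a much simpler statement than “I trained on valid data,” and the tiny parameters `P`, `Q`, and `G` are chosen for readability only; they offer no real security.

```python
import secrets

P = 467  # toy safe prime, P = 2 * Q + 1 (far too small for real use)
Q = 233  # prime order of the subgroup generated by G
G = 4    # generator of the order-Q subgroup modulo P


def keygen():
    """Prover picks a secret x and publishes y = G^x mod P."""
    x = secrets.randbelow(Q - 1) + 1
    return x, pow(G, x, P)


def commit():
    """Prover picks a one-time nonce r and sends the commitment t = G^r mod P."""
    r = secrets.randbelow(Q)
    return r, pow(G, r, P)


def respond(r: int, x: int, c: int) -> int:
    """Prover answers the verifier's random challenge c."""
    return (r + c * x) % Q


def verify(y: int, t: int, c: int, s: int) -> bool:
    """Verifier checks G^s == t * y^c (mod P) using public values only."""
    return pow(G, s, P) == (t * pow(y, c, P)) % P


x, y = keygen()
r, t = commit()
c = secrets.randbelow(Q)  # verifier's challenge
s = respond(r, x, c)
print(verify(y, t, c, s))  # True
```

The verifier learns that the prover knows `x`, and nothing else. Production systems use non-interactive proof systems built on the same principle to cover far richer statements.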

From Rare Disease to Fraud: 3 Ways a Decentralized AI Platform Solves Real-World Silos

We aren’t just talking about theoretical whitepapers. Decentralized AI platform technology is already solving some of the world’s hardest problems by breaking down the silos that have historically hindered progress. When data can be analyzed without being moved, the possibilities for collaboration expand exponentially.

Healthcare and Life Sciences: The Quest for Precision Medicine

This is where we see the most profound impact. Traditionally, clinical research is slowed down by “data silos.” A hospital in New York might have the key to a rare disease, but a researcher in London can’t access it due to strict privacy laws like GDPR or HIPAA. By using a decentralized AI platform, these institutions can collaborate securely. Federated learning meets precision medicine by allowing AI to “visit” data at multiple sites to find patterns in genomics and drug discovery that would be impossible to see in a single dataset. For example, identifying a specific genetic marker for a rare cancer requires thousands of samples; a decentralized platform allows 50 hospitals to contribute their data’s “intelligence” without ever sharing a single patient’s name or record.

Finance and Fraud Detection: Collective Defense

In finance, speed and privacy are everything. Banks are often hesitant to share data about fraudulent activities because it might reveal proprietary trading strategies or customer information. However, fraudsters often target multiple banks simultaneously. Decentralized networks allow banks to share “threat intelligence” and train fraud detection models collectively. By following a federated analytics ultimate guide, financial institutions can build collective defense systems that are much stronger than any one bank could build alone. If Bank A detects a new type of phishing attack, the “insight” can be instantly shared across the decentralized network, protecting Bank B and Bank C before they are even targeted.

Supply Chain Optimization: Transparency Without Exposure

Supply chains are notoriously complex and opaque, involving dozens of independent companies across multiple borders. Decentralized AI enables autonomous agents to track goods, predict delays, and optimize routes without requiring a central authority to manage every interaction. Each participant in the supply chain can run a node that contributes data about shipping times and inventory levels. The AI platform then optimizes the entire route for everyone involved, while keeping each company’s specific supplier lists and pricing structures private. This reduces friction, lowers costs, and makes global trade more resilient to shocks like port closures or natural disasters.

Scale Your Decentralized AI Platform in 2026 Without Breaking Compliance

While the future is bright, we have to be realistic about the hurdles. Building and scaling a decentralized AI platform isn’t as simple as flipping a switch. It requires a strategic approach to both technical architecture and regulatory alignment. As we move into 2026, the organizations that succeed will be those that treat decentralization not just as a tech stack, but as a governance philosophy.

The Scalability and Latency Challenge

Training a massive LLM (Large Language Model) across thousands of home computers or distributed servers is inherently more complex than doing it in a dedicated, high-speed data center. The primary bottleneck is communication latency—the time it takes for nodes to send their updates back to the aggregator. Advanced architectures are tackling this by using “model sharding” (breaking the model into smaller pieces) and intelligent task schedulers that prioritize nodes with higher bandwidth. We are also seeing the rise of autonomous AI agents that can coordinate themselves to solve these efficiency problems, dynamically rerouting tasks if a node goes offline or becomes slow.
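Here is a minimal sketch of the scheduling idea: hand model shards to online nodes, fastest links first, and simply reassign when a node drops out. The `Node` class, the `assign_shards` function, and the round-robin policy are illustrative assumptions; real schedulers also weigh latency, memory, and cost.

```python
from dataclasses import dataclass
from typing import Dict, List


@dataclass
class Node:
    name: str
    bandwidth_mbps: float
    online: bool = True


def assign_shards(shards: List[str], nodes: List[Node]) -> Dict[str, str]:
    """Map each shard to an online node, cycling through nodes in bandwidth order."""
    available = sorted((n for n in nodes if n.online),
                       key=lambda n: n.bandwidth_mbps, reverse=True)
    if not available:
        raise RuntimeError("no online nodes to schedule on")
    return {shard: available[i % len(available)].name
            for i, shard in enumerate(shards)}


nodes = [Node("lab", 100.0), Node("datacenter", 500.0), Node("workstation", 50.0)]
shards = ["shard_0", "shard_1", "shard_2", "shard_3"]
print(assign_shards(shards, nodes))

# A node drops out: mark it offline and rerun the scheduler to reroute its shards.
nodes[1].online = False
print(assign_shards(shards, nodes))
```

Rerunning the assignment is the simplest form of rerouting. It moves every shard, so a real system would try to relocate only the work that was on the failed node.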

The Regulatory Shield: Compliance by Design

Governments are still catching up with the rapid pace of AI development. However, decentralization actually helps with compliance rather than hindering it. Because data stays local, it is often easier to meet strict regional requirements like the EU’s GDPR, California’s CCPA, or the healthcare-specific HIPAA. In a centralized model, moving data across borders is a legal minefield. In a decentralized model, the data never moves, so the legal “nexus” remains simple. Implementing federated governance ensures that every action on the platform is audited and compliant by design. This “Compliance by Design” approach reduces the risk of massive fines and builds trust with users who are increasingly wary of how their data is handled.
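One way to picture “audited by design” is a tamper-evident log in which every entry commits to the hash of the entry before it, the same idea a blockchain ledger builds on. The sketch below uses only Python’s standard `hashlib` and `json` modules; the entry format and the function names `append_entry` and `verify_log` are assumptions for illustration.

```python
import hashlib
import json
from typing import Dict, List

GENESIS = "0" * 64  # placeholder hash that precedes the first entry


def _entry_hash(action: str, prev_hash: str) -> str:
    payload = json.dumps({"action": action, "prev": prev_hash}, sort_keys=True)
    return hashlib.sha256(payload.encode("utf-8")).hexdigest()


def append_entry(log: List[Dict[str, str]], action: str) -> None:
    """Append an action, chaining it to the hash of the previous entry."""
    prev_hash = log[-1]["hash"] if log else GENESIS
    log.append({"action": action, "prev": prev_hash,
                "hash": _entry_hash(action, prev_hash)})


def verify_log(log: List[Dict[str, str]]) -> bool:
    """Recompute every hash; any edited or reordered entry breaks the chain."""
    prev_hash = GENESIS
    for entry in log:
        if entry["prev"] != prev_hash:
            return False
        if entry["hash"] != _entry_hash(entry["action"], prev_hash):
            return False
        prev_hash = entry["hash"]
    return True


log: List[Dict[str, str]] = []
append_entry(log, "site_a: model v3 trained locally")
append_entry(log, "site_b: model v3 trained locally")
print(verify_log(log))  # True
log[0]["action"] = "site_a: raw data exported"
print(verify_log(log))  # False
```

An auditor who holds only the latest hash can detect any rewrite of earlier history, which is what makes the record useful as compliance evidence.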

Overcoming the “Incentive Alignment” Problem

For a decentralized platform to scale, the incentives must be perfectly aligned. If the rewards for contributing compute are too low, the network will be slow. If the rewards for data are too high, people might try to upload “junk” data to game the system. Successful platforms use sophisticated reputation scores and “Proof of Contribution” algorithms to ensure that only high-quality work is rewarded. The shift from centralized to decentralized data governance is already underway, and those who move early to establish these incentive structures will have the most secure and cost-effective AI strategies. By 2026, we expect to see standardized protocols for decentralized AI that allow different platforms to interoperate, further accelerating the network effect.
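A simple way to see how reputation blunts “junk” uploads is to weight each contribution by the contributor’s reputation before splitting the reward pool. This is a sketch under assumed rules (a hypothetical `distribute_rewards` function and a 0.2 reputation cutoff), not a description of any specific “Proof of Contribution” algorithm.

```python
from typing import Dict


def distribute_rewards(pool: float, contributions: Dict[str, float],
                       reputation: Dict[str, float],
                       min_reputation: float = 0.2) -> Dict[str, float]:
    """Split the pool in proportion to contribution * reputation.

    Contributors below min_reputation (or with no reputation yet) earn nothing.
    """
    scores = {}
    for node, amount in contributions.items():
        rep = reputation.get(node, 0.0)
        if rep >= min_reputation:
            scores[node] = amount * rep
    total = sum(scores.values())
    if total == 0:
        return {node: 0.0 for node in contributions}
    return {node: pool * scores.get(node, 0.0) / total for node in contributions}


rewards = distribute_rewards(
    pool=100.0,
    contributions={"clinic": 10, "lab": 10, "spammer": 100},
    reputation={"clinic": 1.0, "lab": 0.5, "spammer": 0.1},
)
print(rewards)  # the spammer's large but low-reputation upload earns 0.0
```

Volume alone earns nothing here: the spammer contributed ten times more than anyone else and still walks away empty-handed.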

Stop Guessing: Your Top Decentralized AI Platform Questions Answered

How does decentralized AI differ from federated learning?

Think of federated learning as the technique and a decentralized AI platform as the entire ecosystem. Federated learning is the specific mathematical way we train models on local data without moving it. A decentralized AI platform includes that technique, but also adds the blockchain for rewards, the governance for decision-making, the distributed hardware to run the models, and the user interface for developers to build applications. Federated learning is the engine; the decentralized platform is the entire car and the highway system it runs on.

Is blockchain necessary for all decentralized AI applications?

Not strictly. You can perform federated learning between three known hospitals using a private network without a blockchain. However, if you want a system that is truly “permissionless”—where anyone in the world can contribute compute or data and be paid fairly without a central boss—then blockchain is essential. It provides the trustless layer needed for strangers to collaborate securely and ensures that the rules of the platform cannot be changed unilaterally by one party.

How do you prevent “Model Poisoning” in a decentralized network?

Model poisoning occurs when a malicious node submits fake training updates to try and bias the AI. Decentralized platforms combat this using “Robust Aggregation” techniques that can identify and filter out statistical outliers. Furthermore, blockchain-based reputation systems penalize nodes that consistently provide low-quality or malicious updates, eventually slashing their stake or banning them from the network. This creates a “self-healing” system that becomes more resilient as it grows.
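One common robust aggregation rule is the coordinate-wise median: instead of averaging the updates, take the median of each parameter across nodes so that a single extreme value cannot drag the result. The sketch below compares it with a plain mean; the function names and the sample updates are illustrative.

```python
from statistics import mean, median
from typing import List


def mean_aggregate(updates: List[List[float]]) -> List[float]:
    """Plain averaging: a single malicious update can shift every parameter."""
    return [mean(column) for column in zip(*updates)]


def median_aggregate(updates: List[List[float]]) -> List[float]:
    """Coordinate-wise median: outliers are ignored while honest nodes are the majority."""
    return [median(column) for column in zip(*updates)]


updates = [
    [0.9, 2.0],
    [1.0, 2.1],
    [1.1, 1.9],
    [1.0, 2.0],
    [100.0, -100.0],  # poisoned update from a malicious node
]
print(median_aggregate(updates))  # [1.0, 2.0]
print(mean_aggregate(updates))    # dragged far from the honest values
```

The median holds up only while honest nodes form a majority, which is why platforms pair it with the reputation and stake-slashing measures described above.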

What are the first steps for an organization to adopt a decentralized AI approach?

We always recommend starting with your most sensitive data. Identify a use case where privacy concerns or data residency laws are currently blocking your progress (like a multi-hospital study or a cross-border financial analysis). Launch a pilot project using federated techniques to prove the value of the “data stays local” model. Look for platforms that offer a Trusted Research Environment to ensure your data remains secure throughout the process. As the pilot succeeds, you can gradually expand to more public decentralized networks to take advantage of lower compute costs.

Own Your Intelligence: Why the Future of Research is a Decentralized AI Platform

The era of AI being locked behind corporate walls is coming to an end. We are moving toward a more equitable ecosystem where powerful AI capabilities are a shared public good rather than a proprietary secret. Whether it’s cutting GPU costs by up to 80%, achieving 95% accuracy in real-world applications, or ensuring that sensitive genomic data remains private, the decentralized AI platform is proving its worth every day.

As we look toward 2026 and beyond, the democratization of AI will be the defining trend of the decade. Organizations that continue to rely solely on centralized providers will find themselves vulnerable to price hikes, data breaches, and algorithmic bias. Conversely, those that embrace decentralized architectures will gain a significant competitive advantage through lower costs, better data diversity, and superior privacy protections. This isn’t just about technology; it’s about who owns the future of intelligence.

At Lifebit, we are proud to be at the forefront of this revolution. Our platform, which includes the Trusted Research Environment (TRE), Trusted Data Lakehouse (TDL), and R.E.A.L. (Real-time Evidence & Analytics Layer), is designed to help you navigate this new world. We enable secure, real-time access to global biomedical data, allowing you to build the next generation of AI without ever compromising on privacy or control. We believe that the most powerful insights come from collaboration, not consolidation.

The “Singularity” doesn’t have to be a central, scary supercomputer owned by a single corporation. It can be a global, collaborative network of intelligence that belongs to all of us, a “Collective Intelligence” that respects individual privacy while solving humanity’s greatest challenges. Ready to join the mission? Explore our decentralized AI platform guide to see how we can help your organization lead the way into 2026 and beyond. The future of AI is distributed, and it starts with your data staying exactly where it is.