Stop Losing Data with These Biopharma Management Tools

Why Biopharma Companies Are Losing Months of R&D to Bad Data Management

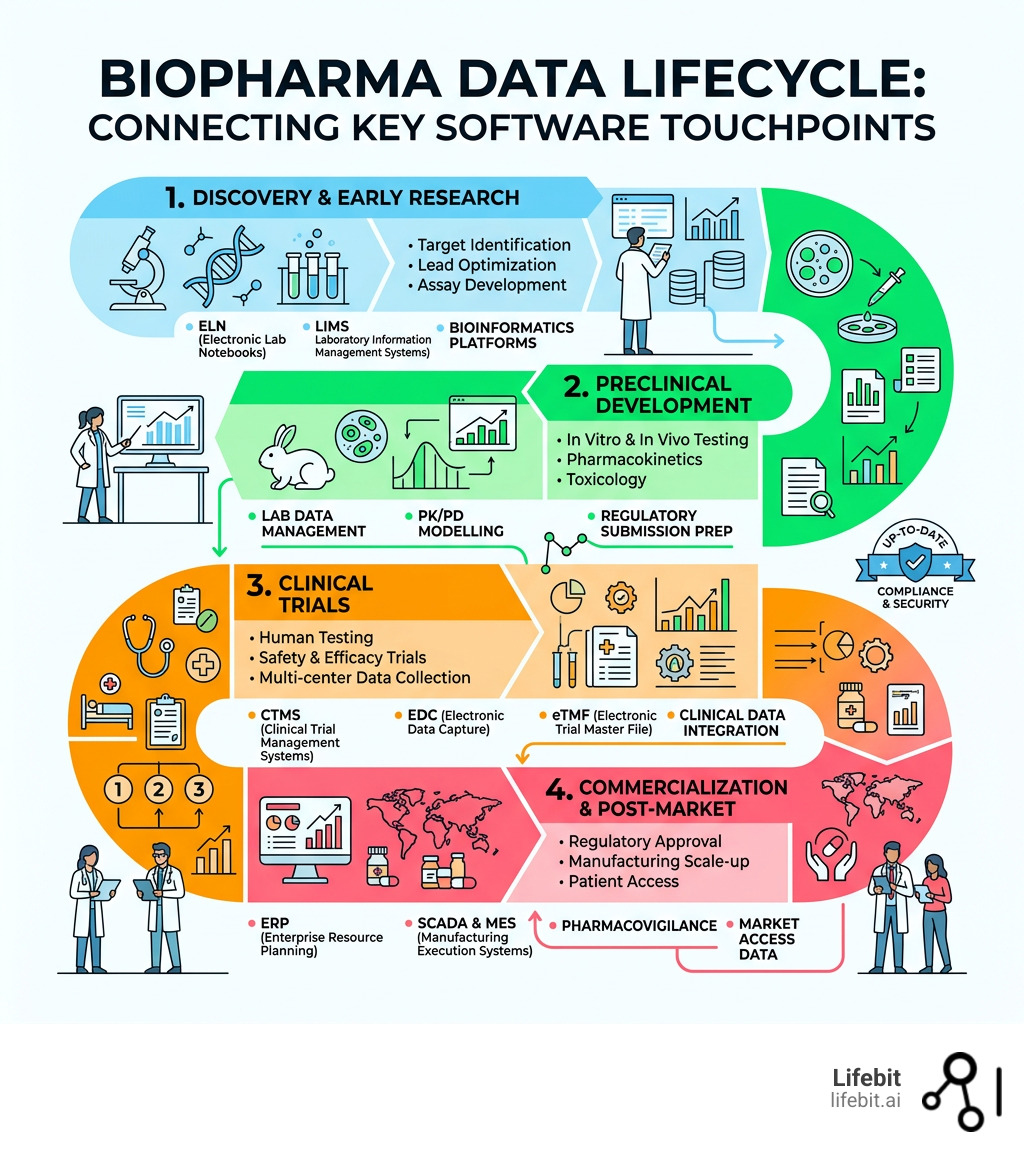

Biopharma data management software is a digital platform that centralizes, secures, and automates data workflows across R&D, clinical trials, and manufacturing – so your teams spend less time chasing data and more time doing science.

Today, the data generated by a single clinical trial or genomic study can reach petabytes. Without a sophisticated management layer, this information becomes “dark data”: unstructured, unindexed, and effectively invisible to the researchers who need it most. The cost of this invisibility is staggering. Industry estimates suggest that up to 80% of a data scientist’s time goes to cleaning and preparing data rather than analyzing it. For a multi-billion-dollar biopharma enterprise, that is a massive drain on skilled staff and a significant delay in bringing life-saving therapies to market.

Here are the top capabilities to look for in biopharma data management platforms in 2025-2026:

| Capability | Why It Matters |
|---|---|
| Federated data access | Analyze data where it lives to reduce risk and movement of sensitive datasets, essential for global collaboration. |
| Trusted Research Environment (TRE) | Run compliant analytics with controlled access, auditability, and governance in a secure “walled garden.” |
| Multi-omic harmonization and integration | Connect genomic, proteomic, and clinical data for end-to-end insight into disease mechanisms. |
| Real-time safety and surveillance analytics | Support rapid signal detection and monitoring across programs to ensure patient safety during trials. |
| Compliance tooling and audit trails | Reduce validation burden and improve inspection readiness for FDA and EMA submissions. |
| APIs and workflow automation | Integrate instruments, pipelines, and partners without manual handoffs, creating a seamless data fabric. |

Biopharma companies generate enormous amounts of data – from genomic sequences and clinical trial records to manufacturing quality logs and regulatory submissions. And most of it ends up locked in silos. These silos are often the result of rapid growth, mergers and acquisitions, or the adoption of specialized tools that don’t communicate with one another.

The result? Researchers spend weeks hunting down data before analysis can even begin. Manual errors creep in during the transcription of data from one system to another. Compliance audits turn into fire drills as teams scramble to reconstruct the provenance of a specific dataset. And drug development timelines stretch out – sometimes by months. In an industry where a single day of patent life can be worth millions, these delays are unacceptable.

This isn’t a minor inefficiency. It’s a structural problem that costs real money and real time. AI-driven approaches have been reported to reduce drug discovery timelines by 30%, but only when the underlying data is clean, connected, and accessible. The “garbage in, garbage out” principle applies more strictly to AI than perhaps any other technology; without high-quality data management, AI is just an expensive toy.

The right biopharma data management software fixes that foundation by creating a unified data architecture that supports the entire drug lifecycle.

I’m Dr. Maria Chatzou Dunford, CEO and Co-founder of Lifebit, with over 15 years of experience in computational biology, federated AI, and biomedical data infrastructure – and I’ve spent my career building the tools that make biopharma data management software work at scale across regulated, global environments. Below, I’ll walk you through what separates good tools from great ones, and how to match the right solution to your specific research and compliance needs.


Why Modern Labs Need Biopharma Data Management Software

In the high-stakes world of drug development, data is your most valuable asset. Yet, many organizations still struggle with data fragmentation, where critical insights are buried in disparate spreadsheets, legacy databases, or even paper notebooks. This fragmentation doesn’t just slow you down; it creates a massive “institutional memory” gap where past experiments are forgotten or needlessly repeated. When a lead scientist leaves a company, their knowledge often leaves with them if it isn’t captured in a structured, searchable format.

Modern labs are moving toward the 5Rs framework: identifying the right target, right tissue, right safety, right patient, and right commercial potential. Achieving this requires a unified view of data that spans the entire R&D continuum. For example, “Right Target” identification requires the integration of massive genomic datasets with real-world evidence (RWE) to validate disease associations. “Right Patient” selection in clinical trials depends on the ability to query diverse patient populations for specific biomarkers.

By implementing robust biopharma data management software, companies have seen transformative results, including 75% faster data retrieval and a 40% reduction in manual data errors. These improvements aren’t just about speed; they are about the quality of the science. When data is easily accessible, researchers can perform meta-analyses that were previously impossible, uncovering subtle patterns that lead to breakthrough discoveries.

Centralizing these assets ensures that data follows the FAIR guiding principles—making it Findable, Accessible, Interoperable, and Reusable.

Deep Dive into FAIR Principles in Biopharma

- Findable: Data and metadata should be easy to find for both humans and computers. This requires unique, persistent identifiers and rich metadata descriptions.

- Accessible: Once the user finds the required data, they need to know how it can be accessed, possibly including authentication and authorization.

- Interoperable: The data needs to integrate with other data. It should use a formal, accessible, shared, and broadly applicable language for knowledge representation.

- Reusable: The ultimate goal of FAIR is to optimize the reuse of data. To achieve this, metadata and data should be well-described so that they can be replicated and/or combined in different settings.
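
To make the four principles concrete, here is a minimal Python sketch of a dataset metadata record and a check that reports which FAIR principles the record does not yet support. The `DatasetRecord` fields, the `fair_gaps` function, and the pass/fail rules are assumptions chosen for illustration; this is not a formal FAIR maturity assessment.

```python
from dataclasses import dataclass, field


@dataclass
class DatasetRecord:
    """Hypothetical metadata record for one dataset (field names are illustrative)."""
    identifier: str                                        # persistent, resolvable ID (Findable)
    title: str
    keywords: list[str] = field(default_factory=list)      # descriptive metadata (Findable)
    access_url: str = ""                                   # where to retrieve the data (Accessible)
    access_protocol: str = ""                              # e.g. "https + OAuth" (Accessible)
    vocabularies: list[str] = field(default_factory=list)  # shared ontologies used (Interoperable)
    license: str = ""                                      # terms of reuse (Reusable)
    provenance: str = ""                                   # how the data was produced (Reusable)


def fair_gaps(record: DatasetRecord) -> list[str]:
    """Return the FAIR principles this record does not yet support, under these simple rules."""
    gaps = []
    if not (record.identifier and record.keywords):
        gaps.append("Findable")
    if not (record.access_url and record.access_protocol):
        gaps.append("Accessible")
    if not record.vocabularies:
        gaps.append("Interoperable")
    if not (record.license and record.provenance):
        gaps.append("Reusable")
    return gaps


record = DatasetRecord(identifier="hypothetical:dataset-001", title="RNA-seq, cohort A",
                       keywords=["RNA-seq", "oncology"])
print(fair_gaps(record))  # ['Accessible', 'Interoperable', 'Reusable']
```

A check like this can run whenever a dataset is registered, so missing metadata is caught at entry rather than years later during reuse.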

Without these principles, your data is just noise. With them, it becomes a strategic engine for innovation. For a deeper dive into the technical landscape, read more about biopharma data software and how these tools are evolving.

Essential Capabilities of Biopharma Data Management Software

When we evaluate top-tier platforms, four pillars stand out as non-negotiable for modern biopharma R&D:

- Bioregistry: A central source of truth for all biological entities, from plasmids and cell lines to antibodies. It tracks lineage and relationships, so you always know the “who, what, and where” of your samples. This is critical for reproducibility; if you can’t trace the exact cell line used in an experiment, you can’t trust the results.

- Assay Data Management: This automates the capture of experimental results. Instead of manual entry, data flows directly from lab instruments (like plate readers or sequencers) into the system, ensuring consistency across teams and eliminating the risk of transcription errors.

- Electronic Lab Notebooks (ELN): Modern ELNs are no longer just digital paper; they are integrated hubs where scientists document experiments in real-time, linked directly to the bioregistry and assay data. This creates a complete narrative of the research process.

- Workflow Orchestration: This aligns cross-functional teams, managing hand-offs between discovery, process development, and quality control. It’s about moving projects forward without the “email tag” that usually stalls progress. Automated notifications and status tracking ensure that everyone knows exactly what needs to happen next.
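
At its core, the bioregistry’s lineage tracking means recording, for every registered entity, what it was derived from. The Python sketch below uses a hypothetical in-memory `Bioregistry` class in which each entity has at most one parent; production registries persist this in a database and usually allow several parents (for example, a parental cell line plus the plasmid used to engineer it).

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class Entity:
    """One registered biological entity (hypothetical fields)."""
    entity_id: str
    kind: str                 # e.g. "plasmid", "cell line", "antibody"
    parent_id: Optional[str]  # the entity it was derived from, if any


class Bioregistry:
    """In-memory registry that records a single parent per entity."""

    def __init__(self) -> None:
        self._entities: dict[str, Entity] = {}

    def register(self, entity_id: str, kind: str, parent_id: Optional[str] = None) -> Entity:
        if entity_id in self._entities:
            raise ValueError(f"{entity_id} is already registered")
        if parent_id is not None and parent_id not in self._entities:
            # Parents must exist first, which also rules out lineage cycles.
            raise KeyError(f"unknown parent {parent_id}")
        entity = Entity(entity_id, kind, parent_id)
        self._entities[entity_id] = entity
        return entity

    def lineage(self, entity_id: str) -> list[str]:
        """Follow parent links from an entity back to its origin."""
        chain = []
        current = self._entities[entity_id]  # KeyError if never registered
        while True:
            chain.append(current.entity_id)
            if current.parent_id is None:
                return chain
            current = self._entities[current.parent_id]


registry = Bioregistry()
registry.register("CL-001", "cell line")                      # parental line
registry.register("CL-010", "cell line", parent_id="CL-001")  # engineered stable line
registry.register("CL-011", "cell line", parent_id="CL-010")  # subclone
print(registry.lineage("CL-011"))  # ['CL-011', 'CL-010', 'CL-001']
```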

Overcoming the High Cost of Fragmented Research Data

The financial stakes are rising. The global commercial pharmaceutical analytics market is projected to hit USD 18.49 billion by 2031. To stay competitive, more than 85% of biopharma executives intend to increase their investment in data and AI tools through 2026. Healthcare’s investment in AI specifically is expected to triple to $1.4 billion in 2025.

The real challenge lies in multi-omic integration—combining genomic, proteomic, and clinical data to find new biomarkers. Fragmented data makes this nearly impossible because the formats and scales of these data types are so different. By using advanced biopharma data integration, labs can finally bridge the gap between “wet lab” results and “dry lab” computational analysis, turning raw clinico-omics data into actionable medicine. This integration allows for a holistic view of the patient, leading to more personalized and effective treatments.
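
In its simplest form, integration means aligning each data layer on a shared patient identifier and putting features on comparable scales. The Python sketch below uses plain dictionaries, an inner join on patient ID, and per-feature z-scores. The function names, the `layer:feature` naming scheme, and the sample values are hypothetical, and real pipelines must also handle batch effects, missing values, and identifier mapping across systems.

```python
from statistics import mean, pstdev


def zscore_features(layer: dict[str, dict[str, float]]) -> dict[str, dict[str, float]]:
    """Standardize each feature across patients so layers on different scales are comparable."""
    features = {name for values in layer.values() for name in values}
    scaled: dict[str, dict[str, float]] = {pid: {} for pid in layer}
    for name in features:
        present = [(pid, values[name]) for pid, values in layer.items() if name in values]
        vals = [v for _, v in present]
        mu, sd = mean(vals), pstdev(vals)
        for pid, v in present:
            scaled[pid][name] = 0.0 if sd == 0 else (v - mu) / sd
    return scaled


def integrate(layers: dict[str, dict[str, dict[str, float]]]) -> dict[str, dict[str, float]]:
    """Inner-join layers on patient ID, prefixing each feature with its layer name."""
    shared = set.intersection(*(set(layer) for layer in layers.values()))
    merged = {}
    for pid in sorted(shared):
        row = {}
        for layer_name, layer in layers.items():
            for feature, value in layer[pid].items():
                row[f"{layer_name}:{feature}"] = value
        merged[pid] = row
    return merged


genomic = {"P1": {"BRCA1_variant": 1.0}, "P2": {"BRCA1_variant": 0.0}, "P3": {"BRCA1_variant": 1.0}}
proteomic = {"P1": {"HER2": 850.0}, "P2": {"HER2": 120.0}}  # P3 has no proteomic sample
clinical = {"P1": {"age": 54.0}, "P2": {"age": 61.0}, "P3": {"age": 47.0}}

table = integrate({"gen": genomic, "prot": zscore_features(proteomic), "clin": clinical})
print(table["P1"])  # {'gen:BRCA1_variant': 1.0, 'prot:HER2': 1.0, 'clin:age': 54.0}
```

An inner join keeps only patients with every layer present (P3 is dropped here); whether to do that or to impute missing layers is a study-design decision, not a software default.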

Solving the Compliance Nightmare: FDA, HIPAA, and GxP

In biopharma, if it isn’t documented and compliant, it didn’t happen. Regulatory hurdles are often the biggest bottleneck in bringing a drug to market. Biopharma data management software must be built with a “compliance-first” mindset to handle the stringent requirements of the FDA, EMA, and other global bodies. The cost of non-compliance is not just financial; it can result in the rejection of a New Drug Application (NDA), costing years of work and billions in potential revenue.

Key standards like FDA 21 CFR Part 11 require strict controls over electronic records and signatures. This includes maintaining immutable audit trails that track every change made to a dataset—who made it, when, and why. These audit trails must be computer-generated and time-stamped, and they must be available for inspection at any time. Furthermore, protecting patient privacy under HIPAA (in the US) and GDPR (in the EU) is paramount. Maintaining ISO 27001 certification for information security is no longer optional—it is the baseline for any enterprise-grade solution.
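
One common way to make an audit trail tamper-evident is to chain entries with cryptographic hashes, so that changing or deleting an earlier entry invalidates every later one. The Python sketch below records who, what, when, and why for each change. The `AuditTrail` class and its fields are illustrative assumptions, and on its own this is not a validated 21 CFR Part 11 system, which also needs access controls, protected storage, trustworthy time sources, and retention policies. A hash chain detects tampering rather than preventing it: someone with full write access could recompute the whole chain unless the latest hash is also anchored somewhere independent.

```python
import hashlib
import json
from datetime import datetime, timezone


class AuditTrail:
    """Append-only log where each entry stores the hash of the previous entry."""

    GENESIS = "0" * 64

    def __init__(self) -> None:
        self.entries: list[dict] = []

    def record(self, user: str, record_id: str, action: str, reason: str) -> dict:
        prev_hash = self.entries[-1]["hash"] if self.entries else self.GENESIS
        entry = {
            "timestamp": datetime.now(timezone.utc).isoformat(),  # computer-generated time stamp
            "user": user,              # who
            "record_id": record_id,    # which record
            "action": action,          # what changed
            "reason": reason,          # why
            "prev_hash": prev_hash,
        }
        entry["hash"] = self._digest(entry)
        self.entries.append(entry)
        return entry

    @staticmethod
    def _digest(entry: dict) -> str:
        body = {k: v for k, v in entry.items() if k != "hash"}
        return hashlib.sha256(json.dumps(body, sort_keys=True).encode("utf-8")).hexdigest()

    def verify(self) -> bool:
        """Recompute every hash and check each link back to the previous entry."""
        prev = self.GENESIS
        for entry in self.entries:
            if entry["prev_hash"] != prev or entry["hash"] != self._digest(entry):
                return False
            prev = entry["hash"]
        return True


trail = AuditTrail()
trail.record("a.smith", "ASSAY-17", "update result 4.2 -> 4.3", "transcription error corrected")
trail.record("b.jones", "ASSAY-17", "approve", "QC review complete")
print(trail.verify())  # True
trail.entries[0]["reason"] = "edited later"
print(trail.verify())  # False: the stored hash no longer matches the entry
```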

Regulatory Readiness in Biopharma Data Management Software

Top platforms provide “validation packages” that significantly reduce the time your IT team spends on system verification. Instead of building compliance workflows from scratch, these tools offer:

- GxP Compliance: Pre-configured environments that meet Good Clinical (GCP), Laboratory (GLP), and Manufacturing (GMP) Practices. This ensures that data integrity is maintained throughout the entire product lifecycle.

- Data Provenance: A complete map of data’s journey from the initial sample to the final regulatory submission. This “chain of custody” for data is essential for proving the validity of your findings to regulators.

- Automated Documentation: Generating Certificates of Analysis (CoA) or submission-ready reports in minutes rather than days. One QC lab using these automated tools reported a 50% boost in compliance efficiency, allowing them to reallocate staff to higher-value analytical tasks.

- Electronic Signatures: Secure, encrypted signing processes that meet the legal requirements for official documentation, replacing the slow and error-prone paper-and-ink method.
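
Part 11 expects a signed electronic record to show the signer’s printed name, the date and time of signing, and the meaning of the signature (such as review or approval), and it expects each signature to be linked to its record so it cannot be copied onto another one. The Python sketch below illustrates that linkage by binding an HMAC to a hash of the record content. The key store, user names, and field layout are hypothetical. Because HMAC relies on a shared secret, production systems typically use public-key digital signatures with keys held in hardware security modules instead.

```python
import hashlib
import hmac
from datetime import datetime, timezone

# Hypothetical per-user secrets; never hard-code real keys.
SIGNING_KEYS = {"j.doe": b"demo-secret-do-not-use"}


def _payload(record_hash: str, name: str, signed_at: str, meaning: str) -> bytes:
    return f"{record_hash}|{name}|{signed_at}|{meaning}".encode("utf-8")


def sign_record(record_bytes: bytes, user: str, full_name: str, meaning: str) -> dict:
    """Create a signature manifestation (name, time, meaning) bound to this exact content."""
    signed_at = datetime.now(timezone.utc).isoformat()
    record_hash = hashlib.sha256(record_bytes).hexdigest()
    mac = hmac.new(SIGNING_KEYS[user], _payload(record_hash, full_name, signed_at, meaning),
                   hashlib.sha256).hexdigest()
    return {"user": user, "name": full_name, "signed_at": signed_at,
            "meaning": meaning, "record_hash": record_hash, "mac": mac}


def verify_signature(record_bytes: bytes, sig: dict) -> bool:
    """Fails if the record or any part of the manifestation was changed after signing."""
    record_hash = hashlib.sha256(record_bytes).hexdigest()
    expected = hmac.new(SIGNING_KEYS[sig["user"]],
                        _payload(record_hash, sig["name"], sig["signed_at"], sig["meaning"]),
                        hashlib.sha256).hexdigest()
    return record_hash == sig["record_hash"] and hmac.compare_digest(expected, sig["mac"])


coa = b"CoA lot 42: assay 99.1%, PASS"
signature = sign_record(coa, "j.doe", "Jane Doe", "approval")
print(verify_signature(coa, signature))                               # True
print(verify_signature(b"CoA lot 42: assay 97.0%, PASS", signature))  # False
```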

Beyond the standard regulations, the rise of AI in drug development has introduced new compliance challenges. Regulators are increasingly looking for “explainability” in AI models. Modern data management software helps by documenting the training sets, parameters, and validation steps used in AI-driven discovery, ensuring that the “black box” of AI is opened for regulatory scrutiny. This transparency is vital for gaining approval for AI-designed molecules or AI-optimized clinical trial protocols.
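
Documenting an AI model’s training set, parameters, and validation steps can start with storing a fingerprint of the exact training data alongside the settings and results. In the Python sketch below, the function names, record fields, and example values are assumptions. The SHA-256 fingerprint lets a reviewer later confirm that a claimed training set matches the one actually used; row order matters, while key order within a row does not.

```python
import hashlib
import json
from datetime import datetime, timezone


def dataset_fingerprint(rows: list[dict]) -> str:
    """SHA-256 of a canonical JSON serialization; any change to the rows changes the hash."""
    canonical = json.dumps(rows, sort_keys=True, separators=(",", ":"))
    return hashlib.sha256(canonical.encode("utf-8")).hexdigest()


def model_run_record(model_name: str, training_rows: list[dict],
                     hyperparameters: dict, validation_metrics: dict) -> dict:
    """Assemble a reviewable record of what a model was trained on and how it was checked."""
    return {
        "model": model_name,
        "created_utc": datetime.now(timezone.utc).isoformat(),
        "training_data_sha256": dataset_fingerprint(training_rows),
        "n_training_rows": len(training_rows),
        "hyperparameters": hyperparameters,
        "validation": validation_metrics,
    }


rows = [{"smiles": "CCO", "active": 0}, {"smiles": "c1ccccc1O", "active": 1}]
record = model_run_record("toxicity-classifier", rows,
                          {"learning_rate": 0.01, "epochs": 20},
                          {"auroc": None})  # placeholder: fill in from the validation run
print(record["training_data_sha256"][:12])
```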

The 2026 Tech Stack: AI, Machine Learning, and Agentic Workflows

We are entering the era of Agentic AI—where software doesn’t just display data but autonomously orchestrates workflows and suggests the next best experiment. Imagine a system that notices a specific protein is consistently overexpressed in a subset of trial participants and automatically triggers a secondary analysis of their genomic profiles to find a common biomarker. This is the promise of agentic workflows: moving from reactive data storage to proactive research assistance.
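
The scenario above has two parts: detecting a pattern and handing off the follow-up work. The Python sketch below covers only a rule-based version of that trigger. The fold-change threshold, minimum subset size, protein name, and task format are hypothetical, and an actual agentic system would add planning, human review, and statistical safeguards before acting on a signal.

```python
from statistics import median


def find_overexpressors(levels: dict[str, float], fold_threshold: float = 2.0) -> list[str]:
    """Participants whose level is at least `fold_threshold` times the cohort median."""
    baseline = median(levels.values())
    return sorted(pid for pid, level in levels.items() if level >= fold_threshold * baseline)


def maybe_trigger_followup(protein: str, levels: dict[str, float], task_queue: list[dict],
                           min_subset: int = 3) -> bool:
    """Queue a genomic follow-up analysis when enough participants show the pattern."""
    subset = find_overexpressors(levels)
    if len(subset) < min_subset:
        return False  # too few participants to act on
    task_queue.append({"task": "genomic_biomarker_analysis",  # hypothetical task name
                       "protein": protein, "participants": subset})
    return True


levels = {"P1": 1.0, "P2": 1.2, "P3": 0.9, "P4": 1.1, "P5": 5.0, "P6": 4.8, "P7": 6.0}
queue: list[dict] = []
maybe_trigger_followup("PROT-X", levels, queue)
print(queue[0]["participants"])  # ['P5', 'P6', 'P7']
```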

Generative AI is already being used to summarize complex clinical trials and draft regulatory documents, while digital twins simulate bioprocesses to identify bottlenecks before they happen in the real world. For example, a digital twin of a bioreactor can predict how changes in temperature or nutrient concentration will affect yield, allowing scientists to optimize the process in a virtual environment before committing expensive reagents in the lab.
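
As a toy illustration of the bioreactor example, the Python sketch below simulates batch growth with Monod kinetics and a bell-shaped temperature response, then compares scenarios before any reagent is used. Every parameter value (maximum growth rate, half-saturation constant, yield, optimal temperature) is an assumption for illustration; a real digital twin is calibrated against process data and models many more variables, such as dissolved oxygen, pH, and feeding strategy.

```python
import math


def simulate_batch(temp_c: float, glucose_g_per_l: float,
                   hours: float = 72.0, dt: float = 0.01) -> float:
    """Return final biomass (g/L) from a toy Monod model; all parameters are hypothetical."""
    mu_max, ks, yield_xs = 0.08, 0.5, 0.5  # 1/h, g/L, g biomass per g glucose
    t_opt, t_width = 37.0, 4.0             # bell-shaped temperature response
    temp_factor = math.exp(-((temp_c - t_opt) / t_width) ** 2)
    biomass, substrate = 0.1, glucose_g_per_l  # initial conditions, g/L
    for _ in range(int(hours / dt)):           # explicit Euler integration
        mu = mu_max * temp_factor * substrate / (ks + substrate)
        growth = mu * biomass * dt
        consumed = min(growth / yield_xs, substrate)  # cannot use more glucose than remains
        biomass += consumed * yield_xs
        substrate -= consumed
    return biomass


for temp in (33.0, 37.0, 41.0):
    print(temp, round(simulate_batch(temp, glucose_g_per_l=10.0), 2))
```

Because biomass gain is tied to glucose consumed through the yield coefficient, final biomass can never exceed the initial biomass plus yield times initial glucose, a simple sanity check any twin should satisfy.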

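Another emerging shift is the use of blockchain for data integrity, providing an append-only ledger for clinical trial results. Because recorded entries cannot be silently altered, after-the-fact “data massaging” becomes detectable, which can boost trust with regulators and the public. A ledger cannot, however, guarantee that every result was recorded in the first place.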

The Shift to Federated Learning

One of the most significant technological leaps is Federated Learning. Traditionally, to analyze data from multiple sources (like different hospitals or research centers), the data had to be moved to a central location. This is often impossible due to privacy laws and data sovereignty concerns. Federated learning allows the AI model to travel to the data. The model learns from the local data and only sends the “insights” (mathematical weights) back to a central server. This allows biopharma companies to train models on massive, diverse datasets without ever compromising patient privacy or moving sensitive information across borders.
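
The “model travels to the data” idea is often implemented with federated averaging (FedAvg): each site trains on its own records starting from the current global model, and the server averages the returned parameters weighted by site size. The Python sketch below fits a one-variable linear model this way; the site data, learning rate, and round counts are hypothetical. Shared weights can still leak information about training data, so production systems usually add safeguards such as secure aggregation or differential privacy.

```python
def local_update(weights: tuple[float, float], data: list[tuple[float, float]],
                 lr: float = 0.01, epochs: int = 50) -> tuple[float, float]:
    """Gradient descent on mean squared error for y ~ w*x + b, run at one site on its own data."""
    w, b = weights
    n = len(data)
    for _ in range(epochs):
        grad_w = sum(2 * (w * x + b - y) * x for x, y in data) / n
        grad_b = sum(2 * (w * x + b - y) for x, y in data) / n
        w, b = w - lr * grad_w, b - lr * grad_b
    return w, b


def federated_averaging(sites: list[list[tuple[float, float]]],
                        rounds: int = 50) -> tuple[float, float]:
    """FedAvg: sites train locally; the server averages parameters weighted by sample count."""
    global_model = (0.0, 0.0)
    total = sum(len(data) for data in sites)
    for _ in range(rounds):
        # Only the updated (w, b) pairs leave each site; raw records never do.
        updates = [(local_update(global_model, data), len(data)) for data in sites]
        w = sum(params[0] * n for params, n in updates) / total
        b = sum(params[1] * n for params, n in updates) / total
        global_model = (w, b)
    return global_model


# Hypothetical sites whose records follow y = 2x + 1 over different ranges of x.
site_a = [(x, 2 * x + 1) for x in (0.0, 1.0, 2.0, 3.0, 4.0)]
site_b = [(x, 2 * x + 1) for x in (1.0, 2.0, 3.0, 4.0, 5.0)]
w, b = federated_averaging([site_a, site_b])
print(round(w, 3), round(b, 3))  # 2.0 1.0
```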

When choosing a deployment model, biopharma leaders must weigh the pros and cons of cloud vs. on-premise:

| Model | Pros | Cons | Best For |
|---|---|---|---|
| Cloud | Elastic scalability, remote access, lower IT overhead, rapid deployment of updates. | Depends on reliable connectivity, perceived security risks, ongoing subscription costs. | Distributed global teams, rapid scaling, startups, and collaborative research. |
| On-Premise | Total data control, no external dependency, potentially lower long-term cost for static workloads. | High upfront capital expenditure, high maintenance costs, difficult to scale, silo risk. | Ultra-sensitive proprietary research, organizations with existing large data centers. |
| Hybrid | Balances security with scalability, supports data residency compliance. | Complex integration to manage, requires specialized IT expertise. | Large enterprises with legacy systems and diverse global regulatory requirements. |

For a deeper look at how these technologies are converging, read about biopharma’s digital leap and the impact of real-world evidence. The integration of RWE with clinical trial data is creating a more comprehensive understanding of drug performance in the “real world,” beyond the controlled environment of a trial.

Implementation Strategies for Biopharma IT Leaders

Implementation is where many great software choices go to die. The graveyard of biopharma IT is littered with expensive platforms that were never fully adopted because they were too complex or didn’t fit into existing workflows. To succeed, biopharma IT leaders must ensure their new data management tool talks to the rest of the “tech stack.” This isn’t just a technical challenge; it’s a strategic one.

The Integrated Ecosystem

A modern biopharma company relies on a complex web of software. Your data management platform must offer seamless integration with:

- CRM (Customer Relationship Management): For tracking physician engagement, medical science liaison (MSL) interactions, and market access strategies.

- LIMS (Laboratory Information Management Systems) & ELN: To ensure the raw lab data flows into the analytics layer without manual intervention.

- ERP (Enterprise Resource Planning): For synchronizing manufacturing data with supply chain logistics, ensuring that production scales with demand.

- BI (Business Intelligence) Tools: Platforms such as Tableau or Power BI for executive-level dashboards that provide a real-time view of the R&D pipeline and clinical trial progress.

A successful rollout often involves establishing a cloud data management strategy that prioritizes data governance from day one. This means defining who owns the data, who can access it, and how its quality is maintained throughout its lifecycle.
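
Defining who owns a dataset and who may access it works best as explicit policy that software checks on every request. The Python sketch below is a minimal role-based check; the `DatasetPolicy` and `User` classes and the privacy-training rule are hypothetical stand-ins for whatever governance rules your organization adopts.

```python
from dataclasses import dataclass, field


@dataclass
class DatasetPolicy:
    """Hypothetical governance policy attached to one dataset."""
    owner: str
    allowed_roles: set[str] = field(default_factory=set)
    contains_patient_data: bool = False


@dataclass
class User:
    name: str
    roles: set[str]
    completed_privacy_training: bool = False


def can_access(user: User, policy: DatasetPolicy) -> bool:
    """Owners always have access; others need an allowed role (and training for patient data)."""
    if user.name == policy.owner:
        return True
    if not user.roles & policy.allowed_roles:
        return False
    # Illustrative extra rule: patient-level data also requires documented privacy training.
    return user.completed_privacy_training or not policy.contains_patient_data


trial_data = DatasetPolicy(owner="clinical-ops", allowed_roles={"biostatistician"},
                           contains_patient_data=True)
analyst = User("r.lee", {"biostatistician"})
print(can_access(analyst, trial_data))  # False until privacy training is recorded
```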

A Phased Roadmap for Implementation

- Assessment & Strategy: Identify the most critical data bottlenecks. Is it in discovery, clinical, or manufacturing? Define clear KPIs for success (e.g., “reduce data retrieval time by 50%”).

- Pilot Program: Select a single department or project to test the software. This allows you to work out the kinks in a controlled environment and build “internal champions” who can advocate for the tool.

- Data Migration & Cleaning: This is often the most time-consuming step. Use automated tools to clean and format legacy data before moving it into the new system.

- Training & Change Management: Software is only as good as the people using it. Invest heavily in training and ensure that the software actually makes the scientists’ lives easier, rather than adding another layer of bureaucracy.

- Full Scale-Out: Once the pilot is successful, roll the platform out across the organization, integrating it with other enterprise systems.
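
In the data migration and cleaning step, much of the work is normalizing inconsistent legacy values and setting aside records that cannot be mapped safely. The Python sketch below normalizes dates to ISO 8601 and concentrations to ng/mL. The accepted date formats, unit table, and field names are assumptions, and the sketch treats slash-separated dates as day-first, an ambiguity that must be resolved per source system in a real migration.

```python
from datetime import datetime

DATE_FORMATS = ("%Y-%m-%d", "%d/%m/%Y", "%d-%b-%Y")  # hypothetical formats seen in legacy files
UNIT_TO_NG_PER_ML = {"ng/ml": 1.0, "ug/ml": 1000.0, "mg/l": 1000.0, "pg/ml": 0.001}


def normalize_date(raw: str) -> str:
    for fmt in DATE_FORMATS:
        try:
            return datetime.strptime(raw.strip(), fmt).date().isoformat()
        except ValueError:
            continue
    raise ValueError(f"unrecognized date: {raw!r}")


def clean_record(raw: dict) -> dict:
    unit = raw["unit"].strip().lower()
    if unit not in UNIT_TO_NG_PER_ML:
        raise ValueError(f"unknown unit: {raw['unit']!r}")
    return {"sample_id": raw["sample_id"].strip().upper(),
            "assay_date": normalize_date(raw["assay_date"]),
            "conc_ng_per_ml": float(raw["value"]) * UNIT_TO_NG_PER_ML[unit]}


def migrate(rows: list[dict]) -> tuple[list[dict], list[tuple[dict, str]]]:
    """Clean what can be mapped; keep the rest, with the reason, for manual review."""
    cleaned, rejected = [], []
    for row in rows:
        try:
            cleaned.append(clean_record(row))
        except (KeyError, ValueError) as err:
            rejected.append((row, str(err)))
    return cleaned, rejected


legacy_rows = [
    {"sample_id": " s-01 ", "assay_date": "03/04/2019", "unit": "ug/mL", "value": "2.5"},
    {"sample_id": "s-02", "assay_date": "2019/04/03", "unit": "ng/mL", "value": "1.0"},
]
cleaned, rejected = migrate(legacy_rows)
print(cleaned)        # [{'sample_id': 'S-01', 'assay_date': '2019-04-03', 'conc_ng_per_ml': 2500.0}]
print(len(rejected))  # 1
```

Rejected rows are kept with their error message rather than silently dropped, so the migration itself stays auditable.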

Selecting the Right Biopharma Data Management Software

When evaluating vendors, look beyond the sales deck. Focus on:

- No-code configurability: Can your scientists change a workflow or add a new data field without calling IT? If not, the system will quickly become a bottleneck.

- API-first architecture: How easily can it connect to a new sequencer, a third-party CRO, or a future AI tool? An open architecture is essential for future-proofing.

- Speed of Analysis: High-performing tools can make chromatography data analysis 15 times faster and reduce NGS (Next-Generation Sequencing) assay time by 90%. Ask for benchmarks based on your specific data types.

- Vendor Stability and Support: In a rapidly consolidating market, ensure your vendor has the financial stability and the support infrastructure to be a long-term partner.

The goal is to find a platform that scales with you, from a 10-person startup to a global enterprise with thousands of users across multiple continents.

Frequently Asked Questions about Biopharma Data Management

What is biopharma data management software?

It is a specialized digital ecosystem designed to handle the unique complexities of biological data, regulatory compliance (like GxP), and the collaborative nature of drug development. Unlike generic data management tools, it is built to handle multi-omic datasets, track biological samples, and maintain the rigorous audit trails required by health authorities. It replaces fragmented spreadsheets with a centralized, “FAIR” data repository.

How does AI reduce drug discovery timelines?

AI accelerates the “hit identification” phase by analyzing millions of compounds in silico to predict their binding affinity and toxicity. It also optimizes patient recruitment for clinical trials by analyzing electronic health records to match candidates to protocols with 96% predictive accuracy, often reducing timelines by 30%. Furthermore, AI can predict potential drug-drug interactions and side effects early in the process, preventing late-stage failures.

Is cloud or on-premise better for GxP compliance?

While on-premise was once the standard due to security concerns, modern cloud solutions are now mature enough to support full GxP compliance. In many cases, cloud platforms provide better “audit readiness” because they automatically log every system interaction, which is harder to maintain manually on-premise. Cloud providers also invest more in cybersecurity than most individual biopharma companies can afford.

How does this software handle data from Contract Research Organizations (CROs)?

Modern platforms use secure APIs and standardized data exchange formats to ingest data from CROs. This ensures that data from external partners is integrated into the internal “source of truth” in real-time, allowing for better oversight of outsourced trials and faster decision-making.
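
Before CRO data joins the internal source of truth, each transfer is typically checked against the agreed data specification. The Python sketch below validates required fields and types and tags accepted records with their source. The field list and CRO name are hypothetical, and real exchanges often rely on industry standards such as CDISC formats, with far richer rules.

```python
EXPECTED_FIELDS = {"subject_id": str, "visit": int, "analyte": str, "result": float}  # hypothetical spec


def validate_transfer(records: list[dict], cro: str) -> tuple[list[dict], list[str]]:
    """Split a CRO transfer into accepted records and human-readable errors."""
    accepted, errors = [], []
    for i, rec in enumerate(records):
        problems = [f"{name} missing" for name in EXPECTED_FIELDS if name not in rec]
        # Note: bool passes the int check; a stricter validator would reject it explicitly.
        problems += [f"{name} should be {typ.__name__}" for name, typ in EXPECTED_FIELDS.items()
                     if name in rec and not isinstance(rec[name], typ)]
        if problems:
            errors.append(f"record {i} from {cro}: " + ", ".join(problems))
        else:
            accepted.append({**rec, "source": cro})  # keep provenance on every record
    return accepted, errors


transfer = [
    {"subject_id": "001", "visit": 1, "analyte": "ALT", "result": 32.0},
    {"subject_id": "002", "visit": "1", "analyte": "ALT"},
]
accepted, errors = validate_transfer(transfer, cro="CRO-A")
print(len(accepted))  # 1
print(errors)         # ['record 1 from CRO-A: result missing, visit should be int']
```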

Can these systems handle legacy data from older experiments?

Yes, though it requires a structured migration strategy. Most top-tier biopharma data management software includes tools for mapping and transforming legacy data into modern, FAIR-compliant formats. This is essential for preserving “institutional memory” and enabling meta-analysis across decades of research.

What is the role of data sovereignty in global biopharma?

Data sovereignty refers to the idea that data is subject to the laws of the country in which it is located. For global biopharma, this is a major challenge. Federated data management solutions solve this by allowing researchers to analyze data in different jurisdictions without moving it, ensuring compliance with local laws like GDPR while still enabling global insights.
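
A simple federated query shows how analysis can cross jurisdictions without moving records: each site computes an aggregate locally, suppresses counts small enough to risk re-identification, and only those aggregates are combined. In the Python sketch below, the site names, the threshold of 5, and the record layout are hypothetical, and the sites are simulated in one process for brevity. Suppression alone does not remove every disclosure risk (for instance, differencing repeated queries), so real deployments layer on further controls.

```python
from typing import Callable, Optional

MIN_CELL_SIZE = 5  # hypothetical disclosure-control threshold


def local_count(records: list[dict], predicate: Callable[[dict], bool]) -> Optional[int]:
    """Runs inside one jurisdiction; only this aggregate ever leaves the site."""
    n = sum(1 for record in records if predicate(record))
    return n if n >= MIN_CELL_SIZE else None  # suppress small, potentially identifying counts


def federated_count(sites: dict[str, list[dict]],
                    predicate: Callable[[dict], bool]) -> tuple[int, list[str]]:
    """Combine per-site aggregates and report which sites suppressed their result."""
    results = {site: local_count(records, predicate) for site, records in sites.items()}
    total = sum(n for n in results.values() if n is not None)  # a lower bound if any site suppressed
    suppressed = sorted(site for site, n in results.items() if n is None)
    return total, suppressed


sites = {
    "UK": [{"biomarker": True}] * 6 + [{"biomarker": False}] * 3,
    "DE": [{"biomarker": True}] * 2,
}
print(federated_count(sites, lambda r: r["biomarker"]))  # (6, ['DE'])
```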

Conclusion: Future-Proofing Your Research with Lifebit

At Lifebit, we believe that the future of medicine shouldn’t be limited by where data lives. Our next-generation federated AI platform allows you to access and analyze global biomedical and multi-omic data without moving it. This “data-to-code” approach ensures maximum security and compliance while enabling real-time insights across five continents.

Whether you are looking to build a Trusted Research Environment (TRE), implement a Trusted Data Lakehouse (TDL), or leverage our R.E.A.L. (Real-time Evidence & Analytics Layer) for AI-driven safety surveillance, we provide the infrastructure to turn your data into a breakthrough.

Don’t let your research be held back by silos or manual errors. Join the leading biopharma companies and public health agencies that trust us to power their most critical research.