The Hospital Data Diet and Why Federated Learning is the Secret Sauce

Why Hospitals Are Training AI Together — Without Sharing a Single Patient Record

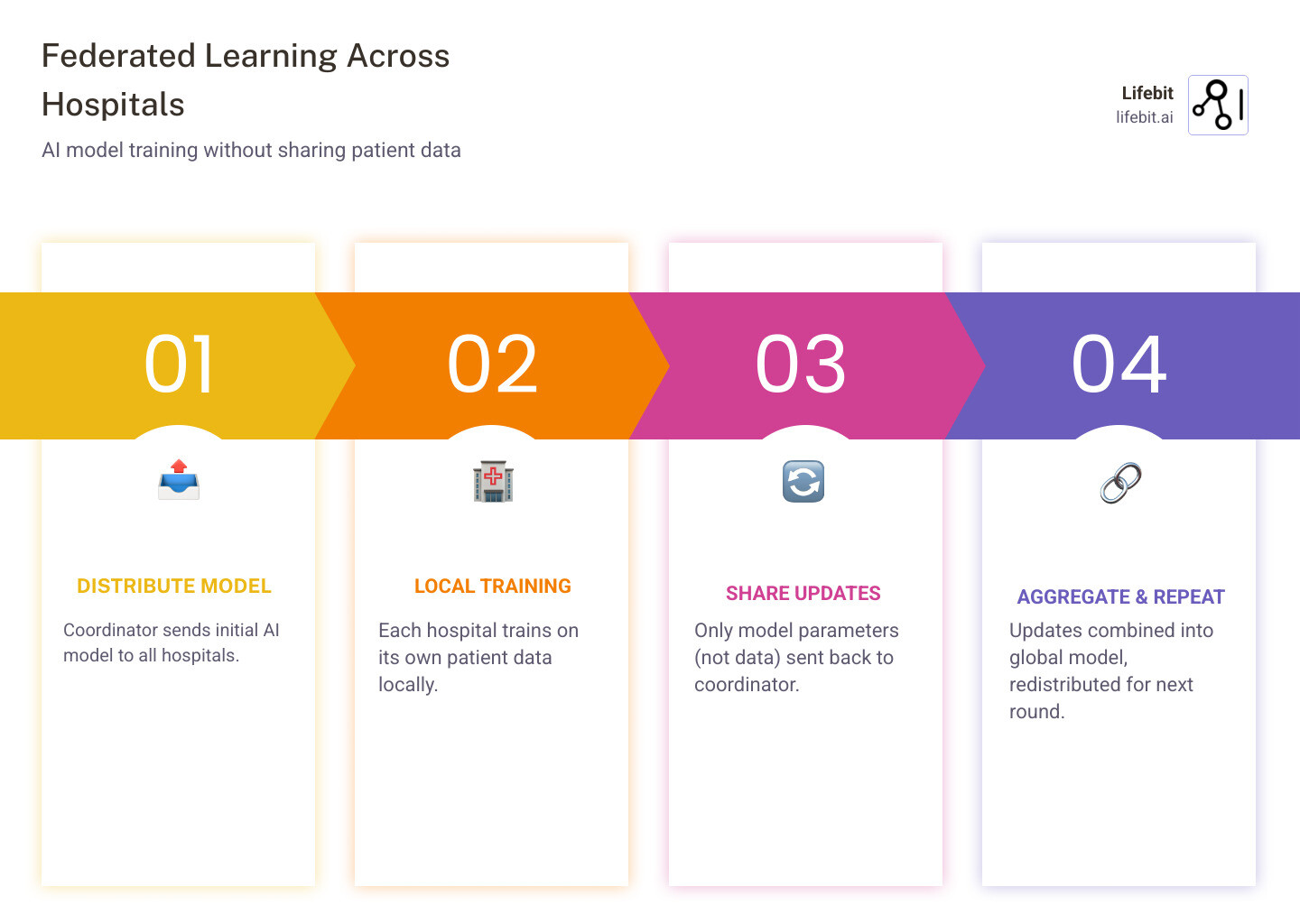

Federated learning across hospitals is a method that lets multiple medical institutions train a shared AI model together — without any hospital ever sending its patient data to another site.

Here’s how it works at a glance:

| Step | What Happens | Where Data Lives |
|---|---|---|
| 1. Model distributed | A coordinator shares a starting AI model with each hospital | Central coordinator |
| 2. Local training | Each hospital trains the model on its own patient data | Stays local |
| 3. Updates shared | Only model parameters (not patient records) are sent back | Local → coordinator |
| 4. Aggregation | Updates are combined into one improved global model | Central coordinator |
| 5. Repeat | The improved model is redistributed and the cycle continues | Each hospital |

The result: a smarter AI model trained on data from dozens — or even hundreds — of hospitals, with zero raw patient data ever leaving its source.
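
To make the five steps concrete, here is a minimal sketch of the loop, assuming a toy linear-regression model and three simulated "hospitals" with synthetic data. The function names (`local_train`, `federated_round`) and all numbers are illustrative assumptions, not part of any real platform.

```python
import numpy as np

def local_train(weights, X, y, lr=0.1, epochs=20):
    """Step 2: gradient descent on one hospital's private data."""
    w = weights.copy()
    for _ in range(epochs):
        grad = 2 * X.T @ (X @ w - y) / len(y)  # gradient of mean squared error
        w -= lr * grad
    return w

def federated_round(global_w, sites):
    """Steps 1-4: distribute the model, train locally, collect and aggregate."""
    updates, sizes = [], []
    for X, y in sites:  # X and y are only ever used inside local_train
        updates.append(local_train(global_w, X, y))  # Step 3: only weights come back
        sizes.append(len(y))
    # Step 4: average the returned weights, weighted by local sample count
    return np.average(updates, axis=0, weights=sizes)

# Synthetic stand-ins for three hospitals of different sizes (assumed data)
rng = np.random.default_rng(0)
true_w = np.array([2.0, -1.0])
sites = []
for n in (50, 80, 120):
    X = rng.normal(size=(n, 2))
    y = X @ true_w + rng.normal(scale=0.1, size=n)
    sites.append((X, y))

global_w = np.zeros(2)
for _ in range(10):  # Step 5: repeat
    global_w = federated_round(global_w, sites)
print(global_w)
```

The coordinator code only ever touches the weight vectors returned by each site; the arrays `X` and `y` stay inside the per-site training call.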

This matters because healthcare AI has a serious data problem. The datasets needed to train reliable diagnostic models are scattered across thousands of siloed hospital systems, locked behind privacy regulations like HIPAA and GDPR. No single institution has enough data to build AI that generalizes well across diverse patient populations, equipment types, and care settings.

Federated learning breaks that deadlock.

I’m Dr. Maria Chatzou Dunford, CEO and Co-founder of Lifebit, and I’ve spent over 15 years in computational biology, genomics, and health-tech building the infrastructure that makes federated learning across hospitals practical at scale. In this guide, I’ll walk you through how it works, where it’s already delivering results, and what it takes to deploy it in the real world.

Stop Moving Data: How Federated Learning Across Hospitals Solves the Privacy Crisis

For decades, the only way to do multi-center research was the “centralized” approach: hospitals would ship copies of their patient records to a central server. We call this the “old way,” and in today’s regulatory environment, it’s increasingly becoming a dead end. Why? Because moving sensitive data creates massive security risks, high costs, and a mountain of legal paperwork. Every time data crosses a boundary, the risk of a breach increases, and the administrative burden of Data Transfer Agreements (DTAs) can delay projects by months or even years.

Federated learning across hospitals introduces a “data diet.” Instead of the data moving to the model, the model moves to the data. By keeping patient records behind the hospital’s own firewall, institutions maintain absolute data residency and local control. This is not just a technical preference; it is a fundamental shift in how we view data ownership and stewardship in the digital age.

When we talk about “model parameters” moving instead of data, we mean the mathematical “learnings” (like weights in a neural network) that the AI has gathered. For example, a model might learn that a specific pattern in an X-ray correlates with a diagnosis. It shares that learned pattern as a set of numbers, but it never shares the X-ray itself. This is the approach described in “Federated learning in medicine: facilitating multi-institutional collaborations without sharing patient data,” and it is why federated learning is viewed as a paradigm shift for clinical research.

Why Federated Learning Across Hospitals is HIPAA Compliant

One of the most common questions we get at Lifebit is: “Is this legal?” In the US, HIPAA requires strict protections for Protected Health Information (PHI). In Europe, GDPR demands “data minimization” and “privacy by design.” Federated learning is uniquely positioned to satisfy these requirements because it adheres to the principle of least privilege—only the minimum necessary information (the model updates) is ever transmitted.

Federated learning aligns well with these mandates because:

- It minimizes exposure: Raw PHI never leaves the hospital’s secure environment, reducing the attack surface for hackers.

- No direct identifiers are transmitted: Only mathematical model updates are shared, and these do not contain direct identifiers like names, social security numbers, or addresses.

- Auditability: Every interaction with the data can be logged within the hospital’s own federated governance framework, providing a clear trail for compliance officers.

However, compliance isn’t automatic. It requires robust system design, appropriate contracts such as Business Associate Agreements (BAAs), and a thorough risk assessment to ensure that the model updates themselves don’t accidentally leak information. This is particularly important in the context of “membership inference attacks,” where a sophisticated adversary might try to determine whether a specific individual’s data was used in the training set. To counter this, we add further layers of defense on top of the federated setup.

Privacy-Enhancing Technologies (PETs) for Bulletproof Security

To make federated learning across hospitals truly “production-ready,” we often layer it with other Privacy-Enhancing Technologies (PETs). Think of these as the extra security guards at the door, ensuring that even if one layer is compromised, the patient data remains shielded:

- Differential Privacy (DP): This adds “statistical noise” to the model updates. It’s like blurring a photo just enough so you can tell it’s a person, but you can’t recognize their face. DP provides a mathematical guarantee that the presence or absence of a single patient in the dataset will not significantly change the output of the model.

- Homomorphic Encryption (HE): This allows the central coordinator to aggregate model updates while they are still encrypted. The coordinator never even sees the plain-text updates; they only see the encrypted result of the combined knowledge. This is the gold standard for preventing “honest-but-curious” server administrators from peeking at the learning process.

- Confidential Computing: This uses hardware-based “enclaves” (like Intel SGX) to process data in a black box that even the system administrator can’t peek into. By running the federated client inside a Trusted Execution Environment (TEE), we ensure that the code and data are protected from external tampering.
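
As an illustration of the first technique, here is a minimal sketch of how a hospital might clip and noise a model update before sending it, in the style of the Gaussian mechanism. The function name `privatize_update` and the parameter values are assumptions for illustration. The sketch does not compute a privacy budget (epsilon), which a real deployment would need to track with a proper accounting method.

```python
import numpy as np

def privatize_update(update, clip_norm=1.0, noise_multiplier=1.0, rng=None):
    """Clip an update to a maximum L2 norm, then add Gaussian noise.

    Clipping bounds how much any single update can influence the result;
    the noise scale is tied to that bound.
    """
    if rng is None:
        rng = np.random.default_rng()
    norm = np.linalg.norm(update)
    clipped = update * min(1.0, clip_norm / (norm + 1e-12))
    noise = rng.normal(0.0, noise_multiplier * clip_norm, size=update.shape)
    return clipped + noise

raw_update = np.array([3.0, 4.0])  # L2 norm is 5.0
noisy_update = privatize_update(raw_update, rng=np.random.default_rng(42))
print(noisy_update)
```

A larger `noise_multiplier` gives stronger privacy but a noisier, less accurate global model, so the value is a trade-off that each consortium has to choose.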

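By combining these, we can provide federated analytics that come close to the quality of centralized training (the noise added by differential privacy trades a small amount of accuracy for stronger guarantees) while being significantly more secure, helping institutions meet the requirements of global data protection regulations.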

From COVID to Cancer: Real-World Case Studies of Federated Learning Across Hospitals

If this sounds like science fiction, it’s time for a reality check. Federated learning across hospitals is already saving lives and accelerating drug discovery. The transition from theoretical research to clinical application has been rapid, driven by the urgent need for collaborative intelligence during global health crises.

Take the EXAM model developed during the height of the pandemic. Researchers used federated learning across 20 diverse international institutions (including Mass General Brigham in the US and hospitals in Brazil, Canada, and Japan) to train an AI that predicts the oxygen requirements of COVID-19 patients in the emergency department. Because the hospitals didn’t have to share raw data, they could collaborate across borders in real time, bypassing the usual bureaucratic delays. The result was a model that was significantly more robust and generalizable than any single institution could have built alone. You can read the full study in Nature Medicine.

Another massive implementation is happening in South Korea, where a major data platform is connecting 20 hospitals. By the end of 2024, this network will cover 15,000 beds and 20 million people, enabling joint learning for digital transformation without disrupting daily hospital workflows. This project focuses on creating a “standardized data lake” at each site, allowing for seamless federated learning in healthcare across oncology, cardiology, and neurology departments.

Achieving 99% of Centralized Model Quality with Federated Learning Across Hospitals

A frequent concern among clinicians is that federated learning might be “AI-lite”: that you sacrifice performance for privacy. The data says otherwise. In many cases, federated models closely match their centralized counterparts, and the increased volume and diversity of data available through federation lets them outperform models trained at any single institution in real-world scenarios.

In a landmark study using the BraTS (Brain Tumor Segmentation) dataset, researchers compared federated learning against traditional centralized data sharing (CDS). Across 10 different institutions, the federated model achieved 99% of the quality of the centralized model. Using the “Dice similarity coefficient” (a standard measure for medical imaging accuracy), the federated model scored 0.835 compared to 0.840 for the centralized version.
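
For readers unfamiliar with the metric, here is a small sketch of how a Dice similarity coefficient is computed for binary segmentation masks, followed by the arithmetic behind the “99%” figure. The toy masks are made up for illustration; only the two scores (0.835 and 0.840) come from the study described above.

```python
import numpy as np

def dice(pred, truth):
    """Dice = 2 * |A ∩ B| / (|A| + |B|) for two binary masks."""
    pred = np.asarray(pred, dtype=bool)
    truth = np.asarray(truth, dtype=bool)
    denom = pred.sum() + truth.sum()
    if denom == 0:
        return 1.0  # both masks empty: treat as perfect agreement
    return 2.0 * np.logical_and(pred, truth).sum() / denom

# Toy 2x3 masks: 3 predicted pixels, 3 true pixels, 2 overlapping
pred = np.array([[1, 1, 0], [0, 1, 0]])
truth = np.array([[1, 1, 0], [0, 0, 1]])
print(dice(pred, truth))  # 2 * 2 / (3 + 3)

# Relative quality of the federated model versus the centralized one
relative_quality = 0.835 / 0.840
print(round(relative_quality, 3))
```

The ratio works out to roughly 0.994, which is where the “99% of the quality” summary comes from.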

This suggests that you don’t have to choose between privacy and performance. In fact, because federated learning makes it easier for more hospitals to join a project, the increase in data diversity often leads to better real-world performance. A model trained on data from five different types of MRI machines is far more likely to work in a sixth hospital than a model trained on a single, massive dataset from one manufacturer. Check out more on this in the research on distributed cross-learning.

Rare Disease Research and EHR Analysis: The Power of Small Data

For rare diseases, the “data diet” is actually a feast. No single hospital sees enough patients with a rare condition to train an AI. If a condition affects only 1 in 100,000 people, a single hospital might only have two or three cases. By using federated learning across hospitals, we can aggregate the signals from five patients in London, three in New York, and two in Singapore, creating a virtual cohort large enough for deep learning.

We’ve seen this work with the FADL framework, which was tested on Electronic Health Record (EHR) data from 58 different hospitals. It achieved an AUC (Area Under the Curve) of 0.79 for predicting ICU mortality, outperforming traditional local models. This is one of the most exciting federated learning applications because it allows us to find patterns in “small data” that were previously invisible. It enables the discovery of subtle biomarkers and treatment responses that would be lost in the noise of a single-site study, effectively democratizing the power of AI for specialized medical fields.

The Technical Blueprint: Architectures for Secure Multi-Site Collaboration

How do you actually build this? There are two main ways to organize the conversation between hospitals, each with its own set of trade-offs regarding security, scalability, and administrative control. Understanding these architectures is crucial for any hospital IT department looking to implement a federated strategy.

| Feature | Centralized Federated Learning | Decentralized (Peer-to-Peer) |
|---|---|---|
| Coordinator | A central server manages updates | No central server; hospitals talk to each other |
| Risk | Single point of failure at the hub | Higher communication overhead |
| Trust | Hospitals must trust the hub | Trust is distributed (often via Blockchain) |
| Use Case | Most commercial hospital networks | High-security consortia |
| Scalability | High (easier to add new nodes) | Moderate (complex networking) |

Most federated research environments use the centralized approach because it’s easier to manage and monitor. In this setup, the central hub acts as an orchestrator, ensuring that all hospitals are using the same version of the model and aggregating the results using algorithms like FedAvg (Federated Averaging).
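
For reference, the aggregation step in FedAvg is a weighted average of the weights returned by each site. The notation below is an illustrative convention rather than anything specific to one platform: K is the number of hospitals, n_k is the number of local training samples at hospital k, and w_k^{t+1} is the set of weights hospital k sends back after local training in round t.

```latex
w^{t+1} = \sum_{k=1}^{K} \frac{n_k}{n}\, w_k^{t+1},
\qquad n = \sum_{k=1}^{K} n_k
```

Because each site is weighted by its sample count, a hospital with ten times more patients pulls the global model ten times harder, which is one reason per-site evaluation matters for smaller participants.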

Decentralized Learning and Blockchain Integration

For groups that want to eliminate the “central hub” entirely (perhaps due to geopolitical concerns or extreme privacy requirements), frameworks like D-CLEF offer an alternative. These systems use blockchain technology (such as Ethereum smart contracts) to coordinate the training process without a single point of control.

In a D-CLEF setup:

- Smart Contracts manage the “round-robin” roles, where each hospital takes a turn being the “virtual server” for a specific training round.

- IPFS (InterPlanetary File System) is used to store and share the large model matrices securely, ensuring that the model itself is distributed across the network.

- Immutable Audit Trails ensure that every step of the training is recorded on the ledger. This makes it much harder for any single participant to “poison” the model with bad data without being detected.
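
The two coordination ideas in this list, rotating the “virtual server” role and keeping a tamper-evident log, can be sketched in plain Python. This is only a conceptual stand-in: it does not use Ethereum, IPFS, or any D-CLEF code, and the function names and site names are hypothetical.

```python
import hashlib
import json

GENESIS = "0" * 64  # placeholder hash for the first entry

def coordinator_for_round(hospitals, round_idx):
    """Round-robin: each hospital takes a turn as the virtual server."""
    return hospitals[round_idx % len(hospitals)]

def entry_hash(prev_hash, record):
    payload = json.dumps({"prev": prev_hash, "record": record}, sort_keys=True)
    return hashlib.sha256(payload.encode()).hexdigest()

def append_entry(ledger, record):
    """Append a record whose hash depends on the previous entry."""
    prev_hash = ledger[-1]["hash"] if ledger else GENESIS
    ledger.append({"prev": prev_hash, "record": record,
                   "hash": entry_hash(prev_hash, record)})

def verify(ledger):
    """Recompute every hash; any edited record breaks the chain."""
    prev_hash = GENESIS
    for entry in ledger:
        if entry["prev"] != prev_hash:
            return False
        if entry["hash"] != entry_hash(prev_hash, entry["record"]):
            return False
        prev_hash = entry["hash"]
    return True

hospitals = ["site_a", "site_b", "site_c"]
ledger = []
for r in range(4):
    append_entry(ledger, {"round": r,
                          "coordinator": coordinator_for_round(hospitals, r)})
print(verify(ledger))
```

In a real blockchain the ledger is also replicated across participants and protected by consensus, so no single site can quietly rewrite the whole chain; the hash chain above only shows why an edit to one record is detectable.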

This creates a “fair compute load” where no single institution holds all the power. You can find more about these equitable federated models in recent peer-reviewed literature, which highlights how blockchain can solve the “trust deficit” in multi-institutional collaborations.

Overcoming Data Heterogeneity and Non-IID Challenges

The biggest technical headache in federated learning across hospitals is “Non-IID” data. In statistics, IID stands for “Independent and Identically Distributed.” In the real world, hospital data is almost never IID. This is a fancy way of saying that every hospital is different. One hospital might use Siemens scanners with specific settings, while another uses GE. One might serve an elderly population with multiple comorbidities, while another serves mostly children.

This “data skewing” can confuse an AI, leading to a model that performs well on average but fails miserably at specific sites. To fix it, we use a multi-layered approach:

- Standardization: Mapping all data to a common format like the OMOP Common Data Model (CDM). This ensures that “heart rate” means the same thing in every database.

- Harmonization: Pre-processing images to a standard resolution, orientation, and intensity atlas before training. This removes the “signature” of the specific medical device.

- Robust Aggregation: Using heterogeneity-aware algorithms like FedProx, which adds a proximal term to each hospital’s local objective function so that local training does not drift too far from the global model when local data differs from the rest of the network.
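
To show what the proximal term does in practice, here is a minimal sketch of a FedProx-style local update for a toy linear model. The local objective is the usual loss plus (mu / 2) * ||w - w_global||^2, so its gradient gains an extra mu * (w - w_global) term. The function name, the synthetic data, and the hyperparameter values are assumptions for illustration.

```python
import numpy as np

def fedprox_local_train(global_w, X, y, mu=0.1, lr=0.05, epochs=50):
    """Local gradient descent with a proximal pull toward the global model."""
    w = global_w.copy()
    for _ in range(epochs):
        grad_loss = 2 * X.T @ (X @ w - y) / len(y)  # mean squared error gradient
        grad_prox = mu * (w - global_w)             # gradient of the proximal term
        w -= lr * (grad_loss + grad_prox)
    return w

# One simulated site whose local optimum is far from the global model
rng = np.random.default_rng(1)
X = rng.normal(size=(100, 2))
y = X @ np.array([3.0, -2.0])
global_w = np.zeros(2)

w_free = fedprox_local_train(global_w, X, y, mu=0.0)     # no proximal term
w_anchored = fedprox_local_train(global_w, X, y, mu=10.0)  # strong proximal term
print(np.linalg.norm(w_free - global_w), np.linalg.norm(w_anchored - global_w))
```

With `mu=0.0` this reduces to ordinary local training; a larger `mu` keeps the site's update closer to the global model, which limits how far an unusual site can drag each round.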

Cloud platforms like Google Cloud (using GKE) and AWS are increasingly providing the “plumbing” to host these federated data platforms securely and at scale, allowing hospitals to focus on the science rather than the infrastructure.

Beyond the Hype: Why Most Hospital AI Projects Fail (And How to Fix It)

We’ve seen many AI projects start with great fanfare and end in a whimper. Usually, the culprit isn’t the algorithm—it’s the data. In the context of federated learning across hospitals, the complexity is multiplied because you are dealing with multiple data owners, each with their own internal cultures and technical debt.

The most common failure point is mismatched definitions. If Hospital A defines “diabetes” based on a single high glucose lab result and Hospital B defines it based on a specific ICD-10 billing code, the AI will learn a mess. It will try to find a pattern that doesn’t exist because the ground truth is inconsistent. Successful federated learning requires locking down shared specifications before a single line of code is run. This includes:

- Cohort Rules: Who is in the study? (e.g., “Adults over 18 with at least two visits in the last year.”)

- Labeling Logic: How do we define the “outcome”? If we are predicting mortality, is it in-hospital mortality or 30-day post-discharge mortality?

- Feature Definitions: Are we measuring blood pressure in the same units? Are we using the mean or the peak value from a 24-hour period?
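
One way to lock down these specifications is to write them as a single machine-readable object and have every site confirm it is running the same version before training starts. The sketch below assumes a hypothetical `StudySpec` structure with made-up field names; the cohort values mirror the example above.

```python
from dataclasses import dataclass, asdict
import hashlib
import json

@dataclass(frozen=True)
class StudySpec:
    min_age: int                # cohort rule
    min_visits_last_year: int   # cohort rule
    outcome: str                # labeling logic
    bp_unit: str                # feature definition
    bp_summary: str             # feature definition

    def fingerprint(self):
        """Stable hash that sites can compare before a training run."""
        payload = json.dumps(asdict(self), sort_keys=True)
        return hashlib.sha256(payload.encode()).hexdigest()

def in_cohort(patient, spec):
    # Assumption: "adults over 18" is read as age >= 18; the written spec
    # should settle this kind of ambiguity explicitly.
    return (patient["age"] >= spec.min_age
            and patient["visits_last_year"] >= spec.min_visits_last_year)

spec = StudySpec(min_age=18, min_visits_last_year=2,
                 outcome="in_hospital_mortality",
                 bp_unit="mmHg", bp_summary="mean_24h")

print(spec.fingerprint()[:12])
print(in_cohort({"age": 40, "visits_last_year": 3}, spec))
```

If two sites report different fingerprints, at least one of them is working from a different definition, and the mismatch is caught before any model is trained.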

Without this alignment, you’re just doing federated data sharing of bad data, which leads to bad models. This is often referred to as the “Garbage In, Garbage Out” (GIGO) principle, and it is the primary reason why many pilot projects fail to scale into clinical practice.

Evaluating Model Performance Across Multiple Sites

In a centralized world, you just split your data into “train” and “test” sets. In the federated world, it’s a bit more complex. We need to ensure the model is not just accurate, but also fair and generalizable. We use a rigorous evaluation framework:

- Cross-site Validation: Training the model on four hospitals and testing it on a fifth “unseen” hospital. This is the ultimate test of whether the model has truly learned the underlying biology or just the quirks of the training sites.

- Per-site Reporting: Checking if the model performs poorly at one specific hospital. If the accuracy is 95% at four sites but 60% at the fifth, it indicates a data quality issue or a unique patient sub-population at that site that needs to be addressed.

- Robustness Testing: Seeing how the model handles “adversarial” updates. We simulate scenarios where one site might have corrupted data to ensure the global model remains stable.
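
The first two checks are simple to express in code. The sketch below generates leave-one-site-out splits and flags any site whose accuracy falls well below the median. The function names and the 10-point threshold are assumptions, and the accuracy values reuse the 95% versus 60% example above rather than real results.

```python
from statistics import median

def leave_one_site_out(site_names):
    """Yield (training_sites, held_out_site) for cross-site validation."""
    for held_out in site_names:
        yield [s for s in site_names if s != held_out], held_out

def flag_weak_sites(per_site_accuracy, max_gap=0.10):
    """Return sites whose accuracy trails the median by more than max_gap."""
    mid = median(per_site_accuracy.values())
    return [site for site, acc in per_site_accuracy.items()
            if mid - acc > max_gap]

sites = ["A", "B", "C", "D", "E"]
splits = list(leave_one_site_out(sites))
print(splits[-1])  # train on A-D, test on the unseen site E

per_site = {"A": 0.95, "B": 0.95, "C": 0.95, "D": 0.95, "E": 0.60}
flagged = flag_weak_sites(per_site)
print(flagged)
```

A flagged site is a prompt for investigation, not a verdict: the cause may be a data quality problem, a different patient mix, or a labeling mismatch.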

This level of rigor is what we build into our federated Trusted Research Environments, ensuring that the insights you get are actually reliable in a clinical setting. We also focus on “Explainable AI” (XAI) to help clinicians understand why a model is making a certain prediction, which is essential for building trust in the system.

The Human Factor: Governance and Incentives

Finally, we cannot ignore the human element. Federated learning is as much a social challenge as a technical one. Hospitals need clear incentives to participate. Why should a hospital dedicate IT resources to a project that benefits their competitors?

The answer lies in the “collective gain.” By participating, the hospital gets access to a superior model that they can use for their own patients. Furthermore, we implement “contribution tracking” to ensure that institutions providing high-quality data receive appropriate credit in publications and intellectual property agreements. Establishing a clear federated governance structure early on is the best way to prevent political friction from stalling the project.

Frequently Asked Questions about Federated Learning Across Hospitals

How is data protected if the model is shared?

The model itself consists of millions of numbers (weights). While it’s theoretically possible to “reverse-engineer” data from these numbers, we use technologies like Differential Privacy to add noise, which mathematically limits how much the model can reveal about any specific patient.

Does federated learning require high-speed internet between hospitals?

It depends on the model. For simple models, the updates are small. For deep learning (like medical imaging), the updates can be large. However, we only send the updates, not the raw images, which typically reduces the bandwidth needed by over 90% compared to centralized sharing.

Can small hospitals participate in large-scale federated networks?

Absolutely! In fact, small hospitals benefit the most. They get access to a high-performing model trained on millions of patients, which they could never build themselves, while still keeping their local data private.

Conclusion

The future of medicine isn’t in a single “super-database”—it’s in the network. By embracing federated learning across hospitals, we can finally break down the silos that have held back precision medicine for decades. We can train models that are more accurate, more ethical, and more representative of the global population.

At Lifebit, we are building the tools to make this transition seamless. Our platform provides the secure, federated infrastructure needed to turn “locked” hospital data into life-saving insights.

Secure your hospital data with Lifebit’s Federated Trusted Research Environment and join the world’s leading research institutions in the next generation of AI-driven healthcare.