Top Streaming Analytics Tools: Unveiling Immediate Insights

Stop Waiting: Get Insights in Seconds with a Real-time Analytics Platform

A real-time analytics platform processes data as it arrives—enabling your organization to see, understand, and act on events as they unfold, typically within seconds or less. In the modern digital economy, the value of data decays exponentially with time. A transaction that occurred five minutes ago is a historical record; a transaction occurring now is an opportunity to prevent fraud, personalize a user experience, or save a life.

Key capabilities of a real-time analytics platform:

- Streaming ingestion – captures events from IoT sensors, APIs, databases, and logs continuously. This involves handling high-throughput data streams without dropping events, often utilizing distributed messaging systems that ensure durability and fault tolerance.

- Stream processing – runs queries, transformations, and aggregations on live data flows using SQL or specialized frameworks. Unlike batch processing, which waits for a data set to be complete, stream processing operates on “unbounded” data, calculating moving averages, session windows, and complex event patterns in flight.

- Low-latency storage – stores results in fast-access databases (e.g., OLAP stores, lakehouse tables) for instant retrieval. These systems are optimized for high-concurrency reads and rapid writes, often employing columnar storage formats to speed up analytical queries.

- Live dashboards and alerts – visualizes insights and triggers automated actions based on real-time conditions. This moves beyond static charts to dynamic environments where a spike in a metric can automatically trigger a webhook, an SMS alert, or a machine-learning-driven intervention.

- AI and ML integration – feeds fresh data into models for fraud detection, personalization, and predictive maintenance. By reducing the “feature lag,” models can make predictions based on the most current state of the world, significantly increasing accuracy in volatile environments.
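To make the stream-processing capability above more concrete, here is a minimal Python sketch of a moving average computed over an unbounded stream. It is an illustration only, not how any particular engine implements windows: the function name `moving_average`, the `(timestamp, value)` event shape, and the five-second window are assumptions, and events are assumed to arrive in timestamp order.

```python
from collections import deque
from typing import Deque, Iterable, Iterator, Tuple


def moving_average(
    events: Iterable[Tuple[float, float]], window_seconds: float
) -> Iterator[Tuple[float, float]]:
    """Yield (timestamp, mean of the values seen in the trailing window).

    Assumes events arrive ordered by timestamp and window_seconds > 0.
    """
    window: Deque[Tuple[float, float]] = deque()  # events still inside the window
    total = 0.0
    for ts, value in events:
        window.append((ts, value))
        total += value
        # Evict events that have fallen out of the trailing window.
        while window[0][0] <= ts - window_seconds:
            _, old_value = window.popleft()
            total -= old_value
        yield ts, total / len(window)


# Hypothetical sensor readings: (seconds since start, value).
readings = [(0, 10.0), (1, 20.0), (2, 30.0), (7, 40.0)]
averages = list(moving_average(readings, window_seconds=5))
print(averages)  # [(0, 10.0), (1, 15.0), (2, 20.0), (7, 40.0)]
```

Because the function is a generator, it emits a result for every event as it arrives and never needs the full data set, which is the practical difference from a batch job.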

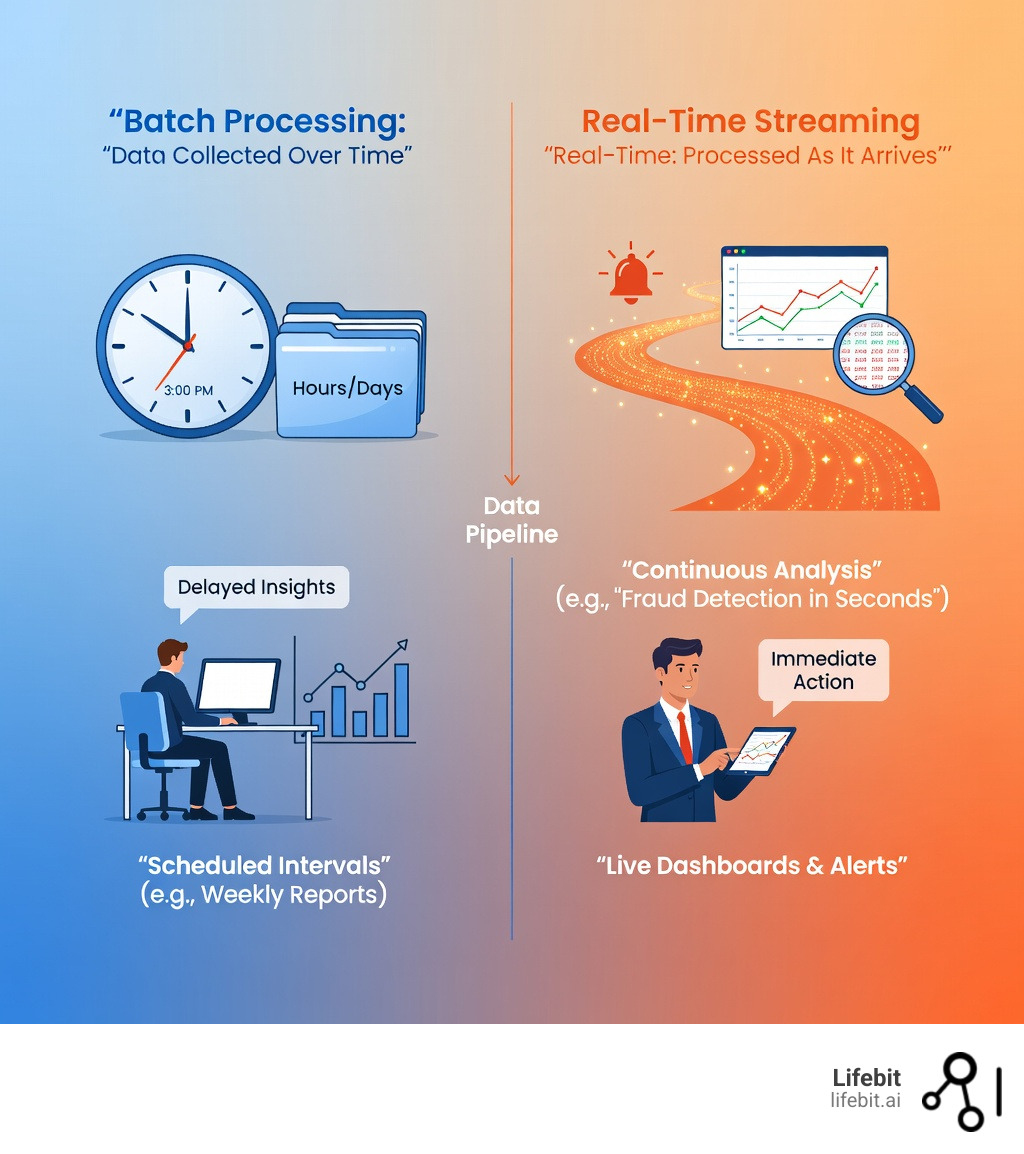

Traditional batch processing collects data in scheduled intervals—weekly reports, nightly ETL (Extract, Transform, Load) runs—creating hours or days of delay between event and insight. This lag creates a “blind spot” where critical business changes occur unnoticed. Real-time platforms eliminate that lag, turning data velocity into a decisive competitive advantage.

Why it matters: 86% of IT leaders now prioritize streaming data as a strategic imperative. Some 44% of enterprises report a 5x ROI on streaming analytics investments, and 63% say these platforms directly fuel their AI initiatives. In healthcare, finance, and pharma, the gap between data collection and decision-making can mean the difference between catching fraud in seconds versus hours, or identifying adverse drug events before they escalate. For instance, in high-frequency trading, a millisecond of latency can result in millions of dollars in lost opportunity. In a hospital setting, a delay in processing telemetry data from a bedside monitor could result in a missed window for life-saving intervention.

Real-time systems handle use cases that batch processing simply cannot touch: monitoring patient vitals for immediate alerts, detecting anomalies in clinical trial data as it’s recorded, or analyzing pharmacovigilance signals across federated datasets without moving sensitive information. For organizations working with siloed EHR (Electronic Health Records), genomics, and claims data, a real-time analytics platform provides the speed and compliance needed to generate evidence, run cohort analyses, and power AI—all in situ.

I’m Maria Chatzou Dunford, CEO and Co-founder of Lifebit, where we’ve built a federated real-time analytics platform that powers secure, compliant data analysis for global pharma, regulatory agencies, and public health institutions across 275M+ patient records. My 15+ years in computational biology, AI, and health-tech entrepreneurship have shown me that real-time insights are no longer optional—they’re essential for proactive, data-driven healthcare. We are seeing a fundamental shift where the infrastructure is moving away from centralized, slow data warehouses toward distributed, real-time ecosystems that respect data sovereignty while maximizing utility.


Batch Processing is Dead: Why Leaders Choose a Real-time Analytics Platform

In the past, we were content with “yesterday’s news.” We ran our heavy workloads overnight and sipped coffee while reading reports that were already 12 to 24 hours old. This was the era of the “Daily Briefing,” where decisions were made based on a snapshot of the past. But in 2026, data that is 12 hours old is essentially a fossil. The shift to a real-time analytics platform isn’t just a technical upgrade; it’s a survival strategy in an era of instant gratification and automated threats.

Consider this: 86 per cent of IT leaders now prioritize streaming data as a strategic imperative. Why? Because the ROI is undeniable. Forty-four per cent of enterprises report a massive fivefold ROI on their streaming analytics investments. When we talk about advanced analytics solutions, we are talking about moving from a reactive posture—where you analyze why something went wrong—to a proactive one, where you prevent the failure from happening in the first place.

Batch processing is discontinuous and periodic. It works for payroll or monthly tax filings, but it fails for patient safety, cybersecurity, and supply chain logistics. If a clinical trial participant experiences a severe adverse reaction, waiting for a weekly batch report is unacceptable and potentially unethical. We need to see that signal the moment it hits the database. Real-time platforms handle input, output, and processing simultaneously, allowing us to watch the “cogs and sprockets” of our organization work in unison. This is often referred to as “Data in Motion,” a paradigm where data is treated as a continuous stream of events rather than a collection of static files.

Core Components of a Real-time Analytics Platform

To build a system that breathes with your data, you need more than just a fast database. You need a coordinated ecosystem that can handle the “Three Vs” of big data: Volume, Velocity, and Variety. The architecture of a modern real-time analytics platform typically includes:

- Event Ingestion: This is the “front door.” Tools like Apache Kafka, Redpanda, Amazon Kinesis, or Google Cloud Pub/Sub act as the backbone for data in motion, connecting producers (like IoT sensors or web logs) to consumers. This layer must also cope with “backpressure”: if the processing engine slows down, the ingestion layer buffers the data durably instead of losing it.

- Stream Processing: This is the “brain.” Engines like Apache Flink, Spark Streaming, or RisingWave perform continuous SQL queries on data as it flows. This layer handles “stateful” processing, which allows the system to remember previous events (e.g., “Has this credit card been used in three different cities in the last ten minutes?”).

- Change Data Capture (CDC): A critical sub-component that monitors your primary databases (like Postgres or MongoDB) and streams any changes (inserts, updates, deletes) to the analytics platform in real-time. This ensures your analytics are always in sync with your operational systems.

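- Storage Layers: For lightning-fast retrieval, many systems use stores built on a log-structured merge-tree (LSM tree), such as Bigtable, or columnar engines with a similar merge-based design, such as ClickHouse. Bigtable-style stores favor single-row lookups, while ClickHouse-style engines favor analytical scans over time-series data, allowing users to query billions of rows in seconds or less.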

- Serving APIs and Webhooks: This layer turns raw data into something a dashboard, a mobile app, or an automated system can use. It provides the interface for “push” notifications, where the system tells the user something has happened, rather than the user having to ask.

- Unified Governance: In our experience at Lifebit, this is the most critical piece. You need strong data intelligence to ensure that while data moves fast, it remains secure and compliant with regulations like GDPR, HIPAA, or the UK Data Protection Act. Governance must be “baked in” to the stream, with automated PII (Personally Identifiable Information) masking and lineage tracking.
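The “stateful” question from the Stream Processing component (has this card been used in three different cities in the last ten minutes?) can be sketched in a few lines of Python. This is a simplified, single-process illustration rather than the API of Flink or any other engine; the class name `CardCityTracker`, the constants, and the event fields are hypothetical.

```python
from collections import defaultdict, deque

WINDOW_SECONDS = 600  # ten minutes, as in the example above
CITY_LIMIT = 3        # distinct cities that trigger a flag


class CardCityTracker:
    """Keeps per-card state so each new event can be judged against recent ones."""

    def __init__(self):
        # card_id -> deque of (timestamp, city), oldest first
        self._recent = defaultdict(deque)

    def observe(self, card_id, timestamp, city):
        """Record one transaction and return True if the card should be flagged."""
        history = self._recent[card_id]
        history.append((timestamp, city))
        # Forget transactions older than the window.
        while history and history[0][0] < timestamp - WINDOW_SECONDS:
            history.popleft()
        distinct_cities = {c for _, c in history}
        return len(distinct_cities) >= CITY_LIMIT


tracker = CardCityTracker()
flags = [
    tracker.observe("card-1", 0, "London"),
    tracker.observe("card-1", 120, "Paris"),
    tracker.observe("card-1", 300, "Berlin"),   # third city within ten minutes
    tracker.observe("card-2", 310, "London"),   # different card, separate state
    tracker.observe("card-1", 1000, "London"),  # earlier events have expired
]
print(flags)  # [False, False, True, False, False]
```

A production engine would additionally persist this state and checkpoint it for fault tolerance; the sketch only shows why the processor has to remember earlier events at all.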

How to Identify and Collect the Right Data

Not all data is created equal. If you try to stream everything—every single system log and minor heartbeat—you’ll end up with a “data swamp” that moves at the speed of a glacier and costs a fortune in cloud egress fees. The key is to align your collection strategy with specific business goals.

Start by identifying high-value telemetry. For a pharmaceutical company, this might be real-time patient monitoring data from wearable devices during a Phase II trial. For a financial firm, it’s transaction logs and login attempts. We often recommend combining internal organizational data with external industry trends for a well-rounded view. For example, a logistics company might combine their real-time truck GPS data with live weather and traffic feeds to optimize routes on the fly. To dive deeper into how to manage these massive volumes without losing control, check out our Big Data Analytics Complete Guide.

Cut Fraud by 60% Using a Real-time Analytics Platform

The most compelling reason to adopt a real-time analytics platform is the immediate, measurable impact on the bottom line. Whether it’s preventing a $1 million fraud event, optimizing a supply chain to save thousands in fuel, or identifying a life-saving drug interaction, speed equals value. In the digital age, the first mover doesn’t just win the market; the fastest responder wins the trust of the customer.

Take fraud detection: one financial services firm cut its detection time from an hour to seconds by implementing a streaming architecture. This drove a 60 per cent reduction in account takeovers. In the old batch-based world, a fraudster could drain an account and disappear before the nightly report even flagged the suspicious activity. With real-time analytics, the platform can block a suspicious purchase before the click on the “Confirm” button has even finished processing. Beyond security, real-time insights drive operational efficiency and predictive maintenance, allowing us to fix a machine (or a clinical trial protocol) before it breaks. This is the essence of Real-time Evidence Generation.

Real-time Analytics Platform Applications in Healthcare and Finance

In the sectors we serve, the stakes are at their highest. Real-time Healthcare Analytics allows clinicians to monitor patient health trajectories in real-time, enabling early intervention for sepsis or cardiac arrest. Sepsis, for example, is a condition where every hour of delay in treatment increases the risk of death by nearly 8%. A real-time platform can aggregate heart rate, blood pressure, and lab results to flag a sepsis risk hours before physical symptoms become obvious to a human observer.

In drug safety, Real-time Pharmacovigilance is a game-changer. Instead of waiting months for post-market surveillance reports to trickle in from doctors, regulatory agencies can now analyze real-world data streams from pharmacies and hospitals to detect safety signals instantly. By integrating Real-time Patient Insights, we can personalize treatment plans based on how a patient is responding to a drug right now, not how they responded at their last check-up three months ago. This allows for “N-of-1” clinical trials where the protocol can be adjusted dynamically for the individual.

Real-time Personalization and Retail

In the retail and e-commerce space, real-time analytics platforms power the recommendation engines we use every day. But it goes deeper than just suggesting a pair of shoes. Modern platforms use “sessionization” to understand a user’s intent in real-time. If a user is looking at winter coats and then switches to searching for umbrellas, the platform can instantly update the homepage to show rain gear, increasing conversion rates by up to 20%. This requires the platform to process clickstream data, inventory levels, and user history simultaneously to deliver a coherent, personalized experience.
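As a rough illustration of sessionization, the Python sketch below groups clickstream events into sessions by splitting whenever a user has been inactive for longer than a timeout. The 30-minute gap, the `(user_id, timestamp, page)` event shape, and the function name `sessionize` are assumptions for the example; a streaming engine would do the same thing incrementally with session windows.

```python
from typing import Dict, List, Tuple

SESSION_GAP_SECONDS = 1800  # assumed 30-minute inactivity timeout


def sessionize(clicks: List[Tuple[str, int, str]]) -> Dict[str, List[List[str]]]:
    """Group (user_id, timestamp, page) clicks into per-user lists of sessions."""
    sessions: Dict[str, List[List[str]]] = {}
    last_seen: Dict[str, int] = {}
    for user, ts, page in sorted(clicks, key=lambda c: c[1]):
        # Start a new session for a new user or after a long silence.
        if user not in sessions or ts - last_seen[user] > SESSION_GAP_SECONDS:
            sessions.setdefault(user, []).append([])
        sessions[user][-1].append(page)
        last_seen[user] = ts
    return sessions


clicks = [
    ("u1", 0, "winter-coats"),
    ("u1", 60, "umbrellas"),
    ("u2", 100, "boots"),
    ("u1", 4000, "rain-jackets"),  # more than 30 minutes after u1's last click
]
result = sessionize(clicks)
print(result["u1"])  # [['winter-coats', 'umbrellas'], ['rain-jackets']]
```

The last page in the current session (`result["u1"][-1]`) is the kind of signal a homepage could react to when intent shifts from coats to rain gear.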

The Role of Visualizations and Live Dashboards

Data is only useful if it’s understood by the people who need to make decisions. Real-time dashboards act as the “flight deck” for your organization. They share complex analyses with non-technical teams through updating visualizations that highlight anomalies the moment they occur. A static PDF report is a post-mortem; a live dashboard is a diagnostic tool.

When we build Health Data Analytics Platforms for All, we focus on making insights actionable. A live dashboard shouldn’t just show a pretty graph; it should trigger an alert when a threshold is crossed. For example, if a genomic sequencing run starts producing low-quality reads, the lab manager should see a red flash on their screen immediately, allowing them to stop the run and save expensive reagents. This continuous monitoring ensures that the user experience—whether it’s a researcher looking for a genomic variant or a doctor checking a patient’s status—is always informed by the freshest possible data.
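The “alert when a threshold is crossed” behavior can be sketched as follows. This minimal Python example fires only on the transition into the bad state, so a dashboard does not repeat the same alert for every subsequent reading. The quality scores and the minimum of 20 are made-up values for illustration, not a recommended sequencing threshold.

```python
from typing import Iterable, Iterator, Tuple


def threshold_alerts(
    readings: Iterable[Tuple[int, float]], minimum: float
) -> Iterator[Tuple[int, float]]:
    """Yield (timestamp, value) each time the metric newly drops below `minimum`."""
    below = False  # whether the previous reading was already below the threshold
    for ts, value in readings:
        if value < minimum and not below:
            yield ts, value  # crossing detected: raise one alert
        below = value < minimum


# Hypothetical per-minute read-quality scores from a sequencing run.
quality = [(0, 35.0), (1, 34.0), (2, 18.0), (3, 17.0), (4, 33.0), (5, 15.0)]
alerts = list(threshold_alerts(quality, minimum=20.0))
print(alerts)  # [(2, 18.0), (5, 15.0)]
```

In a real deployment the `yield` would typically be replaced by a webhook call or a message to an alerting service.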

Stop Data Silos: 5 Must-Haves for Your Real-time Analytics Platform

Let’s be honest: moving to real-time isn’t always a walk in the park. There are significant hurdles, from the “skills gap” in finding engineers who understand streaming SQL and distributed systems to the sheer cost of high-velocity storage and cloud networking. Many organizations attempt to build these systems in-house, only to find that the complexity of managing a distributed cluster of processing nodes is a full-time job in itself.

A major challenge is data silos. Often, the data you need is locked away in different departments, different cloud providers, or even different countries with strict data residency laws. This is where Federated Data Analysis becomes essential. It allows you to analyze data where it resides, avoiding the risk, cost, and latency of moving massive datasets across borders—a common pain point for our clients in the UK, USA, and Europe. In a federated model, the real-time analytics platform acts as an orchestration layer, sending the computation to the data rather than pulling the data to a central hub.
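The idea of sending the computation to the data can be illustrated with a small Python sketch: each site computes a local summary, and only those summaries travel to the orchestrator. This is a toy model under stated assumptions, not Lifebit's implementation; the site names, the `LocalSummary` type, and both functions are hypothetical, and a real federated system would add authentication, disclosure controls, and auditing.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class LocalSummary:
    """Aggregate that leaves a site; no row-level data is included."""
    count: int
    total: float


def summarize_locally(values: List[float]) -> LocalSummary:
    """Runs inside each site's environment, next to the data."""
    return LocalSummary(count=len(values), total=sum(values))


def combine(summaries: List[LocalSummary]) -> float:
    """Runs in the orchestration layer and sees only the summaries."""
    n = sum(s.count for s in summaries)
    if n == 0:
        raise ValueError("no records reported by any site")
    return sum(s.total for s in summaries) / n


# Hypothetical measurements held at three sites.
sites = {
    "london": [70.0, 80.0],
    "new_york": [90.0],
    "singapore": [60.0, 100.0, 80.0],
}
summaries = [summarize_locally(values) for values in sites.values()]
global_mean = combine(summaries)
print(global_mean)  # 80.0
```

The global mean is identical to what a centralized query would return, but the individual records never cross a border.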

Critical Criteria for Selecting Your Real-time Analytics Platform

When you’re evaluating a real-time analytics platform, don’t get distracted by “vanity metrics” like raw ingestion speed (e.g., “we can ingest 10 million events per second”). While ingestion is important, what matters is the end-to-end latency from event occurrence to actionable insight. Look for these core pillars:

- Cloud-native and Elastic Architecture: The platform must scale elastically. Data traffic is rarely constant; it peaks and valleys. You don’t want to pay for peak capacity during quiet hours, nor do you want your system to crash during a Black Friday surge or a public health emergency. Look for “serverless” streaming options that scale automatically.

- SQL-first Interfaces: To lower the learning curve, your team should be able to use familiar SQL rather than learning complex new framework APIs in languages like Scala or Java. Modern platforms allow you to write `SELECT * FROM stream WHERE price > 100` just as you would with a traditional database.

- Exactly-once Processing (EOP): In finance and healthcare, “close enough” isn’t good enough. If you are calculating a patient’s dosage or a bank balance, you cannot afford to process an event twice or skip it entirely. You need strong guarantees that every event takes effect exactly once to maintain data integrity.

- Latency SLAs: Look for platforms that guarantee sub-second end-to-end latency. This includes the time for ingestion, transformation, and making the data available for query. If the latency is over 10 seconds, you are moving back into the realm of “near-real-time,” which may not be sufficient for high-stakes use cases.

- Governance and Security Best Practices: Ensure the platform adheres to Data Lakehouse Best Practices for security, encryption at rest and in transit, and detailed audit logging. In the age of AI, knowing the “lineage” of your data—where it came from and how it was transformed—is vital for regulatory compliance.
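One common way to get exactly-once effects in practice is to accept at-least-once delivery and make the consumer idempotent, so that a redelivered event changes nothing. The Python sketch below shows that pattern in its simplest form; it is an assumption-laden illustration (the class name `IdempotentLedger` and the event IDs are invented, and the set of seen IDs lives in memory), not the mechanism of any specific platform.

```python
class IdempotentLedger:
    """Applies each event at most once, keyed by a unique event ID."""

    def __init__(self):
        self.balance = 0
        self._seen = set()  # IDs of events already applied

    def apply(self, event_id, amount):
        """Apply the event and return True, or return False if it is a duplicate."""
        if event_id in self._seen:
            return False  # redelivery: ignore so the balance is not double-counted
        self._seen.add(event_id)
        self.balance += amount
        return True


ledger = IdempotentLedger()
# The broker redelivers e1 and e2, as can happen after a retry or failover.
deliveries = [("e1", 100), ("e2", -30), ("e1", 100), ("e3", 5), ("e2", -30)]
applied = [ledger.apply(event_id, amount) for event_id, amount in deliveries]
print(applied)         # [True, True, False, True, False]
print(ledger.balance)  # 75
```

For this to survive a crash, the seen-ID set and the balance would need to be stored and updated together in one transaction, which is the part that real engines handle for you.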

Combining Real-time and Traditional Analytics for a 360-Degree View

Real-time analytics shouldn’t replace your historical data; it should dance with it. The classic pattern for this is the “Lambda” architecture, which runs a batch layer and a streaming layer side by side, while the “Kappa” architecture simplifies it by treating everything as a stream. More recently, both have evolved into the “Lakehouse” approach. By pairing current transactional data with historical baselines, you get the full picture. For example, knowing a patient’s heart rate is 100 BPM is useful; knowing it’s 100 BPM and has risen 20% since yesterday while they are on a specific medication is actionable.
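The heart-rate example boils down to joining a live reading with a historical baseline. A minimal Python sketch of that arithmetic is shown here; the function names and the 15% rise threshold are hypothetical choices for illustration and carry no clinical meaning.

```python
def percent_change(current: float, baseline: float) -> float:
    """Relative change of the live value against the historical baseline, in percent."""
    if baseline == 0:
        raise ValueError("baseline must be non-zero")
    return (current - baseline) * 100 / baseline


def is_actionable(
    current_bpm: float,
    yesterday_bpm: float,
    on_medication: bool,
    rise_threshold_pct: float = 15.0,  # illustrative threshold, not clinical guidance
) -> bool:
    """Flag only when the live reading, the baseline, and the context line up."""
    rise = percent_change(current_bpm, yesterday_bpm)
    return on_medication and rise >= rise_threshold_pct


print(percent_change(120, 100))                      # 20.0
print(is_actionable(100, 83, on_medication=True))    # True
print(is_actionable(100, 83, on_medication=False))   # False
```

The live stream supplies `current_bpm`, while `yesterday_bpm` and the medication flag come from the historical store, which is why both systems need to be queryable together.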

This hybrid approach treats streaming and batching as first-class citizens. It allows you to run complex AI models on years of historical data to find patterns, and then feed those models live events for instant inference. For a deep dive into how this architecture works in practice, see our Data Lakehouse Ultimate Guide. By unifying these two worlds, organizations can avoid the “split-brain” problem where the real-time system says one thing and the historical warehouse says another.

2026 Guide: Power AI with a Federated Real-time Analytics Platform

The future of the real-time analytics platform is inextricably linked with Artificial Intelligence. Nearly 90 per cent of IT leaders are increasing their spend on streaming platforms specifically to power AI and real-time automation. We are moving away from “Human-in-the-loop” systems toward “Human-on-the-loop,” where AI handles the bulk of the real-time decisioning, and humans provide oversight.

We are moving toward “AI-native decisioning,” where generative AI agents don’t just show you a chart—they explain the narrative behind the stream. For instance, an AI copilot might alert a researcher: “I’ve detected a cluster of respiratory issues in this specific demographic that matches a pattern seen in a 2022 study; should I initiate a cohort analysis?” This requires the platform to have “Vector Search” capabilities, allowing the AI to compare real-time streams against vast libraries of unstructured data like medical journals or past research papers.

The Rise of Real-time RAG (Retrieval-Augmented Generation)

One of the most exciting developments in 2026 is Real-time RAG. Traditional Large Language Models (LLMs) are limited by their training data cutoff. By connecting an LLM to a real-time analytics platform, the model can “retrieve” the most current data before generating a response. Imagine a doctor asking an AI, “What is the current status of the clinical trial?” The AI doesn’t just give a general answer; it queries the live stream of patient data to provide an up-to-the-second summary. This reduces the risk of hallucinations about the current state and helps ensure that AI-driven decisions are based on reality, not history.
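The retrieval half of Real-time RAG can be sketched without any model at all: fetch the freshest matching records, then place them in the prompt. The Python example below does only that. The `LiveEventStore` class, the event fields (`trial_id`, `ts`, `summary`), and the prompt wording are all hypothetical, and the call to an actual LLM is deliberately left out.

```python
from collections import deque
from typing import Deque, Dict, List


class LiveEventStore:
    """Bounded in-memory buffer standing in for the platform's live stream."""

    def __init__(self, max_events: int = 1000):
        self._events: Deque[Dict] = deque(maxlen=max_events)

    def ingest(self, event: Dict) -> None:
        self._events.append(event)

    def latest(self, trial_id: str, limit: int = 3) -> List[Dict]:
        """Return up to `limit` most recent events for one trial, oldest first."""
        matches = [e for e in self._events if e["trial_id"] == trial_id]
        return matches[-limit:]


def build_prompt(question: str, events: List[Dict]) -> str:
    """Put retrieved live records ahead of the question so the model can ground on them."""
    context = "\n".join(f"- t={e['ts']}: {e['summary']}" for e in events)
    return f"Answer using only the live records below.\n{context}\nQuestion: {question}"


store = LiveEventStore()
store.ingest({"trial_id": "T1", "ts": 1, "summary": "site A enrolled 40 patients"})
store.ingest({"trial_id": "T2", "ts": 2, "summary": "unrelated trial update"})
store.ingest({"trial_id": "T1", "ts": 3, "summary": "2 adverse events reported"})

prompt = build_prompt(
    "What is the current status of the clinical trial?", store.latest("T1")
)
print(prompt)
```

The resulting string would be passed to whichever model the organization uses; the freshness of the answer depends entirely on how current the store is at retrieval time.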

As we deploy more AI Healthcare Solutions, the convergence of edge computing and federated architectures will allow us to run these powerful models locally on hospital servers or mobile devices. This “Edge AI” approach keeps data private while delivering insights at the speed of thought. For example, a wearable device could run a local ML model to detect an arrhythmia and only stream the relevant alert to the cloud, saving bandwidth and protecting privacy. The real-time analytics platform of the future will be the central nervous system that connects these edge devices to global intelligence networks, ensuring that every node in the system is as smart as the whole.

Real-time Analytics Platform: Your Top Questions Answered

What is the difference between real-time and batch processing?

Batch processing collects data over a period (minutes, hours, or days) and processes it in chunks. It is efficient for massive volumes of historical data where time is not a factor. A real-time analytics platform processes data as it is generated, providing insights in milliseconds or seconds. Batch is for understanding history; real-time is for taking immediate action. Think of batch as a digital photo and real-time as a live video stream.

How does a real-time analytics platform improve fraud detection?

By analyzing transaction patterns as they happen, real-time platforms can compare a current transaction against a user’s historical behavior and global fraud patterns simultaneously. If a transaction is flagged, it can be blocked or sent for multi-factor authentication before the money ever leaves the account. Batch systems can only tell you that you were robbed yesterday, which is often too late for recovery.

Why is federated analysis important for real-time insights?

In highly regulated industries like healthcare and pharma, data cannot always be moved to a central hub due to laws like GDPR or the need to keep sensitive genomic data secure. Federated analysis allows a real-time analytics platform to send the query to the data, wherever it lives. This ensures compliance and reduces the time spent on data transfer, while still delivering instant results across global locations like London, New York, and Singapore.

What are the most common industries using real-time analytics?

While finance and healthcare are the leaders, we see massive adoption in:

- Manufacturing: For predictive maintenance and monitoring assembly line efficiency.

- Retail: For dynamic pricing and personalized marketing.

- Logistics: For real-time fleet tracking and route optimization.

- Cybersecurity: For detecting and neutralizing threats as they enter the network.

- Energy: For balancing the power grid in response to real-time demand fluctuations.

Is a real-time analytics platform expensive to maintain?

While the initial setup and cloud costs for high-velocity data can be higher than traditional batch systems, the ROI usually outweighs the cost. By preventing fraud, reducing downtime, and improving customer conversion, the platform often pays for itself within the first year. Using cloud-native, serverless architectures can also help manage costs by only charging for the data processed.

Start Your Real-time Analytics Platform Journey Today

The era of waiting for data is over. In a world that moves at the speed of light, speed to insight is now the table stakes for any organization that wants to remain competitive, resilient, and relevant. Whether you are monitoring global health trends, securing financial transactions, or optimizing a complex supply chain, a real-time analytics platform is the engine that turns data velocity into strategic advantage.

At Lifebit, we are committed to helping you navigate this transition. We believe that the most powerful insights shouldn’t require compromising on security or compliance. By combining federated governance with high-performance real-time processing, we ensure that your most sensitive data stays secure while providing the immediate, actionable insights you need to save lives, drive innovation, and lead your industry into the future.

Ready to see your data in motion?

Explore the Lifebit Platform