Why a Federated Analytics Platform is the Ultimate Data Peace Treaty

97% of Enterprise Data Is Trapped. A Federated Analytics Platform Is the Key Out.

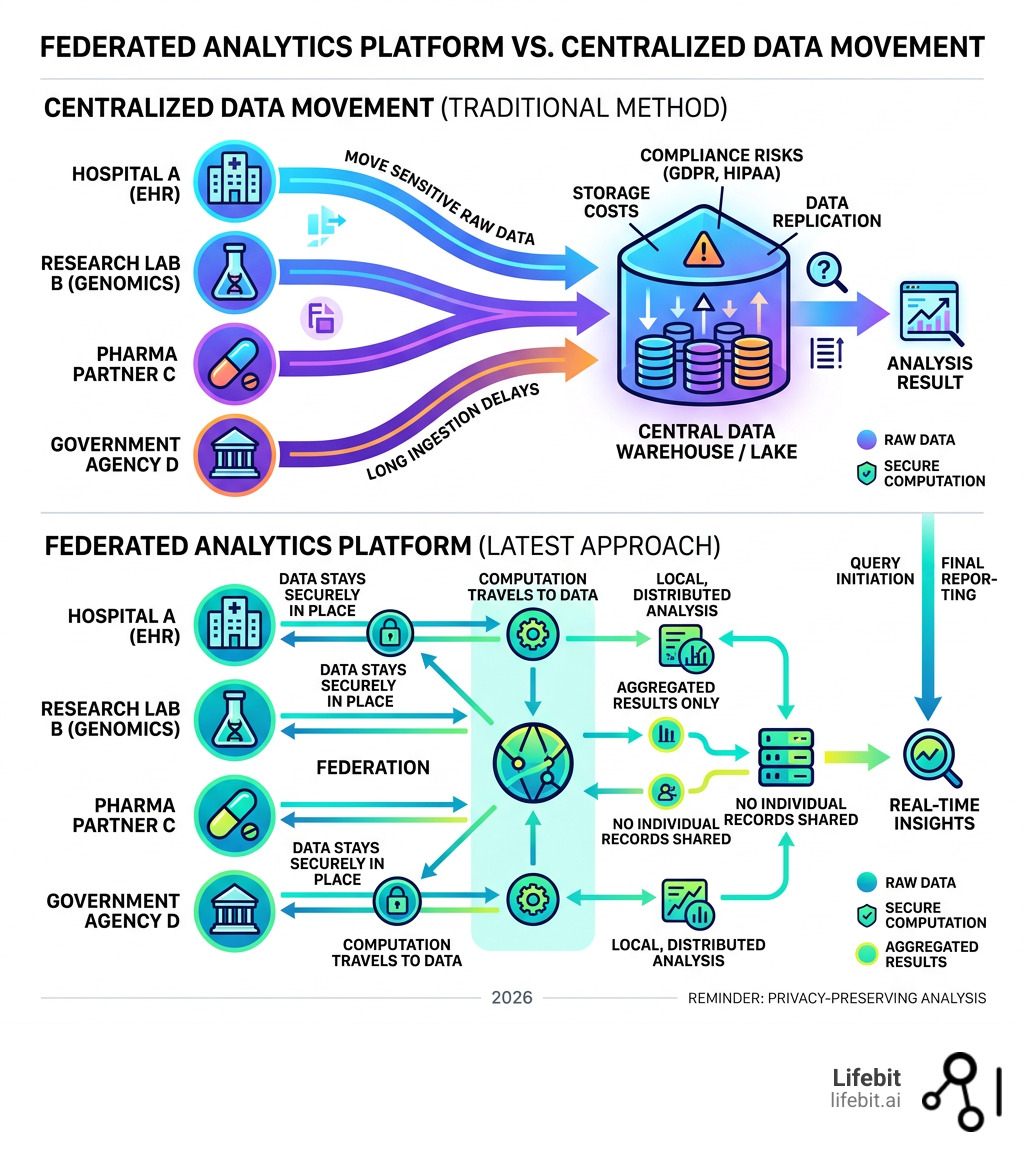

A federated analytics platform is a system that lets you run queries and analytics across multiple, distributed data sources — without ever moving the data. Instead of copying sensitive records into a central warehouse, the computation travels to the data. Results come back. The raw data stays put.

Here’s what that means in practice:

| What You Want | What a Federated Analytics Platform Does |
|---|---|
| Query EHR data across 10 hospitals | Sends the query to each site; aggregates results only |
| Comply with GDPR & HIPAA | Data never leaves its original jurisdiction |
| Get real-time insights | No ingestion delays: query live, in-place data |
| Reduce infrastructure cost | No replication, no centralized storage overhead |
| Unify siloed datasets | Single semantic layer across heterogeneous sources |

Nearly 97% of enterprise data remains untapped, locked inside disconnected systems that can’t talk to each other. For pharma companies, public health agencies, and research institutions, this isn’t just a technical headache — it’s a direct barrier to faster drug discovery, real-time pharmacovigilance, and evidence-based policy decisions.

The old answer was to move everything into one place. Build a warehouse. Centralize. Control.

That answer is breaking down. Data volumes are exploding. Privacy regulations are tightening. Cross-border data transfers are increasingly restricted. Moving data is slow, expensive, and — in regulated industries — often simply not allowed.

A federated analytics platform resolves this tension. It turns “you can’t share that data” into “you don’t need to.”

I’m Dr. Maria Chatzou Dunford, CEO and Co-founder of Lifebit, and I’ve spent over 15 years building computational tools at the intersection of genomics, AI, and federated data infrastructure — including foundational work on Nextflow, the workflow framework now used globally for genomic data analysis. At Lifebit, our entire platform is built on the principle that a federated analytics platform should make distributed, privacy-preserving analysis as simple as working with centralized data. In this guide, I’ll walk you through exactly how it works and why it matters.


What is a Federated Data Platform and How Does it Work?

At its core, a federated data platform is an architecture designed for decentralized analysis. In a traditional setup, you have to move data from Point A to a central Point B to study it. In a federated model, Point A keeps the data, and the analysis “visits” it. This shift is critical for maintaining data sovereignty. When data stays with its original custodian (whether that’s a hospital in London, a genomic repository in New York, or a government health agency in Singapore), the owner retains full control over who sees what.

This is exactly why the NHS Federated Data Platform is so transformative. Instead of creating one giant, vulnerable “mega-database” of patient records, it connects existing systems so clinicians and researchers can get answers without compromising patient privacy. The NHS FDP is designed to help Integrated Care Systems (ICSs) manage elective recovery, care coordination, and vaccination programs by providing a unified view of data that remains physically stored in local trust environments. The beauty of federation is that it bridges the gap between needing to know and needing to protect. It allows organizations to act as a unified ecosystem while respecting the physical and legal boundaries of the data.

The ‘Query, Don’t Move’ Principle in a Federated Analytics Platform

If we had to sum up our philosophy in three words, it would be: Query, don’t move. This approach addresses the growing problem of Data Gravity. As datasets grow to petabyte scale, the cost, time, and risk of moving them become prohibitive. Data gravity describes how accumulating data attracts applications and services, which makes the data ever harder to relocate. A federated analytics platform flips this script by bringing the compute to the data.
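Some rough numbers make data gravity concrete. The sketch below is a back-of-envelope calculation only. It assumes a hypothetical, optimistic sustained link of 1 Gbit/s with no protocol overhead, and it uses decimal units, so 10 TB is 10^13 bytes.

```python
def transfer_seconds(size_bytes: float, link_bits_per_s: float) -> float:
    """Idealised transfer time: ignores protocol overhead, retries and contention."""
    return size_bytes * 8 / link_bits_per_s

GBIT = 1e9  # hypothetical sustained link speed: 1 Gbit/s

dataset_hours = transfer_seconds(10e12, GBIT) / 3600   # a 10 TB genomic dataset
query_millis = transfer_seconds(4e3, GBIT) * 1000       # a ~4 KB query payload

print(f"move the data: {dataset_hours:.1f} h")    # move the data: 22.2 h
print(f"move the query: {query_millis:.3f} ms")   # move the query: 0.032 ms
```

Real links are rarely this clean, so actual transfer times are usually longer than the ideal. The gap of roughly nine orders of magnitude between the two payloads is the core of the argument.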

In federated analytics, the “algorithm-to-data” approach is the star of the show. Instead of pulling 10 terabytes of genomic data over a network to your local server, you send a tiny query (a few kilobytes of code) to where those 10 TB live. The local server does the heavy lifting, calculates the result, and sends back only the answer, such as a yes/no or a statistical mean. This preserves data residency, ensuring that sensitive information never crosses a digital border. It’s a powerful privacy-preserving move because the raw, identifiable records are never exposed to the outside world.
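Here is a minimal sketch of the algorithm-to-data idea. The site names, the in-memory `SITE_DATA` dictionary, and the helper functions are all hypothetical stand-ins for real remote nodes. Only a `(sum, count)` pair per site ever reaches the coordinator, yet the pooled mean is exact.

```python
from typing import Callable, Dict, List, Tuple

# Hypothetical per-site records; in a real deployment these never leave each node.
SITE_DATA: Dict[str, List[float]] = {
    "site_a": [52.0, 61.0, 47.0],
    "site_b": [70.0, 58.0],
    "site_c": [49.0, 55.0, 63.0, 41.0],
}

Task = Callable[[List[float]], Tuple[float, int]]

def local_summary(values: List[float]) -> Tuple[float, int]:
    """The 'algorithm' shipped to each site: returns (sum, count), not rows."""
    return sum(values), len(values)

def run_at_site(site: str, task: Task) -> Tuple[float, int]:
    # Stand-in for sending `task` to the remote node and executing it there.
    return task(SITE_DATA[site])

def federated_mean(sites: List[str]) -> float:
    partials = [run_at_site(s, local_summary) for s in sites]
    total = sum(p[0] for p in partials)
    count = sum(p[1] for p in partials)
    return total / count
```

Calling `federated_mean(list(SITE_DATA))` gives the same answer as averaging all nine records centrally, but the coordinator only ever sees three pairs of numbers. Small per-site aggregates can still reveal something about individuals, so production systems usually add further disclosure controls on top of this pattern.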

Step-by-Step: How a Federated Query Executes

How does this actually look under the hood? It’s not magic; it’s coordinated engineering. Here is the typical lifecycle of a query on a federated data exchange platform:

- The User Request: A researcher writes a query (often in SQL or Python) via a central interface. This interface provides a global view of available datasets without revealing the underlying raw records.

- The Semantic Layer: The platform translates this query. Different databases might record a patient’s age as “Age” or “Patient_Yrs,” or store only a date of birth (“DOB”) from which age must be derived. The semantic layer harmonizes these terms using shared standards such as the OMOP Common Data Model or HL7 FHIR, so the query makes sense to every source.

- The Query Coordinator: The platform breaks the big query into smaller “sub-queries” tailored for each connected data source, accounting for the specific database engine (e.g., PostgreSQL, BigQuery, or Snowflake) at each site.

- Predicate Pushdown: This is a fancy way of saying we tell the remote database to filter the data before doing anything else. If you only want data from patients over 50, the remote site filters that first, saving massive amounts of computing power and reducing the volume of data processed.

- Parallel Execution: The sub-queries run simultaneously across all sites. No waiting in line. This distributed processing often results in faster performance than a single centralized server could achieve.

- Result Aggregation: The platform collects the tiny “answer packets” from each site and merges them into a single, comprehensive report for the user, ensuring that no PII (Personally Identifiable Information) is included in the final output.
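The lifecycle above can be sketched end to end, with Python's built-in `sqlite3` standing in for each site's database engine. Everything here is hypothetical and simplified:

- Two in-memory "hospitals" name the same concept differently.
- A toy `SEMANTIC_MAP` plays the semantic layer.
- The age filter is pushed down into each site's SQL.
- Sub-queries run in parallel threads.
- Only a count and a sum per site are aggregated.

```python
import sqlite3
from concurrent.futures import ThreadPoolExecutor
from typing import Dict, List, Tuple

# Hypothetical semantic layer: canonical field -> (table, column) at each site.
SEMANTIC_MAP: Dict[str, Dict[str, Tuple[str, str]]] = {
    "hospital_a": {"age": ("patients", "Age")},
    "hospital_b": {"age": ("encounters", "Patient_Yrs")},
}

def make_site(table: str, column: str, ages: List[int]) -> sqlite3.Connection:
    # In-memory SQLite stands in for each site's own database.
    conn = sqlite3.connect(":memory:", check_same_thread=False)
    conn.execute(f"CREATE TABLE {table} (id INTEGER PRIMARY KEY, {column} INTEGER)")
    conn.executemany(f"INSERT INTO {table} ({column}) VALUES (?)", [(a,) for a in ages])
    return conn

SITES = {
    "hospital_a": make_site("patients", "Age", [34, 51, 67, 72]),
    "hospital_b": make_site("encounters", "Patient_Yrs", [45, 58, 80]),
}

def build_subquery(site: str, field: str) -> str:
    # Query coordinator: translate the canonical field and push the filter down,
    # so the site scans only matching rows and returns just two numbers.
    table, column = SEMANTIC_MAP[site][field]
    return f"SELECT COUNT(*), COALESCE(SUM({column}), 0) FROM {table} WHERE {column} > ?"

def run_subquery(site: str, field: str, threshold: int) -> Tuple[int, int]:
    count, total = SITES[site].execute(build_subquery(site, field), (threshold,)).fetchone()
    return count, total

def federated_avg_over(field: str, threshold: int) -> Tuple[int, float]:
    # Parallel execution: one worker per site, then aggregate the answer packets.
    with ThreadPoolExecutor(max_workers=len(SITES)) as pool:
        partials = list(pool.map(lambda s: run_subquery(s, field, threshold), SITES))
    n = sum(c for c, _ in partials)
    total = sum(t for _, t in partials)
    return n, (total / n if n else float("nan"))
```

For example, `federated_avg_over("age", 50)` counts and averages patients over 50 across both sites. Table and column names are interpolated only from the trusted semantic map, never from user input, while the threshold travels as a bound parameter.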

Architecture and Components of a Federated Analytics Platform

Building a federated analytics platform requires a few “must-have” components to ensure it doesn’t just work, but works securely and efficiently across heterogeneous environments.

According to our ultimate guide to federated data platforms, a robust architecture includes:

- Data Connectors: These are the “translators” that allow the platform to talk to everything from legacy SQL databases to modern cloud buckets. They handle the authentication and protocol translation required to access data in situ.

- Common Data Model (CDM): A unified language (like OMOP) that ensures data from a lab in Canada looks the same as data from a clinic in Europe. Without a CDM, federated queries would fail due to structural inconsistencies.

- Orchestration Engine: The “brain” that manages where queries go, monitors the health of remote nodes, and ensures that results return in a timely manner.

- Metadata Catalog: A centralized repository that stores information about what data exists where, its schema, and its provenance, without storing the data itself.
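The metadata catalog and orchestration engine work together. The catalog knows where data lives, and the orchestrator decides which nodes receive a query. The sketch below is a hypothetical illustration of that split, not any product's API. The catalog stores only schema and provenance. The `healthy` set is assumed to be maintained by some heartbeat mechanism, which is not shown.

```python
from dataclasses import dataclass, field
from typing import Dict, List, Set

@dataclass
class CatalogEntry:
    # Metadata only: where a dataset lives, its schema and provenance; no records.
    dataset: str
    site: str
    schema: Dict[str, str]
    provenance: str

@dataclass
class MetadataCatalog:
    entries: List[CatalogEntry] = field(default_factory=list)

    def register(self, entry: CatalogEntry) -> None:
        self.entries.append(entry)

    def sites_for(self, dataset: str, required_fields: Set[str]) -> List[str]:
        # Sites that hold the dataset and expose every field the query needs.
        return [e.site for e in self.entries
                if e.dataset == dataset and required_fields.issubset(e.schema)]

@dataclass
class Orchestrator:
    catalog: MetadataCatalog
    healthy: Set[str] = field(default_factory=set)  # assumed fed by heartbeats

    def route(self, dataset: str, required_fields: Set[str]) -> List[str]:
        # Dispatch only to nodes that have the data and are currently reachable.
        return [s for s in self.catalog.sites_for(dataset, required_fields)
                if s in self.healthy]
```

Keeping routing separate from storage means a node can go offline or join the network without any data moving. Only the catalog entry and the health status change.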

To understand why this is different from what you might already use, look at this comparison:

| Feature | Federated Platform | Data Warehouse | Data Lakehouse |
|---|---|---|---|
| Data Location | Decentralized (stays at source) | Centralized (copied) | Centralized (stored) |
| Movement Cost | Zero to Low | High (ETL processes) | Moderate |
| Real-time Access | High (queries live data) | Low (delayed by ingestion) | Moderate |
| Compliance | Built-in (Data Sovereignty) | Complex (Requires transfer) | Complex |

Security, Governance, and Compliance (GDPR & HIPAA)

When you are dealing with biomedical data across five continents, “good enough” security doesn’t cut it. A federated analytics platform must implement federated data governance by design. This involves a “Zero Trust” approach where every access request is verified, regardless of where it originates.

We use Role-Based Access Control (RBAC) to ensure that a researcher only sees what their credentials allow. But we go further with differential privacy. It adds carefully calibrated statistical noise to results, so the presence or absence of any single individual has only a small, mathematically bounded effect on what is released. That makes re-identification from the output impractical. For example, if a query asks for the average age of patients with a rare disease at a specific site, differential privacy keeps the answer accurate enough for research but vague enough to protect individual identities.
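The rare-disease example can be sketched with the standard Laplace mechanism for a bounded mean. This is a textbook construction, not necessarily the exact mechanism any given platform uses. It rests on these assumptions:

- Values are clipped to a declared range.
- The record count n is treated as public.
- Neighbouring datasets differ by replacing one record, so the sensitivity of the mean is (upper - lower) / n.

The `dp_mean` name and its parameters are hypothetical.

```python
import random
from typing import List, Optional

def laplace_noise(scale: float, rng: random.Random) -> float:
    # The difference of two i.i.d. exponentials with mean `scale` is Laplace(0, scale).
    return rng.expovariate(1.0 / scale) - rng.expovariate(1.0 / scale)

def dp_mean(values: List[float], lower: float, upper: float, epsilon: float,
            rng: Optional[random.Random] = None) -> float:
    """Epsilon-DP mean, assuming n is public and one record is replaced at a time."""
    if not values:
        raise ValueError("need at least one record")
    if epsilon <= 0 or upper <= lower:
        raise ValueError("epsilon must be > 0 and upper must exceed lower")
    rng = rng or random.Random()
    clipped = [min(max(v, lower), upper) for v in values]  # bound each person's influence
    n = len(clipped)
    sensitivity = (upper - lower) / n
    return sum(clipped) / n + laplace_noise(sensitivity / epsilon, rng)
```

Consider five patients, ages clipped to [0, 120], and `epsilon = 1`. The noise scale is 120 / 5 = 24 years. This shows why tiny cohorts and strong privacy guarantees pull in opposite directions, and why the privacy budget has to be chosen per use case.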

Every single action is logged in immutable audit trails, providing a clear record for regulators. For a deeper dive into how we manage this, check out our complete guide to federated governance. It’s about creating a “Trust, but Verify” environment where data owners feel safe sharing access because they retain the “kill switch” over their own data nodes.
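One common way to make an audit trail tamper-evident is a hash chain. Each entry commits to the hash of the one before it, so altering any entry breaks verification for everything after it. The `AuditLog` class below is a hypothetical sketch of that technique. It omits timestamps, signatures, and external anchoring of the latest hash, which a production log would need to also detect truncation of the tail.

```python
import hashlib
import json
from dataclasses import dataclass, field
from typing import Dict, List

GENESIS = "0" * 64  # placeholder "previous hash" for the first entry

def _digest(body: Dict[str, str]) -> str:
    return hashlib.sha256(json.dumps(body, sort_keys=True).encode()).hexdigest()

@dataclass
class AuditLog:
    """Append-only, hash-chained log. Editing or removing any entry before the
    last one breaks verify(); dropping the final entry needs an external anchor."""
    entries: List[Dict[str, str]] = field(default_factory=list)

    def append(self, actor: str, action: str, target: str) -> str:
        prev = self.entries[-1]["hash"] if self.entries else GENESIS
        body = {"actor": actor, "action": action, "target": target, "prev": prev}
        digest = _digest(body)
        self.entries.append({**body, "hash": digest})
        return digest

    def verify(self) -> bool:
        prev = GENESIS
        for e in self.entries:
            body = {k: e[k] for k in ("actor", "action", "target", "prev")}
            if e["prev"] != prev or _digest(body) != e["hash"]:
                return False
            prev = e["hash"]
        return True
```

A regulator, or any data owner, can rerun `verify()` independently. Trust therefore rests on recomputing hashes rather than on the platform operator's word.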

Performance Optimization and Technical Challenges

We won’t pretend federation is without its hurdles. When data is scattered, you face data heterogeneity (different formats) and network latency (slow connections between sites). To address these, a high-performing federated research environment uses:

- Intelligent Caching: Storing frequent query results (not the raw data!) to speed up future requests. This is particularly useful for static datasets like reference genomes.

- Materialized Views: Creating “pre-computed” summaries of data at the source. This allows the platform to answer complex questions by looking at a summary table rather than scanning billions of raw records.

- Parallel Execution: Using the combined power of every connected server to finish many tasks faster than a single central server could. In effect, the federated network behaves like one large, distributed compute cluster.
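Intelligent caching can be as simple as remembering aggregate answers for a fixed time-to-live. The `ResultCache` class below is a hypothetical sketch. It caches query *results*, never raw rows. It assumes the caller supplies a normalised query key. The injectable clock exists only so the expiry behaviour is easy to test.

```python
import time
from typing import Callable, Dict, Hashable, Tuple, TypeVar

T = TypeVar("T")

class ResultCache:
    """Caches aggregate query results for `ttl` seconds."""

    def __init__(self, ttl: float, clock: Callable[[], float] = time.monotonic):
        self.ttl = ttl
        self.clock = clock
        self._store: Dict[Hashable, Tuple[float, object]] = {}

    def get_or_compute(self, key: Hashable, compute: Callable[[], T]) -> T:
        now = self.clock()
        hit = self._store.get(key)
        if hit is not None and now - hit[0] < self.ttl:
            return hit[1]  # type: ignore[return-value]  # fresh: skip the fan-out
        value = compute()  # e.g. re-run the federated query across all sites
        self._store[key] = (now, value)
        return value
```

For static references such as a reference genome, the TTL can be very long. For live clinical data, a short TTL keeps the "query live data" promise while still absorbing bursts of identical requests.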

Real-World Use Cases: From Healthcare to Finance

Federation isn’t a theoretical concept; it’s solving real problems today by unlocking data that was previously considered “unshareable” due to privacy or technical constraints.

In the financial world, a federated model for fraud detection allows banks to spot suspicious patterns across different institutions without sharing sensitive customer names or account details. If a “synthetic identity” is used to open accounts at three different banks, a federated query can flag the pattern in real time. This collaborative approach to Anti-Money Laundering (AML) can be considerably more effective than banks working in isolation, because it surfaces cross-institutional criminal activity that no single bank can see on its own.
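The three-bank example can be illustrated with keyed hashing (HMAC). Each bank turns normalised identity attributes into an opaque token, and the coordinator only counts how many banks report each token. This is a deliberately naive, exact-match sketch. It assumes the banks share a secret key that the coordinator never sees. Real deployments typically use stronger private set intersection protocols and fuzzier matching, because synthetic identities rarely match exactly. The field choices and function names are hypothetical.

```python
import hashlib
import hmac
from collections import Counter
from typing import Dict, Set

def identity_token(key: bytes, name: str, dob: str, national_id: str) -> str:
    # Normalise, then HMAC, so only an opaque token leaves the bank.
    canonical = "|".join(s.strip().lower() for s in (name, dob, national_id))
    return hmac.new(key, canonical.encode(), hashlib.sha256).hexdigest()

def flag_shared_identities(tokens_by_bank: Dict[str, Set[str]],
                           min_banks: int = 3) -> Set[str]:
    # Coordinator: count distinct banks per token; no names or accounts involved.
    counts = Counter(tok for toks in tokens_by_bank.values() for tok in toks)
    return {tok for tok, c in counts.items() if c >= min_banks}
```

Each bank can map a flagged token back to its own customer, but the coordinator cannot. If the shared key leaked, low-entropy fields such as names and birth dates could be brute-forced. That risk is why this sketch is only a stand-in for proper cryptographic matching.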

In manufacturing, it powers predictive maintenance. A global car manufacturer can analyze sensor data from factories in Germany, the USA, and China simultaneously to predict when a robot arm might fail, without ever moving petabytes of IoT logs to a central hub. Identifying failure signatures common to all factory sites can reduce downtime and cut maintenance costs substantially.
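A simple building block for the factory example is pooling sensor statistics without pooling sensor logs. Each site sends only its count, mean, and sum of squared deviations. These merge exactly using the well-known parallel variance update attributed to Chan et al. The alarm rule (mean plus k standard deviations) and all names are hypothetical. Real predictive-maintenance models are far richer; this only shows the aggregate-statistics pattern.

```python
import math
from dataclasses import dataclass
from functools import reduce
from typing import Iterable, List

@dataclass(frozen=True)
class SiteStats:
    n: int       # number of readings
    mean: float  # site mean
    m2: float    # sum of squared deviations from the site mean

def summarise(readings: List[float]) -> SiteStats:
    """Runs inside each factory: only (n, mean, m2) leaves the site."""
    n = len(readings)
    mean = sum(readings) / n
    return SiteStats(n, mean, sum((x - mean) ** 2 for x in readings))

def combine(a: SiteStats, b: SiteStats) -> SiteStats:
    """Exact pairwise merge of two sites' sufficient statistics."""
    n = a.n + b.n
    delta = b.mean - a.mean
    return SiteStats(n, a.mean + delta * b.n / n,
                     a.m2 + b.m2 + delta ** 2 * a.n * b.n / n)

def alarm_threshold(sites: Iterable[SiteStats], k: float = 3.0) -> float:
    """Fleet-wide 'mean + k * sample std' threshold each site can apply locally."""
    pooled = reduce(combine, sites)
    return pooled.mean + k * math.sqrt(pooled.m2 / (pooled.n - 1))
```

The merged statistics are identical to what a central server would compute from the raw readings. Each factory can then flag its own anomalies against a fleet-wide baseline.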

Accelerating Biomedical Research with a Federated Analytics Platform

This is where our hearts are at Lifebit. In healthcare, data is the most siloed and the most sensitive. By using a federated analytics platform, we can bridge EHR systems and genomic datasets globally, creating a “virtual data lake” that spans the planet.

For example, federated technology in population genomics allows scientists to study rare diseases by querying small cohorts from dozens of different countries. Individually, these cohorts are too small for a study to reach statistical significance. Together, they provide the power needed for a breakthrough. This is vital for precision medicine, where understanding the genetic drivers of a disease requires diverse datasets from different ancestral backgrounds.
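Pooling many small cohorts can reach significance where each cohort alone cannot. The standard inverse-variance weighted (fixed-effect) meta-analysis shows why. Each site shares only an effect estimate and its standard error. The sketch assumes every site fits the same model and that a fixed-effect model is appropriate, meaning no meaningful between-site heterogeneity. The function names are hypothetical.

```python
import math
from typing import List, Tuple

def fixed_effect_meta(estimates: List[Tuple[float, float]]) -> Tuple[float, float]:
    """Inverse-variance weighted pooling of per-site (effect, standard_error) pairs."""
    weights = [1.0 / se ** 2 for _, se in estimates]
    total = sum(weights)
    pooled = sum(w * beta for w, (beta, _) in zip(weights, estimates)) / total
    return pooled, math.sqrt(1.0 / total)

def two_sided_p(effect: float, se: float) -> float:
    """Normal-approximation p-value for the null hypothesis effect == 0."""
    return math.erfc(abs(effect / se) / math.sqrt(2))
```

Take four hypothetical sites that each estimate an effect of 0.4 with a standard error of 0.3. Each is inconclusive on its own, with a two-sided p of about 0.18. Pooling halves the standard error to 0.15 and pushes p below 0.01, without a single genotype leaving its site.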

As noted in our guide on federated learning in healthcare, this model accelerates clinical trials by identifying eligible patients across multiple hospital systems far faster than traditional methods. Instead of waiting months for manual site-by-site searches, researchers can run a federated query to find patients who meet specific inclusion criteria, drastically shortening the recruitment phase of a trial.
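A trial feasibility query can be sketched as a federated count with small-cell suppression. Each hospital counts eligible patients locally and withholds counts below a minimum cell size, so rare combinations cannot single anyone out. The threshold of 5 is a commonly used convention but purely an assumption here, since actual thresholds are set by each data owner's policy. The criteria, field names, and functions are hypothetical.

```python
from typing import Callable, Dict, List, Optional

Patient = Dict[str, object]
Criteria = Callable[[Patient], bool]

def local_count(patients: List[Patient], criteria: Criteria,
                min_cell: int = 5) -> Optional[int]:
    """Runs inside each hospital: counts eligible patients, suppressing small cells."""
    n = sum(1 for p in patients if criteria(p))
    return n if n >= min_cell else None  # None means "fewer than min_cell"

def feasibility(sites: Dict[str, List[Patient]], criteria: Criteria,
                min_cell: int = 5) -> Dict[str, object]:
    """Coordinator: collects only per-site counts, never patient rows."""
    per_site = {name: local_count(rows, criteria, min_cell) for name, rows in sites.items()}
    return {
        "per_site": per_site,
        "reported_total": sum(c for c in per_site.values() if c is not None),
        "suppressed_sites": sorted(name for name, c in per_site.items() if c is None),
    }
```

The reported total is a lower bound, because suppressed sites may still hold a few eligible patients. That is usually enough to decide which sites are worth activating for recruitment.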

Hybrid Architectures: When to Federate vs. Centralize

Is federation always the answer? Not necessarily. Sometimes a hybrid approach is best. As we explain in our complete guide to federated data sharing, you might choose to:

- Federate for real-time insights, sensitive third-party data, and massive datasets that are too expensive to move. This is the default for cross-border collaboration.

- Centralize for deep historical analysis of your own internal data or when you need to perform “heavy” data cleaning that requires a single source of truth. Centralization is often better for small, non-sensitive datasets where the overhead of federation isn’t justified.

Most modern enterprises are moving toward this “best of both worlds” model to balance cost, speed, and security. By integrating a federated layer with an existing data warehouse, organizations can maintain their legacy systems while gaining the ability to reach out to external data sources securely.

Frequently Asked Questions about Federated Analytics

What is the difference between data federation and data virtualization?

Think of data virtualization as the broad “umbrella” term. It creates an abstraction layer so you can see all your data in one place. A federated analytics platform is a specific, high-performance implementation of this. While virtualization often focuses on just viewing data, federation is built for querying and analyzing heterogeneous sources in real-time while maintaining strict local control and data residency.

Can a federated platform replace a data warehouse?

It can, but it’s often better as a partner. A warehouse is great for historical storage of “dead” data that doesn’t change. A federated analytics platform is for “live” data and data that cannot be moved. In a NIAID-style federated data ecosystem, you use the federation layer to reach the data that can’t or shouldn’t be in your warehouse, such as sensitive patient records or massive genomic files.

How is governance managed in a distributed system?

We use a “Centralized Policy, Decentralized Execution” model. You set the rules once (e.g., “No one can see DNA sequences, only statistical summaries”). The platform then automatically enforces those rules at every single site. This is a core part of federated architecture in genomics, where compliance must be automated to keep up with the scale of the data. Local data owners still retain the ultimate authority to grant or revoke access to their specific node.
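"Centralized Policy, Decentralized Execution" can be sketched as one policy object that is pushed to every node and enforced locally. Each node also keeps its own revocation list, which always wins. The roles, output types, `min_cohort` value, and class names are hypothetical. In practice a user's role would come from an identity provider rather than being passed in directly.

```python
from dataclasses import dataclass, field
from typing import Dict, Set

@dataclass
class Policy:
    # Written once, centrally: which output types each role may receive.
    role_outputs: Dict[str, Set[str]]
    min_cohort: int = 10  # hypothetical minimum cohort size for any release

@dataclass
class Node:
    name: str
    policy: Policy                                  # same policy object at every node
    revoked: Set[str] = field(default_factory=set)  # the local owner's kill switch

    def authorize(self, user: str, role: str, output_type: str, cohort_size: int) -> bool:
        if user in self.revoked:
            return False  # local authority overrides any central grant
        if output_type not in self.policy.role_outputs.get(role, set()):
            return False  # e.g. raw sequences are never an allowed output
        return cohort_size >= self.policy.min_cohort
```

Because every node evaluates the same policy, a rule changed once applies everywhere. Revocation stays strictly local, so one data owner can withdraw access without affecting anyone else's node.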

Does federated analytics impact query performance?

While there is some overhead in coordinating queries across multiple sites, federated platforms use techniques like parallel execution and predicate pushdown to minimize latency. In many cases, querying data in parallel across ten local servers is actually faster than querying a single centralized database that has become a bottleneck. The performance impact is usually outweighed by the time saved in not having to perform complex ETL (Extract, Transform, Load) processes.

How does federation handle data quality issues?

Data quality is managed through the semantic layer and the use of Common Data Models (CDMs). By mapping disparate data sources to a standard schema, the platform can identify and flag inconsistencies. Furthermore, because the data is queried in situ, results reflect the current state of each source system. This avoids the “stale data” problems common in centralized warehouses, where data might be days or weeks old.
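Flagging inconsistencies against a standard schema can also run in place. Each site checks its records against shared rules and reports only counts of problems. The rules below are hypothetical examples, not an actual CDM specification, and the function name is illustrative.

```python
from collections import Counter
from typing import Callable, Dict, List

Record = Dict[str, object]

# Hypothetical checks against a target schema: field -> predicate it must satisfy.
RULES: Dict[str, Callable[[object], bool]] = {
    "age": lambda v: isinstance(v, int) and 0 <= v <= 120,
    "sex": lambda v: v in {"F", "M", "U"},
}

def quality_report(records: List[Record]) -> Dict[str, int]:
    """Runs at the site: per-field failure counts, never the failing rows."""
    failures: Counter = Counter()
    for r in records:
        for field_name, ok in RULES.items():
            if field_name not in r:
                failures[f"{field_name}:missing"] += 1
            elif not ok(r[field_name]):
                failures[f"{field_name}:invalid"] += 1
    return dict(failures)
```

The coordinator can compare these reports across sites to spot a mapping error at one node, such as ages recorded in months, without seeing any patient-level data.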

Conclusion: The Future of Decentralized Data

The era of the “data hoard” is ending. As AI optimization and edge computing take off, the ability to analyze data where it lives will become the standard, not the exception. We are moving from a world of data ownership to a world of data stewardship, where the value lies not in possessing the data, but in the ability to derive insights from it securely.

Federated learning, where AI models are trained across distributed sites without exchanging raw data, is already showing that we can build smarter algorithms without pooling sensitive records. This technology is being used to train diagnostic AI on medical images from multiple hospitals, which can yield models that are more robust and less biased than those trained on a single site’s data.
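The core loop of federated learning can be shown with Federated Averaging (FedAvg) on a tiny linear model:

1. Each site takes a few gradient steps on its own data, starting from the shared model.
2. A server averages the returned parameters, weighted by each site's sample count.

The one-feature regression, learning rate, and round counts are hypothetical choices made so the sketch runs instantly. Real systems train neural networks and often add secure aggregation or differential privacy, because model updates themselves can leak information.

```python
from typing import List, Tuple

Data = List[Tuple[float, float]]  # (x, y) pairs held locally at one site

def local_update(w: float, b: float, data: Data,
                 lr: float = 0.1, epochs: int = 5) -> Tuple[float, float]:
    # A few steps of full-batch gradient descent on mean squared error, on-site.
    n = len(data)
    for _ in range(epochs):
        gw = 2 / n * sum((w * x + b - y) * x for x, y in data)
        gb = 2 / n * sum((w * x + b - y) for x, y in data)
        w, b = w - lr * gw, b - lr * gb
    return w, b

def fed_avg(sites: List[Data], rounds: int = 200) -> Tuple[float, float]:
    w, b = 0.0, 0.0  # global model held by the server
    total = sum(len(d) for d in sites)
    for _ in range(rounds):
        # Only parameters travel: each site trains locally from the current global model.
        updates = [(local_update(w, b, d), len(d)) for d in sites]
        # Server step: average site models, weighted by local sample count.
        w = sum(uw * n for (uw, _), n in updates) / total
        b = sum(ub * n for (_, ub), n in updates) / total
    return w, b
```

With two hypothetical sites whose points all lie on y = 2x + 1, for example `[(0.0, 1.0), (0.5, 2.0), (1.0, 3.0)]` and `[(1.5, 4.0), (2.0, 5.0)]`, `fed_avg` recovers a slope near 2 and an intercept near 1. Neither site ever shares a data point.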

At Lifebit, we are proud to be at the forefront of this “Data Peace Treaty.” Our Lifebit federated biomedical data platform is designed to turn that untapped 97% of data into the next generation of medical cures. By removing the barriers to data access, we are enabling a new era of collaborative research that respects privacy and accelerates discovery.

Ready to stop moving data and start getting answers? The future of research is federated, and the tools to unlock it are available today. Whether you are a pharmaceutical giant looking to accelerate drug discovery or a public health agency aiming for better patient outcomes, federated analytics is the key to a more connected and insightful future.