Why Data Transformation Services Are Your Secret Weapon

Why Raw Data Is Worthless Without Data Transformation Services

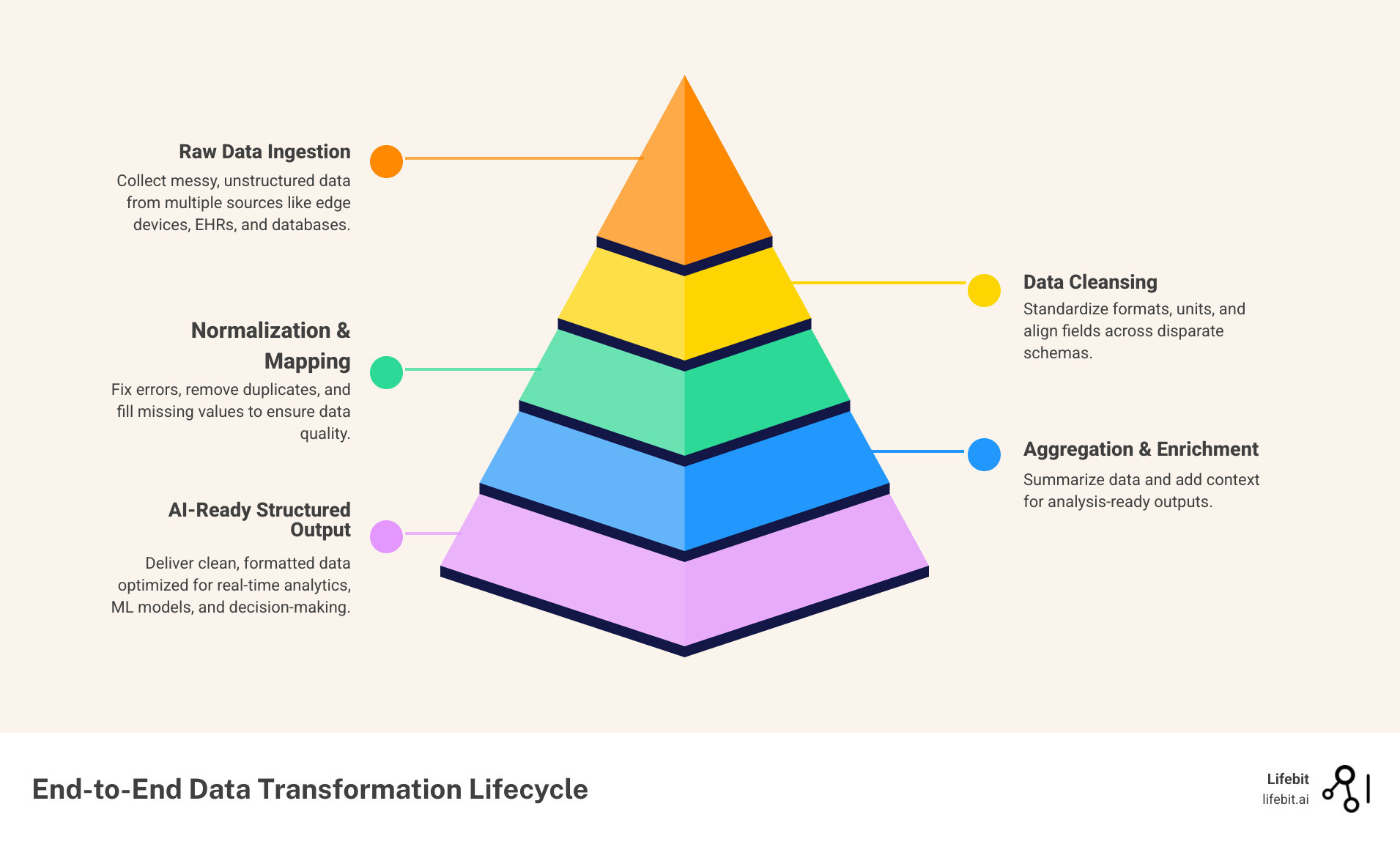

Data transformation services convert raw, messy data from multiple sources into clean, structured, analysis-ready formats, so your teams, models, and systems can actually use it.

Here’s what they typically include:

- Data cleansing — fixing errors, removing duplicates, filling gaps

- Normalization — standardizing formats and units across sources

- Aggregation — summarizing data for reporting and analysis

- Mapping — aligning fields between different systems or schemas

- Enrichment — adding context to make data more useful downstream

Without this, raw data sitting in silos is just noise. With it, you get a foundation for real-time analytics, AI, and evidence-based decisions.

This matters more than ever right now. By some industry estimates, up to 75% of enterprise data is now created outside centralized data centers, spread across edge devices, clinical systems, EHR platforms, and research databases. Meanwhile, an estimated 80–90% of enterprise data is unstructured. Organizations that can’t wrangle that data fast enough don’t just fall behind; they risk being left out of the AI era entirely.

For global pharma companies, public health agencies, and regulatory bodies, the stakes are even higher. Slow data onboarding, poor data quality, and fragmented genomics or claims datasets don’t just slow research — they block it.

I’m Maria Chatzou Dunford, CEO and co-founder of Lifebit, and I’ve spent over 15 years building computational tools and platforms that tackle exactly these challenges — from federated genomics pipelines to compliant, AI-ready data transformation services for the world’s most sensitive biomedical datasets. In the sections ahead, I’ll walk you through everything you need to know to transform your data strategy from reactive to ready.


Why Modern Organizations Need Data Transformation Services

In the old days, we thought having a big database was enough. We were wrong. Today, organizations are drowning in data but starving for insights. The vast majority of that data is unstructured: think of it as a giant pile of unorganized puzzle pieces from ten different boxes. It includes physician notes written in natural language, high-resolution medical images (DICOM files), and the massive FASTQ files generated by next-generation sequencing (NGS). Without a cohesive strategy, this information remains locked in digital vaults, inaccessible and unusable.

Data silos are the enemy of progress. When your clinical trial data lives in one format in London and your genomic sequences live in another in New York, you can’t see the big picture. This is where data transformation services show their value: they create a “lingua franca” for your information. Without professional data harmonization services, your highly paid data scientists can spend up to 80% of their time cleaning spreadsheets instead of curing diseases. This “data janitor” work is not just a waste of talent; it is a massive economic drain on R&D budgets, often costing millions in lost productivity and delaying time-to-market for life-saving therapies.

The High Cost of Data Inefficiency

When data transformation is neglected, the consequences ripple through the entire organization. We see “data debt” accumulating—a state where the effort required to fix old data exceeds the value the data provides. In the pharmaceutical sector, this can lead to failed regulatory audits or the inability to replicate study results. Modern data transformation services act as a debt-relief mechanism, streamlining the path from raw ingestion to actionable intelligence. By automating the repetitive tasks of validation and formatting, organizations can shift their focus from data maintenance to data innovation.

Turning Raw Data into AI-Ready Assets

If you want to use Generative AI or machine learning, your data needs to be “AI-ready.” You wouldn’t put crude oil into a Ferrari; you shouldn’t put “crude” data into your ML models. High-quality data transformation services perform essential feature engineering—selecting and refining the variables that actually help a model learn. This involves more than just cleaning; it requires a deep understanding of the biological or clinical context of the data.

When we talk about AI for data harmonization, we’re talking about using intelligent systems to recognize that “Male,” “M,” and “1” all mean the same thing in different datasets. This level of readiness is what allows researchers to move from hypothesis to discovery in record time. AI-ready data must be consistent, labeled, and ethically sourced, which makes the models built on it more likely to be accurate and less likely to be biased.
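A rule-based version of this idea can be sketched in a few lines of Python. The sketch assumes each source ships its own codebook; the `SOURCE_CODEBOOKS` dictionary and the source names are hypothetical. An AI-assisted system might propose such mappings, but a reviewed lookup like this is what would ultimately be applied. Note that “1” is only safe to read as male when the source’s codebook says so, as in ISO/IEC 5218, where 1 = male and 2 = female.

```python
# Minimal sketch: harmonizing sex codes from several sources into one
# canonical vocabulary. Source names and codebooks are hypothetical.
SOURCE_CODEBOOKS = {
    # Free-text labels as typed into a legacy EHR export
    "hospital_a": {"male": "male", "m": "male", "female": "female", "f": "female"},
    # Numeric codes following ISO/IEC 5218 (0 = not known, 9 = not applicable)
    "registry_b": {"1": "male", "2": "female", "0": "unknown", "9": "not_applicable"},
}

def harmonize_sex(source: str, raw_value: str) -> str:
    """Map a raw value to the canonical label, or 'unmapped' for human review."""
    codebook = SOURCE_CODEBOOKS[source]
    key = str(raw_value).strip().lower()
    return codebook.get(key, "unmapped")

records = [("hospital_a", "Male"), ("hospital_a", "M"), ("registry_b", "1"),
           ("registry_b", "2"), ("hospital_a", "X")]
print([harmonize_sex(src, val) for src, val in records])
# ['male', 'male', 'male', 'female', 'unmapped']
```

Returning an explicit “unmapped” value instead of guessing keeps unexpected codes visible for curation.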

Maximizing Throughput with Data Transformation Services

Speed is a feature, not a luxury. Modern cloud-native services can sustain high throughput, in some cases up to 5 Gbps, so your data moves as fast as your network allows. This scalability is vital when dealing with multi-omic datasets that are terabytes in size. In a global pandemic or a rapid-response clinical trial, the ability to transform and analyze data in real time can be the difference between success and failure.
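To put those numbers in perspective, here is the back-of-the-envelope arithmetic as a small Python helper. It assumes decimal units (1 TB = 10^12 bytes) and a link that sustains its full rated speed with no protocol overhead, so real transfers will take longer.

```python
def transfer_seconds(size_bytes: float, link_gbps: float) -> float:
    """Ideal transfer time: bits to move divided by bits per second."""
    bits = size_bytes * 8
    return bits / (link_gbps * 1e9)

TB = 1e12  # decimal terabyte
PB = 1e15  # decimal petabyte

print(transfer_seconds(1 * TB, 5) / 60)      # about 26.7 minutes for 1 TB at 5 Gbps
print(transfer_seconds(1 * PB, 5) / 86_400)  # about 18.5 days for 1 PB at 5 Gbps
```

Even at a full 5 Gbps, a single petabyte takes over two and a half weeks to move, which is why transforming data where it resides matters.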

However, achieving this isn’t easy. There are significant health data standardisation challenges, such as maintaining the integrity of complex clinical records while moving them across borders. We solve this by ensuring the transformation happens securely, often where the data resides, so you don’t lose time or security in transit. This “compute-to-data” model is the cornerstone of modern, high-throughput data architectures.

Core Techniques: From Data Cleansing to Normalization

To understand how these services work, we need to look under the hood at the techniques used to polish your data. The process is rarely linear; it is an iterative cycle of refinement that ensures every data point is accounted for and correctly interpreted.

| Feature | Batch Processing | Streaming Processing |
|---|---|---|
| Data Volume | Large chunks of historical data | Continuous, real-time data flow |
| Latency | Minutes to hours | Milliseconds to seconds |
| Use Case | Monthly payroll, end-of-day reports | Fraud detection, patient monitoring |
| Complexity | Lower | Higher |
| Cost Efficiency | High for static datasets | High for time-sensitive insights |
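The difference between the two modes shows up even in a simple average. In the sketch below (plain Python, with made-up heart-rate readings), the batch version waits for the full dataset, while the streaming version emits an updated result as each reading arrives, using an incremental mean so the function keeps only the running mean and a counter.

```python
from typing import Iterable, Iterator

def batch_mean(readings: list[float]) -> float:
    """Batch mode: compute once, after all data has been collected."""
    return sum(readings) / len(readings)

def streaming_mean(readings: Iterable[float]) -> Iterator[float]:
    """Streaming mode: emit an updated mean after every new reading.

    Uses the incremental update mean_n = mean_{n-1} + (x_n - mean_{n-1}) / n,
    so only the running mean and a counter are kept in memory.
    """
    mean, n = 0.0, 0
    for x in readings:
        n += 1
        mean += (x - mean) / n
        yield mean

heart_rate = [62.0, 64.0, 61.0, 65.0]    # illustrative values only
print(batch_mean(heart_rate))            # 63.0
print(list(streaming_mean(heart_rate)))  # running means, ending at about 63.0
```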

Beyond these modes, core techniques include:

- Data Aggregation: Combining multiple data points (like daily heart rate readings) into a single summary (average resting heart rate). This is essential for high-level reporting and identifying long-term trends.

- De-duplication: Identifying and merging duplicate records, such as three separate entries for the same patient created because someone misspelled their last name. This ensures a “single source of truth” for every individual in a study.

- Format Revision: Converting a legacy date format (DD/MM/YY) into the standardized ISO 8601 format (YYYY-MM-DD). While it sounds simple, inconsistent date formats are a common source of errors in longitudinal studies.

- Data Masking and Anonymization: In healthcare, protecting patient privacy is paramount. Transformation services must include robust de-identification protocols that remove Personally Identifiable Information (PII) while preserving the analytical utility of the data.

- Schema Mapping: Aligning the structure of a source database with a target database. This is particularly complex when merging data from legacy on-premise systems with modern cloud-based data lakes.
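Several of these techniques can be sketched with the Python standard library alone. This is a toy, not a production record-linkage or de-identification system: the field names, the 0.85 name-similarity threshold, and the secret key are all hypothetical. De-duplication treats two records as the same patient when their dates of birth match and their names are similar according to `difflib.SequenceMatcher`. Masking replaces the name with a keyed hash (HMAC-SHA-256), which is pseudonymization rather than full anonymization, since whoever holds the key can re-link records.

```python
import difflib
import hashlib
import hmac
from datetime import datetime

# Hypothetical values: field names, key, and threshold are illustrative only
FIELD_MAP = {"pt_name": "name", "dob": "birth_date"}  # schema mapping: source -> target
SECRET_KEY = b"replace-with-a-managed-secret"         # keep real keys in a secrets vault
NAME_SIMILARITY = 0.85

def map_schema(record: dict) -> dict:
    """Schema mapping: rename source fields to the target schema's names."""
    return {FIELD_MAP.get(k, k): v for k, v in record.items()}

def to_iso_date(ddmmyy: str) -> str:
    """Format revision: DD/MM/YY -> YYYY-MM-DD (ISO 8601).

    Caution: %y maps 69-99 to 1969-1999 and 00-68 to 2000-2068, so a
    1950 birth year stored as '50' would come out as 2050.
    """
    return datetime.strptime(ddmmyy, "%d/%m/%y").strftime("%Y-%m-%d")

def is_same_patient(a: dict, b: dict) -> bool:
    """Probable duplicate: same birth date and similar names (case-insensitive)."""
    ratio = difflib.SequenceMatcher(None, a["name"].lower(), b["name"].lower()).ratio()
    return a["birth_date"] == b["birth_date"] and ratio >= NAME_SIMILARITY

def deduplicate(records: list[dict]) -> list[dict]:
    """De-duplication: keep the first record of each group of probable duplicates."""
    kept: list[dict] = []
    for rec in records:
        if not any(is_same_patient(rec, k) for k in kept):
            kept.append(rec)
    return kept

def mask(record: dict) -> dict:
    """Masking: replace the name with a keyed hash (pseudonymization)."""
    digest = hmac.new(SECRET_KEY, record["name"].encode(), hashlib.sha256).hexdigest()
    rest = {k: v for k, v in record.items() if k != "name"}
    return {**rest, "patient_token": digest[:16]}  # shortened for display

raw = [
    {"pt_name": "Jane Smith", "dob": "03/07/84"},
    {"pt_name": "Jane Smyth", "dob": "03/07/84"},  # misspelled surname
    {"pt_name": "Jane Smith", "dob": "03/07/84"},
    {"pt_name": "Tom Jones", "dob": "11/12/90"},
]
records = [map_schema(r) for r in raw]
for r in records:
    r["birth_date"] = to_iso_date(r["birth_date"])
unique = deduplicate(records)
print([mask(r) for r in unique])
```

The pairwise comparison grows quadratically with the number of records; real record-linkage systems first group likely candidates (blocking) and use probabilistic or learned match scores.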

By following global data integration standards, we ensure that your data is compatible with the tools in your stack, from Power BI to advanced genomic analysis engines.

The Evolution from Legacy to Cloud-Native Data Transformation

We’ve come a long way since the early days of Microsoft’s Data Transformation Services (DTS) in the late 90s. Back then, everything was on-premise, brittle, and required a specialized priest-class of DBAs to maintain. If a server went down, your data stayed messy. These legacy systems were designed for a world of structured, predictable data—a world that no longer exists.

The shift to cloud-native data transformation services changed the game. Modern pipelines are often serverless: they scale up when a massive data dump arrives and scale down to zero when idle, so you pay only for the compute you use. Cloud migration isn’t just about moving files; it’s about adopting modern data pipelines that retry failed steps automatically and adapt as sources change. Many of these systems can detect changes in source data schemas and adjust the transformation logic accordingly, which is known as handling “schema evolution.”
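Detecting that a source schema has changed is the first half of handling schema evolution. A minimal sketch, assuming records arrive as Python dictionaries and the expected schema is a hypothetical field-to-type mapping, compares each incoming record with that contract and reports added, missing, and retyped fields. How the pipeline then adapts (for example, adding a new nullable column) is left to its own policy.

```python
from dataclasses import dataclass, field

EXPECTED_SCHEMA = {"patient_id": str, "systolic": int, "diastolic": int}  # hypothetical

@dataclass
class SchemaDrift:
    added: list[str] = field(default_factory=list)
    missing: list[str] = field(default_factory=list)
    retyped: dict[str, str] = field(default_factory=dict)

    @property
    def has_drift(self) -> bool:
        return bool(self.added or self.missing or self.retyped)

def detect_drift(record: dict, expected: dict = EXPECTED_SCHEMA) -> SchemaDrift:
    """Compare one incoming record against the expected field names and types."""
    drift = SchemaDrift()
    drift.added = sorted(set(record) - set(expected))
    drift.missing = sorted(set(expected) - set(record))
    for name, expected_type in expected.items():
        if name in record and not isinstance(record[name], expected_type):
            drift.retyped[name] = type(record[name]).__name__
    return drift

incoming = {"patient_id": "P001", "systolic": "120", "diastolic": 80, "device_id": "cuff-7"}
report = detect_drift(incoming)
print(report.added, report.missing, report.retyped)
# ['device_id'] [] {'systolic': 'str'}
```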

Advanced Transformation: Feature Engineering for Bio-AI

In the realm of precision medicine, data transformation goes beyond simple cleaning. It involves “feature engineering,” where raw biological data is transformed into specific inputs for machine learning models. For example, genotype calls derived from raw sequencing data might be transformed into polygenic risk scores, or continuous glucose monitor (CGM) readings into “time-in-range” metrics.
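Both examples boil down to well-defined formulas. A polygenic risk score is commonly computed as a weighted sum, PRS = Σ β_i × d_i, where d_i is the number of effect alleles (0, 1, or 2) a person carries at variant i and β_i is that variant’s effect size from a genome-wide association study. Time in range is the share of glucose readings inside a target band, for which 70–180 mg/dL is the widely used consensus range. The sketch uses made-up variant IDs, weights, and readings; real PRS pipelines also handle allele alignment, missing genotypes, and ancestry-specific weights.

```python
def polygenic_risk_score(dosages: dict[str, int], weights: dict[str, float]) -> float:
    """Weighted sum of effect-allele dosages (0, 1 or 2) over scored variants.

    Simplification: a variant missing from `dosages` is treated as dosage 0.
    """
    return sum(weights[v] * dosages.get(v, 0) for v in weights)

def time_in_range(glucose_mg_dl: list[float], low: float = 70, high: float = 180) -> float:
    """Percentage of CGM readings within [low, high] mg/dL."""
    in_range = sum(1 for g in glucose_mg_dl if low <= g <= high)
    return 100 * in_range / len(glucose_mg_dl)

# Hypothetical variants and effect sizes, for illustration only
weights = {"rs_a": 0.12, "rs_b": -0.05, "rs_c": 0.30}
person = {"rs_a": 2, "rs_b": 1, "rs_c": 0}
print(polygenic_risk_score(person, weights))  # about 0.19

readings = [95, 150, 210, 65, 120, 175, 185, 140]
print(time_in_range(readings))  # 62.5
```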

These high-level transformations require domain expertise. Data transformation services in the life sciences must understand the nuances of biological variability and the specific requirements of bioinformatics pipelines. By automating these complex calculations, we enable researchers to move directly to the analysis phase, significantly shortening the R&D lifecycle.

Automating Workflows for Efficient Data Preparation

Why wait for an engineer to write a custom Python script every time you get a new dataset? Modern platforms use visual interfaces that allow researchers to design data flows by simply dragging and dropping components. This “low-code/no-code” approach empowers the people who understand the data best—the scientists and clinicians—to take control of the transformation process.

This democratization of data has a massive impact: we’ve seen a 70% reduction in the time it takes to complete data import tasks. By using automated mapping and AI-assisted cleansing, your team can focus on the “What does this mean?” rather than the “How do I open this file?” Furthermore, automated workflows provide a clear audit trail, which is essential for regulatory compliance and scientific reproducibility.
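One way an automated workflow can produce that audit trail is to record, for every step, what ran and fingerprints of its input and output. The sketch is an illustration under stated assumptions rather than any particular platform’s mechanism: the step names are hypothetical, and fingerprints are SHA-256 hashes of the data serialized as canonical JSON, so re-running the pipeline on the same input reproduces the same hashes.

```python
import hashlib
import json
from datetime import datetime, timezone
from typing import Callable

def fingerprint(data) -> str:
    """SHA-256 of canonical JSON (sorted keys), so equal data hashes equally."""
    payload = json.dumps(data, sort_keys=True, separators=(",", ":")).encode()
    return hashlib.sha256(payload).hexdigest()

def run_pipeline(data, steps: list[tuple[str, Callable]]):
    """Apply each step in order and log one audit entry per step."""
    audit_log = []
    for name, step in steps:
        before = fingerprint(data)
        data = step(data)
        audit_log.append({
            "step": name,
            "ran_at": datetime.now(timezone.utc).isoformat(),
            "input_sha256": before,
            "output_sha256": fingerprint(data),
        })
    return data, audit_log

# Hypothetical cleaning steps
steps = [
    ("drop_empty_rows", lambda rows: [r for r in rows if r]),
    ("uppercase_codes", lambda rows: [{**r, "code": r["code"].upper()} for r in rows]),
]
result, log = run_pipeline([{"code": "e11"}, {}, {"code": "i10"}], steps)
for entry in log:
    print(entry["step"], entry["input_sha256"][:12], "->", entry["output_sha256"][:12])
```

Because each step’s input hash equals the previous step’s output hash, a reviewer can verify that no untracked change happened between steps.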

Overcoming the Challenges of Distributed Data

The biggest headache for global organizations today is that data is everywhere. It’s at the “edge”—in hospitals, in local clinics, and in remote research labs. You can’t always move this data to a central warehouse because of privacy laws, data residency requirements, or the sheer volume of the data. Moving a petabyte of genomic data across the ocean is not only expensive but often legally impossible.

This is where technological governance and data sovereignty become critical. We use techniques like mapping to the OMOP (Observational Medical Outcomes Partnership) Common Data Model to standardize data locally so it can be analyzed globally without ever leaving its home jurisdiction. This “federated” approach allows for the creation of virtual data lakes, where the data remains secure behind the provider’s firewall while still being accessible for authorized research queries.
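At its simplest, OMOP mapping means reshaping each local record into the Common Data Model’s tables and translating local codes into standard concept IDs. The sketch builds a row for the OMOP CDM `person` table, using the standard gender concepts 8507 (male) and 8532 (female) and the CDM convention of concept ID 0 for values with no matching concept. The local field names (`mrn`, `sex`, `birth_date`) are hypothetical, and a real ETL would take concept IDs from the OHDSI vocabulary tables rather than a hard-coded dictionary.

```python
from datetime import date

# Standard OMOP gender concepts; 0 means "No matching concept"
GENDER_CONCEPTS = {"male": 8507, "female": 8532}

def to_omop_person(person_id: int, local: dict) -> dict:
    """Map a hypothetical local patient record to an OMOP CDM person row."""
    dob = date.fromisoformat(local["birth_date"])
    return {
        "person_id": person_id,
        "gender_concept_id": GENDER_CONCEPTS.get(local["sex"].strip().lower(), 0),
        "year_of_birth": dob.year,
        "month_of_birth": dob.month,
        "day_of_birth": dob.day,
        "race_concept_id": 0,        # not collected in this hypothetical source
        "ethnicity_concept_id": 0,   # not collected in this hypothetical source
        "person_source_value": local["mrn"],  # local (ideally pseudonymized) identifier
        "gender_source_value": local["sex"],  # raw value kept for traceability
    }

row = to_omop_person(1, {"mrn": "H-00042", "sex": "Female", "birth_date": "1984-07-03"})
print(row["gender_concept_id"], row["year_of_birth"])  # 8532 1984
```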

The Federated Paradigm: Transformation at the Source

In a traditional ETL (Extract, Transform, Load) model, data is pulled from various sources and moved to a central location for transformation. In the federated paradigm, we flip this model. We bring the data transformation services to the data. This minimizes data movement, reduces the risk of security breaches during transit, and supports compliance with local regulations like the GDPR in Europe or HIPAA in the United States.
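The core of the pattern can be sketched in a few lines: each site runs the same query against its own records and returns only an aggregate, never row-level data. In the hypothetical example, each “site” is just an in-memory list, and counts below a minimum cell size are suppressed before leaving the site, a common disclosure-control rule. The threshold of 5 is an assumption; real thresholds are set by each data custodian’s governance policy.

```python
from typing import Callable, Optional

MIN_CELL_SIZE = 5  # assumed disclosure-control threshold

def local_count(records: list[dict], predicate: Callable[[dict], bool]) -> Optional[int]:
    """Runs inside the site's environment; only the (possibly suppressed) count leaves."""
    n = sum(1 for r in records if predicate(r))
    return n if n >= MIN_CELL_SIZE else None  # suppress small counts

def federated_count(sites: dict[str, list[dict]], predicate: Callable[[dict], bool]) -> dict:
    """Coordinator: sends the query out and combines the returned aggregates."""
    per_site = {name: local_count(rows, predicate) for name, rows in sites.items()}
    total = sum(n for n in per_site.values() if n is not None)
    return {"per_site": per_site, "total_reported": total}

# Hypothetical sites holding harmonized records (e.g. after OMOP mapping)
sites = {
    "site_london": [{"diabetes": True}] * 12 + [{"diabetes": False}] * 30,
    "site_new_york": [{"diabetes": True}] * 3 + [{"diabetes": False}] * 20,
}
print(federated_count(sites, lambda r: r["diabetes"]))
# {'per_site': {'site_london': 12, 'site_new_york': None}, 'total_reported': 12}
```

Because suppressed counts are dropped, the reported total is a lower bound; that trade-off between utility and privacy is deliberate.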

By transforming data at the source, we also ensure that the data remains “fresh.” There is no delay caused by long-running extraction processes. Researchers can query the most up-to-date information available, which is critical for real-world evidence (RWE) studies and post-market surveillance of new drugs.

Ensuring Security and Compliance in Global Research

When you’re dealing with human lives, “good enough” security isn’t enough. We operate in highly regulated environments where GDPR and HIPAA are the floor, not the ceiling. Data transformation in this context must include rigorous logging, encryption at rest and in transit, and strict access controls.

Lifebit is certified for data transformation by organizations like EHDEN, ensuring that our mapping to standards like OMOP is accurate and reliable. Between partners like Microsoft, which has 34,000 engineers dedicated to security, and our own federated architecture, we ensure your data is encrypted, audited, and compliant at every step. This level of security is what allows public health agencies and private pharma companies to collaborate on sensitive datasets without compromising patient trust.

Optimizing Performance and Reducing Platform Costs

Let’s talk money. Transforming data shouldn’t break the bank. In fact, modernizing your legacy workloads can lead to up to 88% cost savings. These savings are not just from reduced storage costs, but from the increased efficiency of the queries themselves. Clean, well-structured data is much cheaper to analyze than messy, redundant data.

We achieve this through:

- Data Compaction: Shrinking small files into larger, more efficient ones to reduce I/O overhead.

- Partitioning: Dividing datasets by specific criteria (like date or region) so you only scan the data you actually need for a query.

- Data Skipping: Using metadata to ignore irrelevant data blocks entirely, which can speed up query times by orders of magnitude.

- Automated Tiering: Moving infrequently accessed data to lower-cost storage tiers while keeping active data in high-performance environments.
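Partitioning and data skipping both work by avoiding reads. The toy model below is plain Python; the partition key, block size, and statistics layout are hypothetical and not any specific table format’s metadata. It partitions readings by region, keeps min/max statistics per block, and answers a range query by first pruning partitions and then skipping blocks whose min/max range cannot contain a match. Columnar formats such as Parquet store comparable per-row-group statistics for query engines to use.

```python
from collections import defaultdict

BLOCK_SIZE = 3  # rows per block (tiny, for illustration)

def build_table(rows: list[dict]) -> dict:
    """Partition rows by region, then split each partition into blocks with min/max stats."""
    partitions = defaultdict(list)
    for row in rows:
        partitions[row["region"]].append(row)
    table = {}
    for region, part in partitions.items():
        part.sort(key=lambda r: r["day"])  # sorting keeps each block's min/max range tight
        blocks = [part[i:i + BLOCK_SIZE] for i in range(0, len(part), BLOCK_SIZE)]
        table[region] = [
            {"min_day": b[0]["day"], "max_day": b[-1]["day"], "rows": b} for b in blocks
        ]
    return table

def query(table: dict, region: str, day_from: int, day_to: int):
    """Return matching rows and how many blocks were actually scanned."""
    scanned, hits = 0, []
    for block in table.get(region, []):  # partition pruning: only one region is read
        if block["max_day"] < day_from or block["min_day"] > day_to:
            continue                      # data skipping via min/max statistics
        scanned += 1
        hits += [r for r in block["rows"] if day_from <= r["day"] <= day_to]
    return hits, scanned

rows = [{"region": reg, "day": d, "value": d * 10} for reg in ("EU", "US") for d in range(1, 13)]
table = build_table(rows)
hits, scanned = query(table, "EU", 4, 6)
print(len(hits), "rows from", scanned, "of", sum(len(b) for b in table.values()), "blocks")
# 3 rows from 1 of 8 blocks
```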

These optimizations are among the benefits of health data standardisation that help you do more research with less budget. By optimizing the underlying data structure, we enable complex analyses that were previously cost-prohibitive.

Real-World Impact Across Healthcare

In biotech and pharma, data transformation services are the difference between a failed trial and a breakthrough. Imagine trying to analyze patient records from 50 different hospitals, each using a different EHR system. One hospital might record blood pressure as a single string, while another uses two separate numerical fields. Without transformation, these datasets are like books written in 50 different languages.

By converting legacy EHR warehouses to FHIR (Fast Healthcare Interoperability Resources), we make that data searchable and usable. We’ve seen customer onboarding speed up by 2.17x simply by automating the messy parts of data ingestion. This acceleration means that life-saving insights reach the clinic months earlier than they would have otherwise.
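Take the blood pressure example above. In FHIR, a reading is usually represented as an `Observation` resource with the LOINC code 85354-9 (blood pressure panel) and two components, systolic (8480-6) and diastolic (8462-4), each with the UCUM unit `mm[Hg]`. The sketch converts both hypothetical source layouts into that shape as plain JSON-ready dictionaries. It does not validate against the full FHIR profile, and a production pipeline would typically use a FHIR library and add fields such as `subject` and `effectiveDateTime`.

```python
import json

LOINC = "http://loinc.org"
UCUM = "http://unitsofmeasure.org"

def bp_component(loinc_code: str, display: str, value: float) -> dict:
    """One systolic or diastolic component with a UCUM-coded quantity."""
    return {
        "code": {"coding": [{"system": LOINC, "code": loinc_code, "display": display}]},
        "valueQuantity": {"value": value, "unit": "mmHg", "system": UCUM, "code": "mm[Hg]"},
    }

def to_fhir_bp(systolic: float, diastolic: float) -> dict:
    """Build a minimal FHIR Observation for one blood pressure reading."""
    return {
        "resourceType": "Observation",
        "status": "final",
        "category": [{"coding": [{
            "system": "http://terminology.hl7.org/CodeSystem/observation-category",
            "code": "vital-signs"}]}],
        "code": {"coding": [{"system": LOINC, "code": "85354-9",
                             "display": "Blood pressure panel with all children optional"}]},
        "component": [
            bp_component("8480-6", "Systolic blood pressure", systolic),
            bp_component("8462-4", "Diastolic blood pressure", diastolic),
        ],
    }

# Hypothetical hospital A stores "120/80" as one string
sys_a, dia_a = (float(x) for x in "120/80".split("/"))
from_a = to_fhir_bp(sys_a, dia_a)

# Hypothetical hospital B uses two numeric fields
source_b = {"sys_mmhg": 118.0, "dia_mmhg": 76.0}
from_b = to_fhir_bp(source_b["sys_mmhg"], source_b["dia_mmhg"])

print(json.dumps(from_a["component"][0]["valueQuantity"]))
```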

The ROI of Data Transformation

The return on investment for these services is measured in both time and money. For a mid-sized biotech company, automating the data transformation pipeline can save thousands of engineering hours per year. But the real value lies in the quality of the science. When data is harmonized, researchers can perform cross-cohort analyses that were previously impossible, leading to the discovery of rare disease biomarkers or the identification of patient subgroups that respond better to specific treatments.

Case Study: Accelerating Clinical Insights

Consider a large-scale pharmacovigilance project. You need to monitor drug safety across millions of patients in real time. This requires harmonizing multi-omic data (genomics, proteomics, and more) with real-world evidence from clinical notes, pharmacy records, and even wearable device data. This is a massive data transformation challenge that involves billions of rows of data.

Through clinical data harmonisation, we create a “Trusted Data Factory.” This allows researchers to run complex queries across disparate datasets and get answers in seconds rather than weeks. For example, a researcher could ask: “What is the incidence of adverse cardiac events in patients with this specific genetic variant who are also taking this medication?” In a non-transformed environment, answering this would take a team of data scientists weeks. In a Trusted Data Factory, it takes a single query. It’s how we turn “data chaos” into “life-saving insights.”
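Once the data is harmonized, that question really can become one query. The sketch below uses Python’s built-in `sqlite3` with tiny, made-up tables (`genotypes`, `drug_exposures`, `adverse_events`) and placeholder identifiers (`VARIANT_X`, `DRUG_Y`, `CARDIAC`). It is not a real schema, and a proper incidence analysis would also account for follow-up time and the timing of events relative to exposure.

```python
import sqlite3

conn = sqlite3.connect(":memory:")
conn.executescript("""
    CREATE TABLE genotypes (person_id INTEGER, variant TEXT);
    CREATE TABLE drug_exposures (person_id INTEGER, drug TEXT);
    CREATE TABLE adverse_events (person_id INTEGER, event_type TEXT);
    INSERT INTO genotypes VALUES (1,'VARIANT_X'),(2,'VARIANT_X'),(3,'VARIANT_X'),(4,'OTHER');
    INSERT INTO drug_exposures VALUES (1,'DRUG_Y'),(2,'DRUG_Y'),(3,'DRUG_Z'),(4,'DRUG_Y');
    INSERT INTO adverse_events VALUES (1,'CARDIAC'),(4,'CARDIAC');
""")

# Carriers of VARIANT_X exposed to DRUG_Y, and how many had a cardiac event
cohort_sql = """
    SELECT COUNT(DISTINCT g.person_id)  AS cohort_size,
           COUNT(DISTINCT ae.person_id) AS with_event
    FROM genotypes g
    JOIN drug_exposures d
      ON d.person_id = g.person_id AND d.drug = 'DRUG_Y'
    LEFT JOIN adverse_events ae
      ON ae.person_id = g.person_id AND ae.event_type = 'CARDIAC'
    WHERE g.variant = 'VARIANT_X'
"""
cohort_size, with_event = conn.execute(cohort_sql).fetchone()
print(f"{with_event}/{cohort_size} = {with_event / cohort_size:.0%}")  # 1/2 = 50%
```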

Frequently Asked Questions about Data Transformation

What is the difference between ETL and ELT in data transformation services?

ETL (Extract, Transform, Load) cleans the data before it hits the warehouse. It’s great for strict quality control and ensuring that only high-quality data enters your primary storage. ELT (Extract, Load, Transform) dumps the raw data into the warehouse first and uses the warehouse’s own processing power to transform it. ELT is generally faster and more flexible for modern cloud environments because it allows you to keep the raw data for future, unforeseen transformation needs.
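The difference is mostly about where the transform step runs. The sketch uses Python’s built-in `sqlite3` as a stand-in warehouse, and the table names are hypothetical. The ETL path cleans values in application code before inserting, while the ELT path loads the raw strings into a staging table and transforms them with SQL inside the database, leaving the raw copy available for later reprocessing.

```python
import sqlite3

# Hypothetical source extract: heart rate arrives as messy text
raw_rows = [("P1", " 72 "), ("P2", "n/a"), ("P3", "68")]

def clean_bpm(value: str):
    """Return an int for purely numeric text, otherwise None."""
    value = value.strip()
    return int(value) if value.isdecimal() else None

conn = sqlite3.connect(":memory:")  # stand-in for a warehouse

# ETL: transform in application code, load only the cleaned rows
conn.execute("CREATE TABLE hr_clean (patient_id TEXT, bpm INTEGER)")
etl_rows = [(pid, bpm) for pid, v in raw_rows if (bpm := clean_bpm(v)) is not None]
conn.executemany("INSERT INTO hr_clean VALUES (?, ?)", etl_rows)

# ELT: load the raw text as-is, then transform with SQL inside the database
conn.execute("CREATE TABLE hr_raw (patient_id TEXT, bpm_text TEXT)")
conn.executemany("INSERT INTO hr_raw VALUES (?, ?)", raw_rows)
conn.execute("""
    CREATE TABLE hr_clean_elt AS
    SELECT patient_id, CAST(TRIM(bpm_text) AS INTEGER) AS bpm
    FROM hr_raw
    WHERE TRIM(bpm_text) <> '' AND TRIM(bpm_text) NOT GLOB '*[^0-9]*'
""")

print(conn.execute("SELECT * FROM hr_clean ORDER BY patient_id").fetchall())
print(conn.execute("SELECT * FROM hr_clean_elt ORDER BY patient_id").fetchall())
```

Both paths produce the same cleaned rows here; the practical difference is that the ELT path still holds all three raw rows in `hr_raw` if the cleaning rule changes later.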

How does data transformation support AI and machine learning?

AI is only as good as its training data. Data transformation services handle “data qualification”—ensuring the data is clean, labeled, and formatted correctly. This prevents the “garbage in, garbage out” problem. Furthermore, transformation services can help with “data augmentation,” creating synthetic data points to help train models when real-world data is scarce, and “feature scaling,” ensuring that different variables are on a comparable scale so the model doesn’t become biased toward one specific metric.
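Feature scaling is the easiest of these to show. A common choice is z-score standardization, z = (x - μ) / σ, which rescales each variable to mean 0 and standard deviation 1, so that, say, cholesterol in mg/dL and age in years carry comparable weight in distance- or gradient-based models. The sketch uses the population standard deviation from Python’s `statistics` module and made-up values. In practice, μ and σ should be computed on the training split only and then reused for new data.

```python
from statistics import fmean, pstdev

def fit_standardizer(values: list[float]) -> tuple[float, float]:
    """Learn mean and population standard deviation from training data."""
    return fmean(values), pstdev(values)

def standardize(values: list[float], mean: float, std: float) -> list[float]:
    """Apply z = (x - mean) / std using previously fitted parameters."""
    return [(x - mean) / std for x in values]

ages = [30.0, 40.0, 50.0, 60.0]             # years (illustrative)
cholesterol = [150.0, 200.0, 250.0, 300.0]  # mg/dL (illustrative)

for name, col in (("age", ages), ("cholesterol", cholesterol)):
    mu, sigma = fit_standardizer(col)
    print(name, [round(z, 3) for z in standardize(col, mu, sigma)])
# both columns become [-1.342, -0.447, 0.447, 1.342]
```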

Why is data quality more important than data quantity for research?

In the era of Big Data, there is a temptation to collect as much as possible. However, 1,000 high-quality, well-annotated patient records are often more valuable than 1,000,000 messy, incomplete records. Poor data quality leads to “noise” that can obscure real biological signals. Data transformation services focus on improving the “signal-to-noise” ratio, ensuring that the conclusions drawn from the data are statistically sound and biologically relevant.

How does Lifebit’s platform simplify complex data preparation for researchers?

We use a federated approach. Instead of making you move your massive, sensitive datasets, our platform brings the transformation and analysis tools to the data. We provide automated health data standardisation tools that map your local data to global standards like OMOP or FHIR. This includes AI-assisted mapping that suggests the best fit for your local variables, significantly reducing the manual effort required. This means you can start researching immediately, with the confidence that your data is compliant and interoperable.
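Production mapping assistants may use trained models, but the basic idea of ranking candidate targets for a local variable can be shown with plain string similarity. The sketch uses the standard library’s `difflib.get_close_matches`; the local variable names are hypothetical, the target list is a small subset of OMOP CDM `person` and `measurement` column names, and the 0.5 cutoff is an assumption. Suggestions are meant for a human curator to confirm, not to be applied automatically.

```python
import difflib

# Small subset of OMOP CDM column names used as mapping targets
TARGET_FIELDS = [
    "person_id", "gender_concept_id", "year_of_birth",
    "measurement_date", "value_as_number", "unit_source_value",
]

def suggest_mappings(local_fields: list[str], n: int = 2, cutoff: float = 0.5) -> dict:
    """Rank up to n candidate target fields for each local field by similarity."""
    suggestions = {}
    for local_field in local_fields:
        normalized = local_field.lower().replace(" ", "_")
        suggestions[local_field] = difflib.get_close_matches(
            normalized, TARGET_FIELDS, n=n, cutoff=cutoff)
    return suggestions

for local, candidates in suggest_mappings(["Birth Year", "Meas Date", "PersonID"]).items():
    print(local, "->", candidates)
```

In this toy run, “Birth Year” gets no suggestion at all, because reordering the words (`year_of_birth`) defeats character-level similarity; that gap is one reason curated synonym lists or learned models are used in practice.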

Conclusion: Future-Proofing Your Data Strategy

The volume of data isn’t going to shrink, and the complexity of research isn’t going to decrease. To stay competitive, you need a strategy that doesn’t just store data, but actively transforms it into a strategic asset.

At Lifebit, we believe the future is federated. Our Federated Biomedical Data Platform is built to handle the world’s most complex multi-omic and clinical datasets. By combining our Trusted Data Lakehouse with advanced data transformation services, we enable secure collaboration across five continents without compromising on data privacy or performance.

Don’t let your data sit in a silo. Transform it. Use it. And let’s build the future of precision medicine together.

Connect with Lifebit to see our Data Transformation Services in action