The Federated Data Platform Explained: Connecting Your Data Ecosystem

That 97% of Untapped Data? Here’s How to Unlock It

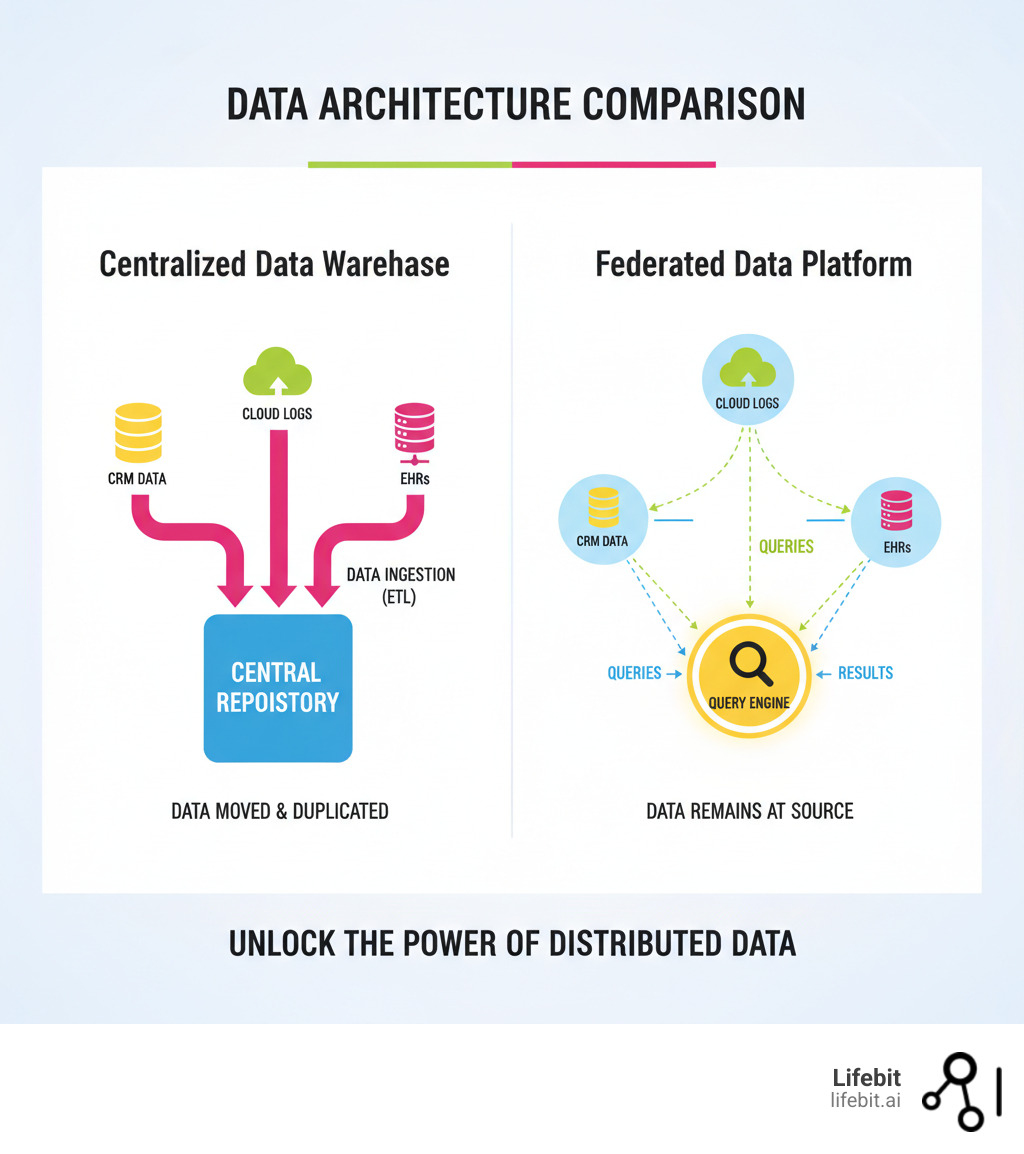

A federated data platform is a virtual data layer that lets you query, analyze, and govern data across distributed sources without moving or copying it. Instead of forcing data into a central repository, it brings computation directly to the data, wherever it lives.

Key characteristics of a federated data platform:

- Data remains at source – No replication or migration required.

- Real-time access – Query live data across different systems at once.

- Unified governance – Centralize security, compliance, and access control.

- Cost efficiency – Eliminate redundant storage and complex ETL pipelines.

- Interoperability – Connect databases, cloud platforms, EHRs, and more.

This approach is changing how healthcare, pharmaceutical, and regulatory organizations handle sensitive, siloed data—enabling real-time pharmacovigilance and cross-institutional research without compromising security.

Nearly 97% of enterprise data remains untapped, locked in disconnected systems. For organizations managing electronic health records (EHRs), genomic datasets, and clinical trial information, this fragmentation creates massive bottlenecks, delaying critical insights.

Federated data platforms solve this by creating a virtual data layer over your entire data ecosystem. You can run queries across AWS, Azure, Oracle, Snowflake, and specialized health formats like FHIR from a single interface. The data stays put. You get the insights you need in real time, without the risk or cost of moving petabytes of sensitive information.

I’m Maria Chatzou Dunford, CEO and Co-founder of Lifebit. We build federated platforms that power precision medicine for leading health organizations worldwide. Understanding this technology is key to breaking down data silos and accelerating research in secure, compliant environments.

How Federation Works: The ‘Query, Don’t Move’ Revolution

A federated data platform is an advanced form of data virtualization that makes multiple, separate data sources act as one unified system. Instead of copying data to a central repository—a complex, costly, and risky process—it leaves your data exactly where it is.

Think of it as a virtual database sitting on top of your existing infrastructure. It connects everything from relational databases and data warehouses to cloud data stores and streaming sources, eliminating the problems of siloed data while maintaining the highest security standards.

The ‘Query, Don’t Move’ Principle

The defining philosophy is “query, don’t move.” When you need to analyze data, the query—or an entire algorithm—travels to the data source, processes the information locally, and returns only the results. The raw, sensitive data never leaves its original location.

This creates virtualized access, giving users a unified view of data without needing to know its physical storage or format. A single federated query can combine information from multiple sources in real time, eliminating manual data consolidation and the latency of ETL pipelines.

By avoiding data replication, these platforms drastically reduce storage costs, simplify governance, and lower the risk of a data breach. Crucially, this approach respects data sovereignty, ensuring data remains in its original location to comply with regulations like GDPR and HIPAA.
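As a minimal sketch of the “query, don’t move” idea, the snippet below sends a small computation to each data holder and brings back only summary numbers. The `DataSite` class, the `local_summary` function, and the records are all hypothetical and exist only for illustration; a real platform would add authentication, networking, and disclosure controls.

```python
class DataSite:
    """Hypothetical data holder. Raw records stay private to the site."""

    def __init__(self, name, records):
        self.name = name
        self._records = records  # never handed back to the caller

    def run(self, computation):
        # The computation travels to the data; only its result leaves the site.
        return computation(self._records)


def local_summary(records):
    """Runs inside each site and returns aggregates, not rows."""
    ages = [r["age"] for r in records]
    return {"count": len(ages), "age_sum": sum(ages)}


# Made-up records standing in for two separate institutions.
sites = [
    DataSite("site_a", [{"age": 61}, {"age": 47}]),
    DataSite("site_b", [{"age": 55}, {"age": 72}, {"age": 39}]),
]

# The coordinator only ever sees the per-site summaries.
partials = [site.run(local_summary) for site in sites]
total = sum(p["count"] for p in partials)
mean_age = sum(p["age_sum"] for p in partials) / total
```

Returning a count and a sum, rather than a per-site mean, lets the coordinator compute an exact global mean without seeing any individual record.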

The Architecture That Makes It Possible

How does a federated platform achieve this? Through a set of cooperating components, each with a distinct job:

- Data source connectors act as intelligent gateways. These are more than simple APIs; they are adapters that translate a standardized query from the federated engine into the native language of each source system (e.g., SQL, NoSQL queries, REST API calls). They handle authentication, protocol conversion, and data type mapping, allowing the platform to communicate with diverse sources like SQL databases, cloud storage (S3, Azure Blob), data warehouses (Snowflake, Redshift), and specialized formats like FHIR.

- The federated query engine is the brain of the operation. When it receives a query, it performs several critical tasks. First, it consults the common data model to understand the request. Then, it decomposes the query into optimized sub-queries for each relevant data source. Its optimization process, often using cost-based analysis, determines the most efficient execution plan, deciding what operations to “push down” to the source systems to minimize data transfer. Finally, it orchestrates the parallel execution of these sub-queries.

- A common data model (or semantic layer) is the Rosetta Stone of the federated platform. It harmonizes different schemas, naming conventions, and data structures into a unified, logical view. This abstraction layer allows users to query data using familiar business terms (e.g., “customer,” “patient visit”) without needing to know the technical details of the underlying source systems (e.g., `CUST_TBL` in one database, `customer_document` in another). This greatly simplifies the user experience and accelerates analysis.

- The security and governance layer is a non-negotiable, centralized control plane. It enforces access controls, data masking, and audit trails across all connected sources, ensuring that every interaction complies with organizational and regulatory policies. This layer manages user identities and applies role-based (RBAC) or attribute-based (ABAC) access control to columns, rows, or even individual data cells. Our Federated Data Governance solutions are built to manage these complexities at scale.

- An orchestrator is essential for coordinating activities in large, complex federated networks, especially those involving multiple organizations. It acts as a central point of trust, monitoring the health of the network, managing query workflows, and ensuring that all operations are executed smoothly and securely. In federated learning, the orchestrator also manages the distribution of models and the aggregation of updates.
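To make the connector and common-data-model roles more concrete, here is a small sketch in which one logical request for “customer” is translated into a SQL string for one source and a document-style query for another. The table and collection names echo the `CUST_TBL` and `customer_document` example above; the source labels, column names, and query shapes are assumptions invented for illustration and do not reflect any particular product’s API.

```python
# Hypothetical mapping from a business term to each source's physical names.
COMMON_MODEL = {
    "customer": {
        "crm_sql": {
            "table": "CUST_TBL",
            "fields": {"id": "CUST_ID", "name": "CUST_NM"},
        },
        "orders_docs": {
            "collection": "customer_document",
            "fields": {"id": "_id", "name": "fullName"},
        },
    }
}


def to_sql(entity, fields, source):
    """Connector for a relational source: emit SQL using native column names."""
    mapping = COMMON_MODEL[entity][source]
    cols = ", ".join(f'{mapping["fields"][field]} AS {field}' for field in fields)
    return f'SELECT {cols} FROM {mapping["table"]}'


def to_document_query(entity, fields, source):
    """Connector for a document store: emit a collection name and projection."""
    mapping = COMMON_MODEL[entity][source]
    return {
        "collection": mapping["collection"],
        "projection": {mapping["fields"][field]: 1 for field in fields},
    }


# The same logical request, expressed in each source's native form.
sql_query = to_sql("customer", ["id", "name"], "crm_sql")
doc_query = to_document_query("customer", ["id", "name"], "orders_docs")
```

The user only ever writes `customer`, `id`, and `name`; the mapping absorbs the naming differences between systems.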

How a Federated Query Works: A Deeper Look

Imagine a researcher analyzing the link between a specific gene and treatment outcomes, with data spread across three different hospital databases. Here’s a more detailed breakdown of the federated query process:

- Single Query Submission: The researcher submits a single query to the platform, asking for the correlation between the gene and outcomes for patients over 50. The query is written against the common data model, not the individual databases.

- Query Decomposition and Optimization: The federated query engine parses the request. It identifies that patient demographics are in Hospital A’s EHR, genomic data is in Hospital B’s database, and treatment outcomes are in Hospital C’s clinical trial system. The engine creates an optimized plan, deciding to filter for age at each source first (a “predicate pushdown”) to reduce the amount of data processed.

- Sub-Query Execution: The engine dispatches the optimized sub-queries to the respective connectors for each hospital system. Each connector translates the sub-query into the native language of its data source.

- Local Processing: Each system processes its query locally. Hospital A finds patients over 50, Hospital B identifies those with the target gene, and Hospital C retrieves their treatment outcomes. The raw data never moves between systems or leaves the hospital’s secure environment.

- Results Aggregation: Only the anonymized, intermediate results (e.g., lists of patient IDs or aggregated statistics) are sent back to the query engine. The engine then performs the final join or aggregation across these results.

- Unified Response: The engine combines the intermediate results and presents a single, comprehensive answer to the researcher in near real-time, without ever having centralized the sensitive source data.
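The steps above can be sketched with three in-memory SQLite databases standing in for the three hospitals. Everything here is illustrative: the table names, the gene label, the patient identifiers, and the `federated_response_rate` function are assumptions, and a production engine would exchange pseudonymized identifiers or aggregates over authenticated connectors rather than share a Python process.

```python
import sqlite3


def make_source(ddl, insert_sql, rows):
    """Create an isolated in-memory database representing one hospital."""
    conn = sqlite3.connect(":memory:")
    conn.execute(ddl)
    conn.executemany(insert_sql, rows)
    return conn


hospital_a = make_source(
    "CREATE TABLE demographics (patient_id TEXT, age INTEGER)",
    "INSERT INTO demographics VALUES (?, ?)",
    [("p1", 64), ("p2", 45), ("p3", 58), ("p4", 71)],
)
hospital_b = make_source(
    "CREATE TABLE variants (patient_id TEXT, gene TEXT)",
    "INSERT INTO variants VALUES (?, ?)",
    [("p1", "BRCA1"), ("p2", "BRCA1"), ("p3", "TP53"), ("p4", "BRCA1")],
)
hospital_c = make_source(
    "CREATE TABLE outcomes (patient_id TEXT, responded INTEGER)",
    "INSERT INTO outcomes VALUES (?, ?)",
    [("p1", 1), ("p2", 0), ("p3", 1), ("p4", 0)],
)


def federated_response_rate(gene, min_age):
    # Predicate pushdown: each filter runs inside the source that owns the column.
    older = {row[0] for row in hospital_a.execute(
        "SELECT patient_id FROM demographics WHERE age > ?", (min_age,))}
    carriers = {row[0] for row in hospital_b.execute(
        "SELECT patient_id FROM variants WHERE gene = ?", (gene,))}

    # The engine joins the intermediate ID sets, not the raw tables.
    cohort = sorted(older & carriers)
    if not cohort:
        return {"cohort_size": 0, "response_rate": None}

    # Hospital C aggregates locally and returns two numbers.
    marks = ",".join("?" * len(cohort))
    count, responders = hospital_c.execute(
        f"SELECT COUNT(*), SUM(responded) FROM outcomes "
        f"WHERE patient_id IN ({marks})", cohort).fetchone()
    return {"cohort_size": count, "response_rate": responders / count}


result = federated_response_rate("BRCA1", 50)
```

Because the age and gene filters run at the source, only the matching identifiers cross the boundary, and the outcomes table is reduced to a count and a sum before anything is returned.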

Federation vs. Data Warehouse vs. Data Lakehouse: Stop Paying to Move Data

When trying to make sense of scattered data, organizations have traditionally chosen a data warehouse. However, the modern data landscape offers more options: the data lakehouse and the federated data platform. They take fundamentally different approaches, and your choice impacts speed, cost, security, and legal compliance.

- Data Warehouses use the Extract, Transform, Load (ETL) model. They physically copy structured data from source systems, standardize it into a rigid schema, and load it into a central repository. This batch process means data is never truly live, but it’s highly optimized for predictable business intelligence (BI) and reporting.

- Data Lakehouses are an evolution of data lakes. They store all types of data (structured, semi-structured, unstructured) in a central, low-cost object store. They then apply warehouse-like management features (e.g., ACID transactions, schema enforcement) on top, offering more flexibility than a warehouse but still requiring data to be centralized.

- Federated Data Platforms take a completely different approach. They leave the data where it is and create a virtual access layer on top. Instead of moving data, they move queries. This provides real-time access to live data in its original context.

Here’s how the three architectures stack up:

| Feature | Federated Data Platform | Data Lakehouse | Data Warehouse |
|---|---|---|---|
| Data Location | Data remains at its original source (decentralized) | Data is copied and stored centrally in open formats | Data is copied and stored centrally in proprietary formats |
| Data Freshness | Real-time access to live, operational data | Near real-time or batch, depending on ingestion | Typically batch-processed, leading to latency |
| Cost | Lower storage costs; leverages existing infrastructure | Moderate storage costs (low-cost object store); compute costs vary | High storage and ETL infrastructure costs |
| Scalability | Scales by leveraging existing source systems | Highly scalable storage and compute (decoupled) | Requires scaling of central storage and processing (coupled) |
| Use Case | Real-time analytics, data residency, multi-cloud/hybrid queries | BI and ML on all data types; streaming analytics | Historical analysis, standard reporting, BI on structured data |
| Schema | Virtual common schema (schema-on-read) | Schema-on-write and schema-on-read | Enforced, rigid schema (schema-on-write) |

When to Choose Federation

Choose federation when data cannot or should not be moved. It is the superior choice for:

- Real-time analytics on live operational data, such as fraud detection or supply chain monitoring, where ETL latency is unacceptable.

- Strict data residency and compliance, as it keeps data within jurisdictional boundaries by design, which helps meet regulations like GDPR and HIPAA. This is non-negotiable in fields like healthcare and international finance.

- Analyzing highly heterogeneous data, where the cost and complexity of forcing everything into a single central schema are prohibitive.

- Ad-hoc exploratory analysis, allowing data scientists and analysts to quickly query new combinations of data sources without waiting for a new ETL pipeline to be built.

When a Warehouse or Lakehouse Makes Sense

Centralized architectures like data warehouses and lakehouses remain valuable for use cases that benefit from a consolidated, curated, and historically deep dataset. They are the right choice for:

- Large-scale aggregation of historical data for deep analytical queries, such as quarterly financial reporting or longitudinal patient studies.

- Predictable, high-performance BI reporting where query patterns are known and the underlying data model can be highly optimized.

- Training complex AI and machine learning models on large, static, and pre-processed historical datasets where performance and consistency are paramount.

A data lakehouse is often preferred over a traditional warehouse when an organization needs to incorporate unstructured data (like images or text) into its analytics and ML workflows.

The Power of a Hybrid Approach

Leading organizations rarely make an either/or choice. Instead, they use a hybrid approach that combines the strengths of each architecture. For example, a multinational bank might use a federated platform for real-time, cross-border fraud detection, querying live transaction data from systems in the US, EU, and Asia without violating data sovereignty laws. Simultaneously, it might load curated, anonymized subsets of that data into a central data warehouse each night to train fraud prediction models and perform historical risk analysis. This combines the agility of federation with the deep analytical power of a centralized repository, creating a powerful, flexible, and compliant data ecosystem.

4 Reasons to Federate: Faster Insights, Lower Costs, Tighter Security

The shift to federated data platforms is driven by clear benefits that solve today’s biggest data challenges. They offer a more efficient, secure, and cost-effective way to generate actionable insights.

1. Get Instant Insights from Real-Time Data

Federated platforms query live operational data directly, eliminating the delays of traditional ETL (Extract, Transform, Load) processes. This gives you:

- Live Queries: Run analysis against operational data for immediate decision-making.

- No ETL Latency: Bypass the delays of moving and transforming data to get insights when they matter most.

- Greater Business Agility: React faster to market changes, customer behavior, or critical events.

2. Cut Storage and Infrastructure Costs

Federation offers a lean approach to data management that leads to significant savings:

- No Redundant Storage: Since data isn’t duplicated, you avoid paying for extra hardware or cloud storage.

- Reduced Hardware Investment: The platform leverages your existing infrastructure instead of requiring new, expensive systems.

- Minimal Overhead: With less data movement and fewer systems to manage, operational costs are drastically reduced.

3. Strengthen Security and Simplify Compliance

Federated platforms are inherently more secure, especially for sensitive information:

- Data Stays in Place: By not moving data, you virtually eliminate the risk of it being lost or breached in transit.

- Simplified Compliance: Federation makes it easier to meet data protection and residency requirements under regulations like GDPR and HIPAA, because data stays in its original jurisdiction and under its custodian’s control.

- Centralized Policy Control: A central governance layer enforces security policies, role-based access control (RBAC), and data masking across all sources. Our Federated Data Governance approach ensures these controls are robust, often within a Federated Trusted Research Environment.

4. Unify All Your Data Silos

Federated platforms break down the barriers between disconnected data systems:

- Connects Diverse Formats: Query data across SQL databases, cloud storage, and specialized scientific formats from a single interface.

- Flexible Schema: Harmonization happens virtually at query time, so you don’t need to force all data into a single, rigid schema.

- Single Source of Truth: Create a virtual “single source of truth” for analytics, enabling 360-degree views of customers, patients, or operations.

Federation in Action: How Modern Industries Win With Data

Understanding what a federated data platform is becomes easier when you see it in action. These solutions are enabling breakthroughs across multiple sectors that were impossible with traditional, centralized approaches.

Healthcare: Accelerating Research Without Compromising Privacy

In healthcare, where data is extremely sensitive and siloed, federation is transformative. Large-scale initiatives like the NHS Federated Data Platform are connecting millions of patient records to improve care without creating a massive central database. This model supports collaborative research across institutions, as seen in global projects like VODAN for pandemic response and FeTS for AI-driven tumor analysis. It also powers real-time pharmacovigilance, allowing regulators to monitor drug safety by querying adverse event data across diverse, distributed datasets.

At Lifebit, our platform enables secure analysis of global biomedical and genomic data, accelerating precision medicine while upholding the strictest privacy standards. A central orchestrator is vital in these networks, providing governance and ensuring the entire ecosystem operates safely and at scale—a model we’ve refined through years of work with public health agencies and biopharma organizations.

Finance: Fighting Fraud in Real-Time

Financial institutions face a constant battle against sophisticated fraud. With transaction data, customer information, and risk profiles stored across multiple cloud and on-premises systems, getting a complete, real-time view is a challenge.

A federated model for fraud detection changes the game. Banks can analyze transactions across all platforms simultaneously without data replication, enabling real-time monitoring for suspicious activity. This cross-platform monitoring helps analysts spot complex fraud patterns—like synthetic identity fraud—that are invisible when looking at isolated systems. By keeping data in place, banks can train more effective fraud models without centralizing sensitive customer data, satisfying both security and regulatory requirements like GDPR.

Manufacturing and IoT: Predictive Maintenance at Scale

Modern manufacturing relies on the Internet of Things (IoT), with factories generating petabytes of sensor data from production lines. Centralizing this data for analysis is often impractical due to network bandwidth limitations and storage costs. A federated platform allows a manufacturer to analyze this data in place. Engineers can run queries to monitor equipment health across multiple factories globally, identify anomalies, and predict maintenance needs in real time. For example, an algorithm can be sent to each factory’s local server to analyze vibration and temperature data, returning only alerts for machines at risk of failure. This prevents costly downtime without overwhelming the corporate network.
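The “send the algorithm, return only alerts” pattern described above might look like the following sketch. The thresholds, field names, and machine identifiers are hypothetical, and a real deployment would likely use a trained anomaly model rather than fixed limits.

```python
from statistics import mean


def at_risk_machines(readings, max_vibration=7.0, max_temp_c=85.0):
    """Runs on a factory's local server and returns alerts, not sensor data.

    The default thresholds are illustrative assumptions, not engineering guidance.
    """
    alerts = []
    for machine_id, samples in readings.items():
        avg_vibration = mean(s["vibration"] for s in samples)
        avg_temp_c = mean(s["temp_c"] for s in samples)
        if avg_vibration > max_vibration or avg_temp_c > max_temp_c:
            alerts.append({
                "machine": machine_id,
                "avg_vibration": round(avg_vibration, 2),
                "avg_temp_c": round(avg_temp_c, 2),
            })
    return alerts


# Made-up sensor samples held at one factory.
factory_readings = {
    "press_01": [{"vibration": 3.1, "temp_c": 61.0}, {"vibration": 3.4, "temp_c": 63.0}],
    "press_02": [{"vibration": 8.2, "temp_c": 70.0}, {"vibration": 9.0, "temp_c": 72.0}],
}

alerts = at_risk_machines(factory_readings)
```

Only the short `alerts` list would travel back to headquarters; the raw time series stays on the factory network.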

The Future: Smarter, Faster, and More Connected

The evolution of federated platforms is accelerating, driven by AI, edge computing, and hybrid cloud needs.

- AI-Powered Query Optimization: Future platforms will use machine learning to enhance query optimization. By learning from past query patterns, the engine will automatically determine the most efficient way to access, join, and combine data, making platforms faster and more intuitive for non-technical users.

- Hybrid and Multi-Cloud Federation: As organizations increasingly use multiple cloud providers alongside on-premises systems, federated platforms that can seamlessly query across AWS, Azure, GCP, and private data centers are becoming the norm. This prevents cloud vendor lock-in and provides a unified view of the entire data estate.

- Federation-as-a-Service (FaaS): Cloud providers are making it easier for organizations to deploy these platforms without large upfront investments. Managed FaaS solutions handle the complexity of connector maintenance and query engine management, lowering the barrier to entry.

- Federated Learning at Scale: This is more than a trend; it’s a paradigm shift. Instead of bringing data to a central model, federated learning brings the model to the data. An initial AI model is sent to each data source, where it trains on local data. Only the resulting model updates (weights and parameters), not the raw data, are sent back to a central server. These updates are aggregated to create an improved global model, which is then sent back out for another round of training. As our work in Federated Learning in Healthcare shows, this iterative process allows AI models to learn from diverse, distributed datasets without the raw data ever leaving its source, and it can achieve results comparable to centralized training.

- Federation at the Edge: The rise of edge computing means more data is being generated and processed on devices like sensors, cameras, and smartphones. Federated platforms are extending to the edge, allowing analysis to happen directly on these devices. This is critical for applications requiring ultra-low latency, such as real-time quality control on an assembly line or autonomous vehicle navigation.
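The federated learning loop described in the list above (send the model out, train locally, aggregate the updates, repeat) can be sketched in a few lines. This is a toy version of weighted federated averaging on a one-parameter linear model, using made-up data; the function names, learning rate, and round count are illustrative assumptions, and real systems add secure aggregation and other privacy protections on top.

```python
def local_update(weight, data, lr=0.01, epochs=5):
    """Train on one site's private data and return (new_weight, sample_count)."""
    for _ in range(epochs):
        # Gradient of mean squared error for the model y = weight * x.
        grad = sum(2 * (weight * x - y) * x for x, y in data) / len(data)
        weight -= lr * grad
    return weight, len(data)


def federated_average(updates):
    """Combine site weights, weighting each by how many samples it trained on."""
    total = sum(n for _, n in updates)
    return sum(w * n for w, n in updates) / total


# Two sites whose private data both follow y = 2x. The points never leave the site.
site_data = [
    [(1.0, 2.0), (2.0, 4.0)],
    [(3.0, 6.0), (4.0, 8.0), (5.0, 10.0)],
]

global_weight = 0.0
for _ in range(20):
    # Each round: broadcast the global weight, train locally, aggregate.
    updates = [local_update(global_weight, data) for data in site_data]
    global_weight = federated_average(updates)
```

After the rounds complete, `global_weight` should sit very close to 2.0, even though the coordinator only ever handled weights and sample counts.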

Your Top 5 Questions About Federation, Answered

As organizations explore federated data platforms, a few key questions always come up. Here are the answers to the most common ones.

1. What’s the difference between data federation and data virtualization?

Data virtualization is the broad concept of creating a unified, abstract data view without revealing its physical location or format. Data federation is a specific type of data virtualization focused on enabling a single query to run across multiple, heterogeneous data sources in real time. Think of virtualization as the “what” (an abstract view) and federation as the “how” (a query engine that joins distributed sources). In short, all federation is virtualization, but not all virtualization is federation (e.g., a simple data proxy for a single database is also a form of virtualization).

2. Can a federated platform replace our data warehouse?

Not always—they often work best together in a hybrid architecture. A federated platform is ideal for real-time answers from live, distributed data, especially when compliance requires data to stay in place. A data warehouse excels at deep historical analysis and high-performance reporting on large volumes of curated, static data. Many organizations use federation for live operational queries (e.g., “What is the current inventory level across all our warehouses?”) and a data warehouse for long-term trend analysis (e.g., “How have inventory levels changed year-over-year?”).

3. How is governance managed in a federated system?

This is a critical question. While data remains distributed, governance is centralized. A central control plane or governance layer is responsible for enforcing security policies, access controls, and compliance rules uniformly across the entire network. This is how it works:

- Centralized Policy Management: Security policies are defined once and applied everywhere. This includes role-based access control (RBAC) and attribute-based access control (ABAC), which grant access based on user roles, data sensitivity, and other contextual attributes.

- Virtual Data Protection: Techniques like dynamic data masking and anonymization can be applied at query time. This protects raw data at its source while allowing analysts and researchers to work with it safely.

- Comprehensive Audit Trails: The central platform logs every query and access attempt, providing a complete, unified audit trail. This transparency is essential for regulatory compliance (e.g., GDPR, HIPAA) and for building trust among data owners.

For highly sensitive work, organizations often use a Federated Trusted Research Environment. These secure spaces allow researchers to bring approved algorithms to the data under strict, auditable controls.
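As a rough sketch of how the three governance mechanisms above fit together, the snippet below applies a role policy, masks a sensitive column at query time, and records every access attempt. The roles, column names, masking rule, and `governed_rows` function are hypothetical; real platforms typically delegate identity and policy to dedicated services.

```python
from datetime import datetime, timezone

# Hypothetical role policies: which columns are visible and which are masked.
POLICIES = {
    "researcher": {"visible": {"age", "diagnosis"}, "masked": {"patient_id"}},
    "clinician": {"visible": {"patient_id", "age", "diagnosis"}, "masked": set()},
}
AUDIT_LOG = []


def mask(value):
    """Keep the last two characters and hide the rest."""
    text = str(value)
    return "*" * max(len(text) - 2, 0) + text[-2:]


def governed_rows(user, role, rows):
    policy = POLICIES.get(role)
    # Every attempt is logged, whether or not it is allowed.
    AUDIT_LOG.append({
        "user": user,
        "role": role,
        "allowed": policy is not None,
        "at": datetime.now(timezone.utc).isoformat(),
    })
    if policy is None:
        raise PermissionError(f"role {role!r} has no access policy")

    shaped = []
    for row in rows:
        out = {}
        for column, value in row.items():
            if column in policy["visible"]:
                out[column] = value
            elif column in policy["masked"]:
                out[column] = mask(value)
            # Columns in neither set are dropped entirely.
        shaped.append(out)
    return shaped


sample = [{"patient_id": "P-10042", "age": 63, "diagnosis": "T2D", "address": "12 Elm St"}]
researcher_view = governed_rows("ana", "researcher", sample)
```

The source row is never modified; masking and column filtering happen on the way out, which is what lets one policy definition apply across every connected source.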

4. What are the performance considerations for a federated platform?

Performance is a valid concern, as federated queries can be slower than querying a centralized, optimized data warehouse. Latency is influenced by three main factors: network speed between the platform and data sources, the performance of the underlying data sources themselves, and the complexity of the query. However, modern federated platforms use several techniques to mitigate this:

- Query Optimization: The query engine’s ability to push down processing to the source systems is paramount. This minimizes the amount of data that needs to be moved over the network.

- Intelligent Caching: Frequently accessed data or intermediate query results can be cached within the federation layer to speed up subsequent queries.

- Parallel Execution: The platform executes sub-queries against multiple sources in parallel, reducing the total query time.

- Materialized Views: For common and complex queries, some platforms allow the creation of materialized views, which precompute and store query results for near-instant access.
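Two of the techniques above, parallel execution and caching, can be illustrated with the standard library alone. In this sketch the source names, stock counts, and the simulated 50 ms delay are invented; `query_source` stands in for a real connector call.

```python
import time
from concurrent.futures import ThreadPoolExecutor
from functools import lru_cache

# Hypothetical per-region stock counts standing in for remote systems.
SOURCES = {"warehouse_eu": 120, "warehouse_us": 340, "warehouse_asia": 95}
CALLS = []  # records which sources were actually contacted


@lru_cache(maxsize=128)
def query_source(name, sku):
    """Simulated remote sub-query; results are cached per (source, sku)."""
    CALLS.append(name)
    time.sleep(0.05)  # pretend network and source latency
    return SOURCES[name]


def total_inventory(sku):
    # Sub-queries run in parallel, so elapsed time tracks the slowest source
    # rather than the sum of all sources.
    with ThreadPoolExecutor(max_workers=len(SOURCES)) as pool:
        counts = list(pool.map(lambda name: query_source(name, sku), SOURCES))
    return sum(counts)


first = total_inventory("sku-1")   # contacts all three sources
second = total_inventory("sku-1")  # served from the cache
```

Caching trades freshness for speed, so a real platform would pair it with an expiry or invalidation policy suited to how quickly each source changes.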

5. What are the biggest challenges when implementing a federated data platform?

Implementation involves both technical and organizational hurdles. The main challenges include:

- Data Source Heterogeneity: Mapping schemas and semantics from dozens of different systems into a single common data model can be complex and time-consuming. It requires a deep understanding of the source data.

- Connector Maintenance: Each data source needs a connector, and these connectors must be maintained and updated as source systems change or APIs are upgraded.

- Network Reliability and Latency: Since the platform relies on the network to reach data sources, performance can be impacted by network instability or high latency, especially in globally distributed environments.

- Organizational Buy-In: The biggest challenge is often not technical but political. You must convince the owners of different data silos to connect their systems to the platform. This requires building trust and demonstrating the value of a robust, centralized governance model that respects their ownership and security concerns.

Stop Moving Data. Start Getting Answers.

Data silos are a barrier to innovation. Forcing data into a central repository is slow, expensive, and risky. A federated data platform offers a better way: bring the analysis to the data. Your information stays secure and compliant, while you get instant, unified insights.

The benefits are clear: real-time access, lower costs, and stronger security. We see this approach changing industries from healthcare to finance, enabling breakthroughs that were once impossible.

The future looks even more promising with AI-powered optimization and federated learning. The organizations that adopt this approach today are positioning themselves to lead tomorrow.

At Lifebit, we’ve spent over a decade building federated data platforms for the complexities of biomedical research. We understand that working with multi-omic datasets and EHRs requires deep domain expertise and an unwavering commitment to security. That’s why leading biopharma companies, government agencies, and public health organizations trust us to power their most critical research.

The question isn’t whether federation will become the standard—it’s whether you’ll be ahead of the curve. Your data holds the answers. The right platform helps you find them.

Ready to transform your data strategy and connect your global biomedical data? Explore how we can help with the Lifebit Federated Biomedical Data Platform and turn your data into discovery.