How to Build a Scalable Data Analytics Workflow

Why Scalable Data Analytics Is No Longer Optional

Scalable data analytics is the ability of a data system to efficiently handle growing volumes, complexity, and user demands without performance degradation or constant infrastructure overhauls. Here’s what you need to know:

- The Problem: 86% of analysts work with out-of-date data, and 62% wait on engineering resources multiple times per month

- The Investment: 87.8% of organizations increased analytics spending in 2022, with 83.9% planning to continue through 2024

- The Gap: Only 23.9% say they’ve become truly data-driven, and just 40.8% believe they’re fully leveraging their data

- The Solution: Modern scalable architectures—like Lakehouses—combine low-cost storage with fast queries, ACID transactions, and open formats to eliminate bottlenecks

Your data is growing exponentially. Your analytics infrastructure isn’t.

Global pharma companies, public health agencies, and regulatory bodies now manage petabytes of siloed EHR, claims, and genomics data. Yet most teams still battle the same three problems: slow data onboarding, stale insights, and waiting on engineering.

Traditional data warehouses force you to choose between speed and flexibility. Two-tier lake-plus-warehouse setups create double ETL pipelines, leading to data that’s days or weeks behind operational reality. And as volumes surge—from real-world evidence to multi-omic datasets—legacy systems simply collapse under the load.

The result? Billions spent on analytics that never reach decision-makers in time.

But there’s a better way. Modern scalable architectures don’t just add more servers—they fundamentally rethink how data is stored, processed, and governed. They use open formats like Parquet and Delta Lake, metadata layers for ACID compliance, and distributed compute that scales horizontally without breaking the bank. They turn analytics from a bottleneck into a product that teams can build, version, and improve like software.

I’m Maria Chatzou Dunford, CEO and Co-founder of Lifebit, where we’ve spent over a decade building scalable data analytics platforms for genomics, biomedical research, and federated health data across secure, compliant environments. My background spans computational biology, AI, and high-performance computing—domains where petabyte-scale analytics isn’t a luxury, it’s table stakes.

In this guide, I’ll walk you through the exact components, strategies, and tools you need to build a scalable analytics workflow that won’t break when your data—or your ambitions—grow.


Why Legacy Data Architectures Fail at Scale

In the early days of big data, we relied on two distinct silos: the data warehouse for structured, high-performance business intelligence and the data lake for cheap, massive-scale storage of raw files. Today, this “two-tier” architecture is the primary reason organizations struggle with scalable data analytics.

When you store data in a lake and then move it to a warehouse for analysis, you create a “double ETL” nightmare. This process introduces lag, often days or weeks, which helps explain why 86% of analysts report working with out-of-date data. Furthermore, data warehouses often use proprietary formats that make it nearly impossible for advanced AI/ML tools to access the data directly. This creates a “Data Gravity” problem: as your data grows, it becomes increasingly difficult and expensive to move, effectively locking your insights behind a paywall of egress fees and proprietary API limitations.

| Feature | Traditional Data Warehouse | Traditional Data Lake | Lifebit’s Lakehouse Approach |
|---|---|---|---|
| Data Format | Proprietary | Open (CSV, JSON, Parquet) | Open (Delta Lake, Iceberg) |
| Performance | High (SQL only) | Low (Scanning files) | High (SQL & AI/ML) |
| Cost | High (Storage + Compute) | Low (Storage only) | Optimized (Separated) |
| ACID Support | Yes | No | Yes |
| Scalability | Vertical (Expensive) | Horizontal (Unmanaged) | Horizontal (Elastic) |

Legacy systems suffer from “accidental complexity.” Every time you want to scale, you have to stitch together disparate systems, leading to hidden inefficiencies and security gaps. This often results in a “Data Swamp,” where data is ingested but never indexed, governed, or utilized because the cost of processing it exceeds the perceived value. For a deeper dive into these challenges, check out our Big data analytics complete guide and our breakdown of data warehouses.

The Shift to True Horizontal Scaling

When a system hits a performance wall, the old-school response was “vertical scaling”: buying a bigger, faster server. But in multi-omic data and global health records, there is no server big enough. A single whole-genome sequencing (WGS) file can exceed 100GB; across a cohort of 500,000 participants, that adds up to roughly 50 petabytes of raw data (100GB × 500,000), far more than any single machine can store or process.

True scalable data analytics requires horizontal scaling. This means adding more machines (nodes) to a distributed cluster. If a task is too big for one machine, we split it across 100 or 1,000 nodes. This architecture provides:

- Elasticity: Spinning up resources only when needed (e.g., for a massive GWAS study) and turning them off to save costs. This “pay-as-you-go” model is essential for research budgets that fluctuate between discovery and validation phases.

- Redundancy: If one node fails, the cluster continues to process the data without losing progress. In legacy systems, a single hardware failure during a 48-hour query could mean starting from scratch.

- High Throughput: Processing petabytes in minutes rather than months by parallelizing I/O operations across the entire cluster.
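
The split-and-combine idea behind horizontal scaling can be sketched in a few lines. This is only an illustration under loud assumptions: threads stand in for cluster nodes, and the record layout (`is_variant`) and function names are hypothetical, not part of any Lifebit API.

```python
from concurrent.futures import ThreadPoolExecutor


def count_variants(chunk):
    """Work done by one 'node': count the records flagged as variants."""
    return sum(1 for record in chunk if record["is_variant"])


def split(records, n_workers):
    """Deal the records out into n_workers roughly equal chunks."""
    return [records[i::n_workers] for i in range(n_workers)]


def parallel_count(records, n_workers=4):
    """Process each chunk independently, then combine the partial results."""
    chunks = split(records, n_workers)
    with ThreadPoolExecutor(max_workers=n_workers) as pool:
        partials = list(pool.map(count_variants, chunks))
    return sum(partials)


# Hypothetical input: every third record is a variant.
records = [{"id": i, "is_variant": i % 3 == 0} for i in range(1_000)]
total = parallel_count(records, n_workers=8)
```

Because each chunk is processed independently, the answer does not depend on the number of workers. That property is what lets a real cluster add or remove nodes without changing results.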

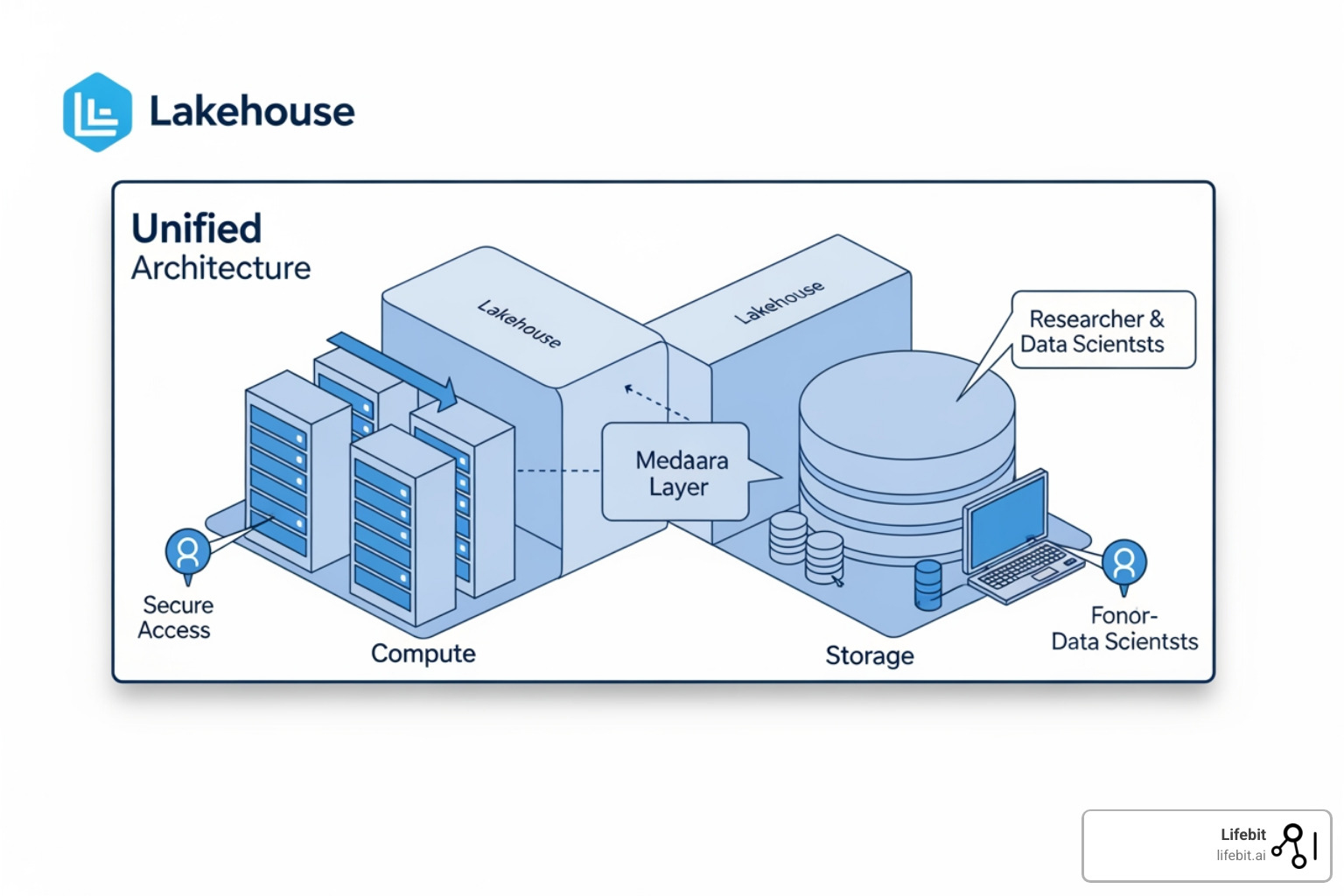

According to scientific research on Lakehouse architecture, this shift allows organizations to match the speed of specialized databases while maintaining the flexibility of open data lakes. It effectively decouples compute from storage, allowing you to scale your processing power independently of your data volume.

Breaking the “Analytics as a Service” Bottleneck

For too long, analytics has operated as a “service” model. A researcher or business lead has a question, they file a ticket, and they wait for a data engineer to build a pipeline. This creates a massive bottleneck, often referred to as the “Data Engineering Tax.” By the time the data is ready, the clinical window or market opportunity may have closed.

To scale, we must move toward Analytics as a Product. This involves creating curated, high-quality datasets that are documented, version-controlled, and ready for self-service. By using advanced analytics solutions, we empower analysts to “thrive in the forest” rather than waiting for someone to plant the trees for them. This cultural shift is as important as the technical one; it requires treating data with the same rigor as software code, including CI/CD (Continuous Integration/Continuous Deployment) for data pipelines.

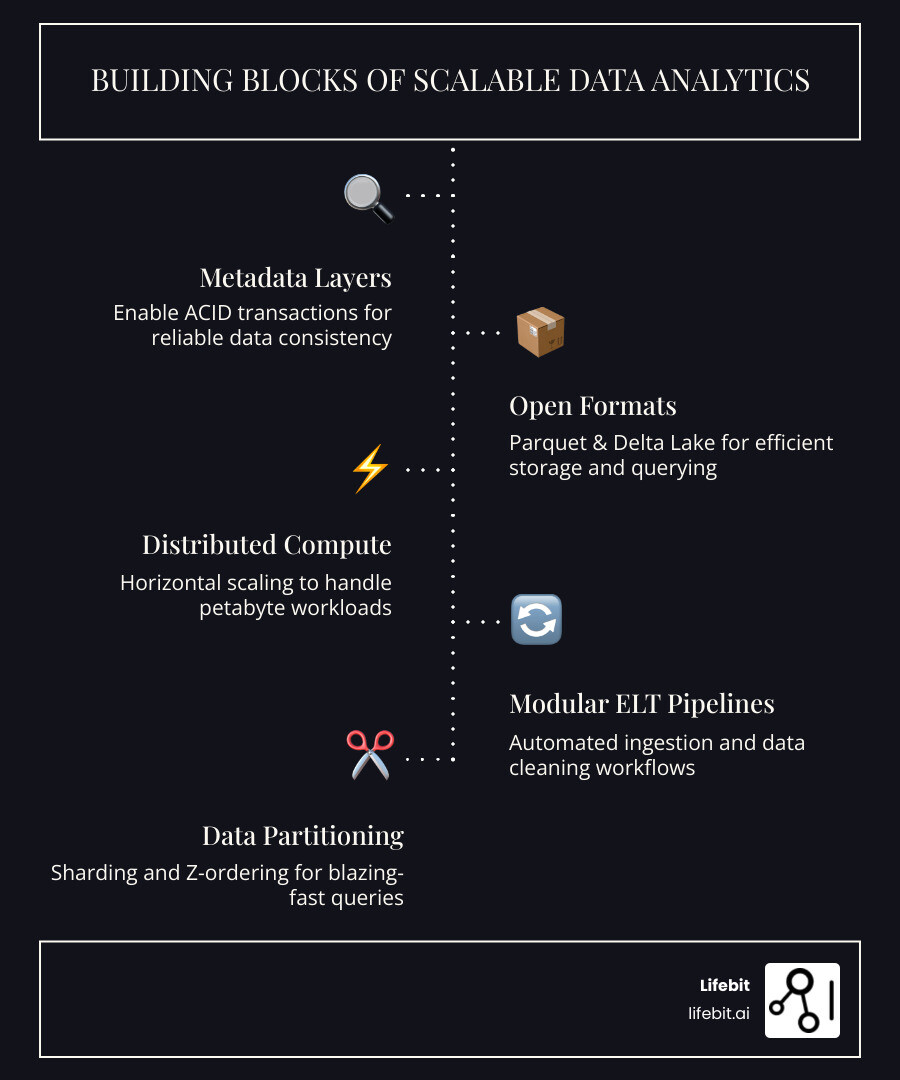

The Building Blocks of Scalable Data Analytics with Lifebit

At Lifebit, we utilize a Lakehouse architecture to unify the best parts of warehouses and lakes. The core of this system is the metadata layer. By using open formats like Parquet combined with transactional layers like Delta Lake, we bring ACID (Atomicity, Consistency, Isolation, Durability) transactions to the data lake. This ensures that even when hundreds of researchers are querying the same petabyte-scale dataset, the data remains consistent and reliable.

If you are wondering what a data lakehouse is, it is essentially the engine that enables data lakehouse best practices such as schema enforcement and data versioning (time travel). Time travel is particularly critical for reproducible scientific research: it allows a scientist to re-run an analysis on the exact state of the data as it existed six months ago, so that later ingestions do not skew historical results.
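
To make time travel concrete, here is a toy sketch of an append-only table whose commit log lets a reader ask for any earlier version. It is a simplified mental model, not how Delta Lake is actually implemented (real systems persist the log as files alongside Parquet data), and the class and method names are hypothetical.

```python
class VersionedTable:
    """Toy append-only table whose commit log enables time travel."""

    def __init__(self):
        # Each commit is stored as an immutable tuple of rows.
        self._commits = []

    def append(self, rows):
        """Commit a batch as one unit and return the new version number."""
        self._commits.append(tuple(rows))
        return len(self._commits)

    def read(self, version=None):
        """Return all rows as of `version` (default: the latest version)."""
        if version is None:
            version = len(self._commits)
        if not 0 <= version <= len(self._commits):
            raise ValueError(f"unknown version {version}")
        return [row for commit in self._commits[:version] for row in commit]


table = VersionedTable()
v1 = table.append([{"patient_id": "p1"}])
v2 = table.append([{"patient_id": "p2"}, {"patient_id": "p3"}])

as_of_v1 = table.read(version=v1)  # the table as it looked after the first commit
latest = table.read()              # the table as it looks now
```

Re-running an analysis against `table.read(version=v1)` gives the same input no matter how many batches have been ingested since.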

The Medallion Architecture: Organizing for Scale

To manage data quality at scale, we implement a “Medallion Architecture,” which categorizes data into three distinct layers:

- Bronze (Raw): This is the landing zone for all incoming data in its native format. It acts as a historical archive that can be reprocessed if business logic changes.

- Silver (Validated): Data in this layer is cleaned, normalized, and joined. For biomedical data, this is where we perform unit conversion (e.g., mg/dL to mmol/L) and basic quality control filtering.

- Gold (Enriched): This layer contains project-specific datasets, such as a cohort of patients with a specific genetic variant and their longitudinal health outcomes. These are optimized for high-speed consumption by BI tools and ML models.
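
The three layers can be sketched as plain functions. Everything specific here is an assumption made for illustration: the analyte is taken to be glucose (so the mg/dL to mmol/L factor is about 18.016), the field names are hypothetical, and the Gold cutoff is an arbitrary example value rather than a clinical threshold.

```python
# Assumes the analyte is glucose (molar mass ~180.16 g/mol).
GLUCOSE_MG_DL_PER_MMOL_L = 18.016

# Bronze: raw records exactly as they arrived, including bad and duplicate rows.
bronze = [
    {"patient_id": "p1", "glucose": "99", "unit": "mg/dL"},
    {"patient_id": "p2", "glucose": "5.4", "unit": "mmol/L"},
    {"patient_id": "p3", "glucose": "n/a", "unit": "mg/dL"},  # fails QC
    {"patient_id": "p1", "glucose": "99", "unit": "mg/dL"},   # duplicate
]


def to_silver(rows):
    """Silver: parse values, convert units to mmol/L, drop bad and duplicate rows."""
    seen, silver = set(), []
    for row in rows:
        try:
            value = float(row["glucose"])
        except ValueError:
            continue  # basic quality control: unparseable measurement
        if row["unit"] == "mg/dL":
            value = value / GLUCOSE_MG_DL_PER_MMOL_L
        elif row["unit"] != "mmol/L":
            continue  # unknown unit
        record = {"patient_id": row["patient_id"], "glucose_mmol_l": round(value, 2)}
        key = (record["patient_id"], record["glucose_mmol_l"])
        if key not in seen:
            seen.add(key)
            silver.append(record)
    return silver


def to_gold(silver, cutoff_mmol_l=5.45):
    """Gold: a project-specific cohort (the cutoff is an arbitrary example)."""
    return [r["patient_id"] for r in silver if r["glucose_mmol_l"] >= cutoff_mmol_l]


silver = to_silver(bronze)
gold = to_gold(silver)
```

Because Bronze is never modified, a change in the conversion or QC rules only requires re-running `to_silver` and `to_gold`.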

Supercharge Performance with Partitioning and Sharding

To make scalable data analytics actually fast, you can’t just throw hardware at the problem; you need smart data layout.

- Data Partitioning: We divide large tables into smaller, manageable parts based on columns like “Date” or “Region.” This allows the system to skip irrelevant data entirely during a query. For example, if a researcher only needs data from 2023, the system won’t even look at the files from 2022.

- Z-Ordering: A technique to co-locate related information in the same files. In genomics, this might mean grouping variants by chromosomal position, drastically reducing the amount of data read from storage during a regional analysis.

- Bloom Filters: These are probabilistic data structures that help the system quickly determine if a piece of data exists in a file without reading the whole file. This is a massive time-saver when searching for rare variants across billions of rows.
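
Partition pruning, the first technique in the list above, can be illustrated with a small in-memory sketch. The dictionary of lists stands in for directories of files partitioned by year; the function names and row layout are hypothetical.

```python
from collections import defaultdict


def partition_by(rows, column):
    """Group rows into partitions keyed by the value of `column`."""
    parts = defaultdict(list)
    for row in rows:
        parts[row[column]].append(row)
    return dict(parts)


def query_year(partitions, year):
    """Read only the matching partition; return (rows, number_of_rows_scanned)."""
    rows = partitions.get(year, [])
    return rows, len(rows)


# Hypothetical table: 9,000 rows spread evenly over 2021, 2022, and 2023.
rows = [{"year": 2021 + i % 3, "value": i} for i in range(9_000)]
partitions = partition_by(rows, "year")

result, scanned = query_year(partitions, 2023)
```

Here the 2023 query touches 3,000 rows instead of 9,000. On real storage the saving is in files that are never opened at all.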

These techniques, combined with intelligent load balancing, ensure that your query speed remains lightning-fast even as your dataset grows from terabytes to petabytes. Learn more in our Advanced analytics ultimate guide.
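
The Bloom filter idea described above can be sketched with the standard library alone. The sizes (1,024 bits, 3 hash functions) and the variant identifiers are illustrative assumptions; production engines tune these per file and use faster hash functions than SHA-256.

```python
import hashlib


class BloomFilter:
    """Probabilistic set: may report false positives, never false negatives."""

    def __init__(self, n_bits=1024, n_hashes=3):
        self.n_bits = n_bits
        self.n_hashes = n_hashes
        self.bits = 0  # a Python int used as a bit array

    def _positions(self, item):
        """Derive n_hashes bit positions for an item by salting one hash function."""
        for seed in range(self.n_hashes):
            digest = hashlib.sha256(f"{seed}:{item}".encode()).digest()
            yield int.from_bytes(digest[:8], "big") % self.n_bits

    def add(self, item):
        for pos in self._positions(item):
            self.bits |= 1 << pos

    def might_contain(self, item):
        """False means definitely absent; True means the file must be read to be sure."""
        return all((self.bits >> pos) & 1 for pos in self._positions(item))


# One filter per data file, built when the file is written (hypothetical variant IDs).
file_filter = BloomFilter()
for variant in ["rs0000001", "rs0000002", "rs0000003"]:
    file_filter.add(variant)
```

A query for a rare variant checks `might_contain` first and skips every file whose filter answers `False`, so only a small fraction of files is actually read.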

Build Fast, Reliable Pipelines for Petabyte Analytics

The old way was ETL (Extract, Transform, Load). You transformed data before it hit your storage, which often meant losing raw information that might be useful later. In a scalable world, we prefer ELT (Extract, Load, Transform). We load the raw data into the Lakehouse first and then use the massive power of distributed compute (like Apache Spark) to transform it in place.

Modular workflows are the secret to reliability. Instead of one giant “spaghetti” pipeline that breaks if a single column changes, we build small, reusable components for:

- Automated Ingestion: Pulling from EHR systems, wearable devices, or sequencing machines using event-driven triggers.

- Data Cleaning: Standardizing units and removing duplicates automatically using predefined schemas.

- Harmonization: Aligning diverse datasets into a common model (like OMOP or CDISC) to enable cross-study meta-analysis.
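
The modular approach above can be sketched as a list of small steps applied in order. The field names (`pid`, `person_id`, `weight_kg`) are hypothetical placeholders rather than real OMOP or CDISC columns, and an in-memory list stands in for a distributed dataset.

```python
from functools import reduce

LB_TO_KG = 0.45359237  # definition of the international pound in kilograms


def deduplicate(rows):
    """Drop exact duplicate records while preserving order."""
    seen, unique = set(), []
    for row in rows:
        key = tuple(sorted(row.items()))
        if key not in seen:
            seen.add(key)
            unique.append(row)
    return unique


def standardize_weight(rows):
    """Express every weight in kilograms."""
    out = []
    for row in rows:
        kg = row["weight"] * LB_TO_KG if row["unit"] == "lb" else row["weight"]
        out.append({"pid": row["pid"], "weight_kg": round(kg, 1)})
    return out


def harmonize(rows):
    """Rename source fields to a shared model (target names are hypothetical)."""
    return [{"person_id": r["pid"], "weight_kg": r["weight_kg"]} for r in rows]


def run_pipeline(rows, steps):
    """Apply each step in order; steps can be swapped or tested in isolation."""
    return reduce(lambda data, step: step(data), steps, rows)


raw = [
    {"pid": "a", "weight": 154.0, "unit": "lb"},
    {"pid": "a", "weight": 154.0, "unit": "lb"},  # duplicate record
    {"pid": "b", "weight": 80.0, "unit": "kg"},
]
clean = run_pipeline(raw, [deduplicate, standardize_weight, harmonize])
```

If a source system changes its column names, only `harmonize` needs to change; the other steps and their tests stay untouched.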

For teams looking ahead, our Cloud data management guide 2025 provides a roadmap for building these resilient pipelines that can handle the velocity and variety of modern health data.

Modern Analytics Engineering: Tools That Scale with You

Analytics engineering is the bridge between data engineering and data science. It applies software engineering best practices—like version control, testing, and modularity—to the data transformation layer. This shift is critical because as data scales, the complexity of the logic used to process it scales even faster.

By using tools like dbt (data build tool) and Apache Spark, we treat our data transformations as code. This means every change is tracked in Git, and if a pipeline breaks, we can roll it back instantly. This is the foundation of our distributed data analysis platform. Furthermore, this approach allows for automated testing; before a new dataset is published to the “Gold” layer, the system can automatically verify that there are no null values in critical columns or that the age range of patients is biologically plausible.
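
The kind of automated check described above can be sketched in plain Python. This is not dbt syntax; it only illustrates the logic of a not-null test and a range test. The column names and the 0 to 120 age bounds are assumptions chosen for the example.

```python
def check_not_null(rows, column):
    """Return the indexes of rows where `column` is missing or None."""
    return [i for i, row in enumerate(rows) if row.get(column) is None]


def check_range(rows, column, low, high):
    """Return the indexes of rows whose non-null value falls outside [low, high]."""
    return [
        i
        for i, row in enumerate(rows)
        if row.get(column) is not None and not low <= row[column] <= high
    ]


def validate(rows):
    """Run all checks and return only the ones that failed, with offending rows."""
    results = {
        "patient_id is null": check_not_null(rows, "patient_id"),
        "age outside 0-120": check_range(rows, "age", 0, 120),
    }
    return {name: bad_rows for name, bad_rows in results.items() if bad_rows}


candidate = [
    {"patient_id": "p1", "age": 42},
    {"patient_id": None, "age": 37},
    {"patient_id": "p3", "age": 212},
]
failures = validate(candidate)
publishable = not failures  # only publish to Gold when every check passes
```

Wiring a check like this into CI means a bad batch is stopped before it reaches consumers, rather than being discovered in a dashboard.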

The Role of Orchestration and Data Mesh

Scaling isn’t just about the data; it’s about the people. As organizations grow, a centralized data team often becomes a bottleneck. This has led to the rise of the Data Mesh concept, where data is treated as a product owned by the specific domain teams (e.g., the Oncology department owns their data, the Cardiology department owns theirs).

To support this decentralized model, robust Orchestration is required. Tools like Apache Airflow or Dagster allow teams to schedule complex dependencies across different data products. For instance, a multi-omic analysis might depend on the completion of both a genomic pipeline and a clinical data refresh. Orchestration ensures these pieces move in harmony, providing clear visibility into the lineage of every data point.
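
At its core, an orchestrator resolves a dependency graph into a valid run order. The sketch below uses Python's standard `graphlib` module (Python 3.9+) rather than Airflow or Dagster, and the task names are hypothetical.

```python
from graphlib import TopologicalSorter

# Each task maps to the set of tasks that must finish before it can start.
dependencies = {
    "genomic_pipeline": set(),
    "clinical_refresh": set(),
    "multi_omic_analysis": {"genomic_pipeline", "clinical_refresh"},
    "publish_gold_dataset": {"multi_omic_analysis"},
}


def execution_order(deps):
    """Return one valid order in which every task runs after its dependencies."""
    return list(TopologicalSorter(deps).static_order())


order = execution_order(dependencies)
```

Real orchestrators add scheduling, retries, and lineage tracking on top, but the guarantee is the same: the multi-omic analysis never starts before both upstream pipelines have completed.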

Turn Analytics from a Service into a Product

When you treat analytics as a product, you focus on the entire lifecycle, not just the initial delivery.

- Discovery: Can researchers find the data? This requires a robust data catalog that indexes metadata and provides a searchable interface for scientists.

- Quality: Can they trust it? This involves publishing data quality scores and lineage maps so users know exactly where the data came from and how it was processed.

- Usability: Is it in a format they can use with Python, R, or SQL? A scalable platform must support polyglot analytics, allowing different specialists to use the tools they are most comfortable with.

By upskilling teams to use shared repositories and modular code, we ensure that a piece of logic—for example, “how we calculate patient BMI”—is written once and reused by everyone. This approach is essential for advanced analytics healthcare where consistency across clinical trials is a regulatory requirement. It eliminates the “Excel-based truth” where three different departments report three different numbers for the same metric.
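
As a minimal sketch of "written once and reused by everyone", the BMI example might live in a shared module like this. The function name, rounding rule, and validation are illustrative assumptions, not a prescribed standard.

```python
def bmi(weight_kg, height_m):
    """Body mass index in kg/m^2, rounded to one decimal place."""
    if weight_kg <= 0 or height_m <= 0:
        raise ValueError("weight and height must be positive")
    return round(weight_kg / height_m ** 2, 1)


# Every team imports the same function instead of re-deriving the formula.
trial_a_bmi = bmi(70, 1.75)
trial_b_bmi = bmi(70, 1.75)
```

Decisions such as units and rounding are made once, in one reviewed place, so two departments cannot report different numbers for the same patient.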

Real-World Wins: How Leading Teams Scale with Lifebit

Scalability isn’t just a theoretical concept; it’s how the world’s most innovative organizations operate.

- Massive Clusters: Companies like Netflix and Amazon utilize clusters of over 8,000 nodes to process petabytes of data daily. In the life sciences, the UK Biobank and Genomics England use similar distributed architectures to serve thousands of researchers simultaneously.

- Genomics Speedups: Research has shown that moving from traditional, single-node pipelines to Spark-based architectures can result in a 28x speedup in genomics processing and a 63% reduction in cost. At that ratio, a job that used to take four weeks would finish in about a day.

- Real-Time Processing: Using technologies like Kafka, teams can now analyze streaming data for immediate insights, such as pharmacovigilance alerts that detect adverse drug reactions in real-time across global populations.

Lifebit enables these same high-performance workflows for distributed computing biomedical data, allowing even small research teams to leverage the power of a global-scale infrastructure without needing a 50-person engineering department.

Future-Proof Your Analytics: AI and Federated Governance

The future of scalable data analytics isn’t just about bigger data; it’s about distributed data. Because of privacy laws like GDPR, HIPAA, and the emerging AI Act, much of the world’s most valuable health data cannot be moved. It is “locked” within the borders of a country or the firewalls of a hospital.

This is where federated analytics comes in. Instead of moving the data to the code (which is slow, expensive, and often illegal), we move the code to the data. Our Federated analytics ultimate guide explains how this allows for secure, multi-continental collaboration without compromising data sovereignty. In this model, the central researcher sends a query or a model to the local data site; the site executes the computation locally and only sends back the aggregated results (e.g., the average or the coefficient), never the raw patient data.
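
The "move the code to the data" pattern can be sketched for the simplest case, a federated mean. The site data and function names are hypothetical; a real deployment would add authentication, disclosure controls, and minimum cell sizes.

```python
def local_aggregate(values):
    """Runs inside each site: only a sum and a count ever leave the site."""
    return {"sum": sum(values), "count": len(values)}


def combine(aggregates):
    """Runs at the coordinating site: merge per-site aggregates into a global mean."""
    total = sum(a["sum"] for a in aggregates)
    n = sum(a["count"] for a in aggregates)
    return total / n


# Hypothetical measurements held at two hospitals; the raw lists never move.
site_a = [5.0, 6.0, 7.0]
site_b = [4.0, 8.0]

federated_mean = combine([local_aggregate(site_a), local_aggregate(site_b)])
```

The result equals the mean that would be computed over the pooled data, yet the coordinator only ever sees two numbers per site.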

Privacy-Enhancing Technologies (PETs) at Scale

To make federated analytics truly secure, we integrate Privacy-Enhancing Technologies directly into the scalable workflow:

- Differential Privacy: Adding controlled “noise” to the results to ensure that no individual patient can be identified from the aggregate data.

- Homomorphic Encryption: Allowing computations to be performed on encrypted data without ever needing to decrypt it, providing an additional layer of security for highly sensitive genomic markers.

- Secure Multi-Party Computation (SMPC): Enabling multiple parties to jointly compute a function over their inputs while keeping those inputs private from each other.
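
Of the three techniques above, differential privacy is the easiest to sketch. The example below applies the Laplace mechanism to a count query. The epsilon value and the count are illustrative, and this is a teaching sketch rather than a vetted privacy implementation (production systems also need privacy budget accounting and careful floating-point handling).

```python
import random


def laplace_noise(scale, rng):
    """Sample Laplace(0, scale) as the difference of two exponential draws."""
    return rng.expovariate(1 / scale) - rng.expovariate(1 / scale)


def private_count(true_count, epsilon, rng=None):
    """Release a count with Laplace noise calibrated to epsilon."""
    if epsilon <= 0:
        raise ValueError("epsilon must be positive")
    rng = rng or random.Random()
    sensitivity = 1  # adding or removing one patient changes a count by at most 1
    return true_count + laplace_noise(sensitivity / epsilon, rng)


# Smaller epsilon means more noise and stronger privacy.
noisy = private_count(true_count=100, epsilon=1.0, rng=random.Random(0))
```

Each released value is perturbed, but the noise averages out over many queries or large cohorts, which is why aggregate findings remain useful.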

Declarative APIs and Smart Metadata Layers

To support AI/ML at scale, we use declarative DataFrame APIs. These allow data scientists to write code in Python or Scala that looks simple but is automatically optimized by the underlying engine. The system performs “predicate pushdown” (filtering data at the storage level) and “caching” (keeping frequently used data in fast memory).
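
A toy version of a declarative API shows why this matters: the user describes what they want, and the engine decides how to run it. The `LazyFrame` class below is hypothetical and far simpler than Spark's optimizer (real pushdown also skips whole files using stored statistics), but it shows a filter being applied during the scan regardless of where it appears in the user's code.

```python
class LazyFrame:
    """Toy declarative frame: operations are recorded, then run in a single scan."""

    def __init__(self, source, predicates=(), columns=None):
        self._source = source              # callable returning an iterator of rows
        self._predicates = tuple(predicates)
        self._columns = columns

    def filter(self, predicate):
        """Record a filter; nothing is read yet."""
        return LazyFrame(self._source, self._predicates + (predicate,), self._columns)

    def select(self, *columns):
        """Record a projection; nothing is read yet."""
        return LazyFrame(self._source, self._predicates, columns)

    def collect(self):
        """Execute the plan: filter rows while scanning, then project columns."""
        out = []
        for row in self._source():
            if all(p(row) for p in self._predicates):
                cols = self._columns or row.keys()
                out.append({c: row[c] for c in cols})
        return out


def scan():
    """Hypothetical storage scan."""
    return iter([
        {"id": 1, "year": 2022, "value": 0.1},
        {"id": 2, "year": 2023, "value": 0.7},
    ])


# The filter is declared after the projection, yet it still sees the full row.
result = LazyFrame(scan).select("id").filter(lambda r: r["year"] == 2023).collect()
```

Because nothing runs until `collect()`, the engine is free to reorder steps, which is what makes optimizations like predicate pushdown possible without the data scientist writing them by hand.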

These key features of a federated data lakehouse ensure that your ML models are trained on the most complete, high-quality data available, leading to more accurate discoveries in drug development and disease prevention. For more on how AI integrates with these systems, see our AI data lakehouse ultimate guide. By automating the infrastructure and governance, we allow scientists to focus on the science, not the servers.

Frequently Asked Questions about Scalable Data Analytics

What’s the difference between horizontal and vertical scaling?

Vertical scaling (scaling up) is like trying to make one person work faster by giving them a bigger desk and a faster computer. Eventually, that person hits a physical limit. Horizontal scaling (scaling out) is like hiring a team of 100 people to work on the same project simultaneously. Horizontal scaling is the practical route to petabyte-scale analytics because capacity grows by adding nodes to the cluster rather than by replacing hardware, although coordination overhead means the gains are not perfectly linear.

How does a Lakehouse architecture boost scalability?

It removes the “bottleneck” of the data warehouse. In a Lakehouse, your data stays in cheap, open-format storage (the lake), but you have a smart metadata layer on top that makes it act like a high-performance database (the warehouse). This means you get ACID transactions, versioning, and fast SQL queries without the high cost, proprietary lock-in, and rigidity of traditional warehouses.

Why is data partitioning critical for big analytics?

Imagine looking for a single book in a library that has no sections. You’d have to check every single shelf. Partitioning is like organizing the library by “Genre” and then “Author.” If you’re looking for a mystery, you can skip 90% of the library. In data terms, this means your queries finish in seconds instead of hours because they only read the relevant chunks of data, drastically reducing I/O overhead.

How do you manage the costs of scalable analytics?

Scalability can be expensive if not managed correctly. We use “Auto-scaling” and “Spot Instances” to manage costs. Auto-scaling automatically shrinks the cluster when it’s not in use, while Spot Instances allow us to use spare cloud capacity at a 70-90% discount. Additionally, by separating compute from storage, you only pay for high-performance processing when you are actually running a query.
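
A quick back-of-the-envelope calculation shows how these levers combine. All numbers are hypothetical: a 10-node cluster, a rate of $1.00 per node-hour, 60 hours of actual use per month, and a 70% Spot discount (the low end of the range quoted above).

```python
def compute_cost(node_hours, on_demand_rate, spot_discount=0.0):
    """Compute cost in dollars for a given number of node-hours."""
    if not 0 <= spot_discount < 1:
        raise ValueError("spot_discount must be in [0, 1)")
    return round(node_hours * on_demand_rate * (1 - spot_discount), 2)


NODES = 10
HOURS_IN_MONTH = 730   # average hours per month
HOURS_IN_USE = 60      # hypothetical hours the cluster is actually needed

always_on = compute_cost(NODES * HOURS_IN_MONTH, 1.00)
autoscaled = compute_cost(NODES * HOURS_IN_USE, 1.00)
autoscaled_spot = compute_cost(NODES * HOURS_IN_USE, 1.00, spot_discount=0.70)
```

Under these assumptions the monthly bill drops from $7,300 to $600 with auto-scaling alone, and to $180 once Spot pricing is added. Spot capacity can be reclaimed by the provider, so it suits jobs that tolerate interruption.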

Can scalable analytics work with legacy on-premise systems?

Yes, through a hybrid-cloud or federated approach. You can keep your sensitive data on-premise while using cloud-based control planes to orchestrate the analytics. This allows you to leverage the elasticity of the cloud for processing without the risk or cost of a full data migration.

Conclusion

Building a scalable data analytics workflow is no longer just a technical challenge; it is a strategic necessity for any organization aiming to lead in the data-driven era. By moving away from siloed, legacy architectures and embracing the flexibility of a Trusted Data Lakehouse, you can eliminate the “engineering wait-time” and ensure your analysts are working with real-time, high-quality insights.

At Lifebit, we are dedicated to providing the tools—from Federated AI to our R.E.A.L. (Real-time Evidence & Analytics Layer)—that make this possible for the world’s most sensitive biomedical data. Whether you are harmonizing multi-omic datasets or running large-scale pharmacovigilance, the right architecture ensures that as your data grows, your insights only get faster.

Ready to see how a scalable architecture can transform your research? Explore the Trusted Data Lakehouse today.