How AI is Fast-Tracking the Drug Discovery Pipeline

Cut Drug Discovery Timelines by 90% with an AI Drug Discovery Pipeline

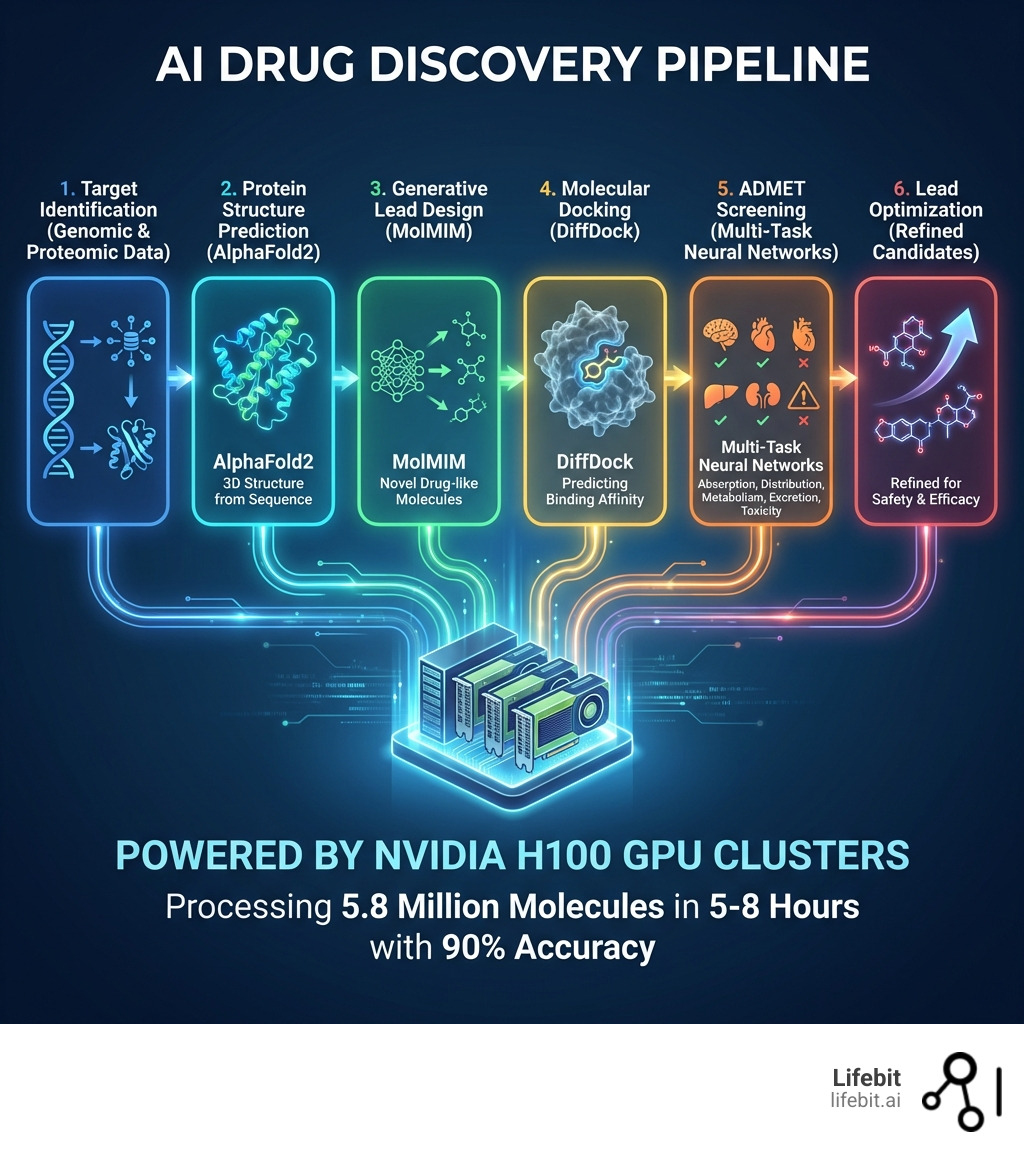

An AI drug discovery pipeline uses machine learning, generative models, and high-performance computing to accelerate target identification, lead optimization, and ADMET screening. The goal is to compress early discovery work that traditionally takes years into under 12 months, and to cut into the roughly $2 billion cost of bringing a drug to market.

How the AI Drug Discovery Pipeline Works:

- Target Identification — AI analyzes genomic and proteomic data to identify disease-related proteins

- Protein Structure Prediction — Tools like AlphaFold2 predict 3D protein structures from amino acid sequences

- Lead Generation — Generative AI models design novel drug-like molecules optimized for binding affinity

- Molecular Docking — AI predicts how compounds bind to target proteins using tools like DiffDock

- ADMET Screening — Machine learning models predict absorption, distribution, metabolism, excretion, and toxicity

- Lead Optimization — Multi-task models refine compounds for safety, efficacy, and manufacturability
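The six stages above can be read as an ordered chain in which each stage consumes the output of the one before it. The following is a minimal sketch of that idea only. Every function body is a toy placeholder, and names such as `PipelineState` and `run_pipeline` are hypothetical and do not correspond to any real product API.

```python
from dataclasses import dataclass, field
from typing import Callable, List, Optional, Sequence


@dataclass
class PipelineState:
    """Everything the pipeline has produced so far (all values are toy placeholders)."""
    disease: str
    target: Optional[str] = None
    structure: Optional[str] = None
    candidates: List[str] = field(default_factory=list)
    completed: List[str] = field(default_factory=list)


Stage = Callable[[PipelineState], PipelineState]


def identify_target(state: PipelineState) -> PipelineState:
    state.target = f"protein-for-{state.disease}"  # placeholder, not a real lookup
    state.completed.append("target_identification")
    return state


def predict_structure(state: PipelineState) -> PipelineState:
    state.structure = f"structure-of-{state.target}"  # placeholder for a 3D model
    state.completed.append("structure_prediction")
    return state


def generate_leads(state: PipelineState) -> PipelineState:
    state.candidates = ["mol-1", "mol-2", "mol-3"]  # placeholder molecules
    state.completed.append("lead_generation")
    return state


def dock(state: PipelineState) -> PipelineState:
    # A real stage would attach a predicted binding pose to each candidate.
    state.completed.append("molecular_docking")
    return state


def screen_admet(state: PipelineState) -> PipelineState:
    # Pretend the last candidate fails a toxicity check and is dropped.
    state.candidates = state.candidates[:-1]
    state.completed.append("admet_screening")
    return state


def optimize_leads(state: PipelineState) -> PipelineState:
    state.candidates = [f"{c}-optimized" for c in state.candidates]
    state.completed.append("lead_optimization")
    return state


STAGES: Sequence[Stage] = (
    identify_target, predict_structure, generate_leads,
    dock, screen_admet, optimize_leads,
)


def run_pipeline(disease: str, stages: Sequence[Stage] = STAGES) -> PipelineState:
    """Run each stage in order, passing the accumulated state along."""
    state = PipelineState(disease=disease)
    for stage in stages:
        state = stage(state)
    return state


result = run_pipeline("example-disease")
print(result.completed)
print(result.candidates)
```

Keeping each stage behind the same function signature is one way to let a team swap a placeholder for a real model without touching the rest of the chain.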

Traditional drug discovery is painfully slow. Getting a single drug from concept to market takes 15 years and costs between $1 billion and $2 billion — with less than 5% of candidates ever reaching approval. The process involves screening millions of chemical compounds, validating targets, optimizing leads, and predicting toxicity — all while navigating a chemical space estimated at 10^60 possible structures.

AI changes that equation. By leveraging deep learning, message-passing neural networks, and GPU-accelerated computing, researchers can now screen 5.8 million molecules in 5 to 8 hours, work that previously took weeks or months. Leading research teams have achieved 10x acceleration in virtual screening and molecular docking using NVIDIA H100 GPU clusters and specialized AI microservices like AlphaFold2, MolMIM, and DiffDock.

The shift from manual, rule-based methods to AI-driven pipelines isn’t just faster — it’s fundamentally more accurate. Multi-task machine learning models trained on massive datasets (exceeding 65 petabytes of phenomics, transcriptomics, and proteomics data) can predict ADMET properties with 90% accuracy, identify the top 1% of therapeutic candidates in hours, and optimize lead compounds far beyond what traditional computational methods could achieve.

But speed and accuracy mean nothing without secure, federated access to the diverse, siloed datasets that power these models — something most pharma organizations still struggle with. That’s where platforms designed for real-time, compliant, multi-omic integration become critical.

I’m Dr. Maria Chatzou Dunford, CEO and Co-founder of Lifebit, where we’ve spent over 15 years building federated AI infrastructure for global healthcare organizations to securely analyze genomic and biomedical data, including powering AI drug discovery pipelines across pharmaceutical and public sector institutions. My background in computational biology, high-performance computing, and AI has given me a front-row seat to how these technologies are reshaping precision medicine and accelerating the path from target to therapy.


The $2 Billion Failure: Why Traditional Drug Discovery Pipelines are Broken

The traditional pharmaceutical R&D model is often described as a “pipeline,” but in reality, it has historically been an iterative, manual, and high-risk marathon. The primary bottleneck is the sheer scale of the search. Scientists must navigate a “chemical universe” of approximately 10^60 potential small molecules to find a single needle in the haystack that is safe, effective, and stable. That number exceeds the estimated number of atoms in the solar system, which makes manual exploration impossible.

Traditional Computer-Aided Drug Design (CADD) relied on simplified physical models and rule-based systems that frequently failed to capture the complex, dynamic biological reality of human systems. These models often treated proteins as rigid structures, ignoring the “induced fit” and conformational changes that occur in a living organism. This contributed to a staggering 95% attrition rate, where drugs that looked promising in the lab failed during clinical trials due to unforeseen toxicity or lack of efficacy. The broader trend is often referred to as “Eroom’s Law”: the observation that drug discovery is becoming slower and more expensive over time, despite improvements in technology.

Beyond the biology, the data itself is a hurdle. Research is often trapped in fragmented silos across different departments, therapeutic areas, or global organizations. Manually ingesting and parsing millions of data points from hundreds of sources—ranging from legacy spreadsheets to unstructured clinical notes—slows decision-making to a crawl. Furthermore, the “Valley of Death” in drug development—the gap between basic research and clinical application—is widened by the lack of predictive power in early-stage screening. When you factor in the 15-year delay and the $2 billion price tag, the old way of doing things isn’t just inefficient—it’s unsustainable for addressing urgent global health needs like emerging viral threats or rare genetic disorders. For a deeper look at these foundational hurdles, see this research on early drug discovery principles.

Engineering the Modern AI Drug Discovery Pipeline

To fix the broken model, we must move toward an end-to-end drug discovery approach powered by artificial intelligence. A modern AI drug discovery pipeline replaces manual trial-and-error with high-throughput, model-driven screening and design. This transition requires a fundamental shift from “digitalizing” old processes to building native AI workflows where data flows seamlessly between predictive models.

At the core of this engineering feat is the integration of “Data, Models, and Compute.” By using high-performance hardware like NVIDIA H100 GPUs, researchers can process vast multi-omic datasets—genomics, transcriptomics, and proteomics—to find patterns that human eyes would miss. This infrastructure supports specialized AI drug discovery software and microservices designed to handle specific tasks, from predicting how a protein folds to how a molecule will behave in the human liver. To understand the broader shift toward these digital biology systems, explore this research on AI-enabled drug discovery platforms.

Step 1: Target Selection and Protein Prediction with AlphaFold2

The first step in any AI drug discovery pipeline is identifying the biological “target” (usually a protein) that plays a role in a disease. However, knowing the protein’s name isn’t enough; we need to know its 3D shape to design a drug that fits into it like a key in a lock. Historically, determining a protein’s structure required years of labor-intensive work using X-ray crystallography or cryo-electron microscopy.

The AlphaFold2 NIM microservice has revolutionized this stage. Given a protein sequence, AlphaFold2 can predict the complex 3D structure of the target in minutes, often with high accuracy. This capability is particularly transformative for “undruggable” targets: proteins that were previously ignored because their structures were too complex or unstable to map. With the target’s structure in hand, we can move to AI-assisted target validation, using machine learning to simulate how inhibiting or activating this protein affects broader biological pathways. The aim is to confirm that hitting this protein will actually result in a therapeutic benefit without causing systemic harm.

Step 2: Generative Lead Design Using MolMIM

Once we have a target, we need a “lead” compound. Instead of searching through existing libraries of known chemicals—which are limited by what we have already synthesized—we use generative AI to create new ones. This is the difference between choosing from a menu and having a master chef create a custom dish for your specific nutritional needs.

Using MolMIM for optimized lead generation, researchers can generate novel molecular structures that are “born” with the right properties. MolMIM uses a latent space representation of molecules, allowing it to navigate the 10^60 chemical space intelligently. It optimizes for drug-likeness (QED scores), solubility, and molecular similarity simultaneously. This multi-objective optimization ensures that the generated molecules aren’t just theoretically effective at binding, but are also synthesizable in a lab and stable enough to be manufactured. This allows us to focus only on molecules that have the highest probability of success, drastically reducing the number of physical compounds that need to be tested in wet labs.
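To make the idea of multi-objective optimization concrete, here is a minimal sketch that ranks candidates by a weighted sum of three properties. This is an illustration only and is not a description of how MolMIM works internally. The `Molecule` class, the weights, and the property values are all hypothetical, and in practice the properties would come from a cheminformatics toolkit or a predictive model.

```python
from dataclasses import dataclass
from typing import List


@dataclass(frozen=True)
class Molecule:
    name: str
    qed: float         # drug-likeness score in [0, 1]
    solubility: float  # hypothetical solubility score, normalized to [0, 1]
    similarity: float  # similarity to a reference compound in [0, 1]


def composite_score(mol: Molecule,
                    w_qed: float = 0.5,
                    w_sol: float = 0.3,
                    w_sim: float = 0.2) -> float:
    """Weighted sum of the three objectives (weights are illustrative assumptions)."""
    return w_qed * mol.qed + w_sol * mol.solubility + w_sim * mol.similarity


def rank_candidates(mols: List[Molecule]) -> List[Molecule]:
    """Return candidates sorted from best to worst composite score."""
    return sorted(mols, key=composite_score, reverse=True)


# Made-up property values, used only to show the ranking behavior.
candidates = [
    Molecule("A", qed=0.9, solubility=0.2, similarity=0.5),
    Molecule("B", qed=0.6, solubility=0.9, similarity=0.8),
    Molecule("C", qed=0.3, solubility=0.3, similarity=0.3),
]

for mol in rank_candidates(candidates):
    print(mol.name, round(composite_score(mol), 2))
```

Note that candidate B outranks candidate A even though A has the higher drug-likeness score, because B is stronger on the other two objectives. A weighted sum is the simplest way to trade objectives off against each other; the weights encode which properties matter most for a given program.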

Case Study: Achieving 10x Acceleration in Virtual Screening

Real-world performance metrics show that the AI drug discovery pipeline is no longer theoretical. By integrating NVIDIA NIM microservices into high-performance workflows, researchers have achieved a 10x acceleration in virtual screening. This isn’t just about doing things faster; it’s about doing things that were previously impossible due to time constraints.

| Metric | Traditional Pipeline | AI-Driven Pipeline |
|---|---|---|
| Screening Volume | ~100k molecules | 5.8 million molecules |
| Time Required | Weeks/Months | 5–8 hours |
| Lead Optimization Accuracy | ~60-70% | 90% |
| ADMET Profiling (1M compounds) | Months | A few hours |

This level of speed allows for rapid biomarker discovery and therapeutic identification. By using advanced deep learning methods, researchers can filter out toxic compounds early, identifying the top 1% of candidates with the highest therapeutic potential before they ever enter a physical lab. This “fail fast, fail early” approach saves hundreds of millions of dollars in downstream clinical trial costs.
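The figures above translate into a throughput and a shortlist size that are easy to check. The sketch below works through that arithmetic and shows one simple way to select the top 1% of a scored library. The helper names are hypothetical, and the scores passed to `top_percent` would in practice come from a docking or ADMET model.

```python
import heapq
from typing import Dict, List


def molecules_per_second(n_molecules: int, hours: float) -> float:
    """Average screening throughput for a run of the given length."""
    return n_molecules / (hours * 3600)


def top_percent(scores: Dict[str, float], percent: int = 1) -> List[str]:
    """Return the IDs of the highest-scoring `percent` of molecules, best first."""
    k = max(1, len(scores) * percent // 100)  # integer math avoids float rounding
    return heapq.nlargest(k, scores, key=scores.get)


LIBRARY_SIZE = 5_800_000

fast = molecules_per_second(LIBRARY_SIZE, 5)   # 5-hour run
slow = molecules_per_second(LIBRARY_SIZE, 8)   # 8-hour run
shortlist_size = LIBRARY_SIZE * 1 // 100       # top 1% of the library

print(f"{slow:.0f} to {fast:.0f} molecules per second")    # 201 to 322 molecules per second
print(f"top 1% shortlist: {shortlist_size:,} molecules")   # top 1% shortlist: 58,000 molecules
```

In other words, screening 5.8 million molecules in 5 to 8 hours means sustaining roughly 200 to 320 molecules per second, and the top 1% is a shortlist of 58,000 compounds.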

Molecular Docking with DiffDock in the AI Drug Discovery Pipeline

After generating lead molecules, we must predict exactly how they bind to the target protein. This is known as molecular docking. Traditional docking methods rely on scoring functions that approximate physics, which are often computationally expensive and struggle with accuracy when the protein structure is flexible.

DiffDock uses a diffusion-based generative model to predict binding poses quickly and precisely. Unlike traditional methods that sample thousands of random orientations, DiffDock learns the distribution of successful binding poses. It identifies the most likely binding sites and predicts the orientation of the molecule within the protein pocket with high confidence. This step is critical to improving efficiency in drug discovery workflows, as it allows researchers to virtually “test” millions of combinations in a single afternoon, providing a level of granularity that was once reserved for the final stages of lead optimization.

High-Speed Processing with NVIDIA H100 GPU Clusters

The sheer volume of data in an AI drug discovery pipeline requires massive computational throughput. NVIDIA H100 GPU clusters enable this by using parallelism techniques (data, model, and pipeline parallelism) to distribute the workload across thousands of cores. These GPUs are specifically optimized for the transformer architectures used in modern biological LLMs (Large Language Models).

These clusters allow for real-time analytics across drug discovery data. Without this level of “compute,” the complex math required for deep learning models, such as calculating the electronic density of a molecule or the free energy of binding, would remain a bottleneck, regardless of how much data we have. The H100’s Transformer Engine specifically accelerates the training and inference of models like ESMFold and AlphaFold2, making the pipeline truly “real-time.”
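Of the three parallelism techniques mentioned in this section, data parallelism is the easiest to picture: the same model runs on different slices of the input at the same time. The sketch below shows the pattern on a CPU with Python threads standing in for GPU workers. The `score_shard` function is a hypothetical placeholder for model inference, so this illustrates the sharding logic and not real GPU code.

```python
from concurrent.futures import ThreadPoolExecutor
from typing import List, Sequence


def shard(items: Sequence[str], n_workers: int) -> List[List[str]]:
    """Split items into n_workers contiguous shards of near-equal size."""
    size, extra = divmod(len(items), n_workers)
    shards, start = [], 0
    for i in range(n_workers):
        end = start + size + (1 if i < extra else 0)
        shards.append(list(items[start:end]))
        start = end
    return shards


def score_shard(smiles_batch: List[str]) -> List[float]:
    """Hypothetical stand-in for model inference: scores each string by its length."""
    return [float(len(s)) for s in smiles_batch]


def score_data_parallel(smiles: Sequence[str], n_workers: int = 4) -> List[float]:
    """Score every molecule, with each worker handling one shard."""
    with ThreadPoolExecutor(max_workers=n_workers) as pool:
        # pool.map yields results in shard order, so output order matches input order.
        per_shard = list(pool.map(score_shard, shard(smiles, n_workers)))
    return [score for part in per_shard for score in part]


library = ["C", "CC", "CCO", "c1ccccc1", "CN"]
print(score_data_parallel(library, n_workers=2))
```

Because every molecule is scored independently, throughput in this pattern grows with the number of workers until data loading or communication becomes the limit.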

Scaling Data and Compute: From 65 Petabytes to Generative Models

To train an AI that truly understands biology, you need data—and lots of it. We are seeing a move toward massive, proprietary datasets that combine multiple layers of biological information. This “multi-omic” approach is essential because a drug’s effect isn’t limited to a single protein; it ripples through the entire cellular ecosystem. Key data types include:

- Phenomics: High-content imaging data showing how cell morphology changes in response to drug candidates.

- Transcriptomics: Measuring the expression levels of thousands of genes simultaneously to see how a drug “reprograms” a cell.

- Proteomics: Mapping the entire set of proteins expressed by a genome to understand the functional output of the cell.

- ADME: Longitudinal data on how the body absorbs, distributes, metabolizes, and excretes a drug, often derived from real-world evidence (RWE).

Leading biopharma organizations have built datasets exceeding 65 petabytes. To put that in perspective, that is equivalent to over 13,000 years of high-definition video. When these datasets are fed into AI in drug development, the models can learn the “language of life,” predicting how a new molecule will interact with a human cell with high fidelity. This integration is particularly vital for oncology drug design innovations, where personalized treatments require understanding complex genetic interactions and the tumor microenvironment.

However, the challenge is no longer just about having the data; it’s about accessing it. Much of the world’s most valuable biomedical data is locked behind strict privacy regulations (like GDPR or HIPAA) or resides in different geographic jurisdictions. This is where Federated Learning becomes a cornerstone of the pipeline. Instead of moving sensitive patient data to the AI model, the model is sent to the data, and only model updates leave each site. This allows researchers to train on diverse, global datasets, improving model generalization and reducing bias, without moving patient data or giving up data sovereignty. This federated approach is what enables the scaling of the AI drug discovery pipeline from a single lab to a global collaborative effort.
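A common way to implement "sending the model to the data" is federated averaging: each site trains on its own records, and a coordinator combines the resulting weights in proportion to how much data each site holds. The sketch below shows one such scheme on a toy linear model. It is an assumption-laden illustration, not a description of any particular platform, and the function names and data are hypothetical.

```python
from typing import List, Tuple

Weights = List[float]            # [intercept, slope] of a toy linear model
Dataset = List[Tuple[float, float]]  # (x, y) pairs that never leave their site


def local_update(weights: Weights, data: Dataset, lr: float) -> Weights:
    """One gradient-descent step on mean squared error, using local data only."""
    w0, w1 = weights
    n = len(data)
    grad0 = sum(2 * (w0 + w1 * x - y) for x, y in data) / n
    grad1 = sum(2 * (w0 + w1 * x - y) * x for x, y in data) / n
    return [w0 - lr * grad0, w1 - lr * grad1]


def federated_average(updates: List[Tuple[Weights, int]]) -> Weights:
    """Combine site weights, weighting each site by its number of samples."""
    total = sum(n for _, n in updates)
    dim = len(updates[0][0])
    return [sum(w[i] * n for w, n in updates) / total for i in range(dim)]


def federated_round(global_weights: Weights, sites: List[Dataset], lr: float) -> Weights:
    """Each site trains locally; only weights and sample counts are shared."""
    updates = [(local_update(global_weights, data, lr), len(data)) for data in sites]
    return federated_average(updates)


# Two hypothetical sites whose private data both follow y = 2x.
sites = [[(1.0, 2.0), (2.0, 4.0)], [(3.0, 6.0)]]
weights = [0.0, 0.0]
for _ in range(1000):
    weights = federated_round(weights, sites, lr=0.05)
print([round(w, 2) for w in weights])
```

The coordinator only ever sees weight vectors and sample counts, yet the shared model still recovers the relationship present in the combined data.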

The Future of the AI Drug Discovery Pipeline: Agentic AI and Quantum Computing

We are entering the era of “Agentic AI,” where AI doesn’t just answer questions—it takes action. Future pipelines will likely feature multi-agent teams where one AI agent identifies a target, another designs the molecule, and a third orchestrates the ADMET screening. These agents will be capable of reasoning through complex biological problems, such as identifying why a specific molecule might cause cardiotoxicity based on its structural similarity to known toxins.

Emerging research on AI Agents in Drug Discovery suggests these systems will provide user-guided design, allowing human scientists to set high-level goals, such as “design a non-addictive analgesic that targets the Nav1.7 channel,” while the AI handles the execution of millions of simulations. Furthermore, the convergence of AI and quantum computing promises to simulate molecular interactions at the subatomic level. While classical computers use approximations, quantum computers could theoretically calculate the exact quantum states of electrons in a binding pocket, which could further improve the accuracy of binding predictions and target identification.

Automating the AI Drug Discovery Pipeline with Multi-Agent Systems

The goal of AI drug discovery 2.0 is full automation, often referred to as “Self-Driving Labs” (SDLs). Using frameworks like BioNeMo, companies can build orchestrated multi-agent therapeutic design systems that connect digital simulations directly to robotic wet labs. In this closed-loop system, the AI designs a molecule, a robotic system synthesizes it, an automated assay tests its efficacy, and the results are fed back into the AI to refine the next design iteration.
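The design, synthesize, test, and feed-back cycle described above is at heart a loop that keeps whatever the latest experiment shows to be an improvement. The sketch below captures only that control flow. A single number stands in for a molecule, `propose` stands in for the generative model, and `assay` stands in for robotic synthesis and testing; all three are hypothetical toys and do not use BioNeMo or any lab API.

```python
import random
from typing import Callable, List, Tuple

Design = float  # toy stand-in for a molecule


def closed_loop(propose: Callable[[Design], Design],
                assay: Callable[[Design], float],
                seed: Design,
                n_iterations: int) -> Tuple[Design, float, List[Tuple[Design, float]]]:
    """Repeat design -> test -> learn, keeping the best design seen so far."""
    best, best_score = seed, assay(seed)
    history = [(best, best_score)]
    for _ in range(n_iterations):
        candidate = propose(best)        # the AI designs a new candidate
        score = assay(candidate)         # the lab synthesizes and tests it
        history.append((candidate, score))
        if score > best_score:           # results feed back into the next design
            best, best_score = candidate, score
    return best, best_score, history


rng = random.Random(0)  # fixed seed so the toy run is repeatable


def propose(current: Design) -> Design:
    """Toy generator: a small random change to the current best design."""
    return current + rng.uniform(-0.5, 0.5)


def assay(design: Design) -> float:
    """Toy experiment: efficacy peaks when the design value equals 3.0."""
    return -(design - 3.0) ** 2


best, best_score, history = closed_loop(propose, assay, seed=0.0, n_iterations=200)
print(f"tested {len(history)} designs; best score improved from {history[0][1]} to {best_score:.4f}")
```

A production loop would replace the accept-if-better rule with a model that is retrained on every assay result, but the shape of the cycle stays the same.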

These “digital labs” can run 24/7, continuously refining molecules and running simulations, effectively scaling biomarker discovery workflows to a level previously unimaginable. This reduces the human bottleneck to high-level oversight and ethical validation, allowing the pipeline to explore the chemical universe at a scale that matches the complexity of human biology. As these systems mature, the time from identifying a new pathogen to having a validated drug candidate could drop from years to weeks, fundamentally changing our global response to health crises.

Frequently Asked Questions about AI Drug Discovery

How much time does an AI drug discovery pipeline save?

An AI drug discovery pipeline can reduce the early stages of drug discovery (from target identification to lead optimization) from 5–7 years down to less than 12 months. In specific virtual screening tasks, it can accelerate processing by up to 10x.

What are the best AI tools for protein structure prediction?

AlphaFold2 is currently the gold standard for protein structure prediction. It is widely used via microservices like NVIDIA NIM to provide high-accuracy 3D models of disease targets.

Can AI accurately predict drug toxicity and ADMET properties?

Yes. Modern multi-task neural networks can predict ADMET and toxicity properties with approximately 90% accuracy. This allows researchers to “fail fast” and eliminate dangerous or ineffective candidates long before they reach human trials.

Conclusion: Secure the Data, Accelerate the Cure

The AI drug discovery pipeline is one of the most significant technological leaps in the history of medicine. By combining the predictive power of AlphaFold2, the generative creativity of MolMIM, and the docking precision of DiffDock, we are finally moving at the speed of the diseases we are trying to cure.

However, the “3 key ingredients” of data, models, and compute are only effective if they can work together securely. At Lifebit, we believe the future of drug discovery is federated. Our platform provides the secure data solutions needed to link global biomedical datasets without moving them, ensuring compliance while enabling real-time AI-powered target identification.

Whether you are a global pharma leader or a research institute, the time to modernize your pipeline is now. To start your journey into the next generation of medicine, you can get started with generative virtual screening and see how AI can supercharge your research.