Diving into the AI-powered Research Lakehouse for Healthcare

Why 75% of Healthcare AI Projects Fail Before They Launch

An AI-powered research lakehouse is a unified data architecture that combines the flexibility of data lakes with the reliability of data warehouses, natively designed to support AI and machine learning workloads on structured, unstructured, and multimodal data—particularly genomic, clinical, and scientific datasets. In the high-stakes world of biomedical research, where the difference between a breakthrough and a failure is measured in petabytes of data and years of clinical trials, the underlying data infrastructure is no longer just a technical detail; it is the primary determinant of success.

Key characteristics of an AI-powered research lakehouse:

- Open table formats (Apache Iceberg, Delta Lake, Hudi) enabling ACID transactions, schema evolution, and time travel for reproducible science.

- Native AI capabilities including integrated vector search, RAG (Retrieval-Augmented Generation), and LLM orchestration directly on the storage layer.

- High-performance Python access via Apache Arrow and specialized kernels to eliminate the “Python tax” in data science workflows.

- Unified governance with attribute-based access control (ABAC), federated analytics, and end-to-end lineage tracking for regulatory compliance.

- Real-time feature serving to eliminate offline-online skew, ensuring that models in production see the same data distribution as they did during training.

The challenge is stark: in 2024, nearly 48% of AI models failed to reach production, and a staggering 75% of AI projects never made it out of development. The culprit? Fragmented data foundations. Most organizations are still struggling with “Data Swamps”: massive repositories of raw data that lack the metadata, quality controls, and accessibility required for modern machine learning. When data is siloed across different departments, formats, and cloud providers, data engineering (cleaning, joining, and moving data) consumes up to 80% of a researcher’s time, leaving only 20% for actual discovery.

Traditional data architectures force research organizations into a two-tier nightmare: raw data sits in lakes while curated data moves to warehouses through rigid, brittle ETL (Extract, Transform, Load) pipelines. This creates data staleness, duplication, and governance gaps. For healthcare and life sciences organizations handling multi-omic data, clinical records, imaging, and real-time patient data, this fragmentation becomes catastrophic. A researcher might be analyzing a genomic variant based on a clinical record that was updated three days ago in the warehouse, but the raw sequencing data in the lake hasn’t been synced, leading to inconsistent results and potentially dangerous clinical conclusions.

The AI-powered research lakehouse solves this by storing all data—genomic sequences, electronic health records (EHR), medical images (DICOM), clinical trial data, and even handwritten physician notes—in one place with open formats. This eliminates the firefighting that data teams face daily: stitching together pipelines, troubleshooting dependencies, and explaining why critical datasets are hours or days out of date. By providing a single source of truth that supports both SQL-based business intelligence and Python-based machine learning, the lakehouse architecture bridges the gap between the data analyst and the data scientist.

Research shows that organizations combining data warehouses and lakes see a 30-50% reduction in infrastructure costs with a lakehouse approach. But the real breakthrough isn’t just cost; it’s enabling real-time pharmacovigilance, multi-omic analysis, and agentic AI workflows that were impossible before. Imagine an AI agent that can autonomously scan a lakehouse for new adverse event reports, cross-reference them with the latest genomic research, and alert a safety officer in real time. This is the promise of the AI-powered research lakehouse.

I’m Maria Chatzou Dunford, CEO and Co-founder of Lifebit, where we’ve built one of the world’s largest federated genomics and biomedical data platforms. Over 15 years, I’ve seen how the right AI-powered research lakehouse architecture transforms drug discovery, precision medicine, and population health research—and how the wrong one kills promising AI initiatives before they start.

Why Traditional Data Architectures Kill 75% of AI Research Projects

In high-stakes biomedical research, data is the new “digital gold,” but most of it is buried under layers of architectural sediment. We’ve all been there: the data science team is excited to train a new model for early cancer detection, but they spend six months just trying to get the genomic files to “talk” to the clinical records. This delay isn’t just an inconvenience; in the context of drug discovery, a six-month delay can cost millions in lost patent time and, more importantly, delay life-saving treatments for patients.

According to Gartner, nearly half of all AI models (48%) fail to cross the chasm to production. When you zoom out to all corporate AI initiatives, that number jumps—75% of AI projects fail to reach production.

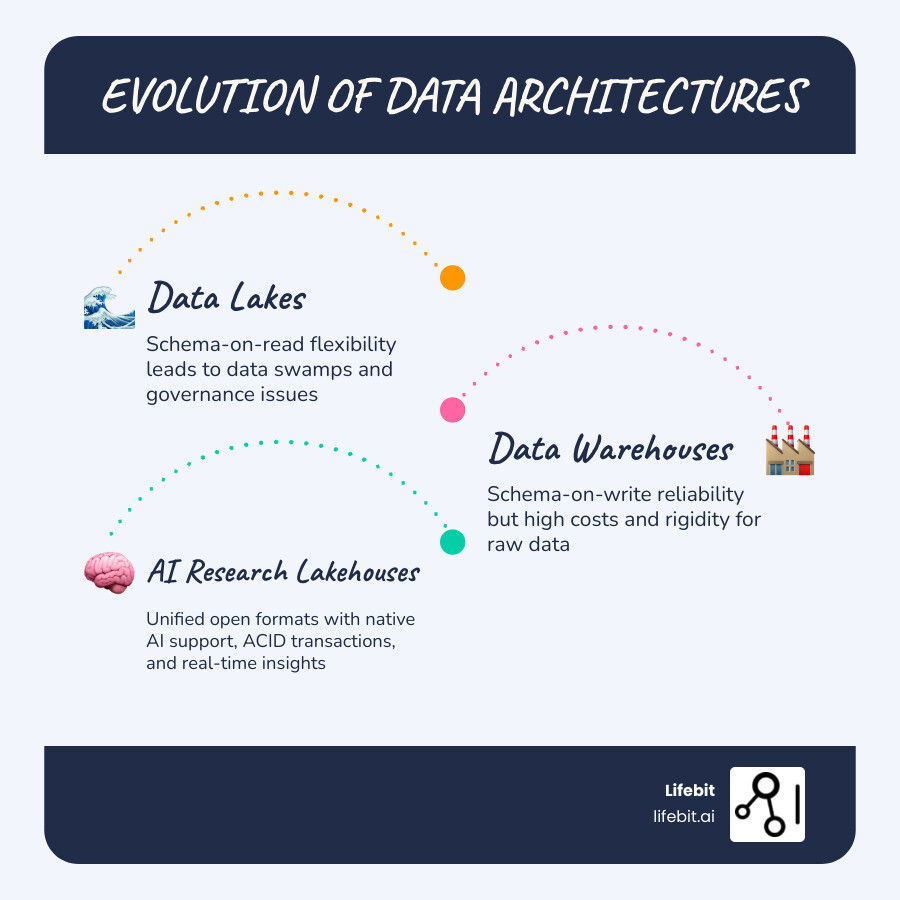

Why is the failure rate so high? Because traditional architectures weren’t built for the “Agentic Future.” They rely on a two-tier system that is fundamentally at odds with the needs of modern AI:

- The Data Lake: A massive dumping ground for raw, unstructured data (images, PDFs, FASTQ files). It’s cheap, but it’s often a “data swamp” with zero reliability. There are no ACID (Atomicity, Consistency, Isolation, Durability) transactions, meaning if a write operation fails halfway through, you end up with corrupted data. There is no schema enforcement, so a column that should contain integers might suddenly contain strings, breaking every downstream model.

- The Data Warehouse: A highly structured, expensive silo. To get data here, you need complex ETL (Extract, Transform, Load) pipelines. These pipelines are the “Achilles’ heel” of the modern data stack. They are brittle, difficult to maintain, and introduce significant latency.

By the time the data is cleaned and moved, it’s stale. For a researcher looking at real-time patient safety or a biotech company trying to beat a competitor to a patent, “stale” is just another word for “useless.” This fragmentation is exactly what the data lakehouse was designed to fix. It merges the two tiers into one, providing a single source of truth that is both flexible and governed. It lets you run high-performance SQL queries for reporting while data scientists access the same raw data for deep learning.
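To make the reliability gap concrete, here is a minimal sketch of the two guarantees a raw data lake lacks: schema enforcement and all-or-nothing writes. The column names and the `append_batch` helper are hypothetical and chosen only for illustration; real table formats enforce this in their metadata layer rather than in application code.

```python
SCHEMA = {"patient_id": str, "age": int, "hba1c": float}  # hypothetical columns


class SchemaError(ValueError):
    """Raised when a row does not match the declared schema."""


def validate(row: dict) -> dict:
    if set(row) != set(SCHEMA):
        raise SchemaError(f"unexpected columns: {sorted(row)}")
    for name, expected in SCHEMA.items():
        if type(row[name]) is not expected:
            raise SchemaError(
                f"{name}: expected {expected.__name__}, "
                f"got {type(row[name]).__name__}")
    return row


def append_batch(table: list, rows: list) -> None:
    """All-or-nothing append: check every row before changing the table."""
    validated = [validate(r) for r in rows]  # raises before any write happens
    table.extend(validated)


table = []
append_batch(table, [{"patient_id": "P1", "age": 54, "hba1c": 6.1}])

bad_batch = [
    {"patient_id": "P2", "age": 61, "hba1c": 7.0},
    {"patient_id": "P3", "age": "sixty", "hba1c": 5.9},  # string in an int column
]
rejected_reason = None
try:
    append_batch(table, bad_batch)
except SchemaError as err:
    rejected_reason = str(err)
```

Because validation finishes before the table is touched, the valid row for P2 is not written either, so downstream models never see a half-applied batch.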

The Critical Need for an AI-powered Research Lakehouse in Biomedicine

Biomedical data is uniquely difficult. It’s multimodal, spanning everything from structured lab results to unstructured physician notes and massive multi-omic datasets. A single human genome can be hundreds of gigabytes; multiply that by a population-scale study of 500,000 individuals (like the UK Biobank), and you’re looking at petabytes of data that traditional warehouses simply cannot handle without astronomical costs.

An AI-powered research lakehouse is essential because it treats these complex data types as first-class citizens. It doesn’t just store them as “blobs”; it provides the metadata and indexing required to make them searchable and actionable. Whether the application is drug repurposing or real-time disease surveillance, the lakehouse provides:

- Multimodal Support: Handling tensors, vectors, and tables in one environment. This allows a researcher to query for “all patients with a specific genetic mutation who also show specific patterns in their MRI scans.”

- Data Freshness: Eliminating the “offline-online skew” where the data used to train a model doesn’t match the data the model sees in the real world. In a lakehouse, the production model and the training pipeline look at the exact same data layer.

- Reproducibility and Time Travel: If a researcher finds a breakthrough, they need to be able to “time travel” back to the exact state of the data when the discovery was made. Open table formats like Iceberg allow you to query the data as it existed at a specific timestamp, which is critical for regulatory audits and scientific validation.

- Scalability: Leveraging cloud object storage (S3, Azure Blob, GCS) means the lakehouse can scale to exabytes of data while maintaining the performance of a local database.
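The time-travel idea from the list above can be sketched in a few lines. This is a toy model under stated assumptions: the `SnapshotTable` class is hypothetical, timestamps are plain integers, and each commit stores a full copy of the rows, whereas real open table formats track snapshots through metadata that points at immutable data files.

```python
from bisect import bisect_right
from dataclasses import dataclass, field


@dataclass
class SnapshotTable:
    """Toy append-only table: every commit keeps an immutable snapshot."""
    _timestamps: list = field(default_factory=list)  # commit times, ascending
    _snapshots: list = field(default_factory=list)   # one tuple of rows per commit

    def commit(self, timestamp: int, rows: list) -> None:
        if self._timestamps and timestamp <= self._timestamps[-1]:
            raise ValueError("commits must have increasing timestamps")
        self._timestamps.append(timestamp)
        self._snapshots.append(tuple(rows))

    def as_of(self, timestamp: int) -> tuple:
        """Return the rows visible at `timestamp` (latest commit at or before it)."""
        idx = bisect_right(self._timestamps, timestamp)
        if idx == 0:
            raise LookupError("no snapshot exists at or before this timestamp")
        return self._snapshots[idx - 1]


table = SnapshotTable()
table.commit(100, [{"patient": "P1", "variant": "BRCA1"}])
table.commit(200, [{"patient": "P1", "variant": "BRCA1"},
                   {"patient": "P2", "variant": "TP53"}])

rows_at_discovery = table.as_of(150)  # the data as it was when the analysis ran
rows_today = table.as_of(250)         # the data after the later commit
```

An auditor who re-runs the analysis against `as_of(150)` sees exactly one row, regardless of what has been committed since.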

Core Components of an AI-powered Research Lakehouse

What actually makes a lakehouse “AI-powered”? It’s not just a marketing label; it’s a specific stack of open-source technologies that provide the “long-term memory” for Large Language Models (LLMs) and the high-throughput engine for deep learning.

At the foundation, we use Open Table Formats. These are the metadata layers that sit on top of your cloud storage and give it “database-like” powers. Without these formats, a data lake is just a collection of files; with them, it becomes a structured, transactional database.

| Feature | Apache Iceberg | Delta Lake | Apache Hudi |
|---|---|---|---|
| Origin | Netflix | Databricks | Uber |
| ACID Transactions | Yes | Yes | Yes |
| Time Travel | Yes | Yes | Yes |
| Schema Evolution | Strong (Full support) | Strong | Moderate |
| Partition Evolution | Automatic | Manual | Manual |
| Best For | Large, slow-changing datasets & multi-engine support | High-performance Spark environments | Fast, incremental updates & streaming |

These formats provide ACID guarantees through their transaction management, meaning your data won’t get corrupted if two researchers try to update a clinical trial record at the same time. Following data lakehouse best practices means choosing the format that best fits your specific research workload. For instance, Iceberg is often preferred in multi-cloud environments because of its engine-agnostic design, while Delta Lake offers superior performance within the Spark ecosystem.
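One common way to get this behavior is optimistic concurrency: a writer may commit only if the version it read is still the current one. The sketch below illustrates that general technique on a single in-memory record; the `VersionedRecord` class and `update_with_retry` helper are hypothetical, and this is not the actual commit protocol of Iceberg, Delta Lake, or Hudi.

```python
import threading


class CommitConflict(Exception):
    """Raised when the record changed after the writer read it."""


class VersionedRecord:
    def __init__(self, value: dict):
        self._lock = threading.Lock()
        self.version = 0
        self.value = dict(value)

    def read(self):
        with self._lock:
            return self.version, dict(self.value)

    def commit(self, expected_version: int, new_value: dict) -> int:
        """Swap in new_value only if nobody has committed in between."""
        with self._lock:
            if self.version != expected_version:
                raise CommitConflict(
                    f"expected v{expected_version}, found v{self.version}")
            self.value = dict(new_value)
            self.version += 1
            return self.version


def update_with_retry(record, change: dict, max_attempts: int = 3) -> int:
    for _ in range(max_attempts):
        version, current = record.read()
        try:
            return record.commit(version, {**current, **change})
        except CommitConflict:
            continue  # re-read the latest state and try again
    raise CommitConflict("gave up after repeated conflicts")


record = VersionedRecord({"trial": "T-001", "status": "enrolling", "dose_mg": 10})

# Two researchers read the same version of the record.
v_a, state_a = record.read()
v_b, state_b = record.read()

record.commit(v_a, {**state_a, "status": "active"})   # first writer succeeds
try:
    record.commit(v_b, {**state_b, "dose_mg": 20})    # stale write is rejected
    conflict_detected = False
except CommitConflict:
    conflict_detected = True

update_with_retry(record, {"dose_mg": 20})            # retried on fresh state
```

The second researcher's stale write is refused rather than silently overwriting the status change, and the retry applies the dose update on top of the latest state.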

Accelerating Discovery with Vector Search and RAG

In the past, searching through 100,000 medical research papers meant looking for exact keyword matches. If you searched for “malignancy,” you might miss papers that only used the word “cancer.” This “keyword gap” has historically slowed down literature reviews and hypothesis generation.

An AI-powered research lakehouse uses Vector Search to solve this. It converts unstructured text, images, and even genomic sequences into vector embeddings, which are numerical representations of semantic meaning. These embeddings are stored in a specialized vector index within the lakehouse. The result is search that understands intent and context rather than exact wording.

When you combine this with Retrieval-Augmented Generation (RAG), you can build “Agentic Workflows.” Imagine an AI research assistant that can query your entire lakehouse, find the most relevant genomic variants, and summarize the latest clinical findings, all while citing its sources directly from your governed data. This greatly reduces the “hallucination” problem common in generic LLMs because the AI is grounded in your organization’s specific, verified research data.
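As a rough illustration of the retrieval step, the sketch below ranks documents by cosine similarity and assembles a grounded prompt. The three-dimensional vectors are hand-written stand-ins for real embeddings, and the document ids, titles, and `build_prompt` helper are hypothetical; a production system would use an embedding model and a proper vector index.

```python
import math


def cosine(a, b):
    """Cosine similarity between two equal-length vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return dot / norm if norm else 0.0


# Hypothetical toy embeddings; real ones have hundreds of dimensions.
INDEX = [
    ("paper-1", "Tumour malignancy markers in breast tissue", [0.9, 0.1, 0.0]),
    ("paper-2", "Cancer screening outcomes in older adults", [0.8, 0.2, 0.1]),
    ("paper-3", "Statin adherence and cardiovascular risk", [0.1, 0.9, 0.2]),
]


def search(query_vec, k=2):
    """Return the k most similar documents as (score, id, text) tuples."""
    scored = [(cosine(query_vec, vec), doc_id, text)
              for doc_id, text, vec in INDEX]
    scored.sort(key=lambda item: item[0], reverse=True)
    return scored[:k]


def build_prompt(question, hits):
    """Ground the question in retrieved sources so the answer can cite them."""
    context = "\n".join(f"[{doc_id}] {text}" for _, doc_id, text in hits)
    return ("Answer using only the sources below and cite their ids.\n"
            f"Sources:\n{context}\n\nQuestion: {question}")


query_vec = [0.85, 0.15, 0.05]  # hypothetical embedding of the word "malignancy"
hits = search(query_vec)
prompt = build_prompt("What is known about malignancy markers?", hits)
```

Note that the screening paper is retrieved even though its title says “cancer” and never “malignancy”, because its vector sits close to the query in embedding space.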

Solving the Python Performance Bottleneck in AI-powered Research Lakehouse Environments

For years, there was a “Python Tax.” Data scientists love Python (Pandas, Polars, PyTorch, TensorFlow), but most data platforms were built for SQL. To get data into a Python notebook, it had to be serialized into a format like CSV or JSON, sent over a slow JDBC/ODBC connection, and then de-serialized. This resulted in poor throughput, often bottlenecking GPUs that were waiting for data to arrive.

The modern AI-powered research lakehouse uses Apache Arrow, a cross-language development platform for in-memory data. Arrow uses a columnar memory format that is identical across different languages. Organizations like Netflix developed fast Python clients that use Arrow-native transfer protocols (like Arrow Flight). This allows data to move from the lakehouse to a researcher’s Python environment between 9x and 45x faster than traditional methods.

Furthermore, by using technologies like DuckDB or DataFusion as query engines within the lakehouse, researchers can run complex analytical queries directly on their local machines or in the cloud with sub-second latency. When your data moves at the speed of thought, your researchers can iterate faster, leading to quicker breakthroughs in scientific discovery.
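The difference between the two transfer styles can be shown with the standard library alone. This sketch does not use Arrow itself; it uses Python's `array` and `memoryview` as a stand-in for the idea of a typed, contiguous column buffer, and the `patient_id` and `lab_value` fields are made up for the example.

```python
import json
from array import array

rows = [{"patient_id": i, "lab_value": i * 0.5} for i in range(1000)]

# Row-oriented transfer: every value is rendered as text, then parsed back.
payload = json.dumps(rows)
decoded_rows = json.loads(payload)
row_total = sum(r["lab_value"] for r in decoded_rows)

# Column-oriented transfer: one typed, contiguous buffer per column.
lab_values = array("d", (r["lab_value"] for r in rows))
wire_bytes = lab_values.tobytes()             # the bytes that would be sent
received = memoryview(wire_bytes).cast("d")   # reinterpreted in place, no parsing
col_total = sum(received)
```

Both paths produce the same total, but the columnar path skips text encoding and decoding entirely: the receiver reads the doubles straight out of the buffer, which is the property Arrow generalizes across languages and data types.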

Securing Sensitive Healthcare Data with Federated Governance

In healthcare, “moving data” is often a legal, ethical, and logistical “no-go.” GDPR in Europe, HIPAA in the US, and national data sovereignty laws in countries like the UK and Japan mean that sensitive patient data often must stay within its country or institution of origin. The traditional model of “centralize everything in one cloud bucket” is increasingly impossible for global research collaborations.

This is where the “Federated” part of our platform comes in. Instead of the old “centralize everything” approach, we advocate for “bringing AI to the data.” A federated AI-powered research lakehouse allows you to run queries and train models across multiple locations without the data ever leaving its secure vault. You send the code to the data, not the data to the code.

Key security features include:

- Zero-ETL: Accessing data where it lives, without copying it through ETL pipelines. This means you don’t create multiple copies of sensitive data, which significantly reduces the “attack surface” and the risk of data exposure during transit.

- Attribute-Based Access Control (ABAC): Unlike traditional Role-Based Access Control (RBAC), ABAC allows for much finer granularity. You can grant access based on the researcher’s role, the sensitivity of the data (e.g., “de-identified” vs. “identifiable”), the specific research project, and even the researcher’s current location or IP address.

- End-to-End Lineage and Auditing: In a regulated environment, you must be able to prove exactly who accessed what data, when, and for what purpose. The lakehouse provides a permanent, immutable record of every action, which is essential for maintaining trust with patients and regulators.
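The ABAC rule described in the list above can be sketched as a single decision function over subject and resource attributes. The attribute names, role names, and policy rules here are assumptions made for illustration, not the policy model of any particular platform.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Subject:
    role: str            # e.g. "researcher" or "clinical_investigator"
    projects: frozenset  # projects the person is approved for
    country: str         # current location of the person


@dataclass(frozen=True)
class Resource:
    sensitivity: str             # "de-identified" or "identifiable"
    project: str                 # project the dataset belongs to
    allowed_countries: frozenset


def is_allowed(subject: Subject, resource: Resource) -> bool:
    """Grant access only when every attribute check passes."""
    if resource.project not in subject.projects:
        return False
    if subject.country not in resource.allowed_countries:
        return False
    if resource.sensitivity == "identifiable":
        return subject.role == "clinical_investigator"
    return subject.role in {"clinical_investigator", "researcher"}


researcher = Subject("researcher", frozenset({"rare-disease"}), "UK")
investigator = Subject("clinical_investigator", frozenset({"rare-disease"}), "UK")
travelling = Subject("clinical_investigator", frozenset({"rare-disease"}), "US")

deidentified = Resource("de-identified", "rare-disease", frozenset({"UK", "JP"}))
identifiable = Resource("identifiable", "rare-disease", frozenset({"UK"}))

decisions = {
    "researcher/de-identified": is_allowed(researcher, deidentified),
    "researcher/identifiable": is_allowed(researcher, identifiable),
    "investigator/identifiable": is_allowed(investigator, identifiable),
    "travelling/identifiable": is_allowed(travelling, identifiable),
}
```

A role-only check could not express the last case: the same investigator is allowed in the UK and refused from another country, because location is evaluated as an attribute on every request.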

This robust data lakehouse governance is what transforms a simple storage solution into a Trusted Data Lakehouse. With these benefits of a federated data lakehouse, life sciences organizations can collaborate globally on rare disease research or vaccine development while remaining fully compliant with local laws.
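One way to make an audit record tamper-evident is to chain entries by hash, so that changing any past entry invalidates everything after it. The sketch below shows that general technique; the `AuditLog` class and its field names are hypothetical and are not a description of how any specific lakehouse stores its audit trail.

```python
import hashlib
import json

GENESIS = "0" * 64  # placeholder hash that precedes the first entry


def _digest(body: dict) -> str:
    return hashlib.sha256(json.dumps(body, sort_keys=True).encode()).hexdigest()


class AuditLog:
    def __init__(self):
        self._entries = []

    def record(self, timestamp: str, user: str, action: str,
               resource: str, purpose: str) -> None:
        """Append who did what, when, and why, linked to the previous entry."""
        prev_hash = self._entries[-1]["hash"] if self._entries else GENESIS
        body = {"timestamp": timestamp, "user": user, "action": action,
                "resource": resource, "purpose": purpose,
                "prev_hash": prev_hash}
        self._entries.append({**body, "hash": _digest(body)})

    def verify(self) -> bool:
        """Recompute every hash; any edited or reordered entry breaks the chain."""
        prev_hash = GENESIS
        for entry in self._entries:
            body = {k: v for k, v in entry.items() if k != "hash"}
            if body["prev_hash"] != prev_hash or _digest(body) != entry["hash"]:
                return False
            prev_hash = entry["hash"]
        return True


log = AuditLog()
log.record("2024-05-01T09:00:00Z", "alice", "query", "cohort_a", "GWAS study")
log.record("2024-05-01T09:05:00Z", "bob", "export", "summary_table", "safety review")
intact_before = log.verify()

log._entries[0]["user"] = "mallory"  # simulate someone rewriting history
intact_after = log.verify()
```

The log verifies cleanly until the first entry is altered, after which `verify()` returns `False`, which is the property a regulator needs in order to trust the record.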

Implementing Trusted Research Environments (TRE)

To truly empower researchers, you need more than just data; you need a safe space to work. We call this a Trusted Research Environment (TRE). It’s a secure, “air-gapped” digital workspace where researchers can access the lakehouse, run their analyses using pre-approved tools (like Jupyter, RStudio, or Nextflow), and only export the final, de-identified results (like a summary table or a graph).

Our TRE includes key features of a Federated Data Lakehouse, such as:

- Secure Collaboration: Multi-party computing and federated learning, where models are trained locally at different sites and only the model weights (not the data) are aggregated.

- Audit Logging: A permanent record of every action taken within the environment, including every command run in a terminal or every cell executed in a notebook.

- Governance at Scale: Managing thousands of users and petabytes of data across 5 continents. This requires a control plane that can orchestrate permissions across different cloud providers (AWS, Azure, GCP) and on-premise servers simultaneously.
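The federated learning pattern mentioned in the list above, where only model weights leave each site, can be sketched with a tiny linear model. This is a minimal federated-averaging illustration under stated assumptions: the site names and data points are invented, the model is one-dimensional, and each round takes a single local gradient step.

```python
def local_update(weights, data, lr=0.1):
    """One gradient step of y = w*x + b on a site's private data."""
    w, b = weights
    n = len(data)
    grad_w = sum(2 * (w * x + b - y) * x for x, y in data) / n
    grad_b = sum(2 * (w * x + b - y) for x, y in data) / n
    return (w - lr * grad_w, b - lr * grad_b)


def federated_average(site_weights, site_sizes):
    """Combine site models, weighting each by its number of samples."""
    total = sum(site_sizes)
    w = sum(sw[0] * n for sw, n in zip(site_weights, site_sizes)) / total
    b = sum(sw[1] * n for sw, n in zip(site_weights, site_sizes)) / total
    return (w, b)


# Hypothetical private datasets; both follow y = 2x + 1 and never leave the site.
SITES = {
    "hospital_a": [(0.0, 1.0), (1.0, 3.0), (2.0, 5.0)],
    "hospital_b": [(3.0, 7.0), (4.0, 9.0)],
}

global_weights = (0.0, 0.0)
for _ in range(500):
    # Each site trains locally and returns only its updated weights.
    updates = [local_update(global_weights, data) for data in SITES.values()]
    sizes = [len(data) for data in SITES.values()]
    global_weights = federated_average(updates, sizes)
```

The coordinator only ever sees pairs of numbers from each hospital, yet the shared model converges to the slope and intercept that describe the combined data.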

Real-World Impact: From 175TB Scientific Indexes to Real-Time Safety

The theory of a unified architecture is great, but does it work in the messy reality of clinical research? Absolutely. Look at the field of Pharmacovigilance (drug safety). Traditionally, identifying a rare side effect in a new drug could take years of manual reporting and retrospective studies. By the time a safety signal was confirmed, thousands of patients might have been affected.

With an AI-powered research lakehouse, we can now perform real-time safety surveillance. By connecting clinical trial data, real-world evidence (RWE) from hospital records, and even social media signals in a single, governed lakehouse, AI agents can flag potential safety issues in days, not years. For example, an LLM-based agent can monitor physician notes across hundreds of hospitals, identifying subtle patterns of adverse reactions that would be missed by traditional keyword-based reporting systems.

A prime example is the management of one of the world’s largest datasets of indexed scientific research—over 175TB of data—using advanced lakehouse technologies. This dataset includes millions of full-text papers, patents, and clinical trial protocols. Researchers are now using semantic operators to perform bulk reasoning over these massive text corpora. By running these operations directly within the lakehouse, they have achieved 28x speedups over previous state-of-the-art pipelines that required moving data to a separate compute cluster.

This isn’t just about speed; it’s about accuracy. In fact-checking applications, where AI is used to verify the claims made in scientific posters or marketing materials against the underlying clinical data, these AI-powered systems have shown nearly 10 percentage point gains in accuracy over generic LLM approaches. This is because the lakehouse provides the necessary context and “ground truth” that generic models lack. For a deeper dive into how this architecture is built, check out our AI Data Lakehouse Ultimate Guide.

Another transformative use case is in Population Genomics. Organizations like Genomics England or the All of Us Research Program use lakehouse architectures to manage the intersection of genomic data and longitudinal health records. This allows researchers to perform Genome-Wide Association Studies (GWAS) at a scale and speed that was previously unthinkable, identifying new genetic markers for diseases like diabetes or Alzheimer’s in weeks rather than months.

Frequently Asked Questions about AI Research Lakehouses

How does an AI-powered research lakehouse differ from a standard data lake?

A standard data lake is a “passive” storage repository—it just holds files in a hierarchical structure. It lacks the metadata to understand what those files contain or how they relate to each other. An AI-powered research lakehouse is “active.” It includes a metadata layer for ACID transactions, native support for vectors and tensors, and high-performance query engines that allow AI models to stream data directly from storage to GPUs at wire speed. It provides the structure and reliability of a warehouse with the massive scale and low cost of a lake.

Can I use an AI-powered research lakehouse for multi-omic data?

Yes! In fact, that is one of its strongest use cases. Multi-omic data (genomics, proteomics, metabolomics, transcriptomics) is massive, complex, and largely unstructured. The lakehouse architecture allows you to store these as “tensors”—multi-dimensional arrays—and query them alongside structured clinical data using SQL or Python. This unification is critical for identifying new therapeutic targets and understanding the complex biological pathways of disease. Without a lakehouse, these datasets usually live in separate silos, making cross-omic analysis a manual and error-prone process.

What are the cost benefits of migrating to a lakehouse architecture?

Organizations typically see a 30-50% reduction in Total Cost of Ownership (TCO). This comes from several factors:

- Eliminating proprietary licenses: Moving away from expensive, closed-source data warehouse licenses to open-source formats and cloud object storage.

- Reducing ETL maintenance: By eliminating the need to move and transform data between a lake and a warehouse, you save hundreds of hours of engineering time.

- Storage efficiency: Using low-cost cloud object storage (like S3) as the primary storage layer for all data types.

- Improved Success Rates: By improving AI project success rates, the “Return on Data” increases significantly, turning a cost center into a value driver.

How does a lakehouse handle data sovereignty and compliance?

Through federated governance. A modern lakehouse platform like Lifebit allows you to keep data in its original location (e.g., a hospital’s local server or a specific country’s cloud region) while still allowing researchers to query it through a central interface. The platform manages the permissions, ensures that only de-identified data is shared, and maintains a full audit trail, making it compliant with GDPR, HIPAA, and other strict regulations.

Is it difficult to migrate from a traditional warehouse to a lakehouse?

While any migration requires planning, the use of open table formats like Apache Iceberg makes it easier than ever. You can often “register” your existing data files in the lakehouse metadata layer without having to rewrite them. Most modern BI tools (like Tableau or PowerBI) and AI frameworks (like PyTorch) already have native connectors for lakehouse formats, ensuring a smooth transition for end-users.

How to Build Your AI-Ready Research Foundation in 90 Days

The era of fragmented research data is over. To compete in the age of Large Language Models and agentic AI, healthcare and life sciences organizations must move toward a unified, governed, and high-performance architecture. The 75% failure rate for AI projects is a choice—a choice to continue using outdated, siloed infrastructure.

At Lifebit, we’ve spent years perfecting the AI-powered research lakehouse specifically for the most sensitive and complex data on earth. Our platform, featuring the Trusted Data Lakehouse (TDL) and R.E.A.L. (Real-time Evidence & Analytics Layer), is built to ensure your AI projects reach production and drive the next decade of medical breakthroughs.

Our 90-day implementation roadmap focuses on three key pillars:

- Data Unification: Connecting your existing silos (LIMS, EHR, Imaging) into a single, Iceberg-based metadata layer without moving the raw data.

- Governance Setup: Implementing ABAC and federated access controls to ensure immediate compliance with global regulations.

- AI Enablement: Deploying vector search and RAG capabilities so your researchers can start building agentic workflows on day one.

We help you “bring AI to your data,” ensuring that sovereignty and security are never sacrificed for the sake of innovation. Whether you are managing population-scale genomic databases or building the next generation of pharmacovigilance tools, the lakehouse is your foundation. The future of medicine is being written in data; make sure your foundation is strong enough to support it.

Stop fighting your infrastructure. Start discovering.