Federated Data Integration 101: Why Your Data Doesn’t Need a Passport

Biomedical Data: No Passport Needed. Unlock Insights, Cut Risk.

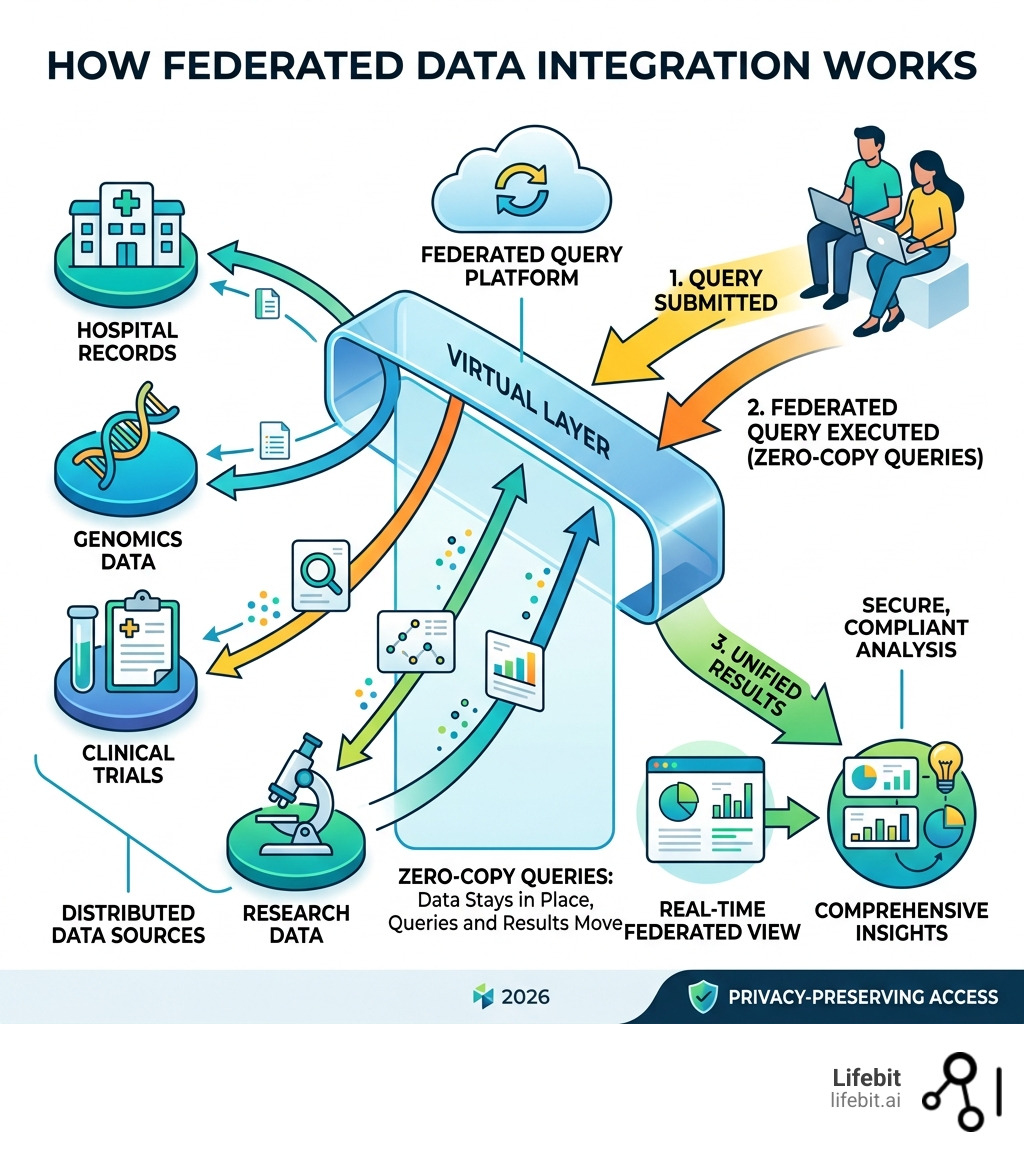

Federated data integration is a technique that lets you query and analyze data across multiple, separate systems — without ever moving or copying it.

Here’s what that means in practice:

| Question | Answer |
|---|---|
| What does it do? | Creates a virtual layer so you can query many data sources as if they were one |
| Does data move? | No. Data stays in its original location at all times |
| How is it different from ETL? | ETL physically moves data to a central store; federation queries it in place |
| Who benefits most? | Organizations with distributed, sensitive, or regulated data, such as biomedical research, healthcare, and finance |
| What’s the core advantage? | Real-time access + lower costs + stronger compliance |

Nearly 97% of enterprise data remains untapped. Not because it doesn’t exist — but because it’s locked inside fragmented systems that don’t talk to each other.

In biomedical research, this problem is acute. Patient records sit in hospital systems. Genomics data lives in separate repositories. Claims data is held by payers. Clinical trial results are scattered across sites and geographies. Each dataset has its own rules, formats, and access controls.

Getting a unified view has traditionally meant one thing: move all that data into one place. But moving sensitive health data creates compliance nightmares, inflates storage costs, and introduces the risk of breaches at every step.

Federated data integration solves this by doing something elegant — it leaves the data exactly where it is, and brings the query to the data instead.

I’m Dr. Maria Chatzou Dunford, CEO and Co-founder of Lifebit, where I’ve spent over 15 years building federated data infrastructure for some of the world’s leading genomics and biomedical research institutions — making federated data integration the backbone of privacy-preserving, cross-institutional discovery. My work on platforms like Nextflow and Lifebit’s federated analytics environment has shown me, firsthand, how this architecture unlocks data that would otherwise remain permanently out of reach.


Stop Moving Data: The Power of Federated Data Integration

In the old world of IT, if you wanted to analyze data from three different databases, you had to physically move that data into a single “bucket.” This created massive data silos and required constant synchronization. Federated data integration flips this script. It acts as a sophisticated middleware layer—a “virtual database”—that provides a single source of truth without the physical heavy lifting. This architecture is often referred to as the “Mediator-Wrapper” pattern. The ‘mediator’ handles the global schema and query decomposition, while ‘wrappers’ translate those queries into the specific dialects of the underlying source systems, whether they are SQL, NoSQL, or flat files.
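To make the Mediator-Wrapper pattern concrete, here is a minimal sketch in Python. It assumes two in-memory "sources" with different field names. The class names `DictSourceWrapper` and `Mediator`, the field names, and the sample records are all hypothetical, chosen only for illustration; a real federated engine would add query planning, authentication, and network transport.

```python
from typing import Dict, List

Row = Dict[str, object]


class DictSourceWrapper:
    """Hypothetical wrapper: adapts one source's field names to the global schema."""

    def __init__(self, rows: List[Row], field_map: Dict[str, str]) -> None:
        self.rows = rows
        self.field_map = field_map  # global field name -> local field name

    def fetch(self, global_field: str, value: object) -> List[Row]:
        local_field = self.field_map[global_field]
        to_global = {local: name for name, local in self.field_map.items()}
        # Filter using the source's own field name, then rename to global names.
        return [
            {to_global[key]: val for key, val in row.items() if key in to_global}
            for row in self.rows
            if row.get(local_field) == value
        ]


class Mediator:
    """Hypothetical mediator: fans a global query out and merges the answers."""

    def __init__(self, wrappers: List[DictSourceWrapper]) -> None:
        self.wrappers = wrappers

    def query(self, global_field: str, value: object) -> List[Row]:
        results: List[Row] = []
        for wrapper in self.wrappers:
            results.extend(wrapper.fetch(global_field, value))
        return results


# Two sources that describe the same concepts with different local names.
hospital_a = DictSourceWrapper(
    rows=[{"pid": "A1", "dx": "asthma"}, {"pid": "A2", "dx": "diabetes"}],
    field_map={"patient_id": "pid", "diagnosis": "dx"},
)
hospital_b = DictSourceWrapper(
    rows=[{"subject": "B7", "condition": "asthma"}],
    field_map={"patient_id": "subject", "diagnosis": "condition"},
)

mediator = Mediator([hospital_a, hospital_b])
asthma_patients = mediator.query("diagnosis", "asthma")
print(asthma_patients)
```

The caller only ever sees the global field names (`patient_id`, `diagnosis`); each wrapper keeps the knowledge of its source's local naming to itself.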

Think of it like a smart library catalog. Instead of moving every book from every branch into one giant building, you just use a digital catalog that tells you exactly where every book is and lets you “read” them remotely. This enables real-time analytics because you are querying the live data, not a week-old copy sitting in a warehouse. This approach is gaining massive traction, as seen in initiatives like the U.S. Data Federation, which aims to streamline how government agencies share information without compromising security. By avoiding the “Data Gravity” problem—where large datasets become too heavy and expensive to move—federation allows organizations to remain agile and responsive to new information as it is generated.

At Lifebit, we see this as the only viable path forward for federated data analysis. When you are dealing with petabytes of genomic data, the “copy-paste” method isn’t just slow—it’s impossible. Furthermore, the traditional approach of centralization often leads to “data swamps,” where the lack of context and the sheer volume of moved data make it impossible to find meaningful insights. Federation preserves the local context and metadata, ensuring that the data remains high-quality and interpretable.

Why Federated Data Integration is the Future of Multi-Cloud Strategy

Most modern enterprises don’t live in just one cloud. They use a hybrid cloud setup, perhaps keeping sensitive patient records on-premise in London or New York while running intensive AI workloads in AWS or Azure. Federated data integration is the “glue” for these multi-cloud environments. It allows for cross-platform querying, meaning a researcher can join a table in a local SQL database with a massive dataset in a public cloud bucket as if they were in the same room. This prevents vendor lock-in and respects data sovereignty—ensuring that data stays within its legal or geographic boundaries. This is particularly critical for global organizations that must navigate the “Cloud Hotel” effect, where data is easy to check in but prohibitively expensive to check out due to egress fees.

The Death of Traditional ETL Pipelines

Traditional Extract, Transform, Load (ETL) pipelines are becoming a bottleneck. They introduce significant data latency; by the time data is extracted from a source, transformed into a new format, and loaded into a warehouse, it might already be stale. Furthermore, the extraction costs and transformation complexity are skyrocketing. We are seeing a shift where organizations realize that the load bottlenecks of centralized systems are killing their agility. Federation offers a “zero-copy” alternative that bypasses these hurdles entirely. Instead of building rigid pipelines that break whenever a source schema changes, federation uses dynamic mapping to adapt to changes in real-time, providing a resilient and scalable infrastructure for modern data science.

Cut Storage Costs: Why Federation Beats Traditional ETL

The global data integration market is projected to grow from $17.10 billion in 2025 to over $47 billion by 2034. A huge driver of this is the sheer cost of storing data twice. When you use ETL to move data into a warehouse, you pay for the storage at the source and the storage at the destination. In the era of Big Data, where datasets can reach the exabyte scale, this 2x storage cost is no longer a line item—it is a barrier to entry. Beyond storage, the hidden costs of ETL include the engineering hours required to maintain pipelines and the compute costs of the transformation processes themselves.

| Feature | Data Federation | Data Warehouse (ETL) | Data Lake |
|---|---|---|---|
| Data Movement | Zero (Stay in-situ) | High (Full copy) | High (Raw copy) |
| Storage Cost | Low (No duplication) | High (Double storage) | Medium (Cheap storage) |
| Data Freshness | Real-time | Latent (Batched) | Latent |
| Setup Speed | Fast (Virtual) | Slow (Pipeline build) | Medium |

By adopting a federated data platform, organizations can achieve massive infrastructure overhead reductions. You don’t need to build and maintain complex pipelines for every new data source; you simply connect the source to the federated layer and start querying. This “just-in-time” approach to data integration means that resources are only consumed when a query is actually run, rather than paying for the continuous movement of data that may never be analyzed.

When to Choose Federation Over Data Lakes

While data lakes are great for long-term storage of raw data, they aren’t always the best for rapid action. Choose federated data integration when you need:

- Ad-hoc queries: “I need to know X across these five systems right now.” This is essential for emergency response or rapid market shifts.

- Operational reporting: Real-time dashboards that can’t wait for a nightly sync, such as monitoring hospital bed capacity or live clinical trial enrollment.

- Regulatory restrictions: When regulations such as GDPR or HIPAA, or governance obligations under frameworks like the EU AI Act, mean the data cannot leave its region or its specific security enclave.

- Rapid prototyping: Testing a hypothesis before committing to a full integration project. Federation allows you to “fail fast” without investing in heavy infrastructure.

Eliminating the 97% Untapped Data Gap

The “97% gap” is largely composed of dark biomedical data. This is information hidden in fragmented systems, legacy databases, or siloed SaaS applications. Because federation uses API connectivity and standard connectors, it can reach into these dark corners. Whether it’s a 20-year-old SQL server in a basement or a modern CRM, federation brings them into the light. This is particularly important for “Long Tail” data—smaller, specialized datasets that are often ignored by centralized efforts but contain the key to understanding rare diseases or specific patient sub-populations.

Inside the Engine: Query Processing and Federated Data Preprocessing

How does this actually work under the hood? It’s not magic; it’s high-performance engineering. When a user submits a query to a federated analytics engine, the system doesn’t pull all the data back to its center. Instead, it uses a virtualization engine to decompose that query into optimized sub-tasks. This process involves a complex cost-based optimizer that evaluates the network bandwidth, the processing power of the source systems, and the size of the data to determine the most efficient execution plan.

How Virtualization Engines Execute Federated Data Integration Queries

- Sub-query translation: The engine translates the master query into the “native language” of each source (e.g., SQL for one, NoSQL for another). This ensures that the source system can execute the query using its own optimized indexes.

- Predicate pushdown: This is the secret sauce. The engine tells the source database to do the filtering locally. Instead of asking for a million rows and filtering them, it asks the source to only send the 10 rows that match the criteria. This drastically reduces network traffic and speeds up response times.

- Result aggregation: The engine collects the tiny “answer fragments” from all sources and merges them into a single, coherent response for the user. This may involve performing joins or unions across data that originated in completely different formats.

- Latency mitigation: Using connection pooling, intelligent caching, and parallel execution, the system ensures that the “virtual” experience feels as fast as a local one. Advanced engines can even predict common query patterns and pre-fetch metadata to reduce overhead.
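The pushdown and aggregation steps above can be sketched with Python's built-in `sqlite3` module, using two in-memory databases as stand-ins for remote sources. The table name `visits`, its columns, the sample rows, and the helper function names are assumptions made for this illustration, not part of any particular federated engine.

```python
import sqlite3


def make_source(rows):
    """Create an in-memory database that stands in for one remote source."""
    conn = sqlite3.connect(":memory:")
    conn.execute("CREATE TABLE visits (patient_id TEXT, age INTEGER, site TEXT)")
    conn.executemany("INSERT INTO visits VALUES (?, ?, ?)", rows)
    return conn


def fetch_without_pushdown(conn, min_age):
    """Pull every row, then filter at the federated layer."""
    rows = conn.execute("SELECT patient_id, age, site FROM visits").fetchall()
    return [row for row in rows if row[1] >= min_age], len(rows)


def fetch_with_pushdown(conn, min_age):
    """Send the filter to the source so only matching rows are returned."""
    rows = conn.execute(
        "SELECT patient_id, age, site FROM visits WHERE age >= ?", (min_age,)
    ).fetchall()
    return rows, len(rows)


def federated_query(sources, min_age):
    """Run the pushed-down sub-query on every source and merge the fragments."""
    merged, transferred = [], 0
    for conn in sources:
        rows, count = fetch_with_pushdown(conn, min_age)
        merged.extend(rows)
        transferred += count
    return sorted(merged), transferred


london = make_source([("L1", 34, "london"), ("L2", 71, "london"), ("L3", 68, "london")])
boston = make_source([("B1", 80, "boston"), ("B2", 22, "boston")])

result, rows_transferred = federated_query([london, boston], min_age=65)
print(result, rows_transferred)
```

With pushdown, only the three matching rows cross the "network"; without it, all five rows would be transferred before filtering. On tables with millions of rows the same difference is what keeps federated queries responsive.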

Solving Data Quality with Federated Data Preprocessing (FedPS)

One of the biggest hurdles in distributed environments is data quality and semantic interoperability. If Source A uses “Celsius” and Source B uses “Fahrenheit,” or if one hospital uses ICD-9 codes while another uses ICD-10, your analysis will break. This is where Federated Data Preprocessing (FedPS) comes in. It uses aggregated statistics and data sketching to harmonize data without looking at individual records. FedPS can perform automated ontology alignment, ensuring that “Heart Attack” in one system is correctly mapped to “Myocardial Infarction” in another. Research shows that federated preprocessing can increase model accuracy by up to 17% in non-IID settings (where data is not independent and identically distributed across sites) by ensuring global scaling and consistent imputation across all sites, all while maintaining the privacy of the underlying records.
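As a rough illustration of "global scaling from aggregated statistics," the sketch below has each site share only a count, a sum, and a sum of squares, from which a coordinator derives one global mean and standard deviation. This is a generic sketch of the idea, not the API of any FedPS implementation; the function names and sample values are hypothetical.

```python
import math


def local_summary(values):
    """Computed at each site: only these three aggregates leave the site."""
    return {
        "n": len(values),
        "sum": sum(values),
        "sum_sq": sum(v * v for v in values),
    }


def global_mean_std(summaries):
    """Computed by the coordinator from the per-site aggregates alone."""
    n = sum(s["n"] for s in summaries)
    total = sum(s["sum"] for s in summaries)
    total_sq = sum(s["sum_sq"] for s in summaries)
    mean = total / n
    variance = total_sq / n - mean ** 2  # population variance
    return mean, math.sqrt(max(variance, 0.0))


def standardize(values, mean, std):
    """Applied locally at each site using the shared global parameters."""
    return [(v - mean) / std for v in values]


site_a = [1.0, 2.0, 3.0]
site_b = [4.0, 5.0]

mean, std = global_mean_std([local_summary(site_a), local_summary(site_b)])
scaled_a = standardize(site_a, mean, std)
scaled_b = standardize(site_b, mean, std)
print(mean, std)
```

Every site ends up scaling with the same parameters, which is what keeps features comparable when the sites' local distributions differ. The sum-of-squares formula is used here for brevity; it can lose precision on large values, so a numerically stabler merge would be preferable in practice.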

Secure Your Assets: Solving Governance in Distributed Environments

Security is the number one concern for our clients in Europe, Canada, and the USA. In a federated model, the data never leaves its secure “home,” which inherently reduces the attack surface. However, you still need a robust federated data governance strategy to manage who can see what. This involves moving from a “perimeter-based” security model to a “Zero Trust” architecture, where every access request is verified regardless of where it originates. By keeping data in-situ, organizations can maintain strict control over their digital assets while still participating in global research collaborations.

By using a federated trusted research environment, organizations can enforce “security by design.” The global data governance market is expected to hit $18 billion by 2032, reflecting the urgent need for these controls. Federation allows for “Sovereignty-by-Design,” where the data owner retains the ultimate “kill switch” over their data, ensuring that access can be revoked instantly if a policy is violated.

Best Practices for Implementing Federated Security

- Role-Based Access Control (RBAC): Ensure that a researcher in Singapore only sees the specific columns they are authorized to access, even if the database is in London. This can be extended to Attribute-Based Access Control (ABAC) for even finer granularity.

- Encryption at Rest & In-Transit: Always the baseline, but critical when results are being aggregated across the web. Advanced implementations may also use Homomorphic Encryption to perform computations on encrypted data.

- Immutable Audit Trails: Every single query must be logged. You need to know exactly who asked what, and what the source system returned. This is vital for compliance with regulations like GDPR, which require a clear record of data processing activities.

- Identity Resolution: Mapping a “User ID” in a CRM to a “Patient ID” in a clinical database without exposing PII (Personally Identifiable Information). This often involves the use of secure hashing or third-party tokenization services.
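The identity-resolution item above mentions secure hashing. One common way to do this is a keyed hash (HMAC), sketched below with Python's standard `hmac` and `hashlib` modules. The sketch assumes both systems hold a common identifier (an email address here) and share a secret key managed by a trusted party; the key, the record IDs, and the email addresses are made up for illustration.

```python
import hashlib
import hmac


def link_token(identifier: str, secret_key: bytes) -> str:
    """Derive a keyed, non-reversible token from a normalized identifier."""
    normalized = identifier.strip().lower()
    return hmac.new(secret_key, normalized.encode("utf-8"), hashlib.sha256).hexdigest()


# Assumption: the key is distributed and rotated outside this code.
SHARED_KEY = b"example-key-managed-outside-this-code"

crm_users = {"user-17": "Ana.Silva@example.org", "user-18": "j.doe@example.org"}
clinical_patients = {
    "patient-903": " ana.silva@example.org",
    "patient-904": "k.lee@example.org",
}

# Each system tokenizes locally; only tokens and record IDs are compared.
crm_tokens = {link_token(email, SHARED_KEY): uid for uid, email in crm_users.items()}
clinical_tokens = {
    link_token(email, SHARED_KEY): pid for pid, email in clinical_patients.items()
}

linked = {
    crm_tokens[token]: clinical_tokens[token]
    for token in crm_tokens.keys() & clinical_tokens.keys()
}
print(linked)
```

A keyed hash is used rather than a plain SHA-256 because unkeyed hashes of low-entropy identifiers such as emails can be reversed by guessing. Exact-match tokens also require consistent normalization on both sides, which is why the identifier is trimmed and lower-cased first.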

Navigating the Complexity of Schema Drift

In a federated environment, source systems change. A database admin might add a column or change a data type. This is “schema drift.” Modern federated tools use automated discovery and metadata synchronization to detect these changes in real-time. By using a semantic layer, the federated engine can shield the end-user from these changes, ensuring that the virtual layer stays consistent even when the underlying “ground” is shifting. This reduces the maintenance burden on data engineers and ensures that analytical dashboards don’t break unexpectedly.
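A simple way to picture drift detection is a comparison between the last known schema snapshot and the current one. The sketch below assumes schemas are represented as plain column-to-type dictionaries; the function name `diff_schema` and the example columns are hypothetical, and real tools would read the snapshots from each source's catalog.

```python
def diff_schema(previous, current):
    """Compare two column -> type snapshots and report what drifted."""
    added = {col: typ for col, typ in current.items() if col not in previous}
    removed = {col: typ for col, typ in previous.items() if col not in current}
    changed = {
        col: (previous[col], current[col])
        for col in previous.keys() & current.keys()
        if previous[col] != current[col]
    }
    return {"added": added, "removed": removed, "changed": changed}


last_snapshot = {"patient_id": "TEXT", "age": "INTEGER"}
new_snapshot = {"patient_id": "TEXT", "age": "REAL", "postcode": "TEXT"}

drift = diff_schema(last_snapshot, new_snapshot)
print(drift)
```

A semantic layer could use a report like this to update its mappings automatically for additive changes and to flag type changes or removals for review before dashboards are affected.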

Real-World Impact: Accelerating Biomedical Breakthroughs

At Lifebit, we don’t just talk about federation—we use it to save lives. By applying federated learning in healthcare, we enable researchers to train AI models on diverse patient populations without moving a single record. This is particularly transformative for rare disease research, where no single hospital has enough patients to train a robust model. By federating data across dozens of institutions, we can achieve the statistical power necessary to identify new biomarkers and therapeutic targets.

Accelerating Biomedical Research and Drug Discovery

The impact of federated technology in population genomics is staggering. Researchers can now:

- Access Multi-omic Data: Combine genomic, proteomic, and clinical data from five different continents to find rare disease variants. This holistic view is essential for precision medicine, where treatment is tailored to the individual’s unique biological profile.

- Integrate Clinical Trials: Monitor patient safety across global sites in real-time, catching adverse events faster. Federation allows for the creation of “Virtual Control Arms,” where historical patient data from multiple sources is used to augment current trials, reducing the number of patients needed and accelerating the path to market.

- Protect Patient Privacy: Comply with the strictest HIPAA and GDPR standards while still allowing for high-impact science. Techniques like Differential Privacy can be applied to the query results, adding mathematical “noise” to ensure that no individual patient can be re-identified from the aggregate data.

- Pharmacovigilance: Use our R.E.A.L. (Real-time Evidence & Analytics Layer) to perform AI-driven safety surveillance on live data streams. This allows pharmaceutical companies to detect potential side effects in the real world much faster than traditional reporting methods, potentially saving thousands of lives.
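The differential privacy mentioned in the list above is often introduced through the Laplace mechanism for counting queries. The sketch below is a teaching example under stated assumptions: the query is a simple count (sensitivity 1), the privacy budget `epsilon` is chosen arbitrarily, and Python's `random` module is used for readability even though it is not suitable for production privacy guarantees. A vetted differential privacy library would be the safer choice in practice.

```python
import random


def laplace_noise(scale: float, rng: random.Random) -> float:
    # The difference of two independent exponential draws with mean `scale`
    # follows a Laplace distribution centred on zero with that scale.
    return rng.expovariate(1.0 / scale) - rng.expovariate(1.0 / scale)


def private_count(true_count: int, epsilon: float, rng: random.Random) -> float:
    """Return a noisy count; a smaller epsilon means more noise and more privacy."""
    sensitivity = 1.0  # adding or removing one patient changes a count by at most 1
    return true_count + laplace_noise(sensitivity / epsilon, rng)


rng = random.Random(42)  # seeded only so the example is repeatable
noisy = private_count(true_count=128, epsilon=0.5, rng=rng)
print(round(noisy, 2))
```

The noise averages out to zero over many draws, so aggregate trends remain usable while any single released number gives little evidence about whether one specific patient is in the data.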

By breaking down the silos between academia, healthcare providers, and the pharmaceutical industry, federated data integration is creating a new ecosystem of “Open Science” that is both collaborative and secure. This is the foundation of the next generation of medical breakthroughs, from personalized cancer vaccines to gene therapies for previously untreatable conditions.

The Future of Data: AI-Powered Integration and Edge Computing

The next frontier of federated data integration is moving toward autonomous intelligence. We are moving beyond simple queries into AI for federation, where AI agents handle the heavy lifting of data plumbing. These agents can automatically discover new data sources, suggest optimal mappings, and even predict which datasets are most likely to contain the answers to a researcher’s questions. This creates a “Self-Healing Data Fabric” that requires minimal human intervention to maintain.

- AI-Driven Optimization: Using machine learning to predict which queries will be slow and automatically caching the results or suggesting better indexing strategies to the source administrators.

- Edge Federation: Processing data directly on IoT devices or hospital imaging machines (the “edge”) and only sending the insights back to the central hub. This is critical for high-bandwidth data like MRI scans or real-time genomic sequencing, where moving the raw data is often too slow or costly to be practical.

- Blockchain for Trust: Using decentralized ledgers to verify data provenance and make the “audit trail” tamper-evident. This provides an extra layer of confidence for researchers and regulators alike, helping to show that the data used in a study hasn’t been altered or manipulated.

- Federated Learning 2.0: Training massive Large Language Models (LLMs) on private, distributed enterprise data without the data ever being exposed to the model’s creators. This allows organizations to leverage the power of Generative AI while keeping their proprietary intellectual property and sensitive patient data completely secure.

Scaling with Federated Learning and AI Agents

AI agents require context. If an agent is helping a doctor, it needs context from the electronic health record, the latest research papers, and the patient’s wearable device data. A federated data layer acts as the “connective tissue” or “unified memory surface” for these agents, allowing them to assemble context in real-time without duplicating data. This enables a new generation of “Clinical Decision Support Systems” that can provide personalized recommendations at the point of care, informed by the totality of global medical knowledge.

Edge Computing and the Rise of Hybrid Multi-Cloud

As more data is generated by sensors and IoT devices, the bandwidth required to move it all to the cloud is becoming cost-prohibitive. Edge federation allows for localized processing—analyzing the data where it’s born—and only integrating the “meaning” of that data into the broader multi-cloud ecosystem. This reduces latency for time-sensitive applications, such as robotic surgery or real-time patient monitoring, while still allowing the insights to be used for long-term research and population health management.

Frequently Asked Questions about Federated Data Integration

Is data federation faster than a data warehouse?

It depends on the use case. For real-time access and small-to-medium queries, federation is significantly faster because there is no ETL delay; you are querying the data the moment it is created. However, for massive, multi-petabyte historical “scan everything” jobs, a dedicated data warehouse may perform better because the data is pre-optimized and physically co-located. Most modern organizations use a “Hybrid” approach, using federation for agility and real-time needs, and warehouses for heavy historical reporting.

How does data federation handle sensitive PII?

Federation is actually safer for PII (Personally Identifiable Information). Because the data never moves, you don’t have to worry about “leaks” during the transfer process or the creation of multiple vulnerable copies. You can apply masking, pseudonymization, and differential privacy at the federated layer, ensuring that researchers only see anonymized or aggregated results. Furthermore, the source system retains full control and can block any query that it deems too granular or risky.

Can I use data federation for machine learning training?

Yes! This is called Federated Learning. Instead of moving data to the model, you move the model to the data. The model learns from the local data and only sends “weight updates” (mathematical summaries) back to a central server. This allows for training on massive, diverse datasets while the raw records never leave their source. Because weight updates can still leak some information about the training data, they are often combined with safeguards such as secure aggregation or differential privacy. It is widely regarded as a leading approach for AI development in highly regulated industries like healthcare and finance.
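A minimal sketch of the idea, assuming a one-parameter linear model `y = w * x` and two sites with made-up data, is shown below. It follows the general shape of federated averaging: each site trains locally, and the server combines the returned weights in proportion to each site's sample count. The function names, learning rate, and round counts are illustrative choices, not a recommended configuration.

```python
def local_update(w, data, lr=0.01, epochs=20):
    """Run gradient descent on one site's data; only the weight is returned."""
    for _ in range(epochs):
        grad = sum(2 * (w * x - y) * x for x, y in data) / len(data)
        w -= lr * grad
    return w, len(data)


def federated_average(updates):
    """Combine site weights, weighting each by its number of samples."""
    total = sum(n for _, n in updates)
    return sum(w * n for w, n in updates) / total


def train(sites, rounds=30):
    w = 0.0  # global model, broadcast to every site each round
    for _ in range(rounds):
        updates = [local_update(w, data) for data in sites]
        w = federated_average(updates)
    return w


# Both sites follow y = 2x, but the server never sees these (x, y) pairs.
site_a = [(1.0, 2.0), (2.0, 4.0)]
site_b = [(3.0, 6.0), (4.0, 8.0), (5.0, 10.0)]

global_w = train([site_a, site_b])
print(round(global_w, 4))
```

Only the scalar weight and a sample count travel between the sites and the server. Real deployments exchange full parameter tensors and typically layer additional protections on top of this exchange.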

Does federation work with unstructured data?

Absolutely. Modern federated engines can connect to NoSQL databases, document stores, and even object storage such as Amazon S3 or Azure Blob Storage. By using “Schema-on-Read” techniques, the federated layer can impose structure on this data at the time of the query, allowing you to join a structured SQL table with a semi-structured collection of JSON documents seamlessly.
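As a small illustration of schema-on-read, the sketch below parses JSON documents only when a query runs and joins the extracted fields with rows from a SQL table. It uses Python's standard `json` and `sqlite3` modules; the table name `patients`, the document layout, and the `smoker` field are assumptions made for this example.

```python
import json
import sqlite3

# Raw documents as they might sit in object storage, one JSON object per line.
raw_documents = [
    '{"patient_id": "P1", "note": {"smoker": true, "text": "reports cough"}}',
    '{"patient_id": "P2", "note": {"text": "routine check"}}',
]


def read_with_schema(lines):
    """Impose a (patient_id, smoker) shape at read time; missing fields become None."""
    for line in lines:
        doc = json.loads(line)
        yield doc["patient_id"], doc.get("note", {}).get("smoker")


def join_patients_with_notes(conn, lines):
    """Join structured rows with fields extracted from the documents."""
    smoker_by_id = dict(read_with_schema(lines))
    rows = conn.execute(
        "SELECT patient_id, age FROM patients ORDER BY patient_id"
    ).fetchall()
    return [(pid, age, smoker_by_id.get(pid)) for pid, age in rows]


conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE patients (patient_id TEXT, age INTEGER)")
conn.executemany("INSERT INTO patients VALUES (?, ?)", [("P1", 54), ("P2", 61)])

joined = join_patients_with_notes(conn, raw_documents)
print(joined)
```

No schema was declared for the documents in advance: the second document simply lacks a `smoker` field, and the reader maps that to `None` at query time instead of failing a load job.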

What is the impact on source system performance?

This is a common concern. However, by using “Predicate Pushdown” and intelligent query throttling, the impact on source systems is usually minimal. Most federated engines allow administrators to set resource limits, ensuring that analytical queries never interfere with the primary operational functions of the source database.

Conclusion

The era of the “data passport” is over. You no longer need to move your data across borders, clouds, or departments just to get an answer. Lifebit’s federated AI platform is designed for this new reality, providing a secure, real-time bridge to the world’s most valuable biomedical data.

Whether you are using our Trusted Research Environment (TRE) to collaborate with global partners or our Trusted Data Lakehouse (TDL) to unify your internal silos, the goal is the same: faster insights, lower risk, and better outcomes for patients everywhere.

Ready to see how your data can work together without ever leaving home? Access the Trusted Data Marketplace and start your journey toward a truly federated future.