Data-Driven Democracy: How Analytics Empowers the Federal Government

Stop Wasting Billions: How is Data Analytics Important for the Federal Government?

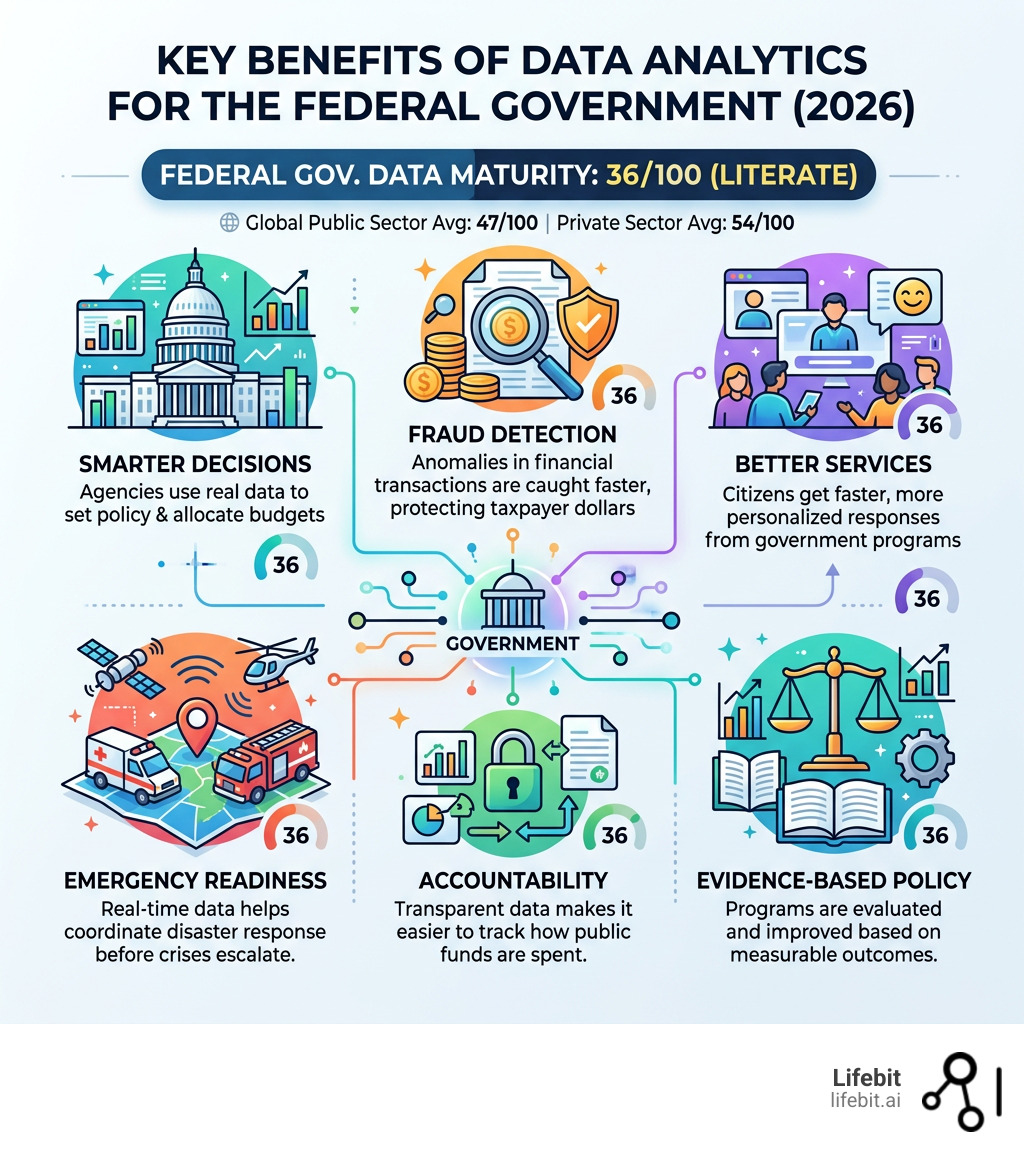

The case for data analytics in the federal government comes down to one core idea: better data leads to better decisions, and better decisions mean better outcomes for every citizen.

Here’s a quick summary of why it matters:

| Benefit | What It Means in Practice |
|---|---|
| Smarter decisions | Agencies use real data, not guesswork, to set policy and allocate budgets |
| Fraud detection | Anomalies in financial transactions are caught faster, protecting taxpayer dollars |
| Better services | Citizens get faster, more personalized responses from government programs |
| Emergency readiness | Real-time data helps coordinate disaster response before crises escalate |
| Accountability | Transparent data makes it easier to track how public funds are spent |
| Evidence-based policy | Programs can be evaluated and improved based on measurable outcomes |

The opportunity is massive. The federal government sits on the largest collection of current and historical U.S. datasets in existence. Yet today, federal agencies score just 36 out of 100 on data and digital maturity — classified as “literate,” and trailing both the global public sector (47/100) and private sector (54/100).

That gap has real consequences. When governments don’t use their data well, students drop out of school unnoticed, tax fraud goes undetected, and disaster relief arrives too late. When they do — like Guatemala’s Ministry of Education, which used analytics to cut lower secondary dropout rates by 9% — the results are concrete and measurable.

The good news? Agencies aren’t starting from scratch. Legislation like the Foundations for Evidence-Based Policymaking Act, frameworks like the Federal Data Strategy, and a growing ecosystem of AI and machine learning tools are creating a clear path forward.

I’m Dr. Maria Chatzou Dunford, CEO and Co-founder of Lifebit. With over 15 years of experience in AI, federated data analysis, and biomedical informatics, I’ve seen how data analytics can transform public institutions, from accelerating drug discovery to enabling real-time, privacy-preserving insights across secure government environments. In this guide, I’ll walk you through exactly what analytics can do for federal agencies, where the gaps are, and what it takes to close them.


Stop Fraud and Slash Response Times: 5 Ways Data Analytics Delivers

When we talk about the importance of analytics in the public sector, we aren’t just talking about spreadsheets. We are talking about a fundamental shift in how democracy functions. By repurposing administrative data—the information already collected during daily operations—agencies can move from reactive “firefighting” to proactive governance.

A World Bank report, Data for Better Governance, explains how data can be used to increase the efficiency and responsiveness of government bodies. For us at Lifebit, this resonates deeply. Whether it’s providing federal health tips for 2025 or managing multi-omic datasets, the goal is the same: use data to improve lives.

Here are five ways analytics changes the game:

- Strategic Resource Allocation: Analytics allows agencies to prioritize investments where they will have the most impact. Instead of blanket funding, data identifies specific communities or sectors in need. For example, the Department of Housing and Urban Development (HUD) can use geospatial analytics to identify “opportunity zones” where infrastructure investment will yield the highest return in terms of job creation and poverty reduction.

- Enhanced Transparency and Accountability: By monitoring spending in real-time, the government can show taxpayers exactly where their money goes, fostering public trust. This involves moving beyond static annual reports to dynamic, public-facing dashboards that track the progress of major legislative initiatives like the Infrastructure Investment and Jobs Act.

- Personalized Citizen Services: Much like a private sector “personal assistant,” AI can send reminders about school enrollment or vaccination deadlines based on existing records. This reduces the administrative burden on citizens, who often have to navigate complex, overlapping agency requirements to access the benefits they are entitled to.

- Operational Efficiency: Identifying bottlenecks in internal workflows can save millions of dollars and thousands of man-hours. By applying process mining to federal procurement cycles, agencies can identify why certain contracts take months to approve and implement automated workflows to speed up the delivery of critical supplies.

- Evidence-Based Policy Evaluation: Instead of guessing if a program works, agencies use data to measure actual outcomes and refine policies iteratively. This is the heart of the “What Works” movement in government, ensuring that programs that fail to meet their metrics are defunded or redesigned, while successful ones are scaled.
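To make the process-mining idea in the Operational Efficiency item more concrete, here is a minimal sketch of how a bottleneck might be found in a procurement event log. The contract IDs, stage names, and durations are invented for illustration; a real log would come from an agency's own workflow system and would need far more careful handling.

```python
from collections import defaultdict
from statistics import mean

# Hypothetical event log: (contract_id, stage, days_spent_in_stage).
# All values are made up for illustration.
event_log = [
    ("K1", "legal_review", 12),
    ("K1", "budget_approval", 3),
    ("K1", "signature", 1),
    ("K2", "legal_review", 20),
    ("K2", "budget_approval", 5),
    ("K2", "signature", 2),
]

def average_days_per_stage(log):
    """Group durations by stage and return the mean days spent in each."""
    durations = defaultdict(list)
    for _contract_id, stage, days in log:
        durations[stage].append(days)
    return {stage: mean(days) for stage, days in durations.items()}

stage_averages = average_days_per_stage(event_log)

# The stage with the highest average duration is the first candidate
# for automation or redesign.
bottleneck = max(stage_averages, key=stage_averages.get)
```

In this toy log the slowest stage is the legal review, so that is where an agency would look first before automating anything else.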

How is data analytics important for the federal government in fraud prevention?

Fraud and the misuse of public funds are constant threats to the integrity of federal programs. According to the Government Accountability Office (GAO), the federal government loses hundreds of billions of dollars annually to improper payments. Data analytics acts as a high-tech detective, spotting financial anomalies that human auditors might miss.

Traditionally, agencies relied on a “pay-and-chase” model—paying out claims first and then trying to recover fraudulent funds later. Modern analytics shifts this to a “prevent-and-protect” model. By analyzing massive volumes of transaction data in real-time, agencies can identify irregularities, such as duplicate payments, identity theft patterns, or suspicious clusters of claims originating from the same IP address.
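As a rough illustration of the checks described above, the sketch below flags duplicate payments and clusters of payees sharing one IP address. The record fields, sample values, and the `min_payees` threshold are assumptions made for this example, not a description of any agency's actual system.

```python
from collections import defaultdict

# Hypothetical claim records; field names and values are illustrative only.
claims = [
    {"claim_id": "C1", "payee": "P-100", "amount": 1200.00, "invoice": "INV-7", "ip": "203.0.113.5"},
    {"claim_id": "C2", "payee": "P-100", "amount": 1200.00, "invoice": "INV-7", "ip": "203.0.113.5"},
    {"claim_id": "C3", "payee": "P-200", "amount": 310.50, "invoice": "INV-8", "ip": "203.0.113.5"},
    {"claim_id": "C4", "payee": "P-300", "amount": 89.99, "invoice": "INV-9", "ip": "198.51.100.7"},
    {"claim_id": "C5", "payee": "P-400", "amount": 455.00, "invoice": "INV-10", "ip": "203.0.113.5"},
]

def find_duplicate_payments(records):
    """Return groups of claim IDs that share payee, invoice, and amount."""
    groups = defaultdict(list)
    for r in records:
        key = (r["payee"], r["invoice"], r["amount"])
        groups[key].append(r["claim_id"])
    return [ids for ids in groups.values() if len(ids) > 1]

def find_ip_clusters(records, min_payees=3):
    """Return IPs used by at least `min_payees` distinct payees."""
    payees_by_ip = defaultdict(set)
    for r in records:
        payees_by_ip[r["ip"]].add(r["payee"])
    return {
        ip: sorted(payees)
        for ip, payees in payees_by_ip.items()
        if len(payees) >= min_payees
    }

duplicates = find_duplicate_payments(claims)
ip_clusters = find_ip_clusters(claims)
```

Rules like these only surface candidates for review. In a "prevent-and-protect" setup they would run before payment, with flagged claims routed to a human auditor.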

Real-world success stories prove this isn’t theoretical. Tax authorities in Ecuador and Peru successfully collected millions of dollars in additional revenue by using data to detect evasion and optimize their enforcement resources. In the U.S., the Centers for Medicare & Medicaid Services (CMS) uses predictive modeling to flag high-risk claims before they are paid, saving billions in potential losses. This ensures that social welfare and national security funds reach their intended targets rather than lining the pockets of bad actors.

How is data analytics important for the federal government in emergency response?

In a crisis, time is the only currency that matters. Whether it’s an unprecedented flood, a wildfire, or a public health surge, government analytics allow for real-time situational awareness. By integrating data from weather sensors, hospital records, social media feeds, and infrastructure monitors, agencies can coordinate timely support to the most vulnerable areas.

For example, during flooding events, the Federal Emergency Management Agency (FEMA) uses integrated data assessments to understand the immediate impact on local economies and resources. By layering flood maps with demographic data, they can identify neighborhoods with high concentrations of elderly residents or people with disabilities who may need specialized evacuation assistance. This proactive approach is also critical for monitoring public health trends, enabling agencies like the CDC to deploy medical supplies and personnel before an outbreak reaches its peak in a specific geographic region.
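The layering step can be sketched in a few lines. The example below joins a flood-zone flag with demographic counts per census tract and ranks the tracts that may need specialized evacuation help. The tract data and the 20% threshold are hypothetical, chosen only to show the shape of the calculation.

```python
# Hypothetical tract-level data combining a flood-map flag with demographics.
tracts = [
    {"tract": "A", "in_flood_zone": True, "population": 4000, "age_65_plus": 1200, "with_disability": 400},
    {"tract": "B", "in_flood_zone": True, "population": 5000, "age_65_plus": 500, "with_disability": 450},
    {"tract": "C", "in_flood_zone": False, "population": 3000, "age_65_plus": 1500, "with_disability": 300},
    {"tract": "D", "in_flood_zone": True, "population": 2000, "age_65_plus": 300, "with_disability": 800},
]

def priority_tracts(records, min_share=0.20):
    """Return flooded tracts where the elderly or disability share of the
    population meets `min_share`, ordered from highest share to lowest."""
    flagged = []
    for t in records:
        if not t["in_flood_zone"]:
            continue  # outside the flood layer, so not part of this response
        elderly_share = t["age_65_plus"] / t["population"]
        disability_share = t["with_disability"] / t["population"]
        score = max(elderly_share, disability_share)
        if score >= min_share:
            flagged.append((score, t["tract"]))
    flagged.sort(reverse=True)
    return [tract for _score, tract in flagged]

evacuation_priority = priority_tracts(tracts)
```

Tract C has the oldest population but sits outside the flood zone, so it drops out. That is the point of layering the two datasets rather than reading either one alone.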

Cut Downtime by 50%: Predict Infrastructure Failure with Federal AI

The “Big Data” era has evolved into the “AI Era.” Advanced technologies like Natural Language Processing (NLP) and predictive modeling are now the backbone of modern federal initiatives. The NRC Data Analytics Centre, for instance, uses AI to reduce the time it takes to resolve air traveler complaints by automatically categorizing and routing issues to the correct department. It also employs image analytics for early detection of emerging technological threats by scanning global patent filings and research papers.

In the realm of health, the impact is even more profound. Utilizing a defense health data platform allows for the integration of clinical, environmental, and genomic data, moving us closer to the goal of precision medicine for service members. Furthermore, the Veterans Affairs department has explored using algorithms to identify veterans at the highest risk of suicide by analyzing patterns in medical records and pharmacy data, allowing for life-saving clinical interventions before a tragedy occurs.

These tools don’t just process data; they learn from it. Machine learning can predict when critical infrastructure like power grids, bridges, or water treatment plants might fail. By using “Digital Twins”—virtual replicas of physical assets fed by real-time sensor data—agencies can simulate various stress scenarios. This predictive maintenance can extend asset lifespans by 20-40% and reduce unplanned downtime by half, ensuring that the nation’s backbone remains resilient against both natural wear and tear and cyber-physical attacks.
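A very simple form of predictive maintenance is trend extrapolation: fit a line to recent sensor readings and estimate when it will cross a failure threshold. The sketch below does this with a plain least-squares fit. The vibration readings and the threshold are invented, and production systems would use far richer models than a straight line.

```python
def fit_line(xs, ys):
    """Ordinary least-squares fit; returns (slope, intercept)."""
    n = len(xs)
    x_mean = sum(xs) / n
    y_mean = sum(ys) / n
    sxy = sum((x - x_mean) * (y - y_mean) for x, y in zip(xs, ys))
    sxx = sum((x - x_mean) ** 2 for x in xs)
    slope = sxy / sxx
    return slope, y_mean - slope * x_mean

def hours_until_threshold(hours, readings, threshold):
    """Estimate operating hours left before the fitted trend reaches
    `threshold`. Returns None if the readings are not trending upward."""
    slope, intercept = fit_line(hours, readings)
    if slope <= 0:
        return None
    crossing_hour = (threshold - intercept) / slope
    return max(0.0, crossing_hour - hours[-1])

# Hypothetical pump vibration readings (mm/s) at cumulative operating hours.
hours = [0, 100, 200, 300, 400]
vibration_mm_s = [2.0, 2.5, 3.0, 3.5, 4.0]

remaining = hours_until_threshold(hours, vibration_mm_s, threshold=7.0)
```

With these made-up readings the trend reaches the threshold roughly 600 operating hours after the last measurement, which gives a maintenance crew a window to schedule a repair instead of reacting to a failure.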

Furthermore, the Department of Energy (DOE) is leveraging supercomputing and AI to optimize the national grid, balancing the intermittent nature of renewable energy sources like wind and solar with traditional baseload power. This level of complex, multi-variable optimization is impossible for human operators alone but is perfectly suited for advanced data analytics.

Close the Maturity Gap: Why Federal Agencies Score Just 36 out of 100

Despite the clear benefits, there is a significant hurdle: the maturity gap. According to the Federal Data and Digital Maturity Index (FDDMI), U.S. agencies are currently “literate” but not yet “leaders.” This means that while the importance of data is recognized, it is not yet fully integrated into the daily DNA of every department.

| Metric | Federal Agency Score (0-100) | Global Public Sector | Target (5 Years) |
|---|---|---|---|
| Overall Maturity | 36 | 47 | 75 |
| Human Capital | 28 | – | 67 |
| Mission & Strategy | 42 | – | 80 |
| Data Governance | 31 | – | 70 |
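To put the table in perspective, the short calculation below works out how many points each metric must gain to reach its five-year target, and what that implies per year. The figures are copied from the table. Spreading the improvement evenly across five years is a simplifying assumption, not something the index prescribes.

```python
# (current score, five-year target) taken from the table above.
scores = {
    "Overall Maturity": (36, 75),
    "Human Capital": (28, 67),
    "Mission & Strategy": (42, 80),
    "Data Governance": (31, 70),
}

YEARS = 5  # assumes improvement is spread evenly over the target period

# Points still needed to reach each target.
gap_to_target = {
    metric: target - current for metric, (current, target) in scores.items()
}

# Average gain required per year under the even-spread assumption.
points_per_year = {
    metric: gap / YEARS for metric, gap in gap_to_target.items()
}
```

Every metric needs to gain 38 or 39 points, or a little under 8 points a year, which means roughly doubling the current overall score within the target period.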

The lowest scores often fall under “Human Capital” and “Reimagining Government.” Only 12% of governments in some regions have a dedicated career track for data analysts, making it difficult to recruit and retain the talent needed to run complex AI systems. Federal agencies find themselves in a bidding war with Silicon Valley for data scientists, and they frequently lose out because of rigid pay scales and slower hiring processes.

Beyond talent, the technical debt of legacy systems is a massive anchor. Many agencies still rely on mainframe systems and programming languages like COBOL that date back to the 1970s. These systems create “data silos,” where information is trapped in formats that modern analytics tools cannot read.

Furthermore, FedRAMP compliance and data security remain top concerns. In fact, 62% of government organizations cite privacy and security as their primary constraint. To bridge this gap, agencies must move away from siloed, fragmented systems and embrace interoperability frameworks. This requires a cultural shift as much as a technical one—moving from a culture of “data ownership” to one of “data stewardship,” where sharing information across agency lines is the default, not the exception. The Foundations for Evidence-Based Policymaking Act (the Evidence Act) is a critical step here, mandating that agencies appoint Chief Data Officers (CDOs) and create comprehensive data inventories.

Stop Building Vulnerable Databases: The Secure Federated Data Path

The future of federal data isn’t about building one massive, vulnerable database that acts as a single point of failure for hackers. It’s about building a federated data ecosystem. This approach aligns with the Federal Data Strategy and the FAIR principles (Findable, Accessible, Interoperable, and Reusable), ensuring that data can be analyzed where it lives without compromising security.

In a federated model, the data stays behind the agency’s firewall. Only the analytical queries or the AI models travel to the data. This is particularly vital for sensitive areas like national security and public health. For instance, the NIAID (National Institute of Allergy and Infectious Diseases) can collaborate with international partners on pandemic research without ever moving sensitive patient records across borders.
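The pattern can be illustrated with a toy example in which the query travels to each node and only aggregate results come back. The `AgencyNode` class, the record fields, and the sample data are hypothetical and stand in for what would be secured, audited infrastructure in practice.

```python
class AgencyNode:
    """Stands in for one agency's environment. Records stay inside it."""

    def __init__(self, name, records):
        self.name = name
        self._records = records  # never returned to the caller directly

    def run(self, query):
        # The query is executed locally; only its return value leaves the node.
        return query(self._records)

def local_summary(records):
    """Runs inside each node and returns aggregates, not individual rows."""
    ages = [r["age"] for r in records if r["positive"]]
    return {"n": len(ages), "sum_age": sum(ages)}

def federated_mean_age(nodes):
    """Combine per-node aggregates into a global mean age of positive cases."""
    partials = [node.run(local_summary) for node in nodes]
    n = sum(p["n"] for p in partials)
    total = sum(p["sum_age"] for p in partials)
    return total / n if n else None

# Made-up patient records held by two separate organizations.
node_a = AgencyNode("agency_a", [
    {"age": 30, "positive": True},
    {"age": 40, "positive": False},
    {"age": 50, "positive": True},
])
node_b = AgencyNode("agency_b", [
    {"age": 70, "positive": True},
])

mean_age = federated_mean_age([node_a, node_b])
```

The coordinating code only ever sees two small dictionaries of counts and sums. A real deployment would also restrict which queries a node is willing to run, since an unrestricted query could still return raw rows.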

Strategic priorities for the coming years include:

- Indigenous Data Sovereignty: Respecting the rights of Tribal Nations and indigenous communities to design, manage, and control their own data programs. This ensures that data collection benefits the community and respects cultural sensitivities.

- Open Science Incentives: Encouraging the sharing of federally funded research data to accelerate innovation in energy, climate, and health. By making data “open by default,” the government can catalyze private sector breakthroughs.

- Privacy-Enhancing Technologies (PETs): Investing in technologies like differential privacy and homomorphic encryption. These allow agencies to extract statistical insights from datasets without ever seeing the underlying individual records, providing a mathematical guarantee of privacy.

- Energy-Efficient Computing: As AI models like GPT-3 consume massive amounts of power, the government is prioritizing R&D into sustainable, “green” data centers. This is essential for meeting federal sustainability goals while still scaling up computational power.

- Global Leadership: Partnering with international stakeholders to set standards for ethical AI and data integrity. As the world’s largest data producer, the U.S. federal government has a unique responsibility to lead the development of global norms for the responsible use of AI in governance.
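For the Privacy-Enhancing Technologies item above, the sketch below shows the Laplace mechanism, one standard way to achieve differential privacy for a counting query. The dataset, the `epsilon` value, and the function names are assumptions for illustration. In this simplified version the code that adds the noise still reads the raw records, and it is the analyst receiving the result who sees only the noisy count.

```python
import random

def laplace_noise(scale, rng):
    """Sample Laplace(0, scale) noise as the difference of two
    independent exponential draws with rate 1/scale."""
    return rng.expovariate(1 / scale) - rng.expovariate(1 / scale)

def private_count(records, predicate, epsilon, rng):
    """Return a differentially private count of records matching `predicate`.

    Adding or removing one person changes a count by at most 1
    (sensitivity 1), so the noise scale is 1 / epsilon.
    """
    true_count = sum(1 for r in records if predicate(r))
    return true_count + laplace_noise(1 / epsilon, rng)

# Hypothetical enrollment records: every third person is enrolled.
records = [{"enrolled": i % 3 == 0} for i in range(300)]

rng = random.Random(42)  # seeded only so the example is repeatable
noisy = private_count(records, lambda r: r["enrolled"], epsilon=1.0, rng=rng)
```

A smaller `epsilon` adds more noise and gives stronger privacy, while a larger one gives more accurate counts. Choosing that trade-off is a policy decision as much as a technical one.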

Stop the Confusion: Your Federal Data Analytics Questions Answered

What is the Federal Data Strategy?

The Federal Data Strategy is a comprehensive framework released by the Office of Management and Budget (OMB). It provides a mission statement, core principles, and a set of 40 practices to guide agencies through 2030. Unlike previous initiatives, it includes annual Action Plans that set specific, measurable goals for agencies, such as creating data inventories and improving data skills among the workforce. Its goal is to treat data as a strategic national asset, ensuring it is managed ethically and used to drive evidence-based decisions across the entire government.

How does AI improve government services?

AI improves services by making them more responsive, accurate, and personalized. Chatbots and virtual assistants can answer citizen queries 24/7, reducing wait times at call centers. Predictive maintenance algorithms ensure that public infrastructure—like water supplies and transport grids—remains operational, preventing costly emergency repairs. In Singapore, AI is even used to send school enrollment reminders and suggest career paths based on labor market data, ensuring no citizen falls through the cracks. In the U.S., AI is being used to speed up the processing of patent applications and veteran benefit claims, reducing backlogs that have persisted for years.

What are the biggest challenges in federal data adoption?

The primary challenges are privacy concerns, security risks, and a lack of strategic alignment. Many agencies struggle with “data silos,” where information is trapped in legacy systems that can’t communicate with modern analytics tools. Additionally, there is a significant skills gap; agencies need more trained data scientists and a culture that embraces data-driven experimentation. There is also the challenge of “algorithmic bias”—ensuring that the AI models used by the government do not inadvertently discriminate against certain demographic groups. Overcoming these requires robust data governance and a commitment to transparency.

How does the Evidence Act impact data analytics?

The Foundations for Evidence-Based Policymaking Act of 2018 (Evidence Act) is a landmark piece of legislation. It requires agencies to build evidence about their programs through rigorous evaluation and data analysis. It mandates the appointment of a Chief Data Officer (CDO), an Evaluation Officer, and a Statistical Official at every major agency. This creates a formal leadership structure for data, ensuring that analytics is not just a side project but a core component of agency strategy. It also encourages the sharing of data between agencies for research purposes, provided that strict privacy protections are in place.

Stop Guessing: Build a Data-Driven Federal Agency Today

Whether data analytics matters for the federal government is no longer a question of “if,” but “how fast.” The transition from “literate” to “leader” requires more than just new software; it requires a new way of thinking about data as the lifeblood of modern democracy.

At Lifebit, we are committed to this transformation. We provide a next-generation federated AI platform that enables secure, real-time access to global biomedical and multi-omic data. Our technology helps federal agencies overcome data silos and workforce gaps while maintaining the highest levels of compliance and security. Whether you are looking for solutions for government or want to learn how to build a Federated Data Platform, we are here to help you turn your agency’s data into its most powerful strategic asset.

Let’s build a more efficient, transparent, and data-driven future together.