How Tech is Taking the Trial out of Clinical Trials

Why Data and Technology in Clinical Trials Is Broken — And How It’s Being Fixed

Data and technology in clinical trials are at a turning point. For decades, the promise has been the same: faster drug development, less burden on patients, smarter decisions. But one bottleneck has quietly undermined nearly every advance — the inability to access high-quality, usable data when it actually matters.

Here is what that looks like in practice:

| Problem | Impact |

|---|---|

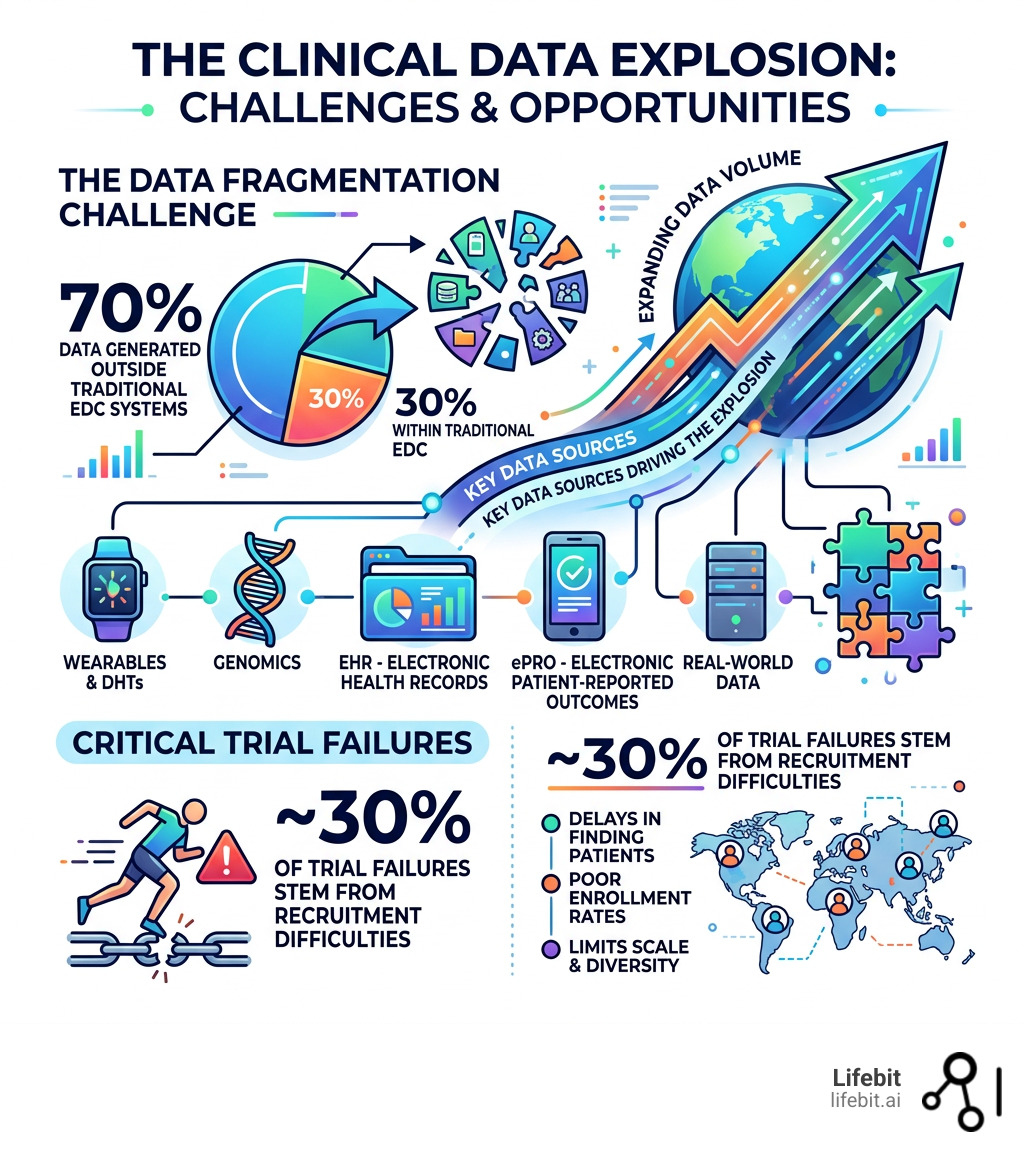

| ~70% of trial data generated outside traditional EDC systems | Data is fragmented across genomics, wearables, ePRO, and real-world sources |

| Recruitment difficulties | Cause ~30% of all study failures |

| Fewer than 10% of U.S. patients enroll in a trial | Limits scale, diversity, and generalizability |

| Data in incompatible formats | Delays analysis, submissions, and decisions |

| Rising trial complexity | Cycle times up 30%+, costs averaging $2.6B per approved drug |

The gap between the data that exists and the data that gets used is where trials fail.

The good news? That gap is finally closing. A wave of converging forces — digital health technologies (DHTs), agentic AI, evolving regulatory frameworks, and new data standards — is reshaping how clinical trials are designed, run, and analyzed.

This guide breaks down exactly how.

I’m Maria Chatzou Dunford, CEO and Co-founder of Lifebit, with over 15 years in computational biology, federated AI, and biomedical data platforms. I’ve spent my career working at the intersection of data and technology in clinical trials, from genomic workflow tools to enterprise-scale federated research environments. In the sections ahead, I’ll walk you through what’s actually changing, what still needs to change, and what the most forward-thinking sponsors and regulators are doing right now.

The Data Explosion: Why 70% of Trial Data Lives Outside EDC

Remember when clinical trials were simple? A patient walked into a clinic, a coordinator wrote numbers on a paper Case Report Form (CRF), and eventually, someone typed that into an Electronic Data Capture (EDC) system. Those days are gone. Today, a single Phase III trial can generate 3.6 million data points—three times more than just 15 years ago. This “data tsunami” is driven by the shift from episodic data collection (monthly clinic visits) to continuous monitoring.

The real challenge we face is that nearly 70% of trial data is now generated outside traditional EDC systems. We are witnessing a flood of information from diverse, high-volume sources that require a new approach to Clinical Trial Data Management:

- Genomics and Multi-omics: High-resolution biological data, including transcriptomics and proteomics, provides a molecular-level view of drug response. However, the raw data for a single whole-genome sequence can exceed 100GB, which makes conventional file transfer impractical at trial scale.

- Wearables and Sensors: Continuous streams of heart rate, sleep, and activity data provide “digital biomarkers” that capture the patient’s experience in the real world, rather than just a sterile clinic environment.

- ePRO (Electronic Patient-Reported Outcomes): Real-time symptoms reported via smartphones reduce recall bias, ensuring that side effects are captured as they happen.

- Real-World Evidence (RWE): Data from Electronic Health Records (EHRs) and insurance claims allow researchers to see how drugs perform in diverse, non-trial populations.

This diversity is a double-edged sword. While it offers a richer picture of patient health, it often results in “data silos”: disconnected systems where formats are incompatible and insights are buried. According to scientific research on digital health growth, adoption of digital health technologies (DHTs) has risen sharply; in neurology trials, for example, their use jumped from 0.7% in 2010 to over 11% by 2020. The industry is currently struggling with the “Four Vs” of big data: Volume (the sheer amount), Velocity (the speed of incoming sensor data), Variety (the different formats), and Veracity (the reliability of the data). Without end-to-end standards, sponsors spend more time “cleaning” data than actually analyzing it, leading to significant delays in bringing life-saving therapies to market.

Modernizing Operations with Data and Technology in Clinical Trials

The shift toward Clinical Research Technology isn’t just about having more data; it’s about changing how we interact with patients. Over the last decade, DHTs like wearables and smartphones have evolved from “interesting experiments” to essential operational tools that reduce the “geographic tax” on participants.

Take the Apple Heart Study, which enrolled roughly 420,000 participants entirely through an app, or the mPower study, which used smartphone sensors to track Parkinson’s symptoms for over 9,000 participants. These aren’t just big numbers; they represent a fundamental shift in how we approach Clinical Trial Patient Recruitment. By removing the need for physical site visits, these studies reached a scale that would have been impossible using traditional methods.

Decentralized Trials: Solving the 10% Enrollment Crisis

The traditional “site-based” model is failing. Currently, fewer than 10% of patients in the U.S. ever enroll in a clinical trial. Why? Because participating often requires traveling long distances to a specific hospital during work hours. Research shows that 70% of potential participants live more than two hours away from the nearest study site, creating a massive barrier to entry for rural and low-income populations.

Decentralized Clinical Trials (DCTs) use technology to bring the trial to the patient. By utilizing virtual visits, local pharmacies for lab work, and remote monitoring, we can reach underserved populations who were previously excluded. The impact of this shift was highlighted during COVID-19, when the number of Medicare beneficiaries receiving telehealth services each week rose from about 13,000 before the pandemic to nearly 1.7 million by late April 2020. This isn’t just a pandemic trend; it’s the new standard for Remote Clinical Trial Monitoring. Hybrid models, which combine occasional site visits with remote data collection, are becoming the preferred choice for sponsors looking to balance data quality with patient convenience.

Regulatory Support for Data and Technology in Clinical Trials

Regulators are no longer the bottleneck—they are often the ones leading the charge. The FDA’s PDUFA VII commitments and the Real-Time Oncology Review (RTOR) are game-changers. RTOR, for instance, allows the FDA to evaluate data incrementally as it becomes available, rather than waiting for a massive “data dump” at the end of a study. This iterative process allows for earlier identification of safety signals and faster approvals.

As noted in the FDA Real-Time Oncology Review framework, this flexibility speeds up the submission process significantly. Regulators are actively encouraging sponsors to use Real World Data in Clinical Research to support their applications, provided the data meets high standards of reliability and quality. This includes using EHR data to create “synthetic control arms,” which can reduce the number of patients who need to be assigned to a placebo group.

The Four Shifts Enabling Seamless Data Flow

To achieve what we call “Data at the Speed of Light,” the industry is undergoing four major shifts. We are moving away from manual, “paper-on-glass” processes toward a standards-first architecture that prioritizes interoperability from the moment a data point is generated.

| Feature | Traditional Pipeline | Standards-First Pipeline |
|---|---|---|
| Data Capture | Manual entry into EDC | Automated ingestion from DHTs/EHR |
| Data Cleaning | Batch processing (weeks/months) | Real-time AI-driven validation |
| Interoperability | Custom mappings per study | CDISC/USDM standards from day one |
| Insights | Static reports | Live Clinical Trial Data Analytics |

These shifts are powered by Metadata Repositories (MDR), which act as a “single source of truth” for data definitions. By standardizing metadata, sponsors can ensure that a “heart rate” measurement from an Apple Watch is recorded under the same definition and units as one from a clinical-grade EKG. This is essential for meeting CDISC standards like SDTM (Study Data Tabulation Model) and ADaM (Analysis Data Model), which are required for regulatory submissions.
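To make the heart rate example concrete, here is a minimal sketch of how a metadata repository lookup might work. Everything in it is an assumption for illustration: the `VariableDefinition` class, the `SOURCE_MAPPINGS` table, the source and field names, and the unit factors are hypothetical, and it is not a validated SDTM mapping.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class VariableDefinition:
    """One standardized entry in a hypothetical metadata repository."""
    code: str   # standardized short code for the measurement
    label: str  # human-readable name
    unit: str   # canonical unit every source is converted to


# Illustrative definition; a real MDR would hold governed, versioned entries.
HEART_RATE = VariableDefinition(code="HR", label="Heart Rate", unit="beats/min")

# Hypothetical source mappings: (source, field) -> (definition, factor to canonical unit).
SOURCE_MAPPINGS = {
    ("smartwatch", "heartRateBpm"): (HEART_RATE, 1.0),
    ("ecg", "hr_hz"): (HEART_RATE, 60.0),  # beats per second -> beats per minute
}


def standardize(source: str, field: str, value: float) -> dict:
    """Convert one raw reading into the shared definition and unit."""
    definition, factor = SOURCE_MAPPINGS[(source, field)]
    return {
        "code": definition.code,
        "label": definition.label,
        "value": value * factor,
        "unit": definition.unit,
        "source": source,  # keep provenance so the device type stays traceable
    }


watch_reading = standardize("smartwatch", "heartRateBpm", 72.0)
ecg_reading = standardize("ecg", "hr_hz", 1.2)
```

Both readings come out with the same code and unit, so downstream analysis can treat them as one variable while still knowing which device produced each value.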

Implementing Agentic AI and Data and Technology in Clinical Trials

We are moving beyond simple chatbots. Agentic AI refers to AI systems that can actually do work—ingesting multimodal data, tagging it, and identifying safety signals without human intervention. Unlike traditional Generative AI, which might just summarize a document, Agentic AI has the “agency” to execute complex workflows. For example, an AI agent can monitor incoming sensor data, detect an anomaly that suggests an adverse event, and automatically trigger a notification to the medical monitor while simultaneously drafting the necessary regulatory report.
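The monitoring workflow described above can be sketched in a few lines. This is a simplified illustration, not a production safety system: the trailing-window z-score rule, the threshold, the `run_agent_step` function, and the report fields are all assumptions chosen for the example, and the draft is explicitly left for a medical monitor to review.

```python
from statistics import mean, stdev


def detect_anomalies(readings, window=5, z_threshold=3.0):
    """Return indices whose value deviates from the trailing-window mean
    by more than z_threshold standard deviations."""
    flagged = []
    for i in range(window, len(readings)):
        baseline = readings[i - window:i]
        mu, sigma = mean(baseline), stdev(baseline)
        if sigma > 0 and abs(readings[i] - mu) / sigma > z_threshold:
            flagged.append(i)
    return flagged


def run_agent_step(participant_id, readings, notify):
    """One pass of a hypothetical agent: detect, draft a report, notify.

    `notify` is any callable that delivers the draft to the medical monitor.
    """
    flagged = detect_anomalies(readings)
    if not flagged:
        return None
    draft = {
        "participant_id": participant_id,
        "flagged_indices": flagged,
        "flagged_values": [readings[i] for i in flagged],
        "status": "DRAFT: pending medical monitor review",
    }
    notify(draft)
    return draft


# Example: heart rate stream with one sudden spike.
outbox = []
report = run_agent_step("P-001", [70, 72, 71, 69, 70, 71, 140, 72], outbox.append)
```

Passing `notify` in as a parameter keeps the detection logic separate from the delivery channel, so the same step could post to a queue, an email service, or a safety database.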

When we use AI for Clinical Trials, we aren’t just replacing people; we are empowering them. Instead of spending 80% of their time on manual data entry and reconciliation, clinical teams can focus on patient safety and scientific discovery. This is the core of AI Clinical Trials, where technology acts as a force multiplier for human expertise, reducing cycle times and lowering the astronomical costs of drug development.

Overcoming Ethical Hurdles and the Digital Divide

Innovation must not come at the cost of equity. As we lean more on data and technology in clinical trials, we have to be careful not to widen the “digital divide.” If a trial requires a high-speed internet connection and a $1,000 smartphone, we are effectively excluding a huge portion of the global population, particularly those in lower socioeconomic brackets or developing nations.

Ethical challenges include:

- Data Quality and Bias: Ensuring that a wearable’s “step count” or “oxygen saturation” reading is as accurate for a patient who uses a walker, and for patients across skin tones, as it is for a marathon runner. AI models trained on non-diverse data can perpetuate health inequities.

- Privacy and Sovereignty: Using Trusted Research Environments to ensure that sensitive patient data is never exposed. This is particularly important for genomic data, which is inherently identifiable.

- Authentication and Compliance: Using biometric recognition (like fingerprint or iris scans) to ensure the person wearing the device is actually the trial participant, preventing “proxy” data entry.

According to scientific research on the digital divide, we must proactively provide devices and data plans to participants to ensure our trials remain representative of the real world. Furthermore, we must address the “Black Box” problem of AI. For regulators to trust AI-driven insights, the systems must be explainable (XAI). We need to know why an AI flagged a specific patient as a safety risk, not just that it did. This transparency is the foundation of ethical clinical research in the digital age.

Future Trends: Digital Twins and Real-Time Insights

What does the next five years look like? We are moving toward Digital Twins—virtual models of patients built from their own biological, clinical, and lifestyle data. In early-phase trials, a digital twin can help predict how a patient might react to a new drug based on their unique genetic profile, allowing researchers to adjust dosages before a single pill is taken. This moves us from “population-based” medicine to truly “personalized” medicine.

Initiatives like the Million Veteran Program and the All of Us study are already building the massive, longitudinal datasets needed to fuel these models. These datasets allow us to see how diseases progress over decades, providing a baseline for what “normal” looks like for different demographics. When we combine this with The Future of Clinical Trial Technology, we see a world where trials are:

- Adaptive: Changing in real-time based on incoming data. If a drug shows high efficacy in a specific sub-group, the trial can be automatically adjusted to enroll more of those patients.

- Personalized: Tailoring treatments to a patient’s specific genetic profile, reducing the “trial and error” approach to prescribing.

- Smarter: Using Clinical Trial Technology Trends like federated learning to analyze data across borders. Federated learning allows AI models to be trained on data at different hospitals without the data ever leaving its original location, solving the problem of data residency and privacy laws.

As discussed in scientific research on health digital twins, these technologies promise to turn clinical research into a high-precision engineering discipline. We are entering an era where we can simulate trial outcomes before they happen, significantly reducing the risk of late-stage failures and ensuring that only the most promising candidates move forward into human testing.

Frequently Asked Questions about Clinical Trial Tech

What are the main challenges in accessing high-quality trial data?

The primary issues are fragmentation and lack of standards. With 70% of data coming from non-traditional sources like wearables and EHRs, it often arrives in different formats (imaging, text, sensor data) that don’t “talk” to each other. This creates “data silos” that require manual reconciliation. Solving this requires a standards-first approach, such as the one outlined in the Clinical Trial Data Management Complete Guide, to ensure data is FAIR (Findable, Accessible, Interoperable, and Reusable).

How do digital health technologies improve patient recruitment?

DHTs remove the “geographic tax” on participation. By using AI Clinical Trial Recruitment, sponsors can identify eligible patients through EHR data and allow them to participate from home via decentralized models. This can double enrollment rates, reduce drop-out rates by making participation less burdensome, and significantly increase participant diversity by reaching beyond major urban medical centers.

What is an agentic blueprint for clinical trials?

An agentic blueprint is a strategic roadmap for implementing AI that can perform autonomous tasks rather than just generating text. It starts with diagnosing data maturity and then deploying AI “agents” to handle specific, high-value workflows—like automatically generating Case Report Forms, mapping raw data to SDTM standards, or monitoring for adverse events in real-time. This blueprint ensures that AI is integrated into the trial’s DNA rather than being a bolt-on tool.

How does federated learning protect patient privacy?

Federated learning is a decentralized machine learning technique. Instead of moving sensitive patient data to a central server (which creates security risks), the AI model is sent to the data. The model “learns” from the data locally at the hospital or research center and then sends only the updated model weights back to the central system. This ensures that the raw patient data never leaves its secure environment, complying with strict regulations like GDPR and HIPAA.
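For readers who want to see the mechanics, here is a toy sketch of federated averaging. It assumes a one-parameter model (`y = w * x`) and made-up site data; the function names, learning rate, and round counts are illustrative choices, and real deployments typically layer on protections such as secure aggregation that are not shown here.

```python
def local_update(w, data, lr=0.01, epochs=20):
    """Train on one site's private data and return only the updated weight."""
    for _ in range(epochs):
        # Gradient of mean squared error for the model y = w * x.
        grad = sum(2 * (w * x - y) * x for x, y in data) / len(data)
        w -= lr * grad
    return w


def federated_average(updates):
    """Combine site weights, weighting each by its number of samples.

    `updates` is a list of (weight, n_samples) pairs; no raw records appear.
    """
    total = sum(n for _, n in updates)
    return sum(w * n for w, n in updates) / total


def train(sites, rounds=50):
    """Send the global weight to every site, then average what comes back."""
    global_w = 0.0
    for _ in range(rounds):
        updates = [(local_update(global_w, data), len(data)) for data in sites]
        global_w = federated_average(updates)
    return global_w


# Two hypothetical hospitals whose data follow y = 2x; the data stay local.
hospital_a = [(1.0, 2.0), (2.0, 4.0), (3.0, 6.0)]
hospital_b = [(4.0, 8.0), (5.0, 10.0)]
learned_w = train([hospital_a, hospital_b])
```

The only values that cross the site boundary are the weight sent out and the weight and sample count sent back, which is the property the answer above relies on.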

Conclusion

The “trial” in clinical trials shouldn’t refer to the difficulty of managing data. By embracing the latest in data and technology in clinical trials, we can finally move toward a model where the data flows as fast as the science.

At Lifebit, we are building the infrastructure to make this possible. Our next-generation federated AI platform provides secure, real-time access to global biomedical and multi-omic data. Whether it’s through our Trusted Research Environment (TRE), our Trusted Data Lakehouse, or our R.E.A.L. (Real-time Evidence & Analytics Layer), we enable sponsors to collaborate securely across hybrid data ecosystems.

We don’t just move data; we move insights. By Celebrating the Role of Data in Clinical Trials, we are helping the world’s leading biopharma companies and public health agencies turn complex data into the life-saving treatments of tomorrow.

Ready to modernize your data pipeline? Explore the Lifebit Platform or read our Clinical Trial Complete Guide to learn more.