The Secret Sauce for GDPR Compliant Research Environments

Why GDPR Compliance Is Non-Negotiable for Research Organizations

GDPR compliant research environments are secure, controlled digital workspaces that enable researchers to analyze sensitive health, genetic, and clinical data while meeting EU data protection requirements—including lawful processing bases, technical safeguards like encryption and pseudonymization, and strict governance protocols for data access, retention, and breach response.

Key requirements for establishing GDPR compliant research environments:

- Lawful basis for processing – Identify appropriate legal grounds (public interest, legitimate interests, or explicit consent) before collecting or analyzing personal data

- Technical safeguards – Implement AES-256 encryption, pseudonymization, multi-factor authentication, and attribute-based access controls

- Data Protection Impact Assessments (DPIAs) – Conduct mandatory risk assessments for large-scale processing of health, genetic, or biometric data

- Trusted Research Environments (TREs) – Deploy secure workspaces that keep data centralized and prevent unauthorized extraction or movement

- Breach response protocols – Establish 72-hour notification procedures and maintain comprehensive audit trails of all data access and processing activities

The stakes are high. GDPR fines can reach €20 million or 4% of global annual revenue—whichever is higher. In the first 20 months after GDPR enforcement, major companies like Google and Facebook faced penalties totaling over €114 million, and over 160,000 data breach notifications were reported across the EU. For research organizations handling genetic, clinical trial, or population health data, non-compliance doesn’t just risk financial penalties—it threatens participant trust, collaborative partnerships, and the ability to conduct cross-border studies.

Yet compliance isn’t just about avoiding fines. When done right, GDPR principles like data minimization, purpose limitation, and privacy by design actually accelerate research by building trust, enabling secure data sharing, and creating repeatable, auditable workflows. Organizations that treat GDPR as a checkbox exercise struggle. Those that embed it culturally—through federated architectures, Trusted Research Environments, and clear governance—open up faster insights while protecting the rights of data subjects.

I’m Maria Chatzou Dunford, CEO and Co-founder of Lifebit, where we’ve spent over a decade building federated AI platforms that power GDPR compliant research environments for public health agencies and pharmaceutical companies analyzing genomic and clinical data at scale. Our work with secure, in situ analytics has shown that compliance and innovation aren’t trade-offs—they’re two sides of the same coin.

The Core Pillars of GDPR Compliant Research Environments

Building GDPR compliant research environments isn’t just about a fancy firewall; it’s about baking seven core principles into your organization’s DNA. Think of these as the “unbreakable rules” of the data playground. These principles, set out in Article 5 of the GDPR, are lawfulness, fairness and transparency; purpose limitation; data minimization; accuracy; storage limitation; integrity and confidentiality; and accountability. Together they serve as the framework for all data processing activities within a research context.

First, we have data minimization. This means only collecting the data you absolutely need for your specific research question. If you’re studying lung capacity, you probably don’t need the participant’s high school GPA or their voting record. In genomic research, this is particularly challenging; researchers must justify why full-genome sequencing is necessary versus targeted exome sequencing. Next is purpose limitation—you can’t collect data for a cancer study and then secretly use it to sell life insurance or share it with marketing firms. The data must be used for the specific, documented scientific purpose for which it was originally gathered.

Then there’s storage limitation. Personal data shouldn’t hang around forever like that one guest who won’t leave your party. Once the research is done, the data must be deleted or anonymized unless there’s a specific legal reason to keep it, such as longitudinal study requirements or statutory clinical trial retention periods (which can often be 25 years for certain clinical data). We also must ensure integrity and confidentiality (keeping data safe from hackers and accidents) and accuracy (making sure the data isn’t wrong, which is vital for scientific validity).

Finally, the big one: accountability. It’s not enough to follow the rules; you have to prove you’re following them. This involves keeping detailed records (the ICO’s “Principles and grounds for processing” guidance covers what is expected), including logs of who accessed what data and when. For a deeper dive into how these apply to the lab, check out our guide on data privacy research.
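To make “logs of who accessed what data and when” concrete, here is a minimal sketch of a tamper-evident access log. It is an illustration, not a description of any particular product: the function names (`append_entry`, `verify_chain`) and the field layout are assumptions, and a production system would also need secure storage and access controls around the log itself.

```python
import hashlib
import json
from datetime import datetime, timezone

GENESIS_HASH = "0" * 64  # placeholder "previous hash" for the first entry


def _digest(body):
    """Hash the entry body in a stable key order."""
    return hashlib.sha256(json.dumps(body, sort_keys=True).encode()).hexdigest()


def append_entry(log, user, dataset, action, when=None):
    """Append an access record whose hash also covers the previous entry's hash."""
    when = when or datetime.now(timezone.utc)
    body = {
        "user": user,
        "dataset": dataset,
        "action": action,
        "timestamp": when.isoformat(),
        "prev_hash": log[-1]["hash"] if log else GENESIS_HASH,
    }
    log.append({**body, "hash": _digest(body)})
    return log[-1]


def verify_chain(log):
    """Return True if every entry matches its own hash and links to the entry before it."""
    prev_hash = GENESIS_HASH
    for entry in log:
        body = {k: v for k, v in entry.items() if k != "hash"}
        if entry["prev_hash"] != prev_hash or entry["hash"] != _digest(body):
            return False
        prev_hash = entry["hash"]
    return True


# Hypothetical users and dataset names, for illustration only.
audit_log = []
append_entry(audit_log, "dr.smith", "cohort-A", "read")
append_entry(audit_log, "dr.jones", "cohort-A", "export-request")
```

Because each entry’s hash depends on the one before it, editing an earlier record breaks `verify_chain` for the whole log, which is the property an auditor cares about.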

Lawful Bases for Processing Health and Genetic Data

Before we even touch a single byte of data, we need a “hall pass”—a lawful basis for processing. Under GDPR, there are six general bases, but for research, three are the most common:

- Public Interest: Often used by universities or government agencies (like the NHS) where the research is considered a “task in the public interest.” This is frequently cited in large-scale population health studies.

- Legitimate Interests: Used by private companies when the research benefit outweighs the privacy risk, provided a “balancing test” is documented. This requires a formal Legitimate Interests Assessment (LIA) to be performed and stored.

- Explicit Consent: This is the gold standard but can be tricky. Consent must be “freely given, specific, informed, and unambiguous.” In research, this often involves a multi-layered consent form where participants can opt-in to specific types of data use.

However, health and genetic information are “Special Category Data.” This means they get extra protection under Article 9. To process this, we usually rely on Article 9(2)(j), which specifically allows processing for scientific research purposes, provided appropriate safeguards are in place. It is important to distinguish between “consent” as a lawful basis for GDPR and “informed consent” as an ethical requirement under the Declaration of Helsinki or the Clinical Trials Regulation (CTR). You can read more about these research provisions to see which fits your project best.

Technical Measures for GDPR Compliant Research Environments

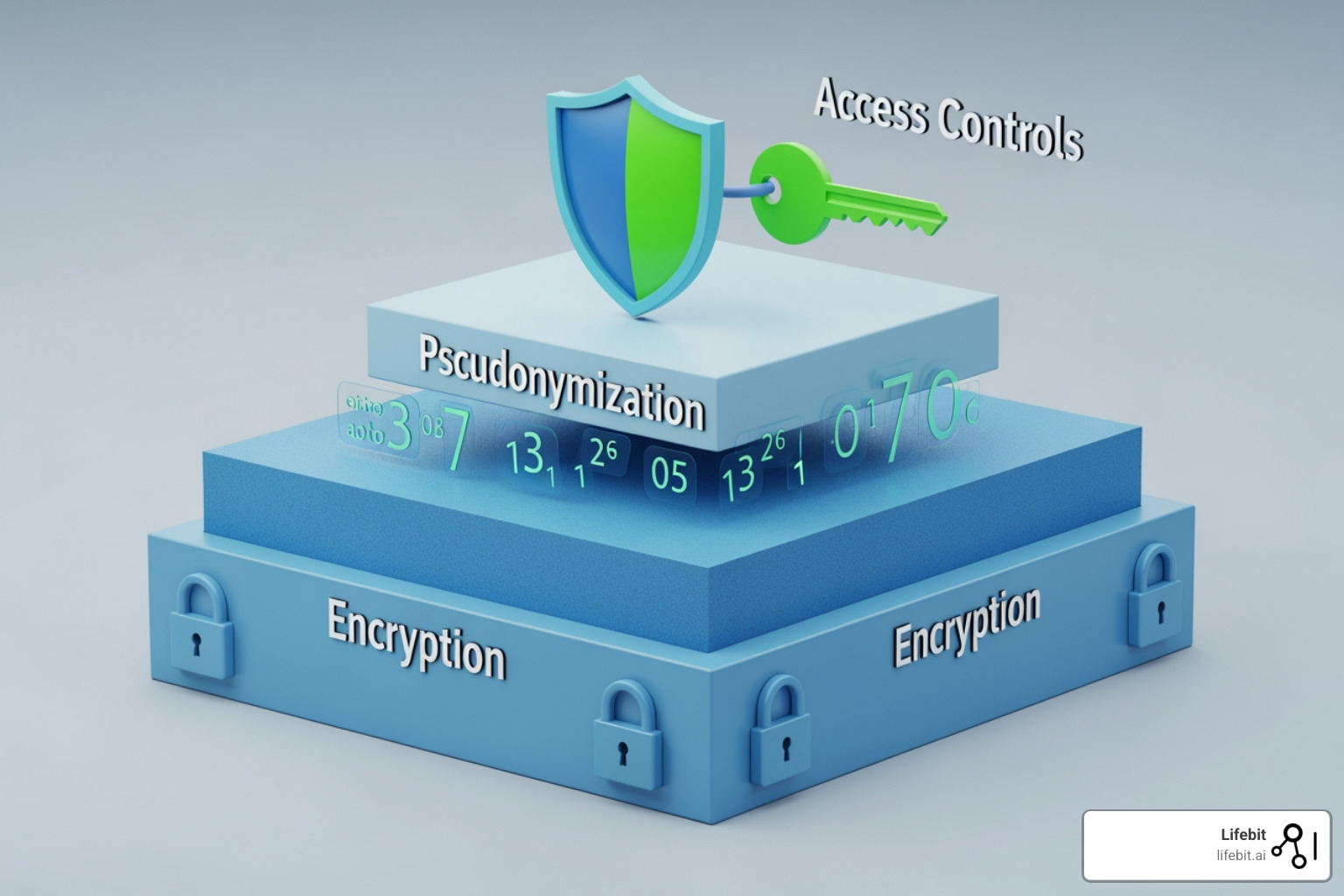

Now, let’s talk about the “techy” stuff. To keep GDPR compliant research environments secure, we use a “defense in depth” strategy, ensuring that if one layer fails, others remain to protect the data subject’s rights.

- AES-256 Encryption: This is the industry standard. We encrypt data both “at rest” (while it’s sitting on a hard drive) and “in transit” (while it’s moving across the internet). This ensures that even if a physical drive is stolen or a packet is intercepted, the data remains unreadable.

- Pseudonymization: This is a researcher’s best friend. We replace identifying info (like names or social security numbers) with a unique code or “token.” The data is still “personal” under GDPR because a “key” exists to link it back, but it drastically reduces risk. Unlike anonymization, pseudonymization allows for longitudinal tracking of a patient’s progress without exposing their identity to the analyst.

- Attribute-Based Access Control (ABAC): Instead of just giving someone a password, ABAC looks at who they are, where they are, and what they are trying to do. “Is this Dr. Smith? Is she on a secure hospital laptop? Is it during work hours? Does she have the ‘Genomics Researcher’ attribute?” If all conditions are met, she gets in. This is more fine-grained than traditional Role-Based Access Control (RBAC), which decides on the user’s role alone.

- Multi-Factor Authentication (MFA): Because “Password123” is not a security strategy. MFA requires at least two forms of identification (something you know, something you have, or something you are) before granting access.
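The “Dr. Smith” questions in the ABAC bullet can be sketched as a small policy function. This is a simplified illustration only: the attribute names, the working-hours window, and the `AccessRequest` shape are all assumptions, and real deployments usually express such rules in a dedicated policy engine rather than application code.

```python
from dataclasses import dataclass
from datetime import time


@dataclass(frozen=True)
class AccessRequest:
    user_attributes: frozenset   # e.g. {"genomics_researcher"} (hypothetical attribute name)
    device_managed: bool         # is the request coming from a managed, secure device?
    local_time: time             # time of day at the user's site
    dataset_sensitivity: str     # hypothetical label, e.g. "pseudonymized" or "identifiable"


def is_allowed(request: AccessRequest) -> bool:
    """Grant access only when every attribute condition holds."""
    checks = [
        "genomics_researcher" in request.user_attributes,
        request.device_managed,
        time(8, 0) <= request.local_time <= time(18, 0),  # assumed working hours
        request.dataset_sensitivity == "pseudonymized",
    ]
    return all(checks)


# Example: the right attribute, a managed laptop, mid-morning, pseudonymized data.
request = AccessRequest(frozenset({"genomics_researcher"}), True, time(10, 30), "pseudonymized")
decision = is_allowed(request)
```

The design point is that the decision depends on the whole context of the request, so the same researcher can be allowed in at 10:30 on a hospital laptop and refused at 23:00 on a personal one.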

Implementing these measures ensures you are handling GDPR compliant data with the respect it deserves.
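As a rough illustration of pseudonymization, the sketch below swaps a direct identifier for a keyed token using HMAC-SHA256 from Python’s standard library. Keyed hashing is one common technique, not necessarily the one any given platform uses; the field names (`national_id`, `name`) and the helper names are hypothetical, and the secret key would need to be stored separately from the research data.

```python
import hashlib
import hmac


def pseudonymize(identifier: str, secret_key: bytes) -> str:
    """Derive a stable token from an identifier; the same input and key give the same token."""
    return hmac.new(secret_key, identifier.encode("utf-8"), hashlib.sha256).hexdigest()


def pseudonymize_record(record, secret_key, id_field="national_id", drop_fields=("name",)):
    """Return a copy of the record with direct identifiers removed and a token added."""
    cleaned = {k: v for k, v in record.items() if k != id_field and k not in drop_fields}
    cleaned["participant_token"] = pseudonymize(record[id_field], secret_key)
    return cleaned


# Illustrative values only; in practice the key comes from a key management service.
key = b"replace-with-a-managed-secret"
record = {"name": "Ada Example", "national_id": "123-45-6789", "fev1_litres": 3.2}
safe_record = pseudonymize_record(record, key)
```

Because the token is stable, follow-up visits by the same participant still line up, which is the longitudinal tracking benefit mentioned above. Whoever holds the key can recompute a participant's token and link records back to them, which is why the output is still personal data.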

Implementing Trusted Research Environments (TREs) and the Five Safes

In the old days, researchers would “download” datasets to their own computers or local servers. In the GDPR era, that’s a nightmare waiting to happen, as it leads to “data sprawl” where sensitive information exists on dozens of unmanaged devices. Enter the Trusted Research Environment (TRE).

A TRE (sometimes called a Secure Data Environment or Data Safe Haven) is a secure digital box. The data stays inside the box, and we bring the researchers to the data. They can analyze it using built-in tools (like RStudio, Jupyter Notebooks, or SAS), but they can’t take the raw data home with them. To make this work, we use The Five Safes Framework, a globally recognized standard for data access:

- Safe People: Only researchers with the right training and credentials get in. This often involves mandatory GDPR training and institutional vetting of the researcher’s background.

- Safe Projects: The research must be ethical and for a valid purpose. Projects are reviewed by a Data Access Committee (DAC) to ensure the data use aligns with the original consent and public benefit.

- Safe Settings: The environment itself is locked down. This means no copy-pasting out of the environment, no unauthorized internet access, and no ability to upload external software that hasn’t been security-vetted.

- Safe Data: Data is pseudonymized or de-identified before the researcher sees it. This minimizes the risk of accidental re-identification during the analysis phase.

- Safe Outputs: Before results (like charts, tables, or model weights) leave the TRE, they are checked via a process called Statistical Disclosure Control (SDC). This ensures that no individuals can be re-identified from the aggregate results (e.g., ensuring a cell in a table doesn’t represent a single unique patient).
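The “Safe Outputs” check can be illustrated with the simplest SDC rule, small-cell suppression. This is a sketch under assumptions: the threshold of 10 is a placeholder that each data custodian sets for itself, and real output checking also covers secondary suppression, dominance rules, and human review.

```python
def suppress_small_cells(counts, threshold=10):
    """Replace counts between 1 and threshold - 1 with None before release."""
    return {cell: (None if 0 < n < threshold else n) for cell, n in counts.items()}


def is_safe_to_release(counts, threshold=10):
    """True only if no cell describes fewer than `threshold` (but at least one) individuals."""
    return all(n == 0 or n >= threshold for n in counts.values())


# Hypothetical table of patient counts per diagnosis group.
raw_table = {"group_a": 120, "group_b": 1, "group_c": 0, "group_d": 10}
released_table = suppress_small_cells(raw_table)
```

Here `group_b` is blanked out because a count of 1 would point at a single unique patient, while the other cells pass through unchanged.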

For a full breakdown, see our trusted research environment complete guide.

Why Federated Architectures are the Gold Standard for GDPR Compliant Research Environments

If a TRE is a secure box, a federated architecture is a network of secure boxes that talk to each other. This is the “secret sauce” for global research, particularly in rare disease studies where data is scattered across many countries.

Traditionally, if a scientist in New York wanted to study data from a hospital in London, the data would have to travel across the Atlantic. This triggers complex “international transfer” rules and potential conflicts with local data residency laws. With a federated approach, the data stays in London. The researcher sends their analysis code to the data, the code runs locally in London within a secure container, and only the results (which are non-personal, aggregate statistics) are sent back to New York.
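A toy version of this pattern: each site runs the same small function on its own records and returns only a count and a sum, and the coordinator combines those into a pooled mean. Everything here is an assumption for illustration (the function names, the minimum-count rule, the sample values); real federated platforms add containerization, authentication, and output checking around this core idea.

```python
def local_summary(values, min_count=10):
    """Runs inside each site's environment; returns aggregates only, never raw rows."""
    n = len(values)
    if n < min_count:
        return None  # too few participants to release even an aggregate
    return {"n": n, "total": sum(values)}


def combine(summaries):
    """Runs at the coordinating site; sees only per-site counts and sums."""
    usable = [s for s in summaries if s is not None]
    n = sum(s["n"] for s in usable)
    total = sum(s["total"] for s in usable)
    return total / n if n else None


# Hypothetical measurements held at three separate sites.
london = list(range(50, 62))   # 12 participants
paris = list(range(70, 80))    # 10 participants
small_site = [90, 91]          # below the minimum count, so nothing is released

pooled_mean = combine([local_summary(site) for site in (london, paris, small_site)])
```

The pooled mean is identical to what you would get by centralizing the London and Paris rows, yet no individual-level record ever crosses a border.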

This ensures data residency and cloud sovereignty: the data never leaves its home jurisdiction. This is a perfect response to the “Brussels Effect,” where EU privacy standards are becoming the global norm. Because the personal data stays in situ, organizations can sidestep much of the transfer analysis that the Schrems II ruling and Standard Contractual Clauses (SCCs) would otherwise demand, as long as the results that leave are genuinely non-personal. We’ve written extensively on how TREs secure global health data sharing using this exact model.

The Role of Data Controllers and Processors in Clinical Trials

In clinical trials, roles must be crystal clear to ensure accountability.

- Data Controller: Usually the Sponsor (the pharma company or lead university). They decide why and how data is processed. They carry the ultimate legal burden for compliance and are the primary point of contact for the Data Protection Authority (DPA).

- Data Processor: Usually the CRO (Contract Research Organization) or a technology provider like Lifebit. They handle the data on behalf of the Sponsor and must follow strict instructions outlined in a contract.

- Trial Sites: Hospitals or clinics often act as joint controllers because they collect the data directly from patients and have their own clinical responsibilities.

It is vital to have Data Processing Agreements in place (Article 28 requires one between a controller and each processor) that outline exactly who is responsible for what, especially when it comes to reporting data breaches. A common mistake is assuming the CRO handles everything, but the Sponsor is always on the hook for the “big” compliance decisions, such as determining the lawful basis or approving the DPIA.

Operationalizing Compliance: DPIAs, Data Mapping, and Breach Response

Compliance isn’t a one-time event; it’s an operational rhythm that must be maintained throughout the lifecycle of a research project.

One of the most important tools is the Data Protection Impact Assessment (DPIA). Under Article 35, if your processing is likely to result in a high risk to individuals (like analyzing the genomes of 50,000 people or using AI to predict health outcomes), a DPIA is mandatory. A DPIA is a formal process to identify risks (e.g., data leakage, re-identification) and figure out how to lower them. It must involve the Data Protection Officer (DPO), where one has been appointed, and, in some cases, requires consultation with the national supervisory authority if the residual risk remains high.

You also need Data Mapping and a Record of Processing Activities (RoPA). You should be able to answer: “Where did this data come from? Who has access to it? What is the legal basis? When will we delete it?” If you can’t answer these questions within a reasonable timeframe during an audit, you’re not compliant. RoPAs are the first thing a regulator will ask for during an investigation.
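One way to keep those four questions answerable is to store them as structured fields and flag any gaps automatically. The sketch below is a simplified illustration: the `ProcessingRecord` fields are assumptions chosen to mirror the questions above and are far from the full content Article 30 expects in a RoPA.

```python
from dataclasses import dataclass, field, fields
from datetime import date
from typing import List, Optional


@dataclass
class ProcessingRecord:
    dataset: str
    source: str = ""                                            # where did this data come from?
    lawful_basis: str = ""                                      # what is the legal basis?
    authorized_roles: List[str] = field(default_factory=list)   # who has access to it?
    delete_by: Optional[date] = None                            # when will we delete it?


def unanswered(record):
    """List the fields an auditor would find empty."""
    return [f.name for f in fields(record) if not getattr(record, f.name)]


# Hypothetical entries, for illustration only.
complete = ProcessingRecord(
    dataset="lung-capacity-cohort",
    source="hospital spirometry clinic",
    lawful_basis="public interest task, with Article 9(2)(j) for the health data",
    authorized_roles=["genomics_researcher"],
    delete_by=date(2030, 12, 31),
)
incomplete = ProcessingRecord(dataset="legacy-export", source="unknown vendor upload",
                              authorized_roles=["analyst"])
gaps = unanswered(incomplete)
```

Running a check like this across every dataset turns “can we answer the regulator?” into a report you can review before the audit rather than during it.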

And then there’s the 72-hour rule. If you have a data breach that puts people at risk (e.g., a lost unencrypted laptop or a database hack), Article 33 requires you to notify the supervisory authority without undue delay and, where feasible, no later than 72 hours after you become aware of it. This requires a “fire drill” mentality: you need a team ready to jump into action, assess the scope of the breach, and mitigate the damage immediately. For more on these rules, see our summary of data privacy regulations.
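The clock arithmetic is simple, but it is worth encoding so nobody has to work it out under pressure. A minimal sketch, assuming timestamps are recorded in UTC; the function names are illustrative.

```python
from datetime import datetime, timedelta, timezone

NOTIFICATION_WINDOW = timedelta(hours=72)


def notification_deadline(became_aware_at):
    """Latest time to notify the supervisory authority, counted from awareness of the breach."""
    return became_aware_at + NOTIFICATION_WINDOW


def hours_remaining(became_aware_at, now):
    """Hours left on the clock; a negative value means the window has passed."""
    return (notification_deadline(became_aware_at) - now).total_seconds() / 3600


# Example: the team becomes aware of a breach at 09:00 UTC on 1 March 2024.
aware = datetime(2024, 3, 1, 9, 0, tzinfo=timezone.utc)
deadline = notification_deadline(aware)
left_after_one_day = hours_remaining(aware, datetime(2024, 3, 2, 9, 0, tzinfo=timezone.utc))
```

Note that the clock starts when you become aware of the breach, not when it happened, so the incident log needs to capture that moment explicitly.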

Managing Individual Rights and the Right to Erasure in GDPR Compliant Research Environments

GDPR gives individuals (data subjects) significant power over their information. They have the right to access their data, the right to portability (moving it to another provider), and the famous right to erasure (the “right to be forgotten”).

In a research context, the right to erasure can be complex. If a participant withdraws from a study and asks for their data to be deleted, you generally have one month to respond, which Article 12(3) allows you to extend by two further months for complex or numerous requests. However, Article 17(3)(d) provides an exemption insofar as erasure is likely to “render impossible or seriously impair the achievement of the objectives of that processing” for scientific research. This is not a blanket excuse; you must still delete what you can without ruining the study’s integrity. This is why “unintelligent keys” (identifiers that carry no meaning of their own) and segregated database architectures are so important. If your data is a giant, tangled mess, deleting one person’s info without breaking the whole database is nearly impossible.
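Here is a small sketch of why segregation helps. Identities live in one store, research rows live in another, and the only thing connecting them is a meaningless token. The store shapes and the `erase_participant` helper are assumptions for illustration, and whether research rows may be retained under the Article 17(3)(d) exemption is a legal judgment the code cannot make for you.

```python
def erase_participant(identity_index, research_rows, participant_id, keep_research_rows=False):
    """Remove the identity link and, unless retention applies, the participant's research rows.

    Returns the number of research rows deleted.
    """
    token = identity_index.pop(participant_id, None)  # breaks the link back to the person
    if token is None or keep_research_rows:
        return 0
    before = len(research_rows)
    research_rows[:] = [row for row in research_rows if row["token"] != token]
    return before - len(research_rows)


# Hypothetical stores: tokens carry no information about the participant.
identity_index = {"participant-001": "tok_a", "participant-002": "tok_b"}
research_rows = [
    {"token": "tok_a", "measurement": 3.1},
    {"token": "tok_b", "measurement": 2.8},
    {"token": "tok_a", "measurement": 3.3},
]

rows_deleted = erase_participant(identity_index, research_rows, "participant-001")
```

Because every row for a participant is reachable through one token, a withdrawal becomes a targeted delete instead of a search through free-text fields and ad hoc copies.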

International Data Transfers and Extraterritorial Scope

Research is a global team sport, but GDPR is a bit of a homebody. To transfer data outside the EU/EEA, you need a transfer mechanism such as:

- Adequacy Decisions: The European Commission has decided certain countries (like Japan, the UK, or Canada for commercial organizations) have “essentially equivalent” laws.

- Standard Contractual Clauses (SCCs): Pre-written legal contracts that promise to protect data to EU standards. Following the Schrems II ruling, these often require a Transfer Impact Assessment (TIA) to ensure the destination country’s laws don’t undermine the SCCs.

- Binding Corporate Rules (BCRs): For massive multinational companies to move data internally across borders.

Even if your organization is based in New York, Singapore, or Sydney, if you are monitoring the behavior of people in the EU (like in a clinical trial) or offering them services, GDPR applies to you. This is the “extraterritorial scope” (Article 3). We take preserving patient data privacy and security seriously, regardless of where the server is located, ensuring that global collaboration doesn’t come at the cost of individual privacy.

Frequently Asked Questions about GDPR Compliant Research

Do non-EU organizations need to comply with GDPR?

Yes! This is the “extraterritorial scope.” If you offer goods or services to EU residents, or if you monitor their behavior (which covers almost all clinical research and observational studies involving EU participants), you must comply. Many non-EU organizations are required to appoint an EU Representative to act as a point of contact for regulators and data subjects.

When is a Data Protection Officer (DPO) required for research?

Under Article 37, a DPO is mandatory if you:

- Are a public authority or body (like a state university or hospital).

- Have core activities that require regular and systematic monitoring of individuals on a large scale (e.g., tracking health via wearables).

- Have core activities that involve processing “special category data” (health/genetics) on a large scale.

Even if not strictly required, having a DPO is a best practice for any serious research environment to ensure ongoing compliance and expert guidance.

Can anonymized data be taken out of GDPR scope?

Yes, but “anonymization” is a very high bar in the eyes of EU regulators. It must be irreversible. If re-identification is reasonably likely by any means (even by combining the data with other public info or using advanced AI re-identification techniques), the data is still personal data, at best “pseudonymized,” and falls under GDPR. Most research data is pseudonymized, not anonymized, to maintain its scientific utility.

What is the “Secondary Use” of data under GDPR?

Secondary use refers to using data for a purpose other than the one for which it was originally collected. GDPR Article 5(1)(b) states that further processing for scientific research purposes shall not be considered incompatible with the initial purposes. However, this still requires a valid lawful basis and appropriate safeguards, such as pseudonymization, to ensure the rights of the data subjects are protected.

| Feature | Pseudonymization | Anonymization |
|---|---|---|
| GDPR Scope | Still applies | Does not apply |
| Re-identification | Possible with a “key” | Irreversible |
| Research Utility | High (can link datasets) | Lower (links are broken) |
| Security Risk | Moderate | Very Low |

Conclusion: Future-Proofing Your Research with Federated AI

At Lifebit, we believe that the future of medicine shouldn’t be held back by paperwork. By building GDPR compliant research environments that use federated AI, we allow the world’s best minds to collaborate on the world’s most sensitive data, without ever moving a single record.

This approach doesn’t just “check the box” for compliance; it builds a foundation of trust with patients and partners. When people know their genetic “blueprints” are safe, they are more willing to participate in the breakthroughs that will save lives tomorrow.

Ready to see how we can secure your data ecosystem? Read more about the Lifebit approach to data governance security or visit our Trust Center to get started.