Why your AI should go where the data lives

Federated AI Research Environment: Access 250M+ Patient Records Without Moving Data

Federated AI research environments let multiple institutions train shared AI models without ever moving sensitive data from its source. This paradigm shift addresses the “Data Paradox” in modern medicine: we have more data than ever before, yet 97% of hospital data remains unused because it is too risky or difficult to move.

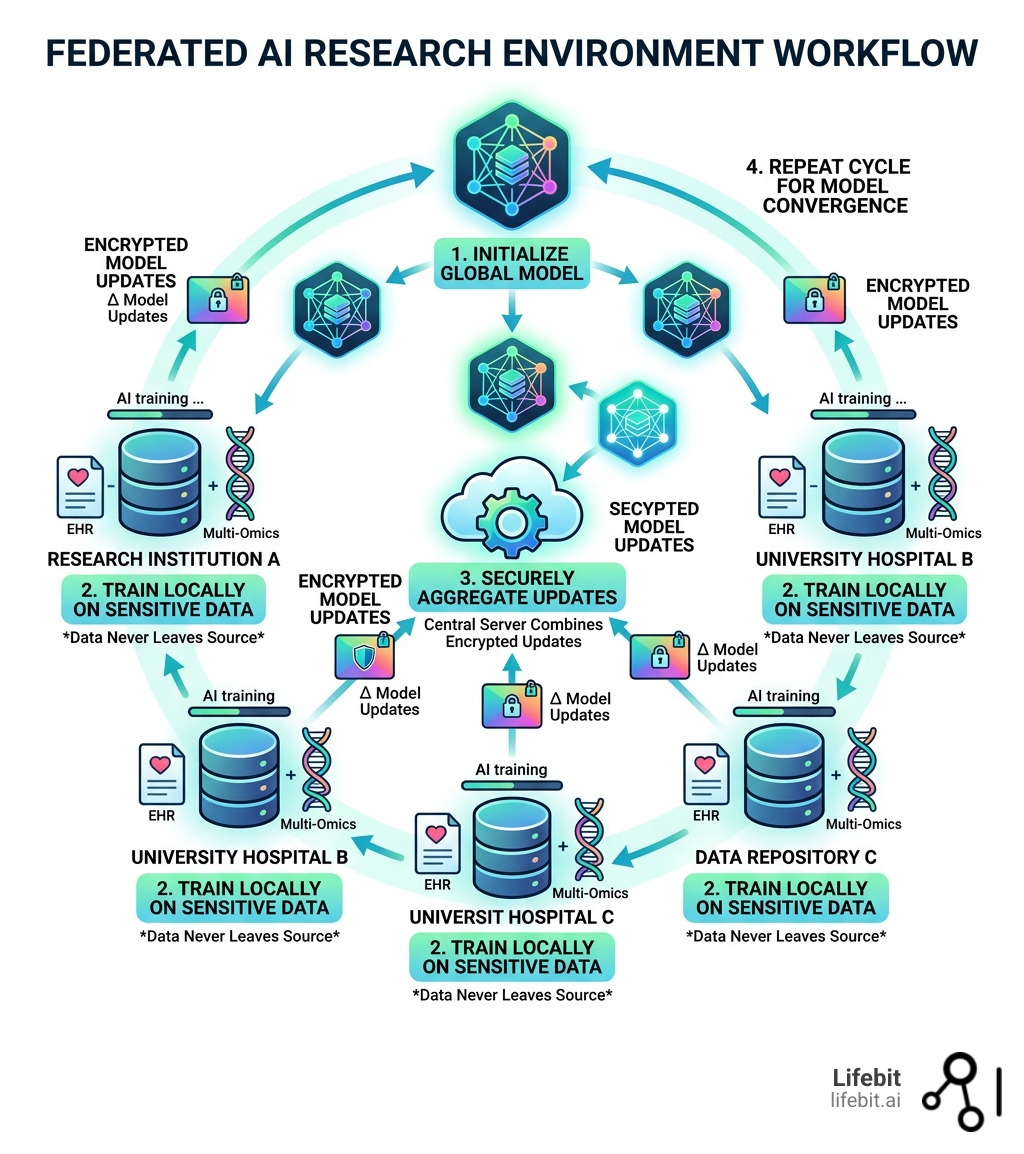

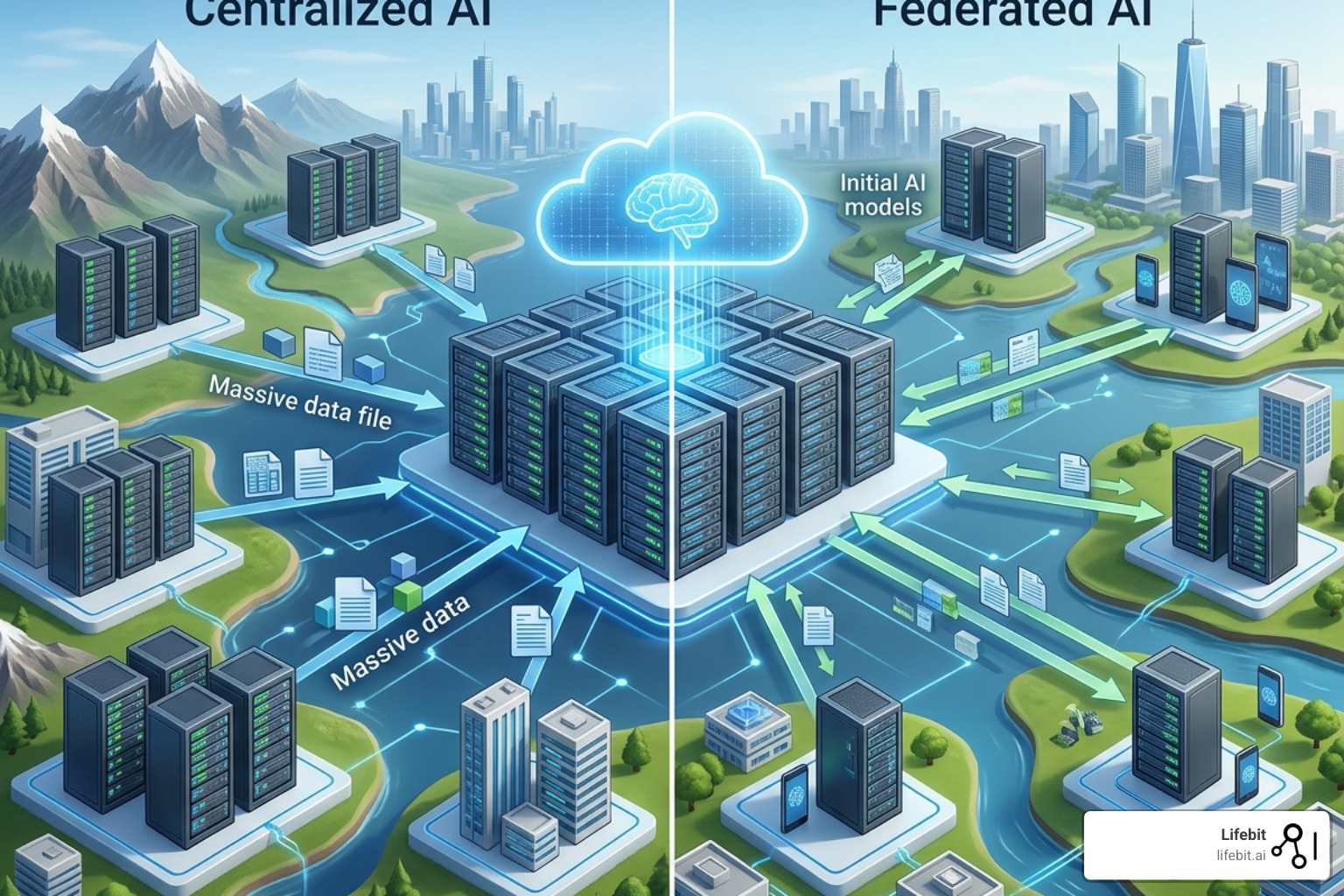

In a traditional centralized model, data must be aggregated into a single repository. In a federated model, the computation is decentralized. Here’s the core idea at a glance:

| Step | What Happens |
|---|---|
| 1. Initialize | A global model is created and distributed to each data node (e.g., a hospital or biobank) |
| 2. Train locally | Each site trains the model on its own local data; raw patient data never leaves the firewall |
| 3. Share updates | Only encrypted model updates (gradients or weights) are sent back to the central server |
| 4. Aggregate | A central server combines these updates into an improved global model using secure algorithms |
| 5. Repeat | The cycle continues for multiple rounds until the model reaches the desired accuracy |

This approach directly solves the core problem facing pharma, public health agencies, and research institutions: sensitive patient data — EHR records, genomics, multi-omics — is locked in siloed, jurisdiction-bound systems. You can’t simply copy it to a central server. Regulations like GDPR in Europe, HIPAA in the US, and the Data Security Law in China prohibit or severely restrict the cross-border transfer of identifiable health information. Ethics boards block it. Data custodians, protective of their patients’ privacy and their institution’s intellectual property, refuse it.

The result? Researchers wait months or even years to access data. Insights that could accelerate drug discovery, identify rare disease biomarkers, or improve pharmacovigilance never materialize. Patients don’t get the treatments they need faster because the “data gravity” — the sheer weight and sensitivity of the information — makes it immobile.

Federated AI flips the model entirely. Instead of bringing data to the AI, you bring the AI to the data.

I’m Maria Chatzou Dunford, CEO and Co-founder of Lifebit, and I’ve spent over 15 years building computational tools for exactly this problem — from genomic data pipelines at the Centre for Genomic Regulation to leading the platform that now powers federated AI research environments across datasets from more than 250 million patients. In this guide, I’ll walk you through how to deploy federated AI across institutional silos, practically and compliantly, ensuring that your research is limited only by your imagination, not by data access hurdles.


Federated AI Research Environment: Why Centralized Data Lakes Are Killing Research

In the “old days” (which was basically last Tuesday in tech years), if we wanted to train a model on data from three different hospitals, we had to beg, plead, and sign 400-page legal documents to move that data into one central “data lake.” This centralized approach is increasingly impossible. Data is too big to move (a single whole-genome sequence is ~100GB), too sensitive to share, and too legally protected to migrate across borders.

A federated AI research environment is a decentralized ecosystem where data stays exactly where it was born—inside the hospital, the biobank, or the national health registry. Instead of moving the data, we move the computation. This shift isn’t just a technical preference; it’s a regulatory necessity. By keeping data in-place, we adhere to the “Five Safes” framework, which is the gold standard for data privacy:

- Safe People: Only vetted researchers gain access to the environment.

- Safe Projects: Research must be approved for public interest or specific scientific goals.

- Safe Settings: The environment limits what users can do (e.g., no raw data downloads).

- Safe Data: Data is de-identified and treated to minimize disclosure risk.

- Safe Outputs: Only aggregate results or model weights leave the environment, never individual records.

As highlighted in the AI4EOSC: a Federated Cloud Platform for Artificial Intelligence in Scientific Research paper, the scientific community is moving toward these federated cloud platforms because they allow for FAIR (Findable, Accessible, Interoperable, and Reusable) data principles without compromising sovereignty. For a deeper dive into how these architectures are built, check out our Federated Research Environment Complete Guide.

Core Principles of a Federated AI Research Environment

The magic of this environment rests on four pillars that ensure both performance and privacy:

- Local Training: The heavy lifting happens at the edge. Each site uses its own hardware (GPUs/CPUs) to train the model. This distributes the computational cost, which is a massive advantage for institutions that cannot afford massive centralized supercomputers.

- Model Aggregation: Only the “learnings”—mathematical representations known as weights and gradients—are sent to a central coordinator. These updates are essentially a summary of what the model learned from the local data.

- Global Model Refinement: The coordinator combines these updates—often using an algorithm like Federated Averaging (FedAvg)—to improve the master model. This ensures the global model benefits from the diversity of all participating sites.

- Iterative Learning: This process repeats over many rounds. With each round, the model becomes more generalized and accurate, eventually reaching a performance level comparable to a model trained on a centralized dataset.

This decentralized loop is the backbone of modern Federated Data Analysis, ensuring that the global model benefits from the diversity of all datasets combined without the risks of data pooling.

How Federated Learning Differs from Traditional AI

Traditional AI is like a potluck where everyone has to bring their ingredients to one person’s house to cook. If one person forgets an ingredient or the health inspector (regulator) shuts down the transport, the meal is ruined. Federated AI is like everyone staying in their own kitchens, following the same recipe, and then calling each other to say, “Hey, I found that adding a pinch of salt makes it better.”

The benefits are massive and multifaceted:

- Data Minimization: We only share what is strictly necessary for the model to learn. This aligns perfectly with the GDPR principle of data minimization.

- Reduced Egress Fees: Moving petabytes of genomic data across cloud regions or from on-prem to cloud is prohibitively expensive. Sending a few megabytes of model updates is practically free.

- Real-time Insights: You can train on the most current data available at the source (e.g., live EHR feeds) rather than waiting for quarterly data dumps that are often outdated by the time they are processed.

- Diversity and Inclusion: Federated environments allow researchers to include data from underrepresented populations in different geographic regions, reducing algorithmic bias and improving the generalizability of medical AI.

For more on why this is the future of data science, see our Federated Analytics Ultimate Guide.

Federated AI Research Environment: How to Train Models Across Global Silos

Deploying a federated AI research environment across different organizations—say, a university in London, a hospital in Tokyo, and a pharma lab in New York—requires a clear strategy on how the data “looks” across those sites. Depending on how the data is partitioned, we use different architectural approaches.

1. Horizontal Federated Learning (HFL)

This is used when sites have the same types of data but for different people. For example, two different hospitals both have “Blood Pressure,” “Age,” and “Genomic Variant” columns, but for different sets of patients. This is the most common setup in Federated Learning Applications for healthcare, where we want to increase the sample size for a study without merging patient registries.

2. Vertical Federated Learning (VFL)

This is the “Lego” approach. Imagine a bank in Singapore has a person’s financial history, and an e-commerce site has their shopping habits. In healthcare, this might be a diagnostic lab having a patient’s genomic data while a separate clinic has their clinical outcomes. They have different information about the same people. VFL allows them to build a combined model (e.g., predicting disease risk based on both genetics and lifestyle) without showing each other their private columns.

3. Federated Transfer Learning (FTL)

When there is very little overlap in both people and data types, FTL uses a pre-trained model (often trained on a large public dataset) and fine-tunes it across the federated network. This is becoming a standard for foundation models in biology, where a model trained on general protein sequences is fine-tuned on proprietary, site-specific drug-target interaction data.
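To make the difference between the first two partitioning schemes concrete, here is a minimal sketch on a toy table. The feature names, the six-patient cohort, and the site names are all illustrative assumptions, not a real dataset or schema.

```python
import numpy as np

# Toy cohort: 6 patients (rows) x 4 features (columns). Names are illustrative only.
features = ["age", "blood_pressure", "variant_count", "outcome"]
table = np.arange(24).reshape(6, 4)

# Horizontal split: same columns, different patients (e.g. two hospitals).
hospital_a = table[:3, :]
hospital_b = table[3:, :]

# Vertical split: same patients, different columns (e.g. a lab and a clinic).
lab = table[:, :3]      # holds features[:3]
clinic = table[:, 3:]   # holds features[3:]

print(hospital_a.shape, hospital_b.shape)  # (3, 4) (3, 4)
print(lab.shape, clinic.shape)             # (6, 3) (6, 1)
```

In the horizontal case each site can train the full model on its own rows. In the vertical case no single site sees every column, so the sites first have to agree on which patients they share before any joint training can happen.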

Choosing the Right Framework for Your Federated AI Research Environment

You don’t have to build this from scratch. There are several powerful tools available, each with its own strengths:

- Flower (flwr): A very popular, “friendly” framework used by over 6,800 researchers. It’s great because it is framework-agnostic, meaning it works with any ML library (PyTorch, TensorFlow, JAX, etc.) and scales from a single laptop to thousands of edge nodes. Its architecture separates the communication layer from the ML logic, making it highly flexible.

- FederatedScope: An event-driven framework developed by Alibaba. It is excellent for research because it includes built-in hyperparameter optimization and supports complex graph neural networks, which are essential for modeling molecular structures in drug discovery.

- FedScale: If you need to test how your model performs across millions of mobile devices or edge nodes, this is the benchmark engine to use. It provides realistic datasets and simulation environments to evaluate the scalability of federated algorithms.

- NVFlare: NVIDIA’s framework, which is highly optimized for medical imaging and healthcare workflows, providing robust security features like provisioning and identity management.

While these open-source tools are fantastic for prototyping, enterprise-grade research often requires a platform that handles the “boring but critical” stuff: security, networking, and compliance. That’s where we focus our efforts at Lifebit, providing a turnkey solution that guarantees results and handles the orchestration of these complex workflows.

Step-by-Step Workflow for Collaborative Training

Ready to hit “run”? Here is the standard operating procedure for a federated training session:

- Global Initialization: The lead researcher defines the model architecture (e.g., a ResNet for imaging or a Transformer for genomics) and the “training plan” (number of rounds, learning rate, etc.).

- Distribution: The central server (or “SuperLink” in Flower terminology) sends the initial model weights to all participating “SuperNodes” (the data sites).

- Local Computation: Each site trains on its local data for a few “epochs.” This is where the actual learning happens. The data remains encrypted at rest and in use within the site’s secure environment.

- Encrypted Updates: Sites send back their model updates. To prevent anyone from “reverse-engineering” the data from the updates (a risk known as a gradient inversion or inference attack), we use techniques like Secure Multi-Party Computation (SMPC) or differential privacy.

- Weighted Aggregation: The server averages the updates. If one hospital has 10,000 patients and another has 100, the first hospital’s update usually gets more “weight” to ensure the model isn’t biased toward smaller, potentially noisier datasets.
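The workflow above can be simulated on a single machine. This is a minimal sketch, assuming a toy linear-regression task, three synthetic "sites", and plain NumPy. It leaves out the encryption step entirely, and the function names (`make_site`, `local_train`, `fed_avg`) are hypothetical helpers rather than any framework's API.

```python
import numpy as np

rng = np.random.default_rng(0)
TRUE_W = np.array([2.0, -1.0])  # ground truth, used only to generate toy data


def make_site(n_samples):
    """Create one site's private dataset."""
    X = rng.normal(size=(n_samples, 2))
    y = X @ TRUE_W + 0.1 * rng.normal(size=n_samples)
    return X, y


def local_train(w_global, X, y, epochs=5, lr=0.1):
    """Run a few epochs of gradient descent on local data; return new weights."""
    w = w_global.copy()
    for _ in range(epochs):
        grad = 2.0 * X.T @ (X @ w - y) / len(y)
        w -= lr * grad
    return w


def fed_avg(site_weights, site_sizes):
    """Average site weights, weighting each site by its sample count."""
    sizes = np.asarray(site_sizes, dtype=float)
    return np.average(np.stack(site_weights), axis=0, weights=sizes)


sites = [make_site(n) for n in (1000, 100, 400)]
w_global = np.zeros(2)                                         # global initialization
for round_idx in range(20):                                    # repeat for several rounds
    updates = [local_train(w_global, X, y) for X, y in sites]  # distribution + local computation
    w_global = fed_avg(updates, [len(y) for _, y in sites])    # weighted aggregation

print(np.round(w_global, 2))
```

Only the weight vectors cross the site boundary here; `X` and `y` are never passed to `fed_avg`. Weighting by sample count means the 1,000-sample site contributes ten times as much to each round as the 100-sample site.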

This rigorous process is detailed in our Federated Data Sharing Complete Guide.

3 Federated AI Research Environment Hurdles That Are Killing Your Results

If federated AI were easy, everyone would be doing it. But as we’ve learned from managing massive datasets for global pharma, there are three primary hurdles you’ll need to clear to get publication-quality results.

| Challenge | Impact | Solution |
|---|---|---|
| Data Heterogeneity | Models perform poorly because data is “Non-IID” (different distributions) | Personalized FL, Client Clustering, & FedProx |
| Communication Overhead | Slow networks delay training rounds and increase costs | Gradient Compression, Sparse Updates, & Quantization |
| Security Vulnerabilities | Risk of model poisoning or gradient leakage attacks | SMPC, Differential Privacy, & Robust Aggregation |

Detailed frameworks like OmniFed are now emerging to handle these challenges across the “edge-to-HPC” continuum, allowing researchers to switch algorithms with a single line of code depending on the hardware available at each site.

Overcoming Data Heterogeneity and Non-IID Samples

In a perfect world, every hospital would record data the same way. In the real world, Hospital A uses metric units, Hospital B uses imperial, and Hospital C has a patient population that is 20 years older on average than the others. This “Non-IID” (Not Independently and Identically Distributed) data can cause “client drift,” where each site’s local model pulls away from the others, so the global model becomes biased toward the largest site or fails to converge entirely.

We solve this through Personalized Federated Learning, where the global model is used as a base, but each site keeps a small “local” version of the model that is fine-tuned to its specific population. Another approach is FedProx, which adds a proximal term to the local objective function to limit how far the local update can stray from the global model. Proper governance is key here, as outlined in our Federated Governance Complete Guide.

Reducing Communication Overhead in Large-Scale Deployments

Training a model requires sending updates back and forth hundreds of times. If your research sites are on slow connections or have high data egress costs, this becomes a bottleneck.

- Gradient Compression: We only send the most important changes in the model weights (e.g., Top-K compression), which can reduce the data sent by up to 99%, often with little loss in accuracy.

- Adaptive Scheduling: We only check in with sites that have finished their training, rather than waiting for the slowest “straggler” node to finish. This prevents one slow server from holding up the entire global research project.

- Quantization: Reducing the precision of the weights (e.g., from 32-bit floats to 8-bit integers) significantly shrinks the size of the updates sent over the wire.
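Two of these techniques, Top-K compression and 8-bit quantization, fit in a few lines of NumPy. This is a minimal sketch on a random 10,000-value update. The helper names are hypothetical, and production systems typically add refinements that are omitted here, such as carrying the dropped values over to the next round.

```python
import numpy as np


def top_k_sparsify(update, k):
    """Keep the k largest-magnitude entries; return their indices and values."""
    idx = np.argpartition(np.abs(update), -k)[-k:]
    return idx, update[idx]


def densify(idx, values, size):
    """Rebuild a dense update from the sparse (index, value) pairs."""
    dense = np.zeros(size, dtype=values.dtype)
    dense[idx] = values
    return dense


def quantize_int8(update):
    """Linearly map float values onto int8 using one shared scale factor."""
    max_abs = float(np.max(np.abs(update)))
    scale = max_abs / 127.0 if max_abs > 0 else 1.0
    q = np.clip(np.round(update / scale), -127, 127).astype(np.int8)
    return q, scale


def dequantize_int8(q, scale):
    """Recover approximate float32 values on the receiving side."""
    return q.astype(np.float32) * scale


rng = np.random.default_rng(0)
update = rng.normal(size=10_000).astype(np.float32)

idx, vals = top_k_sparsify(update, k=100)            # keep 1% of the entries
sparse_bytes = idx.astype(np.int32).nbytes + vals.nbytes
q, scale = quantize_int8(update)

print(update.nbytes, sparse_bytes, q.nbytes)         # 40000 800 10000
```

In this toy case the Top-K payload is 2% of the original size (indices have to be sent along with the values), and the int8 payload is 25% of it. Whether accuracy holds up at a given `k` or bit width depends on the model and has to be checked empirically.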

These optimizations are core to a high-performing federated data platform, as covered in our Federated Data Platform Ultimate Guide.

Defending Against Adversarial Attacks

Federated systems are vulnerable to “Model Poisoning,” where a malicious or compromised site sends fake updates to ruin the global model. We implement Robust Aggregation methods, such as the “Krum” or “Median” aggregators, which identify and discard outlier updates that look suspicious. This ensures that even if one node in the network is compromised, the integrity of the research remains intact.
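A small sketch shows why the choice of aggregator matters. It assumes five sites and hand-picked two-dimensional updates, and it uses a coordinate-wise median, the simplest of the robust aggregators mentioned above (Krum is not shown).

```python
import numpy as np

# Four honest sites send similar updates; one compromised site sends a huge one.
honest = [np.array([0.9, -1.1]), np.array([1.0, -1.0]),
          np.array([1.1, -0.9]), np.array([1.0, -1.0])]
poisoned = np.array([100.0, 100.0])
updates = np.stack(honest + [poisoned])

mean_agg = updates.mean(axis=0)           # plain averaging is dragged off course
median_agg = np.median(updates, axis=0)   # coordinate-wise median ignores the outlier

print(mean_agg)
print(median_agg)
```

The mean lands near `[20.8, 19.2]`, far from anything an honest site sent, while the median stays at `[1.0, -1.0]`. The median only holds up while compromised sites are a minority, which is one reason robust aggregation is paired with access control rather than used alone.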

Federated AI Research Environment: Guarantee 100% Compliance Without Risking Privacy

The #1 question we get from Chief Information Security Officers (CISOs) is: “Is it actually safe?” The answer lies in the Federated Trusted Research Environment (TRE).

A TRE is a secure “walled garden” where data sits. In a federated setup, the data never leaves this garden. Instead, the AI model is invited in, does its work, and is escorted out after its “pockets” (the model updates) are checked for any raw data leaks. This is often referred to as “Data Visiting” rather than “Data Sharing.”

Compliance with GDPR and HIPAA isn’t just about encryption; it’s about control and auditability. As noted in the CIFAR AI Insights Policy Brief, federated strategies are now being officially recommended for national health data access in Canada and the UK because they minimize risk while maximizing scientific utility. They allow for “sovereign data” where the data owner retains full control over who uses the data and for what purpose.

At Lifebit, our Federated Trusted Research Environment is the only one that contractually guarantees results, handling datasets from over 250 million patients globally while maintaining strict adherence to local laws.

Implementing Secure Multi-Party Computation (SMPC)

SMPC is a cryptographic technique that lets multiple parties jointly compute a function over their inputs while keeping those inputs private. In federated AI, this means the central server can aggregate model updates without ever seeing the individual updates themselves. Two related safeguards are often deployed alongside it:

- Homomorphic Encryption: This allows the central server to perform mathematical operations (like addition) on the model updates while they are still encrypted. The server never actually sees the numbers it is adding! It only sees the final, aggregated result once it is decrypted.

- Trusted Execution Environments (TEEs): Think of this as a “black box” in the hardware (like Intel SGX or AWS Nitro Enclaves) where the model training happens. Even the person who owns the computer or the cloud provider cannot see what’s happening inside that box. It provides a hardware-level guarantee of privacy.
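The core SMPC idea, a server learning the sum of the updates without seeing any single one, can be illustrated with pairwise masking. This is a toy sketch under loud assumptions: the masks are ordinary floating-point random numbers generated in one process, which is not cryptographically secure. Real protocols derive masks from key exchange between sites, work over finite fields, and handle sites that drop out mid-round.

```python
import numpy as np

rng = np.random.default_rng(42)
updates = [rng.normal(size=4) for _ in range(3)]   # each site's private update
n = len(updates)

# Each pair of sites (i, j) with i < j agrees on a shared random mask.
pair_masks = {(i, j): rng.normal(size=4) for i in range(n) for j in range(i + 1, n)}


def mask_update(i):
    """Site i adds masks shared with higher-numbered sites and subtracts the rest."""
    masked = updates[i].copy()
    for (a, b), m in pair_masks.items():
        if a == i:
            masked += m
        elif b == i:
            masked -= m
    return masked


masked_updates = [mask_update(i) for i in range(n)]   # all the server ever receives
server_sum = np.sum(masked_updates, axis=0)           # the masks cancel pairwise

print(np.allclose(server_sum, np.sum(updates, axis=0)))  # True
```

Every mask is added by one site and subtracted by another, so the masks vanish in the sum while each individual masked update looks like noise to the server.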

For more on these technical safeguards, see our guide on Federated Data Governance.

Automating Compliance in a Federated AI Research Environment

Manual compliance checks are too slow for modern research. We use automated systems to ensure safety at every step:

- Role-Based Access Control (RBAC): Ensuring only authorized researchers with specific credentials can initiate a federated training job. Access can be revoked instantly if a project ends or a researcher leaves an institution.

- Airlock Systems: A digital checkpoint that automatically scans model updates using statistical methods to ensure no “PII” (Personally Identifiable Information) is being smuggled out. For example, it checks if the update is too specific to a single patient record.

- Immutable Audit Trails: Every single action taken in a federated learning environment for healthcare, from the initial data query to the final model aggregation, is logged on a ledger that cannot be changed. This makes regulatory audits a breeze and provides full transparency to data custodians.
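One common way to make a log tamper-evident is to chain entries together by hash. The sketch below is a generic illustration using only the Python standard library; the entry fields and function names are hypothetical and it does not describe any particular platform's ledger.

```python
import hashlib
import json


def entry_digest(body):
    """Hash an entry's content together with the previous entry's hash."""
    return hashlib.sha256(json.dumps(body, sort_keys=True).encode()).hexdigest()


def append_entry(log, action, actor):
    """Append an entry that is linked to the one before it."""
    prev_hash = log[-1]["hash"] if log else "0" * 64
    body = {"action": action, "actor": actor, "prev_hash": prev_hash}
    log.append({**body, "hash": entry_digest(body)})


def verify(log):
    """Recompute every hash; any edited or reordered entry breaks the chain."""
    prev_hash = "0" * 64
    for entry in log:
        body = {k: entry[k] for k in ("action", "actor", "prev_hash")}
        if entry["prev_hash"] != prev_hash or entry["hash"] != entry_digest(body):
            return False
        prev_hash = entry["hash"]
    return True


audit_log = []
append_entry(audit_log, "start_training_job", "researcher_a")
append_entry(audit_log, "aggregate_round_1", "orchestrator")
print(verify(audit_log))                 # True

audit_log[0]["actor"] = "someone_else"   # simulate tampering with an old entry
print(verify(audit_log))                 # False
```

A hash chain detects changes rather than preventing them, so in practice the latest hash also needs to be stored somewhere the log's operator cannot rewrite.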

Federated AI Research Environment: 5 Questions Every Researcher Must Ask

1. How does federated learning protect patient privacy compared to anonymization?

Anonymization alone is often insufficient: with enough external data, “anonymized” records can be re-identified. Federated learning protects privacy by ensuring that raw data (names, medical images, genomic sequences) never leaves its original, secure location. We add extra layers like Differential Privacy, which adds calibrated mathematical “noise” to the model updates. This masks the contribution of any single individual and places a provable bound on how much an update can reveal about a specific patient.
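The noise step usually has two parts: clip each update so no single contribution can be arbitrarily large, then add noise scaled to that clip bound. Here is a minimal sketch of that clip-and-noise step using a Gaussian mechanism. The `clip_norm` and `noise_multiplier` values are arbitrary assumptions, and the sketch does not compute the resulting privacy budget, which depends on the number of rounds and needs a separate accounting step.

```python
import numpy as np


def privatize_update(update, clip_norm, noise_multiplier, rng):
    """Clip the update to a maximum L2 norm, then add Gaussian noise."""
    norm = np.linalg.norm(update)
    clipped = update * min(1.0, clip_norm / max(norm, 1e-12))
    noise = rng.normal(scale=noise_multiplier * clip_norm, size=update.shape)
    return clipped + noise


rng = np.random.default_rng(0)
raw_update = np.array([3.0, 4.0])   # L2 norm 5.0, larger than the clip bound below
noisy = privatize_update(raw_update, clip_norm=1.0, noise_multiplier=0.5, rng=rng)
print(noisy)
```

Clipping bounds how much any one update can move the model, and the noise hides what remains. More noise means stronger privacy and a less accurate model, so the multiplier is a policy decision as much as a technical one.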

2. Can federated AI handle multi-omic and genomic datasets?

Absolutely. In fact, this is where it shines. Genomic files are massive (often 100GB+ per person). Moving these files for a study of 50,000 people is a logistical and financial nightmare. In a federated AI research environment, we keep those files on local high-performance storage and only transmit the tiny model updates (often just a few megabytes), making population-scale genomics finally feasible for even small research teams.
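A quick back-of-the-envelope calculation shows the scale of the difference. The 100GB-per-genome and 50,000-person figures come from the paragraph above; the 5MB update size, 100 rounds, and 10 sites are assumptions chosen purely for illustration.

```python
GB = 10**9
MB = 10**6

genome_bytes = 100 * GB      # per-person figure quoted above
cohort_size = 50_000
raw_total = genome_bytes * cohort_size

update_bytes = 5 * MB        # assumed size of one model update
rounds, sites = 100, 10      # assumed training schedule
federated_total = update_bytes * rounds * sites * 2   # download + upload each round

print(raw_total / 10**15, "PB vs", federated_total / GB, "GB")  # 5.0 PB vs 10.0 GB
```

Under these assumptions, centralizing the cohort means moving 5 petabytes, while the entire federated training run exchanges about 10 gigabytes.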

3. What are the infrastructure requirements for a federated research environment?

You need three core components:

- Local Compute: Servers at each data site (on-prem or cloud) to run the training. These must have access to the local data but be isolated from the public internet.

- Secure Orchestrator: A central hub to coordinate the rounds, manage the “Airlock” process, and aggregate updates.

- Standardized APIs: A way for these systems to talk to each other securely across firewalls without requiring hospitals to open dangerous ports.

Lifebit’s platform is designed to be 5x faster than traditional setups, getting you from “data connection” to “insights” in record time.

4. How do you handle data quality and standardization across sites?

This is a major challenge. We use Federated Data Profiling to understand the data distribution at each site before training begins. We also employ automated ETL (Extract, Transform, Load) pipelines that map local data to common data models like OMOP or CDISC, ensuring that the AI model is seeing “apples to apples” across all participating institutions.
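At its simplest, harmonization means converting each site's local conventions into one shared schema before training. The sketch below handles only a metric-versus-imperial mismatch. The field names (`weight_kg`, `height_cm`) are hypothetical and are not actual OMOP or CDISC fields; a real mapping pipeline also has to handle vocabularies, codes, and missing data.

```python
LB_PER_KG = 2.20462
CM_PER_INCH = 2.54


def to_common_schema(record, site_units):
    """Convert one site's record to the shared schema (kilograms and centimetres)."""
    weight, height = record["weight"], record["height"]
    if site_units == "imperial":
        weight = weight / LB_PER_KG      # pounds -> kilograms
        height = height * CM_PER_INCH    # inches -> centimetres
    return {"weight_kg": round(weight, 1), "height_cm": round(height, 1)}


site_a = to_common_schema({"weight": 70.0, "height": 175.0}, "metric")
site_b = to_common_schema({"weight": 154.3, "height": 68.9}, "imperial")
print(site_a)
print(site_b)
```

Both records come out as 70.0 kg and 175.0 cm, so a model trained across the two sites sees the same patient described the same way.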

5. Is the model accuracy as good as centralized training?

In most cases, yes. Research has shown that with proper optimization (like FedAvg or FedProx), federated models can achieve 99% of the accuracy of centralized models. In some cases, they actually perform better because they are trained on a more diverse, real-world dataset that hasn’t been “sanitized” for a central repository.

Start Your Federated AI Research Environment and Cut Timelines by 80%

The future of AI isn’t in a single, giant data center. It’s distributed. It’s decentralized. And most importantly, it’s private. By adopting a federated AI research environment, you are not just checking a compliance box—you are unlocking access to the world’s most valuable data that was previously “off-limits.”

For biopharma companies, this means reducing the time to identify drug targets. For clinicians, it means more accurate diagnostic tools that work for everyone, not just the majority population. For patients, it means faster access to life-saving precision medicine.

At Lifebit, we are proud to power this revolution. Our Lifebit Federated Biomedical Data Platform is built to handle the complexities of global precision medicine, providing a secure, real-time bridge between researchers and the data that will define the next generation of healthcare. We handle the orchestration, the security, and the compliance, so you can focus on the science.

Stop moving your data. Start moving your AI.

Ready to see federated AI in action? Contact Lifebit today to learn how our federated Trusted Research Environment can accelerate your research by 5x while keeping you 100% compliant with global data regulations.