Why Your Data Mesh Needs Federated Governance to Survive

The 4 Pillars of a Federated Data Mesh


A federated data mesh is a decentralized data architecture that distributes data ownership across business domains — such as finance, sales, or clinical research — while enforcing shared governance standards across all of them. Instead of funneling everything into a central data lake or warehouse, each domain owns, manages, and publishes its data as a product.

Here is what that means in practice:

| Concept | What It Means |
|---|---|
| Federated | Autonomous domains operate independently but follow common rules |
| Data Mesh | Data ownership is distributed across business domains, not held centrally |
| Governance | Global standards (security, compliance, quality) are enforced, often automatically |
| Key benefit | Analyze data across domains without moving or centralizing it |

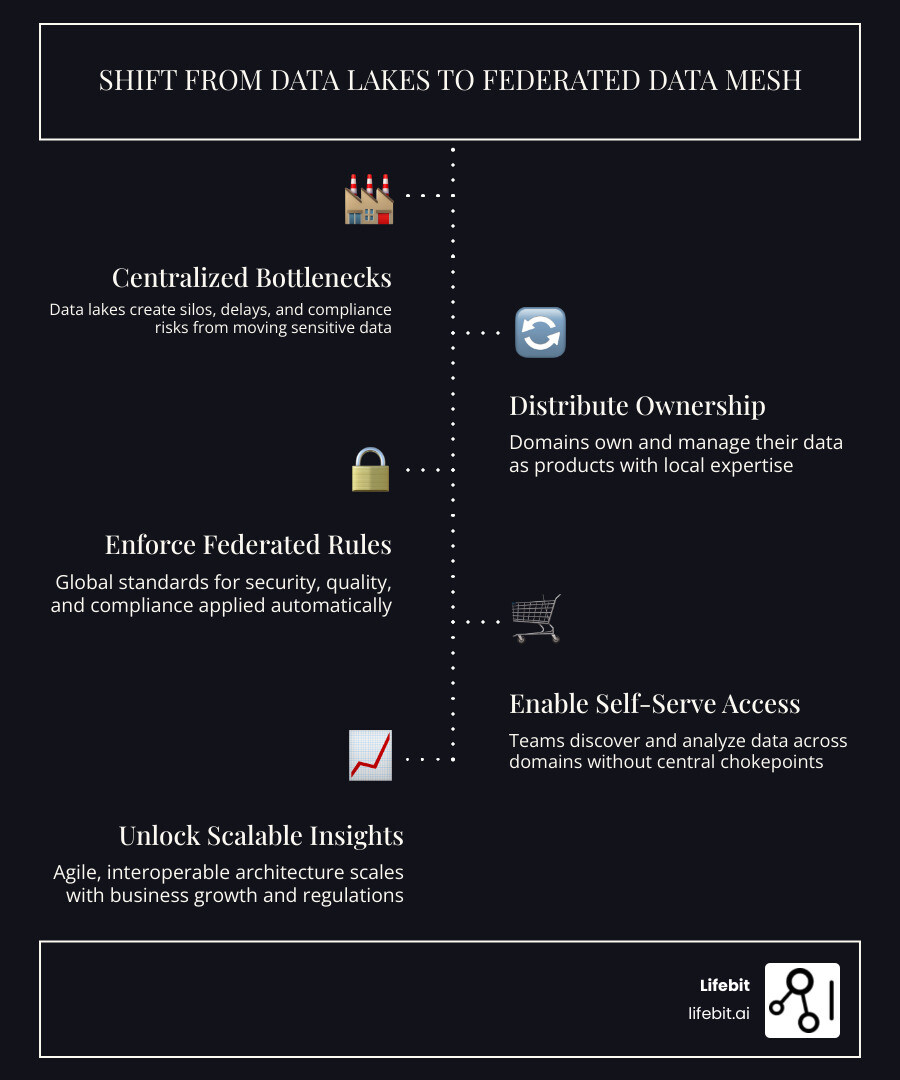

The problem with centralized data architectures is well-documented. As data volumes grow — and as organizations operate across more regions, systems, and regulatory environments — central data lakes become bottlenecks. Data quality degrades because the central team lacks the context of the data’s origin. Teams wait weeks for access because every request must pass through a single IT gatekeeper. And for organizations handling sensitive data like genomics, health records, or financial transactions, moving data at all can be a compliance nightmare.

In a centralized model, the “Data Engineering” team is often expected to be omniscient. They are asked to transform clinical trial data in the morning and marketing attribution data in the afternoon. This lack of domain expertise leads to the “Data Swamp” phenomenon, where data is stored but its meaning is lost.

Data mesh was created to solve exactly this. Originated by Zhamak Dehghani at Thoughtworks, it mirrors the same logic that made microservices successful in software engineering: distribute ownership to the teams who actually understand the data. By shifting from a monolithic architecture to a distributed one, we align the technical architecture with the organizational structure.

But distribution without structure is chaos. That is where federated governance comes in — and why getting it right is not optional.

I am Dr. Maria Chatzou Dunford, CEO and Co-founder of Lifebit, where I have spent over 15 years building federated data infrastructure for some of the world’s most security-sensitive biomedical research environments. My work sits at the intersection of federated data mesh principles and real-world genomics data analysis — where the stakes of getting governance wrong are measured in patient outcomes, not just IT budgets.

Federated data mesh basics:

To understand why a federated data mesh works, we have to look at its foundation. It isn’t just a technical change; it is a sociotechnical shift. Think of it like a group of independent countries (domains) that agree on a common set of trade laws (governance) so they can work together without losing their sovereignty.

- Domain-Driven Ownership: We stop pretending a central IT team understands the nuances of oncology data as well as the oncologists do. Ownership stays with the experts who create and use the data daily. This ensures that the data is accurate, relevant, and timely.

- Data as a Product: Data isn’t just a byproduct of an app; it is a product in its own right. This means it must meet specific quality standards: it must be discoverable, addressable, trustworthy, self-describing, interoperable, and secure. If a domain’s data product doesn’t meet these criteria, it isn’t ready for the mesh.

- Self-Serve Infrastructure: The central platform team provides the tools (the “roads and bridges”), but the domains drive the cars. This platform abstracts away the complexity of storage, compute, and networking, allowing domain experts to focus on data logic rather than infrastructure management.

- Federated Computational Governance: This is the “secret sauce.” It is a model where a global group defines the rules (e.g., “all PII must be encrypted”), but those rules are baked directly into the data products using code. This ensures compliance at scale without manual intervention.

Federated Computational Governance allows us to maintain a balance. If we are too centralized, we stifle innovation and create bottlenecks. If we are too decentralized, we end up with a “data wild west” where nothing is compatible, and cross-domain analysis becomes impossible. By automating these policies, we create a system that is both flexible and secure.
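To make “rules baked into data products using code” concrete, here is a minimal Python sketch, assuming each data product publishes machine-readable metadata. The `Column` and `DataProduct` structures, the two global rules, and the `evaluate` helper are hypothetical illustrations rather than any specific governance tool's API; production meshes often express such rules in a dedicated policy engine such as Open Policy Agent. The point is the split of responsibilities: the federated group writes the global rules once, and each domain can append its own rules without being able to skip the global ones.

```python
from collections.abc import Callable, Sequence
from dataclasses import dataclass, field


@dataclass
class Column:
    name: str
    is_pii: bool = False
    encrypted: bool = False


@dataclass
class DataProduct:
    domain: str
    name: str
    owner: str
    columns: list[Column] = field(default_factory=list)


# A policy inspects a data product's metadata and returns violation messages.
Policy = Callable[[DataProduct], list[str]]


def pii_must_be_encrypted(product: DataProduct) -> list[str]:
    """Global rule, defined once by the federated governance group."""
    return [
        f"{product.name}.{c.name}: PII column is not encrypted"
        for c in product.columns
        if c.is_pii and not c.encrypted
    ]


def must_have_owner(product: DataProduct) -> list[str]:
    """Global rule: every data product needs an accountable owner."""
    return [] if product.owner else [f"{product.name}: no owner declared"]


GLOBAL_POLICIES: list[Policy] = [pii_must_be_encrypted, must_have_owner]


def evaluate(product: DataProduct, domain_policies: Sequence[Policy] = ()) -> list[str]:
    """Runs every global rule, then any extra rules the owning domain adds."""
    violations: list[str] = []
    for policy in (*GLOBAL_POLICIES, *domain_policies):
        violations.extend(policy(product))
    return violations


visits = DataProduct(
    domain="clinical",
    name="patient_visits",
    owner="clinical-data-team",
    columns=[
        Column("patient_id", is_pii=True, encrypted=True),
        Column("email", is_pii=True),
        Column("visit_date"),
    ],
)
print(evaluate(visits))  # ['patient_visits.email: PII column is not encrypted']
```

Run as a publication gate, a non-empty result would stop the product from joining the mesh until the domain fixes it.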

Why a Federated Data Mesh Outperforms Centralized Data Lakes

Traditional data lakes often turn into “data swamps.” You pour data in from everywhere, and eventually, it becomes a murky mess where nobody knows what is what. A federated data mesh avoids this by prioritizing local context and accountability.

When a domain team manages their own data, they ensure it is accurate because they are the primary consumers of that data. This leads to several key advantages:

- Massive Scalability: You can add as many domains as you want without overwhelming a central team. The workload is distributed across the entire organization.

- Unmatched Agility: Need a new data product? The domain team builds it. No waiting in a six-month IT queue. This allows organizations to respond to market changes or research breakthroughs in real-time.

- Reduced Technical Debt: By treating data as a product from the start, we avoid the “fix it later” mentality that plagues centralized repositories. Quality is built-in, not bolted on.

- Enhanced Security: Data stays within its original security perimeter. Instead of moving sensitive data to a central location (increasing the attack surface), we bring the analysis to the data.
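“Bringing the analysis to the data” can be sketched as follows, assuming each domain exposes a query endpoint that returns only aggregates. `DomainNode`, `local_summary`, and `federated_mean` are hypothetical names, and the minimum group size of 5 is an illustrative disclosure-control threshold, not a standard. Only a count and a sum cross each domain boundary; the coordinator combines them into a global mean without ever reading a record.

```python
from dataclasses import dataclass, field
from typing import Optional


@dataclass
class DomainNode:
    """Hypothetical domain node. Its records stay inside this object."""
    name: str
    records: list[dict] = field(default_factory=list, repr=False)

    def local_summary(self, column: str, min_count: int = 5) -> Optional[tuple[int, float]]:
        """Runs inside the domain and returns only a count and a sum."""
        values = [r[column] for r in self.records if r.get(column) is not None]
        # Suppress small groups so no individual can be singled out.
        if len(values) < min_count:
            return None
        return len(values), float(sum(values))


def federated_mean(nodes: list[DomainNode], column: str, min_count: int = 5) -> float:
    """Combines per-domain aggregates; raw records are never read here."""
    total_n, total_sum = 0, 0.0
    for node in nodes:
        summary = node.local_summary(column, min_count)
        if summary is None:
            continue  # this domain declined to answer
        n, s = summary
        total_n += n
        total_sum += s
    if total_n == 0:
        raise ValueError("no domain returned enough data")
    return total_sum / total_n


nodes = [
    DomainNode("hospital_a", [{"age": a} for a in (30, 40, 50, 60, 70)]),
    DomainNode("hospital_b", [{"age": a} for a in (20, 30, 40, 50, 60, 70)]),
    DomainNode("hospital_c", [{"age": a} for a in (80, 90)]),  # too few: suppressed
]
print(federated_mean(nodes, "age"))  # 520 / 11, about 47.27
```

Because a mean decomposes into a sum and a count, the federated result equals what a central query would return over the contributing domains; statistics that do not decompose this way, such as medians, need more elaborate protocols.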

For a deeper dive into how these platforms function, check out our Federated Data Platform Ultimate Guide.

How Federated Computational Governance Ensures Interoperability

How do we make sure that shared concepts line up across domains, for example that a “Customer” in the Sales domain and a “Patient” in the Clinical domain are linked correctly when they refer to the same person? We use Federated Governance.

This isn’t about a dusty binder of rules that nobody reads. It is about Policy-as-Code. We use automated compliance checks and Data Contracts—digital agreements between producers and consumers that specify schema, quality, and security requirements.

By applying governance requirements programmatically, we ensure that every data product in the mesh is “born” compliant. This is the difference between checking a car’s safety after a crash and building the brakes directly into the assembly line.
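Here is a minimal sketch of a contract check that runs before a data product is published, assuming the contract is held as a simple Python structure. `DataContract` and `validate` are hypothetical, and the 1% null threshold mirrors the kind of quality guarantee a contract might specify. A batch that returns any violations would be rejected, so only conforming data reaches consumers.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class DataContract:
    """Hypothetical contract agreed between a producer and its consumers."""
    product: str
    schema: dict[str, type]          # column name -> expected Python type
    max_null_fraction: float = 0.01  # quality guarantee: at most 1% nulls


def validate(contract: DataContract, rows: list[dict]) -> list[str]:
    """Returns contract violations; an empty list means the batch may be published."""
    errors: list[str] = []
    if not rows:
        return errors  # an empty batch has nothing to check
    for column, expected in contract.schema.items():
        values = [row.get(column) for row in rows]
        nulls = sum(v is None for v in values)
        if nulls / len(rows) > contract.max_null_fraction:
            errors.append(
                f"{column}: {nulls}/{len(rows)} nulls exceeds {contract.max_null_fraction:.0%}"
            )
        wrong = [v for v in values if v is not None and not isinstance(v, expected)]
        if wrong:
            errors.append(f"{column}: {len(wrong)} value(s) are not {expected.__name__}")
    return errors


customers = DataContract("customers", {"customer_id": str, "lifetime_value": float})
batch = [
    {"customer_id": "c-001", "lifetime_value": 120.0},
    {"customer_id": "c-002", "lifetime_value": None},
    {"customer_id": 3, "lifetime_value": 80.5},
]
for problem in validate(customers, batch):
    print(problem)
```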

The article “Data Mesh 101: The impact of federated computational governance” highlights that this approach increases participation and efficiency across the board by removing the friction of manual compliance audits.

Why Federated Learning is the Secret to Decentralized AI

If a federated data mesh is the skeleton, AI is the brain. But traditional machine learning assumes centralization: usually, you have to move all your data to one place to train a model. In security-sensitive industries like healthcare, defense, or finance, that is often illegal, unethical, or technically impossible due to data gravity (datasets so large and so entangled with their environment that moving them is impractical).

Enter Federated Learning (FL).

FL allows us to train AI models across decentralized domains without ever moving the raw data. The process works in rounds: the global model is sent to each local domain, the model learns from the local data, and then only the “insights” (model updates such as weights or gradients) are sent back to a central aggregator, which combines them into a new global model. In effect, this is a “no-peek” policy: the aggregator never sees the underlying records. We can perform Federated Data Analysis across 50 different hospitals globally without any patient record ever leaving its home server.
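The training cycle described above is essentially Federated Averaging (FedAvg). The sketch below is a minimal illustration, assuming a one-parameter linear model `y = w * x` and noise-free toy data: each domain runs a few steps of gradient descent locally, and the aggregator receives only the updated weight and a sample count, which it uses to weight the average. The function names are hypothetical; a real deployment would use a federated learning framework and a full model.

```python
def local_update(w: float, data: list[tuple[float, float]],
                 lr: float = 0.01, epochs: int = 5) -> float:
    """Runs inside one domain: gradient descent on mean squared error for y = w * x."""
    for _ in range(epochs):
        grad = sum(2 * (w * x - y) * x for x, y in data) / len(data)
        w -= lr * grad
    return w


def fed_avg(domains: list[list[tuple[float, float]]],
            w: float = 0.0, rounds: int = 20) -> float:
    """Aggregator: sends w out and receives (updated w, sample count), never the data."""
    for _ in range(rounds):
        updates = [(local_update(w, data), len(data)) for data in domains]
        total = sum(n for _, n in updates)
        # Weight each domain's model by how many samples it trained on.
        w = sum(w_k * n for w_k, n in updates) / total
    return w


# Three domains whose (x, y) pairs all follow y = 3x; the pairs never leave them.
domains = [
    [(1.0, 3.0), (2.0, 6.0), (3.0, 9.0)],
    [(0.5, 1.5), (1.5, 4.5)],
    [(2.0, 6.0), (4.0, 12.0)],
]
print(fed_avg(domains))  # approaches 3.0
```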

This is a natural fit for a federated data mesh. Research in Empowering Data Mesh with Federated Learning shows that this integration enables cross-domain analysis while keeping data local and preserving privacy. It transforms the mesh from a passive storage architecture into an active intelligence network.

Scaling AI with Federated Data Mesh and Split Learning

Within a federated data mesh, we often use a technique called Split Learning. This is particularly useful when computational resources are unevenly distributed across the mesh. We split the AI model into parts: the first few layers of the model stay within the domain (processing the raw, sensitive data), and the later layers are shared or processed centrally.

This allows for:

- Cross-domain analysis: Learning patterns from diverse datasets (e.g., combining genomic data from one domain with lifestyle data from another) without exposing the raw underlying records.

- High Model Performance: Studies show that models trained via Split Learning in a mesh architecture achieve performance competitive with centralized models, and they can outperform models trained on any single domain's data because they learn from a wider variety of sources.

- Domain Diversity: The more domains we add, the smarter the model gets. It’s like a group of specialists consulting on a case—each brings a unique perspective, leading to a more robust and less biased global model.

- Reduced Latency: By processing data locally, we eliminate the time-consuming process of uploading massive datasets to the cloud for training.
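Here is a minimal split learning sketch, assuming a toy two-layer scalar model (`h = w1 * x` on the domain side, `y_hat = w2 * h` on the server) and the basic configuration in which labels are shared with the server. The class names are hypothetical. The domain sends only the intermediate activation `h` forward, the server sends back only the gradient with respect to `h`, and the raw input `x` never leaves the domain. Variants such as “U-shaped” split learning also keep the labels local.

```python
class DomainClient:
    """Domain side: holds the raw inputs and the first layer (h = w1 * x)."""

    def __init__(self, w1: float = 1.0, lr: float = 0.01) -> None:
        self.w1, self.lr = w1, lr
        self._x = 0.0

    def forward(self, x: float) -> float:
        self._x = x            # cached locally for the backward pass
        return self.w1 * x     # only this activation leaves the domain

    def backward(self, grad_h: float) -> None:
        self.w1 -= self.lr * grad_h * self._x  # chain rule: dL/dw1 = dL/dh * x


class CentralServer:
    """Server side: holds the later layer (y_hat = w2 * h); never sees x."""

    def __init__(self, w2: float = 1.0, lr: float = 0.01) -> None:
        self.w2, self.lr = w2, lr

    def step(self, h: float, y: float) -> float:
        y_hat = self.w2 * h
        grad_y = 2.0 * (y_hat - y)       # dL/dy_hat for squared error
        grad_h = grad_y * self.w2        # uses w2 before it is updated
        self.w2 -= self.lr * grad_y * h  # dL/dw2 = dL/dy_hat * h
        return grad_h                    # only this gradient is sent back


def train(client: DomainClient, server: CentralServer,
          data: list[tuple[float, float]], epochs: int = 200) -> None:
    for _ in range(epochs):
        for x, y in data:
            grad_h = server.step(client.forward(x), y)
            client.backward(grad_h)


client, server = DomainClient(), CentralServer()
train(client, server, [(0.5, 3.0), (1.0, 6.0), (1.5, 9.0)])  # toy data: y = 6x
print(client.w1 * server.w2)  # the product of the two layers approaches 6.0
```

A real model would use nonlinear layers and tensors, but the message flow (activations out, gradients back) is the same.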

For more on this, see Federated Learning in Healthcare.

Overcoming Traditional Machine Learning Bottlenecks

Traditional ML faces three massive hurdles in decentralized environments that a federated approach specifically addresses:

- Security Sensitivity: You can’t just “upload” a nation’s genomic database to the cloud. The risk of a data breach is too high. Federated learning ensures that the most sensitive data never leaves its secure environment.

- Data Movement Costs: Moving petabytes of data is expensive due to egress fees and slow due to bandwidth limitations. By moving the model instead of the data, we reduce network traffic by orders of magnitude.

- Regulatory Hurdles: GDPR, HIPAA, and the EU AI Act make moving and reusing sensitive data, especially across borders, a legal minefield. A federated data mesh with FL addresses this by keeping the data within its legal jurisdiction, which makes data residency requirements far easier to meet.

At Lifebit, we’ve seen this first-hand. Our Federated Research Environment Complete Guide explains how to power research without the “data tax” of movement. By combining the mesh with Privacy-Enhancing Technologies (PETs) such as Differential Privacy and Secure Multi-Party Computation (SMPC), we can make it extremely difficult to reverse-engineer sensitive information even from the model updates themselves.
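To illustrate how SMPC-style techniques can hide individual model updates, here is a minimal sketch of secure aggregation by pairwise additive masking. It assumes each pair of domains already shares a random secret (simulated here with a seeded random generator) and that no domain drops out mid-round. Every pair's mask is added by one party and subtracted by the other, so the aggregator can recover the sum but no single domain's value. The names, the 2^32 modulus, and the three-decimal fixed-point scale are illustrative choices; practical secure aggregation protocols also derive the secrets via key agreement and tolerate dropouts.

```python
import random
from itertools import combinations

MODULUS = 2**32
SCALE = 1_000  # fixed-point precision: updates are rounded to 3 decimal places


def pairwise_masks(client_ids, seed=None):
    """Stand-in for pairwise shared secrets; real protocols derive them via key agreement."""
    rng = random.Random(seed)
    return {pair: rng.randrange(MODULUS) for pair in combinations(sorted(client_ids), 2)}


def mask_update(client_id, value, masks):
    """Client side: quantize, add masks where first in a pair, subtract where second."""
    masked = round(value * SCALE) % MODULUS
    for (first, second), mask in masks.items():
        if client_id == first:
            masked = (masked + mask) % MODULUS
        elif client_id == second:
            masked = (masked - mask) % MODULUS
    return masked


def aggregate(masked_values):
    """Server side: the masks cancel in the sum, revealing only the total."""
    total = sum(masked_values) % MODULUS
    if total >= MODULUS // 2:  # map back to a signed value
        total -= MODULUS
    return total / SCALE


updates = {"hospital_a": 0.125, "hospital_b": -0.5, "hospital_c": 1.25}
masks = pairwise_masks(updates)
masked = [mask_update(cid, value, masks) for cid, value in updates.items()]
print(aggregate(masked))  # 0.875, the sum of the three updates
```

For model updates with many parameters, the same masking is applied element by element.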

The Role of Differential Privacy in the Mesh

To further enhance security, federated data meshes often incorporate Differential Privacy. This adds a carefully calibrated amount of statistical “noise” to the model updates: enough to mask the contribution of any individual data point (so that no single patient or customer can be identified), but small enough that the overall accuracy of the AI model is largely preserved. Differential Privacy is widely regarded as the gold standard for privacy in modern data science, and it is a core component of a mature federated data strategy.

Building the Infrastructure: Technical Components of a Federated Data Mesh

Building a federated data mesh isn’t about buying one piece of software. It is about assembling a stack that supports decentralization while maintaining a unified user experience. The goal is to make the decentralized nature of the data invisible to the end-user.

- Decentralized Storage: Each domain might use different tech—one on AWS S3, another on a local SQL server, and a third on a specialized genomic database. The mesh must be technology-agnostic.

- Orchestration: Tools that manage the flow of requests, model training, and data processing across the mesh. This layer ensures that tasks are executed in the right order and that results are aggregated correctly.

- Self-Service Portals: A place where researchers and analysts can find and request access to data products. This portal should provide a “shopping cart” experience for data, where access is granted automatically based on the user’s credentials.

- Federated Access Control: Managing permissions across a dozen different domains from a single point of truth. This often involves integrating with existing Identity and Access Management (IAM) systems like Okta or Microsoft Entra ID (formerly Azure AD).
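A single point of truth for federated access can be sketched as one decision function applied identically to every domain. This assumes user attributes (groups, completed training, approved projects) arrive in an already-verified token from the organization's identity provider. `UserClaims`, `AccessRule`, `decide`, and the group, training, and project names are hypothetical. The same kind of function is what lets an automated request-and-approve workflow grant access quickly: if every check passes, no human needs to review the request.

```python
from dataclasses import dataclass, field


@dataclass
class UserClaims:
    """Attributes assumed to come from an already-verified identity-provider token."""
    user_id: str
    groups: set[str] = field(default_factory=set)
    completed_training: set[str] = field(default_factory=set)
    approved_projects: set[str] = field(default_factory=set)


@dataclass(frozen=True)
class AccessRule:
    """One domain's entry requirements, published centrally (hypothetical)."""
    required_group: str
    required_training: str


ACCESS_RULES = {
    "clinical": AccessRule("clinical-researchers", "data-protection-training"),
    "sales": AccessRule("analysts", "data-protection-training"),
}


def decide(claims: UserClaims, domain: str, project: str) -> tuple[bool, str]:
    """Single decision point, applied the same way to every domain."""
    rule = ACCESS_RULES.get(domain)
    if rule is None:
        return False, f"unknown domain {domain!r}"
    if rule.required_group not in claims.groups:
        return False, f"not in group {rule.required_group!r}"
    if rule.required_training not in claims.completed_training:
        return False, f"training {rule.required_training!r} not completed"
    if project not in claims.approved_projects:
        return False, f"project {project!r} not approved"
    return True, "granted"


alice = UserClaims(
    "alice",
    groups={"clinical-researchers"},
    completed_training={"data-protection-training"},
    approved_projects={"cardio-2024"},
)
print(decide(alice, "clinical", "cardio-2024"))  # (True, 'granted')
print(decide(alice, "sales", "cardio-2024"))     # (False, "not in group 'analysts'")
```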

Check out our Key Features Federated Data Lakehouse guide to see how these parts fit together into a cohesive ecosystem.

The Role of a Data Mesh Catalog in Discoverability

If you have 100 domains, how do you find anything? You need a Data Mesh Catalog. Without discoverability, a data mesh is just a collection of disconnected silos.

Think of this as the “Google” for your organization’s data. It doesn’t store the data; it stores the metadata (data about the data).

- Active Metadata: Unlike traditional static catalogs, an active catalog automatically updates when a domain changes its schema or adds new data. It uses crawlers and APIs to stay in sync with the actual data products.

- Product Scorecards: Users can see if a data product is “certified” for high-quality research. These scorecards track metrics like uptime, data freshness, and user ratings.

- Lineage Tracking: You can see exactly where a data point came from, how it was transformed, and who has touched it. This is critical for reproducibility in scientific research and for auditing in financial services.
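Here is a minimal in-memory sketch of the three catalog features above. It assumes domain pipelines call `register` every time they publish, which is what keeps the metadata “active.” `CatalogEntry`, `Catalog`, and its methods are hypothetical names. The catalog stores only metadata (owner, timestamp, and direct upstream inputs); full lineage is recovered by walking that graph, and freshness is scored against a maximum age.

```python
from dataclasses import dataclass, field
from datetime import datetime, timedelta, timezone
from typing import Optional


@dataclass
class CatalogEntry:
    """Metadata only: the catalog never stores the data itself."""
    name: str
    domain: str
    owner: str
    last_updated: datetime
    upstream: list[str] = field(default_factory=list)  # lineage: direct inputs


class Catalog:
    def __init__(self) -> None:
        self._entries: dict[str, CatalogEntry] = {}

    def register(self, entry: CatalogEntry) -> None:
        """Called by a domain's pipeline on every publish, so metadata stays current."""
        self._entries[entry.name] = entry

    def search(self, term: str) -> list[str]:
        term = term.lower()
        return sorted(
            e.name for e in self._entries.values()
            if term in e.name.lower() or term in e.domain.lower()
        )

    def lineage(self, name: str) -> set[str]:
        """All upstream products, found by walking the lineage graph."""
        seen: set[str] = set()
        stack = list(self._entries[name].upstream)
        while stack:
            current = stack.pop()
            if current in seen:
                continue
            seen.add(current)
            if current in self._entries:
                stack.extend(self._entries[current].upstream)
        return seen

    def is_fresh(self, name: str, max_age: timedelta,
                 now: Optional[datetime] = None) -> bool:
        """Scorecard-style freshness check."""
        now = now or datetime.now(timezone.utc)
        return now - self._entries[name].last_updated <= max_age


t0 = datetime(2024, 1, 1, tzinfo=timezone.utc)
catalog = Catalog()
catalog.register(CatalogEntry("raw_visits", "clinical", "clinical-team", t0))
catalog.register(CatalogEntry("variant_calls", "genomics", "genomics-team", t0))
catalog.register(CatalogEntry("study_cohort", "research", "research-team", t0,
                              ["raw_visits", "variant_calls"]))
catalog.register(CatalogEntry("risk_scores", "research", "research-team",
                              t0 + timedelta(days=1), ["study_cohort"]))
print(sorted(catalog.lineage("risk_scores")))  # ['raw_visits', 'study_cohort', 'variant_calls']
print(catalog.is_fresh("raw_visits", timedelta(days=1), now=t0 + timedelta(days=2)))  # False
```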

This solves what we call the “metadata paradox,” where autonomy usually leads to fragmentation. A good catalog, like those discussed in Metadata Management in Data Mesh: Federated Ownership Patter, ensures enterprise-wide coherence while respecting domain independence.

Essential Tools for Federated Governance and Automation

Automation is the only way to scale. You cannot have a human “governance officer” manually checking every table in a mesh. We use:

- Computational Policies: Rules written in code (e.g., “Always mask PII in this domain”). These are enforced at the query level, ensuring that unauthorized data never leaves the domain.

- API-Driven Workflows: Automating the “request and approve” cycle. When a researcher requests access, the system checks their training records and project approvals automatically, granting access in seconds rather than weeks.

- Standardized Schemas: Ensuring that when two domains talk, they speak the same language. This often involves using industry standards like OMOP for healthcare or ISO 20022 for finance.

- Data Contracts: These are machine-readable files (often in YAML or JSON) that define the “interface” of a data product. They specify the schema, the expected quality levels (e.g., “no more than 1% null values”), and the security requirements. If a domain team makes a change that breaks the contract, the CI/CD pipeline will automatically block the update, preventing downstream errors.
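A CI step that enforces a data contract can be sketched as a compatibility check between the published contract and a proposed new version. This minimal example assumes contracts are stored as JSON with a `schema` map (column name to type name) and a `max_null_fraction` field; both keys are hypothetical, and YAML contracts would need a parser such as PyYAML. Adding a column is treated as safe, while removing a column, changing a type, or loosening the quality guarantee counts as breaking and yields a non-zero exit code that the pipeline can use to block the update.

```python
import json


def breaking_changes(old: dict, new: dict) -> list[str]:
    """Compares two contract versions; additions pass, removals and type changes do not."""
    problems: list[str] = []
    for column, col_type in old["schema"].items():
        if column not in new["schema"]:
            problems.append(f"removed column {column!r}")
        elif new["schema"][column] != col_type:
            problems.append(f"column {column!r} changed type {col_type} -> {new['schema'][column]}")
    if new.get("max_null_fraction", 0.0) > old.get("max_null_fraction", 0.0):
        problems.append("quality guarantee loosened: max_null_fraction increased")
    return problems


def ci_check(old_json: str, new_json: str) -> int:
    """Returns a process exit code: non-zero tells the CI/CD pipeline to block the change."""
    problems = breaking_changes(json.loads(old_json), json.loads(new_json))
    for problem in problems:
        print(f"BREAKING: {problem}")
    return 1 if problems else 0


old = '{"schema": {"customer_id": "string", "lifetime_value": "double"}, "max_null_fraction": 0.01}'
new = ('{"schema": {"customer_id": "string", "lifetime_value": "string", "region": "string"},'
       ' "max_null_fraction": 0.01}')
exit_code = ci_check(old, new)  # prints one BREAKING line and returns 1
```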

Real-World Impact: How Global Leaders Scale Without Centralizing Data

Organizations aren’t just adopting a federated data mesh for technical elegance—they are doing it because it delivers measurable business value. In a world where data is the primary competitive advantage, the ability to access and analyze it quickly is the difference between leading the market and falling behind.

| Metric | Centralized Architecture | Federated Data Mesh |
|---|---|---|
| Time to Access Data | 4-6 Weeks | < 24 Hours |
| Regulatory Reporting | Slow / Manual | Automated / Real-time |
| Cost of Data Movement | High (Egress fees) | Minimal (Data stays local) |
| Data Quality | Variable (Central team lacks domain context) | High (Domain experts own it) |
| Innovation Cycle | Months | Days |

Case Studies: From Streaming Giants to Financial Leaders

Many large organizations have already made the jump, demonstrating that the mesh architecture is ready for enterprise-scale deployment.

- Zalando: As one of Europe’s largest fashion platforms, Zalando was an early adopter of the data mesh. They moved away from a central data lake to a model where over 300 engineering teams own their data. This shift allowed them to scale their recommendation engines and logistics systems far beyond what a central team could manage, resulting in a significantly more personalized customer experience.

- PayPal: PayPal uses mesh principles to handle complex financial data across global regions. By decentralizing data ownership, they’ve improved their ability to detect fraud in real-time. Each regional domain understands the specific fraud patterns of its market, allowing for more accurate models than a single, global “one-size-fits-all” approach.

- Fortune 500 Oil & Gas: One global energy company used a federated data mesh approach to integrate data from thousands of sensors across offshore rigs. By processing this data locally and only federating the insights, they decreased regulatory reporting time by three weeks and improved predictive maintenance, saving an estimated $10 million in potential downtime annually.

- HelloFresh: The meal-kit giant used a data catalog and mesh principles to reach a 3-year business adoption target in just 3 months. They reduced manual data enrichment by 50%, allowing their data scientists to spend more time on predictive modeling and less time on data cleaning.

The ROI of Federated Data Mesh

The Return on Investment (ROI) for a federated data mesh comes from three main areas: Efficiency, Risk Mitigation, and Opportunity. Efficiency is gained by removing bottlenecks. Risk is mitigated by ensuring data never leaves its secure home and is governed by code. Opportunity is created by allowing teams to experiment and launch new data-driven products faster than ever before.

These examples show that whether you are delivering meal kits or analyzing global financial fraud, the Federated Data Analysis approach is the modern standard for high-performing organizations.

Overcoming Adoption Hurdles: Best Practices for Enterprise Success

Let’s be honest: the hardest part of a federated data mesh isn’t the code. It is the culture. Most organizations have spent decades building “command and control” structures for data. Moving from “IT owns the data” to “The Business owns the data” is a massive mindset change that requires strong leadership and a clear vision.

It requires Trust Federation. You have to trust that the Sales team will govern their data correctly, and they have to trust that the platform you provide will make their lives easier, not harder. This trust is built through transparency and the consistent application of automated rules.

Best Practices for Success:

- Start Small (The Pilot Phase): Don’t try to mesh the whole company at once. Pick one high-value domain (like “Customer Insights” or “Precision Medicine”) and build a pilot. Use this pilot to prove the value and refine your platform tools.

- Focus on the “Why”: Show teams how much time they will save by owning their own data products. Frame it as empowerment, not extra work. When teams realize they no longer have to wait for IT to run a report, adoption happens naturally.

- Invest in Enablement: Create an “Enablement Team” whose only job is to help domain teams become successful data publishers. This team provides training, documentation, and “golden paths” (pre-approved templates) for building data products.

- Define Clear Incentives: Reward teams that produce high-quality, highly-used data products. Make “Data Product Quality” a key performance indicator (KPI) for domain leaders.

For a step-by-step roadmap, see our Federated Governance Complete Guide.

Practical Steps to Transition from Monolith to Mesh

- Identify Business Domains: Look at your org chart and your value stream. Who actually generates the data? Those are your natural domains. Avoid creating domains based on technical layers (e.g., “The SQL Domain”); instead, base them on business functions (e.g., “The Claims Domain”).

- Create a Platform Blueprint: Define the standard tools everyone will use for security, discovery, and communication. This ensures that while domains are autonomous, they aren’t using 50 different, incompatible technologies.

- Define Data Contracts: Start requiring teams to sign digital “contracts” for their data products. This introduces a level of professional rigor to data sharing that is often missing in traditional environments.

- Rollout Incrementally: Onboard one domain at a time. Each new domain adds value to the mesh, creating a network effect where the mesh becomes more useful as it grows.

The Role of the Data Product Manager

A key hire for any domain in a mesh is the Data Product Manager. This person treats the domain’s data as a product, talking to “customers” (data consumers in other domains) to understand their needs and ensuring the data product roadmap aligns with business goals. This role bridges the gap between technical engineering and business strategy, ensuring the mesh remains focused on delivering value.

Frequently Asked Questions about Federated Data Mesh

How does a federated data mesh differ from a traditional data lake?

A traditional data lake is a centralized “dumping ground” where one team manages everything. A federated data mesh is a decentralized network where the teams who know the data best own it, while a central platform provides the governance and tools to keep it all connected.

Why is federated learning necessary for a data mesh in regulated industries?

In industries like healthcare or finance, you often cannot legally move data across borders or organizations. Federated learning allows you to train AI and perform Federated Analytics on that data without ever moving it, ensuring compliance with laws like GDPR.

What are the primary technical requirements for building a federated data mesh?

You need a self-serve data platform, a robust metadata catalog for discoverability, automated governance tools (Policy-as-Code), and a way to manage identities and access across decentralized nodes.

Conclusion

The era of the “all-encompassing” central data lake is over. It simply cannot keep up with the speed, scale, and security requirements of modern business. To survive and thrive, your data strategy must evolve toward a federated data mesh.

At Lifebit, we specialize in this evolution. Our Lifebit Federated Biomedical Data Platform is built specifically for the world’s most complex and sensitive data. We provide the Trusted Research Environment (TRE) and Trusted Data Lakehouse (TDL) components that allow global organizations to collaborate on multi-omic research and AI-driven insights without ever compromising on security or sovereignty.

Ready to see how a federated data mesh can transform your research? Lifebit is here to help you bridge the gap between data silos and global innovation.