Cloud Data Platforms: The Next-Gen Foundation for Your Business

Stop Wasting Millions on Legacy Silos: Why a Cloud Data Platform is Your Only Path to 2026 Scalability

A cloud data platform is a unified, cloud-based environment that combines data storage, processing, analytics, and AI capabilities into a single integrated system—replacing fragmented on-premises data warehouses, data lakes, and ETL tools with elastic, consumption-based infrastructure that scales automatically with your workload.

Key characteristics of cloud data platforms:

- Unified architecture – Combines data warehouse, data lake, and analytics tools in one environment

- Elastic scalability – Separates storage and compute for independent scaling without downtime

- Consumption-based pricing – Pay only for resources used, not fixed infrastructure

- Built-in AI/ML support – Native integration with machine learning and generative AI frameworks

- Real-time processing – Handles both batch and streaming data for instant insights

- Multi-layered security – End-to-end encryption, RBAC, audit trails, and compliance tools

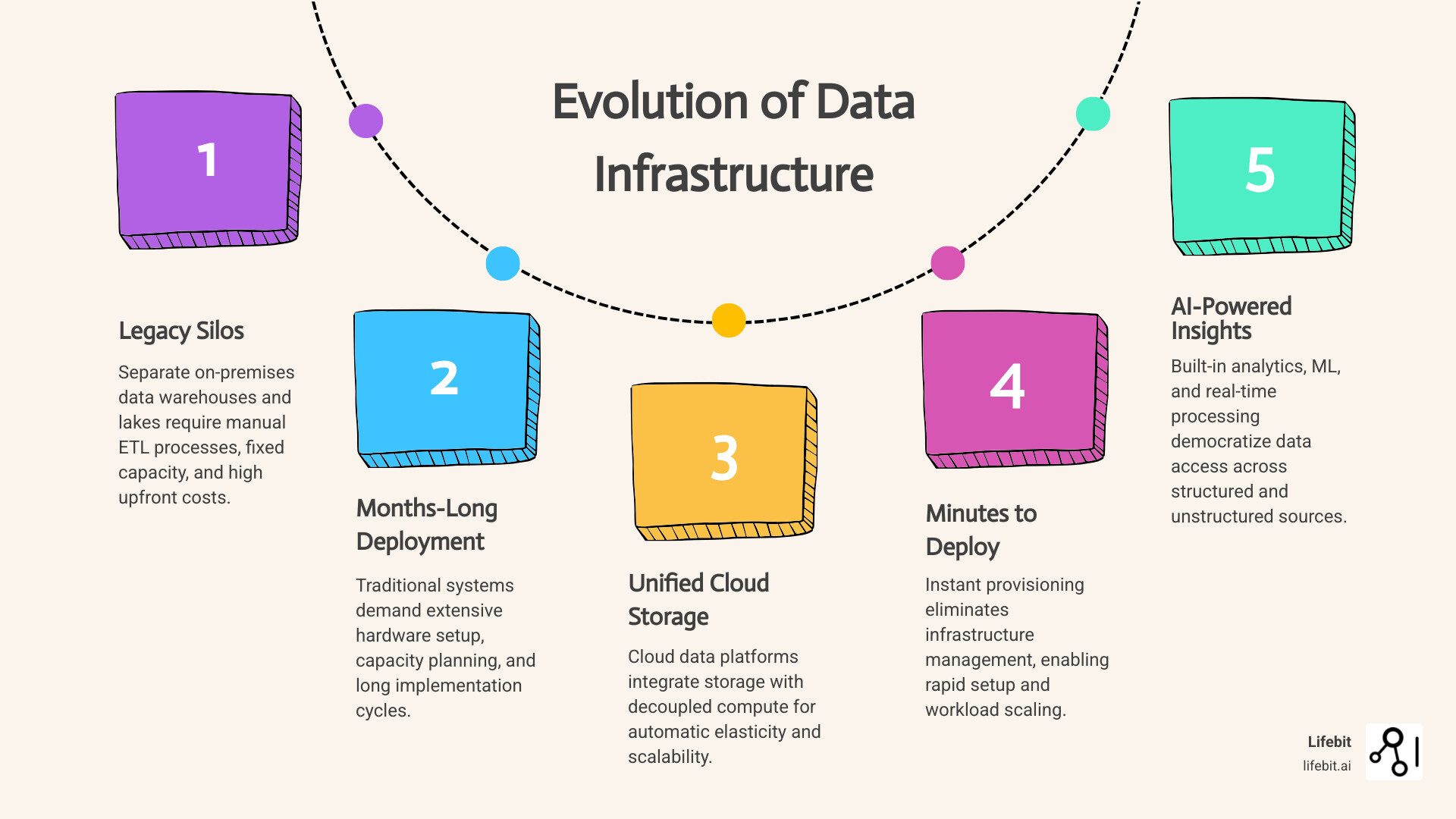

With an estimated 402.74 million terabytes of data created daily and the global datasphere projected to reach 221 zettabytes by 2026, organizations face mounting pressure to manage, analyze, and derive insights from exponential data growth. Traditional on-premises data warehouses can’t keep pace with this volume—they require massive upfront capital investment, months-long deployment cycles, and constant capacity planning to avoid performance bottlenecks.

Cloud data platforms solve this by offloading most infrastructure management to the provider. They can deliver up to 3x better price-performance than legacy systems, reduce infrastructure costs by as much as 50%, and deploy in minutes instead of months. EOS Group, for example, cut its infrastructure spending in half after migrating to cloud platforms, and cloud-native integration services now run hundreds of millions of integration jobs monthly, demonstrating the scale and efficiency gains possible with modern cloud architecture.

But speed and cost savings are only part of the story. Cloud data platforms fundamentally change how organizations work with data—enabling real-time analytics, democratizing insights through natural language queries, and powering AI models with unified governance across structured and unstructured datasets. They support everything from IoT sensor streams to genomic sequencing files, all queryable through a single interface with consistent security policies.

I’m Dr. Maria Chatzou Dunford, CEO and Co-founder of Lifebit, where we’ve built a federated genomics and biomedical cloud data platform that enables secure, compliant analysis across distributed datasets. With over 15 years in computational biology, AI, and health-tech entrepreneurship, I’ve seen how the right cloud infrastructure transforms drug discovery, precision medicine, and regulatory decision-making.


Why On-Prem is Dead: How a Cloud Data Platform Handles 221 Zettabytes Without Breaking the Bank

The era of buying stacks of servers and praying you have enough storage for next quarter is officially over. A cloud data platform acts as a virtualized data center: a single destination to which your entire data ecosystem can be relocated. Unlike traditional setups, it allows you to store, manage, and analyze data from any location without worrying about hardware maintenance.

The shift is driven by sheer necessity. With 402.74 million terabytes of data generated every day, the old approach of “buying more disks” simply doesn’t scale. By 2026, the global datasphere is projected to reach 221 zettabytes, a number so large it’s hard to visualize (think of it as tens of trillions of high-definition movies). This explosion is fueled by the “Three Vs” of big data: Volume (the sheer amount), Velocity (the speed at which it is generated), and Variety (the different formats, from structured SQL tables to unstructured video files).
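You can check the unit conversions behind these figures directly. This back-of-the-envelope Python sketch uses decimal (SI) units. It also assumes, for illustration only, that one HD movie is about 5 GB.

```python
# Back-of-the-envelope unit conversions (decimal SI units).
GB = 10**9   # bytes in a gigabyte
TB = 10**12  # bytes in a terabyte
ZB = 10**21  # bytes in a zettabyte

daily_bytes = 402.74e6 * TB   # 402.74 million terabytes created per day
daily_zb = daily_bytes / ZB   # the same figure expressed in zettabytes
yearly_zb = daily_zb * 365    # naive annualization of that daily rate

# Illustrative assumption: one HD movie is roughly 5 GB.
HD_MOVIE_BYTES = 5 * GB
movies_in_221_zb = 221 * ZB / HD_MOVIE_BYTES

print(f"Daily creation: {daily_zb:.2f} ZB/day")
print(f"Annualized:     {yearly_zb:.0f} ZB/year")
print(f"221 ZB is about {movies_in_221_zb / 1e12:.1f} trillion 5 GB movies")
```

Annualizing the daily figure this way gives roughly 147 ZB per year. Read it as an order-of-magnitude check, not a forecast.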

Traditional data warehouses were designed for structured data like sales records. But today, the most valuable insights often hide in unstructured formats: images, genomic sequences, and sensor data. Cloud platforms provide the flexibility to handle both, offering a level of agility that on-premises infrastructure can’t match.

Furthermore, the concept of “Data Gravity” has shifted the landscape. As more applications move to the cloud, the data they generate naturally accumulates there. Pulling that data back to an on-premises server for analysis adds latency and incurs steep egress fees. A modern cloud data platform keeps compute close to the data, enabling high-speed processing at lower cost.

| Feature | On-Premises Infrastructure | Cloud Data Platform |
|---|---|---|
| Scaling | Manual, slow, requires hardware | Elastic, instant, automated |
| Cost Model | Large upfront CAPEX | Pay-as-you-go OPEX |
| Maintenance | High (IT staff needed for hardware) | Low (managed by provider) |
| Performance | Limited by local hardware | Virtually unlimited via compute clusters |
| Security | Perimeter-based (harder to update) | Multi-layered, automated patching |

Core Architecture of a Modern Cloud Data Platform

The secret sauce of a modern cloud data platform is the separation of storage and compute. In the old days, if you needed more processing power, you had to buy more storage too, because the two were physically tethered in the same server rack. Now, you can scale your “brain” (compute) and your “long-term memory” (storage) independently. During a heavy end-of-month reporting cycle, for example, you can spin up 100 compute nodes for two hours and then shut them down. You pay only for those 200 node-hours of work instead of keeping peak capacity idle for the rest of the month.
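A minimal Python sketch makes the node-hour arithmetic concrete. The $2.00-per-node-hour rate and the 730-hour month are illustrative assumptions, not vendor pricing. The “always-on” line prices peak capacity kept running all month at the same rate, purely for comparison.

```python
# Illustrative assumptions, not vendor pricing.
NODE_HOUR_PRICE = 2.00   # USD per compute node per hour
HOURS_PER_MONTH = 730    # average number of hours in a month


def compute_cost(nodes: int, hours: float, price: float = NODE_HOUR_PRICE) -> float:
    """Compute billed per node-hour: nodes x hours x hourly rate."""
    return nodes * hours * price


# Elastic: 100 nodes for the 2-hour month-end run, then shut down.
node_hours = 100 * 2
elastic = compute_cost(nodes=100, hours=2)

# Peak capacity kept running all month, priced at the same rate for comparison.
always_on = compute_cost(nodes=100, hours=HOURS_PER_MONTH)

print(f"Node-hours billed: {node_hours}")
print(f"Elastic burst:     ${elastic:,.2f}")
print(f"Always-on peak:    ${always_on:,.2f}")
```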

The architecture typically follows a layered approach:

- Data Ingestion: Automated ingestion tools and data transfer services bring data in via batch loads or real-time streams. This includes Change Data Capture (CDC), which monitors source databases for changes and propagates them to the platform in near real time.

- Scalable Storage: Usually built on object storage (such as Amazon S3 or Azure Blob Storage), with “hot” tiers for frequent access and “cold” tiers for archival. This allows virtually unlimited retention of historical data at a fraction of the cost of high-performance disks.

- Transformation Engine: This is where raw data is cleaned, normalized, and transformed. Our data integration guide explains how these pipelines ensure data quality through automated validation and schema enforcement.

- Metadata & Governance: A centralized catalog that tracks what data you have, where it came from (lineage), and who can see it. This is critical for meeting regulatory requirements and ensuring that data scientists aren’t working with stale or incorrect information.
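The ingestion and transformation layers above can be sketched as a tiny CDC consumer that enforces a schema before applying changes. Everything here is hypothetical. The event format (`op`, `row`, `key`), the `SCHEMA` dictionary, and the in-memory `table` stand in for whatever your CDC tool and storage layer actually use.

```python
from typing import Any, Dict, List

# Hypothetical target schema: column name -> expected Python type.
SCHEMA: Dict[str, type] = {"id": int, "name": str, "age": int}


def validate(row: Dict[str, Any]) -> None:
    """Schema enforcement: reject rows with missing, extra, or wrongly typed columns."""
    if set(row) != set(SCHEMA):
        raise ValueError(f"column mismatch: {sorted(row)}")
    for column, expected in SCHEMA.items():
        if not isinstance(row[column], expected):
            raise TypeError(f"{column!r} should be {expected.__name__}")


def apply_changes(table: Dict[int, Dict[str, Any]], events: List[Dict[str, Any]]) -> List[Dict[str, Any]]:
    """Apply CDC events in order and return the ones that failed validation."""
    rejected = []
    for event in events:
        if event["op"] == "delete":
            table.pop(event["key"], None)
            continue
        row = event["row"]
        try:
            validate(row)
        except (ValueError, TypeError):
            rejected.append(event)  # quarantine bad rows instead of failing the batch
            continue
        table[row["id"]] = row  # inserts and updates are both upserts on the primary key
    return rejected


table: Dict[int, Dict[str, Any]] = {}
events = [
    {"op": "insert", "row": {"id": 1, "name": "Ana", "age": 34}},
    {"op": "insert", "row": {"id": 2, "name": "Ben", "age": "unknown"}},  # wrong type
    {"op": "update", "row": {"id": 1, "name": "Ana", "age": 35}},
    {"op": "delete", "key": 1},
    {"op": "insert", "row": {"id": 3, "name": "Cai", "age": 51}},
]
rejected = apply_changes(table, events)
```

This sketch routes invalid rows to a separate list rather than failing the whole batch, a common design choice often called a dead-letter queue. It keeps the pipeline moving while flagging bad data for review.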

Many organizations are now moving toward a lakehouse architecture. What is a data lakehouse? It is a hybrid that combines the cheap, flexible storage of a data lake with the high-performance query capabilities and ACID (Atomicity, Consistency, Isolation, Durability) transactions of a data warehouse. This unified approach eliminates the need to move data between different systems, which is a major source of errors, security vulnerabilities, and delays.
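Open table formats such as Delta Lake provide ACID behavior on top of plain object storage by recording every change in an ordered commit log. The toy Python class below sketches that idea on a local filesystem, and `ToyTableLog` and its file layout are hypothetical. Commits are published atomically with `os.link`, which fails if the target already exists. The sketch deliberately skips the durability (`fsync`), checkpoints, and retry logic a real implementation needs.

```python
import json
import os
import tempfile
from typing import List, Optional, Set


class ToyTableLog:
    """Toy, single-machine transaction log for a lakehouse-style table (illustrative only).

    Each commit is a numbered JSON file listing the data files it adds and removes.
    A table snapshot is whatever you get by replaying the commits in order.
    """

    def __init__(self, root: str) -> None:
        self.log_dir = os.path.join(root, "_log")
        os.makedirs(self.log_dir, exist_ok=True)

    def latest_version(self) -> int:
        versions = [int(n[:-5]) for n in os.listdir(self.log_dir) if n.endswith(".json")]
        return max(versions, default=-1)

    def snapshot(self, version: Optional[int] = None) -> Set[str]:
        """Replay commits 0..version to get the set of live data files."""
        last = self.latest_version() if version is None else version
        files: Set[str] = set()
        for v in range(last + 1):
            with open(os.path.join(self.log_dir, f"{v:05d}.json")) as f:
                entry = json.load(f)
            files |= set(entry["add"])
            files -= set(entry["remove"])
        return files

    def commit(self, read_version: int, add: List[str], remove: List[str]) -> int:
        """Publish commit read_version + 1 all-or-nothing; raise FileExistsError on a conflict."""
        new_version = read_version + 1
        target = os.path.join(self.log_dir, f"{new_version:05d}.json")
        # Write the whole entry to a temp file first so readers never see a half-written commit.
        fd, tmp = tempfile.mkstemp(dir=self.log_dir, suffix=".tmp")
        with os.fdopen(fd, "w") as f:
            json.dump({"add": add, "remove": remove}, f)
        try:
            # os.link refuses to overwrite an existing file, so if another writer already
            # published this version, we fail instead of silently clobbering their commit.
            os.link(tmp, target)
        finally:
            os.remove(tmp)
        return new_version


with tempfile.TemporaryDirectory() as root:
    log = ToyTableLog(root)
    v0 = log.commit(read_version=-1, add=["part-000.parquet"], remove=[])
    v1 = log.commit(read_version=v0, add=["part-001.parquet"], remove=[])
    # A second writer that also read version 0 loses the race and must re-read and retry.
    try:
        log.commit(read_version=v0, add=["part-002.parquet"], remove=["part-000.parquet"])
        conflict = False
    except FileExistsError:
        conflict = True
    current = sorted(log.snapshot())
    as_of_v0 = sorted(log.snapshot(version=0))  # "time travel" to an older version
```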

Powering AI and Machine Learning with a Cloud Data Platform

If data is the fuel for AI, then the cloud data platform is the high-performance engine. Modern platforms are “AI-ready,” meaning they don’t just store data; they actively help you build models. They provide integrated environments for Jupyter notebooks, automated machine learning (AutoML), and feature stores that let data scientists share and reuse engineered features across different models.

For example, leading cloud-native platforms now offer built-in support for vector search and generative AI. This allows developers to ground LLMs (Large Language Models) in “enterprise truth”: the model answers from your real business data, retrieved at query time, which reduces the risk of hallucination. In the healthcare sector, this is revolutionary. An AI platform for healthcare can now process multimodal data, combining patient records with medical images and real-time wearable data, to provide a 360-degree view of health.
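Grounding an LLM usually starts with retrieval: find the stored passages whose embeddings are closest to the question, then place them in the prompt. The Python sketch below uses brute-force cosine similarity over made-up three-dimensional vectors. Real platforms use learned embeddings with hundreds of dimensions and approximate nearest-neighbour indexes. The document IDs, passage texts, and prompt wording are hypothetical.

```python
import math
from typing import Dict, List, Tuple

# Toy corpus: (passage text, embedding). Real embeddings come from a model and have
# hundreds of dimensions; these 3-d vectors and texts are made up for illustration.
DOCUMENTS: Dict[str, Tuple[str, List[float]]] = {
    "refund-policy": ("Refunds are issued within 14 days of purchase.", [0.9, 0.1, 0.0]),
    "shipping-times": ("Standard shipping takes 3-5 business days.", [0.1, 0.9, 0.1]),
    "data-retention": ("Customer records are retained for 7 years.", [0.0, 0.2, 0.95]),
}


def cosine(a: List[float], b: List[float]) -> float:
    dot = sum(x * y for x, y in zip(a, b))
    return dot / (math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b)))


def top_k(query_vec: List[float], k: int = 2) -> List[str]:
    """Exact (brute-force) nearest-neighbour search by cosine similarity."""
    ranked = sorted(DOCUMENTS, key=lambda doc_id: cosine(query_vec, DOCUMENTS[doc_id][1]), reverse=True)
    return ranked[:k]


def build_grounded_prompt(question: str, query_vec: List[float]) -> str:
    """Put the retrieved passages in the prompt and instruct the model to answer only from them."""
    context = "\n".join(f"[{doc_id}] {DOCUMENTS[doc_id][0]}" for doc_id in top_k(query_vec))
    return (
        "Answer using only the sources below; say so if they do not contain the answer.\n"
        f"{context}\n\nQuestion: {question}"
    )


# Assume the question below embeds to this vector (again, made up).
prompt = build_grounded_prompt("How long do refunds take?", [0.85, 0.2, 0.05])
```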

We are seeing this in biology, where cloud AI accelerates drug discovery by running ML inference on massive genomic datasets. Managed cloud solutions can deliver up to a 3.9x performance boost over open-source distributed processing engines, allowing researchers to identify potential drug targets in hours instead of weeks. This speed is not just a convenience. It represents a fundamental shift in the economics of R&D: the cost of failure falls, and life-saving treatments reach the market faster.

Cut Infrastructure Costs by 50%: The Real-World ROI of a Cloud Data Platform

The most immediate impact of switching to a cloud data platform is on the balance sheet. By moving from a fixed-cost model to a consumption-based one, we only pay for what we use. This shift from Capital Expenditure (CAPEX) to Operational Expenditure (OPEX) is a CFO’s dream. It replaces massive, infrequent hardware refreshes with a monthly bill that tracks actual usage.

- 3x Price-Performance: Modern cloud-native platforms offer significantly better performance for the price compared to legacy warehouses. This is achieved through automated query optimization and the use of specialized hardware (like GPUs or TPUs) that would be too expensive for most companies to own and maintain on-site.

- 50% Infrastructure Savings: Some organizations report costs dropping by as much as half soon after migration. This isn’t just about the servers; it’s about the “hidden costs” of on-prem: electricity for cooling, physical security for the data center, the real estate costs of the server room, and the opportunity cost of having highly paid engineers swap out failed hard drives instead of building new features.

- Automated Everything: From backups to security patches, the platform handles the “boring” stuff, which reduces the need for a large IT operations team. For example, instead of a 10-person team managing hardware, you might need only a 2-person team managing the data architecture, freeing the other 8 to focus on high-value data engineering and analytics.

- Eliminating the “Innovation Tax”: In a legacy environment, testing a new idea requires requesting budget, ordering hardware, and waiting weeks for installation. This is an innovation tax. With a cloud data platform, an analyst can spin up a sandbox environment in seconds for a few dollars. If the idea fails, they shut it down. If it succeeds, they scale it up. This culture of experimentation is what separates market leaders from laggards.
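A rough three-year total-cost comparison shows how the hidden on-prem costs listed above enter the calculation. Every figure in this Python sketch is a hypothetical placeholder. With these particular inputs the saving comes to about 31%. Whether you approach the 50% figure depends entirely on your own numbers.

```python
# Every figure below is a hypothetical placeholder; substitute your own quotes and bills.
YEARS = 3

on_prem = {
    "hardware refresh (upfront CAPEX)": 600_000,
    "power and cooling": 60_000 * YEARS,
    "data-center space and physical security": 40_000 * YEARS,
    "staff time on hardware operations": 150_000 * YEARS,
}

cloud = {
    "compute and storage (usage-based OPEX)": 18_000 * 12 * YEARS,
    "data egress": 1_500 * 12 * YEARS,
    "staff time on platform operations": 75_000 * YEARS,
}

on_prem_total = sum(on_prem.values())
cloud_total = sum(cloud.values())
savings = 1 - cloud_total / on_prem_total

print(f"On-prem {YEARS}-year TCO: ${on_prem_total:,}")
print(f"Cloud {YEARS}-year TCO:   ${cloud_total:,}")
print(f"Savings: {savings:.0%}")
```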

Our next-gen data platform guide highlights that the real value isn’t just saving money; it’s agility. When a data analyst can provision a new environment in minutes to test a hypothesis, the “time to insight” drops sharply. In a competitive market, being the first to identify a new consumer trend or a supply chain bottleneck is worth far more than the savings on the cloud bill itself.

Is Your Data Safe? How a Cloud Data Platform Fixes the Security Gaps in Your Basement Servers

“Is the cloud safe?” is the question we get most often. The answer is: usually safer than your own basement. Leading platforms implement a “defense-in-depth” approach, layering security at the chip, network, and application levels. While a private company might have a handful of security experts, a major cloud provider has thousands, backed by billions of dollars in R&D and real-time threat intelligence from across the globe.

To comply with GDPR and other strict regulations such as HIPAA or CCPA, cloud platforms operate under a “Shared Responsibility Model.” The provider ensures the security of the cloud (the physical data centers and the underlying software), while the user ensures security in the cloud (configuring access and encrypting their own data). This partnership allows for a much stronger security posture than most organizations could achieve alone.

Key security features include:

- End-to-End Encryption: Data is encrypted at rest using AES-256 and in transit using TLS 1.2+. Many platforms now also support “encryption in use” through confidential computing, ensuring data is protected even while it is being processed in memory.

- Role-Based Access Control (RBAC) and Attribute-Based Access Control (ABAC): You can define exactly who sees what, down to the specific column or row in a table. For example, a marketing analyst might see customer trends but have the “Social Security Number” column automatically masked.

- Audit Trails and Observability: Every single query, login attempt, and access request is logged in an immutable ledger. This creates a transparent history for regulators and allows security teams to use AI to detect anomalous behavior, such as a user downloading an unusually large amount of data at 3:00 AM.

- Network Isolation: Using Virtual Private Clouds (VPCs) and private links, organizations can ensure their data never even touches the public internet, effectively creating a “private cloud” experience within the public cloud infrastructure.
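The marketing-analyst example above can be sketched as a policy check applied before rows are returned. In this minimal Python sketch, the `User` fields, the role names, and the region-based row filter are all hypothetical. Production platforms typically express the same rules as declarative masking and row-access policies inside the query engine rather than in application code.

```python
from dataclasses import dataclass
from typing import Dict, List


@dataclass
class User:
    name: str
    role: str    # RBAC: the user's role
    region: str  # ABAC: an attribute checked against each row


# Hypothetical policy: roles allowed to see each sensitive column unmasked,
# and roles exempt from the region-based row filter.
UNMASKED_COLUMNS = {"ssn": {"compliance_officer"}}
ROW_FILTER_EXEMPT = {"compliance_officer"}


def mask(value: str) -> str:
    """Hide everything except the last four characters."""
    return "*" * (len(value) - 4) + value[-4:]


def secure_view(rows: List[Dict[str, object]], user: User) -> List[Dict[str, object]]:
    """Apply an ABAC row filter (region) and RBAC column masking (role) before returning rows."""
    visible = []
    for row in rows:
        if user.role not in ROW_FILTER_EXEMPT and row["region"] != user.region:
            continue  # row-level filter: users only see rows from their own region
        out = dict(row)
        for column, allowed_roles in UNMASKED_COLUMNS.items():
            if user.role not in allowed_roles:
                out[column] = mask(str(out[column]))
        visible.append(out)
    return visible


rows = [
    {"customer": "C-001", "region": "EU", "ssn": "123-45-6789", "spend": 120},
    {"customer": "C-002", "region": "US", "ssn": "987-65-4321", "spend": 80},
]
analyst_view = secure_view(rows, User("maya", "marketing_analyst", "EU"))
officer_view = secure_view(rows, User("li", "compliance_officer", "US"))
```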

At Lifebit, we take this a step further with our trusted data lakehouse features. We use “Data Airlocks” and Trusted Research Environments (TREs) to ensure that sensitive data never leaves its secure environment. Researchers bring their code to the data, analyze it, and take out only the results, never the raw, sensitive files. This is the gold standard for data governance and compliance, particularly in fields like genomics, where data privacy is a matter of both national security and individual ethics.
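One way to illustrate the “bring code to the data” pattern is a generic output check of the kind airlocks commonly apply. The check releases aggregate results only and suppresses small cells that could identify individuals. This Python sketch is not Lifebit’s implementation. The function names, the toy records, and the threshold of 10 are assumptions for illustration.

```python
from collections import Counter
from typing import Callable, Dict, List, Union

# Hypothetical output-checking rule: any aggregate cell describing fewer than
# MIN_CELL_SIZE individuals is suppressed before results leave the environment.
MIN_CELL_SIZE = 10


def airlock_review(result: Dict[str, int], min_cell: int = MIN_CELL_SIZE) -> Dict[str, Union[int, str]]:
    """Release aggregate counts only; small cells are replaced with a suppression marker."""
    return {key: (count if count >= min_cell else f"suppressed (<{min_cell})")
            for key, count in result.items()}


def run_inside_tre(records: List[dict], analysis: Callable[[List[dict]], Dict[str, int]]):
    """'Bring code to the data': the analysis runs beside the records; only its
    aggregate output is passed to the airlock, never the records themselves."""
    return airlock_review(analysis(records))


def count_by_variant(records: List[dict]) -> Dict[str, int]:
    return dict(Counter(r["variant"] for r in records))


# Made-up records standing in for sensitive genomic data inside the TRE.
records = [{"variant": "BRCA1"}] * 23 + [{"variant": "TP53"}] * 4
released = run_inside_tre(records, count_by_variant)
```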

Stop Guessing: How to Choose a Cloud Data Platform That Scales Without the Headache

Choosing a vendor is like choosing a partner—you’re going to be spending a lot of time together, so choose wisely. The market is crowded with options, from the “Big Three” cloud providers (AWS, Azure, Google Cloud) to specialized independent platforms like Snowflake or Databricks. When evaluating a cloud data platform, prioritize ease of use, machine learning workload support, and deep integration with your existing AI tools.

The Evaluation Checklist

When conducting your search, consider these five critical pillars:

- Interoperability: Does the platform play well with others? Avoid vendor lock-in by choosing platforms that support open data formats (like Parquet or Avro) and provide robust APIs for third-party tool integration.

- Performance at Scale: Don’t just look at how it handles a few gigabytes. Run a stress test with terabytes of data and complex, multi-join queries to see if the performance holds up without the costs spiraling out of control.

- Governance and Compliance: Does it have the specific certifications you need (SOC 2, ISO 27001, FedRAMP)? Can it handle data residency requirements by keeping data within specific geographic borders?

- Developer Experience: A platform is only useful if your team uses it. Look for intuitive interfaces, support for multiple languages (SQL, Python, R, Scala), and a strong community or support ecosystem.

- Total Cost of Ownership (TCO): Look beyond the sticker price. Factor in the cost of data egress, the time required for training, and the potential savings from automated management features.
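A weighted scoring matrix, filled in from your POC results, is one simple way to turn the five pillars into a decision. In this Python sketch the weights, the 1-to-5 scores, and the platform names are hypothetical placeholders.

```python
# Hypothetical weights (your priorities) and 1-5 scores (from your own POC).
WEIGHTS = {
    "interoperability": 0.20,
    "performance_at_scale": 0.25,
    "governance_compliance": 0.25,
    "developer_experience": 0.15,
    "tco": 0.15,
}

candidates = {
    "Platform A": {"interoperability": 4, "performance_at_scale": 5,
                   "governance_compliance": 3, "developer_experience": 4, "tco": 3},
    "Platform B": {"interoperability": 5, "performance_at_scale": 4,
                   "governance_compliance": 5, "developer_experience": 3, "tco": 4},
}


def weighted_score(scores: dict) -> float:
    """Sum of weight x score across the five pillars."""
    if abs(sum(WEIGHTS.values()) - 1.0) > 1e-9:
        raise ValueError("weights must sum to 1")
    return sum(WEIGHTS[pillar] * scores[pillar] for pillar in WEIGHTS)


ranking = sorted(candidates, key=lambda name: weighted_score(candidates[name]), reverse=True)
for name in ranking:
    print(f"{name}: {weighted_score(candidates[name]):.2f}")
```

Making the weights explicit forces the team to agree on priorities before looking at vendor scores. That agreement is often more valuable than the final number.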

For organizations in highly regulated sectors like healthcare or government, the choice often comes down to how the platform handles data sovereignty and security. When building your enterprise data platform strategy, start with a Proof of Concept (POC). Pick a specific, high-value use case, such as real-time IoT analytics or a new BI dashboard, and see how the platform handles it. Following bioinformatics platform best practices helps ensure that your implementation is built for scale from day one.

The Future of Federated Cloud Data Platforms

The next frontier isn’t just “the cloud”; it’s federation. As data residency laws get stricter (such as under the European Health Data Space), moving data across borders is becoming legally and logistically impractical. The solution is a federated data platform approach.

Instead of moving data to a central hub, we move the analysis to where the data lives. Lifebit CloudOS federation allows a researcher in New York to query a dataset in London without the data ever moving. This decentralized AI model respects data sovereignty while still allowing for global collaboration. Trusted Research Environments are the key to this future, providing the secure “room” where this work happens. This approach effectively solves the conflict between the need for big data and the need for local privacy.
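The core idea of federated analysis, moving the computation instead of the data, can be sketched for a simple statistic. In this Python sketch each site computes only a count and a sum locally. A coordinator then combines those aggregates into a global mean. The site data and function names are hypothetical and do not represent the Lifebit CloudOS API.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class SiteSummary:
    """All a site ever returns: aggregate statistics, never row-level records."""
    n: int
    total: float


def local_query(values: List[float]) -> SiteSummary:
    """Runs inside each site's own environment, next to the data."""
    return SiteSummary(n=len(values), total=sum(values))


def federated_mean(summaries: List[SiteSummary]) -> float:
    """Combine per-site counts and sums into a global mean without pooling raw data."""
    n = sum(s.n for s in summaries)
    return sum(s.total for s in summaries) / n


# Hypothetical measurements; in a real deployment each list stays at its own site.
london = [5.1, 4.8, 5.6]
new_york = [6.0, 5.2]

global_mean = federated_mean([local_query(london), local_query(new_york)])
print(f"Global mean across sites: {global_mean:.2f}")
```

For a mean, combining per-site counts and sums gives exactly the same answer as pooling the raw values. More complex analyses, such as regressions or model training, need more elaborate protocols.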

Frequently Asked Questions about Cloud Data Platforms

How do cloud data platforms handle unstructured data?

Unlike traditional databases, modern platforms use object storage to hold “blobs” of data like PDFs, images, or audio files. They then use AI-driven metadata extraction or specialized engines (like Apache Spark) to index and query this data alongside your structured tables. This allows you to, for example, search all medical records for a specific keyword in scanned PDF doctors’ notes, once optical character recognition (OCR) has extracted the text.
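Keyword search over unstructured objects typically relies on an inverted index built from extracted text. This Python sketch assumes the text has already been pulled out of each file, for example by OCR. The object keys and note contents are made up.

```python
import re
from collections import defaultdict
from typing import Dict, List, Set

# Assumption: text has already been extracted from each stored object (e.g. by OCR).
extracted_text = {
    "notes/0001.pdf": "Patient reports chest pain; prescribed aspirin.",
    "notes/0002.pdf": "Follow-up: no chest pain, blood pressure stable.",
    "notes/0003.pdf": "Routine check. Aspirin discontinued.",
}


def tokenize(text: str) -> List[str]:
    """Lowercase and split on anything that is not a letter or digit."""
    return re.findall(r"[a-z0-9]+", text.lower())


def build_index(docs: Dict[str, str]) -> Dict[str, Set[str]]:
    """Inverted index: term -> set of object keys whose text contains that term."""
    index: Dict[str, Set[str]] = defaultdict(set)
    for key, text in docs.items():
        for term in tokenize(text):
            index[term].add(key)
    return index


def search(index: Dict[str, Set[str]], keyword: str) -> List[str]:
    """Return the sorted object keys whose extracted text contains the keyword."""
    return sorted(index.get(keyword.lower(), set()))


index = build_index(extracted_text)
```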

What is the difference between a data lake and a lakehouse?

A data lake is essentially a massive storage bin for raw data in its native format. It’s cheap but can be messy (a “data swamp”) because it lacks organization and quality control. A lakehouse adds a layer of structure, governance, and high-performance indexing on top of that lake. It gives you the performance and ACID transactions of a warehouse with the low cost and flexibility of a lake.

Are cloud data platforms secure enough for highly regulated industries?

Yes, when configured correctly. In fact, many government agencies and global banks now prefer the cloud because providers invest billions in security, far more than any single company could. With features like VPC (Virtual Private Cloud) isolation, hardware-level encryption, and continuous automated compliance monitoring, these platforms can meet the most stringent global standards, including those required for national defense and genomic research.

What is FinOps in the context of a cloud data platform?

FinOps (Financial Operations) is the practice of bringing financial accountability to the variable spend model of the cloud. Because cloud data platforms allow anyone to spin up resources, costs can spiral if not monitored. FinOps involves using automated tools to track spending, set budgets, and optimize resource usage (e.g., identifying and shutting down “zombie” compute clusters that aren’t being used).
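A basic FinOps check combines the practices above: flag clusters that have sat idle past a threshold, and project monthly spend against a budget. In this Python sketch the `Cluster` record, the 24-hour idle threshold, the 730-hour month, and the hourly costs are all hypothetical. In practice, the inputs would come from your provider’s billing and monitoring data.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta
from typing import List

HOURS_PER_MONTH = 730                  # assumed average month
IDLE_THRESHOLD = timedelta(hours=24)   # hypothetical "zombie" policy


@dataclass
class Cluster:
    name: str
    hourly_cost: float       # USD per hour while running
    last_query_at: datetime  # last time any job used the cluster


def find_zombies(clusters: List[Cluster], now: datetime) -> List[Cluster]:
    """Running clusters that have not served a query within the idle threshold."""
    return [c for c in clusters if now - c.last_query_at > IDLE_THRESHOLD]


def projected_monthly_spend(clusters: List[Cluster]) -> float:
    """Spend if every listed cluster keeps running for a full month."""
    return sum(c.hourly_cost for c in clusters) * HOURS_PER_MONTH


now = datetime(2026, 1, 31, 12, 0)
clusters = [
    Cluster("etl-nightly", 4.0, now - timedelta(hours=3)),
    Cluster("poc-sandbox", 6.5, now - timedelta(days=9)),
    Cluster("bi-dashboards", 2.5, now - timedelta(minutes=20)),
]
monthly_budget = 8_000.0

zombies = find_zombies(clusters, now)
projected = projected_monthly_spend(clusters)
if projected > monthly_budget:
    print(f"Projected ${projected:,.0f} exceeds budget ${monthly_budget:,.0f}")
for c in zombies:
    print(f"Shut down {c.name}: idle, costing ~${c.hourly_cost * HOURS_PER_MONTH:,.0f}/month")
```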

Can I use multiple cloud providers at once?

Yes, this is known as a multi-cloud strategy. Many modern cloud data platforms are designed to be “cloud-agnostic,” meaning they can run on AWS, Azure, or Google Cloud simultaneously. This prevents vendor lock-in and allows you to take advantage of specific features from different providers (e.g., using Google’s AI tools with data stored on Azure).

The End of Data Waiting Rooms: Start Getting Real-Time Insights Today

The transition to a cloud data platform is about more than just technology; it’s about empowerment. It’s about a world where a data scientist doesn’t have to wait three weeks for a server to be provisioned, and where a business leader can ask a question in plain English and get an answer backed by billions of data points in seconds.

At Lifebit, we are proud to be at the forefront of this revolution. Our next-generation federated AI platform is designed specifically for the most complex data on earth—biomedical and multi-omic data. We provide the tools for harmonization, advanced analytics, and federated governance that power research for biopharma and public health agencies globally.

Ready to see how a modern, secure, and federated approach can transform your research?